HSBC: £63.9M. Danske Bank: ~$2B. ABN AMRO: €480M. ING: €775M. Standard Chartered: $1.1B. Every one of these penalties followed financial-crime control failures, whether in sanctions compliance, AML monitoring, or both: systems that flagged thousands of false positives on common names while missing the structurally complex clients who were actually sanctioned or PEP-adjacent.

Your Sanctions Screening Engine Has Never Seen a Real UHNWI

I spent years watching sanctions screening systems inside traditional banks do exactly two things well: flag “Mohammed” as a potential match against every sanctions list in existence, and miss the Liechtenstein-domiciled family office with a beneficial owner whose cousin sits on a sanctioned entity’s board in a jurisdiction the system was never configured to check.

The false positive problem is not a tuning problem. It is a test data problem.

Most sanctions screening engines are calibrated against profiles that contain a name, a country, and maybe a date of birth. The screening logic does fuzzy name matching, checks the country against OFAC, EU, and UN consolidated lists, and returns a score. When you test this system with 10,000 profiles that have single-jurisdiction exposure and Latin-alphabet names, it works beautifully. False positive rates look manageable. The compliance team signs off on the calibration report.

Then the real client base starts flowing through.

A UHNWI with dual Swiss-Lebanese nationality. Tax domicile in Singapore. A family trust registered in the British Virgin Islands with a nominee director in Panama. The client’s name transliterates three different ways depending on whether you use the Lebanese passport, the Swiss identity card, or the trust registration documents. The sanctions screening engine produces four separate alerts — three are false positives on name variants, and the fourth is a genuine PEP connection that gets buried in the noise.

I have seen this pattern at every traditional bank I have worked with. The screening engine is not broken. It was never tested against the structural complexity it encounters in production. The calibration was done against flat profiles, so the thresholds are set for flat profiles. When multi-jurisdictional, multi-entity, multi-alphabet clients arrive, the system either floods the compliance team with false positives or — worse — suppresses genuine matches because the confidence scores are miscalibrated.
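To make the threshold trade-off concrete, here is a minimal sketch using only Python's standard-library `difflib`. The names, the watchlist, and the two thresholds are invented for illustration, and real screening engines use more sophisticated matching than a plain similarity ratio.

```python
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """Case-insensitive similarity ratio in [0, 1]."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def screen(name_variants, watchlist, threshold):
    """Return (variant, listed_name, score) for every pair at or above threshold."""
    alerts = []
    for variant in name_variants:
        for listed in watchlist:
            score = similarity(variant, listed)
            if score >= threshold:
                alerts.append((variant, listed, round(score, 2)))
    return alerts


# Hypothetical data: one client, three document spellings, a made-up watchlist.
client_variants = ["Khalil Haddad", "Khaleel Hadad", "Halil Haddad"]
watchlist = ["Khalil Hadad", "Jean Dupont"]

loose_alerts = screen(client_variants, watchlist, threshold=0.80)
strict_alerts = screen(client_variants, watchlist, threshold=0.95)

print(len(loose_alerts), loose_alerts)
print(len(strict_alerts), strict_alerts)
```

With these made-up names, the looser threshold raises three alerts for a single client, one per spelling. The stricter threshold keeps only one spelling, so the same person would pass unflagged under the other two documents. A threshold tuned on single-spelling profiles never exposes either effect.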

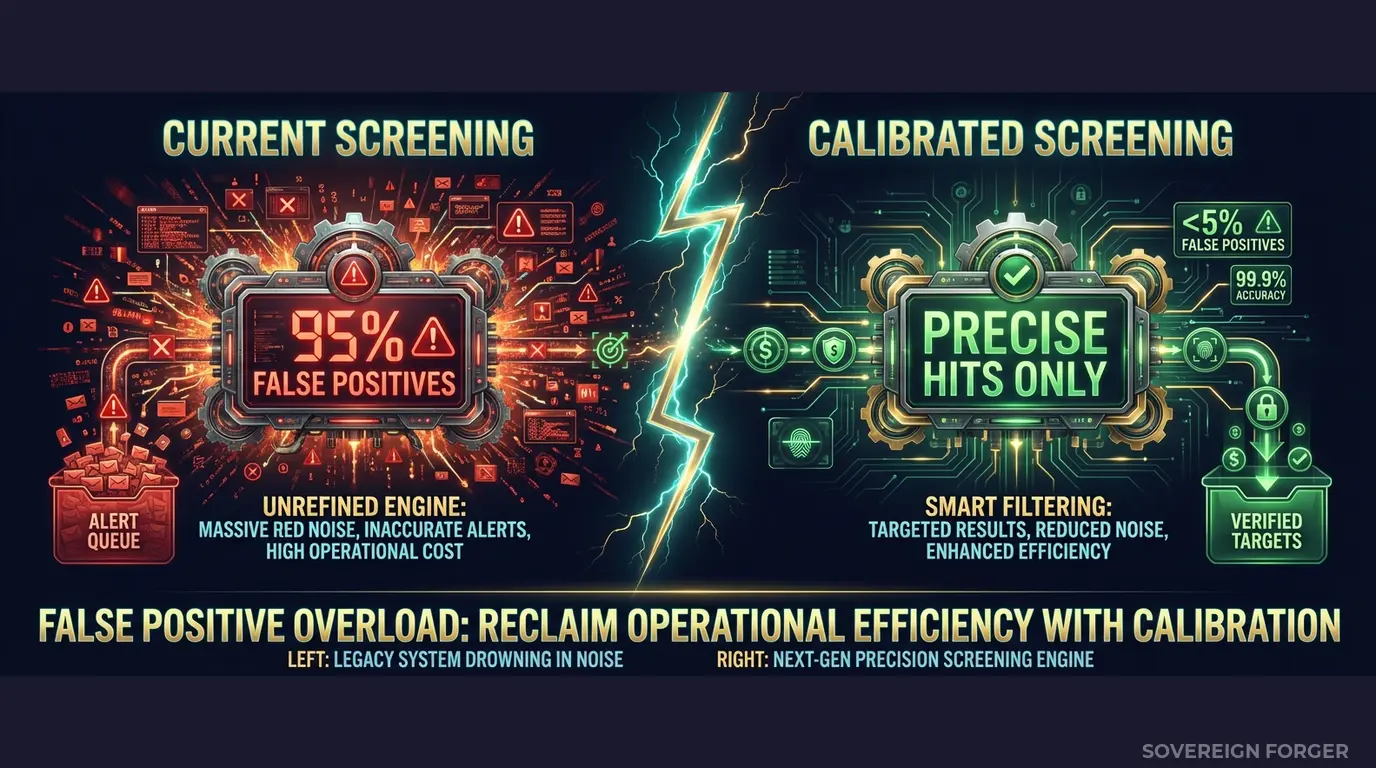

The numbers tell the story. Industry benchmarks put false positive rates in sanctions screening at 95-98%. That means for every 100 alerts, 2 to 5 are genuine. Compliance analysts spend hours clearing false positives that should never have been generated — because the screening engine was tuned against test data that contained none of the structural features that cause false matches in real UHNWI portfolios.
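The arithmetic behind that workload can be sketched in a few lines. The 10 minutes of review time per alert is an assumed figure for illustration, not a benchmark from this article.

```python
def alert_breakdown(total_alerts: int, false_positive_rate: float, minutes_per_alert: float):
    """Split an alert volume into genuine and false alerts and estimate review hours spent on noise."""
    false_positives = round(total_alerts * false_positive_rate)
    genuine = total_alerts - false_positives
    wasted_hours = false_positives * minutes_per_alert / 60
    return genuine, false_positives, wasted_hours


# minutes_per_alert=10 is a hypothetical review time.
genuine, false_positives, wasted_hours = alert_breakdown(1000, 0.95, minutes_per_alert=10)
print(genuine, false_positives, round(wasted_hours, 1))
```

At a 95% false positive rate, 1,000 alerts contain 50 genuine matches and 950 false ones. At the assumed 10 minutes each, that is roughly 158 analyst hours spent clearing noise.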

The regulatory exposure is bilateral. Flag too many false positives, and your compliance team drowns — response times increase, genuine alerts get deprioritised, and regulators cite inadequate screening effectiveness. Miss a genuine match because your thresholds were set too high to compensate for the noise, and you face the kind of enforcement action that ends careers. Danske Bank’s $2 billion settlement did not happen because the screening engine was missing. It happened because the screening process was structurally inadequate for the client base it was processing.

Three Approaches That Leave Your Screening Engine Blind

Using copies of production client data. I have been in rooms where the sanctions team proposed extracting real client records into the screening test environment. The logic sounds reasonable: test against the actual clients you need to screen. But it collides immediately with GDPR Article 25 (data protection by design and by default), because personal data ends up in a test environment with broader developer access, weaker logging, and insufficient retention controls. For traditional banks operating across multiple jurisdictions, the exposure extends to the data protection laws of every country where those clients are domiciled. The August 2026 EU AI Act enforcement compounds the problem: if your screening AI trains on this data, Article 10 requires documented governance of training data provenance. Real client data in a test environment is a compliance failure you are building into your own infrastructure.

Using anonymized client data. Stripping names and replacing them with synthetic identifiers does not solve the sanctions screening problem — it creates a new one. Sanctions screening is fundamentally about name matching. If you anonymize the names, you have removed the exact dimension your screening engine needs to test against. And the non-name fields still carry re-identification risk: with only 265,000 UHNWIs globally, the combination of net worth tier, offshore jurisdiction, and profession can uniquely identify individuals even without direct identifiers. You end up with test data that cannot test name matching and still violates GDPR. The worst of both worlds.
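The re-identification point can be checked mechanically. The sketch below counts records whose combination of quasi-identifiers is unique in the dataset, which is a simple k-anonymity check with k = 1. The field names `net_worth_tier` and `profession` and the sample records are hypothetical.

```python
from collections import Counter


def unique_combinations(records, quasi_identifiers):
    """Return the records whose quasi-identifier combination appears exactly once."""
    keys = [tuple(record[q] for q in quasi_identifiers) for record in records]
    counts = Counter(keys)
    return [record for record, key in zip(records, keys) if counts[key] == 1]


# Hypothetical "anonymized" records: no names, but quasi-identifiers remain.
records = [
    {"net_worth_tier": "100M-500M", "offshore_jurisdiction": "BVI", "profession": "Shipping"},
    {"net_worth_tier": "100M-500M", "offshore_jurisdiction": "BVI", "profession": "Shipping"},
    {"net_worth_tier": "1B+", "offshore_jurisdiction": "Liechtenstein", "profession": "Family office"},
]

exposed = unique_combinations(
    records, ["net_worth_tier", "offshore_jurisdiction", "profession"]
)
print(len(exposed), exposed)
```

Any record returned here is distinguishable from every other record without a name attached. In a small population, that is often enough to point at one person.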

Using generic synthetic generators. Platform-based generators produce profiles with single-jurisdiction exposure, Latin-alphabet names, and no PEP connections. Your screening engine trains on these profiles and learns that a “normal” client has one country, one name spelling, and zero political exposure. When a real UHNWI arrives with three jurisdictions, a name that transliterates differently across documents, and a family member who held a government position in a sanctioned country, the screening engine has no reference frame. It either flags everything or flags nothing — because the thresholds were calibrated against a population that bears no structural resemblance to your actual high-risk clients.

Real Data vs. Anonymized vs. Born-Synthetic

| Dimension | Real Data | Anonymized | Born-Synthetic |
|---|---|---|---|
| PII present | Yes | Residual | None |
| Name matching testable | Yes | No (names stripped) | Yes (culturally coherent names) |
| Re-identification risk | Certain | Probable (UHNWI) | Impossible |
| Multi-jurisdictional profiles | Yes | Partial (fields correlated) | Yes (by construction) |
| PEP/sanctions signals | Yes (sensitive) | Stripped or residual | Deterministic, realistic |
| GDPR Art. 25 compliant | No | Disputed | Yes |
| EU AI Act Art. 10 | Violation | Unclear | Compliant |
| Certifiable for auditors | No | No | Yes (Certificate of Origin) |
| Fine exposure | Up to 4% global revenue | Up to 4% global revenue | Zero |

Born-Synthetic Sanctions Screening Data Built for Traditional Bank Complexity

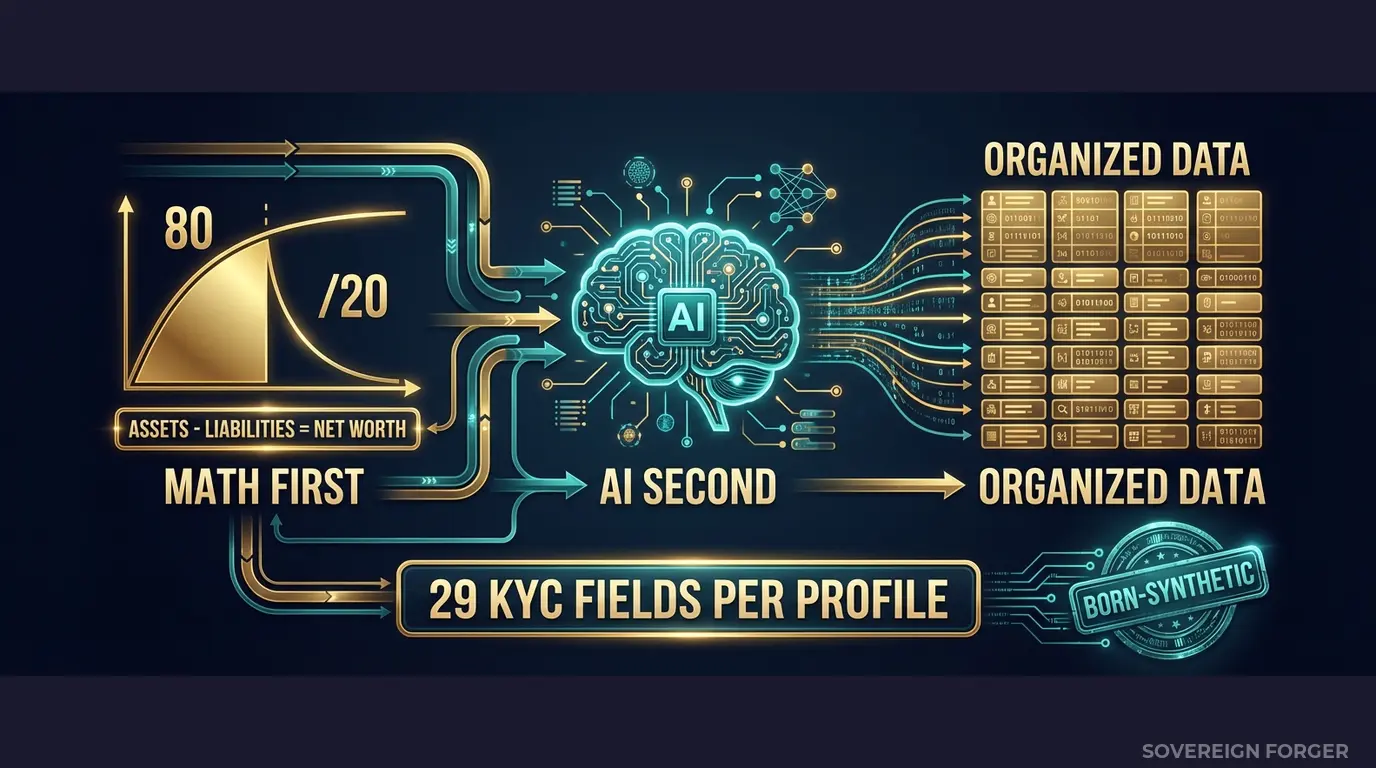

Every profile in the Sovereign Forger KYC dataset is generated from mathematical constraints — not derived from any real person. The generation pipeline works in two stages:

Math First. Net worth follows a Pareto distribution — the way real wealth is actually distributed, not a bell curve. Asset allocations are computed within algebraic constraints: Assets – Liabilities = Net Worth, by construction. Every balance sheet balances on every record. Zero exceptions. This matters for sanctions screening because the financial structure of a profile determines its jurisdictional exposure — and jurisdictional exposure is what drives screening complexity.
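As a rough illustration of the "Math First" idea, the sketch below draws net worth from a Pareto distribution and builds the balance sheet so the identity holds by construction. This is not the Sovereign Forger pipeline. The $30M floor, the shape parameter `alpha`, the leverage cap, and the three-way asset split are all assumptions chosen for the example.

```python
import random


def generate_balance_sheet(rng, min_net_worth=30_000_000, alpha=1.5, max_leverage=0.4):
    """Build one balance sheet where assets - liabilities == net worth by construction."""
    # paretovariate returns values >= 1, so net worth never falls below the floor.
    net_worth = round(min_net_worth * rng.paretovariate(alpha))
    liabilities = round(net_worth * rng.uniform(0, max_leverage))
    assets = net_worth + liabilities  # the identity is enforced here, not checked afterwards

    # Split total assets into three buckets; the last bucket absorbs rounding.
    weights = [rng.random() for _ in range(3)]
    total_weight = sum(weights)
    property_value = int(assets * weights[0] / total_weight)
    core_equity = int(assets * weights[1] / total_weight)
    cash_liquidity = assets - property_value - core_equity

    return {
        "net_worth_usd": net_worth,
        "total_assets": assets,
        "total_liabilities": liabilities,
        "property_value": property_value,
        "core_equity": core_equity,
        "cash_liquidity": cash_liquidity,
    }


rng = random.Random(42)  # fixed seed so the same run reproduces the same profile
profile = generate_balance_sheet(rng)
print(profile)
```

Because liabilities are drawn first and assets are computed from them, no record can fail the balance check. There is nothing to reconcile afterwards.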

AI Second. A local AI model running entirely offline adds narrative context — biography, profession, philanthropic focus — after the financial figures are locked. The AI never touches the numbers. It enriches the profile with culturally coherent details that match the geographic niche and wealth tier. Names are generated from culture-specific onomastic libraries covering Arabic, Chinese, Germanic, Francophone, Latin American, and Southeast Asian naming patterns — the exact naming complexity that breaks fuzzy matching algorithms in production.

Why This Data Fixes Sanctions Screening Specifically

The reason your sanctions screening engine produces 95%+ false positive rates is not algorithmic — it is data-structural. The engine was calibrated against profiles that do not contain the features that cause false matches. Sovereign Forger profiles contain those features by construction:

Sanctions screening signals. Every KYC-Enhanced profile includes `sanctions_screening_result` (clear, potential_match, or confirmed_match), `sanctions_match_confidence` (0-100 score for potential matches), and `high_risk_jurisdiction_flag`. These are not randomly assigned. They are deterministically derived from the profile’s archetype, niche, net worth, and offshore exposure — so a family office manager in the Middle East with Cayman structures gets different screening signals than a tech founder in Silicon Valley with Delaware entities.

PEP indicators. `pep_status` (none, domestic, foreign, international_org), `pep_position`, and `pep_jurisdiction` are computed from the profile’s archetype and niche. The Middle East niche has ~29% PEP incidence. Old Money Europe has a different distribution. These are the rates your screening engine will encounter in production — not the 0% PEP rate in your current test data.

Multi-jurisdictional exposure. Every profile has `tax_domicile` and `offshore_jurisdiction` fields that create the cross-border complexity your screening engine needs to handle. A Swiss-Singapore profile with a BVI vehicle and a Panama nominee structure generates different screening alerts than a LatAm profile with Cayman exposure. Your screening engine needs to process both — and it needs test data that contains both.

Culturally coherent naming. Names are generated from 28 culture-specific naming libraries. Arabic names transliterate differently. Chinese names have distinct romanisation patterns. Germanic compound surnames trigger different fuzzy matching behaviour than single-syllable Malay names. These naming patterns are not cosmetic — they are the exact source of false positives in production sanctions screening.

29 Fields Designed for Sanctions Screening Workflows

Every KYC-Enhanced profile includes the fields your screening pipeline actually needs to process:

Identity & Geography: full_name, residence_city, residence_zone, tax_domicile

Wealth Structure: net_worth_usd, total_assets, total_liabilities, property_value, core_equity, cash_liquidity, assets_composition, liabilities_composition

Professional Context: profession, education, narrative_bio, philanthropic_focus

Offshore Exposure: offshore_jurisdiction, offshore_vehicle

KYC & Screening Signals: kyc_risk_rating, pep_status, pep_position, pep_jurisdiction, sanctions_screening_result, sanctions_match_confidence, adverse_media_flag, source_of_wealth_verified, sow_verification_method, high_risk_jurisdiction_flag

Every field is deterministically derived — not randomly assigned. The same UUID always produces the same profile with the same screening signals. This means your test results are reproducible. Run the same dataset through your screening engine after a calibration change, and you can measure exactly what improved and what regressed.
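One common way to get this kind of reproducibility is to hash the record identifier together with the field name and use the result in place of a random draw. The sketch below shows that technique only; it is an assumption about how such derivation could work, not a description of the vendor's method. The 29% rate is borrowed from the Middle East PEP figure quoted earlier.

```python
import hashlib
import uuid


def unit_interval(profile_uuid: str, field: str) -> float:
    """Map (uuid, field) to a stable number in [0, 1) using SHA-256."""
    digest = hashlib.sha256(f"{profile_uuid}:{field}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") / 2**64


def has_pep_exposure(profile_uuid: str, pep_rate: float) -> bool:
    """Deterministic flag: the same UUID and rate always give the same answer."""
    return unit_interval(profile_uuid, "pep_status") < pep_rate


# Hypothetical identifiers, generated sequentially for the demonstration.
sample_ids = [str(uuid.UUID(int=i)) for i in range(2000)]
flags = [has_pep_exposure(pid, pep_rate=0.29) for pid in sample_ids]
observed_rate = sum(flags) / len(flags)

print(round(observed_rate, 3))
print(has_pep_exposure(sample_ids[0], 0.29) == has_pep_exposure(sample_ids[0], 0.29))
```

Each flag is fixed for a given UUID, so a rerun after a calibration change sees identical inputs. Across many records the flags still follow the target frequency.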

Built for Traditional Bank Sanctions Screening at Scale

6 Geographic Niches: Silicon Valley, Old Money Europe, Middle East, LatAm, Pacific Rim, Swiss-Singapore — each with culturally coherent wealth patterns, naming complexity, and jurisdictional exposure. Not localized templates. Structural diversity that mirrors your actual global UHNWI client base.

31 Wealth Archetypes: Tech founders, private bankers, commodity traders, family office managers, sovereign family members, real estate developers, shipping dynasty heirs — the actual client profiles that trigger screening alerts in production. Each archetype has distinct offshore patterns, PEP probability, and sanctions exposure.

Sanctions Signal Distribution: Screening results, match confidence scores, PEP statuses, and high-risk jurisdiction flags are distributed with realistic frequencies by niche. The Middle East niche has higher PEP incidence. LatAm has a higher share of high-risk ratings. Pacific Rim has distinct offshore jurisdiction patterns. Your screening engine calibration will reflect production reality, not uniform randomness.

Name Complexity by Design: 28 culture-specific naming libraries ensure your fuzzy matching algorithms encounter Arabic patronymics, Chinese romanisation variants, Germanic compound surnames, and Latin American double surnames. These are the names that generate 90% of false positives in production. Your screening engine needs to train against them.

Pricing

| Tier | Records | Price | Best For |
|---|---|---|---|
| Compliance Starter | 1,000 | $999 | Screening calibration, proof of concept |
| Compliance Pro | 10,000 | $4,999 | Full regression suite, threshold tuning |
| Compliance Enterprise | 100,000 | $24,999 | AI training + production-grade screening |

No SDK. No API key. No sales call. Download a file, feed it into your screening engine, and measure how many false positives your current thresholds generate against structurally complex profiles. That number is the calibration gap your current test data hides.
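A measurement harness for that exercise might look like the sketch below. It assumes the profiles are already loaded as dictionaries, since the file format is not specified here. It also assumes that any `sanctions_screening_result` other than `clear` should raise an alert. The toy engine is a stand-in for your own screening call.

```python
def evaluate(profiles, engine_alerts):
    """Compare an engine's alerts against the labelled screening result on each profile."""
    counts = {"true_positive": 0, "false_positive": 0, "missed_match": 0, "true_negative": 0}
    for profile in profiles:
        # Assumption: potential_match and confirmed_match both warrant an alert.
        expected = profile["sanctions_screening_result"] != "clear"
        alerted = engine_alerts(profile)
        if alerted and expected:
            counts["true_positive"] += 1
        elif alerted and not expected:
            counts["false_positive"] += 1
        elif expected:
            counts["missed_match"] += 1
        else:
            counts["true_negative"] += 1
    return counts


def toy_engine(profile) -> bool:
    """Hypothetical stand-in for a real engine: alerts only on the jurisdiction flag."""
    return profile["high_risk_jurisdiction_flag"]


# Hypothetical sample records using field names from the dataset description.
sample_profiles = [
    {"sanctions_screening_result": "clear", "high_risk_jurisdiction_flag": False},
    {"sanctions_screening_result": "clear", "high_risk_jurisdiction_flag": True},
    {"sanctions_screening_result": "confirmed_match", "high_risk_jurisdiction_flag": True},
    {"sanctions_screening_result": "potential_match", "high_risk_jurisdiction_flag": False},
]

results = evaluate(sample_profiles, toy_engine)
print(results)
```

Running the same harness before and after a threshold change gives a like-for-like comparison of false positives and missed matches.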

Why This Matters Now

The enforcement trajectory is clear. HSBC paid £63.9M to the FCA. Danske Bank settled for approximately $2 billion across US and European jurisdictions. ABN AMRO paid €480M. ING paid €775M. Standard Chartered paid $1.1 billion. These are not outliers. They are the consistent consequence of screening and monitoring controls that were structurally inadequate for the client bases they processed. Every one of these banks had a screening engine. Every one of those engines was tested. The test data was the gap.

Multiple regulators are watching simultaneously. Traditional banks operate under FCA, ECB, FinCEN, MAS, and a dozen other regulators — each with independent sanctions requirements. A screening calibration that satisfies the FCA may not satisfy FinCEN. Your test data needs to contain the jurisdictional complexity that triggers different regulatory regimes simultaneously. Single-jurisdiction test profiles cannot validate multi-regulatory compliance.

The EU AI Act changes the equation. Applicable in large part from August 2026, the EU AI Act classifies certain financial AI uses, such as creditworthiness assessment, as high-risk under Annex III. For high-risk systems, Article 10 requires documented governance of training data, including provenance, bias assessment, and GDPR compliance. If your sanctions screening AI falls in scope and trains on real or anonymized client data, you need to prove compliance with GDPR and the AI Act simultaneously. Born-Synthetic data eliminates the problem: zero PII by construction, documented provenance via the Certificate of Sovereign Origin, and no lineage to any real person.

The balance sheet test is open source. Every Sovereign Forger record passes algebraic validation: Assets – Liabilities = Net Worth. Run the Balance Sheet Test on our data, then run it on your current test data. If your test data does not pass basic financial consistency checks, your sanctions screening engine is calibrating against profiles that could not exist in reality.
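The check itself is simple enough to sketch independently. The version below is an illustration of the identity, not the published Balance Sheet Test, and it assumes records are dictionaries using the field names listed earlier. The sample rows are invented.

```python
def balance_sheet_failures(records, tolerance=0):
    """Return records where total_assets - total_liabilities differs from net_worth_usd."""
    failures = []
    for record in records:
        gap = record["total_assets"] - record["total_liabilities"] - record["net_worth_usd"]
        if abs(gap) > tolerance:
            failures.append(record)
    return failures


# Hypothetical test rows: the second one does not balance.
test_rows = [
    {"total_assets": 120_000_000, "total_liabilities": 20_000_000, "net_worth_usd": 100_000_000},
    {"total_assets": 80_000_000, "total_liabilities": 10_000_000, "net_worth_usd": 75_000_000},
]

failing = balance_sheet_failures(test_rows)
print(len(failing), failing)
```

The `tolerance` argument is there for datasets that store rounded figures. Leave it at zero for data that claims to balance exactly.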

Every dataset ships with a Certificate of Sovereign Origin — documenting the born-synthetic methodology, zero PII lineage, and regulatory alignment. When your compliance team, your auditor, or your regulator asks “where did you get this screening test data and can you prove it contains no real client information?”, you hand them the certificate. That question is coming. The certificate is the answer.

Stress-Test Your Sanctions Screening

Download 100 free KYC-Enhanced UHNWI profiles with sanctions screening signals, PEP indicators, and multi-jurisdictional exposure. Run them through your screening engine. Count the false positives. Count the missed matches. Count the alerts that your current test data never generated.

That delta is the gap between your screening calibration and production reality.

No credit card. No sales call. Just your work email.

Related reading: DORA Synthetic Data Requirements for Resilience Testing, on how DORA Articles 24-25 bear on synthetic data for resilience and threat-led penetration testing.

Frequently Asked Questions

How do traditional banks use synthetic profiles to stress-test sanctions screening systems without exposing real customer data?

Traditional banks configure sanctions screening engines against synthetic profiles that include deliberate near-matches, transliterated names, and aliased identities across OFAC, EU, and UN sanctioned jurisdictions. Because no real individuals are involved, compliance teams can run unlimited adversarial tests (measuring false-positive rates, threshold sensitivity, and watchlist latency) without putting personal data into test environments, which is where GDPR Art. 25 data-protection-by-design obligations bite. SR 11-7, the Federal Reserve’s model risk management guidance adopted by the OCC as Bulletin 2011-12, expects robust model validation; synthetic stress-testing provides the documented evidence trail that examiners expect during supervisory review.

What edge cases should traditional bank sanctions screening test suites cover that neobanks typically overlook?

Traditional banks face stricter supervisory scrutiny and must validate screening across correspondent banking chains, trade finance counterparties, and beneficial ownership structures — scenarios neobanks rarely encounter at scale. Test suites should include profiles with dual nationalities, phonetic name variants across Arabic and Cyrillic scripts, politically exposed persons with indirect sanctions exposure, and entities flagged under secondary sanctions programs such as CAATSA. EBA guidelines on ML model validation require banks to demonstrate coverage of at least these edge-case categories before a model enters production.

How many synthetic sanctioned-jurisdiction profiles are needed to satisfy SR 11-7 model validation expectations for a mid-tier traditional bank’s screening engine?

SR 11-7 (issued by the Federal Reserve and adopted by the OCC as Bulletin 2011-12) calls for evaluation of conceptual soundness, ongoing monitoring, and outcomes analysis, but sets no fixed sample size. Industry practice for mid-tier banks running OFAC and EU consolidated list screening is a minimum of 500 to 1,000 synthetic profiles per jurisdiction tested, with at least 15 percent representing true positive matches and 10 percent representing deliberate near-misses. Basel III/IV operational risk frameworks further require that model validation evidence be reproducible and version-controlled, making synthetic datasets preferable to anonymised real data where re-identification risk cannot be fully eliminated.

What does born-synthetic mean and why does it matter specifically for traditional bank sanctions screening data?

Born-synthetic data is generated entirely from mathematical distributions, including Pareto models for wealth concentration, with zero lineage to any real person, account, or transaction. Unlike anonymised or pseudonymised records, born-synthetic profiles carry no residual re-identification risk, making them GDPR Art. 25 compliant by construction rather than by process. For traditional bank sanctions screening, this distinction is material: supervisory examiners and the EU AI Act Art. 10 obligations enforceable from August 2026 both require that training and validation data be free of unlawful processing at origin, a standard anonymised data cannot reliably meet.

How can a traditional bank compliance team get started testing sanctions screening workflows with synthetic KYC data?

Sovereign Forger provides 100 free KYC profiles available for instant download via work email, with no credit card required. Each profile contains 29 interlocked fields covering risk ratings, PEP status, sanctions screening flags, source of wealth narratives, and jurisdictional identifiers across multiple sanctioned geographies. The profiles are internally consistent — name, nationality, beneficial ownership, and risk classification are mathematically coherent — enabling immediate integration into screening engine validation pipelines without manual data preparation or legal review of data-sharing agreements.

Learn more about synthetic data for bank sanctions screening, and how Born-Synthetic data addresses it, in our glossary and comparison guides.