HSBC: £63.9M. Danske Bank: ~$2B. ABN AMRO: €480M. ING: €775M. Standard Chartered: $1.1B. Behind every one of these fines is a risk scoring model that was calibrated on simple domestic profiles — and collapsed when it encountered the structural complexity of real global wealth.

Your Risk Model Has Never Seen a Legitimate Complex Client

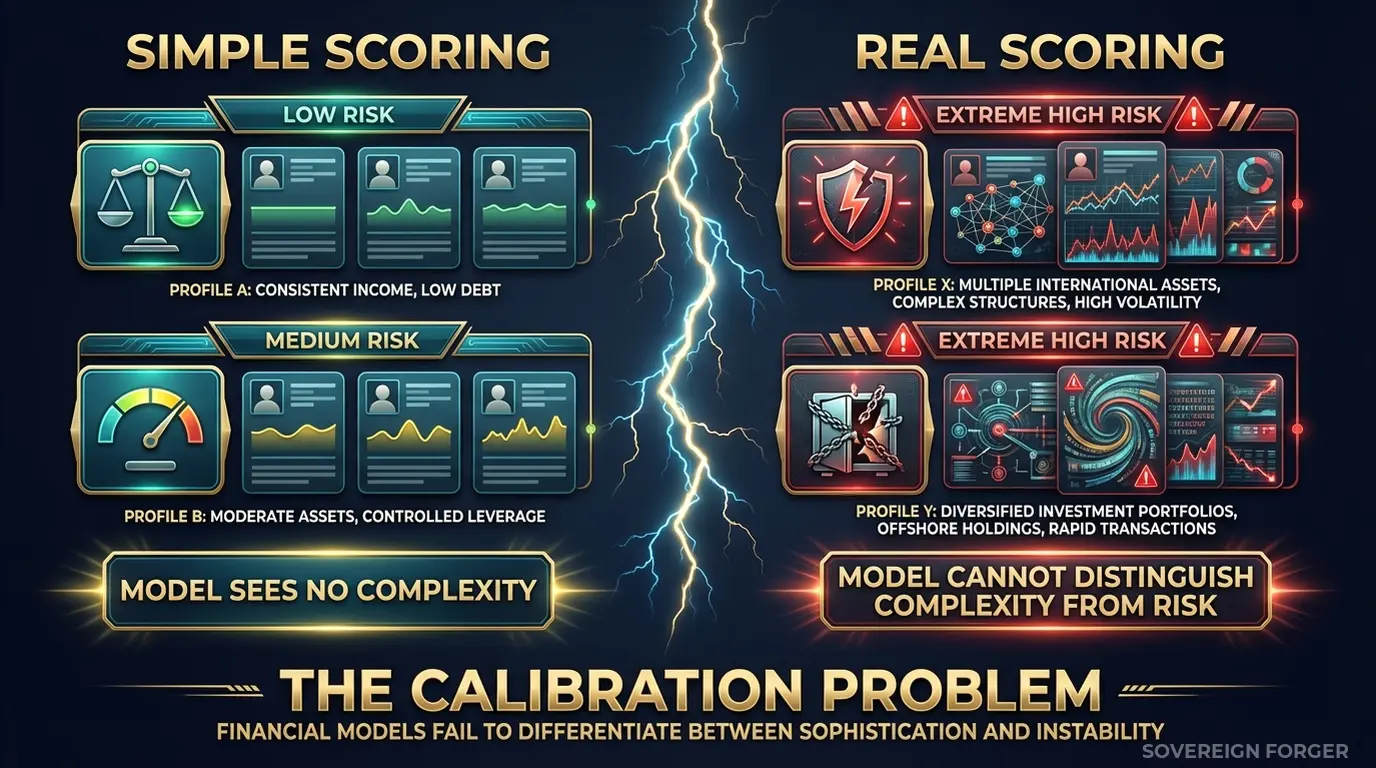

I have sat in a room where a traditional bank’s risk scoring model flagged 94% of incoming UHNWI applications as high-risk. Not because 94% of those clients were suspicious — because the model had been trained on two decades of domestic retail banking data where structural complexity simply did not exist. A client with one account, one jurisdiction, one source of income scored low. Anything else scored high. The model had learned a single rule: complexity equals danger.

This is the risk scoring problem that traditional banks cannot escape. Your model was built on millions of retail profiles where a mortgage, a savings account, and a salary deposit constitute the entire financial architecture. Then a private banking client arrives with a family office in Zurich, a holding company in Luxembourg, a trust in Jersey, real estate in three countries, and PEP-adjacent connections through a board appointment. The model has no reference frame for legitimate structural complexity — so it assigns the same risk score to a fourth-generation European industrialist as it does to a sanctions evasion pattern.

The consequences run in both directions. Flag everything, and your private banking division drowns in false positives. Compliance analysts spend hours clearing clients who were never risky — burning operational budget, delaying onboarding, and pushing high-net-worth relationships to competitors with faster processes. Miss the genuinely suspicious patterns hiding in the noise, and a regulator finds what Danske Bank’s risk model missed: $230 billion in suspicious transactions flowing through an Estonian branch because the scoring model could not distinguish between normal Baltic cross-border activity and Russian money laundering.

I watched a Tier 1 bank spend eighteen months recalibrating its risk engine after a regulatory review. The problem was not the algorithm — it was the calibration data. The model had been trained on a dataset where 99.7% of profiles were domestic retail. The remaining 0.3% were wealth management clients whose records had been anonymized so aggressively that offshore structures, multi-jurisdictional tax arrangements, and entity layering had been stripped out entirely. The model had literally never seen what a legitimate complex client looks like. Every complex profile in production was an anomaly by definition.

The calibration gap is measurable. If your risk scoring training data contains zero profiles with offshore vehicles, zero profiles with PEP-adjacent connections, and zero profiles where net worth is distributed across multiple jurisdictions through layered entities — your model cannot distinguish between structural complexity and genuine financial crime. It will either flag everything or miss everything. Both outcomes end in regulatory action.
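
One way to make that gap concrete is to count how often each structural feature appears in the training set. The sketch below is illustrative only. It assumes profiles arrive as dictionaries keyed by the field names listed later in this article (`offshore_vehicle`, `offshore_jurisdiction`, `pep_status`, `tax_domicile`), and it assumes that a missing, empty, or `"none"` PEP status means no PEP link. The function name `complexity_coverage` and the sample values are hypothetical.

```python
from typing import Iterable, Mapping


def complexity_coverage(profiles: Iterable[Mapping[str, object]]) -> dict[str, float]:
    """Return the share of profiles that show each structural feature."""
    rows = list(profiles)
    if not rows:
        raise ValueError("no profiles supplied")

    def has_offshore_vehicle(p: Mapping[str, object]) -> bool:
        return bool(p.get("offshore_vehicle"))

    def has_pep_exposure(p: Mapping[str, object]) -> bool:
        # Assumed encoding: missing, empty, or "none" means no PEP link.
        return str(p.get("pep_status") or "none").lower() != "none"

    def is_multi_jurisdiction(p: Mapping[str, object]) -> bool:
        # Counts a profile when an offshore jurisdiction exists and differs
        # from the tax domicile.
        offshore = p.get("offshore_jurisdiction")
        return bool(offshore) and offshore != p.get("tax_domicile")

    checks = {
        "offshore_vehicle": has_offshore_vehicle,
        "pep_exposure": has_pep_exposure,
        "multi_jurisdiction": is_multi_jurisdiction,
    }
    return {
        name: sum(1 for p in rows if check(p)) / len(rows)
        for name, check in checks.items()
    }


# Hypothetical sample: three flat retail profiles and one complex profile.
training_sample = [
    {"tax_domicile": "UK", "pep_status": "none"},
    {"tax_domicile": "UK", "pep_status": "none"},
    {"tax_domicile": "UK", "pep_status": "none"},
    {"tax_domicile": "CH", "pep_status": "associate",
     "offshore_vehicle": "trust", "offshore_jurisdiction": "JE"},
]
coverage = complexity_coverage(training_sample)
```

A share of zero on any of these keys means the model has no examples of that feature to learn from, legitimate or otherwise.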

Traditional banks face a compounding problem that neobanks do not. You have decades of accumulated client relationships, legacy systems that were built for domestic compliance, and multiple regulators watching simultaneously — FCA, ECB, FinCEN, MAS. Your risk model needs to work across all of these jurisdictions at once, against clients whose wealth architectures span all of them. A model calibrated on single-jurisdiction retail data is not just incomplete — it is structurally incapable of making the distinction that regulators require you to make.

Three Approaches That Break Risk Scoring Calibration

Every traditional bank I have spoken to has tried at least one of these. None of them produce risk scoring data that can teach a model what legitimate complexity looks like.

Using copies of production client data. The most common approach — and the most dangerous. Extract real UHNWI profiles from your core banking system into a development environment for model training. This creates an immediate GDPR Article 25 violation: personal data sitting in environments with weaker access controls, broader team access, and insufficient audit logging. But the regulatory exposure goes further. The EU AI Act becomes fully applicable in August 2026. Financial risk scoring is classified as high-risk AI under Annex III. Article 10 requires documented governance of training data provenance — which means your regulator can ask exactly where every training record came from. If the answer is “copies of real client data in a dev environment,” you are defending two regulatory violations simultaneously.

Using anonymized client data. Stripping names and account numbers from real UHNWI profiles does not solve the re-identification problem. With approximately 265,000 UHNWIs globally, the combination of net worth tier, offshore jurisdiction, entity structure, and tax domicile can uniquely identify individuals even without direct identifiers. A Zurich-based client with $450M net worth, a Cayman trust, and a Luxembourg holding company — how many people match that profile worldwide? Perhaps three. Your “anonymized” data is pseudonymized at best, and GDPR applies in full. Worse, aggressive anonymization strips exactly the structural features — offshore vehicles, multi-jurisdictional arrangements, entity layering — that your risk model needs to learn from. You anonymize the complexity out, and the model learns nothing new.
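
The re-identification argument can be checked with a simple k-anonymity count: group records by their quasi-identifiers and look at the smallest group. The sketch below is a minimal illustration, not a full privacy assessment. The choice of quasi-identifiers, the `net_worth_tier` buckets, and the sample records are all assumptions made for the example.

```python
from collections import Counter

# Hypothetical quasi-identifiers. "net_worth_tier" is a derived bucket,
# not a field taken from any real schema.
QUASI_IDENTIFIERS = (
    "residence_city",
    "net_worth_tier",
    "offshore_jurisdiction",
    "offshore_vehicle",
)


def net_worth_tier(net_worth_usd: float) -> str:
    """Bucket net worth into coarse tiers (illustrative thresholds)."""
    if net_worth_usd >= 1_000_000_000:
        return "1B+"
    if net_worth_usd >= 100_000_000:
        return "100M-1B"
    return "<100M"


def k_anonymity_report(records: list[dict]) -> dict[str, float]:
    """Return the smallest group size (k) and the share of unique records."""
    if not records:
        raise ValueError("no records supplied")
    keys = []
    for record in records:
        enriched = dict(record, net_worth_tier=net_worth_tier(record["net_worth_usd"]))
        keys.append(tuple(enriched.get(q) for q in QUASI_IDENTIFIERS))
    group_sizes = Counter(keys)
    unique = sum(1 for key in keys if group_sizes[key] == 1)
    return {"k": min(group_sizes.values()), "unique_share": unique / len(keys)}


# Hypothetical "anonymized" extract: names removed, structure intact.
anonymized = [
    {"residence_city": "Zurich", "net_worth_usd": 450_000_000,
     "offshore_jurisdiction": "Cayman Islands", "offshore_vehicle": "trust"},
    {"residence_city": "London", "net_worth_usd": 80_000_000,
     "offshore_jurisdiction": "Jersey", "offshore_vehicle": "trust"},
    {"residence_city": "London", "net_worth_usd": 90_000_000,
     "offshore_jurisdiction": "Jersey", "offshore_vehicle": "trust"},
]
report = k_anonymity_report(anonymized)
```

A result of `k == 1` means at least one record is the only one with its combination of attributes, which is the situation described above for the Zurich profile.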

Using generic synthetic generators. Platform-based synthetic data tools produce profiles that mirror the statistical distribution of your input data. If your input is 99.7% domestic retail, the synthetic output is 99.7% domestic retail with slightly different names. The generator faithfully reproduces the calibration gap you are trying to close. Some teams try to manually add “high-risk” profiles to the synthetic dataset — a handful of records with offshore flags set to true. But wealth architecture is not a single flag. It is the interaction between jurisdiction, entity structure, source of wealth, PEP proximity, and asset composition. A randomly assigned offshore flag on a structurally flat profile teaches your model that offshore exposure is a binary red flag — exactly the wrong lesson.

Real Data vs. Anonymized vs. Born-Synthetic for Risk Calibration

| Dimension | Real Data | Anonymized | Born-Synthetic |
|---|---|---|---|
| PII present | Yes | Residual | None |
| Re-identification risk | Certain | Probable (UHNWI) | Impossible |
| Structural complexity preserved | Yes | Degraded | Full |
| Offshore/entity detail | Present | Often stripped | Architecturally coherent |
| GDPR Art. 25 compliant | No | Disputed | Yes |
| EU AI Act Art. 10 | Violation | Unclear | Compliant |
| Certifiable for auditors | No | No | Yes (Certificate of Origin) |
| Risk model calibration value | High (but illegal) | Low (stripped features) | High (and compliant) |

Born-Synthetic Risk Scoring Data That Teaches Models What Complexity Looks Like

The core problem with risk scoring in traditional banks is not the algorithm — it is the calibration data. Your model needs to learn that a fourth-generation industrialist with three trusts and a foundation is structurally different from a sanctions evasion network with three shell companies and a nominee director. Both are complex. Only one is criminal. Teaching that distinction requires training data where legitimate complexity exists at scale.

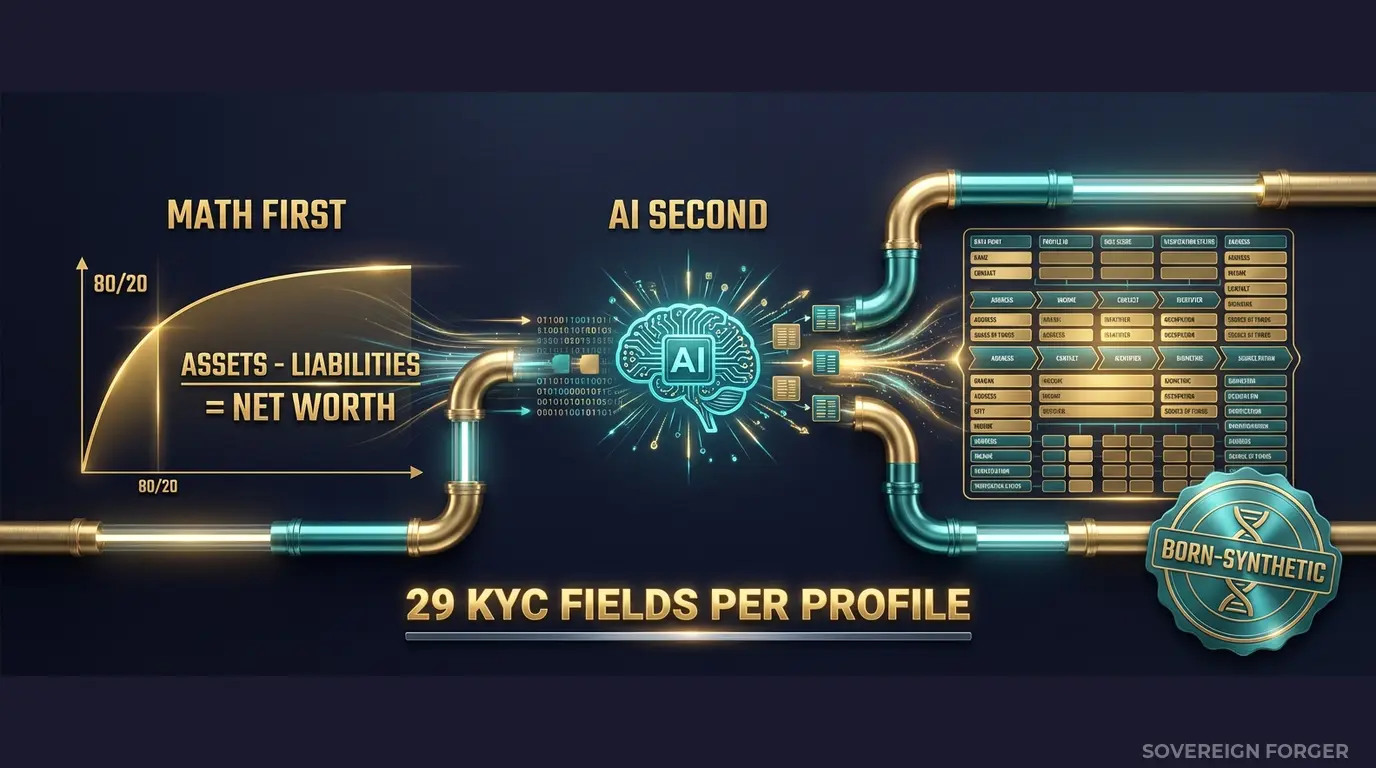

Every profile in the Sovereign Forger KYC dataset is generated from mathematical constraints — not derived from any real person. The pipeline works in two stages that are specifically relevant to risk model calibration:

Math First. Net worth follows a Pareto distribution — the actual statistical shape of real wealth concentration, not a bell curve. This matters for risk scoring because Pareto-distributed data produces the long tail your model needs to learn from: the $50M profiles behave differently from the $500M profiles, which behave differently from the $5B profiles. Asset allocations are computed within algebraic constraints: Assets – Liabilities = Net Worth, by construction. Property values, core equity, cash liquidity, and offshore holdings are allocated according to archetype-specific patterns that mirror how different types of wealth are actually structured. A tech founder concentrates in equity. An old-money dynasty concentrates in real estate and trusts. A commodity trader holds disproportionate cash liquidity. Your risk model learns these patterns instead of treating every high-net-worth client identically.
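
The "Math First" idea can be sketched in a few lines: draw net worth from a Pareto distribution, fix liabilities, define total assets as their sum, then split the assets by archetype. This is a simplified illustration under stated assumptions, not the vendor's pipeline. The allocation weights, the `floor_usd`, `alpha`, and `leverage` parameters, and the `offshore_holdings` bucket name are all hypothetical.

```python
import random

# Illustrative allocation weights per archetype (assumptions, not vendor parameters).
ARCHETYPE_WEIGHTS = {
    "tech_founder": {"core_equity": 0.70, "property_value": 0.10,
                     "cash_liquidity": 0.10, "offshore_holdings": 0.10},
    "old_money_dynasty": {"core_equity": 0.20, "property_value": 0.45,
                          "cash_liquidity": 0.05, "offshore_holdings": 0.30},
    "commodity_trader": {"core_equity": 0.30, "property_value": 0.15,
                         "cash_liquidity": 0.40, "offshore_holdings": 0.15},
}


def generate_balance_sheet(archetype: str, rng: random.Random,
                           floor_usd: int = 30_000_000,
                           alpha: float = 1.5,
                           leverage: float = 0.15) -> dict:
    """Build one balance sheet where Assets - Liabilities = Net Worth exactly."""
    # paretovariate returns values >= 1, so net worth never drops below the floor.
    net_worth = round(floor_usd * rng.paretovariate(alpha))
    liabilities = round(net_worth * leverage)
    total_assets = net_worth + liabilities  # the identity holds by construction

    weights = ARCHETYPE_WEIGHTS[archetype]
    allocation = {name: int(total_assets * w) for name, w in weights.items()}
    # Put the rounding remainder in the dominant bucket so components sum exactly.
    dominant = max(weights, key=weights.get)
    allocation[dominant] += total_assets - sum(allocation.values())

    return {
        "archetype": archetype,
        "net_worth_usd": net_worth,
        "total_liabilities": liabilities,
        "total_assets": total_assets,
        **allocation,
    }


rng = random.Random(42)  # fixed seed so the example is repeatable
sheet = generate_balance_sheet("tech_founder", rng)
```

Working in whole dollars keeps the identity exact. With floating-point allocations, the components could drift from the total by fractions of a cent and fail a strict equality check.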

AI Second. A local AI model — running offline, never touching the network — adds narrative enrichment after the financial figures are locked. Biography, profession, education, philanthropic focus. The AI never modifies the numbers. It creates the contextual texture that makes each profile a coherent individual rather than a row of fields. For risk scoring purposes, this matters: your model can learn to correlate profession, education, and geographic context with risk signals rather than relying solely on numerical thresholds.

29 Fields Calibrated for Risk Model Training

Every KYC-Enhanced profile includes the fields your risk scoring engine needs to make informed decisions:

Identity & Geography: full_name, residence_city, residence_zone, tax_domicile

Wealth Structure: net_worth_usd, total_assets, total_liabilities, property_value, core_equity, cash_liquidity, assets_composition, liabilities_composition

Professional Context: profession, education, narrative_bio, philanthropic_focus

Offshore Exposure: offshore_jurisdiction, offshore_vehicle

KYC Signals: kyc_risk_rating, pep_status, pep_position, pep_jurisdiction, sanctions_screening_result, sanctions_match_confidence, adverse_media_flag, source_of_wealth_verified, sow_verification_method, high_risk_jurisdiction_flag

Why this matters for risk scoring specifically: every KYC field is deterministically derived from the profile’s archetype, niche, net worth, and jurisdiction. A private banker in Swiss-Singapore with $200M net worth and a BVI trust gets a different risk profile than a tech founder in Silicon Valley with the same net worth and a Delaware LLC — because the underlying wealth architectures carry different regulatory implications. Your model learns these structural patterns instead of applying a single threshold to every profile above $10M.

The risk signals are not randomly assigned. PEP status correlates with archetype and niche: Middle East profiles carry higher PEP rates (~29%) because sovereign wealth structures intersect with political positions more frequently in that region. LatAm profiles carry higher base risk ratings (~84% high) reflecting the jurisdictional risk landscape. European and Swiss-Singapore profiles show balanced distributions (~48% low risk) consistent with established regulatory frameworks. These distributions give your risk model realistic base rates to calibrate against — not uniform random noise.
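
Before calibrating against these base rates, it is worth measuring them in whatever file you actually load. The sketch below groups profiles by `residence_zone` and reports the PEP rate and the share rated high-risk. The value encodings (`"none"` for no PEP link, `"high"` for the top risk tier) and the sample rows are assumptions for illustration, since the article does not document the exact formats.

```python
from collections import defaultdict


def base_rates_by_zone(profiles: list[dict]) -> dict[str, dict[str, float]]:
    """Return PEP rate and high-risk rate per residence zone."""
    counts = defaultdict(lambda: {"n": 0, "pep": 0, "high": 0})
    for p in profiles:
        bucket = counts[p["residence_zone"]]
        bucket["n"] += 1
        # Assumed encodings: "none" means no PEP link, "high" is the top tier.
        if str(p.get("pep_status") or "none").lower() != "none":
            bucket["pep"] += 1
        if str(p.get("kyc_risk_rating") or "").lower() == "high":
            bucket["high"] += 1
    return {
        zone: {"pep_rate": c["pep"] / c["n"], "high_risk_rate": c["high"] / c["n"]}
        for zone, c in counts.items()
    }


# Hypothetical rows, far too few to reproduce the quoted percentages.
sample = [
    {"residence_zone": "Middle East", "pep_status": "pep", "kyc_risk_rating": "high"},
    {"residence_zone": "Middle East", "pep_status": "none", "kyc_risk_rating": "medium"},
    {"residence_zone": "LatAm", "pep_status": "none", "kyc_risk_rating": "high"},
    {"residence_zone": "LatAm", "pep_status": "none", "kyc_risk_rating": "high"},
]
rates = base_rates_by_zone(sample)
```

Comparing these per-zone rates with your model's predicted rates on the same profiles shows where the model over-flags or under-flags by region.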

Risk Scoring Calibration Data at Enterprise Scale

6 Geographic Niches: Silicon Valley, Old Money Europe, Middle East, LatAm, Pacific Rim, Swiss-Singapore — each with wealth architecture patterns that reflect how UHNWI wealth is actually structured in that region. Your risk model learns that a Cayman trust held by a European dynasty carries different implications than the same structure held by a LatAm agribusiness baron.

31 Wealth Archetypes: Tech founders, private bankers, commodity traders, family office managers, real estate developers, shipping magnates, sovereign family members — the actual client archetypes that your risk scoring model encounters in production but has never seen in training data. Each archetype has distinct asset allocation patterns, offshore preferences, and risk signal distributions.

Deterministic KYC Signal Distribution: Risk ratings, PEP statuses, sanctions screening results, and source-of-wealth verification methods are deterministically derived from each profile’s structural characteristics — not randomly assigned. The same UUID produces the same risk signals on every generation run. This means your model calibration is reproducible, and your compliance team can audit exactly how the training data was constructed.
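
Reproducibility of this kind is usually achieved by seeding a random generator from a stable hash of the profile's identity and structure. The sketch below shows the general technique only; it is not the vendor's derivation logic. The function `derive_signals`, the rule that a PEP is always rated high, and the risk weights are hypothetical.

```python
import hashlib
import random


def derive_signals(profile_uuid: str, archetype: str, zone: str,
                   pep_rate: float) -> dict[str, str]:
    """Derive KYC signals that are identical on every run for the same inputs."""
    # hashlib is used instead of the built-in hash(), which is salted per process.
    material = f"{profile_uuid}|{archetype}|{zone}".encode("utf-8")
    seed = int.from_bytes(hashlib.sha256(material).digest()[:8], "big")
    rng = random.Random(seed)

    pep_status = "pep" if rng.random() < pep_rate else "none"
    if pep_status == "pep":
        rating = "high"  # assumed rule for this sketch
    else:
        # Illustrative weights, not measured base rates.
        rating = rng.choices(["low", "medium", "high"], weights=[5, 3, 2])[0]
    return {"pep_status": pep_status, "kyc_risk_rating": rating}


profile_id = "123e4567-e89b-12d3-a456-426614174000"  # placeholder UUID
first = derive_signals(profile_id, "private_banker", "Swiss-Singapore", 0.10)
second = derive_signals(profile_id, "private_banker", "Swiss-Singapore", 0.10)
```

Because the seed depends only on the inputs, `first` and `second` are equal, and an auditor can regenerate any record's signals from its identifier and structural attributes.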

Algebraic Balance Sheet Integrity: Every profile passes the constraint Assets – Liabilities = Net Worth. Run any balance validation on 100,000 records and find zero exceptions. When your risk model learns from these profiles, it learns from financially coherent structures — not from randomly generated numbers that could never represent a real wealth architecture.
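
A balance validation of this kind is short enough to write yourself. The sketch below is one possible version, not the published Balance Sheet Test. It assumes each record exposes `total_assets`, `total_liabilities`, and `net_worth_usd`, and it uses `Decimal` so that values read as strings from a file compare exactly.

```python
from decimal import Decimal


def balance_exceptions(profiles: list[dict]) -> list[dict]:
    """Return every profile where Assets - Liabilities != Net Worth."""
    failures = []
    for p in profiles:
        # str() first so floats and CSV strings both convert predictably.
        assets = Decimal(str(p["total_assets"]))
        liabilities = Decimal(str(p["total_liabilities"]))
        net_worth = Decimal(str(p["net_worth_usd"]))
        if assets - liabilities != net_worth:
            failures.append(p)
    return failures


# Hypothetical records: the first balances, the second is off by one dollar.
records = [
    {"total_assets": "230000000", "total_liabilities": "30000000",
     "net_worth_usd": "200000000"},
    {"total_assets": 120_000_001, "total_liabilities": 20_000_000,
     "net_worth_usd": 100_000_000},
]
exceptions = balance_exceptions(records)
```

Rows produced by `csv.DictReader` can be passed in directly, since every value is converted through `str` before comparison.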

Pricing

| Tier | Records | Price | Best For |
|---|---|---|---|
| Compliance Starter | 1,000 | $999 | Risk model proof of concept |
| Compliance Pro | 10,000 | $4,999 | Full calibration dataset |
| Compliance Enterprise | 100,000 | $24,999 | Production model training + regression testing |

No SDK. No API key. No sales call. Download a file, load it into your model training pipeline, and measure how your false positive rate changes when the model has seen legitimate complexity at scale.

Why Risk Scoring Calibration Cannot Wait

Enforcement is accelerating on multiple fronts. Traditional banks operate under simultaneous regulatory oversight — FCA, ECB, FinCEN, MAS, and more. The EU AI Act becomes fully applicable in August 2026 and classifies financial risk scoring as high-risk AI under Annex III. Article 10 requires documented governance of training data, including provenance, bias assessment, and GDPR compliance. If your risk model trains on real or anonymized client data, you must demonstrate compliance on both GDPR and AI Act simultaneously, across every jurisdiction where you operate.

The fines are existential, not incremental. HSBC: £63.9M. Danske Bank: approximately $2B in settlements across multiple jurisdictions. ABN AMRO: €480M. ING: €775M. Standard Chartered: $1.1B. These are not fines for small technical violations — they are the direct consequences of risk systems that could not distinguish between legitimate complexity and financial crime at scale. A properly calibrated risk model would not have prevented every one of these cases. But in every case, regulators found that the bank’s systems lacked the ability to process the structural complexity of the clients and transactions involved.

The false positive cost is measurable. Industry estimates place the cost of investigating a single false-positive risk alert between $30 and $150 in analyst time. A risk model that flags 90% of UHNWI applications as high-risk because it has never seen legitimate complexity generates thousands of unnecessary investigations per quarter. At $4,999 for a Compliance Pro calibration dataset, the ROI is realized within the first month of reduced false positives — before considering the regulatory risk reduction.
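
The break-even point behind that claim is simple arithmetic: divide the dataset price by the cost of one false-positive investigation. The sketch below uses only the figures quoted above ($4,999, and $30 to $150 per alert). The function name is hypothetical, and the calculation ignores integration and retraining effort.

```python
import math


def break_even_alerts(dataset_price_usd: float, cost_per_alert_usd: float) -> int:
    """Number of avoided false-positive investigations that covers the price."""
    if cost_per_alert_usd <= 0:
        raise ValueError("cost per alert must be positive")
    return math.ceil(dataset_price_usd / cost_per_alert_usd)


at_high_cost = break_even_alerts(4_999, 150)  # 34 avoided alerts
at_low_cost = break_even_alerts(4_999, 30)    # 167 avoided alerts
```

On these figures the dataset pays for itself once recalibration removes somewhere between 34 and 167 unnecessary investigations, depending on where your per-alert cost falls in the quoted range.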

The balance sheet test is open source. Every Sovereign Forger record passes algebraic validation: Assets – Liabilities = Net Worth. Run the Balance Sheet Test on our data, then run it on whatever synthetic data you are currently using for risk model training. The structural integrity difference is immediate and quantifiable.

Every dataset ships with a Certificate of Sovereign Origin — documenting the born-synthetic methodology, zero PII lineage, and regulatory alignment. When your model validation team asks “where did the training data come from?” and your regulator asks “can you prove this data contains no real client information?”, you hand them the certificate. Born-Synthetic means the question of data provenance has a one-page answer.

Calibrate Your Risk Scoring Models

Download 100 free KYC-Enhanced UHNWI profiles with deterministic risk signals. Run them through your risk scoring engine alongside your current training data. Test whether your model can distinguish between structural complexity and genuine risk.

Count how many profiles your current model flags as high-risk. Then look at those profiles — how many are fourth-generation industrialists, family office managers, or tech founders with entirely legitimate multi-jurisdictional structures? That false positive count is the calibration gap your model carries into production every day.

No credit card. No sales call. Just your work email.

Related reading: DORA Synthetic Data Requirements for Resilience Testing, on how DORA’s resilience testing provisions (Articles 24-27, with threat-led penetration testing covered in Articles 26-27) bear on the use of synthetic data.

Frequently Asked Questions

How can traditional banks use synthetic customer profiles to calibrate risk scoring models without exposing real client data?

Traditional banks operating under the SR 11-7 model risk management guidance (issued by the Federal Reserve and adopted by the OCC as Bulletin 2011-12) are expected to validate risk scoring algorithms against diverse, representative datasets before deployment. Sovereign Forger’s born-synthetic profiles provide balanced populations across low, medium, and high risk tiers, enabling banks to stress-test scoring logic against edge cases such as PEP-adjacent relationships and multi-jurisdictional source-of-wealth patterns. Because no real customer records are involved, validation exercises align with GDPR Art. 25 data protection by design, including data minimisation, while still supporting the statistical robustness that supervisors such as the EBA expect from ML model validation.

What specific risk scoring edge cases do traditional banks need synthetic data to cover that standard test datasets cannot provide?

Traditional banks face supervisory scrutiny from the OCC, PRA, and ECB that neobanks typically do not, meaning risk scoring models must handle rare but consequential profiles reliably. Standard internal test datasets are skewed toward approved, low-risk customers and contain fewer than 3 percent high-risk or declined profiles. Sovereign Forger generates synthetic populations with configurable distributions across sanctions exposure, adverse media flags, complex corporate ownership structures, and dual-jurisdiction residency — edge cases that appear in fewer than 1 in 500 real files but trigger disproportionate regulatory penalties when misclassified.

How does using synthetic KYC profiles help traditional banks comply with the EU AI Act Article 10 data governance requirements ahead of the August 2026 enforcement date?

The EU AI Act lists creditworthiness evaluation and credit scoring of natural persons as high-risk AI under Annex III. Art. 10 then requires training and validation datasets for such systems to be relevant, sufficiently representative, as free of errors as possible, and subject to documented data governance processes. Traditional banks that train or validate scoring models on real customer files face significant documentation burdens and potential GDPR conflicts when repurposing personal data. Synthetic profiles generated by Sovereign Forger carry zero lineage to real persons, satisfy GDPR Art. 25 privacy-by-design obligations, and come with reproducible generation parameters that support the Art. 10 traceability requirements regulators will begin enforcing in August 2026.

What does born-synthetic mean in the context of financial risk scoring data, and why does it matter for traditional banks specifically?

Born-synthetic means each profile is generated entirely from mathematical distributions, including Pareto-distributed wealth figures and Zipf-distributed transaction frequencies, with no source records, anonymisation steps, or real-person lineage at any stage of production. Unlike pseudonymised or anonymised data, born-synthetic records cannot be re-identified, making them GDPR Art. 25 compliant by construction rather than by process. For traditional banks, where Basel III/IV capital models and SR 11-7 model inventories (Federal Reserve guidance, mirrored in OCC Bulletin 2011-12) must demonstrate clean data provenance, born-synthetic datasets eliminate the legal and reputational risk of inadvertently processing personal data during model development cycles.

How can a traditional bank risk team get started evaluating synthetic KYC profiles for scoring model calibration?

Risk teams can download 100 free KYC profiles immediately via a work email address with no credit card required. Each profile contains 29 interlocked fields covering assigned risk ratings across low, medium, and high tiers, PEP status, sanctions screening results, source-of-wealth narratives, and jurisdiction codes spanning multiple regulatory regimes. The instant download format allows model validation analysts to begin testing scoring algorithm behaviour against realistic edge cases on the same day, without waiting for data governance approvals that would be required before using real customer records.

Learn more about synthetic data for bank risk scoring, and how born-synthetic data addresses the calibration gap, in our glossary and comparison guides.