HSBC: £63.9M. Danske Bank: ~$2B. ABN AMRO: €480M. ING: €775M. Standard Chartered: $1.1B. Behind every one of these fines is a risk model that passed validation and then failed in production. The models worked on the data they were tested against. They were never tested against the data that actually matters, and that is exactly the scenario model validation data has to be built for.

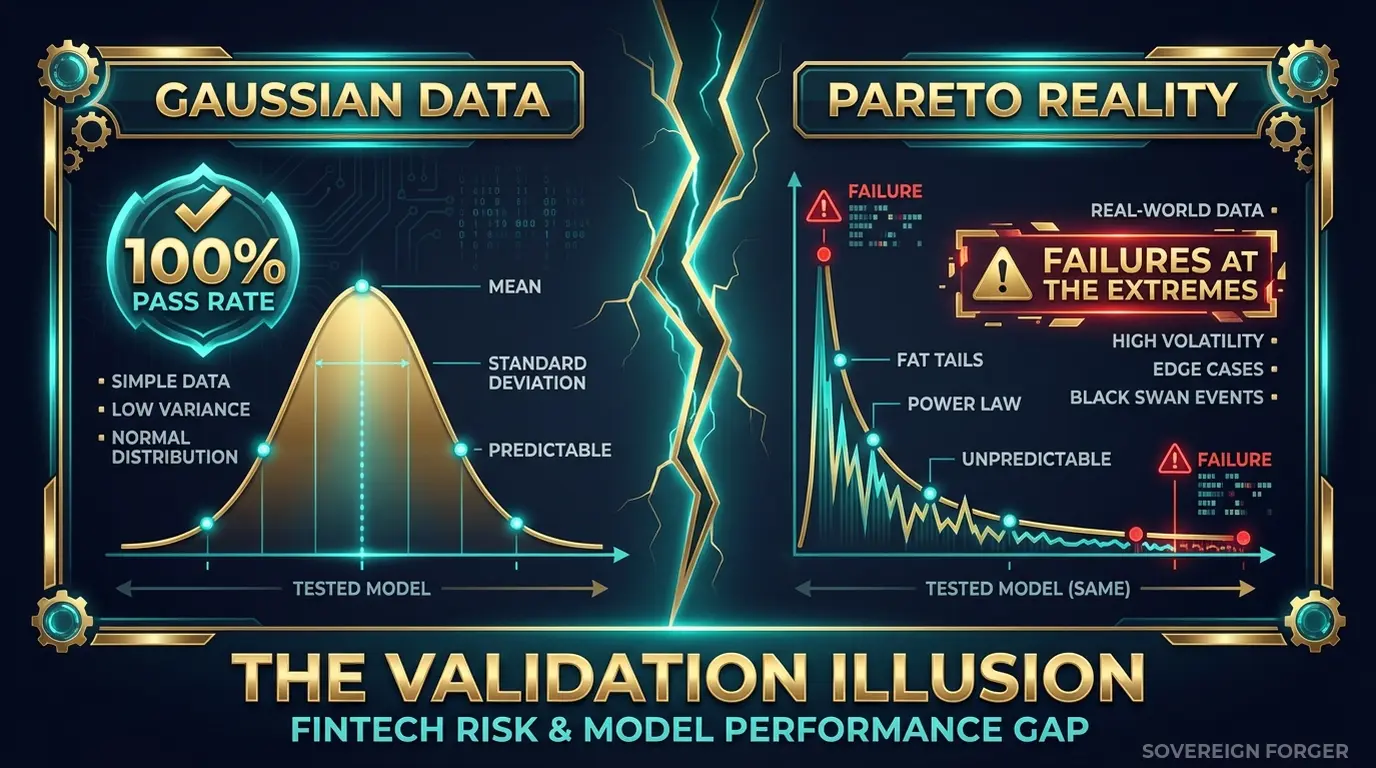

Your Validation Dataset Is Missing the Pareto Tail

I spent years inside financial institutions watching model validation teams run their quarterly cycles. The process looked rigorous on paper: thousands of test profiles, documented methodology, statistical coverage reports, sign-off from model risk management. Green lights across the board.

Then I started looking at the actual distribution of the validation data. It told a different story.

The test profiles clustered in the middle. Net worth between $1M and $20M. Single jurisdiction. One or two asset classes. Clean politically exposed person (PEP) status. No offshore vehicles. The validation data was a bell curve: normally distributed, symmetrical, easy to reason about.

Real ultra-high-net-worth individual (UHNWI) wealth is not a bell curve. It is a Pareto distribution: a long, heavy tail where a small number of clients hold disproportionate complexity. The client at the 99th percentile does not look like a slightly wealthier version of the client at the 50th. They look fundamentally different: four jurisdictions instead of one, layered entities instead of direct ownership, PEP connections through family members in different countries, asset compositions that span real estate, private equity, art, and commodity holdings simultaneously.

This is where models break. Not in the fat middle where 80% of clients sit, but in the thin tail where 5% of clients generate 60% of the risk. A model trained and validated on normally distributed data has never encountered the structural complexity that triggers real-world failures. It passes validation because the validation data does not contain the inputs that would make it fail.

I have seen this pattern at three different institutions. A transaction monitoring model validated against profiles with single-country exposure, then deployed on clients with cross-border flows across six jurisdictions. A risk scoring model that assigned accurate ratings to straightforward profiles but collapsed on clients with trust-held assets and nominee structures. A KYC onboarding model that flagged the right things on retail clients but missed every signal on a UHNWI with a Cayman LP layered under a BVI holding company.

The validation reports all said the same thing: model performance meets threshold. The production incidents all said the same thing: the model encountered input patterns it had never seen during validation.

The core failure is distributional. If your validation data follows a Gaussian distribution and your real client base follows a Pareto distribution, you are validating the model against the wrong reality. Your coverage metrics look perfect — 95th percentile, 99th percentile, all green. But those percentiles are computed against the wrong distribution. The 99th percentile of a bell curve is structurally trivial compared to the 99th percentile of a Pareto tail.
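To make the percentile point concrete, the sketch below compares analytic quantiles of a Gaussian and a Pareto distribution using only Python's standard library. The parameters (a Gaussian with mean $10M and standard deviation $3M, a Pareto with scale $5M and shape 1.2) are illustrative assumptions, not a calibration of any real client base. With these values the Gaussian's 99th percentile is only about 1.7 times its median, while the Pareto's is roughly 26 times its median.

```python
from statistics import NormalDist


def normal_quantile(p: float, mu: float, sigma: float) -> float:
    """p-th quantile of a Gaussian with mean mu and standard deviation sigma."""
    return NormalDist(mu, sigma).inv_cdf(p)


def pareto_quantile(p: float, x_m: float, alpha: float) -> float:
    """p-th quantile of a Pareto(x_m, alpha): x_p = x_m * (1 - p) ** (-1 / alpha)."""
    return x_m * (1.0 - p) ** (-1.0 / alpha)


def tail_ratio(quantile_fn, *params) -> float:
    """How many times larger the 99th percentile is than the median."""
    return quantile_fn(0.99, *params) / quantile_fn(0.50, *params)


# Illustrative parameters only; these are assumptions, not a calibration.
GAUSS = (10.0, 3.0)   # mean and standard deviation of net worth, $M
PARETO = (5.0, 1.2)   # scale x_m ($M) and shape alpha

if __name__ == "__main__":
    for p in (0.50, 0.95, 0.99, 0.999):
        print(f"p={p:<5}  gaussian={normal_quantile(p, *GAUSS):8.1f}M"
              f"  pareto={pareto_quantile(p, *PARETO):9.1f}M")
    print(f"p99/median  gaussian={tail_ratio(normal_quantile, *GAUSS):.1f}x"
          f"  pareto={tail_ratio(pareto_quantile, *PARETO):.1f}x")
```

A coverage report that says "99th percentile tested" therefore means very different things depending on which of these two curves the test data was drawn from.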

Traditional banks have the hardest version of this problem. Decades of accumulated client relationships across multiple geographies. Clients who were onboarded twenty years ago under different regulatory regimes. Wealth structures that evolved over generations. A Deutsche Bank private banking client looks nothing like a Deutsche Bank retail client — and your model needs to handle both. If the validation data only tests the retail side, the model is unvalidated on the side that generates the largest fines.

Three Validation Approaches That Create False Confidence

Validating with production data extracts. The most common approach, and the most legally dangerous. Model risk teams extract client records from production systems into validation environments. Every extraction is a GDPR Article 25 (data protection by design and by default) violation waiting to happen: personal data in environments with broader access, weaker controls, and insufficient audit trails. The validation team sees real profiles, but the legal team has no defensible answer when a regulator asks why 50,000 client records are sitting in a Jupyter notebook on a data scientist’s workstation. Under the EU AI Act, whose obligations for high-risk systems apply from 2 August 2026, Article 10 requires documented governance of the training, validation, and testing data used for high-risk AI systems. Extracting production data without formal provenance documentation puts you on the wrong side of two regulations simultaneously.

Validating with anonymized client data. Strip the names, mask the tax IDs, perturb the net worth figures slightly. The validation dataset now looks defensible, until someone runs a re-identification analysis. By one widely cited estimate there are only about 265,000 UHNWIs worldwide, so the combination of net worth tier, city of residence, offshore jurisdiction, and profession can uniquely identify individuals even without direct identifiers. A BNP Paribas private banking client in Geneva with $400M in assets and a Liechtenstein foundation is not anonymous: that description matches at most a handful of people on earth. Your anonymized validation data is pseudonymized at best, and GDPR applies to pseudonymized data in full. The model validation report says “anonymized.” The regulator says “prove it.”
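One simple way to start a re-identification analysis is a k-anonymity check: group records by their quasi-identifiers and look at the smallest group size. The sketch below is a minimal illustration, assuming hypothetical field names (nw_tier, city, offshore, profession) and a toy four-record extract. A real assessment would also consider attacker background knowledge and linkage to external datasets. Any record that sits alone in its group (k = 1) is effectively identifiable without a name.

```python
from collections import Counter


def k_anonymity(records, quasi_identifiers):
    """Return (k, unique_count).

    k is the smallest equivalence-class size over the quasi-identifier columns;
    unique_count is how many records are the sole member of their class.
    """
    if not records:
        raise ValueError("need at least one record")
    classes = Counter(tuple(r[q] for q in quasi_identifiers) for r in records)
    k = min(classes.values())
    unique = sum(1 for size in classes.values() if size == 1)
    return k, unique


# Hypothetical "anonymized" extract: names and tax IDs removed,
# but quasi-identifiers are still present.
records = [
    {"nw_tier": "$100M+", "city": "Geneva", "offshore": "Liechtenstein", "profession": "Banker"},
    {"nw_tier": "$100M+", "city": "Geneva", "offshore": "BVI", "profession": "Industrialist"},
    {"nw_tier": "$30-100M", "city": "Zurich", "offshore": "Cayman", "profession": "Founder"},
    {"nw_tier": "$30-100M", "city": "Zurich", "offshore": "Cayman", "profession": "Founder"},
]
QI = ["nw_tier", "city", "offshore", "profession"]

k, unique = k_anonymity(records, QI)
print(f"k={k}, records unique on quasi-identifiers: {unique}")
```

Dropping columns raises k, but every column dropped is also a feature the validation suite no longer exercises.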

Validating with off-the-shelf synthetic generators. Platform-based generators tend to produce high-volume, structurally flat profiles. Many generate roughly normally distributed data because their underlying models are trained on, or configured to produce, Gaussian distributions. The output looks like thousands of slightly varied retail banking customers. Your model validation achieves high coverage metrics against this data because the data has no structural variance to expose gaps. You are measuring the model’s ability to handle simple inputs, then declaring it validated for complex ones. The coverage report is technically accurate and practically meaningless.
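A quick way to test whether a dataset actually has a heavy tail is the Hill estimator of the Pareto tail index, a standard extreme-value technique (not something these generators necessarily report). The sketch assumes net worth values are positive numbers and that k, the number of top observations used, is chosen by the analyst; k = 500 out of 20,000 is an arbitrary illustrative choice. Pareto-like data yields a small index near its true shape, while Gaussian-like data yields a much larger one.

```python
import math
import random


def hill_tail_index(values, k):
    """Hill estimator of the Pareto tail index alpha from the k largest values.

    Small alpha (roughly 1 to 3) indicates a heavy, Pareto-like tail;
    very large alpha indicates a thin tail such as a Gaussian's.
    """
    xs = sorted((v for v in values if v > 0), reverse=True)
    if not 0 < k < len(xs):
        raise ValueError("k must be between 1 and len(positive values) - 1")
    threshold = xs[k]  # the (k+1)-th largest value
    mean_log_excess = sum(math.log(x / threshold) for x in xs[:k]) / k
    return 1.0 / mean_log_excess


rng = random.Random(7)
pareto_nw = [rng.paretovariate(1.5) for _ in range(20_000)]           # heavy tail
gaussian_nw = [max(rng.gauss(10.0, 2.0), 0.01) for _ in range(20_000)]  # thin tail

print(f"Pareto sample:   alpha_hat = {hill_tail_index(pareto_nw, 500):.2f}")    # should land near 1.5
print(f"Gaussian sample: alpha_hat = {hill_tail_index(gaussian_nw, 500):.2f}")  # much larger
```

Running the same estimator on the net worth column of your current validation set is a cheap first check of whether it contains a tail at all.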

Production Data vs. Anonymized vs. Born-Synthetic for Model Validation

| Dimension | Production Data | Anonymized | Born-Synthetic |
|---|---|---|---|
| PII present | Yes | Residual | None |
| Re-identification risk | Certain | Probable (UHNWI) | Impossible |
| GDPR Art. 25 compliant | No | Disputed | Yes |
| EU AI Act Art. 10 | At risk (provenance gaps) | Unclear | Compliant |
| Distributional realism | High (same source) | Degraded by perturbation | Pareto by construction |
| Tail coverage | Depends on extraction | Lost in anonymization | Built-in (31 archetypes) |
| Certifiable for auditors | No | No | Yes (Certificate of Sovereign Origin) |
| Repeatable across cycles | No (data changes) | No (source changes) | Yes (deterministic) |
| GDPR fine exposure | Up to 4% of global annual turnover | Up to 4% of global annual turnover | Zero |

Born-Synthetic Validation Data Built on the Right Distribution

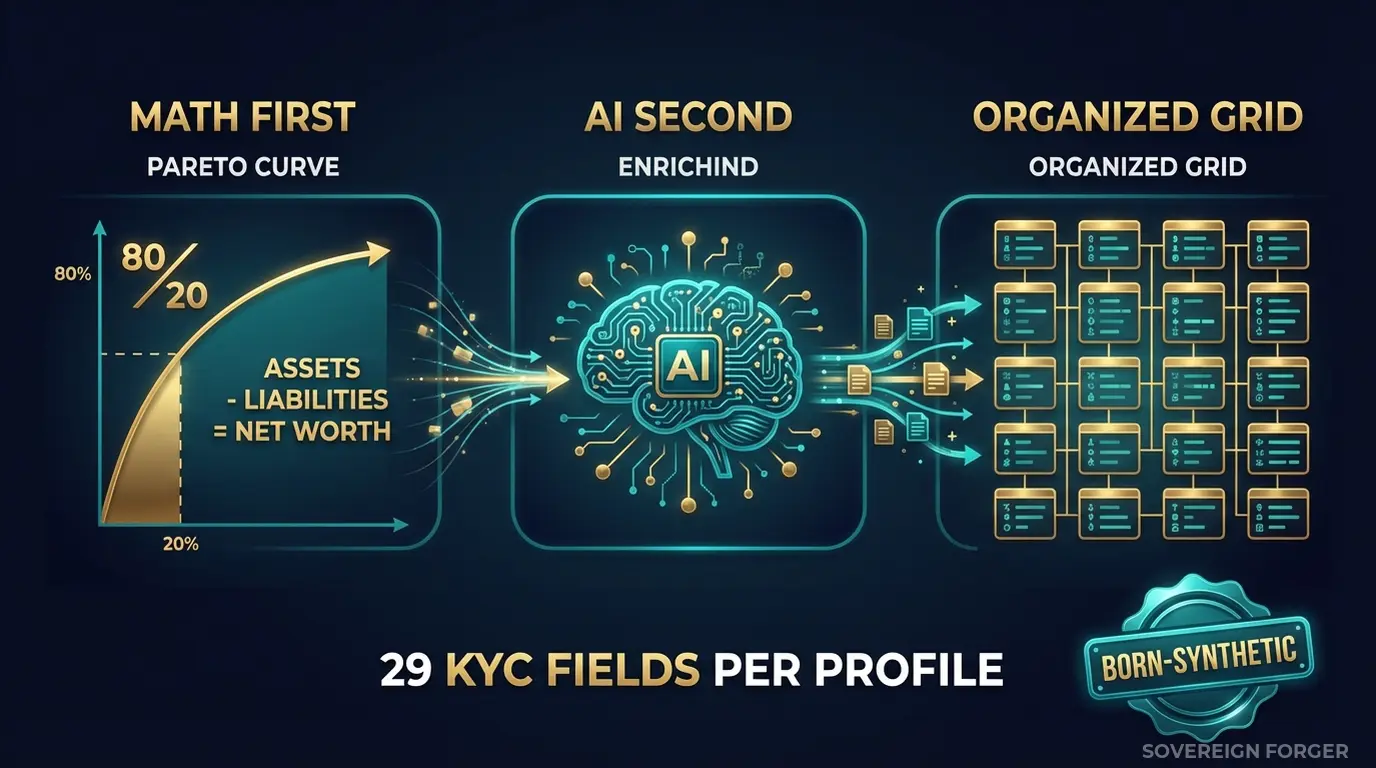

I built the Sovereign Forger pipeline specifically to solve the distributional problem. Every profile is generated from mathematical constraints that encode the way real wealth is actually distributed — not the way most generators assume it is distributed.

Math First. Net worth follows a Pareto distribution with shape parameters calibrated per geographic niche. This is not a cosmetic choice — it is the structural foundation. A Pareto distribution produces the heavy tail that bell curves miss: a small number of profiles with extreme complexity, layered asset structures, and multi-jurisdictional exposure. These are exactly the profiles your model needs to encounter during validation but never does.
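A minimal sketch of per-niche Pareto sampling, assuming hypothetical niche keys and parameters: the pipeline's actual shape values are not given here, and the $30M scale simply mirrors the common UHNWI threshold. Python's `random.paretovariate(alpha)` draws from a Pareto with scale 1, so each draw is multiplied by the niche's scale x_m.

```python
import random

# Hypothetical per-niche parameters: (scale x_m in USD, shape alpha).
# Lower alpha means a heavier tail. These are placeholders, not the real calibration.
NICHE_PARAMS = {
    "silicon_valley": (30_000_000, 1.3),
    "old_money_europe": (30_000_000, 1.6),
    "middle_east": (30_000_000, 1.2),
    "latam": (30_000_000, 1.5),
    "pacific_rim": (30_000_000, 1.4),
    "swiss_singapore": (30_000_000, 1.5),
}


def sample_net_worth(niche: str, rng: random.Random) -> int:
    """Draw one net worth (whole USD) from the niche's Pareto(x_m, alpha).

    random.paretovariate(alpha) returns values >= 1 (scale 1),
    so multiplying by x_m gives values >= x_m.
    """
    x_m, alpha = NICHE_PARAMS[niche]
    return round(x_m * rng.paretovariate(alpha))


rng = random.Random(2024)
sample = [sample_net_worth("middle_east", rng) for _ in range(10_000)]
print(f"min={min(sample):,}  max={max(sample):,}")
```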

Asset allocations are computed within algebraic constraints: Assets – Liabilities = Net Worth, by construction. Every balance sheet balances on every record. Zero exceptions. This matters for model validation because your model is learning relationships between financial variables. If the training and validation data contains records where assets minus liabilities does not equal net worth — which is common in generated data — the model learns a broken relationship and your validation metrics are measuring performance against inconsistent inputs.
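The identity can be enforced by deriving liabilities from assets and net worth rather than sampling all three independently. The sketch below takes a leverage ratio (liabilities as a share of assets) and asset-class weights as inputs, both hypothetical. It works in whole dollars so floating-point rounding cannot break the identity, and it assigns any rounding remainder to the largest asset class so the composition also sums exactly to total assets. The composition keys reuse field names from the schema, but how the pipeline actually maps them into `assets_composition` is an assumption.

```python
import math


def build_balance_sheet(net_worth_usd: int, leverage: float, weights: dict) -> dict:
    """Build a balance sheet where total_assets - total_liabilities == net_worth_usd.

    leverage: liabilities as a fraction of total assets, 0 <= leverage < 1.
    weights:  asset class -> share of total assets (must sum to 1).
    """
    if not 0 <= leverage < 1:
        raise ValueError("leverage must be in [0, 1)")
    if not math.isclose(sum(weights.values()), 1.0):
        raise ValueError("asset weights must sum to 1")

    total_assets = round(net_worth_usd / (1 - leverage))
    # Liabilities are derived, not sampled, so the identity cannot break.
    total_liabilities = total_assets - net_worth_usd

    # Split assets by weight (floored), then give the rounding remainder
    # to the largest class so the composition sums exactly to total_assets.
    composition = {name: math.floor(total_assets * w) for name, w in weights.items()}
    largest = max(weights, key=weights.get)
    composition[largest] += total_assets - sum(composition.values())

    return {
        "net_worth_usd": net_worth_usd,
        "total_assets": total_assets,
        "total_liabilities": total_liabilities,
        "assets_composition": composition,
    }


bs = build_balance_sheet(
    250_000_000, 0.2,
    {"property_value": 0.35, "core_equity": 0.45, "cash_liquidity": 0.20},
)
print(bs)
```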

AI Second. A local AI model running offline adds narrative context — biography, profession, philanthropic focus — after the financial figures are locked. The AI never touches the numbers. It enriches the profile with culturally and professionally coherent details that match the geographic niche and wealth archetype. For model validation, this means the non-numeric features are consistent with the numeric ones: a tech founder in Silicon Valley has a biography that matches their asset composition, not a randomly assigned narrative that contradicts the financial profile.

Why This Matters for Model Validation Specifically

Model validation is not just about volume — it is about distributional coverage. A validation dataset of 100,000 profiles is worthless if all 100,000 are structurally similar. What matters is whether the dataset contains the full range of input patterns your model will encounter in production.

31 wealth archetypes encode this range. Tech founders, dynasty heirs, commodity traders, private bankers, family office principals, real estate developers, shipping magnates — each archetype generates a distinct wealth structure with different asset compositions, liability patterns, and offshore exposure. Your model sees 31 fundamentally different client architectures, not 100,000 variations of the same template.

6 geographic niches add jurisdictional complexity. A Middle East sovereign family profile has different PEP exposure, different offshore vehicle preferences, and different asset composition patterns than an Old Money European dynasty. Your risk scoring model needs to handle both — and the validation data needs to contain both.
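Whether a validation dataset actually contains both is easy to check. The sketch below counts records per (archetype, niche) cell and lists empty or thin cells; with 31 archetypes and 6 niches there are 186 cells to fill. The `archetype` and `niche` field names are assumptions, since neither appears in the published field list, and `min_count` is a threshold you would set in your own test plan.

```python
from collections import Counter
from itertools import product


def coverage_report(records, archetypes, niches, min_count=1):
    """Return (covered_cells, total_cells, thin_cells) for an archetype x niche grid.

    A cell is "thin" when it holds fewer than min_count records.
    """
    counts = Counter((r["archetype"], r["niche"]) for r in records)
    cells = list(product(archetypes, niches))
    thin = [cell for cell in cells if counts[cell] < min_count]
    return len(cells) - len(thin), len(cells), thin


# Toy example with hypothetical labels: 2 archetypes x 2 niches = 4 cells.
ARCHETYPES = ["tech_founder", "dynasty_heir"]
NICHES = ["silicon_valley", "middle_east"]
recs = [
    {"archetype": "tech_founder", "niche": "silicon_valley"},
    {"archetype": "tech_founder", "niche": "middle_east"},
    {"archetype": "dynasty_heir", "niche": "middle_east"},
]
covered, total, thin = coverage_report(recs, ARCHETYPES, NICHES)
print(f"{covered}/{total} cells covered; missing: {thin}")
```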

Deterministic KYC signals mean that every profile’s risk rating, PEP status, and sanctions screening result is derived from the profile’s underlying characteristics — not randomly assigned. A high-net-worth individual with offshore vehicles in high-risk jurisdictions gets a higher risk rating than a domestic-only client. This reflects the real correlation structure your model is supposed to learn. Randomly assigned risk labels teach the model that risk is noise. Deterministically derived risk labels teach the model that risk has structure.
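A minimal sketch of what "derived, not assigned" can look like: a fixed scoring rule over profile fields. The weights, the $500M threshold, and the score cut-offs are hypothetical placeholders rather than the pipeline's actual logic, and boolean types for `pep_status` and the flag fields are assumed. The point is that identical inputs always produce the same rating, and offshore exposure in a high-risk jurisdiction always raises it.

```python
def derive_kyc_risk_rating(profile: dict) -> str:
    """Derive a risk rating from profile characteristics using fixed,
    hypothetical weights. No randomness: same inputs, same rating."""
    score = 0
    if profile.get("offshore_jurisdiction"):
        score += 1  # any offshore vehicle
    if profile.get("high_risk_jurisdiction_flag"):
        score += 2  # exposure to a high-risk jurisdiction
    if profile.get("pep_status"):
        score += 2  # politically exposed person
    if profile.get("adverse_media_flag"):
        score += 2  # adverse media hit
    if profile.get("net_worth_usd", 0) >= 500_000_000:
        score += 1  # size used as a rough complexity proxy

    # Hypothetical cut-offs mapping the score to a rating band.
    if score >= 4:
        return "high"
    if score >= 2:
        return "medium"
    return "low"
```

Because the label is a function of the features, a model trained or validated on these records can, in principle, recover the rule; randomly assigned labels offer nothing to recover.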

Reproducibility across validation cycles. Every profile is generated from a deterministic seed. Run the pipeline twice, get the same output. This means your validation results are reproducible: if the model fails on profile X in Q1, you can verify the fix against the exact same profile in Q2. With production data extracts, the underlying data shifts between cycles and you can never be sure whether performance changes are due to model changes or data changes.
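One way to make individual profiles reproducible is to derive each profile's random seed by hashing a master seed together with the profile index, so profile X can be regenerated without replaying the profiles before it. The sketch below is a hypothetical illustration: the master seed string, the placeholder distributions, and `generate_profile` are assumptions. Python only guarantees that `random.Random.random()` repeats for the same seed across versions, so the interpreter version should be pinned alongside the seed.

```python
import hashlib
import random


def profile_rng(master_seed: str, profile_index: int) -> random.Random:
    """Derive an independent, reproducible RNG for a single profile by hashing
    (master_seed, profile_index) into an integer seed."""
    digest = hashlib.sha256(f"{master_seed}:{profile_index}".encode()).digest()
    return random.Random(int.from_bytes(digest[:8], "big"))


def generate_profile(master_seed: str, profile_index: int) -> dict:
    """Hypothetical generator: placeholder Pareto net worth and offshore flag."""
    rng = profile_rng(master_seed, profile_index)
    net_worth = round(30_000_000 * rng.paretovariate(1.4))  # placeholder draw
    has_offshore = rng.random() < 0.4                        # placeholder probability
    return {"profile_index": profile_index,
            "net_worth_usd": net_worth,
            "has_offshore": has_offshore}


# The profile that failed in Q1 can be regenerated on its own in Q2.
q1 = generate_profile("validation-set-v1", 4217)
q2 = generate_profile("validation-set-v1", 4217)
print(q1 == q2)
```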

29 Fields Designed for Model Validation

Every KYC-Enhanced profile includes the fields your models actually process:

Identity & Geography: full_name, residence_city, residence_zone, tax_domicile

Wealth Structure: net_worth_usd, total_assets, total_liabilities, property_value, core_equity, cash_liquidity, assets_composition, liabilities_composition

Professional Context: profession, education, narrative_bio, philanthropic_focus

Offshore Exposure: offshore_jurisdiction, offshore_vehicle

KYC Signals: kyc_risk_rating, pep_status, pep_position, pep_jurisdiction, sanctions_screening_result, sanctions_match_confidence, adverse_media_flag, source_of_wealth_verified, sow_verification_method, high_risk_jurisdiction_flag

For model validation specifically, the wealth structure fields and KYC signal fields provide the numeric and categorical inputs your models consume. The Pareto-distributed net worth creates the tail observations your model needs to be tested against. The correlated KYC signals provide the target variables your classification models predict. And the 29-field schema is wide enough to test feature interaction effects — how does the model behave when high net worth combines with high-risk jurisdiction and PEP status simultaneously?
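The interaction question can be answered by slicing validation results. The sketch below compares a model's error rate on the segment where high net worth, a high-risk jurisdiction flag, and PEP status coincide against the rest of the dataset. The $500M threshold, the boolean field types, and exact-match scoring against `kyc_risk_rating` are illustrative assumptions.

```python
def segment_error_rates(records, predictions, label_field="kyc_risk_rating",
                        nw_threshold=500_000_000):
    """Error rate (prediction != label) inside the interaction segment vs. the rest.

    Returns {"segment": rate_or_None, "rest": rate_or_None}.
    """
    if len(records) != len(predictions):
        raise ValueError("records and predictions must have the same length")

    def in_segment(r):
        return (r["net_worth_usd"] >= nw_threshold
                and bool(r["high_risk_jurisdiction_flag"])
                and bool(r["pep_status"]))

    counts = {"segment": [0, 0], "rest": [0, 0]}  # [errors, total]
    for r, pred in zip(records, predictions):
        bucket = counts["segment" if in_segment(r) else "rest"]
        bucket[0] += int(pred != r[label_field])
        bucket[1] += 1
    return {name: (errors / total if total else None)
            for name, (errors, total) in counts.items()}
```

A large gap between the two rates is the kind of interaction effect that aggregate accuracy hides.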

Validation Data That Tests the Full Distribution

6 Geographic Niches: Silicon Valley, Old Money Europe, Middle East, LatAm, Pacific Rim, Swiss-Singapore — each with distinct wealth distributions, jurisdictional exposure, and PEP prevalence. Your model is validated against the global client base, not a single-geography proxy.

31 Wealth Archetypes: The structural diversity that generic generators cannot produce. Each archetype generates a different combination of asset classes, liability structures, offshore vehicles, and risk signals. Your model encounters the full input space it will face in production.

Pareto-Distributed Net Worth: The heavy tail is built into the data by construction. Your coverage metrics reflect actual distributional coverage — not coverage against a bell curve that misses the complexity where failures occur.

Balanced Balance Sheets: Assets – Liabilities = Net Worth on every record. Your model learns from consistent financial relationships, not from data where the fundamental accounting identity is violated.

Deterministic KYC Signals: Risk ratings and PEP statuses derived from profile characteristics, not randomly assigned. The correlation structure in the validation data matches the correlation structure in production.

Pricing

| Tier | Records | Price | Best For |
|---|---|---|---|
| Compliance Starter | 1,000 | $999 | Initial model validation POC |
| Compliance Pro | 10,000 | $4,999 | Full validation suite, stress testing |
| Compliance Enterprise | 100,000 | $24,999 | Enterprise model governance, AI training + validation |

No SDK. No API key. No sales call. Download a file, load it into your validation pipeline, and run your test suite. The data arrives in JSONL and CSV with a Certificate of Sovereign Origin documenting provenance and methodology.
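A minimal loading step, assuming one JSON object per line and checking only a few of the fields listed in the schema section; the `REQUIRED_FIELDS` subset and the file name in the usage comment are hypothetical. Records that fail to parse or lack a field are reported with their line number instead of silently entering the test suite.

```python
import json

# A subset of the documented fields; extend to whatever your suite consumes.
REQUIRED_FIELDS = (
    "net_worth_usd", "total_assets", "total_liabilities",
    "kyc_risk_rating", "pep_status", "high_risk_jurisdiction_flag",
)


def load_profiles(lines, required=REQUIRED_FIELDS):
    """Parse JSONL lines; return (profiles, problems) where problems is a list
    of (line_number, message) for unparseable or incomplete records."""
    profiles, problems = [], []
    for lineno, line in enumerate(lines, start=1):
        if not line.strip():
            continue  # tolerate blank lines
        try:
            record = json.loads(line)
        except json.JSONDecodeError as exc:
            problems.append((lineno, f"invalid JSON: {exc.msg}"))
            continue
        missing = [f for f in required if f not in record]
        if missing:
            problems.append((lineno, f"missing fields: {', '.join(missing)}"))
        else:
            profiles.append(record)
    return profiles, problems


# Usage with a file on disk (file name is hypothetical):
# with open("profiles.jsonl", encoding="utf-8") as fh:
#     profiles, problems = load_profiles(fh)
```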

Why This Matters Now

The EU AI Act changes model validation requirements. AI systems used to evaluate the creditworthiness of natural persons or establish their credit score are classified as high-risk under Annex III. For high-risk systems, Article 10 requires data governance practices for training, validation, and testing data, including documentation of the data’s origin and examination for possible biases, and any personal data involved must still be processed in line with GDPR. If your validation dataset is extracted from production, you need to prove GDPR compliance for every record in the dataset. If it is anonymized, you need to prove the anonymization is irreversible, which for UHNWI data it likely is not. Born-synthetic data eliminates both problems: zero real persons, zero PII, zero lineage to any individual. Obligations for Annex III high-risk systems apply from 2 August 2026.

Regulators are explicitly targeting model risk. The PRA’s supervisory statement on model risk management (SS1/23) expects firms to demonstrate that models are validated against data that reflects the conditions under which the model will operate. The ECB’s TRIM programme flagged insufficient data quality in validation datasets as a systemic issue. SR 11-7, the model risk management guidance issued by the Federal Reserve and adopted by the OCC as Bulletin 2011-12, states that the rigor and sophistication of validation should be commensurate with the complexity and materiality of a bank’s models. Validation against structurally flat data does not meet this standard for models processing complex UHNWI clients.

The fines are not slowing down. HSBC: £63.9M for transaction monitoring failures. Danske Bank: approximately $2B for the Estonian branch money laundering scandal — the largest AML failure in European banking history. ABN AMRO: €480M for systematic compliance failures. ING: €775M. Standard Chartered: $1.1B across multiple enforcement actions. In every case, the underlying models were validated and signed off. The validation data simply did not cover the scenarios that caused the failures.

The balance sheet test is open source. Every Sovereign Forger record passes algebraic validation: Assets – Liabilities = Net Worth. Run the Balance Sheet Test on our data, then run it on whatever data you currently use for model validation. If the balance sheets do not balance, your models are learning from internally inconsistent data — and your validation results are measuring performance against noise.
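The published Balance Sheet Test is not reproduced here; the following is an independent, minimal sketch of the same check. It assumes records expose `total_assets`, `total_liabilities`, and `net_worth_usd` as numbers or numeric strings (as a CSV reader returns), and it allows a small tolerance because many exports store amounts as floats. The 0.5 USD default is an assumption.

```python
def balance_sheet_failures(records, tolerance=0.5):
    """Return indices of records where total_assets - total_liabilities
    differs from net_worth_usd by more than `tolerance` (USD).

    Values are passed through float() so CSV string fields work too.
    """
    failures = []
    for i, r in enumerate(records):
        gap = (float(r["total_assets"])
               - float(r["total_liabilities"])
               - float(r["net_worth_usd"]))
        if abs(gap) > tolerance:
            failures.append(i)
    return failures


if __name__ == "__main__":
    sample = [
        {"total_assets": 100, "total_liabilities": 40, "net_worth_usd": 60},
        {"total_assets": 100, "total_liabilities": 40, "net_worth_usd": 65},
    ]
    bad = balance_sheet_failures(sample)
    print(f"{len(sample) - len(bad)}/{len(sample)} records balance; failing rows: {bad}")
```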

Every dataset ships with a Certificate of Sovereign Origin — documenting the born-synthetic methodology, Pareto distribution parameters, zero PII lineage, and regulatory alignment. When your model risk management team asks “where did the validation data come from?”, you hand them the certificate. When the regulator asks, your MRM team hands them the same certificate.

Validate Your Models Against Realistic Data

Download 100 free KYC-Enhanced UHNWI profiles. Run your model validation suite. Check whether your model handles the Pareto tail — the complex profiles where most real-world failures occur.

Feed them into your risk scoring model and count how many profiles produce unexpected outputs. Run them through your KYC classification model and check whether the predictions are consistent with the correlated KYC signals. Load them into your transaction monitoring model and observe how it behaves with multi-jurisdictional offshore exposure.
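"Unexpected outputs" needs a definition before it can be counted. The sketch below assumes a three-band vocabulary (low, medium, high) for both `kyc_risk_rating` and the model's prediction, tallies a confusion table, and treats a prediction more than one band away from the label as unexpected. The band names and the one-band tolerance are hypothetical choices.

```python
from collections import Counter

RATING_ORDER = {"low": 0, "medium": 1, "high": 2}  # assumed label vocabulary


def rating_disagreements(labels, predictions):
    """Tally (label, prediction) pairs and count 'unexpected outputs',
    defined here as predictions more than one band away from the label."""
    if len(labels) != len(predictions):
        raise ValueError("labels and predictions must have the same length")
    confusion = Counter(zip(labels, predictions))
    unexpected = sum(n for (y, p), n in confusion.items()
                     if abs(RATING_ORDER[y] - RATING_ORDER[p]) > 1)
    return confusion, unexpected
```

Reading the off-diagonal cells of the confusion table, not just the unexpected count, shows whether the model errs toward under- or over-rating risk.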

The gap between your model’s performance on these profiles and its performance on your current validation data is the size of your model risk blind spot.

No credit card. No sales call. Just your work email.

Related reading: DORA Synthetic Data Requirements for Resilience Testing, on how DORA’s testing provisions (Articles 24-26) bear on synthetic data for resilience testing and threat-led penetration testing.

Frequently Asked Questions

How does synthetic financial data support SR 11-7 compliant model validation at traditional banks?

SR 11-7, the model risk management guidance issued by the Federal Reserve and adopted by the OCC as Bulletin 2011-12, expects banks to validate all models, including AI and ML models, and to assess the quality and relevance of the data used to build them. Sovereign Forger’s born-synthetic profiles preserve Pareto wealth distributions and realistic field correlations, enabling model developers to stress-test credit risk and fraud detection models against statistically plausible edge cases. Because no real customer records are involved, compliance teams can share validation datasets across internal teams and third-party validators without triggering the data governance restrictions that apply to customer data.

Can traditional banks use synthetic data to satisfy Basel III/IV capital model backtesting requirements under heightened supervisory scrutiny?

Traditional banks face stricter supervisory scrutiny than neobanks, and Basel III/IV demands robust backtesting of internal ratings-based (IRB) credit risk models. Synthetic data cannot replace backtesting against realized outcomes, but datasets from Sovereign Forger replicate tail-risk wealth concentrations through Pareto-derived distributions, allowing validation teams to generate thousands of statistically plausible scenarios that regulators expect to see tested alongside it. The EBA’s work on machine learning in IRB models stresses rigorous validation, and out-of-sample and adversarial testing are both accelerated when synthetic data removes access bottlenecks to sensitive production records.

How does born-synthetic KYC data reduce model validation cycle times for traditional banks preparing for EU AI Act Article 10 enforcement in August 2026?

EU AI Act Article 10 becomes enforceable in August 2026 and mandates high-quality, representative training and validation data for high-risk AI systems, a category that includes credit scoring models at traditional banks. Using born-synthetic KYC profiles with 29 interlocked fields, model validation teams can construct controlled test suites covering PEP status, sanctions screening outcomes, and source-of-wealth scenarios without waiting for anonymization pipelines or DPA approvals, compressing validation cycles from weeks to hours while meeting the data governance requirements Article 10 imposes.

What does born-synthetic mean, and why does it matter specifically for model validation at traditional banks?

Born-synthetic data is generated entirely from mathematical distributions, such as Pareto curves that reflect real-world wealth concentration, rather than derived or anonymized from any actual customer records. Because there is zero lineage to real persons, the records contain no personal data: privacy is engineered in from the start rather than retrofitted, which is the data-protection-by-design principle behind GDPR Article 25. For traditional banks, where supervisory expectations under SR 11-7 and EBA guidelines require documented data provenance, born-synthetic records provide an auditable, legally clean foundation for model validation that re-identifiable or pseudonymized data cannot offer.

How can a traditional bank’s model validation team get started with Sovereign Forger synthetic data?

Validation teams can download 100 free KYC profiles instantly using a work email address, with no credit card required. Each profile contains 29 interlocked fields covering risk ratings, PEP status, sanctions screening flags, and source of wealth, all generated from Pareto-calibrated distributions that preserve realistic correlations across financial variables. The dataset is immediately usable for model validation testing under SR 11-7 frameworks, giving risk and compliance analysts a concrete starting point for evaluating whether their AI and ML models perform accurately across the full distribution of customer risk profiles.

Learn more about synthetic data for bank model validation, and how born-synthetic data addresses it, in our glossary and comparison guides.