HSBC: £63.9M. Danske Bank: ~$2B. ABN AMRO: €480M. ING: €775M. Standard Chartered: $1.1B. Each of these cases exposed the same weakness: AML monitoring calibrated on data that lacked the structural complexity defining high-net-worth banking relationships.

Your AML Model Has Never Seen a Real UHNWI

I spent years working with data inside financial institutions, and the same pattern repeated at every traditional bank: an AML model trained on millions of retail transactions and a few thousand corporate accounts, validated against test profiles that looked like slightly wealthier versions of everyday customers. Single jurisdiction. Single bank account. One employer, one salary, one tax residence. The model scores well on internal benchmarks. Compliance signs off. The model goes to production.

Then it encounters a private banking client with a €180M portfolio distributed across a family office in Zurich, a holding company in Luxembourg, a charitable foundation in Liechtenstein, and a trust in Jersey — all under a single UBO who holds dual citizenship and a tax domicile in a third country. The AML model has two choices, and both are wrong. It flags the entire structure as suspicious — because it has never learned that multi-jurisdictional layering is standard practice for legitimate wealth preservation at this tier. Or it misses the one anomalous signal buried inside the complexity — because it has no baseline for what “normal” looks like at €180M.

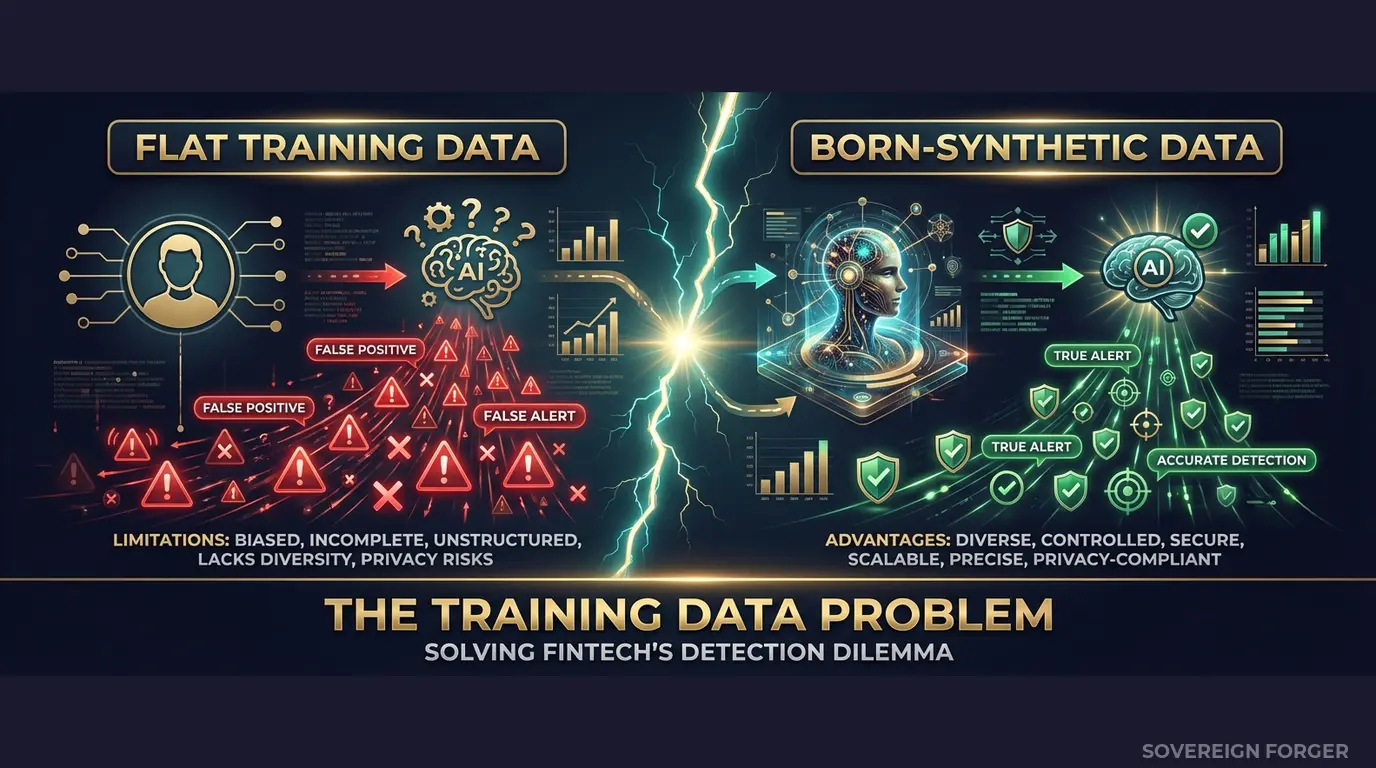

This is not a model quality problem. It is a training data problem. The model learned “normal” from profiles that bear no structural resemblance to the clients who actually trigger Enhanced Due Diligence.

I have watched this play out at scale: a traditional bank with 150 years of history, tens of thousands of UHNWI relationships, and an AML model whose alerts on the private banking book are 95% false positives. The compliance team spends three quarters of its time dismissing alerts the model should never have raised, because the model learned risk signals from retail data and then applied them to wealth structures it never encountered during training.

The consequences are measurable on both sides. False positive overload means human analysts waste thousands of hours reviewing legitimate wealth complexity. Missed true positives mean genuine risk signals — the unusual transaction pattern, the newly sanctioned jurisdiction, the PEP connection one layer deep in an ownership chain — slip through because the model cannot distinguish them from the baseline noise it was never trained to understand.

The regulatory reality is unforgiving. The FCA, the ECB, FinCEN, and MAS do not accept “our model was not trained on representative data” as a defense. When roughly €200 billion in payments from non-resident customers, a large share of them suspicious, flowed through Danske Bank’s Estonian branch, the root cause was not a missing rule: it was an entire client segment that the compliance infrastructure was not designed to monitor. The training data gap is the compliance gap.

Three Approaches That Do Not Work for AML Model Training

Traditional banks have been operating AML programs for decades. The irony is that this institutional history makes the training data problem worse, not better — because the legacy approaches are deeply embedded and rarely questioned.

Training on production client data. This is the default at most traditional banks: extract client records into a model training environment, sometimes with light masking, sometimes without. At a bank with 50,000 UHNWI relationships, this feels like a data advantage. It is actually a compliance liability. GDPR Article 25 requires data protection by design and by default, and personal data sitting in a training environment with broader team access, weaker logging, and loose purpose limitation is a finding waiting for an audit. The EU AI Act adds a second layer from August 2026. AML monitoring is not named outright in Annex III (point 5(b) covers creditworthiness assessment and expressly carves out fraud detection), but any AML system that falls within the high-risk regime must meet Article 10, which requires documented governance of training data: its origin, the original purpose of collection for any personal data, and an examination for possible bias. Using real client data for AML model training can therefore mean satisfying both regimes at once, and most banks cannot demonstrate either.

Training on anonymized client data. Stripping PII from real UHNWI profiles sounds like a solution, but it fails on two fronts. First, the re-identification risk: there are approximately 265,000 UHNWIs globally. The combination of net worth range, jurisdiction, offshore vehicle type, profession, and philanthropic focus can uniquely identify individuals even without their name or tax ID. A regulator — or a plaintiff’s attorney — can argue that your “anonymized” training data is merely pseudonymized, and GDPR applies in full. Second, the coverage problem: your existing client base represents a biased sample. You cannot train a model to detect risk in client segments you do not yet serve. If your bank is expanding into Middle Eastern family offices or Pacific Rim shipping dynasties, your historical data contains zero training examples for those wealth architectures.
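The re-identification argument can be checked mechanically. Below is a minimal sketch that assumes records are Python dicts and uses hypothetical quasi-identifier names (`net_worth_band`, `tax_domicile`, `offshore_vehicle`, `profession`, `philanthropic_focus`). It measures k-anonymity, which is the size of the smallest group of records sharing one quasi-identifier combination. It also reports the share of records that are unique on that combination. Any unique record is a re-identification candidate for anyone holding auxiliary data about the UHNWI population.

```python
from collections import Counter

# Hypothetical quasi-identifiers for illustration; adapt to your own schema.
QUASI_IDENTIFIERS = (
    "net_worth_band",
    "tax_domicile",
    "offshore_vehicle",
    "profession",
    "philanthropic_focus",
)


def reidentification_exposure(records, keys=QUASI_IDENTIFIERS):
    """Return (k, unique_share) for a list of record dicts.

    k is the smallest number of records sharing one quasi-identifier
    combination (the dataset's k-anonymity); unique_share is the fraction
    of records whose combination appears exactly once.
    """
    if not records:
        raise ValueError("no records")
    combos = [tuple(r[key] for key in keys) for r in records]
    group_sizes = Counter(combos)
    k = min(group_sizes.values())
    unique_share = sum(1 for c in combos if group_sizes[c] == 1) / len(combos)
    return k, unique_share


if __name__ == "__main__":
    twin = {"net_worth_band": "100-250M", "tax_domicile": "CH", "offshore_vehicle": "trust",
            "profession": "shipping", "philanthropic_focus": "arts"}
    loner = {"net_worth_band": "30-50M", "tax_domicile": "AE", "offshore_vehicle": "foundation",
             "profession": "real estate", "philanthropic_focus": "education"}
    print(reidentification_exposure([twin, dict(twin), loner]))  # (1, 0.3333333333333333)
```

With k = 1, at least one “anonymized” record stands alone on attributes that are often public for this population.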

Training on generic synthetic data. General-purpose synthetic data platforms typically produce profiles with the right column headers but the wrong distributions. They often draw net worth from uniform or Gaussian distributions rather than the Pareto distribution that governs real wealth concentration. They assign offshore jurisdictions at random instead of correlating them with wealth tier and geographic niche. They create KYC risk ratings with no relationship to the underlying profile. An AML model trained on this data learns patterns that do not exist in reality. The model becomes confident and wrong, the most dangerous state for a compliance system.

Real Data vs. Anonymized vs. Born-Synthetic

| Dimension | Real Client Data | Anonymized | Born-Synthetic |
|---|---|---|---|
| PII present | Yes | Residual | None |
| Re-identification risk | Certain | Probable (UHNWI) | Impossible |
| GDPR Art. 25 compliant | Hard to demonstrate | Disputed | Yes |
| EU AI Act Art. 10 (if high-risk) | Hard to evidence | Unclear | Provenance documented |
| Covers unseen client segments | No | No | Yes (6 niches, 31 archetypes) |
| Wealth distribution | Biased (your book) | Biased (your book) | Pareto (realistic) |
| Certifiable for auditors | No | No | Yes (Certificate of Sovereign Origin) |
| GDPR fine exposure | Up to 4% of global turnover | Up to 4% of global turnover | None (no personal data) |

Born-Synthetic AML Training Data Built for Traditional Bank Compliance

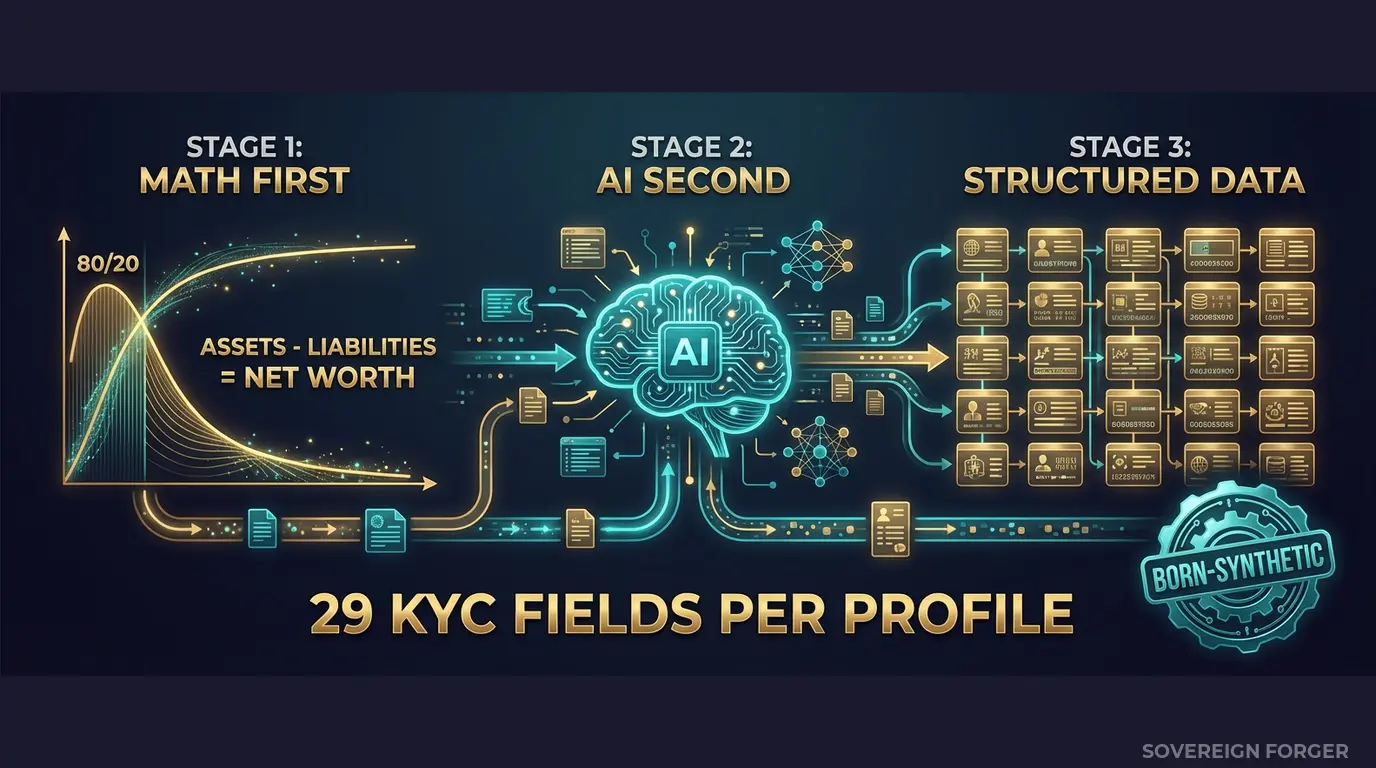

The core problem with AML training data is not volume — it is structural realism. An AML model needs to learn what legitimate UHNWI complexity looks like before it can learn what genuine risk looks like. Every profile in the Sovereign Forger KYC dataset is generated from mathematical constraints that replicate the structural patterns of real high-net-worth wealth — without deriving from any real person.

Math First. Net worth follows a Pareto distribution — the long-tail distribution that governs real wealth concentration. This is not a cosmetic choice. If your training data follows a Gaussian distribution, your model learns that most clients cluster around the mean with symmetrical tails. Real wealth does not work that way. The top 1% of UHNWIs hold wealth that is orders of magnitude above the median, and their financial structures are correspondingly more complex. I set the Pareto shape parameter to match empirical wealth data, so the model trains on a distribution that mirrors the world it will operate in.
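To see why the distribution choice matters, here is a comparison of the tail of a Pareto sample with a Gaussian one, using only Python’s standard library. Several values are illustrative assumptions rather than Sovereign Forger’s published settings: the shape parameter `ALPHA = 1.5`, the $30M floor, and the Gaussian’s mean and spread. The statistic reported is the ratio of the 99th percentile to the median. For a Pareto distribution that ratio equals 50^(1/α), about 13.6 at α = 1.5. A Gaussian centred at $50M with a $10M standard deviation gives roughly 1.5.

```python
import random
import statistics

# Illustrative assumptions only; the article does not publish its parameters.
ALPHA = 1.5          # Pareto shape: smaller values mean a heavier tail
FLOOR_USD = 30e6     # Pareto scale x_m, used here as an assumed UHNWI floor


def pareto_net_worth(n: int, rng: random.Random) -> list[float]:
    """Sample n net worth values from Pareto(ALPHA) with scale FLOOR_USD."""
    # random.paretovariate(alpha) has scale 1, so rescale by the floor.
    return [FLOOR_USD * rng.paretovariate(ALPHA) for _ in range(n)]


def gaussian_net_worth(n: int, rng: random.Random,
                       mean: float = 50e6, sd: float = 10e6) -> list[float]:
    """Sample n values from a normal distribution (the 'generic generator' case)."""
    return [rng.gauss(mean, sd) for _ in range(n)]


def tail_ratio(values: list[float]) -> float:
    """99th percentile divided by the median."""
    p99 = statistics.quantiles(values, n=100)[98]  # 99 cut points; index 98 is p99
    return p99 / statistics.median(values)


if __name__ == "__main__":
    rng = random.Random(7)
    print(f"Pareto   p99/median: {tail_ratio(pareto_net_worth(100_000, rng)):.1f}")
    print(f"Gaussian p99/median: {tail_ratio(gaussian_net_worth(100_000, rng)):.1f}")
```

A model trained on the Gaussian sample never sees a client more than about 1.5 times the median. The Pareto sample puts the top 1% an order of magnitude or more above it.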

Asset allocations are computed within algebraic constraints: Assets – Liabilities = Net Worth, by construction. Property values, core equity, cash liquidity, offshore holdings — each component is derived from the archetype and niche, not assigned randomly. A Swiss private banker has a different asset composition than a LatAm agribusiness baron, because their wealth structures are fundamentally different. Every balance sheet balances on every record. Zero exceptions.
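One way to make the identity hold by construction is to derive every figure from net worth, then push any rounding residue into a single component. This avoids checking the identity after the fact. Below is a minimal sketch that assumes whole-dollar integers and a leverage ratio (liabilities as a share of assets) as input. It uses two hypothetical archetype asset mixes, because Sovereign Forger’s actual per-archetype weights are not published. The `offshore_holdings` key is likewise an illustrative name.

```python
from dataclasses import dataclass

# Hypothetical archetype asset mixes (shares of total assets). The real
# per-archetype weights are not published; cash_liquidity takes the residual.
ASSET_MIX = {
    "swiss_private_banker": {"property_value": 0.20, "core_equity": 0.45, "offshore_holdings": 0.20},
    "latam_agribusiness": {"property_value": 0.50, "core_equity": 0.30, "offshore_holdings": 0.15},
}


@dataclass(frozen=True)
class BalanceSheet:
    net_worth_usd: int
    total_assets: int
    total_liabilities: int
    assets_composition: dict[str, int]


def build_balance_sheet(net_worth_usd: int, archetype: str, leverage: float) -> BalanceSheet:
    """Build a balance sheet whose identity holds exactly in whole dollars.

    leverage is total liabilities as a fraction of total assets, in [0, 1).
    """
    if not 0 <= leverage < 1:
        raise ValueError("leverage must be in [0, 1)")
    # Scale assets so that assets * (1 - leverage) is approximately net worth...
    total_assets = round(net_worth_usd / (1 - leverage))
    # ...then define liabilities as the difference, so A - L = NW exactly.
    total_liabilities = total_assets - net_worth_usd
    parts = {name: int(total_assets * share) for name, share in ASSET_MIX[archetype].items()}
    # Cash absorbs the remainder, so the components sum exactly to total assets.
    parts["cash_liquidity"] = total_assets - sum(parts.values())
    return BalanceSheet(net_worth_usd, total_assets, total_liabilities, parts)


if __name__ == "__main__":
    bs = build_balance_sheet(180_000_000, "swiss_private_banker", leverage=0.2)
    print(bs.total_assets, bs.total_liabilities)  # 225000000 45000000
```

Working in integers keeps the check exact. Float arithmetic can leave a balance sheet off by a fraction of a cent.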

AI Second. After the financial figures are locked, a local AI model adds narrative enrichment — biography, profession, education, philanthropic focus. The AI runs entirely offline on local hardware. No record ever touches the network. The enrichment is culturally coherent: a Middle Eastern sovereign family member gets a biography that reflects actual dynastic wealth patterns, not a generic template with the name swapped.

How This Solves the AML Training Data Problem

The 29 KYC-Enhanced fields are designed to give your AML model the signals it needs to learn the difference between structural complexity and genuine risk:

Identity & Geography: full_name, residence_city, residence_zone, tax_domicile — including multi-jurisdictional configurations that your retail data does not contain.

Wealth Structure: net_worth_usd, total_assets, total_liabilities, property_value, core_equity, cash_liquidity, assets_composition, liabilities_composition — algebraically balanced, Pareto-distributed, archetype-specific.

Professional Context: profession, education, narrative_bio, philanthropic_focus — enabling your model to learn that “commodity trader based in Singapore with a charitable foundation in Geneva” is a common legitimate pattern, not an anomaly.

Offshore Exposure: offshore_jurisdiction, offshore_vehicle — correlated with wealth tier and niche. A BVI holding company under a Delaware LLC is standard architecture for a Silicon Valley founder; the same structure on a profile with €2M net worth is a different signal entirely. Your model needs to learn this distinction.

KYC Signals: kyc_risk_rating, pep_status, pep_position, pep_jurisdiction, sanctions_screening_result, sanctions_match_confidence, adverse_media_flag, source_of_wealth_verified, sow_verification_method, high_risk_jurisdiction_flag — every field deterministically derived from the profile’s archetype, niche, net worth, and jurisdiction. Not randomly assigned. Not uniformly distributed.
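“Deterministically derived” can be read in a specific way. The same profile inputs always yield the same KYC signals, yet those signals still follow niche-level base rates. Below is a minimal sketch of one way to get both properties, using a random generator seeded from a hash of the profile inputs. The Middle East (~29%) and Silicon Valley (~3%) PEP rates are the figures quoted in this article. The following are hypothetical placeholders, not Sovereign Forger’s rules or any official list:

- the 5% fallback rate,
- the $100M threshold,
- the additive scoring rule,
- the `high_risk_set` input.

```python
import hashlib
import random

# PEP base rates quoted in the article; other niches would need their own values.
PEP_RATE_BY_NICHE = {"middle_east": 0.29, "silicon_valley": 0.03}
DEFAULT_PEP_RATE = 0.05          # hypothetical fallback
LARGE_WEALTH_USD = 100_000_000   # hypothetical threshold for extra scrutiny
RATINGS = ("low", "medium", "high")


def derive_kyc_signals(profile_id: str, archetype: str, niche: str,
                       net_worth_usd: int, offshore_jurisdiction: str,
                       high_risk_set: frozenset[str]) -> dict:
    """Derive KYC signals from profile inputs; identical inputs give identical outputs."""
    key = f"{profile_id}|{archetype}|{niche}|{net_worth_usd}|{offshore_jurisdiction}"
    seed = int.from_bytes(hashlib.sha256(key.encode()).digest()[:8], "big")
    rng = random.Random(seed)  # seeded per profile, so reruns reproduce the draw

    pep = rng.random() < PEP_RATE_BY_NICHE.get(niche, DEFAULT_PEP_RATE)
    high_risk_jurisdiction = offshore_jurisdiction in high_risk_set
    # Hypothetical additive score: each risk factor moves the rating up one step.
    score = int(pep) + int(high_risk_jurisdiction) + int(net_worth_usd >= LARGE_WEALTH_USD)
    return {
        "pep_status": pep,
        "high_risk_jurisdiction_flag": high_risk_jurisdiction,
        "kyc_risk_rating": RATINGS[min(score, len(RATINGS) - 1)],
    }
```

Because the seed comes from the inputs, regenerating a dataset reproduces every record exactly. Across many profiles, the PEP share in each niche still converges on its base rate.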

The key insight for AML model training: the KYC signal distributions vary by niche in ways that match real-world patterns. Middle Eastern profiles carry higher PEP rates (~29%) because sovereign family and government-adjacent wealth is structurally prevalent in that region. LatAm profiles carry higher risk ratings (~84% high-risk) because the combination of offshore vehicles, cross-border flows, and specific jurisdictions triggers more risk flags by construction. Your model trains on these realistic distributions — not on a flat 33/33/33 split across risk categories that exists nowhere in practice.
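You can check this claim on any delivered file, or audit your current training set the same way. Tabulate signal rates per niche, then measure how far the risk-rating mix sits from a flat split. Below is a minimal sketch with several assumptions. Each record is a dict with a `niche` key, which is hypothetical because the niche may be encoded differently in the actual files. The sketch also assumes a boolean `pep_status` and the rating labels `low`, `medium`, `high`.

```python
from collections import Counter


def pep_rate_by_niche(records):
    """Share of records with a truthy pep_status, per niche."""
    hits, totals = Counter(), Counter()
    for r in records:
        totals[r["niche"]] += 1
        hits[r["niche"]] += int(bool(r["pep_status"]))
    return {niche: hits[niche] / totals[niche] for niche in totals}


def distance_from_flat(records, field="kyc_risk_rating",
                       categories=("low", "medium", "high")):
    """Total variation distance between the field's mix and a uniform split.

    0.0 means a perfectly flat split (e.g. 33/33/33); larger values mean a
    more skewed mix. Assumes every value falls within `categories`.
    """
    counts = Counter(r[field] for r in records)
    n = sum(counts.values())
    k = len(categories)
    return 0.5 * sum(abs(counts[c] / n - 1 / k) for c in categories)
```

Run `distance_from_flat` per niche. A generic generator tends to score near 0 everywhere, while a niche-calibrated dataset should not.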

Built for Traditional Bank AML Model Training at Scale

6 Geographic Niches: Silicon Valley, Old Money Europe, Middle East, LatAm, Pacific Rim, Swiss-Singapore — each with distinct wealth architectures, offshore patterns, and KYC signal distributions. A model trained on all six learns the global UHNWI landscape your private banking division actually operates in.

31 Wealth Archetypes: Private bankers, commodity traders, family office principals, real estate developers, sovereign family members, tech founders, shipping magnates — the actual client profiles that your AML model encounters in production. Not “high net worth individual with large bank balance.”

Realistic KYC Signal Distributions: Risk ratings, PEP statuses, sanctions screening results, and source-of-wealth verification methods distributed with frequencies that match real-world patterns by niche. Your model learns that a 3% PEP rate in Silicon Valley and a 29% PEP rate in the Middle East are both normal — and calibrates its risk scoring accordingly.

Algebraic Consistency: Every record passes the balance sheet test: Assets – Liabilities = Net Worth. When your model trains on structurally consistent data, it learns to flag genuine inconsistencies in production — because it has a baseline for what “correct” looks like.

Pricing

| Tier | Records | Price | Best For |
|---|---|---|---|
| Compliance Starter | 1,000 | $999 | Model validation, proof of concept |
| Compliance Pro | 10,000 | $4,999 | Full model retraining cycle |
| Compliance Enterprise | 100,000 | $24,999 | Production AML training + ongoing testing |

No SDK. No API key. No sales call. Download a file, feed it into your model training pipeline, and measure the difference in false positive rates and true positive capture within one retraining cycle.
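To measure the difference in false positive rates and true positive capture, you need labelled outcomes for the same set of cases before and after retraining. Below is a minimal sketch that assumes, for each case, a ground-truth label (genuinely suspicious or not) plus each model’s flag. The article’s “95% false positives” figure is the share of alerts that turn out to be false, which is 1 − precision. That differs from the textbook false positive rate, FP / (FP + TN). The sketch reports both, alongside recall.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AlertMetrics:
    false_alert_share: float    # FP / (TP + FP): share of alerts that are false
    false_positive_rate: float  # FP / (FP + TN): share of clean cases flagged
    recall: float               # TP / (TP + FN): true positive capture


def _ratio(num: int, den: int) -> float:
    return num / den if den else 0.0


def alert_metrics(is_suspicious: list[bool], flagged: list[bool]) -> AlertMetrics:
    """Compare model flags against ground-truth labels for the same cases."""
    if len(is_suspicious) != len(flagged):
        raise ValueError("label and flag lists must be the same length")
    pairs = list(zip(is_suspicious, flagged))
    tp = sum(1 for s, f in pairs if s and f)
    fp = sum(1 for s, f in pairs if not s and f)
    fn = sum(1 for s, f in pairs if s and not f)
    tn = len(pairs) - tp - fp - fn
    return AlertMetrics(_ratio(fp, tp + fp), _ratio(fp, fp + tn), _ratio(tp, tp + fn))


if __name__ == "__main__":
    truth = [True, True, False, False, False, False]
    before = [True, False, True, True, True, True]   # old model (toy data)
    after = [True, True, True, False, False, False]  # retrained model (toy data)
    print(alert_metrics(truth, before))
    print(alert_metrics(truth, after))
```

Reporting both false-positive measures matters for this comparison. A model can cut the share of false alerts while still flagging many clean clients, and the two numbers reveal different failures.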

Why This Matters Now

The enforcement trajectory is clear. Danske Bank: ~$2B across multiple jurisdictions. HSBC: £63.9M from the FCA alone. ING: €775M. ABN AMRO: €480M. Standard Chartered: $1.1B. These fines are not historical curiosities; enforcement is accelerating. The FCA issued more financial crime penalties in 2024-2025 than in any prior two-year period. ECB Joint Supervisory Teams factor money laundering risk into their supervision of significant institutions, and the new EU Anti-Money Laundering Authority (AMLA) will begin direct supervision of selected institutions from 2028. FinCEN’s enforcement actions increasingly target the adequacy of compliance programs, not just the failures they produce.

The EU AI Act changes the equation. From August 2026, the Act’s obligations for the high-risk systems listed in Annex III apply. AML monitoring is not named there outright (point 5(b) covers creditworthiness assessment and expressly excludes fraud detection). However, any AML system that falls within the high-risk regime must meet Article 10, which calls for documented data governance covering three points:

- the origin of the training data,
- an examination for possible bias,
- data that is relevant and sufficiently representative.

If your AML model trains on real client data, you need to demonstrate a lawful basis for using it in model training (a purpose most consent frameworks do not cover). If it trains on anonymized data, you need to prove the anonymization is irreversible (nearly impossible for UHNWI populations). Born-Synthetic data eliminates both requirements: there is no real person in the lineage, by construction.

Multi-regulator exposure multiplies the risk. A traditional bank operating across the UK, EU, US, and Singapore answers simultaneously to the FCA, ECB, FinCEN, and MAS. A training data violation is not a single fine; it can be a fine in every jurisdiction where the model operates. Born-Synthetic data removes the personal-data exposure across all four regimes because the property is structural: zero PII means no data protection exposure in any jurisdiction.

The balance sheet test is open source. Every Sovereign Forger record passes algebraic validation: Assets – Liabilities = Net Worth. Run the Balance Sheet Test on our data, then run it on the training data your AML model currently uses. If the balance sheets do not balance, your model is learning from structurally broken data — and its outputs reflect that.
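The article does not reproduce the open-source test itself, so what follows is an independent minimal sketch of the same check, not the published implementation. It assumes a CSV with `total_assets`, `total_liabilities`, and `net_worth_usd` columns, which are the field names listed for the KYC-Enhanced schema. It parses amounts as `Decimal` to avoid floating-point drift and reports the row indices that fail or cannot be parsed. The one-cent tolerance is an assumption.

```python
import csv
import io
from decimal import Decimal, InvalidOperation


def balance_sheet_failures(rows, tolerance=Decimal("0.01")):
    """Return indices of rows where total_assets - total_liabilities != net_worth_usd."""
    failures = []
    for i, row in enumerate(rows):
        try:
            assets = Decimal(row["total_assets"])
            liabilities = Decimal(row["total_liabilities"])
            net_worth = Decimal(row["net_worth_usd"])
            # NaN or infinite values raise InvalidOperation here and count as failures.
            balanced = abs(assets - liabilities - net_worth) <= tolerance
        except (KeyError, TypeError, InvalidOperation):
            balanced = False  # missing or non-numeric field
        if not balanced:
            failures.append(i)
    return failures


if __name__ == "__main__":
    sample = io.StringIO(
        "total_assets,total_liabilities,net_worth_usd\n"
        "225000000,45000000,180000000\n"
        "100000000,10000000,95000000\n"
        "n/a,0,0\n"
    )
    print(balance_sheet_failures(csv.DictReader(sample)))  # [1, 2]
    # For a real export (hypothetical file name):
    # with open("profiles.csv", newline="") as fh:
    #     print(balance_sheet_failures(csv.DictReader(fh)))
```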

Every dataset ships with a Certificate of Sovereign Origin — documenting the born-synthetic methodology, zero PII lineage, and regulatory alignment across GDPR, EU AI Act, and CCPA. When your compliance officer, your auditor, or your regulator asks “where did this AML training data come from?”, you hand them the certificate. The conversation ends there.

Train Your AML Model on Data That Reflects Reality

Download 100 free KYC-Enhanced UHNWI profiles. Feed them into your AML pipeline. Check whether your model can distinguish structural complexity from genuine risk signals — or whether it flags every multi-jurisdictional profile as suspicious because it has never encountered one during training.
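Here is a concrete version of that check. Score the sample with your current model, then compare alert rates for profiles whose offshore jurisdiction differs from their tax domicile against the rest. Treating `offshore_jurisdiction != tax_domicile` as “multi-jurisdictional” is a simplifying assumption of this sketch. The `flagged` list stands in for whatever your own pipeline outputs. Suppose the multi-jurisdiction alert rate sits far above the single-jurisdiction rate, while the profiles’ own `kyc_risk_rating` values do not justify it. In that case, the model is likely reacting to structure rather than risk.

```python
def flag_rate_by_structure(records, flagged):
    """Alert rate for multi- vs single-jurisdiction profiles.

    A profile counts as multi-jurisdictional here when it has an offshore
    jurisdiction that differs from its tax domicile (a simplifying assumption).
    """
    if len(records) != len(flagged):
        raise ValueError("records and flags must align")
    counts = {"multi": [0, 0], "single": [0, 0]}  # bucket -> [alerts, profiles]
    for record, is_flagged in zip(records, flagged):
        offshore = record.get("offshore_jurisdiction")
        bucket = "multi" if offshore and offshore != record["tax_domicile"] else "single"
        counts[bucket][0] += int(is_flagged)
        counts[bucket][1] += 1
    return {bucket: (alerts / n if n else 0.0) for bucket, (alerts, n) in counts.items()}
```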

That gap between “flagged as risky” and “actually risky” is where your false positives live. And it is where your next fine comes from.

No credit card. No sales call. Just your work email.

Related reading: DORA Synthetic Data Requirements for Resilience Testing. It covers how synthetic data supports the resilience testing programme required under DORA Articles 24-25 and threat-led penetration testing under Article 26.

Frequently Asked Questions

What offshore and cross-border transaction patterns does AML training data from Sovereign Forger include for traditional bank detection models?

Sovereign Forger’s AML training data for traditional banks includes the following patterns:

- layered offshore holding structures across 40+ jurisdictions,
- correspondent banking chains with FATF grey-list counterparties,
- trade-based money laundering indicators,
- structured cash deposits calibrated to stay below Currency Transaction Report (CTR) thresholds.

Each synthetic profile interlocks beneficial ownership trees, cross-border wire frequencies, and currency mixing patterns. This gives compliance teams the realistic adversarial complexity that model validation under SR 11-7 calls for, without exposing real customer data. SR 11-7 is the Federal Reserve and OCC guidance on model risk management.

How does synthetic AML training data help traditional banks satisfy EBA model validation guidelines before the EU AI Act Article 10 enforcement deadline in August 2026?

Traditional banks face stricter supervisory scrutiny than neobanks, including expectations that machine learning models be trained on representative data with a documented bias assessment. Sovereign Forger’s born-synthetic profiles provide niche-calibrated distributions of risk tiers, PEP exposure, and sanctions-adjacent behavior, supporting the documentation that EBA-aligned model validation expects. The EU AI Act’s high-risk obligations, including Article 10, apply from August 2026. Banks that procure training data now can evidence complete data governance during regulatory examinations without retroactive remediation.

Can synthetic AML training data realistically replicate the source-of-wealth complexity that traditional bank private banking and wealth management clients present?

Sovereign Forger generates profiles that include multi-generational inherited wealth narratives, business-sale proceeds with supporting corporate structures, dividend income from complex investment vehicles, and real estate liquidation events across multiple jurisdictions. These profiles are calibrated to reflect Pareto-distributed wealth concentrations consistent with actual high-net-worth demographics. This level of source-of-wealth fidelity is critical for training AML models that must distinguish legitimate wealth accumulation from placement-stage laundering without producing excessive false positives that erode customer relationships.

What does born-synthetic mean, and why does it matter specifically for training AML detection models at traditional banks?

Born-synthetic means every profile’s financial figures are generated from mathematical distributions, including Pareto wealth curves and empirical transaction frequency models, with zero lineage to any real person. Narrative fields are then added by an offline local AI model. No real customer records were anonymized, pseudonymized, or statistically transformed to produce the data. For traditional banks, this distinction is decisive for three reasons:

- it satisfies GDPR Article 25 data protection by design and by default as a structural property rather than a compliance claim,
- it eliminates the re-identification risk that data protection authorities scrutinize,
- it removes the need for data-sharing agreements, DPIAs, or subject-access obligations before model training begins.

How can a traditional bank compliance team get started evaluating Sovereign Forger’s AML training data before committing to a procurement decision?

Sovereign Forger provides 100 free synthetic KYC profiles for instant download with a verified work email address; no credit card is required. Each profile contains 29 interlocked fields covering risk ratings, PEP status, sanctions screening results, source of wealth narratives, and beneficial ownership structures. That gives model validation teams enough volume to run baseline classifier tests and to assess field fidelity against internal data dictionaries. The sample set is sized to allow preliminary bias audits and schema compatibility checks before engaging procurement or legal review.

Learn more about synthetic AML training data for banks, and how Born Synthetic data addresses the training data gap, in our glossary and comparison guides.