This risk scoring data is built for exactly this scenario. Starling Bank: £29M. HSBC: £63.9M. N26: €9.2M. Your clients’ fines are your product’s failure. Every one of these penalties traces back to financial crime controls that could not distinguish structural complexity from genuine risk — because the risk scoring models were never calibrated against realistic UHNWI profiles.

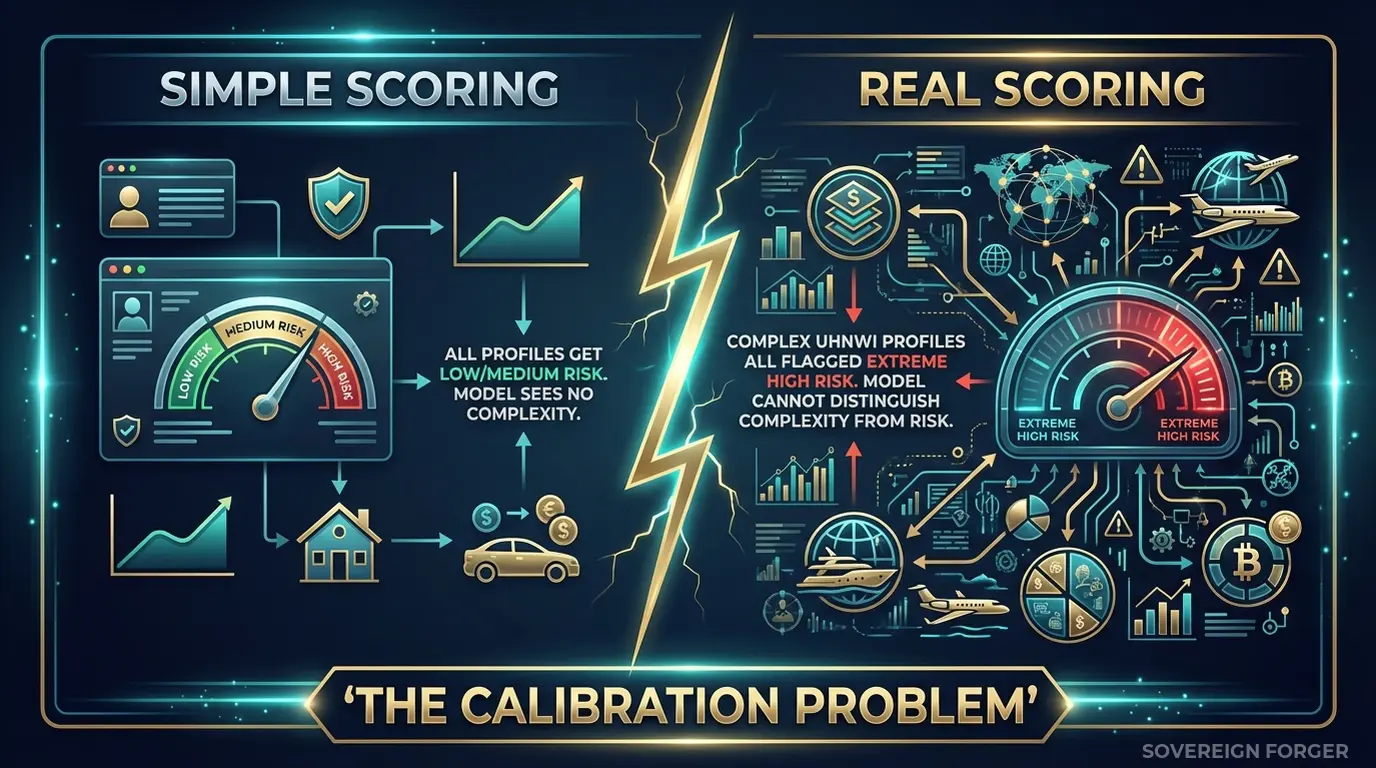

Your Risk Scoring Model Has a Calibration Problem

I have sat in product demos where a RegTech vendor showed their risk scoring engine flagging every single profile above $50M net worth as high-risk. Every one. A tech founder in Palo Alto with a straightforward Delaware LLC — high risk. A retired Swiss banker with a single-jurisdiction portfolio — high risk. A London-based family office manager with thirty years of documented wealth history — high risk.

The vendor called it “conservative.” I called it broken.

Here is what was actually happening: the risk scoring model had been trained and validated on profiles with simple structures. Single jurisdiction, single entity, one source of wealth, one bank relationship. In that training world, the only profiles with offshore vehicles, multi-jurisdictional tax domiciles, and layered entity structures were the ones labeled as suspicious. So the model learned a rule that was never written into its logic but was embedded in its training data: structural complexity equals risk.

This is not conservatism. It is a calibration failure. And it creates two problems simultaneously.

Problem one: false positive flooding. When every complex profile scores as high-risk, your client’s compliance team drowns in alerts. Analysts spend hours reviewing legitimate UHNWI clients who happen to have a family trust in Liechtenstein or a tax domicile in Singapore. Alert fatigue sets in. Real risks get lost in the noise. I have seen compliance teams where 92% of high-risk alerts were false positives — not because the analysts were careless, but because the scoring model had never learned what a legitimate complex profile looks like.

Problem two: regulatory exposure. Regulators are not just checking whether your client catches bad actors. They are checking whether the controls are proportionate. An EDD process that flags every UHNWI as high-risk is not risk-based — it is a blanket policy wearing a risk-scoring mask. When the FCA or BaFin audits your client’s AML controls and discovers that the risk model assigns identical scores to a sanctioned entity and a retired Swiss surgeon, the finding is not “too conservative.” The finding is “inadequate risk differentiation.” And that finding traces straight back to your product.

The core issue is data, not algorithms. Your scoring engine might be excellent. Your thresholds might be well-tuned. Your feature engineering might be sophisticated. But if the training and validation data contains zero examples of legitimate multi-jurisdictional wealth structures — zero profiles where a Cayman LP is a standard estate planning vehicle rather than a red flag — then the model has no basis for distinguishing between structural complexity and genuine risk. It will treat them as identical because, in its training data, they were.

This is the problem I built Sovereign Forger to solve. Not by improving algorithms, but by giving the algorithms something they have never had: training data where complex profiles exist across the full spectrum of risk, not only at the high-risk end.

Three Approaches That Don’t Work for Risk Scoring Calibration

RegTech companies face a unique version of the test data problem. Your product is the control layer. If your product is miscalibrated, your client gets fined — and then they blame you, cancel the contract, and tell every compliance officer in their network. The data you use to train and validate your risk models is not a QA detail. It is a product liability issue.

Using anonymized client data from pilot customers. Some RegTech vendors negotiate access to anonymized client portfolios from early adopters. This creates three problems at once. First, the dataset is biased toward a single institution’s client mix: a neobank’s UHNWI population looks nothing like a private bank’s. Second, if the anonymization can be reversed, the data is legally pseudonymized personal data, and GDPR (including the Article 25 obligation of data protection by design and by default) applies in your development environment just as strictly as it applies in production. Third, reversal is a realistic threat: with only 265,000 UHNWIs globally, the combination of net worth tier, offshore jurisdiction, and profession can re-identify individuals even without names. Your vendor agreement probably does not cover the scenario where a regulator argues your “anonymized” test data is actually pseudonymized.

Using generic synthetic data generators. Platform-based generators produce profiles that are structurally flat. Single jurisdiction, no offshore vehicles, no entity layering, no PEP-adjacent connections. These generators model retail banking populations and scale the numbers up. A $200M profile generated this way is a $20K profile with more zeros — it has no trust structures, no multi-jurisdictional tax planning, no family office wrapper. When you validate your risk model against these profiles, you are testing whether the model can score simple profiles correctly. You are not testing whether it can differentiate between legitimate complexity and genuine risk — because the test data contains no legitimate complexity at all.

Using manually crafted edge cases. I have seen RegTech teams build libraries of 50–100 hand-crafted “complex” profiles for model validation. A compliance analyst writes a profile with specific red flags, the QA team runs it through the model, and everyone checks that the right alerts fire. This tests detection, not calibration. You know the model flags the hand-crafted suspicious profile. You do not know how it scores the ten thousand legitimate UHNWI profiles that share some of the same structural features without being suspicious. You have tested the edges without testing the middle — and the middle is where calibration failures hide.

Real Data vs. Anonymized vs. Born-Synthetic for Risk Model Training

| Dimension | Real/Anonymized Data | Generic Synthetic | Born-Synthetic |
|---|---|---|---|
| PII present | Residual | None | None |
| Re-identification risk | Probable (UHNWI) | None | Impossible |
| Structural complexity | Real but biased | Absent | Full spectrum |
| Risk signal distribution | Skewed to one institution | Uniform/random | Niche-realistic |
| GDPR Art. 25 compliant | Disputed | Yes | Yes |
| EU AI Act Art. 10 | Unclear | Compliant | Compliant |
| Calibration validity | Limited by sample bias | Invalid (no complexity) | Valid across archetypes |
| Certifiable for auditors | No | No | Yes (Certificate of Origin) |

Born-Synthetic KYC Data Built for Risk Scoring Calibration

The fundamental requirement for risk scoring calibration is data where structural complexity varies independently of risk level. You need high-complexity profiles that are genuinely low-risk. You need simple profiles that are genuinely high-risk. You need the full matrix — not just the diagonal where complexity and risk move together.
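One way to check whether a dataset actually fills the full matrix is to cross-tabulate a complexity proxy against the risk label and look for empty cells. The sketch below is illustrative only: treating "has an offshore vehicle" as the complexity proxy is an assumption, and the function names are hypothetical.

```python
from collections import Counter

RISK_LEVELS = ("low", "medium", "high")
COMPLEXITY_LEVELS = ("simple", "complex")

def complexity_bucket(profile: dict) -> str:
    """Hypothetical proxy: a profile is 'complex' if it has an offshore vehicle."""
    return "complex" if profile.get("offshore_vehicle") else "simple"

def complexity_risk_matrix(profiles: list) -> Counter:
    """Count profiles in each (complexity, risk) cell."""
    return Counter((complexity_bucket(p), p["kyc_risk_rating"]) for p in profiles)

def empty_cells(matrix: Counter) -> list:
    """Cells with no examples; a model cannot learn a cell it has never seen."""
    return [
        (c, r)
        for c in COMPLEXITY_LEVELS
        for r in RISK_LEVELS
        if matrix[(c, r)] == 0
    ]

# Tiny hand-written sample, not real dataset rows.
sample = [
    {"offshore_vehicle": "Cayman LP", "kyc_risk_rating": "low"},
    {"offshore_vehicle": None, "kyc_risk_rating": "high"},
    {"offshore_vehicle": "Panama Foundation", "kyc_risk_rating": "high"},
    {"offshore_vehicle": None, "kyc_risk_rating": "low"},
]
matrix = complexity_risk_matrix(sample)
gaps = empty_cells(matrix)
```

If the ("complex", "low") cell is empty in your current validation set, the model has never been shown a legitimate complex profile.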

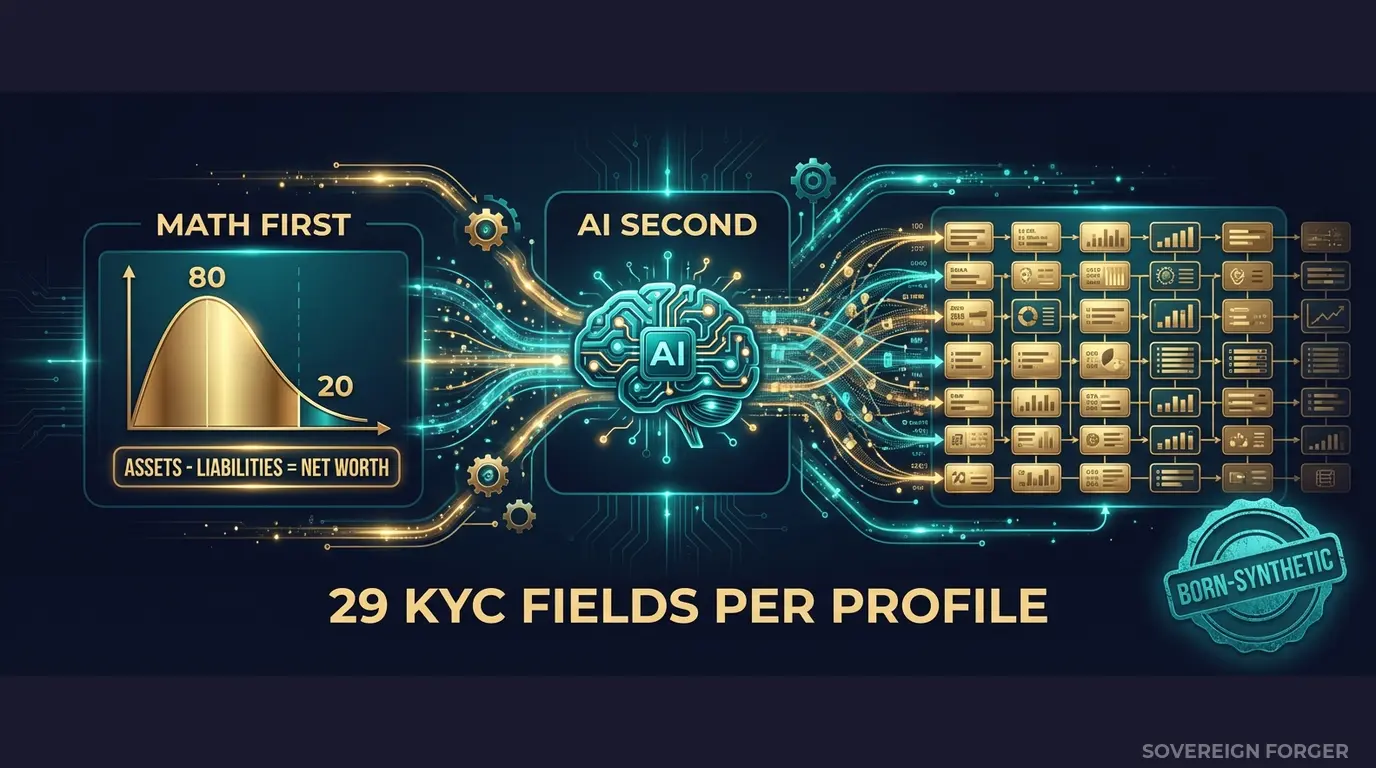

Every profile in the Sovereign Forger KYC dataset is generated from mathematical constraints that produce exactly this independence. The generation pipeline works in two stages:

Math First. Net worth follows a Pareto distribution — the way real wealth is actually distributed, with a long tail of extreme concentrations. Asset allocations are computed within algebraic constraints: Assets – Liabilities = Net Worth, by construction. Every balance sheet balances on every record. Zero exceptions. Offshore vehicles, entity structures, and jurisdictional exposures are assigned based on archetype and niche — a tech founder in Silicon Valley gets different structures than a commodity trader in the Middle East, because their wealth architectures are structurally different.
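To make the "Math First" idea concrete, here is a minimal sketch of Pareto-distributed net worth with a balance sheet that balances by construction. It is not the actual generation pipeline: the $30M floor, the shape parameter, and the leverage range are assumptions chosen for illustration.

```python
import random

def sample_net_worth(rng: random.Random, floor_usd: int = 30_000_000,
                     alpha: float = 1.5) -> int:
    """Draw a net worth from a Pareto distribution (assumed floor and shape).

    random.paretovariate returns values >= 1 with a heavy right tail,
    so scaling by the floor yields a long tail of very large fortunes.
    """
    return round(floor_usd * rng.paretovariate(alpha))

def build_balance_sheet(rng: random.Random, net_worth: int) -> dict:
    """Derive assets from net worth and liabilities so the identity always holds."""
    leverage = rng.uniform(0.0, 0.4)  # assumed: liabilities as a share of net worth
    total_liabilities = round(net_worth * leverage)
    total_assets = net_worth + total_liabilities  # identity enforced here
    return {
        "net_worth_usd": net_worth,
        "total_assets": total_assets,
        "total_liabilities": total_liabilities,
    }

rng = random.Random(42)  # fixed seed so the sketch is reproducible
sheets = [build_balance_sheet(rng, sample_net_worth(rng)) for _ in range(100)]
```

Working in whole dollars keeps the identity exact; computing assets and liabilities independently as floats and hoping they reconcile is how unbalanced records creep in.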

AI Second. A local AI model running entirely offline adds narrative context — biography, profession, philanthropic focus — after the financial figures are locked. The AI never touches the numbers. It enriches the profile with culturally coherent details that match the geographic niche and wealth tier. No data leaves the machine. No API calls. No cloud.

Why This Matters for Risk Scoring Specifically

The KYC signal layer is where this dataset becomes directly useful for risk model calibration. Every KYC-Enhanced profile includes deterministic risk signals derived from the profile’s archetype, niche, net worth, and jurisdiction:

`kyc_risk_rating` — low, medium, or high. Critically, this is distributed by niche with realistic frequencies, not randomly assigned. A LatAm profile with resource extraction wealth and an offshore vehicle in Panama scores differently than a Swiss private banker with the same net worth. Your model receives examples where high-net-worth does not automatically equal high-risk.

`pep_status` — none, domestic, foreign, or international_org. PEP rates vary by niche: Middle East profiles show approximately 29% PEP incidence, reflecting the intersection of wealth and government in the region. European profiles show lower rates. Your model learns that PEP status correlates with geography and archetype, not with risk alone.

`sanctions_screening_result` — clear, potential_match, or confirmed_match. Combined with `sanctions_match_confidence` (0–100), this gives your scoring engine graduated signal rather than binary flags.

`adverse_media_flag` and `high_risk_jurisdiction_flag` — each derived from the profile’s specific jurisdictional footprint and wealth source. A profile with legitimate business in a high-risk jurisdiction gets the flag without the sanctions match — exactly the scenario where your model needs to make a nuanced decision rather than a blanket one.

`source_of_wealth_verified` and `sow_verification_method` — tax returns, bank statements, third-party verification, or self-declared. The verification method correlates with jurisdiction and archetype. Your model receives realistic distributions of verification completeness.

The key insight: these fields are not randomly shuffled across profiles. They are deterministically derived using SHA-256 hashing of the profile UUID, so the same profile always produces the same KYC signals. The derivation rules also keep the signals internally consistent: a profile cannot simultaneously have a clean sanctions screen and a confirmed match, and a PEP cannot have zero high-risk jurisdiction exposure. The data is coherent, which is exactly what your risk model needs to learn from.
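The general technique of hash-based deterministic assignment can be sketched as follows. This is an illustration of the idea, not the Sovereign Forger derivation: the weights, confidence ranges, and function names are assumptions, and the real signals also depend on archetype, niche, net worth, and jurisdiction.

```python
import hashlib

def stable_unit_float(profile_uuid: str, field: str) -> float:
    """Map (uuid, field) to a repeatable number in [0, 1) via SHA-256."""
    digest = hashlib.sha256(f"{profile_uuid}:{field}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") / 2**64

def pick_weighted(u: float, weights: dict) -> str:
    """Choose a label by walking the cumulative weights until u is covered."""
    cumulative = 0.0
    label = None
    for label, weight in weights.items():
        cumulative += weight
        if u < cumulative:
            return label
    return label  # guard against floating-point rounding at the top end

# Illustrative weights only; not the dataset's actual frequencies.
SANCTIONS_WEIGHTS = {"clear": 0.90, "potential_match": 0.08, "confirmed_match": 0.02}

def derive_sanctions(profile_uuid: str) -> dict:
    """Derive a result first, then a confidence that is consistent with it."""
    result = pick_weighted(stable_unit_float(profile_uuid, "sanctions"), SANCTIONS_WEIGHTS)
    u = stable_unit_float(profile_uuid, "confidence")
    if result == "clear":
        confidence = 0
    elif result == "potential_match":
        confidence = 30 + int(u * 50)   # 30..79
    else:
        confidence = 95 + int(u * 6)    # 95..100
    return {"sanctions_screening_result": result,
            "sanctions_match_confidence": confidence}

first = derive_sanctions("123e4567-e89b-12d3-a456-426614174000")
second = derive_sanctions("123e4567-e89b-12d3-a456-426614174000")
```

Deriving the confidence from the already-chosen result, rather than independently, is what prevents contradictory combinations such as a clear screen with a high match confidence.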

29 Fields for End-to-End Risk Scoring Validation

Identity & Geography: full_name, residence_city, residence_zone, tax_domicile

Wealth Structure: net_worth_usd, total_assets, total_liabilities, property_value, core_equity, cash_liquidity, assets_composition, liabilities_composition

Professional Context: profession, education, narrative_bio, philanthropic_focus

Offshore Exposure: offshore_jurisdiction, offshore_vehicle

KYC Signals: kyc_risk_rating, pep_status, pep_position, pep_jurisdiction, sanctions_screening_result, sanctions_match_confidence, adverse_media_flag, source_of_wealth_verified, sow_verification_method, high_risk_jurisdiction_flag

Every field feeds directly into the features your risk scoring engine consumes. No transformation layer needed. No field mapping exercise. Load the JSONL, point your model at it, and start validating.
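Loading the file takes a few lines of standard-library Python. The sketch below reads JSONL from any text stream and reports which of the KYC signal fields listed above are missing from a record; the helper names are hypothetical and the two sample records are hand-written stand-ins, not dataset rows.

```python
import io
import json

# KYC signal field names as listed in the field groups above.
KYC_FIELDS = {
    "kyc_risk_rating", "pep_status", "pep_position", "pep_jurisdiction",
    "sanctions_screening_result", "sanctions_match_confidence",
    "adverse_media_flag", "source_of_wealth_verified",
    "sow_verification_method", "high_risk_jurisdiction_flag",
}

def read_jsonl(stream):
    """Yield one dict per non-empty line of a JSONL text stream."""
    for line in stream:
        line = line.strip()
        if line:
            yield json.loads(line)

def missing_fields(profile: dict, required=KYC_FIELDS) -> list:
    """Return the required field names that the record does not contain."""
    return sorted(set(required) - set(profile))

# In practice this would be open("profiles.jsonl", encoding="utf-8");
# the file name is a placeholder.
sample_stream = io.StringIO(
    '{"kyc_risk_rating": "low", "pep_status": "none"}\n'
    "\n"
    '{"kyc_risk_rating": "high"}\n'
)
records = list(read_jsonl(sample_stream))
gaps = missing_fields(records[1], {"kyc_risk_rating", "pep_status"})
```

Running a field check like this before scoring catches schema drift early, before it shows up as silent default values in your feature pipeline.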

Built for RegTech Risk Scoring Validation at Scale

6 Geographic Niches: Silicon Valley, Old Money Europe, Middle East, LatAm, Pacific Rim, Swiss-Singapore. Each niche produces structurally distinct wealth patterns — different offshore vehicle preferences, different PEP incidence rates, different jurisdiction exposures. Your risk model gets calibrated against the full diversity of global UHNWI wealth, not a single region’s client mix.

31 Wealth Archetypes: Tech founders, sovereign family members, commodity traders, private bankers, real estate developers, family office managers, philanthropists, political figures. Each archetype carries different structural complexity without implying different risk levels. A tech founder with a Delaware LLC and a Cayman SPV is not inherently riskier than a retired surgeon with a single bank account — but most risk models treat them as if they are.

Deterministic KYC Signal Distributions: Risk ratings, PEP statuses, sanctions screening results, and source-of-wealth verification methods are distributed with niche-realistic frequencies. LatAm profiles show approximately 84% high-risk incidence (reflecting jurisdictional exposure). European profiles show approximately 48% low-risk. These are not arbitrary numbers — they reflect the structural characteristics of wealth in each region.
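You can verify distributions like these yourself by computing rating shares per group. The sketch below assumes the niche can be read from `residence_zone`; that mapping is an assumption, so pass whichever field identifies the niche in your copy of the data. The sample rows are invented for illustration.

```python
from collections import Counter, defaultdict

def rating_shares(profiles: list, group_key: str = "residence_zone") -> dict:
    """Return {group: {rating: share}} where shares within a group sum to 1."""
    counts = defaultdict(Counter)
    for p in profiles:
        counts[p[group_key]][p["kyc_risk_rating"]] += 1
    return {
        group: {rating: n / sum(c.values()) for rating, n in c.items()}
        for group, c in counts.items()
    }

# Invented rows; the zone labels reuse niche names from the text above.
sample = [
    {"residence_zone": "LatAm", "kyc_risk_rating": "high"},
    {"residence_zone": "LatAm", "kyc_risk_rating": "high"},
    {"residence_zone": "LatAm", "kyc_risk_rating": "low"},
    {"residence_zone": "LatAm", "kyc_risk_rating": "high"},
    {"residence_zone": "Old Money Europe", "kyc_risk_rating": "low"},
    {"residence_zone": "Old Money Europe", "kyc_risk_rating": "medium"},
]
shares = rating_shares(sample)
```

Comparing these shares against your model's predicted tier shares per group is a quick first look at where the model over-flags.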

Reproducible Results: Every KYC signal is derived from a SHA-256 hash of the profile UUID. Same profile, same signals, every time. Your model validation is reproducible. Your regression tests are stable. Your audit trail is deterministic.

Pricing

| Tier | Records | Price | Best For |
|---|---|---|---|
| Compliance Starter | 1,000 | $999 | Model validation, proof of concept |
| Compliance Pro | 10,000 | $4,999 | Full regression suite, A/B testing |
| Compliance Enterprise | 100,000 | $24,999 | Production model training + validation |

No SDK. No API key. No sales call. Download a JSONL file, load it into your pipeline, and start scoring. CSV also included for teams that prefer spreadsheet review.

Why This Matters Now

Your clients are getting fined, and the scrutiny is increasing. Starling Bank paid £29M for inadequate financial crime controls. HSBC paid £63.9M. N26 paid €9.2M. When these institutions review what went wrong, the trail leads back to risk models that could not differentiate between legitimate complexity and genuine risk. If your product provided those models — or the data that trained them — you are part of the post-mortem.

The EU AI Act changes the equation for RegTech vendors. Annex III classifies several financial AI uses, including creditworthiness assessment, as high-risk. For high-risk systems, Article 10 requires documented governance of training data, including its origin and an examination for possible biases, and it applies alongside your GDPR obligations. If your risk scoring engine trains on anonymized client data from a pilot customer, you need to prove compliance on both GDPR and the EU AI Act simultaneously, for your product and not just for your client’s deployment. The bulk of the Act’s obligations are scheduled to apply from August 2026.

The calibration test is measurable. Take your current risk model. Score 1,000 Sovereign Forger KYC profiles. Count how many high-net-worth, low-risk profiles your model assigns to the highest risk tier, then divide by the total number of low-risk profiles in the set. That ratio is your false positive rate on complex legitimate clients, a number you have never been able to measure because your test data never contained complex legitimate clients.
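The measurement itself is a few lines of code. In this sketch, `predict_tier` stands in for your own scoring engine and `naive_model` is a deliberately miscalibrated placeholder; both names are hypothetical, and "legitimate complex" is approximated as a low `kyc_risk_rating` plus an offshore vehicle, which is an assumption.

```python
def complex_legit_false_positive_rate(profiles: list, predict_tier) -> float:
    """Share of low-risk profiles with an offshore vehicle that the model
    assigns to the 'high' tier."""
    legit_complex = [
        p for p in profiles
        if p["kyc_risk_rating"] == "low" and p.get("offshore_vehicle")
    ]
    if not legit_complex:
        raise ValueError("no legitimate complex profiles in the sample")
    flagged = sum(1 for p in legit_complex if predict_tier(p) == "high")
    return flagged / len(legit_complex)

def naive_model(profile: dict) -> str:
    """Placeholder model that equates any offshore vehicle with high risk."""
    return "high" if profile.get("offshore_vehicle") else "low"

# Invented rows for illustration.
sample = [
    {"kyc_risk_rating": "low", "offshore_vehicle": "Cayman LP"},
    {"kyc_risk_rating": "low", "offshore_vehicle": "Liechtenstein Trust"},
    {"kyc_risk_rating": "low", "offshore_vehicle": None},
    {"kyc_risk_rating": "high", "offshore_vehicle": "Panama Foundation"},
]
rate = complex_legit_false_positive_rate(sample, naive_model)
```

A model that has learned "complexity equals risk" scores every legitimate complex profile as high and the rate approaches 1.0; a calibrated model should sit far below that.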

The balance sheet test is open source. Every Sovereign Forger record passes algebraic validation: Assets – Liabilities = Net Worth. Run the Balance Sheet Test on our data, then run it on whatever test data you currently use for model validation. If the balance sheets do not balance, your risk model is learning from structurally incoherent data — and its outputs will reflect that incoherence.
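A check along these lines is easy to run against any test dataset. The following is a minimal sketch of the idea, not the published Balance Sheet Test itself; the function name and the optional tolerance parameter are assumptions.

```python
def balance_sheet_violations(profiles: list, tolerance: float = 0.0) -> list:
    """Return the indexes of records where Assets - Liabilities != Net Worth."""
    return [
        i for i, p in enumerate(profiles)
        if abs(p["total_assets"] - p["total_liabilities"] - p["net_worth_usd"]) > tolerance
    ]

# Invented rows: the second one is off by one million.
sample = [
    {"total_assets": 120_000_000, "total_liabilities": 20_000_000, "net_worth_usd": 100_000_000},
    {"total_assets": 80_000_000, "total_liabilities": 10_000_000, "net_worth_usd": 71_000_000},
    {"total_assets": 50_000_000, "total_liabilities": 0, "net_worth_usd": 50_000_000},
]
bad_rows = balance_sheet_violations(sample)
```

An empty result means every record balances; any index returned points at a record whose financial fields contradict each other.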

Every dataset ships with a Certificate of Sovereign Origin — documenting the born-synthetic methodology, zero PII lineage, and regulatory alignment. When your client’s auditor asks where the model validation data came from, you hand them the certificate. When your own compliance review asks the same question, you have a documented answer. Born-Synthetic data. Zero real PII. Compliant by construction — not by anonymization.

Calibrate Your Risk Scoring Models

Download 100 free KYC-Enhanced UHNWI profiles with deterministic risk signals. Run them through your scoring engine. Count how many legitimate complex profiles your model assigns to the highest risk tier.

That count, divided by the number of legitimate complex profiles in the sample, is the false positive rate your clients are living with, and the product liability you are carrying.

No credit card. No sales call. Just your work email.

Frequently Asked Questions

Why do risk scoring models trained on limited or homogeneous KYC data fail in production environments?

Risk scoring models require calibration across the full spectrum of customer risk profiles to function reliably. When training data lacks diversity in jurisdictions, risk ratings, or edge cases such as PEP exposure or complex source-of-wealth scenarios, models develop blind spots that produce systematic miscalibration. Regulators increasingly scrutinize model governance under EU AI Act Art. 10, which requires training data to be relevant, sufficiently representative and, to the best extent possible, free of errors and complete. The fines against neobanks including Starling (£29M) and N26 (€9.2M) show the cost of inadequate AML controls downstream of poor model foundations.

How can RegTech providers demonstrate risk scoring platform accuracy to prospective clients without exposing real customer data?

RegTech providers routinely need to run live demonstrations, proof-of-concept integrations, and sandbox environments for prospective bank and insurer clients. Using real customer data in these contexts conflicts with the GDPR data minimisation principle (Art. 5(1)(c)) and the data-protection-by-design obligation in Art. 25, and it creates serious liability exposure. Synthetic KYC profiles allow vendors to populate demo environments with hundreds of realistic records spanning low, medium, and high risk ratings, multiple jurisdictions, PEP flags, and sanctions hits. That enables credible product demonstrations without any personal data leaving controlled systems or triggering regulatory scrutiny.

What specific risk scoring edge cases must synthetic KYC profiles cover to produce well-calibrated AML and fraud models?

Effective risk scoring calibration requires profiles representing statistically rare but high-consequence scenarios that real datasets often underrepresent. These include politically exposed persons across jurisdictions, customers with adverse media hits, complex multi-layered source-of-wealth structures, sanctions list proximity, and customers whose transaction patterns mimic legitimate behaviour while crossing risk thresholds. A dataset covering only common low-risk profiles will produce models that score 95 percent of customers accurately but systematically fail on the 5 percent who pose actual regulatory risk, precisely the failure mode that draws supervisory action.

What does born-synthetic mean for KYC data, and why does it matter specifically for RegTech risk scoring applications?

Born-synthetic data is generated entirely from mathematical distributions such as Pareto models for wealth and income, with no source records derived from or linked to real individuals. Unlike anonymised or pseudonymised data, born-synthetic KYC profiles have zero lineage to real persons, eliminating re-identification risk by construction rather than by policy. This makes the data GDPR Art.25 compliant by design, not by assertion. For RegTech risk scoring, this distinction is critical because model training pipelines, audit trails, and client-facing demonstrations all require documented data provenance that withstands regulatory review without legal exposure.

How quickly can a RegTech team begin testing a risk scoring model using Sovereign Forger synthetic KYC profiles?

Sovereign Forger provides 100 free synthetic KYC profiles available for instant download via work email registration with no credit card required. Each profile contains 29 interlocked fields designed to maintain internal consistency across demographics, financials, and compliance attributes. The dataset covers the full range of risk ratings from low through high, PEP status indicators, sanctions screening flags, and source-of-wealth classifications, giving risk scoring teams an immediately usable calibration dataset that reflects realistic regulatory edge cases from the first day of model development.
