Starling Bank: £29M. HSBC: £63.9M. N26: €9.2M. Your clients paid those fines, and every one traces back to AML and KYC models that passed validation but failed in production. If the data you validate against does not contain the complexity your clients face, your product is the liability. This model validation data is built for exactly that scenario.

Your Validation Suite Is Testing the Easy Part

I have sat across from RegTech product teams who showed me their model validation dashboards. Precision: 94%. Recall: 91%. F1 score: 0.92. Every metric green. Every chart trending in the right direction.

Then I asked a simple question: what does the tail of your validation distribution look like?

Silence.

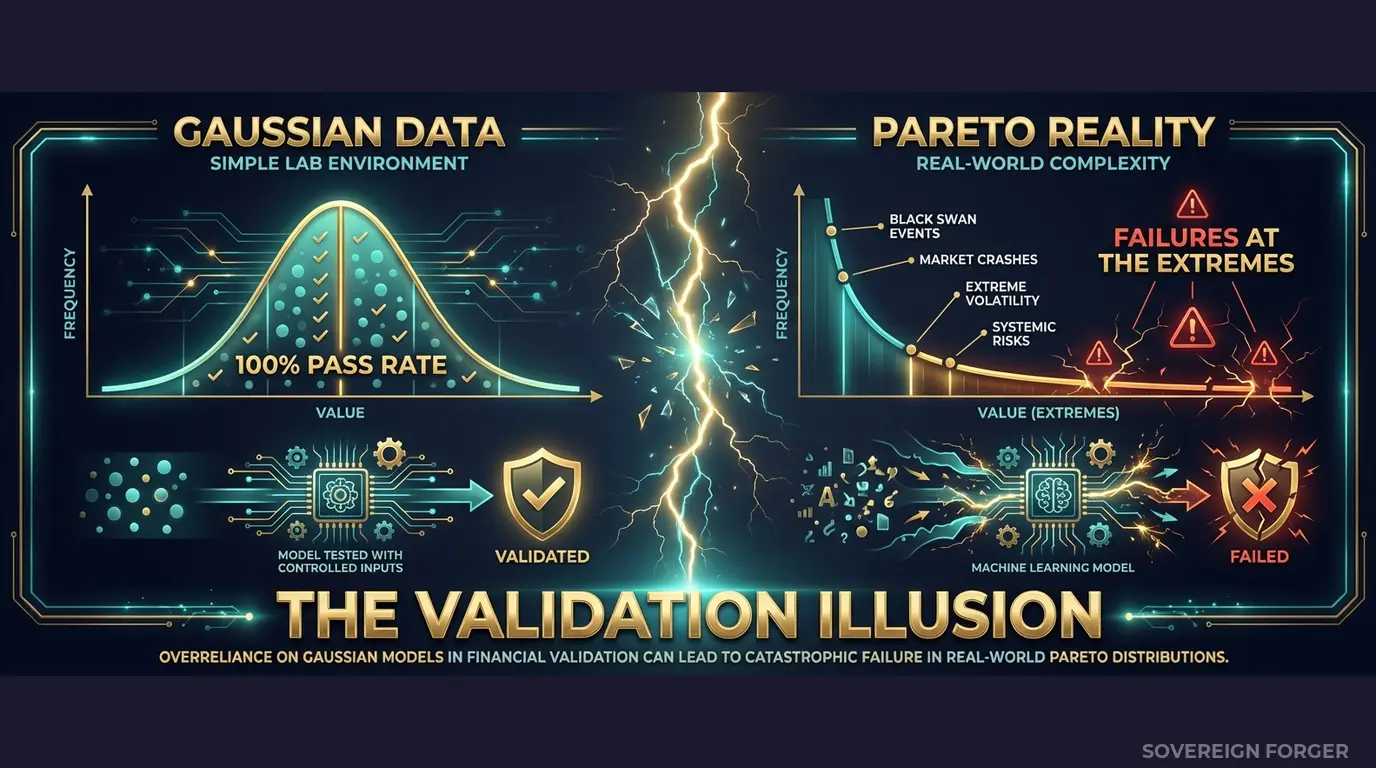

Here is what I mean. In any financial population, wealth follows a Pareto distribution, and structural complexity concentrates with it: a small number of individuals account for a disproportionate share. These are the profiles with four jurisdictions, layered offshore vehicles, PEP-adjacent family connections, and source-of-wealth paths that cross three continents. They represent perhaps 5% of the client base, yet they generate 60-80% of the alerts, edge cases, and false positives that determine whether your AML screening model actually works in production.

Most validation datasets do not contain these profiles. They contain 10,000 variations of the same structural template: single jurisdiction, single income source, straightforward identity, moderate net worth. The model learns to classify these correctly — because they are easy. Then your client onboards their first real UHNWI with a Cayman Islands LP, a Singapore family office, and PEP exposure through a cousin who held a ministerial position in the UAE. Your model has never seen anything structurally similar. It either misses the risk entirely or flags everything indiscriminately, drowning the compliance team in false positives.

This is not a model quality problem. It is a validation data problem.

I have watched this pattern repeat across every category of RegTech product — transaction monitoring, sanctions screening, risk scoring, customer due diligence automation. The model works on the bulk of the distribution. It fails on the tail. And the tail is precisely where regulators look, where fines originate, and where your client’s trust in your product evaporates.

The math is unforgiving. If net worth in your validation dataset follows a Gaussian distribution (bell curve) instead of a Pareto distribution (power law), the extreme values that define the top 1% are effectively absent, and your model has never been tested against the wealth structures that actually exist there. You are validating against a fictional population, and your metrics reflect fictional performance.
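To make the difference concrete, here is a minimal sketch using only the Python standard library. It compares how much of the total sits in the top 1% of a bell-curve sample versus a power-law sample. The mean, standard deviation, scale, and the shape parameter `alpha = 1.16` are illustrative assumptions, not the calibration used in any real dataset.

```python
import random

def top_share(values, fraction=0.01):
    """Share of the total held by the top `fraction` of values."""
    ordered = sorted(values, reverse=True)
    k = max(1, int(len(ordered) * fraction))
    return sum(ordered[:k]) / sum(ordered)

rng = random.Random(42)
n = 100_000

# Bell curve: net worth clustered around a mean (illustrative parameters).
gaussian = [max(0.0, rng.gauss(5_000_000, 1_000_000)) for _ in range(n)]

# Power law: Pareto with shape alpha; a smaller alpha means a heavier tail.
# alpha = 1.16 is the textbook "80/20" shape, used here only as an assumption.
pareto = [1_000_000 * rng.paretovariate(1.16) for _ in range(n)]

gaussian_share = top_share(gaussian)
pareto_share = top_share(pareto)
print(f"Top 1% share, Gaussian: {gaussian_share:.1%}")
print(f"Top 1% share, Pareto:   {pareto_share:.1%}")
```

In the Gaussian sample the top 1% holds barely more than its proportional share, while in the Pareto sample it holds a large fraction of the total. A model validated only on the first kind of data has never been asked to score the second.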

When Starling Bank was fined £29M, the FCA’s enforcement notice cited “serious and widespread” failings in automated screening systems. When HSBC paid £63.9M, the underlying issue was the same: systems that worked in controlled environments but failed against the real complexity of high-net-worth international clients. Your RegTech product was supposed to prevent these outcomes. If your validation data does not contain this complexity, it cannot.

Three Approaches That Do Not Validate Your Model

Every RegTech team I have spoken with uses one of three approaches for model validation — and all three share the same structural flaw.

Using your client’s production data. Some vendors negotiate access to anonymized client data for model tuning and validation. Set aside the GDPR Article 25 risk for a moment — the deeper problem is selection bias. You are validating your model against the population it already serves. If your client’s current customer base is 90% domestic retail, your model validates beautifully against domestic retail. The moment your client expands into cross-border UHNWI onboarding, your model enters territory it has never been tested against. Worse, if you use this data to train AI models, the EU AI Act Article 10 requires documented provenance and governance — and “we got it from our client under NDA” is not a governance framework.

Using internally generated synthetic data. Many RegTech engineering teams build their own synthetic generators. I have reviewed a dozen of these. They all share the same problem: the generator was built by engineers who understand data formats but not wealth architecture. The profiles are structurally flat — they have the right column headers and valid value ranges, but no internal coherence. Net worth does not decompose correctly into assets and liabilities. Offshore jurisdictions are randomly assigned rather than derived from the wealth archetype. PEP status has no correlation with geography or profession. The data passes schema validation. It fails structural validation. Your model trains on incoherent profiles and learns incoherent patterns.

Using open-source or academic datasets. Publicly available synthetic financial datasets serve research purposes — they are not built for production model validation. They typically contain 1,000-5,000 records, a handful of fields, and zero representation of UHNWI complexity. Scaling them to 100K records produces 100K copies of the same structural template. Your validation metrics look stable because your data has no variance, not because your model handles variance well.

Production Data vs. Internal Synthetic vs. Born-Synthetic

| Dimension | Client Production Data | Internal Synthetic | Born-Synthetic |
|---|---|---|---|
| PII present | Yes (even “anonymized”) | None | None |
| Wealth distribution | Biased to current clients | Gaussian (flat) | Pareto (realistic) |
| Balance sheet integrity | Real but restricted | Often incoherent | Algebraically guaranteed |
| UHNWI complexity | Only if client has UHNWIs | Minimal | 31 archetypes × 6 niches |
| GDPR Art. 25 | Violation in test envs | Compliant | Compliant |
| EU AI Act Art. 10 | Provenance unclear | Undocumented | Certified (Certificate of Origin) |
| Auditor-ready | No | No | Yes |
| Pareto tail coverage | Incidental | Missing | By construction |

Born-Synthetic Data Built for RegTech Model Validation

I built Sovereign Forger because I saw the same failure mode repeatedly: models that validated perfectly and failed catastrophically. The root cause was always the data, never the model architecture. So I built the data layer that was missing.

Every profile in the Sovereign Forger dataset is generated from mathematical constraints — not derived from any real person, not sampled from any real population.

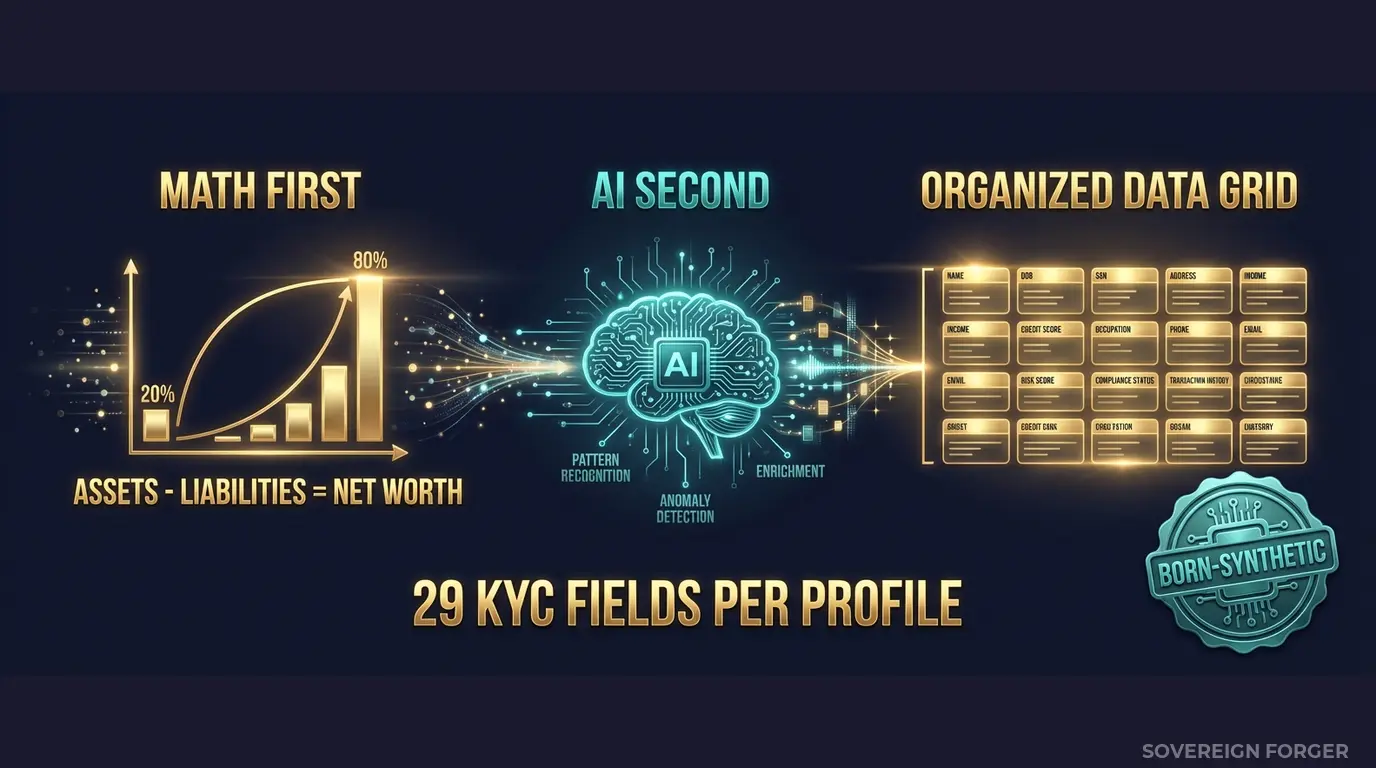

Math First. Net worth follows a Pareto distribution — the same power-law distribution that governs real-world wealth concentration. This is not a cosmetic choice. It means your validation dataset contains the same long tail that your model will encounter in production: a small number of extremely complex profiles with multi-jurisdictional structures, high offshore exposure, and edge-case wealth compositions. Asset allocations are computed within algebraic constraints: Assets – Liabilities = Net Worth, by construction. Every balance sheet balances on every record. Zero exceptions. When your model validation suite checks for internal consistency, every record passes — because the consistency is mathematical, not cosmetic.
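The idea of a balance sheet that balances "by construction" can be illustrated with a short sketch. This is not the Sovereign Forger generator. It is a hypothetical toy that draws net worth from a Pareto law and then computes assets from the other two totals, so the identity cannot fail. The floor value, shape parameter, leverage rule, and the `BalanceSheet` class are all assumptions made for illustration.

```python
import random
from dataclasses import dataclass

@dataclass(frozen=True)
class BalanceSheet:
    net_worth_usd: int
    total_assets: int
    total_liabilities: int

def generate_balance_sheet(rng, floor_usd=30_000_000, alpha=1.5, max_leverage=0.4):
    """Draw net worth from a Pareto law, then derive the other two totals."""
    net_worth = round(floor_usd * rng.paretovariate(alpha))
    # Liabilities are a random fraction of net worth (hypothetical rule).
    liabilities = round(net_worth * rng.uniform(0.0, max_leverage))
    # Assets are computed, not sampled, so the identity holds by construction.
    assets = net_worth + liabilities
    return BalanceSheet(net_worth, assets, liabilities)

rng = random.Random(7)
sheets = [generate_balance_sheet(rng) for _ in range(10_000)]

# Integer arithmetic means the check is exact, with no rounding tolerance.
balanced = all(
    s.total_assets - s.total_liabilities == s.net_worth_usd for s in sheets
)
print(f"All {len(sheets)} balance sheets balance: {balanced}")
```

The design point is the order of operations: sample the quantity that must follow the distribution, then derive the dependent figures algebraically instead of sampling each column independently.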

AI Second. After the financial figures are locked, a local AI model — running entirely offline, on hardware I control — adds narrative context: biography, profession, philanthropic focus. The AI never touches the numbers. It enriches the profile with culturally coherent details that match the geographic niche and wealth archetype. A tech founder in Silicon Valley gets a different biography than a commodity trader in Singapore — not because of random assignment, but because the enrichment layer understands the archetype.

Why This Matters for Model Validation Specifically

Model validation requires three properties that most synthetic data does not provide:

1. Distributional coverage. Your model needs to be tested against the full range of inputs it will encounter — including the tail. A Pareto-distributed dataset means your validation naturally covers simple profiles (the bulk), moderately complex profiles (the mid-range), and highly complex multi-jurisdictional UHNWIs (the tail). You do not need to manually construct edge cases. The distribution generates them.

2. Internal coherence. Every field in a Sovereign Forger profile is derived from the same underlying archetype and constraints. Net worth, asset composition, offshore jurisdiction, KYC risk rating, PEP status — these are not independently generated columns. They are interdependent variables within a coherent financial identity. When your model learns correlations from this data, it learns correlations that exist in the real world. When you validate against this data, you validate against structurally plausible inputs — not random noise in a financial format.

3. Provenance documentation. The EU AI Act Article 10 requires that high-risk AI systems, which include certain financial AI use cases listed in Annex III such as credit scoring, document the provenance, preparation, and governance of their training and validation data. Every Sovereign Forger dataset ships with a Certificate of Sovereign Origin that documents the generation methodology, confirms zero PII lineage, and provides the audit trail your compliance team needs. When a regulator or auditor asks “what data did you validate this model against, and where did it come from?”, you hand them the certificate.
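Distributional coverage only helps if the validation report breaks results out by segment. The sketch below shows one way to do that: rank records by net worth, label them bulk, mid-range, or tail, and compute recall per segment. The 80% and 95% cut-offs, the tuple layout, and the toy data are assumptions for illustration, not a prescribed methodology.

```python
from collections import defaultdict

def segment_of(rank, n, mid_start=0.80, tail_start=0.95):
    """Map a record's net-worth rank (0 = lowest) to a named segment."""
    position = rank / n
    if position >= tail_start:
        return "tail"
    if position >= mid_start:
        return "mid"
    return "bulk"

def recall_by_segment(records):
    """records: list of (net_worth, is_risky, was_flagged) tuples."""
    ordered = sorted(records, key=lambda r: r[0])
    hits, positives = defaultdict(int), defaultdict(int)
    for rank, (_, is_risky, was_flagged) in enumerate(ordered):
        if is_risky:
            seg = segment_of(rank, len(ordered))
            positives[seg] += 1
            hits[seg] += int(was_flagged)
    return {seg: hits[seg] / positives[seg] for seg in positives}

# Toy data: every fifth profile is risky, and the hypothetical model
# flags every risky profile except those in the wealthiest 5%.
records = [(i + 1, i % 5 == 0, i < 95) for i in range(100)]

risky = [r for r in records if r[1]]
overall = sum(r[2] for r in risky) / len(risky)
per_segment = recall_by_segment(records)

print(f"overall recall: {overall:.2f}")
print(per_segment)
```

In this toy case the aggregate recall looks healthy while the tail segment is missed entirely, which is the pattern an aggregate-only dashboard hides.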

29 Fields Designed for Model Validation Pipelines

Every KYC-Enhanced profile includes the fields your validation pipeline needs to exercise:

Identity & Geography: full_name, residence_city, residence_zone, tax_domicile

Wealth Structure: net_worth_usd, total_assets, total_liabilities, property_value, core_equity, cash_liquidity, assets_composition, liabilities_composition

Professional Context: profession, education, narrative_bio, philanthropic_focus

Offshore Exposure: offshore_jurisdiction, offshore_vehicle

KYC Signals: kyc_risk_rating, pep_status, pep_position, pep_jurisdiction, sanctions_screening_result, sanctions_match_confidence, adverse_media_flag, source_of_wealth_verified, sow_verification_method, high_risk_jurisdiction_flag

Every KYC field is deterministically derived from the profile’s archetype, niche, net worth, and jurisdiction. A PEP in the Middle East niche has a different risk distribution than a tech founder in Silicon Valley — because the underlying regulatory exposure is different. This means your model validation captures niche-specific performance differences, not just aggregate accuracy.

Validation Data That Covers the Full Distribution

6 Geographic Niches: Silicon Valley, Old Money Europe, Middle East, LatAm, Pacific Rim, Swiss-Singapore. Each niche has its own wealth architecture, cultural naming conventions, offshore patterns, and KYC risk distributions. Validate your model’s performance per niche, not just in aggregate — because a model that works for European clients may fail on Pacific Rim wealth structures.

31 Wealth Archetypes: Tech founders, sovereign family members, commodity traders, private bankers, shipping dynasty heirs, family office managers — the actual client profiles that generate the alerts, edge cases, and false positives your model needs to handle. Each archetype has distinct asset compositions, offshore preferences, and KYC signal patterns.

Pareto-Distributed Wealth: Net worth follows a power-law distribution with calibrated shape parameters per niche. Your validation dataset naturally contains the long tail — the complex, high-net-worth profiles where model failures concentrate in production. No manual edge case construction required.

KYC Signal Distributions by Niche: Risk ratings, PEP statuses, sanctions screening results, and source-of-wealth verification methods are distributed with realistic frequencies that vary by geography. LatAm profiles carry higher risk ratings. Middle East niches have higher PEP incidence. Swiss-Singapore profiles show more complex offshore structures. Your model validation captures these geographic patterns.
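Before trusting niche-level validation results, it is worth confirming that the signal frequencies in the data really do differ by niche. Here is a minimal sketch that computes PEP incidence per niche. The `pep_status` field name comes from the field list above. The `niche` key and the `"None"` value for non-PEP profiles are assumptions about how a file might be encoded, so adjust them to the actual columns.

```python
from collections import Counter

def pep_rate_by_niche(profiles, niche_field="niche", non_pep_value="None"):
    """Fraction of profiles per niche whose pep_status marks them as a PEP."""
    totals, peps = Counter(), Counter()
    for profile in profiles:
        niche = profile[niche_field]
        totals[niche] += 1
        if profile["pep_status"] != non_pep_value:
            peps[niche] += 1
    return {niche: peps[niche] / totals[niche] for niche in totals}

# Made-up records, only to show the shape of the input and output.
profiles = [
    {"niche": "Middle East", "pep_status": "Active"},
    {"niche": "Middle East", "pep_status": "None"},
    {"niche": "Silicon Valley", "pep_status": "None"},
    {"niche": "Silicon Valley", "pep_status": "None"},
]
rates = pep_rate_by_niche(profiles)
for niche, rate in sorted(rates.items()):
    print(f"{niche}: {rate:.0%} PEP incidence")
```

The same grouping works for risk ratings or sanctions results by swapping the field being counted.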

Pricing

| Tier | Records | Price | Best For |
|---|---|---|---|
| Compliance Starter | 1,000 | $999 | Model validation proof of concept |
| Compliance Pro | 10,000 | $4,999 | Full validation suite, bias testing |
| Compliance Enterprise | 100,000 | $24,999 | Production-scale validation + AI training |

No SDK. No API key. No sales call. Download a file, load it into your validation pipeline, and see what your model actually does with realistic complexity.

Why This Matters Now

Your clients are getting fined — and they will ask why your product did not prevent it. Starling Bank paid £29M. HSBC paid £63.9M. N26 paid €9.2M. Block paid $120M. Every one of these fines involved AML and KYC systems — many built or powered by RegTech vendors — that failed against real-world complexity. If your product was part of that stack, your next sales cycle gets harder. If your product was validated against data that did not contain this complexity, the question is not whether a client failure will happen — it is when.

The EU AI Act changes the liability equation. Generally applicable from August 2026, the AI Act classifies certain financial AI use cases, such as credit scoring, as high-risk under Annex III. Article 10 requires documented governance of training and validation data, including provenance, bias assessment, and GDPR compliance. If your models are validated against data with unclear provenance, undocumented generation methodology, or residual PII risk, you are exposed. Born-Synthetic data with a Certificate of Origin is the cleanest path to Article 10 compliance.

Model validation is becoming a competitive differentiator. When your sales team pitches to a bank’s compliance department, the first question is increasingly: “How do you validate your models?” The answer “we use internally generated data” invites scrutiny. The answer “we validate against 100,000 born-synthetic profiles covering 31 wealth archetypes across 6 geographic niches, with a Certificate of Sovereign Origin documenting zero PII lineage” closes the conversation.

The balance sheet test is open source. Every Sovereign Forger record passes algebraic validation: Assets – Liabilities = Net Worth. Run the Balance Sheet Test on our data, then run it on whatever data you currently use for validation. The difference is measurable — and it tells you how much structural noise your model has been learning from.
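The check itself is simple enough to sketch in a few lines. What follows is not the published Balance Sheet Test, only an illustration of the same identity applied to CSV rows, using the `total_assets`, `total_liabilities`, and `net_worth_usd` column names from the field list. The tolerance value and the two sample records are made up.

```python
import csv
import io

def balance_sheet_failures(rows, tolerance=0.01):
    """Return the rows where assets - liabilities differs from net worth."""
    failures = []
    for row in rows:
        assets = float(row["total_assets"])
        liabilities = float(row["total_liabilities"])
        net_worth = float(row["net_worth_usd"])
        if abs(assets - liabilities - net_worth) > tolerance:
            failures.append(row)
    return failures

# Two made-up records: the first balances, the second does not.
sample_csv = io.StringIO(
    "full_name,total_assets,total_liabilities,net_worth_usd\n"
    "Example A,120000000,20000000,100000000\n"
    "Example B,80000000,10000000,75000000\n"
)
failures = balance_sheet_failures(csv.DictReader(sample_csv))
print(f"{len(failures)} record(s) fail the identity")
for row in failures:
    print(row["full_name"])
```

To run it on a real file, replace the `io.StringIO` sample with an opened CSV file and pass the `csv.DictReader` in the same way.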

Every dataset ships with a Certificate of Sovereign Origin — documenting the born-synthetic methodology, zero PII lineage, and regulatory alignment. This is not a marketing document. It is the audit artifact your client’s compliance team needs when their regulator asks how their AI models are validated.

Validate Your Models Against Realistic Data

Download 100 free KYC-Enhanced UHNWI profiles. Run your model validation suite. Check whether your model handles the Pareto tail — the complex profiles where most real-world failures occur.

If your precision drops on multi-jurisdictional profiles, if your risk scoring model has never seen a PEP with offshore exposure across three jurisdictions, if your sanctions screening confidence scores collapse on culturally diverse names, you will see it in the results.

That gap between your current validation metrics and your performance on this data is the gap between your product’s promise and your product’s reality.

No credit card. No sales call. Just your work email.

Frequently Asked Questions

How does synthetic KYC data expose weaknesses in AML model validation that real customer data cannot?

Real customer data reflects historical patterns that models have already learned, creating circular validation. Sovereign Forger’s born-synthetic KYC profiles introduce edge cases, adversarial PEP combinations, and rare sanctions-screening scenarios at controlled frequencies — stress-testing models against distributions they have not seen. RegTech providers can validate recall rates on sub-1% fraud prevalence populations with statistically precise Pareto wealth distributions, surfacing false-negative rates that production data masks until a regulatory breach occurs.
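One practical consequence of sub-1% prevalence is that recall estimates rest on very few positive cases. The sketch below uses the standard Wilson score interval to show how the uncertainty around a measured recall of 90% shrinks as the number of true positives grows. The record counts and the 90% figure are illustrative assumptions.

```python
import math

def wilson_interval(successes, trials, z=1.96):
    """Approximate 95% Wilson score interval for a proportion."""
    if trials == 0:
        raise ValueError("no positive cases to measure recall on")
    p = successes / trials
    denom = 1 + z**2 / trials
    centre = (p + z**2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return centre - half, centre + half

# At 1% prevalence, 1,000 records contain about 10 true positives
# and 100,000 records contain about 1,000.
small = wilson_interval(9, 10)
large = wilson_interval(900, 1000)

print(f"10 positives:    recall between {small[0]:.2f} and {small[1]:.2f}")
print(f"1,000 positives: recall between {large[0]:.2f} and {large[1]:.2f}")
```

With ten positives the interval spans several tens of percentage points, so a "90% recall" headline says little. With a thousand positives it narrows to a few points, which is one argument for validating rare-event models on larger datasets.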

Why do RegTech client demonstrations to neobanks require purpose-built synthetic financial data rather than anonymised production records?

Neobanks such as Starling (fined £29M by the FCA) and N26 (fined €9.2M by BaFin) were penalised specifically for AML control failures. Demonstrating a RegTech platform using anonymised production data risks re-identification, conflicts with GDPR Art. 25 obligations on data protection by design and by default, and cannot reproduce the exact risk-score distributions needed to benchmark model performance. Sovereign Forger generates demographically coherent profiles with correlated income, source-of-wealth, and risk-rating fields, making client demonstrations both compliant and statistically credible.

How should a RegTech provider structure model validation datasets to satisfy EU AI Act Article 10 data governance requirements?

EU AI Act Art. 10 requires that training and validation datasets be relevant, sufficiently representative, and, to the best extent possible, free of errors and complete, and that they be examined for biases that could lead to discrimination. Sovereign Forger’s synthetic profiles preserve Pareto wealth distributions and realistic correlations between 29 interlocked financial fields, ensuring validation sets reflect the statistical properties of real portfolios without containing personal data. This allows RegTech providers to document dataset provenance, demonstrate representativeness to auditors, and satisfy Art. 10 obligations without data-sharing agreements or subject-access liabilities.

What does born-synthetic mean in the context of RegTech model validation, and why does it matter?

Born-synthetic means each profile is generated entirely from mathematical distributions, including Pareto wealth curves and empirically calibrated correlation matrices, with zero lineage to any real individual. No real record is anonymised, tokenised, or transformed. This is materially distinct from pseudonymised data because there is no original dataset from which re-identification is theoretically possible. For RegTech model validation, born-synthetic data is GDPR Art.25 compliant by construction, eliminates data-breach liability during testing cycles, and allows unrestricted sharing across development, staging, and client environments without data-processing agreements.

How can a RegTech provider get started validating their AI models with Sovereign Forger synthetic KYC data?

Sovereign Forger provides 100 free synthetic KYC profiles with 29 interlocked fields available for instant download via a work email address, with no credit card required. Each profile includes risk ratings, PEP status, sanctions-screening flags, and source-of-wealth classifications generated with statistically accurate distributions. This allows a RegTech provider to immediately benchmark their model’s precision and recall against a realistic, compliant dataset before committing to a larger validation programme.

Learn more about RegTech model validation data and how born-synthetic data addresses these gaps in our glossary and comparison guides.