Your clients are getting fined — Starling Bank £29M, HSBC £63.9M, N26 €9.2M — and every fine traces back to AML systems that could not tell the difference between legitimate UHNWI complexity and genuine laundering signals. If the model was trained on flat data, it learned a flat world. Your product pays the price.

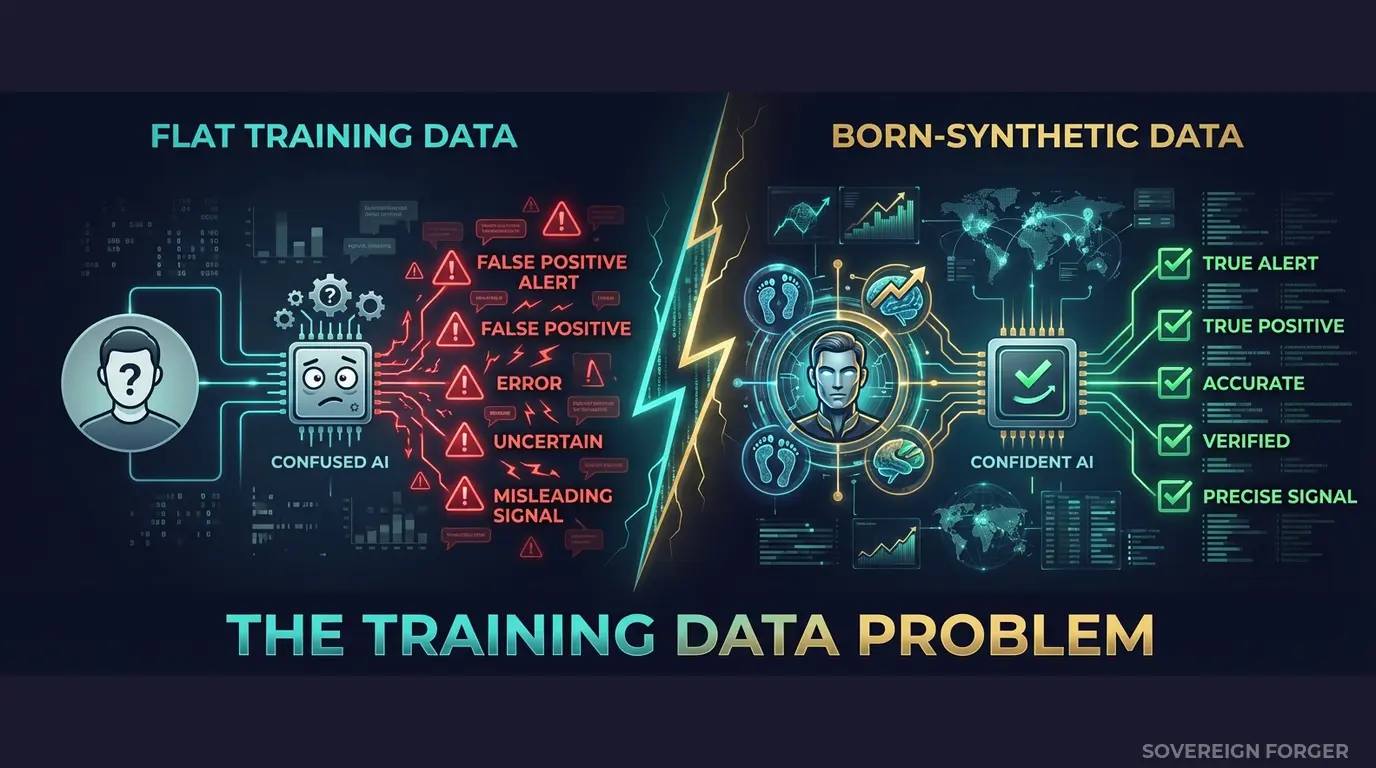

Your AML Model Has a Training Data Problem

I have sat across the table from RegTech product teams who could not explain why their AML model performed brilliantly in validation and catastrophically in production. The precision scores were excellent. The recall numbers looked solid. The false positive rate was within acceptable bounds. Every benchmark passed.

Then their client, a neobank scaling into UHNWI onboarding, deployed the model against real traffic. Within three months, the compliance team was drowning in false positives on legitimate multi-jurisdictional wealth structures, while genuine risk signals from PEP-adjacent connections passed through undetected. The model had never learned to separate two cases: a tech founder with a Delaware holding company and a Cayman LP layered for tax purposes, and a politically exposed person routing funds through the same jurisdictional corridor. It treated both as equally suspicious, or equally benign, because the training data contained neither pattern.

This is not a model architecture problem. It is a training data problem. And it is the specific problem that is costing RegTech companies their clients, their reputation, and increasingly, their own regulatory exposure.

The pattern repeats across the industry. ComplyAdvantage, Napier AI, Lucinity, Unit21, Flagright, Fenergo, Sumsub, NICE Actimize, WorkFusion — every AML vendor validates their product against some form of test data before shipping it to banks, neobanks, and payment processors. The question is what that test data looks like.

If it looks like single-jurisdiction individuals with straightforward employment income and one bank account, then the model learns that the world of financial clients is structurally simple. It learns that offshore vehicles are rare. It learns that multi-jurisdictional tax arrangements are anomalous. It learns that PEP connections are binary — present or absent — rather than graded through family relationships, business partnerships, and political adjacency.

When that model encounters the first real UHNWI portfolio — four jurisdictions, trust structures, an offshore LP, PEP-adjacent family connections, and a philanthropic foundation used for legitimate tax optimization — it has two failure modes. Either it flags everything as suspicious, generating a false positive storm that overwhelms your client’s compliance team. Or it flags nothing, because the model has no frame of reference for what legitimate complexity looks like versus what genuine risk looks like.

Both failure modes end the same way. Your client gets fined. They call your sales team. They ask why the product they are paying six or seven figures for did not catch what a junior compliance analyst would have spotted manually. And the honest answer — the one nobody wants to say out loud — is that the model was never trained on data that reflected the structural complexity of high-net-worth financial relationships.

The indirect liability is real. When Starling Bank was fined £29M, the question regulators asked was not just about the bank’s internal controls — it was about the tools the bank relied on. When HSBC paid £63.9M, the enforcement action examined the entire compliance technology stack. RegTech vendors are not named in the fine, but they are named in the post-mortem. And increasingly, procurement teams at financial institutions are demanding evidence that their RegTech vendors validate products against realistic, diverse, and compliant test data. If you cannot demonstrate that, you lose the deal to someone who can.

Three Approaches That Do Not Train AML Models Properly

Every RegTech vendor I have spoken with uses one of three approaches to generate training data for their AML models. All three produce the same structural blind spot.

Using client data for model training. Some RegTech vendors negotiate data-sharing agreements with early clients to use anonymized transaction and profile data for model development. This creates three simultaneous problems. First, GDPR Article 25 requires data protection by design — using real personal data, even anonymized, in development environments with broader team access is a compliance risk. Second, the data reflects a single institution’s client base, creating model bias toward that institution’s demographics. Third, with only 265,000 UHNWIs globally, the combination of wealth tier, jurisdiction, offshore vehicle, and profession can re-identify individuals even without direct identifiers. Your training data is not as anonymous as your legal team thinks it is.
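The re-identification point can be checked on any candidate training set with a simple k-anonymity count. The sketch below is a minimal illustration, not a full privacy assessment: the column names in `QUASI_IDENTIFIERS`, the threshold `k`, and the toy rows are all assumptions made for the example.

```python
from collections import Counter

# Hypothetical quasi-identifier columns; rename to match your own schema.
QUASI_IDENTIFIERS = ("wealth_tier", "tax_domicile", "offshore_vehicle", "profession")


def rare_combinations(records, k=5):
    """Return quasi-identifier combinations shared by fewer than k records."""
    counts = Counter(tuple(r[q] for q in QUASI_IDENTIFIERS) for r in records)
    return {combo: n for combo, n in counts.items() if n < k}


# Toy rows for illustration only; they describe no real person.
common = {"wealth_tier": "30-50M", "tax_domicile": "UK",
          "offshore_vehicle": "none", "profession": "fund manager"}
unusual = {"wealth_tier": "500M+", "tax_domicile": "MC",
           "offshore_vehicle": "Cayman LP", "profession": "shipping"}
records = [common] * 6 + [unusual]

# Any combination listed here is shared by so few rows that stripping
# names alone does little to hide the person behind it.
print(rare_combinations(records))
```

In a small population, most UHNWI rows tend to land in the rare bucket, which is the concern raised above.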

Using off-the-shelf synthetic generators. Platform-based synthetic data tools — Mostly AI, Tonic, Gretel — are designed to mimic the statistical distribution of an input dataset. They need real data as input. If you feed them 10,000 retail banking profiles, they produce 100,000 retail banking profiles with similar distributions. They do not generate UHNWI wealth architecture from first principles. They do not model Pareto-distributed net worth. They do not create multi-layered offshore structures, culturally coherent professional backgrounds, or deterministically derived KYC risk signals. They scale what you already have — and if what you already have is structurally flat, they scale flatness.

Using hand-crafted test scenarios. Some product teams build manual test cases — 50 to 200 carefully designed profiles that exercise specific AML rules. A PEP from a high-risk jurisdiction. A sanctions near-match. An adverse media flag. These profiles are useful for unit testing specific rules, but they are catastrophically insufficient for training machine learning models. Two hundred hand-crafted profiles do not teach a model what the distribution of risk signals looks like across 100,000 real-world UHNWI portfolios. They teach the model that risk comes in the exact shapes the product team imagined — and nothing else.

Client Data vs. Generic Synthetic vs. Born-Synthetic

| Dimension | Client Data | Generic Synthetic | Born-Synthetic |
|---|---|---|---|
| PII present | Yes (anonymized) | Derived from real data | None |
| Re-identification risk | Probable (UHNWI) | Inherited from source | Impossible |
| GDPR Art. 25 compliant | Disputed | Depends on source | Yes |
| EU AI Act Art. 10 | Requires documentation | Requires source audit | Compliant by construction |
| UHNWI structural complexity | Single-institution bias | Mirrors input (typically flat) | 31 archetypes, 6 niches |
| KYC signal realism | Real but narrow | Statistically copied | Deterministically derived |
| Scalable to 100K+ | Limited by client consent | Yes (but flat) | Yes (with full complexity) |
| Certifiable for auditors | No | Depends on vendor | Yes (Certificate of Origin) |

Born-Synthetic AML Training Data Built for RegTech Model Validation

I built Sovereign Forger specifically because I watched the training data problem destroy AML model performance from the inside. The profiles in every dataset are generated from mathematical constraints — not derived from any real person, not anonymized from any client database, not statistically copied from any input file.

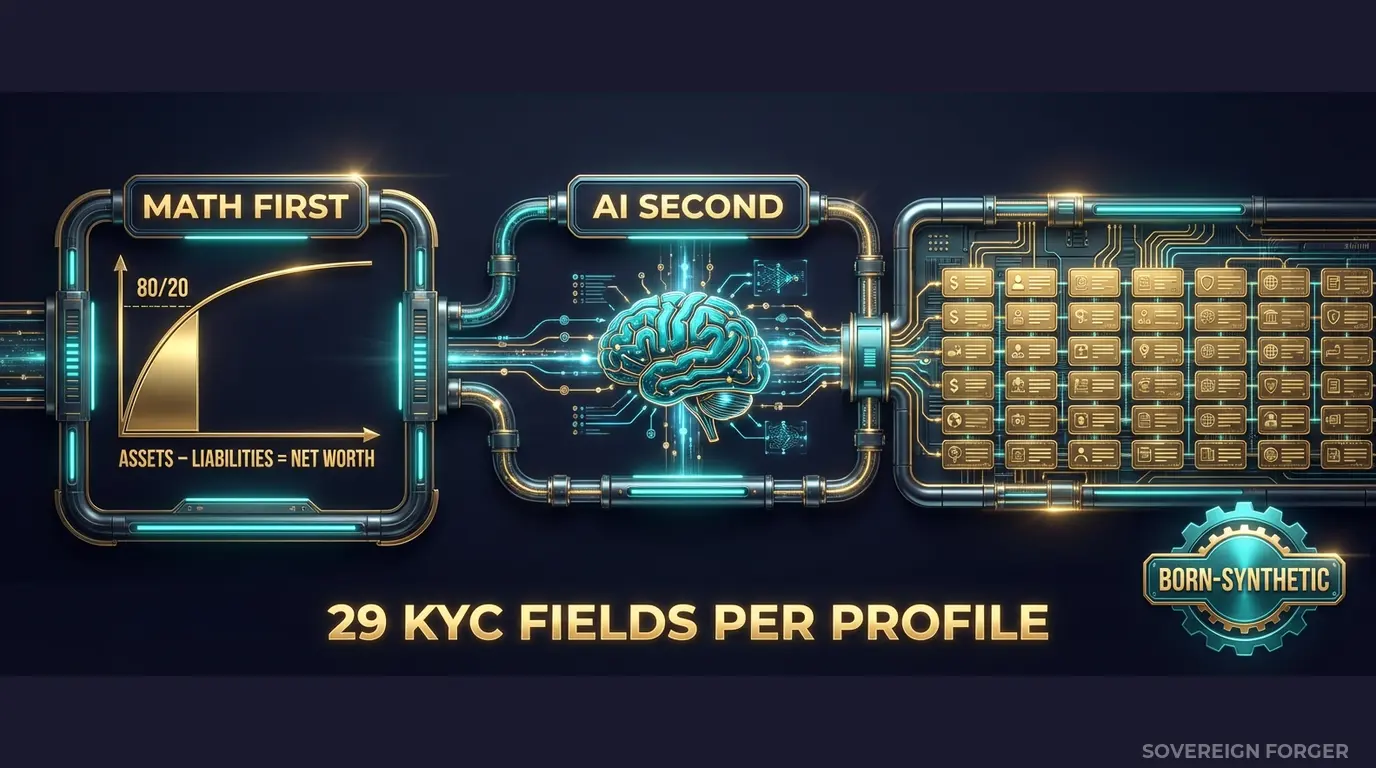

The generation pipeline works in two stages, and the separation between them is what makes the data structurally different from anything else on the market.

Math First. Net worth follows a Pareto distribution — the actual statistical shape of real wealth concentration, not a Gaussian bell curve. This is not a cosmetic choice. Pareto distributions produce the long-tail outliers that AML models must learn to handle: the client whose net worth is 50x the median, whose asset structure is correspondingly more complex, and whose KYC risk profile requires Enhanced Due Diligence. If your training data follows a bell curve, your model never sees these profiles in meaningful volume. It treats them as anomalies rather than as a predictable segment of the client population.
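To see why the distribution choice matters, compare how often each shape produces a client far above the median. This is a rough sketch using only the Python standard library; the shape parameter `alpha`, the $30M floor, and the Gaussian spread are illustrative assumptions, not Sovereign Forger's actual generation parameters.

```python
import random
import statistics


def sample_net_worths(n, alpha=1.5, floor_usd=30_000_000, seed=42):
    """Draw n net-worth values from a Pareto distribution with minimum floor_usd."""
    rng = random.Random(seed)
    # paretovariate(alpha) returns values >= 1, so scaling sets the minimum.
    return [floor_usd * rng.paretovariate(alpha) for _ in range(n)]


def share_above(values, multiple):
    """Fraction of values greater than `multiple` times the median."""
    cutoff = multiple * statistics.median(values)
    return sum(v > cutoff for v in values) / len(values)


pareto = sample_net_worths(100_000)

# A bell curve centred on the same median, with an assumed spread, for comparison.
median = statistics.median(pareto)
rng = random.Random(42)
gaussian = [max(0.0, rng.gauss(median, median / 3)) for _ in range(100_000)]

print(f"Pareto share above 50x median:   {share_above(pareto, 50):.4%}")
print(f"Gaussian share above 50x median: {share_above(gaussian, 50):.4%}")
```

Under these assumptions the Pareto sample contains a small but steady stream of 50x-median profiles, while the Gaussian sample contains none at all.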

Asset allocations are computed within algebraic constraints: Assets – Liabilities = Net Worth, by construction. Every balance sheet balances on every record. This matters for AML training because real wealth structures have internal consistency — property values, equity holdings, cash positions, and debt all relate to each other within a financial logic. Models trained on internally inconsistent profiles learn noise. Models trained on algebraically coherent profiles learn structure.
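One way to get a balance sheet that closes by construction is to fix net worth first and derive everything else from it. The sketch below assumes a single `leverage` ratio and three asset lines; both are simplifications for illustration, and only the field names come from the dataset schema.

```python
import random


def build_balance_sheet(net_worth_usd, leverage=0.2, seed=0):
    """Split a fixed integer net worth into lines that balance exactly."""
    rng = random.Random(seed)
    total_liabilities = round(net_worth_usd * leverage)
    # Assets - Liabilities = Net Worth, enforced by how total_assets is defined.
    total_assets = net_worth_usd + total_liabilities

    # Random weights for three asset classes, normalised to sum to 1.
    weights = [rng.random() for _ in range(3)]
    scale = sum(weights)
    property_value = int(total_assets * weights[0] / scale)
    core_equity = int(total_assets * weights[1] / scale)
    # The last line absorbs rounding so the parts sum to total_assets exactly.
    cash_liquidity = total_assets - property_value - core_equity

    return {
        "net_worth_usd": net_worth_usd,
        "total_assets": total_assets,
        "total_liabilities": total_liabilities,
        "property_value": property_value,
        "core_equity": core_equity,
        "cash_liquidity": cash_liquidity,
    }


sheet = build_balance_sheet(250_000_000)
print(sheet)
```

Working in whole dollars and letting the final line take the remainder avoids the floating-point drift that would otherwise leave the sheet a few cents out.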

AI Second. After the financial figures are locked, a local AI model running entirely offline adds narrative context — biography, profession, education, philanthropic focus. The AI never touches the numbers. It enriches the profile with culturally coherent details that match the geographic niche, wealth tier, and archetype. A tech founder in Silicon Valley gets a different biography than a commodity trader in Singapore — not because a template was swapped, but because the enrichment model understands the contextual difference.

Why This Matters for AML Model Training Specifically

AML models need to learn a specific skill: distinguishing between legitimate structural complexity and genuine risk signals. This is the hardest problem in financial crime detection, and it cannot be learned from flat data.

A real UHNWI with four jurisdictions, a Cayman Islands LP, and a Delaware holding company is not necessarily suspicious. That structure is standard for a tech founder who has raised venture capital, holds offshore IP, and manages tax optimization across multiple residences. A competent AML model should flag this profile for Enhanced Due Diligence — not for a Suspicious Activity Report.

But a profile with the same jurisdictional complexity, a PEP connection through a family member, an adverse media flag, and a source-of-wealth verification that relies entirely on self-declaration — that profile requires a fundamentally different response.

The only way an AML model learns this distinction is by training on data that contains both patterns in realistic proportions. Sovereign Forger’s KYC-Enhanced dataset includes:

– Risk rating distribution by niche: Latin America profiles show ~84% high risk (reflecting the jurisdictional profile), while European and Swiss-Singapore profiles show ~48% low risk. These distributions match the real-world regulatory risk landscape — your model trains on signal, not noise.

– PEP distribution by niche: Middle East profiles contain ~29% PEP connections (reflecting the prevalence of sovereign family and government-adjacent wealth), while Silicon Valley profiles contain significantly fewer. Your model learns that PEP frequency varies by geography — not that PEP is a uniform 5% everywhere.

– Sanctions screening results: Profiles include clear, potential_match, and confirmed_match statuses with confidence scores — giving your model graded training signal rather than binary flags.

– Source-of-wealth verification methods: tax_returns, bank_statements, third_party, self_declared — distributed realistically by archetype and jurisdiction. Your model learns that self-declared SoW from a high-risk jurisdiction with PEP connections requires different handling than third-party-verified SoW from a low-risk jurisdiction.
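Before training, it is worth confirming that the file you loaded actually shows these per-niche proportions. The helper below is a minimal sketch: the `niche` key and the rating labels are assumed names, and the toy rows are invented to show the mechanics, not taken from the dataset.

```python
from collections import Counter, defaultdict


def share_by_niche(profiles, field, niche_key="niche"):
    """Return {niche: {value: share}} for one categorical field."""
    counts = defaultdict(Counter)
    for profile in profiles:
        counts[profile[niche_key]][profile[field]] += 1
    shares = {}
    for niche, counter in counts.items():
        total = sum(counter.values())
        shares[niche] = {value: n / total for value, n in counter.items()}
    return shares


# Toy rows for illustration; key names and labels are assumptions.
toy = [
    {"niche": "LatAm", "kyc_risk_rating": "high"},
    {"niche": "LatAm", "kyc_risk_rating": "high"},
    {"niche": "LatAm", "kyc_risk_rating": "high"},
    {"niche": "LatAm", "kyc_risk_rating": "medium"},
    {"niche": "Swiss-Singapore", "kyc_risk_rating": "low"},
    {"niche": "Swiss-Singapore", "kyc_risk_rating": "high"},
]
shares = share_by_niche(toy, "kyc_risk_rating")
print(shares)
```

The same call with `field="pep_status"` gives the PEP breakdown, so a stratified train/test split can be checked against the shares it is supposed to preserve.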

Every KYC field is deterministically derived from the profile’s archetype, niche, net worth, and jurisdiction using SHA-256 hashing — not randomly assigned. This means the same UUID always produces the same KYC signals, enabling reproducible model training and regression testing. Train your model today, adjust parameters, retrain tomorrow — the underlying data is identical, and any performance difference is attributable to your model changes, not data drift.
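The general technique of hash-based derivation can be sketched in a few lines. This is not Sovereign Forger's actual derivation logic: the key format, the `weights`, and the example UUID are assumptions chosen to show how a SHA-256 digest turns an identifier into a repeatable categorical value.

```python
import hashlib

SOW_METHODS = ["tax_returns", "bank_statements", "third_party", "self_declared"]


def unit_interval(uuid, field):
    """Map (uuid, field) to a repeatable number in [0, 1)."""
    digest = hashlib.sha256(f"{uuid}:{field}".encode("utf-8")).digest()
    # 48 bits fit exactly in a float, so the result is always below 1.
    return int.from_bytes(digest[:6], "big") / 2**48


def derive_sow_method(uuid, weights):
    """Pick a verification method by walking cumulative weights."""
    u = unit_interval(uuid, "sow_verification_method")
    cumulative = 0.0
    for method, weight in zip(SOW_METHODS, weights):
        cumulative += weight
        if u < cumulative:
            return method
    return SOW_METHODS[-1]  # guards against weights that sum slightly below 1


# Hypothetical weights for one archetype and jurisdiction combination.
weights = [0.4, 0.3, 0.2, 0.1]
uuid = "123e4567-e89b-12d3-a456-426614174000"  # example identifier
print(derive_sow_method(uuid, weights))
```

Because the only inputs are the identifier and the weights, rerunning the derivation on another machine or another day yields the same value, which is what makes regression testing against the data meaningful.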

29 Fields Designed for AML Pipeline Ingestion

Every KYC-Enhanced profile includes the fields your AML model actually needs to process:

Identity & Geography: full_name, residence_city, residence_zone, tax_domicile

Wealth Structure: net_worth_usd, total_assets, total_liabilities, property_value, core_equity, cash_liquidity, assets_composition, liabilities_composition

Professional Context: profession, education, narrative_bio, philanthropic_focus

Offshore Exposure: offshore_jurisdiction, offshore_vehicle

KYC Signals: kyc_risk_rating, pep_status, pep_position, pep_jurisdiction, sanctions_screening_result, sanctions_match_confidence, adverse_media_flag, source_of_wealth_verified, sow_verification_method, high_risk_jurisdiction_flag

These are not arbitrary fields. They are the specific data points that AML screening engines, transaction monitoring systems, and risk scoring models consume as input features. The dataset drops directly into your pipeline — no field mapping, no schema transformation, no manual enrichment.
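A quick schema check at ingestion catches a mismatched export before it reaches the feature pipeline. The sketch below uses the field names listed above; the assumption that each JSONL line is one flat JSON object is mine, so adjust it if your file is structured differently.

```python
import json

# Field names as listed in this section.
EXPECTED_FIELDS = {
    "full_name", "residence_city", "residence_zone", "tax_domicile",
    "net_worth_usd", "total_assets", "total_liabilities", "property_value",
    "core_equity", "cash_liquidity", "assets_composition",
    "liabilities_composition",
    "profession", "education", "narrative_bio", "philanthropic_focus",
    "offshore_jurisdiction", "offshore_vehicle",
    "kyc_risk_rating", "pep_status", "pep_position", "pep_jurisdiction",
    "sanctions_screening_result", "sanctions_match_confidence",
    "adverse_media_flag", "source_of_wealth_verified",
    "sow_verification_method", "high_risk_jurisdiction_flag",
}


def missing_fields(record):
    """Return the expected field names absent from one record, sorted."""
    return sorted(EXPECTED_FIELDS - set(record))


def check_jsonl(lines):
    """Yield (line_number, missing) for each record lacking expected fields."""
    for line_number, line in enumerate(lines, start=1):
        if line.strip():
            missing = missing_fields(json.loads(line))
            if missing:
                yield line_number, missing


# Usage with an in-memory example; in practice pass an open file object.
sample = [json.dumps({name: None for name in EXPECTED_FIELDS}),
          json.dumps({"full_name": "Example Person"})]
for line_number, missing in check_jsonl(sample):
    print(line_number, len(missing), "fields missing")
```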

Built for RegTech AML Model Training at Scale

6 Geographic Niches: Silicon Valley, Old Money Europe, Middle East, LatAm, Pacific Rim, Swiss-Singapore — each with distinct wealth architectures, jurisdictional patterns, and KYC signal distributions. Your model trains on the full geographic diversity of global UHNWI wealth, not a single-market slice.

31 Wealth Archetypes: Tech founders, private bankers, commodity traders, family office managers, sovereign family members, real estate developers, shipping magnates, agribusiness barons — the actual client profiles that stress-test AML models in production. Each archetype produces a different ownership structure, different jurisdictional exposure, and different KYC risk profile.

Deterministic KYC Derivation: Every risk rating, PEP status, sanctions result, and source-of-wealth method is derived from the profile’s underlying structure — not randomly sprinkled. Train your model on data where the risk signals have an explanatory relationship to the profile’s financial architecture, the way they do in real client populations.

Reproducible Training Runs: SHA-256 hashing ensures the same UUID always produces the same KYC fields. Run your training pipeline today, tune hyperparameters, retrain next week — the data is byte-for-byte identical. Any performance delta comes from your model changes, not data variation.
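If you want to confirm that two training runs really did read identical data, a file checksum recorded alongside each run is a cheap safeguard. This is a generic sketch; the file name in the usage comment is a placeholder.

```python
import hashlib


def file_sha256(path, chunk_size=1 << 20):
    """Return the SHA-256 hex digest of a file, read in chunks."""
    hasher = hashlib.sha256()
    with open(path, "rb") as handle:
        # iter() with a sentinel stops when read() returns empty bytes.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            hasher.update(chunk)
    return hasher.hexdigest()


# Usage (placeholder file name): log the digest with each training run,
# then compare digests before attributing a metric change to the model.
# print(file_sha256("kyc_enhanced_profiles.jsonl"))
```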

Pricing

| Tier | Records | Price | Best For |
|---|---|---|---|
| Compliance Starter | 1,000 | $999 | Model prototyping, feature testing |
| Compliance Pro | 10,000 | $4,999 | Full training pipeline, A/B model comparison |
| Compliance Enterprise | 100,000 | $24,999 | Production model training + regression suite |

No SDK. No API key. No data-sharing agreement. No six-month procurement cycle. Download JSONL or CSV, point your training pipeline at the file, and start a training run within the hour.

Why This Matters Now

Your clients’ regulatory exposure is your business risk. When Starling Bank was fined £29M and HSBC paid £63.9M for anti-money laundering control failures, procurement teams across the industry asked their RegTech vendors one question: “Can you prove your product was validated against realistic data?” If the answer is “we tested with 500 generic profiles,” you are one client fine away from losing every deal in your pipeline.

The EU AI Act changes the game for RegTech specifically. Financial AI use cases listed in Annex III are classified as high-risk. For those systems, Article 10 requires documented governance of training data, including provenance and bias assessment, alongside your existing GDPR obligations. If your AML model trains on real client data, you need to document consent chains, anonymization methodology, and re-identification risk assessment for every client whose data you used. If your model trains on born-synthetic data with a Certificate of Sovereign Origin, you hand the certificate to the auditor and the conversation is over. Most high-risk obligations are scheduled to apply from August 2026.

The false positive problem is a training data problem. The industry consensus is that 95-98% of AML alerts are false positives. The standard response is to build better models or hire more investigators. I have watched RegTech teams optimize model architecture for months without improving false positive rates — because the model was trained on data that never contained the structural patterns that distinguish legitimate complexity from genuine risk. You cannot optimize your way out of a training data gap. You fill the gap with better data.

The balance sheet test is open source. Every Sovereign Forger record passes algebraic validation: Assets – Liabilities = Net Worth. Run the Balance Sheet Test on our data, then run it on your current training data. If your current data contains records where the balance sheet does not close, your model is learning from numerically inconsistent profiles — and every inference it makes carries that inconsistency forward.
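If you want to run the same kind of check on your current training data, the idea fits in a few lines. The function below is a hypothetical stand-in written for this article, not the published Balance Sheet Test; it assumes JSONL input with numeric `total_assets`, `total_liabilities`, and `net_worth_usd` fields, and a `tolerance` you choose.

```python
import json


def balance_sheet_failures(lines, tolerance=0.01):
    """Return (line_number, gap) for records where the sheet does not close."""
    failures = []
    for line_number, line in enumerate(lines, start=1):
        if not line.strip():
            continue  # skip blank lines between records
        record = json.loads(line)
        gap = (record["total_assets"]
               - record["total_liabilities"]
               - record["net_worth_usd"])
        if abs(gap) > tolerance:
            failures.append((line_number, gap))
    return failures


# Usage with two in-memory records; in practice pass an open file object.
sample = [
    '{"total_assets": 120, "total_liabilities": 20, "net_worth_usd": 100}',
    '{"total_assets": 120, "total_liabilities": 20, "net_worth_usd": 90}',
]
print(balance_sheet_failures(sample))
```

An empty list means every record closes within the tolerance; anything else points to the exact lines worth inspecting.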

Every dataset ships with a Certificate of Sovereign Origin — documenting the born-synthetic methodology, zero PII lineage, and regulatory alignment. When your client’s auditor asks where the training data came from, you hand them the certificate. When your own compliance team asks whether you have EU AI Act Article 10 documentation, you hand them the certificate. It is one document that answers both questions.

Train Your AML Model on Data That Reflects Reality

Download 100 free KYC-Enhanced UHNWI profiles. Feed them into your AML pipeline. Check whether your model can distinguish structural complexity from genuine risk signals — whether it knows the difference between a legitimate four-jurisdiction wealth structure and a laundering corridor.

If it cannot tell the difference, the training data is why.

No credit card. No sales call. Just your work email.

Frequently Asked Questions

How does synthetic AML training data help neobanks reduce the risk of regulatory fines?

Neobanks such as Starling, fined £29M by the FCA, and N26, fined €9.2M by BaFin, were penalised for failings in their financial crime controls. AML models trained on insufficient or unrepresentative data are one way such gaps reach production. Sovereign Forger’s born-synthetic profiles include offshore holding structures, layered cross-border transaction chains, and PEP exposure flags that mirror the typologies regulators scrutinise. Training on 50,000 or more such profiles sharpens detection thresholds before a single real customer record is processed.

Which AML risk typologies are covered in the synthetic financial profiles for model training?

Profiles incorporate trade-based money laundering indicators, shell company ownership chains across high-risk jurisdictions, structuring patterns below reporting thresholds, and adverse media flags. Each record interlocks source-of-wealth narratives with transaction velocity anomalies and sanctions-list proximity scores. RegTech providers can use these typologies to benchmark their detection engines against EU AMLD6 and FATF Recommendation 10 requirements without relying on anonymised live customer data that carries residual re-identification risk.

Can RegTech vendors use synthetic AML data for client demonstrations as well as internal model development?

Yes, and this is one of the primary deployment patterns. A RegTech vendor demonstrating a transaction monitoring platform to a traditional bank or insurer cannot legally expose real customer profiles. Sovereign Forger’s synthetic datasets provide a library of realistic risk scenarios, complete with risk ratings, adverse media, and cross-border payment flows, that make demonstrations credible and regulatorily defensible. EU AI Act Article 10 requires high-quality, representative training data for high-risk AI systems; born-synthetic profiles are designed to support that obligation across both internal and client-facing contexts.

What does born-synthetic mean and why does it matter specifically for AML model training data?

Born-synthetic means every profile is generated from first principles using mathematical distributions such as the Pareto distribution for wealth concentration, with zero lineage to any real natural person. No real record was ever collected, anonymised, or transformed. This architecture satisfies GDPR Article 25 privacy-by-design requirements by construction, removing the legal basis concerns that attach to anonymised datasets. For AML training specifically, it also means edge-case typologies — ultra-high-net-worth offshore structures, for example — can be generated at arbitrary volume without the scarcity constraints of real-world compliance data.

How can a RegTech team get started with synthetic AML training data from Sovereign Forger?

Teams can download 100 free synthetic KYC profiles instantly using a work email address, with no credit card required. Each profile contains 29 interlocked fields covering risk ratings, PEP status, sanctions screening results, and source-of-wealth narratives, ensuring internal consistency across the record rather than independently randomised fields. The sample set is sized to allow immediate integration testing with an existing AML pipeline, giving compliance engineers and data scientists a concrete basis for evaluating data quality before committing to a larger training dataset.