Block: $120M. Western Union: $586M. MoneyGram: $125M. PayPal: repeated enforcement actions across multiple jurisdictions. In every case, the onboarding process cleared clients that should have triggered deeper review — because the simulation data used to build and test those workflows never contained the structural complexity that real high-risk clients bring through the door.

Your Onboarding Simulation Tests the Happy Path. Production Is Not Happy.

I have sat in rooms where payment processor compliance teams demonstrated their onboarding flow to regulators. The simulation was flawless. A client enters their details, the system runs KYC checks, risk scoring triggers at the right thresholds, EDD escalation routes to the right team. Green lights across the board.

Then I watched the same system process a real merchant onboarding — a commodities trading firm with a beneficial owner holding dual nationality, a tax domicile in a third country, and a foundation in Liechtenstein feeding into a Cayman LP. The onboarding form had one field for “country.” The client needed three. The risk scoring model assigned “medium” because the registered address was in London. The PEP screening missed the beneficial owner’s brother, who held a government advisory role in a jurisdiction the system did not flag. The source-of-wealth documentation was marked “verified” because the client uploaded bank statements — from a bank in a jurisdiction the compliance team had never tested against.

This is what onboarding simulation failure looks like at a payment processor. It is not a system crash. It is a quiet pass — a green light on a client who should have been escalated, reviewed, and documented. The system worked exactly as designed. It was designed against data that did not reflect reality.

Payment processors face a unique version of this problem. Unlike retail banks that onboard individuals one at a time, payment processors onboard merchants, platforms, and institutional clients who bring layered entity structures, cross-border fund flows, and beneficial ownership chains that span multiple jurisdictions simultaneously. A single merchant onboarding can involve four countries, two entity types, and a PEP connection that only surfaces at the third layer of ownership. Your onboarding simulation needs to test for all of this — not just the straightforward case where a sole proprietor in London signs up with a UK passport.

The pattern behind the fines is consistent. Western Union paid $586M because its agent network onboarded clients without adequate due diligence — the systems were not built to catch the complexity of cross-border remittance patterns. Block paid $120M because its Cash App onboarding allowed accounts that should have been flagged under BSA requirements. MoneyGram paid $125M for similar failures in its agent compliance framework. In every case, the onboarding process existed. It was tested. It passed QA. And it failed in production because the test data was structurally simpler than the clients who actually triggered regulatory scrutiny.

The gap is measurable. If your onboarding simulation data contains zero multi-jurisdictional tax structures, zero offshore vehicles, zero PEP-adjacent beneficial owners, and zero high-risk jurisdiction flags, then you have never tested the onboarding paths that regulators specifically audit. You have a simulation that demonstrates your system works for simple clients. You have no evidence it works for the clients who generate enforcement actions.

Three Approaches That Leave Your Onboarding Blind

Payment processors have unique constraints that make the test data problem worse than in traditional banking. You onboard at scale — thousands of merchants per month, across dozens of countries, with automated decisioning that must be right on the first pass. There is no relationship manager catching edge cases over lunch. The onboarding flow is the compliance control, and the simulation data determines whether that control actually works.

Using copies of production data. I have seen payment processors extract real merchant data into staging environments for onboarding testing. The logic sounds reasonable: test against real complexity. But the moment that data enters a test environment, you have undermined the data protection by design and by default that GDPR Article 25 requires. Test environments have broader access, weaker logging, and often sit in different infrastructure with different security postures. The EU AI Act makes this worse: if your onboarding decisioning uses machine learning models trained on this data and falls into a high-risk category, Article 10 requires documented governance of training data provenance. You cannot document provenance for data that should not be in that environment in the first place.

Using anonymized merchant data. Stripping names and registration numbers from real merchant profiles does not eliminate re-identification risk — particularly for high-value payment processor clients. A commodities trading platform with $200M in annual volume, registered in the Netherlands, with a beneficial owner in the UAE and an offshore subsidiary in the BVI is not anonymous. There may be fewer than a dozen entities matching that profile globally. A determined analyst — or a regulator — can re-identify the merchant from the structural fingerprint alone. Your “anonymized” simulation data is pseudonymized at best, and GDPR applies in full.
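The structural-fingerprint risk can be made concrete with a k-anonymity count: group the "anonymized" records by their quasi-identifiers and see which combinations are shared by fewer than k records. The sketch below is illustrative only. The column names (`sector`, `registration_country`, `ubo_country`, `offshore_jurisdiction`), the threshold of 5, and the sample rows are all assumptions, not part of any real schema.

```python
from collections import Counter

# Hypothetical quasi-identifier columns; adjust to your own schema.
QUASI_IDENTIFIERS = ("sector", "registration_country", "ubo_country", "offshore_jurisdiction")

def fingerprint(record):
    """Return the tuple of quasi-identifier values for one record."""
    return tuple(record.get(field) for field in QUASI_IDENTIFIERS)

def below_k(records, k=5):
    """Return records whose fingerprint is shared by fewer than k records."""
    counts = Counter(fingerprint(r) for r in records)
    return [r for r in records if counts[fingerprint(r)] < k]

# Made-up rows: names are stripped, but the structure remains.
common = {"sector": "retail", "registration_country": "GB",
          "ubo_country": "GB", "offshore_jurisdiction": None}
rare = {"sector": "commodities", "registration_country": "NL",
        "ubo_country": "AE", "offshore_jurisdiction": "BVI"}
anonymized = [dict(common) for _ in range(6)] + [rare]

exposed = below_k(anonymized, k=5)
print(len(exposed), "of", len(anonymized), "records fall below k")
```

Any record this check returns is a candidate for re-identification from structure alone, regardless of whether its name fields were removed.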

Using generic synthetic generators. Platform-based tools generate flat profiles — a business name, a country, a revenue figure. They produce the digital equivalent of “Acme Corp, United Kingdom, $5M revenue.” Your onboarding simulation trains against these profiles and learns that merchant onboarding is straightforward. Then a real client arrives with a multi-layered ownership structure, three jurisdictions, and a PEP connection at the beneficial ownership level, and the simulation has no frame of reference for what should happen. The system either passes the client without adequate checks or flags everything indiscriminately, creating an alert volume that drowns the compliance team.

Real Data vs. Anonymized vs. Born-Synthetic

| Dimension | Real Data | Anonymized | Born-Synthetic |
|---|---|---|---|
| PII present | Yes | Residual | None |
| Re-identification risk | Certain | Probable (UHNWI/HNW merchants) | Impossible |
| GDPR Art. 25 compliant | No | Disputed | Yes |
| EU AI Act Art. 10 | Violation | Unclear | Compliant |
| Certifiable for auditors | No | No | Yes (Certificate of Origin) |
| Multi-jurisdictional complexity | Yes (but illegal to use) | Degraded by stripping | Full (by construction) |
| Onboarding edge cases | Present but inaccessible | Partially preserved | Systematically generated |
| Fine exposure | Up to 4% global revenue | Up to 4% global revenue | Zero |

Born-Synthetic KYC Data Built for Payment Processor Onboarding Simulation

I built Sovereign Forger because I watched compliance teams run onboarding simulations against data that guaranteed success. Every profile was simple. Every test passed. Every regulator eventually found the gaps. The solution is not more testing — it is better test data.

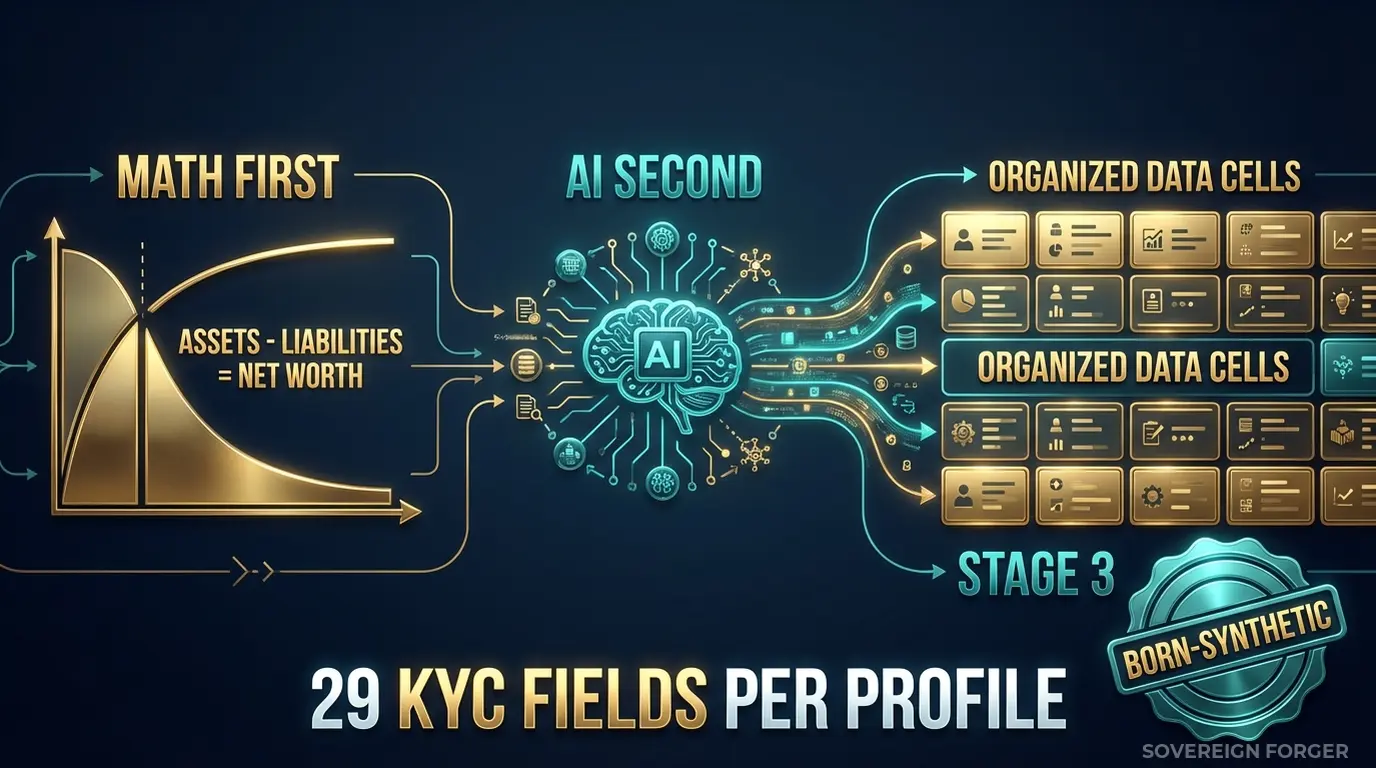

Every profile in the Sovereign Forger KYC dataset is generated from mathematical constraints — not derived from any real person, any real merchant, or any real transaction. The generation pipeline works in two stages:

Math First. Net worth follows a Pareto distribution — the way real wealth concentrates, with a long tail of extreme values that generic generators never produce. Asset allocations are computed within algebraic constraints: Assets – Liabilities = Net Worth, by construction. Property values, equity holdings, cash positions, and liability structures are internally consistent on every single record. This means your onboarding simulation processes profiles with realistic financial complexity, not random numbers that happen to fall in a plausible range.
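As a rough illustration of the "math first" idea, the sketch below draws net worth from a Pareto tail and then computes assets from it, so the balance sheet identity holds by construction. It is not the Sovereign Forger pipeline. The shape parameter, the minimum net worth, and the allocation ranges are all assumptions chosen for the example; only the field names come from the dataset description.

```python
import random

def generate_balance_sheet(rng, min_net_worth=30_000_000, alpha=1.5):
    """Draw net worth from a Pareto tail, then build a balance sheet around it."""
    # paretovariate returns values >= 1, so scaling sets the minimum net worth.
    net_worth = round(min_net_worth * rng.paretovariate(alpha))
    # Liabilities as a share of net worth (assumed range).
    total_liabilities = round(net_worth * rng.uniform(0.05, 0.40))
    # Assets are computed, not sampled, so Assets - Liabilities = Net Worth.
    total_assets = net_worth + total_liabilities
    # Split assets into buckets; cash takes the remainder to avoid rounding drift.
    property_value = round(total_assets * rng.uniform(0.20, 0.40))
    core_equity = round(total_assets * rng.uniform(0.30, 0.50))
    cash_liquidity = total_assets - property_value - core_equity
    return {
        "net_worth_usd": net_worth,
        "total_assets": total_assets,
        "total_liabilities": total_liabilities,
        "property_value": property_value,
        "core_equity": core_equity,
        "cash_liquidity": cash_liquidity,
    }

rng = random.Random(42)  # fixed seed so the run is repeatable
profiles = [generate_balance_sheet(rng) for _ in range(1000)]
print(profiles[0])
```

Because every figure is an integer and assets are derived rather than sampled, the identity is exact on every record rather than approximately true.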

AI Second. A local AI model — running offline, on hardware I control — adds narrative context after the financial figures are locked. Biography, profession, education, philanthropic focus. The AI never touches the numbers. It enriches the profile with culturally coherent details that match the geographic niche and wealth tier. A commodity trader in LatAm gets a different professional narrative than a fintech founder in Singapore — because the underlying wealth structures, typical jurisdictions, and cultural contexts are different.

How This Solves Onboarding Simulation Specifically

Payment processor onboarding is a sequence of decisions: data capture, identity verification, risk scoring, PEP/sanctions screening, source-of-wealth assessment, and EDD escalation. Each decision point needs test data that exercises both the pass path and the fail path.

Data capture completeness. Your onboarding form asks for country of residence, profession, and source of funds. A profile with tax domicile in Switzerland, residence in Singapore, and offshore vehicles in the BVI tests whether your form can capture multi-jurisdictional structures — or whether it silently drops the complexity into a single “country” field.
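A capture-completeness check can be sketched as a comparison between the jurisdictions a profile carries and the ones that survive the form. In the example below, `single_country_form` is a hypothetical stand-in for a form with one "country" field, and the profile values are made up; only the field names are taken from the dataset description.

```python
# Profile fields that each carry a jurisdiction (values below are made up).
JURISDICTION_FIELDS = ("residence_city", "tax_domicile", "offshore_jurisdiction")

def single_country_form(profile):
    """Hypothetical onboarding form that stores a single 'country' value."""
    return {"country": profile["residence_city"]}

def dropped_jurisdictions(profile, captured):
    """Return jurisdictions present in the profile but absent from the captured data."""
    expected = {profile[f] for f in JURISDICTION_FIELDS if profile.get(f)}
    return sorted(expected - set(captured.values()))

profile = {
    "residence_city": "Singapore",
    "tax_domicile": "Switzerland",
    "offshore_jurisdiction": "BVI",
}

lost = dropped_jurisdictions(profile, single_country_form(profile))
print(lost)
```

A non-empty result means the form silently discarded part of the structure before risk scoring ever saw it.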

Risk scoring calibration. A profile flagged `kyc_risk_rating: high` with `pep_status: foreign` and `high_risk_jurisdiction_flag: true` should trigger a different onboarding path than a `kyc_risk_rating: low` profile with no PEP exposure. Your simulation needs both, in realistic proportions: not 50/50 splits that inflate alert volumes, and not 99/1 splits that never test the escalation path.
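To see what "both paths, in realistic proportions" means in practice, a simulation can route each profile and then report the share that lands on each path. The routing rule below is a hypothetical example, not a recommended policy. The path names and the `"none"` and `"medium"` encodings are assumptions.

```python
from collections import Counter

def onboarding_path(profile):
    """Hypothetical routing rule mapping KYC signals to an onboarding path."""
    escalate = (
        profile["kyc_risk_rating"] == "high"
        or profile["pep_status"] != "none"  # "none" is an assumed encoding
        or profile["high_risk_jurisdiction_flag"]
    )
    if escalate:
        return "edd_review"
    if profile["kyc_risk_rating"] == "medium":
        return "standard_review"
    return "auto_approve"

def path_mix(profiles):
    """Return the share of profiles routed to each path."""
    counts = Counter(onboarding_path(p) for p in profiles)
    return {path: n / len(profiles) for path, n in counts.items()}

# Made-up sample profiles.
profiles = [
    {"kyc_risk_rating": "high", "pep_status": "foreign", "high_risk_jurisdiction_flag": True},
    {"kyc_risk_rating": "low", "pep_status": "none", "high_risk_jurisdiction_flag": False},
    {"kyc_risk_rating": "medium", "pep_status": "none", "high_risk_jurisdiction_flag": False},
    {"kyc_risk_rating": "low", "pep_status": "none", "high_risk_jurisdiction_flag": False},
]

mix = path_mix(profiles)
print(mix)
```

Comparing this mix against the escalation rate seen in production is a quick way to tell whether the test set over- or under-represents high-risk clients.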

EDD trigger testing. Enhanced Due Diligence is triggered by specific combinations — PEP status, high-risk jurisdiction, unusual source of wealth, sanctions proximity. Each of these triggers exists in the Sovereign Forger KYC dataset with realistic frequency distributions by niche. Middle East profiles show higher PEP rates (~29%) because that reflects the actual demographic of sovereign wealth in that region. LatAm profiles show higher risk ratings (~84% high-risk) because of the jurisdictional exposure patterns in that niche.
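One way to check trigger coverage is to list which EDD triggers each profile fires and tally them per niche. The sketch below is built on assumptions: the `niche` column name, the `"none"` and `"potential_match"` encodings, and the use of an unverified source of wealth as a stand-in for "unusual source of wealth" are all illustrative choices.

```python
from collections import Counter

def edd_triggers(profile):
    """Return the EDD triggers a profile fires (hypothetical rules)."""
    triggers = []
    if profile.get("pep_status", "none") != "none":
        triggers.append("pep")
    if profile.get("high_risk_jurisdiction_flag"):
        triggers.append("high_risk_jurisdiction")
    if profile.get("sanctions_screening_result") == "potential_match":
        triggers.append("sanctions_proximity")
    if not profile.get("source_of_wealth_verified", True):
        triggers.append("unverified_source_of_wealth")
    return triggers

def trigger_counts_by_niche(profiles):
    """Count fired triggers per (niche, trigger) pair."""
    counts = Counter()
    for p in profiles:
        for trigger in edd_triggers(p):
            counts[(p["niche"], trigger)] += 1
    return counts

# Made-up sample profiles.
profiles = [
    {"niche": "Middle East", "pep_status": "domestic", "high_risk_jurisdiction_flag": False,
     "sanctions_screening_result": "clear", "source_of_wealth_verified": True},
    {"niche": "LatAm", "pep_status": "none", "high_risk_jurisdiction_flag": True,
     "sanctions_screening_result": "potential_match", "source_of_wealth_verified": False},
    {"niche": "LatAm", "pep_status": "none", "high_risk_jurisdiction_flag": True,
     "sanctions_screening_result": "clear", "source_of_wealth_verified": True},
]

counts = trigger_counts_by_niche(profiles)
print(counts)
```

A trigger that never appears in the tally for any niche is an escalation path the simulation has not exercised.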

Source-of-wealth documentation. The `source_of_wealth_verified` and `sow_verification_method` fields tell your onboarding simulation how the source of wealth was assessed — tax returns, bank statements, third-party verification, or self-declaration. Your system handles each method differently. Your simulation should test each one.
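A simple coverage check for verification methods compares the methods your system is expected to handle against the ones that actually occur in the test set. The string encodings in `EXPECTED_METHODS` are assumptions; the real values in `sow_verification_method` may be spelled differently.

```python
# Assumed encodings for the four methods named above.
EXPECTED_METHODS = {
    "tax_returns",
    "bank_statements",
    "third_party_verification",
    "self_declaration",
}

def untested_methods(profiles):
    """Return expected verification methods that never occur in the test set."""
    seen = {p.get("sow_verification_method") for p in profiles}
    return sorted(EXPECTED_METHODS - seen)

# Made-up sample: only two of the four methods are represented.
profiles = [
    {"source_of_wealth_verified": True, "sow_verification_method": "bank_statements"},
    {"source_of_wealth_verified": True, "sow_verification_method": "tax_returns"},
]

gaps = untested_methods(profiles)
print(gaps)
```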

29 Fields Designed for Onboarding Simulation

Every KYC-Enhanced profile includes the fields your onboarding pipeline processes from initial data capture through final decisioning:

Identity & Geography: full_name, residence_city, residence_zone, tax_domicile

Wealth Structure: net_worth_usd, total_assets, total_liabilities, property_value, core_equity, cash_liquidity, assets_composition, liabilities_composition

Professional Context: profession, education, narrative_bio, philanthropic_focus

Offshore Exposure: offshore_jurisdiction, offshore_vehicle

KYC Signals: kyc_risk_rating, pep_status, pep_position, pep_jurisdiction, sanctions_screening_result, sanctions_match_confidence, adverse_media_flag, source_of_wealth_verified, sow_verification_method, high_risk_jurisdiction_flag

Every KYC field is deterministically derived from the profile’s archetype, niche, net worth, and jurisdiction — not randomly assigned. A semiconductor dynasty heir in Pacific Rim gets different risk signals than a private banker in Swiss-Singapore, because the underlying wealth structures, offshore exposure patterns, and regulatory profiles are fundamentally different. Your onboarding simulation processes profiles that behave like real clients — without containing any real client data.
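Before wiring a dataset into a pipeline, it can help to confirm that each record carries the field names listed above. The sketch below groups those names as the list does and reports any that a record lacks. The grouping keys and the helper name `missing_fields` are illustrative choices, not part of the dataset.

```python
# Field names as listed above, grouped the same way.
EXPECTED_FIELDS = {
    "identity_geography": ["full_name", "residence_city", "residence_zone", "tax_domicile"],
    "wealth_structure": ["net_worth_usd", "total_assets", "total_liabilities", "property_value",
                         "core_equity", "cash_liquidity", "assets_composition",
                         "liabilities_composition"],
    "professional_context": ["profession", "education", "narrative_bio", "philanthropic_focus"],
    "offshore_exposure": ["offshore_jurisdiction", "offshore_vehicle"],
    "kyc_signals": ["kyc_risk_rating", "pep_status", "pep_position", "pep_jurisdiction",
                    "sanctions_screening_result", "sanctions_match_confidence",
                    "adverse_media_flag", "source_of_wealth_verified",
                    "sow_verification_method", "high_risk_jurisdiction_flag"],
}

def missing_fields(record):
    """Return the listed field names that are absent from a record."""
    return [name for group in EXPECTED_FIELDS.values() for name in group if name not in record]

# A record with every listed field except one, to show the check firing.
record = {name: None for group in EXPECTED_FIELDS.values() for name in group}
del record["pep_jurisdiction"]

print(missing_fields(record))
```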

Built for Payment Processor Onboarding Testing at Scale

6 Geographic Niches: Silicon Valley, Old Money Europe, Middle East, LatAm, Pacific Rim, Swiss-Singapore — each with culturally coherent wealth patterns, offshore jurisdiction preferences, and KYC signal distributions that reflect the actual regulatory landscape of that region.

31 Wealth Archetypes: Tech founders, commodity traders, shipping dynasty heirs, private bankers, real estate developers, family office managers — the actual client profiles that stress-test your onboarding workflow. Not “Person A, Country B, $X million.”

Realistic KYC Signal Distributions: Risk ratings, PEP statuses, sanctions screening results, and source-of-wealth verification methods distributed with realistic frequencies by niche. Your simulation processes the same proportion of high-risk clients that your production environment encounters — so your alert volumes, escalation rates, and EDD capacity requirements are calibrated against reality.

Algebraically Balanced Financial Profiles: Every record passes the balance sheet test: Assets – Liabilities = Net Worth. Your onboarding system can validate financial consistency on every profile without encountering the arithmetic errors that plague randomly generated test data.
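The balance sheet identity is easy to check on any test set. The function below is a minimal sketch of such a check, not the project's own Balance Sheet Test. It assumes the three field names from the dataset description and accepts string values so it can run on rows read from a file.

```python
def balance_sheet_failures(records, tolerance=0.0):
    """Return (index, gap) for records where Assets - Liabilities != Net Worth."""
    failures = []
    for index, record in enumerate(records):
        gap = (
            float(record["total_assets"])
            - float(record["total_liabilities"])
            - float(record["net_worth_usd"])
        )
        if abs(gap) > tolerance:
            failures.append((index, gap))
    return failures

# Made-up records: the second one is off by 1,000,000.
records = [
    {"total_assets": "60000000", "total_liabilities": "10000000", "net_worth_usd": "50000000"},
    {"total_assets": "45000000", "total_liabilities": "5000000", "net_worth_usd": "39000000"},
]

failures = balance_sheet_failures(records)
print(failures)
```

Running the same function over your current test data gives a direct count of financially inconsistent profiles.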

Pricing

| Tier | Records | Price | Best For |
|---|---|---|---|
| Compliance Starter | 1,000 | $999 | Onboarding flow QA, proof of concept |
| Compliance Pro | 10,000 | $4,999 | Full regression suite, multi-niche testing |
| Compliance Enterprise | 100,000 | $24,999 | AI model training + production simulation |

No SDK. No API key. No sales call. Download a file, open it in Python or Excel, and feed it directly into your onboarding pipeline.
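Loading the file in Python can be as short as the sketch below. It assumes the download is a CSV with a header row, which is not stated here, and that booleans are written as text such as `true`. The sample row is made up and uses an in-memory string in place of a real file.

```python
import csv
import io

NUMERIC_FIELDS = ("net_worth_usd", "total_assets", "total_liabilities")
BOOLEAN_FIELDS = ("high_risk_jurisdiction_flag", "adverse_media_flag", "source_of_wealth_verified")

def load_profiles(handle):
    """Read profiles from a CSV file handle, coercing numeric and boolean columns."""
    profiles = []
    for row in csv.DictReader(handle):
        for name in NUMERIC_FIELDS:
            if row.get(name):
                row[name] = float(row[name])
        for name in BOOLEAN_FIELDS:
            if row.get(name) is not None:
                # Assumed boolean encodings; adjust to the actual file.
                row[name] = row[name].strip().lower() in ("true", "1", "yes")
        profiles.append(row)
    return profiles

# Made-up sample standing in for a downloaded file.
sample = io.StringIO(
    "full_name,net_worth_usd,total_assets,total_liabilities,kyc_risk_rating,high_risk_jurisdiction_flag\n"
    "Example Person,50000000,60000000,10000000,high,true\n"
)

profiles = load_profiles(sample)
print(profiles[0]["kyc_risk_rating"], profiles[0]["net_worth_usd"])
```

For a file on disk, pass `open(path, newline="", encoding="utf-8")` as the handle instead of the in-memory sample.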

Why This Matters Now

Enforcement against payment processors is intensifying. FinCEN, the FCA, and EU regulators have made it clear that payment processors are held to the same compliance standards as banks — with less patience for gaps. Block paid $120M for BSA violations in Cash App onboarding. Western Union’s $586M settlement centered on agent network onboarding failures. MoneyGram’s $125M penalty followed the same pattern. Regulators are not asking whether you have an onboarding process. They are asking whether that process was tested against realistic complexity.

The EU AI Act changes the equation. With most obligations applying from August 2026, the Act classifies certain financial uses of AI, such as creditworthiness assessment, as high-risk under Annex III. If your onboarding decisioning uses machine learning (for risk scoring, PEP screening, or automated EDD triage) and falls within a high-risk category, Article 10 requires documented governance of training data. That means provenance, bias assessment, and GDPR compliance of the data your models learned from. Born-Synthetic data has documentable provenance by construction: generated from mathematical distributions, enriched by a local AI model, zero lineage to any real person.

The balance sheet test is open source. Every Sovereign Forger record passes algebraic validation: Assets – Liabilities = Net Worth. Run the Balance Sheet Test on our data, then run it on whatever test data your onboarding simulation currently uses. The difference is measurable — and it tells you how many financially inconsistent profiles your system has been trained against.

Every dataset ships with a Certificate of Sovereign Origin — documenting the born-synthetic methodology, zero PII lineage, and regulatory alignment. When your compliance officer asks “where did this onboarding test data come from?”, when your auditor asks “can you prove no real client data was used in testing?”, you hand them the certificate. Born-Synthetic data. Compliant by construction — not by anonymization.

Simulate Realistic Client Onboarding

Download 100 free KYC-Enhanced UHNWI profiles. Run the full onboarding simulation — from initial data capture through KYC checks, risk scoring, and EDD triggers. Count how many profiles exercise paths your current test data has never touched. Count how many trigger PEP checks, high-risk jurisdiction flags, or source-of-wealth escalations.

That count is the gap between your simulation and production. That gap is where fines come from.
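The count described above can be sketched as a coverage comparison: reduce each profile to the combination of signals that decides its path, then list the new profiles whose combination never appears in your current test data. Which fields make up the signature is an assumption here. Use whichever signals your own routing logic actually reads.

```python
def path_signature(profile):
    """Reduce a profile to the signal combination that decides its onboarding path."""
    return (
        profile["kyc_risk_rating"],
        profile["pep_status"],
        profile["high_risk_jurisdiction_flag"],
    )

def untouched_profiles(current, candidate):
    """Return candidate profiles whose signature never appears in the current test data."""
    covered = {path_signature(p) for p in current}
    return [p for p in candidate if path_signature(p) not in covered]

# Made-up data: the current test set only contains the simple case.
current = [
    {"kyc_risk_rating": "low", "pep_status": "none", "high_risk_jurisdiction_flag": False},
]
candidate = [
    {"kyc_risk_rating": "low", "pep_status": "none", "high_risk_jurisdiction_flag": False},
    {"kyc_risk_rating": "high", "pep_status": "foreign", "high_risk_jurisdiction_flag": True},
    {"kyc_risk_rating": "medium", "pep_status": "none", "high_risk_jurisdiction_flag": True},
]

gap = untouched_profiles(current, candidate)
print(len(gap), "profiles exercise paths the current test data never touches")
```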

No credit card. No sales call. Just your work email.

Related reading: “PCI DSS Test Data: Why 4.0 Bans Real Cards in Test Environments”, on how PCI DSS 4.0 Requirement 6.5.5 restricts live PANs in pre-production environments.

Frequently Asked Questions

How does synthetic KYC data help payment processors stress-test onboarding flows without violating PCI DSS 4.0?

PCI DSS 4.0 Requirement 6.5.5 prohibits live PANs in pre-production environments unless those environments are part of the cardholder data environment and protected accordingly. Sovereign Forger’s born-synthetic profiles give QA teams statistically realistic KYC records that carry zero lineage to real individuals, so no live cardholder or client data needs to enter testing. This allows processors to run full onboarding regression suites, including negative-path and edge-case scenarios, across hundreds of profiles without pulling the test environment into PCI scope.

What profile diversity is needed to adequately test cross-border AML screening in a customer onboarding pipeline?

Effective AML onboarding tests require profiles spanning multiple nationalities, name scripts, politically exposed person designations, sanctions list matches, and layered source-of-wealth narratives. Regulators such as the PSR, along with FATF guidance on correspondent banking, expect processors to demonstrate that their screening logic handles transliterated names, dual citizenships, and high-risk jurisdiction flags without producing excessive false positives. Synthetic datasets built from realistic demographic distributions let QA engineers verify that screening thresholds catch true matches across at least 30 to 50 distinct country-of-origin combinations before a single real applicant enters the funnel.

How can a neobank use synthetic onboarding data to validate its EU AI Act Article 10 compliance for automated KYC decisioning?

EU AI Act Article 10 requires high-risk AI systems, which include automated identity verification and credit-risk scoring used in onboarding, to be trained and tested on data that is sufficiently representative and free of bias. Synthetic profiles generated from calibrated statistical distributions allow compliance teams to audit decision models against underrepresented demographic segments, such as applicants with non-Latin name characters or thin credit files, producing the documented test evidence regulators expect. This supports both pre-deployment conformity assessments and ongoing monitoring obligations under the Act.

What does born-synthetic financial data mean, and why does it matter specifically for payment processor onboarding testing?

Born-synthetic data is generated entirely from mathematical distributions such as the Pareto distribution for wealth concentration rather than derived, anonymized, or masked from any real individual’s records. Because no natural person underlies the data, there is zero re-identification risk and no lineage that could constitute personal data under GDPR. This makes the dataset compliant with GDPR Article 25 privacy-by-design requirements by construction, not by process. For payment processor onboarding testing, where KYC files contain high-sensitivity fields like passport numbers, income figures, and PEP status, this distinction eliminates the legal risk of a data breach in the test environment entirely.

How quickly can a payment processor QA team get started with synthetic onboarding profiles from Sovereign Forger?

Sovereign Forger provides 100 free synthetic KYC profiles available for instant download using a work email address, with no credit card required. Each profile contains 29 interlocked fields covering risk ratings, PEP status, sanctions screening flags, source-of-wealth verification, and offshore exposure, ensuring referential consistency across the full onboarding record. Teams can load profiles directly into staging environments within minutes, giving engineers a ready-made dataset to begin onboarding flow stress-tests, threshold tuning, and compliance evidence generation the same day without waiting for legal approval to use real applicant data.

Learn more about payment processor onboarding test data and how Born Synthetic data addresses this in our glossary and comparison guides.