Block: $120M. Western Union: $586M. MoneyGram: $125M. PayPal: multiple enforcement actions across jurisdictions. Every one of these penalties traces back to AML systems that could not distinguish between legitimate cross-border complexity and genuine financial crime — because the training data never taught them the difference.

Your AML Model Has Never Seen a Real High-Risk Profile

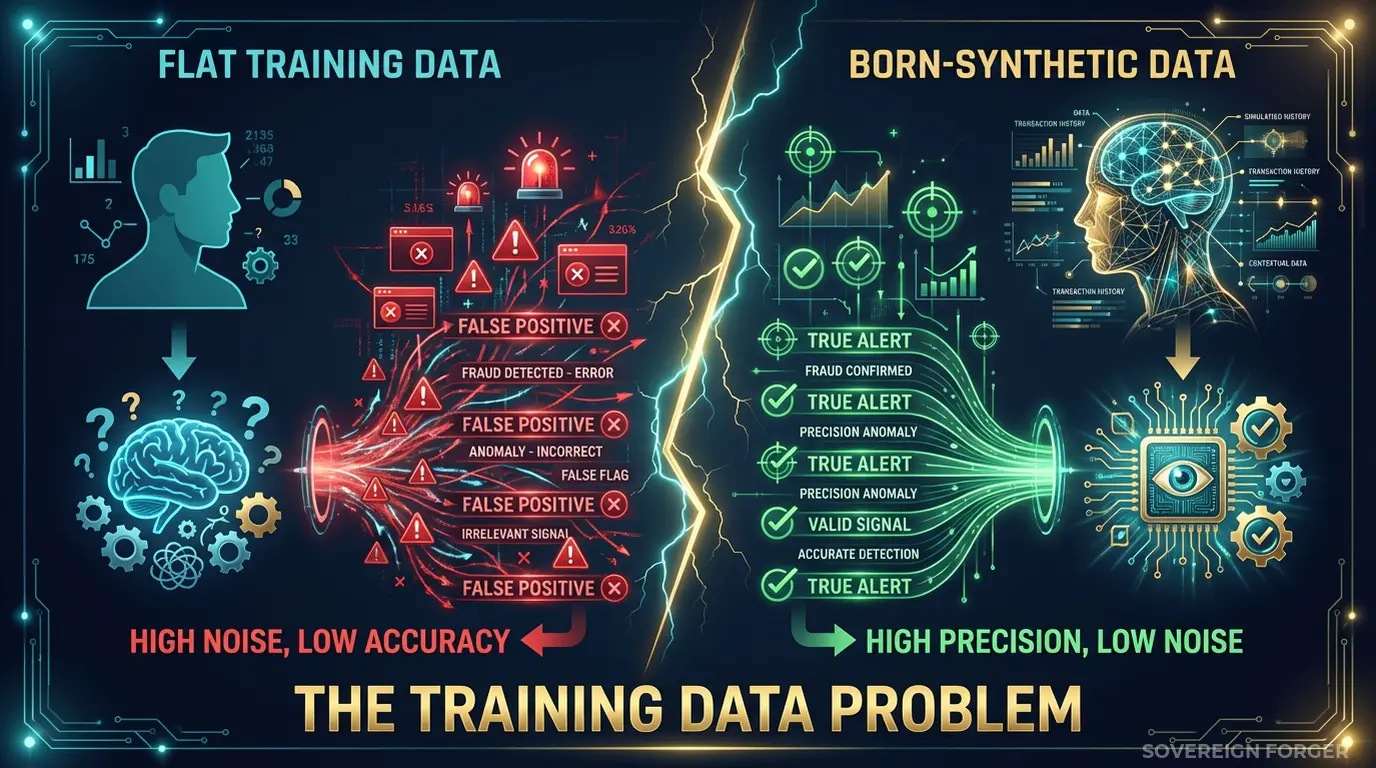

I spent years watching payment processors build AML models. The pattern is always the same. The data science team trains a classifier on internal transaction data — supplemented with a few thousand synthetic profiles generated by whatever tool procurement approved. The profiles are structurally identical: single jurisdiction, single currency, clean counterparties, predictable transaction patterns. The model learns that normal looks simple and anything complex looks suspicious.

Then the model goes into production. A commodities trading firm in Singapore routes a legitimate $4.2M payment through a Cayman Islands intermediary to a counterparty in Panama. The payment crosses three jurisdictions, involves two currencies, and passes through an offshore vehicle. The AML model flags it immediately, not because it detected a genuine risk signal, but because it has never seen a legitimate transaction with this level of structural complexity. It learned that offshore means suspicious, because every offshore entity in its training data was labeled as high risk.

Multiply this by the volume that payment processors handle. Stripe processes hundreds of billions in payments annually. Block moves money across borders for millions of merchants. Adyen operates in dozens of markets simultaneously. At this scale, a false positive rate of even 2% means thousands of legitimate transactions frozen, hundreds of compliance analysts reviewing alerts that should never have been generated, and real suspicious activity buried under noise.

This is the problem I have seen destroy AML programs from the inside. The model is not broken. The training data is broken. When your AML classifier has never encountered a legitimate UHNWI with a trust in the Channel Islands, a tax domicile in Switzerland, and PEP-adjacent family connections — it has no baseline for what legitimate complexity looks like. Everything complex becomes suspicious. Everything suspicious becomes an alert. Every alert becomes a cost.

The numbers tell the story. Western Union paid $586M because its AML systems failed to detect actual money laundering across its global remittance network — while simultaneously generating so many false positives on legitimate cross-border transactions that investigators could not keep up. Block paid $120M because its Cash App growth outpaced its AML capacity, and the models trained on simple payment patterns could not scale to the complexity of real-world financial flows. MoneyGram paid $125M for the same fundamental failure: AML systems that looked good in testing and collapsed under the structural complexity of production traffic.

The root cause in every case is identical. The AML training data was structurally simpler than the real transaction environment. The model learned a simplified version of the world. When the real world showed up, the model failed.

Three Approaches That Do Not Work for Payment Processor AML

Payment processors face a unique version of this problem. Unlike banks that onboard clients one by one, payment processors see the entire spectrum of financial complexity in a single day — from a $12 coffee shop transaction to a $50M cross-border settlement between entities in four jurisdictions. Your AML model must work across that entire range. Most training data does not.

Using internal transaction data to train AML models. This is the default approach, and it creates two immediate problems. First, your own transaction history contains the biases of your current AML system: if the system already misses certain risk patterns, the training data reinforces those blind spots. Second, using real customer data for model training creates a GDPR Article 25 exposure that most payment processors underestimate. The data flows from production into model training environments with broader access, weaker controls, and limited logging. When the EU AI Act’s Article 10 obligations for high-risk systems start to apply in August 2026, you will need to document the provenance and governance of every dataset used to train financial AI, including your AML classifier. Real customer data makes that documentation a liability.

Using anonymized transaction records. Stripping names and account numbers from cross-border payment records does not eliminate re-identification risk — particularly for high-value payment corridors where transaction volumes are low enough to identify patterns. A $15M wire from a specific offshore jurisdiction to a specific counterparty type on a specific date is effectively a fingerprint, with or without the account holder’s name attached. Regulators increasingly recognize this: the EDPB has clarified that pseudonymized data remains personal data under GDPR if re-identification is reasonably possible. For UHNWI payment patterns, it almost always is.
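One way to make the fingerprint problem concrete is a k-anonymity check: group the records by the fields that survive anonymization and look at the smallest group. The sketch below is illustrative only. The field names and sample rows are hypothetical, not drawn from any real payment schema.

```python
from collections import Counter

# Hypothetical quasi-identifiers: fields that typically survive "anonymization".
QUASI_IDENTIFIERS = ("origin_jurisdiction", "counterparty_type", "value_date", "amount_band")


def group_sizes(records, keys=QUASI_IDENTIFIERS):
    """Count how many records share each combination of quasi-identifier values."""
    return Counter(tuple(r[k] for k in keys) for r in records)


def k_anonymity(records, keys=QUASI_IDENTIFIERS):
    """Return k, the size of the smallest group (0 for an empty dataset)."""
    sizes = group_sizes(records, keys)
    return min(sizes.values()) if sizes else 0


def fingerprints(records, keys=QUASI_IDENTIFIERS):
    """Return the records that are unique on the quasi-identifiers alone."""
    sizes = group_sizes(records, keys)
    return [r for r in records if sizes[tuple(r[k] for k in keys)] == 1]


# Invented sample rows: three look-alike retail payments and one large offshore wire.
retail = {"origin_jurisdiction": "US", "counterparty_type": "retail",
          "value_date": "2024-03-01", "amount_band": "under_1k"}
wire = {"origin_jurisdiction": "KY", "counterparty_type": "trust",
        "value_date": "2024-03-01", "amount_band": "over_10m"}
sample = [dict(retail), dict(retail), dict(retail), wire]

print(k_anonymity(sample))    # size of the smallest group
print(fingerprints(sample))   # records identifiable with no name attached
```

A dataset where `k_anonymity` returns 1 contains at least one record that stands alone on those fields, which is exactly the situation in thin high-value corridors.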

Using generic synthetic data generators. Platform-based generators produce retail-scale profiles — simple identity fields, single jurisdictions, uniform risk distributions. They generate the financial equivalent of stock photos: technically correct, structurally meaningless. Your AML model trains on these profiles and learns that wealth is simple, jurisdictions are domestic, and offshore exposure does not exist. Then a legitimate merchant settlement routes through a BVI intermediary and the model has zero frame of reference for whether this is normal or suspicious.

Real Data vs. Anonymized vs. Born-Synthetic

| Dimension | Real Transaction Data | Anonymized Records | Born-Synthetic |
|---|---|---|---|
| PII present | Yes | Residual | None |
| Re-identification risk | Certain | Probable (high-value corridors) | Impossible |
| GDPR Art. 25 compliant | No | Disputed | Yes |
| EU AI Act Art. 10 | Violation risk | Unclear provenance | Compliant |
| Certifiable for auditors | No | No | Yes (Certificate of Origin) |
| Reflects UHNWI complexity | Partially (biased by existing system) | Partially (minus identifiers) | Yes (31 archetypes × 6 niches) |
| Fine exposure | Up to 4% global revenue | Up to 4% global revenue | Zero |

Born-Synthetic AML Training Data Built for Payment Processor Complexity

I built the Sovereign Forger pipeline specifically to solve the training data problem I kept seeing in financial institutions. The core principle is simple: if your AML model needs to distinguish between legitimate complexity and genuine risk, the training data must contain both — in realistic proportions, with realistic structure, and with zero exposure to real persons.

Every profile in the Sovereign Forger KYC dataset is generated from mathematical constraints — not derived from any real person, any real transaction, or any real payment record.

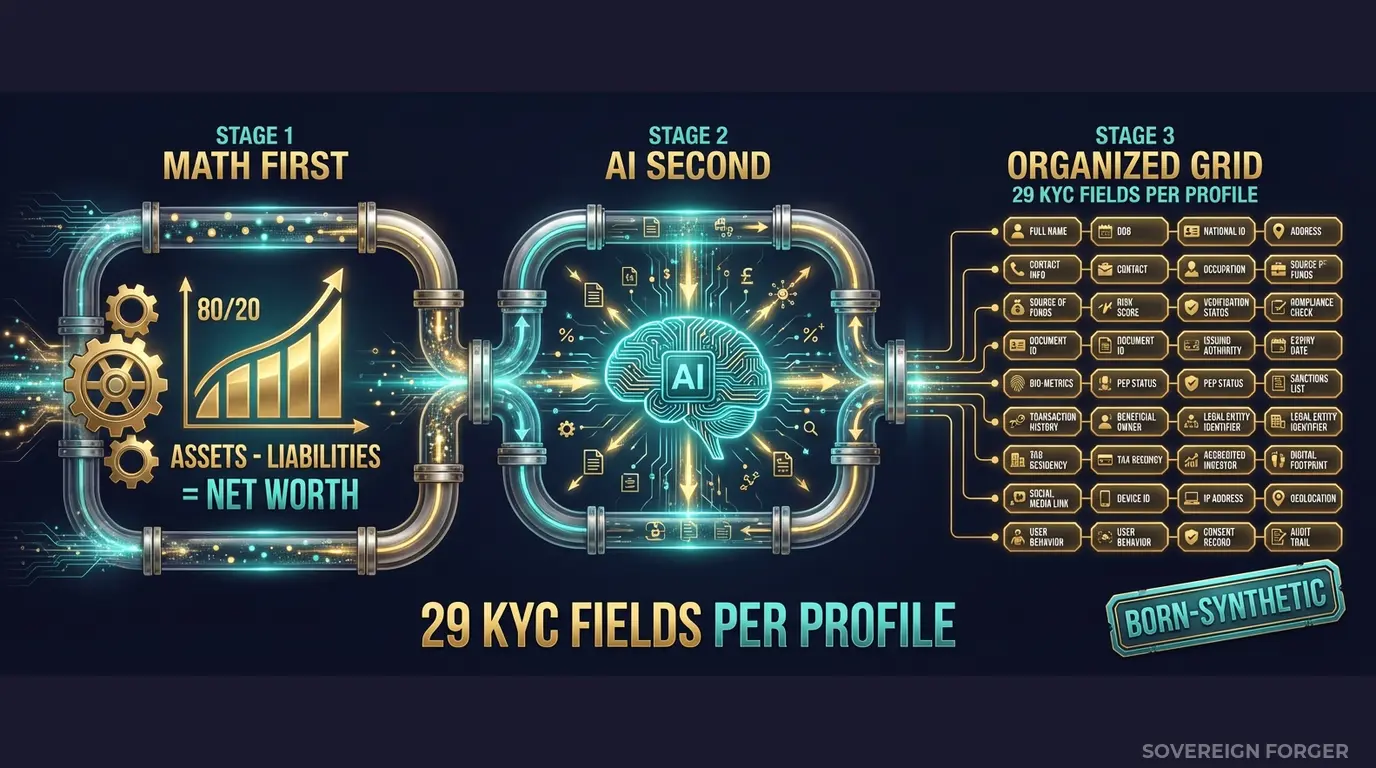

Math First. Net worth follows a Pareto distribution — the actual shape of real wealth distribution, not a bell curve. This matters for AML training because Pareto-distributed data produces the right frequency of ultra-high-net-worth profiles at the tail. A payment processor’s AML model needs to learn what a $200M net worth profile looks like structurally, not just a scaled-up version of a $200K profile. The wealth composition is computed within algebraic constraints: Assets – Liabilities = Net Worth, by construction. Every balance sheet balances on every record. Zero exceptions. When your AML model ingests this data, it learns wealth structures that are internally consistent — the way real wealth is structured.
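To show what "balanced by construction" can look like in practice, here is a minimal sketch. It is not the Sovereign Forger pipeline itself: the $30M floor, the `alpha` shape parameter, the leverage range, and the asset split are all assumptions picked for illustration. The point is the ordering: net worth is sampled from a Pareto tail, and liabilities are derived rather than sampled, so the identity cannot drift.

```python
import random

UHNW_FLOOR_USD = 30_000_000  # assumed lower bound for the Pareto tail


def generate_balance_sheet(rng, alpha=1.5):
    """Draw a net worth from a Pareto tail, then build a sheet that balances exactly."""
    # paretovariate(alpha) returns a value >= 1.0 with a heavy right tail.
    net_worth = round(UHNW_FLOOR_USD * rng.paretovariate(alpha))

    # Assumed leverage: liabilities as a share of total assets.
    leverage = rng.uniform(0.05, 0.40)
    total_assets = round(net_worth / (1.0 - leverage))
    # Liabilities are derived, never sampled, so the identity holds exactly.
    total_liabilities = total_assets - net_worth

    # Assumed asset split; the last bucket absorbs any rounding remainder.
    property_value = round(total_assets * rng.uniform(0.10, 0.30))
    core_equity = round(total_assets * rng.uniform(0.40, 0.60))
    cash_liquidity = total_assets - property_value - core_equity

    return {
        "net_worth_usd": net_worth,
        "total_assets": total_assets,
        "total_liabilities": total_liabilities,
        "property_value": property_value,
        "core_equity": core_equity,
        "cash_liquidity": cash_liquidity,
    }


rng = random.Random(42)  # fixed seed so the example is reproducible
sheet = generate_balance_sheet(rng)
print(sheet["total_assets"] - sheet["total_liabilities"] == sheet["net_worth_usd"])
```

Working in whole dollars keeps the arithmetic in integers, which is why the equality check is exact rather than approximate.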

AI Second. After the financial figures are locked and immutable, a local AI model (running entirely offline — no data ever leaves the machine) adds narrative enrichment: biography, profession, philanthropic focus, and other contextual details. The AI enrichment makes every profile unique and culturally coherent, but it never touches the numbers or the KYC signals. This separation is what makes the data trustworthy for model training — the financial structure is mathematically guaranteed, and the narrative context is linguistically realistic.

How This Solves the AML Training Data Problem

The specific value for payment processor AML models is in the KYC signal layer. Every profile includes 29 fields designed to teach your classifier what risk actually looks like across different wealth architectures:

KYC Risk Rating is derived from the profile’s archetype, offshore exposure, and jurisdiction — not randomly assigned. A family office manager in Switzerland with a Liechtenstein trust gets a different risk profile than a tech founder in Silicon Valley with a Delaware LLC. Your model learns that risk correlates with structural complexity, not with geography alone.

PEP Status follows realistic distributions by niche. Middle East profiles carry approximately 29% PEP exposure (reflecting sovereign wealth and government-adjacent business structures). European profiles carry lower PEP rates but higher offshore complexity. Your AML model learns the actual correlation between PEP status and wealth architecture — not a uniform 5% PEP rate across all profiles.

Sanctions Screening Results include clear, potential match, and confirmed match statuses with match confidence scores. Potential matches are the edge cases that destroy AML teams — names that partially match sanctions lists, jurisdictions that overlap with restricted countries, entity structures that resemble sanctioned patterns but are legitimate. Your model must learn to handle these, and it can only learn from training data that contains them in realistic proportions.
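To see why potential matches are the hard case, consider a toy name screener. This is a sketch under loud assumptions: the watchlist entries are invented, the thresholds are arbitrary, and simple string similarity from the standard library stands in for whatever matching logic a real screening vendor uses.

```python
from difflib import SequenceMatcher

# Invented watchlist entries for illustration; not real sanctions data.
WATCHLIST = ["Alexander Petrov", "Global Meridian Trading Ltd"]


def screen_name(name, watchlist=WATCHLIST, potential=0.80, confirmed=0.97):
    """Return (status, confidence) for the closest watchlist entry.

    The thresholds are assumptions chosen for the example, not regulatory values.
    """
    best = max(
        (SequenceMatcher(None, name.lower(), entry.lower()).ratio() for entry in watchlist),
        default=0.0,
    )
    if best >= confirmed:
        status = "confirmed_match"
    elif best >= potential:
        status = "potential_match"
    else:
        status = "clear"
    return status, round(best, 2)


for candidate in ("Alexander Petrov", "Aleksandr Petrov", "Maria Gonzalez"):
    print(candidate, screen_name(candidate))
```

The transliteration variant lands in the middle band: too close to clear automatically, too far to confirm. Training data needs rows like that one, labeled correctly, for a classifier to learn what to do with them.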

Source of Wealth Verification includes four verification methods (tax returns, bank statements, third-party verification, self-declared) distributed by archetype. A commodity trader is more likely to be self-declared, while a private equity partner is more likely to have third-party audited returns. Your AML model learns that verification method correlates with wealth source, not with risk level.

29 Fields That Map to Your AML Pipeline

Identity & Geography: full_name, residence_city, residence_zone, tax_domicile

Wealth Structure: net_worth_usd, total_assets, total_liabilities, property_value, core_equity, cash_liquidity, assets_composition, liabilities_composition

Professional Context: profession, education, narrative_bio, philanthropic_focus

Offshore Exposure: offshore_jurisdiction, offshore_vehicle

KYC Signals: kyc_risk_rating, pep_status, pep_position, pep_jurisdiction, sanctions_screening_result, sanctions_match_confidence, adverse_media_flag, source_of_wealth_verified, sow_verification_method, high_risk_jurisdiction_flag
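Before feeding records into a pipeline, it can help to confirm that each one carries the fields named above. The sketch below assumes each record is loaded as a Python dict keyed by those field names; the file format and loader are left out because they are not specified here.

```python
# Field names copied from the groups listed above.
FIELD_GROUPS = {
    "identity_geography": ["full_name", "residence_city", "residence_zone", "tax_domicile"],
    "wealth_structure": ["net_worth_usd", "total_assets", "total_liabilities", "property_value",
                         "core_equity", "cash_liquidity", "assets_composition",
                         "liabilities_composition"],
    "professional_context": ["profession", "education", "narrative_bio", "philanthropic_focus"],
    "offshore_exposure": ["offshore_jurisdiction", "offshore_vehicle"],
    "kyc_signals": ["kyc_risk_rating", "pep_status", "pep_position", "pep_jurisdiction",
                    "sanctions_screening_result", "sanctions_match_confidence",
                    "adverse_media_flag", "source_of_wealth_verified",
                    "sow_verification_method", "high_risk_jurisdiction_flag"],
}
EXPECTED_FIELDS = [name for names in FIELD_GROUPS.values() for name in names]


def missing_fields(record):
    """Return the expected field names that are absent from a record (a dict)."""
    return [name for name in EXPECTED_FIELDS if name not in record]


# Placeholder record with every expected key present and one removed.
record = dict.fromkeys(EXPECTED_FIELDS)
del record["pep_status"]
print(missing_fields(record))
```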

Every field is deterministically derived — meaning the same UUID always produces the same KYC fields. This matters for AML model training because you can reproduce results, audit training data provenance, and demonstrate to regulators exactly how every data point was generated. No black boxes. No unexplainable inputs.
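A common way to get this kind of reproducibility is to seed a random generator from the record identifier. The sketch below illustrates that general pattern, not the actual Sovereign Forger derivation: the label values, the snake_case method names, and the 5% flag rate are placeholders.

```python
import hashlib
import random
import uuid

RISK_RATINGS = ["low", "medium", "high"]  # hypothetical labels
SOW_METHODS = ["tax_returns", "bank_statements", "third_party_verification", "self_declared"]


def kyc_fields_for(profile_id: uuid.UUID) -> dict:
    """Derive illustrative KYC fields from a UUID so reruns give identical output."""
    # Hash the UUID bytes into a stable integer seed (unaffected by PYTHONHASHSEED).
    seed = int.from_bytes(hashlib.sha256(profile_id.bytes).digest()[:8], "big")
    rng = random.Random(seed)
    return {
        "kyc_risk_rating": rng.choice(RISK_RATINGS),
        "sow_verification_method": rng.choice(SOW_METHODS),
        "adverse_media_flag": rng.random() < 0.05,  # assumed rate, illustration only
    }


profile_id = uuid.UUID("12345678-1234-5678-1234-567812345678")
first = kyc_fields_for(profile_id)
second = kyc_fields_for(profile_id)
print(first == second)
```

Because the seed depends only on the UUID, an auditor can regenerate any single record without rerunning the whole dataset.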

Built for Payment Processor AML at Scale

6 Geographic Niches: Silicon Valley, Old Money Europe, Middle East, LatAm, Pacific Rim, Swiss-Singapore. Payment processors operate globally — your AML model must handle wealth from every major corridor. Each niche has culturally coherent naming conventions, wealth structures, offshore patterns, and KYC signal distributions that reflect the actual financial architecture of that region.

31 Wealth Archetypes: Tech founders routing Series C proceeds through offshore vehicles. Commodity traders with counterparties in multiple restricted jurisdictions. Family office managers distributing assets across three continents. Real estate developers with complex trust structures. These are the profiles that generate the most AML alerts in production — and the profiles your training data must contain.

Realistic Signal Distribution: Risk ratings are not uniformly distributed. PEP statuses correlate with geography and archetype. Sanctions screening results include the ambiguous partial matches that consume 80% of your compliance team’s time. Source of wealth verification methods vary by profession. Your model trains on data that reflects the actual complexity of cross-border payment compliance — not a simplified version of it.

Offline Generation, Zero Cloud Exposure: The entire pipeline runs on local hardware. No profile data ever touches a network, a cloud API, or a third-party service. For payment processors subject to PCI DSS and SOC 2, this eliminates an entire category of vendor risk assessment.

Pricing

| Tier | Records | Price | Best For |
|---|---|---|---|
| Compliance Starter | 1,000 | $999 | AML model proof of concept, initial training |
| Compliance Pro | 10,000 | $4,999 | Full model training cycle |
| Compliance Enterprise | 100,000 | $24,999 | Production AML model training + ongoing testing |

No SDK. No API key. No sales call. Download a file, feed it into your AML pipeline, and measure the difference.

Why This Matters Now

The enforcement trajectory is clear. Block paid $120M in 2024. Western Union’s $586M penalty remains the benchmark for cross-border payment AML failures. MoneyGram’s $125M fine established that remittance-scale operations face the same scrutiny as banks. PayPal has faced multiple enforcement actions across jurisdictions. FinCEN, the FCA, and EU regulators are all increasing scrutiny on payment processor AML programs — and the focus is shifting from “do you have an AML system” to “how was your AML system trained and tested.”

The EU AI Act changes everything for AML models. Financial AI is classified as high-risk under Annex III. Article 10 requires documented governance of training data — including provenance, bias assessment, and compliance with data protection law. If your AML classifier trains on real customer data or poorly anonymized records, you will need to prove GDPR compliance on the training data itself. Born-Synthetic data eliminates this requirement entirely: there is no personal data in the training set, so GDPR does not apply to the training data. The Certificate of Sovereign Origin documents this for your auditor.

The false positive problem is a cost problem. Every false positive alert costs between $25 and $75 to investigate. At payment processor volumes, a 1% reduction in false positive rate can save millions annually. The way to reduce false positives is not to loosen your AML rules — it is to train your model on data that includes legitimate structural complexity so it stops flagging every offshore vehicle as suspicious. I have seen teams cut their false positive rate by training on profiles that actually resemble their high-value merchant base. The model learns that a BVI intermediary is not inherently suspicious — it depends on the full wealth structure around it.
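The arithmetic behind that claim is easy to check. The sketch below uses the $25 to $75 per-alert range from above and assumes a hypothetical volume of 10 million screened transactions a year. It also reads "a 1% reduction" as one percentage point of screened transactions, which is my interpretation rather than a stated definition.

```python
def annual_savings_usd(transactions_screened, fp_reduction_bps, cost_per_alert_usd):
    """Savings from false-positive alerts that are no longer generated.

    fp_reduction_bps is the drop in false positive rate in basis points
    (100 bps = 1 percentage point), kept as an integer to avoid float rounding.
    """
    avoided_alerts = transactions_screened * fp_reduction_bps // 10_000
    return avoided_alerts * cost_per_alert_usd


SCREENED_PER_YEAR = 10_000_000  # assumed volume, not a figure from any processor

low = annual_savings_usd(SCREENED_PER_YEAR, 100, 25)
high = annual_savings_usd(SCREENED_PER_YEAR, 100, 75)
print(f"${low:,} to ${high:,} per year")
```

Swap in your own screened volume and investigation cost to see where your program sits.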

The balance sheet test is open source. Every Sovereign Forger record passes algebraic validation: Assets – Liabilities = Net Worth. Run the Balance Sheet Test on our data, then run it on your current training data. If your current data does not pass, your AML model is training on financially incoherent profiles — learning patterns from data that could not exist in the real world.
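If you want to run the same check on your own training data, the idea fits in a few lines. This is my own minimal sketch of the identity check, not the published test itself, and it assumes records are dicts with the `total_assets`, `total_liabilities`, and `net_worth_usd` fields listed earlier.

```python
from decimal import Decimal


def balance_sheet_failures(records):
    """Return (index, gap) for every record where assets - liabilities != net worth."""
    failures = []
    for index, record in enumerate(records):
        # Decimal(str(...)) accepts ints and numeric strings without float drift.
        assets = Decimal(str(record["total_assets"]))
        liabilities = Decimal(str(record["total_liabilities"]))
        net_worth = Decimal(str(record["net_worth_usd"]))
        gap = assets - liabilities - net_worth
        if gap != 0:
            failures.append((index, gap))
    return failures


# Invented rows: the first balances, the second is off by 0.25.
rows = [
    {"total_assets": 120, "total_liabilities": 20, "net_worth_usd": 100},
    {"total_assets": "150.50", "total_liabilities": "50.25", "net_worth_usd": "100.00"},
]
print(balance_sheet_failures(rows))
```

An empty list means every record balances. Anything else tells you which rows to inspect and by how much they are off.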

Every dataset ships with a Certificate of Sovereign Origin — documenting the born-synthetic methodology, zero PII lineage, and regulatory alignment. When your compliance officer asks “where did the AML training data come from,” you hand them the certificate. When your auditor asks “does this data contain any real person’s information,” the answer is documented and certifiable: no.

Train Your AML Model on Data That Reflects Reality

Download 100 free KYC-Enhanced UHNWI profiles. Feed them into your AML pipeline. Check whether your model can distinguish structural complexity from genuine risk signals.

If your current training data contains zero offshore vehicles, zero PEP-adjacent connections, and zero multi-jurisdictional wealth structures, your model has never learned the difference between legitimate complexity and actual risk. That gap is where the fines come from.

No credit card. No sales call. Just your work email.

Related reading: PCI DSS Test Data — Why 4.0 Bans Real Cards in Test Environments, on how PCI DSS 4.0 Requirement 6.5.5 prohibits production data in testing.

Frequently Asked Questions

How does synthetic AML training data help payment processors meet PCI DSS 4.0 requirements without exposing real cardholder data?

PCI DSS 4.0 Requirement 6.5.5, mandatory since March 2025, explicitly prohibits the use of live primary account numbers in pre-production environments such as test and training systems. Payment processors using Sovereign Forger’s born-synthetic financial profiles satisfy this requirement by construction: no real card data is ever introduced into the pipeline. Compliance teams can validate AML model performance across thousands of synthetic transaction scenarios, including high-velocity cross-border patterns, without triggering a PCI DSS scope violation or requiring data masking workflows.

Can synthetic financial profiles realistically represent the offshore structures and layering techniques that payment processors need to detect under cross-border AML regulations?

Sovereign Forger generates synthetic profiles with interlocked fields that mirror real-world typologies: shell company ownership chains, multi-jurisdiction fund flows, correspondent banking relationships, and structuring patterns below reporting thresholds. These profiles are calibrated to reflect risk distributions consistent with FATF guidance and PSR cross-border AML obligations. Models trained on this data learn to flag layering and integration-stage activity with measurably higher precision than models trained on anonymized historical data, which tends to under-represent rare high-risk typologies by design.

How does training AML detection models on synthetic KYC profiles reduce the regulatory exposure payment processors face under the EU AI Act?

EU AI Act Article 10 requires that training datasets for high-risk AI systems be relevant, representative, and free from significant errors. Synthetic KYC profiles from Sovereign Forger are generated to pre-specified risk distributions, ensuring PEP coverage, sanctions-list adjacency, and adverse-media indicators are represented at statistically defensible rates. This gives compliance officers documented evidence that model training data met governance standards before deployment, directly supporting the conformity assessments required for AML systems classified as high-risk under Annex III of the Act.

What does born-synthetic mean in the context of payment processor AML training data, and why does it matter for GDPR compliance?

Born-synthetic means each financial profile is generated entirely from mathematical distributions, including Pareto distributions for wealth concentration and transaction value spread, with zero lineage to any real individual. No real person’s data is anonymized, pseudonymized, or derived from an existing record. This satisfies GDPR Article 25 privacy-by-design by construction rather than by process: there is no personal data to protect, no data subject rights to manage, and no re-identification risk to model. For payment processors operating across EU jurisdictions, this eliminates the legal basis analysis that anonymized datasets still require.

How can a payment processor AML team get started with Sovereign Forger’s synthetic training data?

Sovereign Forger provides 100 free synthetic KYC profiles available for instant download via a verified work email address, with no credit card required. Each profile contains 29 interlocked fields covering risk ratings, PEP status, sanctions screening results, adverse media flags, source of wealth narratives, and cross-border transaction indicators. The sample set is structured to include a statistically meaningful spread of risk tiers, giving data science and compliance teams enough variety to begin feature engineering and baseline model evaluation before committing to a full dataset license.