I build Born-Synthetic financial datasets from statistical distributions, not from AI output. When Shumailov et al. reported in Nature in 2024 that AI models degrade when trained recursively on AI-generated data, it validated a design decision I had made from day one: the financial skeleton of every profile must come from mathematics, not from model inference.

This is not an abstract research finding. Model collapse is happening now, and it has specific consequences for financial AI.

Key Takeaway: Model collapse occurs when AI models are recursively trained on AI-generated data — distributions narrow, rare events disappear, and tail behavior degrades. Financial AI (credit scoring, fraud detection, AML) depends on accurate tail distributions. Born-Synthetic data is immune because its core generation is mathematical (Pareto distributions, algebraic constraints), not model-based. There is no recursive loop to degrade.

The Contamination Problem

The volume of AI-generated content on the internet is growing quickly. Search results, blog posts, product descriptions, documentation: an increasing proportion of new web content is written by language models that were themselves trained on the internet.

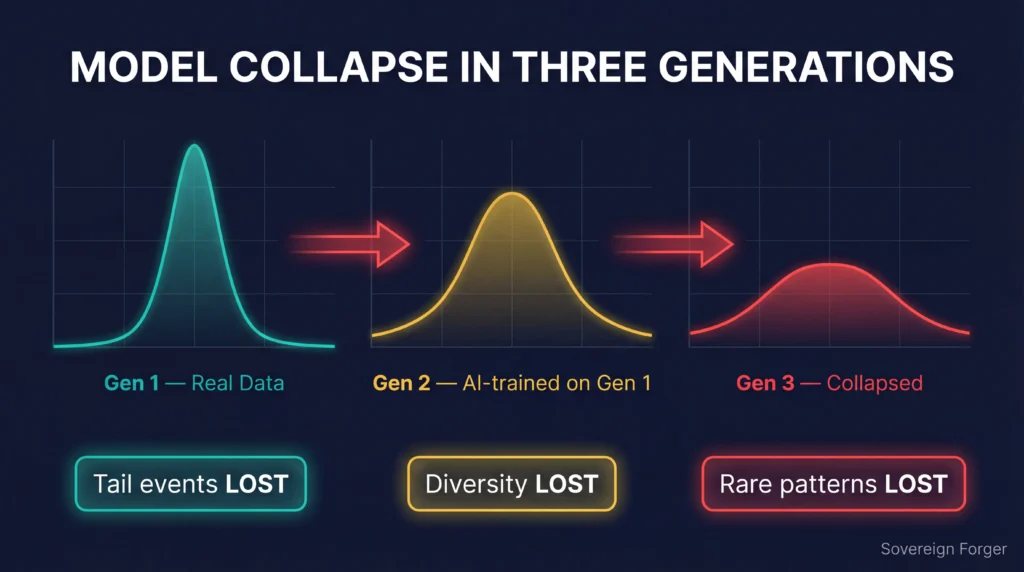

This creates a recursive loop: Model A trains on web data. Model A generates text that gets published. Model B trains on web data that now includes Model A’s output. Model B’s output goes back to the web. Each generation loses statistical fidelity to the original distribution.
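The loop described above can be illustrated with a toy simulation. This is a minimal sketch, not the experiment from the Nature paper: the "model" here is simply the empirical distribution of its training set, so each generation is produced by resampling the previous one with replacement. The sample size, the Pareto shape, and the function name are assumptions chosen for illustration.

```python
import random


def simulate_recursive_resampling(n=1000, generations=20, alpha=1.5, seed=0):
    """Toy stand-in for recursive training.

    Generation 0 is drawn from a Pareto distribution. Every later
    generation is "trained" only on the previous one: it resamples
    that generation's values with replacement.
    Returns a list of (distinct_values, max_value), one per generation.
    """
    rng = random.Random(seed)
    data = [rng.paretovariate(alpha) for _ in range(n)]
    history = [(len(set(data)), max(data))]
    for _ in range(generations):
        data = rng.choices(data, k=n)  # new generation sees only old output
        history.append((len(set(data)), max(data)))
    return history


if __name__ == "__main__":
    for gen, (distinct, largest) in enumerate(simulate_recursive_resampling()):
        print(f"generation {gen:2d}: distinct={distinct:4d} max={largest:12.2f}")
```

Because a resampled generation can only contain values that were already present, the number of distinct values and the maximum can never increase. Once a rare extreme value drops out, no later generation can recover it.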

The practical consequences for AI in financial services are severe:

- Credit scoring models trained on data that includes AI-generated patterns learn to predict AI output, not real customer behavior.

- Fraud detection systems calibrated against AI-contaminated data miss real-world anomaly patterns that have been smoothed out.

- Risk models that depend on tail distributions, where financial risk concentrates, lose exactly those tails through recursive training.

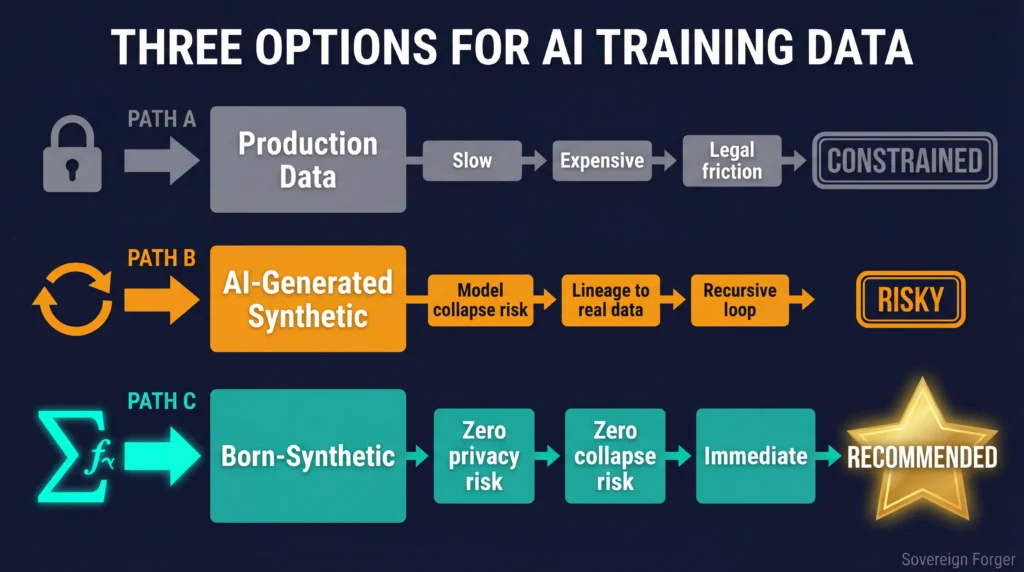

Why Most Synthetic Data Makes It Worse

Here is where it gets counterintuitive: most synthetic data generation makes the model collapse problem worse, not better.

The dominant approach to synthetic data works like this: start from real data, train a generative model (GAN, VAE, or transformer) on it, then use the trained model to generate new records.

The output is, by definition, AI-generated data. It carries the same model collapse risk as any other AI output. If you train a fraud detection model on synthetic data generated by another AI model, you are feeding the recursive loop.

Some platforms mitigate this through careful statistical validation. But the fundamental architecture remains: AI in, AI out, recursive degradation risk.

How Born-Synthetic Data Breaks the Loop

Born-Synthetic data does not use AI models to generate its core data. The financial skeleton of every profile — net worth, assets, liabilities, income, risk ratings — is generated from statistical distributions and algebraic constraints. No neural networks. No generative models. No AI inference.
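A minimal sketch of what generation from distributions plus algebraic constraints can look like. The field names, parameter values, and the debt-ratio rule are hypothetical and are not the actual Born-Synthetic schema. The point is only that each record is built from a sampled value and an accounting identity, with no trained model involved.

```python
import random


def make_profile(rng, alpha=1.5, min_assets_cents=1_000_000):
    """Build one hypothetical profile from a Pareto draw and an identity.

    Amounts are integer cents so the constraint
    net_worth == assets - liabilities holds exactly.
    """
    # Pareto-distributed total assets (paretovariate returns values >= 1).
    assets = int(min_assets_cents * rng.paretovariate(alpha))
    # Assumed rule: liabilities are a uniform share (0 to 80%) of assets.
    liabilities = int(assets * rng.uniform(0.0, 0.8))
    return {
        "assets_cents": assets,
        "liabilities_cents": liabilities,
        "net_worth_cents": assets - liabilities,
    }


def satisfies_constraints(profile):
    """Check the accounting identity and basic sign constraints."""
    return (
        profile["net_worth_cents"]
        == profile["assets_cents"] - profile["liabilities_cents"]
        and 0 <= profile["liabilities_cents"] <= profile["assets_cents"]
    )


rng = random.Random(42)
profiles = [make_profile(rng) for _ in range(1000)]
print(all(satisfies_constraints(p) for p in profiles))
```

Verification in this style is a pass or fail check on every record rather than a statistical comparison against a reference dataset.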

Contextual details are added in a single enrichment pass — not a recursive training loop. The output is never fed back as training data to itself or any other model.

The distinction is architectural:

- AI-generated synthetic data: Model output → feeds future training → recursive degradation

- Born-Synthetic data: Mathematical generation → single-pass enrichment → no feedback path

There is nothing for model collapse to act on because the core generation samples from specified mathematical distributions and applies algebraic constraints; it never fits a model to earlier output.

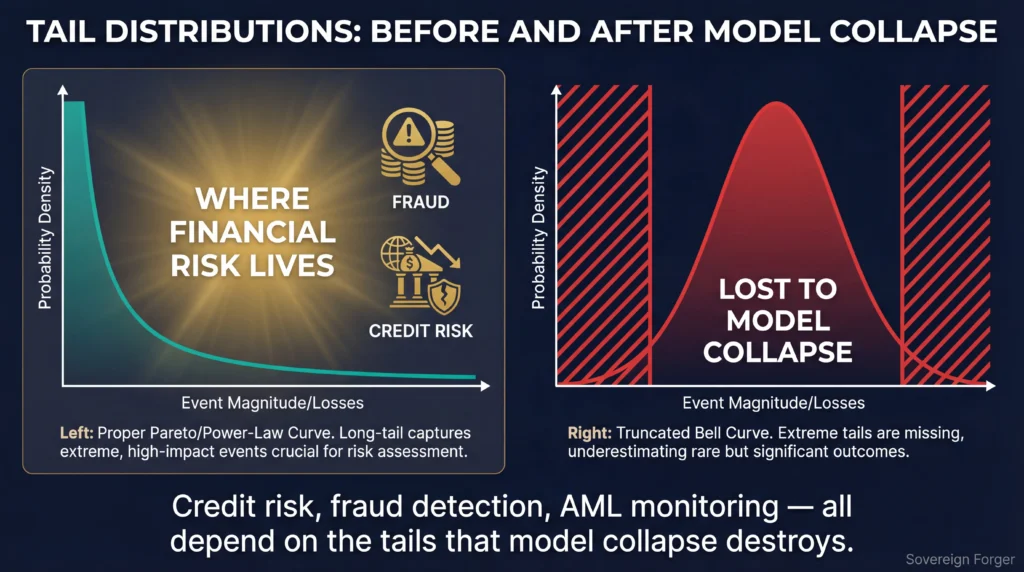

Why Tail Distributions Matter in Finance

Model collapse disproportionately affects the tails of distributions: the rare, extreme events that drive financial risk. A wealth distribution that loses its tail underestimates how often very high net worth individuals occur. A transaction monitoring model that loses tail behavior misses the unusual patterns that indicate money laundering.

Financial AI applications live in the tails. Credit risk, fraud detection, AML monitoring, stress testing — all depend on accurate representation of rare events. When recursive training smooths these tails, the resulting models systematically underestimate risk.

Born-Synthetic data preserves tails by construction. Pareto distributions are generated directly, not inferred from training data. The long tail is a mathematical input, not a statistical artifact that can be lost through recursive degradation.
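Direct generation of a Pareto tail can be sketched with inverse-transform sampling: if U is uniform on (0, 1], then x_m / U^(1/alpha) is Pareto-distributed with scale x_m and shape alpha, and the tail probability is P(X > x) = (x_m / x)^alpha. The parameter values below are illustrative assumptions, not the ones used in the product.

```python
import random


def sample_pareto(n, alpha, x_m, seed=0):
    """Draw n Pareto(alpha, x_m) values by inverse-transform sampling."""
    rng = random.Random(seed)
    # 1 - random() lies in (0, 1], which avoids division by zero.
    return [x_m / (1.0 - rng.random()) ** (1.0 / alpha) for _ in range(n)]


def tail_probability(x, alpha, x_m):
    """Theoretical P(X > x) for a Pareto distribution, valid for x >= x_m."""
    return (x_m / x) ** alpha


alpha, x_m, threshold = 1.5, 100_000.0, 1_000_000.0
wealth = sample_pareto(200_000, alpha, x_m)
observed = sum(w > threshold for w in wealth) / len(wealth)
print(f"theoretical tail mass: {tail_probability(threshold, alpha, x_m):.4f}")
print(f"observed tail mass:    {observed:.4f}")
```

The tail weight is set by the `alpha` argument, so it is an explicit input that can be documented and checked against the closed-form tail probability.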

Implications for EU AI Act Compliance

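Article 10 of the EU AI Act requires that training data for high-risk AI systems be “relevant, sufficiently representative, and to the best extent possible, free of errors.” Annex III classifies credit scoring of natural persons, and risk assessment and pricing for life and health insurance, as high-risk. AI systems used for detecting financial fraud are explicitly excepted from the credit scoring entry, so fraud models are not high-risk under that entry.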

Model collapse creates a specific Article 10 problem: data that was representative when first generated becomes progressively less representative through recursive use. This degradation is difficult to detect and nearly impossible to document. How do you prove that your training data has not been contaminated by other models’ output?

Born-Synthetic data provides a clean answer: the data was never generated by an AI model in the first place. The statistical distributions are documented, auditable, and deterministic. The Certificate of Sovereign Origin documents that provenance and attests that no recursive contamination has occurred.

Obligations for the high-risk systems listed in Annex III apply from 2 August 2026. See the full Compliance Timeline for every deadline that affects your AI training data strategy.

The Practical Takeaway

If you are training AI models for financial applications, the model collapse problem will intensify as AI-generated content continues to proliferate. The question is not whether your training data will be contaminated — it is whether you are building on a foundation that is immune to contamination.

Born-Synthetic data is immune because it never enters the recursive loop. The financial distributions come from mathematics. The cultural context comes from a single-pass enrichment with no feedback. The quality comes from deterministic verification, not statistical approximation.

The organizations that invest in mathematically grounded training data now will have cleaner models, better compliance documentation, and more defensible AI systems than those relying on recursively contaminated alternatives.

I built Born-Synthetic financial profiles specifically for this reason. 29 interlocked fields. Six geographic niches. Pareto-distributed wealth. Zero AI contamination. Documented provenance.

Download 100 free KYC-Enhanced profiles and see the difference.

FAQ

Q: What is model collapse?

A: Model collapse occurs when AI models are trained recursively on data generated by earlier AI models. Each generation of training loses statistical fidelity to the original distribution: rare events disappear, distributions narrow, and the model becomes progressively less representative of reality. The term was popularized by Shumailov et al., whose paper on the effect appeared in Nature in 2024.

Q: Does all synthetic data cause model collapse?

A: Only synthetic data generated by AI models carries model collapse risk. Born-Synthetic data is generated from mathematical distributions (Pareto curves, algebraic constraints), not from neural network inference. There is no model output to recursively contaminate.

Q: How does model collapse affect financial AI?

A: Financial AI depends on accurate tail distributions — the rare, extreme events that drive credit risk, fraud detection, and AML monitoring. Model collapse disproportionately degrades these tails, causing models to systematically underestimate risk.

Q: Can model collapse be detected?

A: Detection is difficult because the degradation is gradual and often invisible in aggregate statistics. Overall distributions may look similar while tail behavior deteriorates. Continuous monitoring of distributional properties can help, but prevention through mathematically grounded data generation is more reliable than detection.
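One way to monitor tail behavior specifically, rather than aggregate statistics, is to track an estimate of the tail index over time. The sketch below uses the Hill estimator, a standard tail-index estimator that this article does not otherwise rely on. The choice of k, the sample size, and the simulated truncation are assumptions for illustration.

```python
import math
import random
import statistics


def hill_tail_index(values, k):
    """Hill estimate of the Pareto tail index from the k largest values.

    A larger estimate means a thinner tail. Requires 0 < k < len(values)
    and strictly positive values.
    """
    top = sorted(values, reverse=True)[: k + 1]
    threshold = top[k]  # the (k+1)-th largest value
    mean_log_excess = sum(math.log(v / threshold) for v in top[:k]) / k
    return 1.0 / mean_log_excess


rng = random.Random(0)
reference = [rng.paretovariate(1.5) for _ in range(50_000)]

# Simulate tail loss: drop the largest 1% of values.
cutoff = sorted(reference)[int(0.99 * len(reference))]
degraded = [v for v in reference if v < cutoff]

for name, data in (("reference", reference), ("degraded", degraded)):
    print(
        f"{name:9s} median={statistics.median(data):.3f} "
        f"tail index={hill_tail_index(data, 2000):.2f}"
    )
```

In this setup the medians of the two datasets stay close while the estimated tail index rises noticeably for the truncated one, which is the pattern described above: aggregate statistics look similar while the tail has thinned.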

Q: How does this relate to EU AI Act compliance?

A: Article 10 requires training data to be “sufficiently representative.” Model collapse makes data progressively less representative through recursive use. Born-Synthetic data generated from mathematical distributions maintains representativeness by construction and provides auditable provenance that satisfies Article 10 documentation requirements.