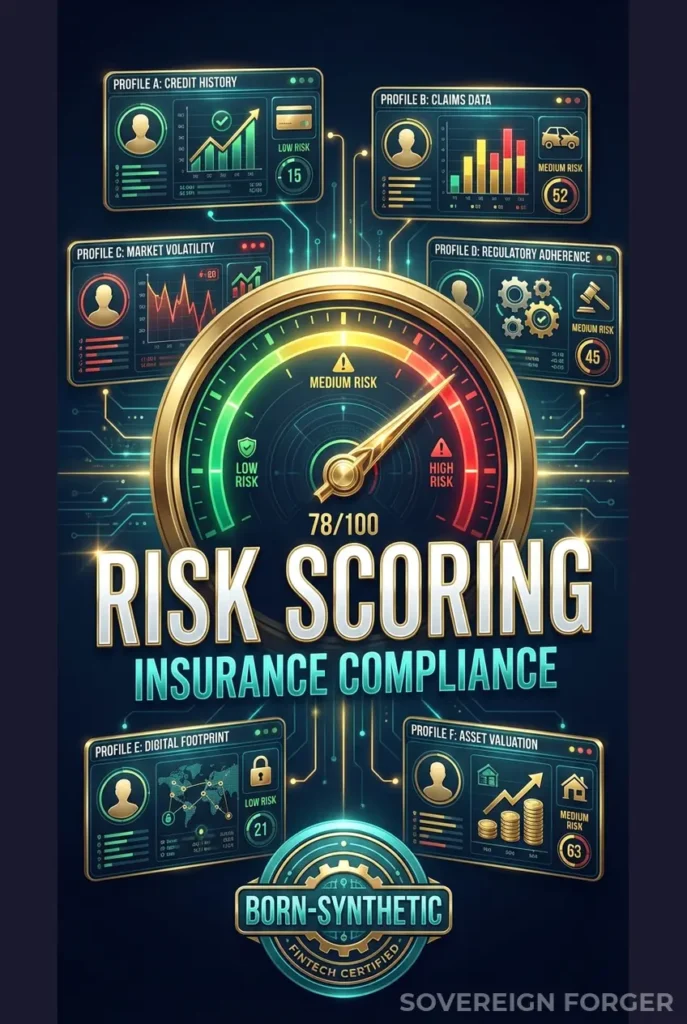

AXA: €2.3M from CNIL. Lloyd’s: repeated enforcement actions. Generali: regulatory investigations across multiple jurisdictions. Insurance is no longer exempt from banking-grade AML scrutiny, and risk scoring models trained on simple policyholder data are producing false positives on every complex UHNWI who walks through the door. This risk scoring data is built for exactly that scenario.

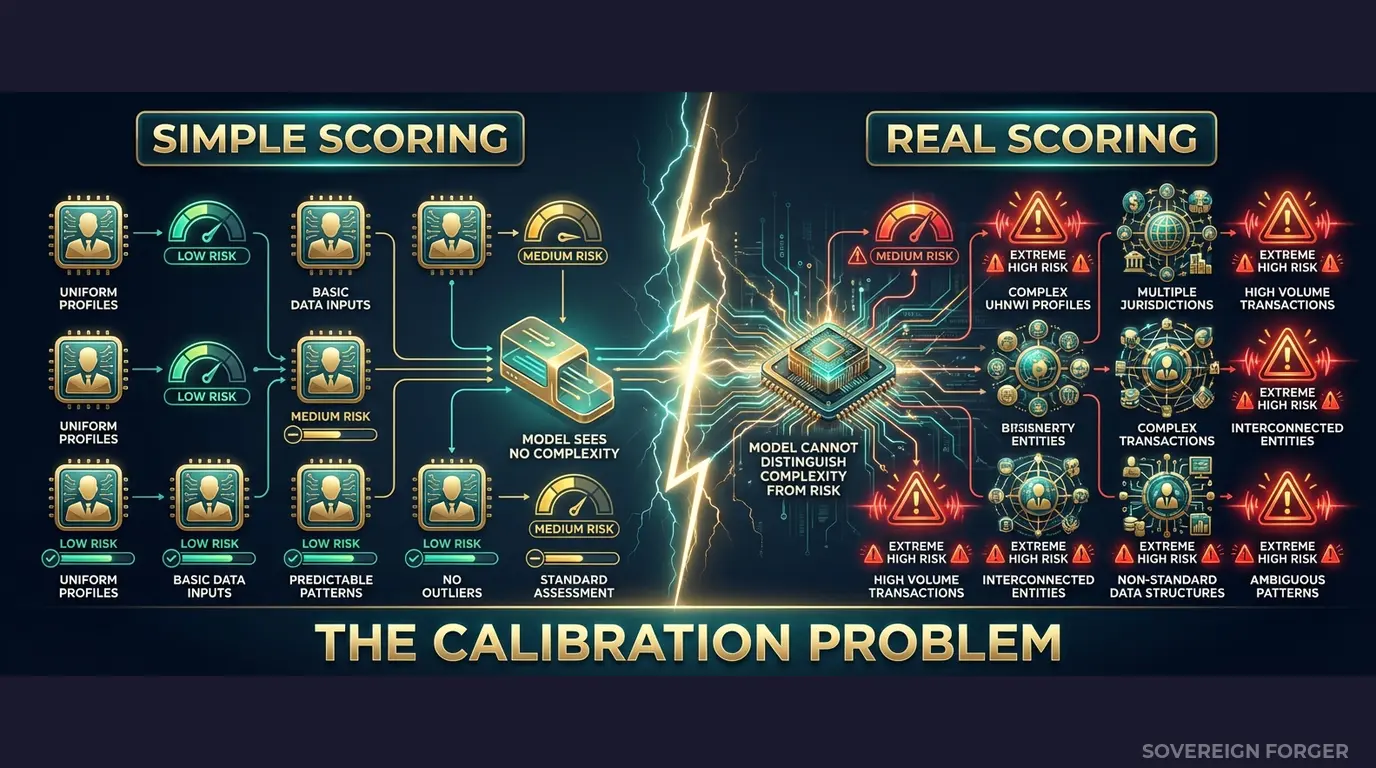

Your Risk Scoring Model Has a Calibration Problem

I have spent time inside insurance compliance operations, and the pattern I see is always the same. A risk scoring model gets built. The team trains it on internal policyholder data — mostly domestic, mostly retail, mostly single-jurisdiction. The model learns what “normal” looks like: one country of residence, one source of income, no offshore structures, no PEP connections. It works well. Precision looks good. The compliance team signs off.

Then the high-value book starts growing. A life insurance policy for €5M comes in from a client with dual nationality — Swiss and Lebanese. Net worth distributed across a BVI trust, a Luxembourg holding company, and direct real estate in London. The client is not a PEP, but a family member held a ministerial position in Beirut eight years ago. The model scores this profile as extreme risk. Maximum alert. Manual review required.

The same week, fifteen more UHNWI applications arrive. A German industrialist with a family office in Liechtenstein. A Singapore-based shipping magnate with premium financing through a Cayman vehicle. A Brazilian agribusiness heir with property in Zurich and a charitable foundation in Delaware. Every single one gets flagged as high risk. Every single one requires manual review. The compliance team is drowning.

Here is what actually happened: the model never learned the difference between structural complexity and genuine risk. In its training data, complex meant suspicious — because the training data contained no legitimate examples of complex wealth. Every multi-jurisdictional profile it ever saw was either a real suspicious activity report or a hand-crafted red-team scenario. So the model concluded, reasonably, that multiple jurisdictions plus offshore structures plus high net worth equals risk.

This is not a model failure. It is a training data failure.

I have watched this destroy operational efficiency at insurance companies that moved into the UHNWI segment. False positive rates above 80% on high-value policies. Compliance analysts spending three hours per case on manual review — not because the client is suspicious, but because the model cannot distinguish a tech founder’s standard Delaware LLC from a shell company layering scheme. The model treats both identically because it was never trained on the first category.

The regulatory pressure is making this worse, not better. EIOPA has been extending banking-grade AML requirements to insurance for the past three years. National regulators — BaFin, ACPR, the FCA — are applying the same KYC and risk assessment standards to life insurance, premium financing, and high-value general insurance that they apply to banking. The 5th Anti-Money Laundering Directive explicitly brought insurance intermediaries into scope. The 6th AMLD tightened it further. And now the EU AI Act classifies financial risk assessment AI as high-risk under Annex III, requiring documented governance of training data.

So you cannot lower your risk thresholds to reduce false positives — the regulator will fine you for inadequate screening. And you cannot keep the thresholds high — because your compliance team cannot process 200 manual reviews per week when 170 of them are false alarms triggered by legitimate structural complexity.
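The arithmetic behind that bind is worth writing down. A minimal sketch using the 200 reviews and 170 false alarms above, plus the three hours per case mentioned earlier; applying three hours to every false alarm is an assumption made for illustration:

```python
# Figures come from the text; applying the per-case hours uniformly is an assumption.
reviews_per_week = 200
false_alarms_per_week = 170
hours_per_review = 3  # "three hours per case", assumed to hold for every false alarm

false_alarm_share = false_alarms_per_week / reviews_per_week
wasted_hours = false_alarms_per_week * hours_per_review

print(f"False-alarm share of manual reviews: {false_alarm_share:.0%}")
print(f"Analyst hours spent on false alarms per week: {wasted_hours}")
```

Under those assumptions, 85% of the review queue is noise and it consumes 510 analyst hours a week.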

The only way out is to fix the training data. Your risk scoring model needs to learn what legitimate UHNWI wealth architecture looks like — multi-jurisdictional, multi-vehicle, multi-layered — so it can distinguish complexity from crime. And the data you train it on cannot be real client data, because GDPR Article 25 and the EU AI Act Article 10 both restrict using personal data for model training without explicit governance frameworks.

You need born-synthetic profiles with realistic wealth structures, deterministic risk signals, and zero PII. That is what I built.

Three Approaches That Make Risk Scoring Worse

Insurance companies trying to fix their risk scoring calibration typically try one of three approaches. I have seen all three fail for the same structural reason: they do not solve the data distribution problem.

Training on internal policyholder data. This is the default. Your model learns from your existing book. But if your book is 95% domestic retail policyholders and 5% complex UHNWI, the model learns that complexity is an outlier — and outliers are suspicious. Expanding the UHNWI book takes years. And using real client data for model training creates an immediate GDPR Article 25 exposure: personal data flowing into training pipelines with weaker controls than production systems. Under the EU AI Act Article 10, you also need to document provenance, bias assessment, and data governance for every training dataset. Real policyholder data makes this documentation nearly impossible to maintain.

Using anonymized claims or policy data. Stripping names and policy numbers from real UHNWI insurance clients does not eliminate re-identification risk. There are approximately 265,000 UHNWIs globally. The combination of net worth tier, jurisdiction, offshore vehicle type, and policy size can uniquely identify individuals even without direct identifiers. A €5M life insurance policy held through a Liechtenstein foundation by someone with Swiss-Lebanese dual nationality — how many people in the world match that description? Regulators can argue, correctly, that your “anonymized” data is merely pseudonymized, and GDPR applies in full.
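The re-identification argument can be checked mechanically: count how many records share each combination of quasi-identifiers. A minimal sketch with invented toy records and hypothetical column names (`net_worth_tier`, `jurisdiction`, `vehicle_type`, `policy_size_band`); any record whose combination appears exactly once is unique and therefore exposed:

```python
from collections import Counter

# Toy records invented for illustration; no direct identifiers are present.
records = [
    {"net_worth_tier": "30-50M", "jurisdiction": "CH", "vehicle_type": "foundation", "policy_size_band": "5M+"},
    {"net_worth_tier": "30-50M", "jurisdiction": "DE", "vehicle_type": "none", "policy_size_band": "1-5M"},
    {"net_worth_tier": "30-50M", "jurisdiction": "DE", "vehicle_type": "none", "policy_size_band": "1-5M"},
    {"net_worth_tier": "50-100M", "jurisdiction": "SG", "vehicle_type": "trust", "policy_size_band": "5M+"},
]
# Hypothetical quasi-identifier columns, mirroring the attributes named in the text.
QUASI_IDENTIFIERS = ("net_worth_tier", "jurisdiction", "vehicle_type", "policy_size_band")


def unique_records(rows, keys=QUASI_IDENTIFIERS):
    """Return the rows whose quasi-identifier combination appears exactly once."""
    def combo(row):
        return tuple(row[k] for k in keys)

    counts = Counter(combo(r) for r in rows)
    return [r for r in rows if counts[combo(r)] == 1]


exposed = unique_records(records)
print(f"{len(exposed)} of {len(records)} records are unique on quasi-identifiers")
```

Run the same count on an anonymized UHNWI extract. If a large share of rows fall into groups of one, stripping names has not removed the identification risk.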

Using generic synthetic data generators. Platform-based generators produce flat profiles — single jurisdiction, simple income, no offshore structures. When you feed these into a risk scoring model, the model learns that all profiles look roughly the same, with risk determined by a handful of binary flags. Then a real UHNWI arrives with three jurisdictions, two trusts, and a PEP-adjacent family connection, and the model has no frame of reference. It defaults to maximum risk because every dimension of the profile is outside the training distribution.

Real Data vs. Anonymized vs. Born-Synthetic

| Dimension | Real Data | Anonymized | Born-Synthetic |
|---|---|---|---|
| PII present | Yes | Residual | None |
| Re-identification risk | Certain | Probable (UHNWI) | Impossible |
| GDPR Art. 25 compliant | No | Disputed | Yes |
| EU AI Act Art. 10 | Violation | Unclear | Compliant |
| Certifiable for auditors | No | No | Yes (Certificate of Origin) |
| Fine exposure | Up to 4% global revenue | Up to 4% global revenue | Zero |
| Risk signal realism | High (but restricted) | Degraded by anonymization | Deterministic, niche-coherent |
| Complex wealth structures | Limited to existing book | Limited to existing book | 31 archetypes × 6 niches |

Born-Synthetic Data That Teaches Risk Models What Legitimate Complexity Looks Like

The core problem with insurance risk scoring is not the model architecture — it is the training distribution. If your model has never seen a legitimate UHNWI with four jurisdictions, a family trust, and a PEP-adjacent connection, it cannot score that profile correctly. It will treat every dimension of complexity as additive risk, because that is all the training data taught it.

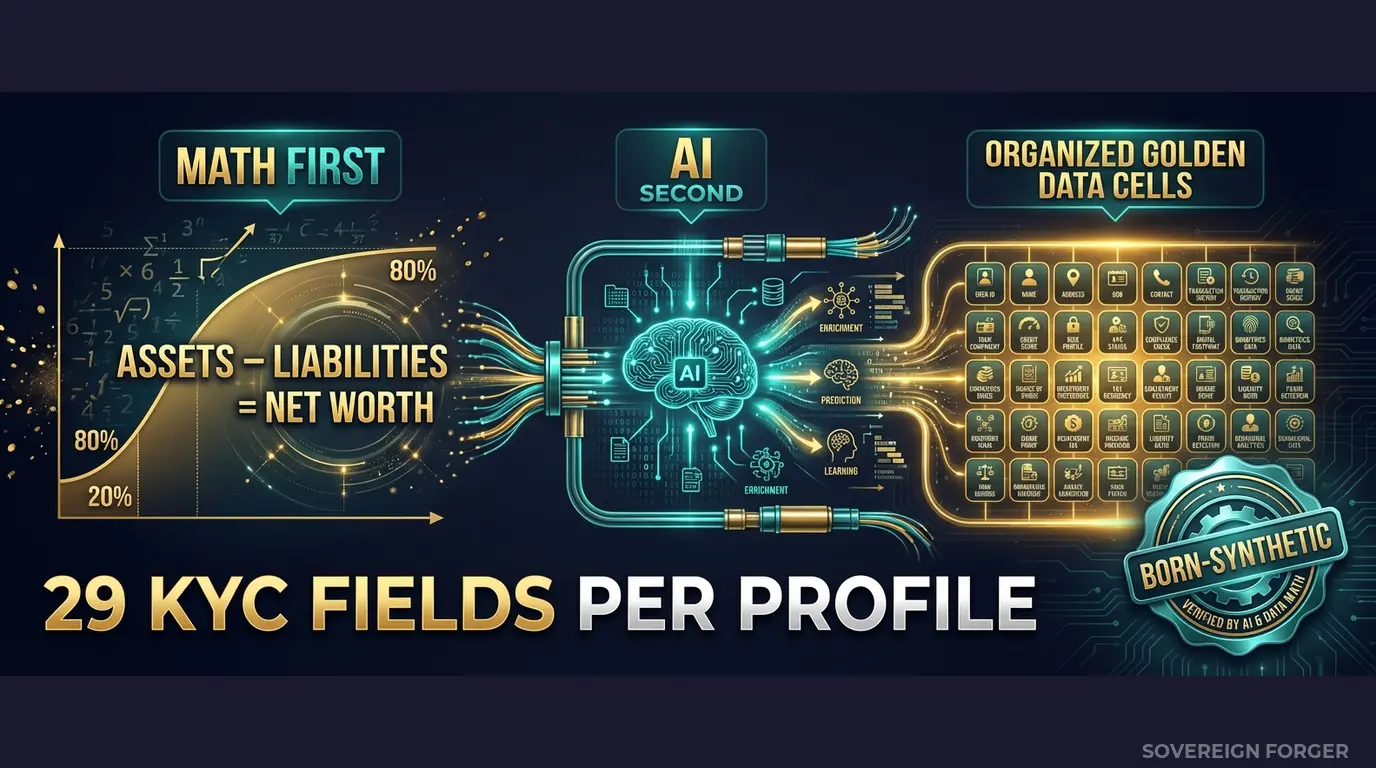

I built the Sovereign Forger pipeline to solve this specific problem. Every profile is generated from mathematical constraints, not derived from any real person. The generation works in two stages:

Math First. Net worth follows a Pareto distribution — the way real wealth is actually distributed, not a Gaussian curve. Asset allocations are computed within algebraic constraints: Assets – Liabilities = Net Worth, by construction. Property values, core equity, cash liquidity, and offshore holdings are proportioned according to the wealth archetype and geographic niche. Every balance sheet balances on every record. Zero exceptions. When your risk model ingests these profiles, it encounters the same statistical shape as a real UHNWI population — because the math is the same math.
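To make "by construction" concrete, here is a minimal sketch of the idea, not the pipeline's actual code. The Pareto shape `alpha`, the $30M floor, the leverage range, and the allocation weights are all illustrative assumptions:

```python
import random


def generate_balance_sheet(rng, alpha=1.5, min_net_worth=30_000_000):
    """Draw net worth from a Pareto tail, then build a balance sheet around it.

    alpha, min_net_worth, the leverage range and the allocation weights are
    illustrative assumptions, not the pipeline's actual parameters.
    """
    # paretovariate returns values >= 1, so scaling by the floor sets the minimum.
    net_worth = round(min_net_worth * rng.paretovariate(alpha))
    liabilities = round(net_worth * rng.uniform(0.05, 0.30))
    total_assets = net_worth + liabilities  # the identity holds by construction

    weights = {"property": 0.25, "core_equity": 0.45, "cash": 0.10}
    assets = {name: round(total_assets * w) for name, w in weights.items()}
    # The last bucket absorbs rounding so the components sum exactly.
    assets["offshore"] = total_assets - sum(assets.values())
    return {"net_worth": net_worth, "liabilities": liabilities, "assets": assets}


rng = random.Random(42)
profile = generate_balance_sheet(rng)
assert sum(profile["assets"].values()) - profile["liabilities"] == profile["net_worth"]
```

The design choice that matters is the order of operations: net worth and liabilities are fixed first, total assets is defined as their sum, and the asset buckets are carved out of that total in integer units. No record can fail the identity, because nothing is reconciled after the fact.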

AI Second. A local AI model adds narrative context — biography, profession, philanthropic focus — after the financial figures are locked. The AI runs entirely offline, on local hardware. No record ever touches the internet. The AI never modifies the numbers. It enriches each profile with culturally coherent details that match the geographic niche and wealth tier.

How This Fixes Risk Scoring Specifically

The 29 KYC-Enhanced fields include the exact signals your risk scoring model needs to learn calibration:

`kyc_risk_rating` — low, medium, or high. Deterministically derived from archetype, niche, net worth, and jurisdiction. A Swiss private banker with a Zurich domicile gets a different base risk than a commodity trader domiciled in Panama. Your model learns that risk is a function of specific combinations, not a function of complexity alone.

`pep_status` — none, domestic, foreign, or international_org. Distributed with realistic frequencies by niche. Middle East profiles show ~29% PEP incidence. European profiles show ~12%. Your model learns that PEP status is correlated with geography, not uniformly distributed.

`sanctions_screening_result` — clear, potential_match, or confirmed_match. With `sanctions_match_confidence` (0-100) for potential matches. Your model learns to distinguish between a name collision (confidence 30) and a genuine match (confidence 90) — a distinction that flat synthetic data never provides.

`adverse_media_flag` and `high_risk_jurisdiction_flag` — binary signals that interact with other fields. A high-risk jurisdiction flag combined with a low kyc_risk_rating teaches your model that jurisdiction alone does not determine risk. A high-risk jurisdiction combined with a potential sanctions match and adverse media teaches it when to escalate.

`source_of_wealth_verified` and `sow_verification_method` — whether wealth origin has been verified, and how (tax_returns, bank_statements, third_party, self_declared). Your model learns that self-declared source of wealth combined with a high-risk jurisdiction is a different signal than tax-return-verified wealth from the same jurisdiction.

The key insight: every KYC field is deterministically derived from the profile’s underlying wealth architecture. The signals are not randomly sprinkled. They emerge from the same structural logic that produces them in real compliance workflows. A tech founder with a Delaware LLC and a Cayman trust gets different risk signals than a Middle Eastern sovereign family member with the same net worth — because the underlying structures are different, and the KYC signals reflect that.
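Before training on these fields, it helps to validate them against the value sets listed above. A minimal sketch: the allowed values are the ones named in this section, while the encoding (Python booleans for the flags, a numeric confidence) and the helper name `validate_kyc_fields` are assumptions about how you load the file:

```python
# Value sets as listed in the text; the in-memory encoding is an assumption.
ALLOWED = {
    "kyc_risk_rating": {"low", "medium", "high"},
    "pep_status": {"none", "domestic", "foreign", "international_org"},
    "sanctions_screening_result": {"clear", "potential_match", "confirmed_match"},
    "sow_verification_method": {"tax_returns", "bank_statements", "third_party", "self_declared"},
}
BOOLEAN_FIELDS = ("adverse_media_flag", "high_risk_jurisdiction_flag", "source_of_wealth_verified")


def validate_kyc_fields(record):
    """Return a list of problems found in one record (empty list means valid)."""
    problems = []
    for field, allowed in ALLOWED.items():
        if record.get(field) not in allowed:
            problems.append(f"{field}: unexpected value {record.get(field)!r}")
    for field in BOOLEAN_FIELDS:
        if not isinstance(record.get(field), bool):
            problems.append(f"{field}: expected a boolean")
    # The confidence score is only checked where the text says it applies.
    if record.get("sanctions_screening_result") == "potential_match":
        confidence = record.get("sanctions_match_confidence")
        if not isinstance(confidence, (int, float)) or not 0 <= confidence <= 100:
            problems.append("sanctions_match_confidence: expected 0-100 for a potential match")
    return problems


# A high-risk jurisdiction paired with a low rating: valid, and exactly the
# kind of combination the model is supposed to learn from.
example = {
    "kyc_risk_rating": "low",
    "pep_status": "none",
    "sanctions_screening_result": "potential_match",
    "sanctions_match_confidence": 30,
    "adverse_media_flag": False,
    "high_risk_jurisdiction_flag": True,
    "source_of_wealth_verified": True,
    "sow_verification_method": "tax_returns",
}
print(validate_kyc_fields(example))
```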

When you train your risk scoring model on 10,000 or 100,000 of these profiles, it learns to decompose complexity into its component signals. Multi-jurisdictional is not automatically high risk. Offshore vehicle is not automatically suspicious. PEP-adjacent is not automatically a deal-breaker. The model learns the conditional relationships — and your false positive rate on legitimate UHNWIs drops from 80% to something your compliance team can actually manage.

Built for Insurance Risk Scoring at Scale

6 Geographic Niches: Silicon Valley, Old Money Europe, Middle East, LatAm, Pacific Rim, Swiss-Singapore — each with culturally coherent wealth patterns that mirror the actual UHNWI populations your insurance products serve. A life insurance application from a Pacific Rim shipping dynasty looks structurally different from one filed by an Old Money European industrialist. Your risk model should know the difference.

31 Wealth Archetypes: Tech founders, private bankers, sovereign family members, commodity traders, real estate developers, family office managers — the actual client profiles that trigger Enhanced Due Diligence when they apply for high-value life insurance, premium financing, or keyman policies.

KYC Signal Distribution by Niche: Risk ratings, PEP statuses, sanctions screening results, and source-of-wealth verification methods distributed with realistic frequencies. LatAm profiles show ~84% high risk rating. Middle East profiles show ~29% PEP incidence. European and Swiss-Singapore profiles show ~48% low risk. Your model trains on the distributions it will encounter in production.

Deterministic Reproducibility: Every profile is generated from a SHA-256 hash of its UUID. Same UUID, same KYC signals, every time. Your model validation pipeline can reproduce results exactly. No stochastic variation between training runs.
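One way to picture hash-based determinism is the sketch below. It is an illustration of the general technique, not the pipeline's actual derivation: salting the hash with a field name, taking the first 48 bits, and thresholding against a niche rate (here the ~29% Middle East PEP incidence) are all assumptions.

```python
import hashlib
import uuid


def unit_draw(profile_id: uuid.UUID, field: str) -> float:
    """Map (UUID, field name) to a repeatable number in [0, 1)."""
    digest = hashlib.sha256(f"{profile_id}:{field}".encode("utf-8")).digest()
    # 48 bits fit exactly in a float, so the result is always strictly below 1.
    return int.from_bytes(digest[:6], "big") / 2**48


def is_pep(profile_id: uuid.UUID, niche_rate: float) -> bool:
    """Flag a profile as PEP when its draw falls below the niche rate."""
    return unit_draw(profile_id, "pep_status") < niche_rate


pid = uuid.UUID("12345678-1234-5678-1234-567812345678")
assert is_pep(pid, 0.29) == is_pep(pid, 0.29)  # same UUID, same signal

# Across many ids the flagged share should land close to the target rate.
ids = [uuid.UUID(int=i) for i in range(10_000)]
share = sum(is_pep(i, 0.29) for i in ids) / len(ids)
print(f"PEP share across 10,000 ids: {share:.3f}")
```

Because nothing depends on a random seed or on generation order, regenerating a single record in isolation yields the same signals as regenerating the full dataset.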

Pricing

| Tier | Records | Price | Best For |
|---|---|---|---|
| Compliance Starter | 1,000 | $999 | Risk model proof of concept |
| Compliance Pro | 10,000 | $4,999 | Full model calibration suite |
| Compliance Enterprise | 100,000 | $24,999 | Production-grade training data |

No SDK. No API key. No sales call. Download a file, load it into your model training pipeline, and measure the difference in false positive rates.

Why This Matters for Insurance Now

Insurance is the next AML frontier. EIOPA has been progressively extending banking-grade AML requirements to the insurance sector. The 5th Anti-Money Laundering Directive brought insurance intermediaries explicitly into scope. National regulators have followed: BaFin has fined insurance firms for AML deficiencies, ACPR has increased inspections of French insurers, and the FCA has expanded its financial crime oversight to include life insurance products. High-value life insurance, premium financing, and single premium policies are recognized money laundering vehicles, and regulators are treating them accordingly.

The fines are crossing into insurance. AXA received a €2.3M fine from CNIL. Lloyd’s has faced repeated enforcement actions. Generali has dealt with regulatory investigations across multiple jurisdictions. These are not banking fines applied to insurance by analogy — they are enforcement actions specifically targeting insurance companies for inadequate AML controls. The trajectory is clear: what happened to neobanks between 2022 and 2025 is happening to insurance between 2025 and 2028.

The EU AI Act changes the equation. Financial risk scoring AI is classified as high-risk under Annex III. Article 10 requires documented governance of training data — provenance, bias assessment, representativeness, and GDPR compliance. If your risk model trains on real policyholder data, you need to prove compliance on both GDPR and the AI Act simultaneously. If it trains on born-synthetic data with a Certificate of Sovereign Origin, the compliance documentation writes itself.

The balance sheet test is open source. Every Sovereign Forger record passes algebraic validation: Assets – Liabilities = Net Worth. Run the Balance Sheet Test on our data, then run it on your current risk model training data. If your training data does not pass this test, your model is learning from profiles where the numbers do not add up — and every prediction it makes inherits that inconsistency.
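The check itself is a few lines. The sketch below is not the published Balance Sheet Test, only a minimal version of the same identity; the column names `total_assets`, `total_liabilities`, and `net_worth` are hypothetical and should be mapped to whatever your training data uses:

```python
def balance_sheet_failures(rows, tolerance=0.0):
    """Return the indexes of rows where assets - liabilities != net worth."""
    failures = []
    for index, row in enumerate(rows):
        gap = row["total_assets"] - row["total_liabilities"] - row["net_worth"]
        if abs(gap) > tolerance:
            failures.append(index)
    return failures


# Toy rows invented for illustration.
rows = [
    {"total_assets": 120_000_000, "total_liabilities": 20_000_000, "net_worth": 100_000_000},
    {"total_assets": 80_000_000, "total_liabilities": 5_000_000, "net_worth": 76_000_000},  # off by 1M
]
print(balance_sheet_failures(rows))  # → [1]
```

The `tolerance` parameter is there for datasets stored as floats, where a gap of a cent is rounding noise and not an inconsistency.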

Every dataset ships with a Certificate of Sovereign Origin — documenting the born-synthetic methodology, zero PII lineage, and regulatory alignment. When your compliance officer or external auditor asks where the risk model training data came from, you hand them the certificate. Born-Synthetic data. Zero real PII. Compliant by construction — not by anonymization.

Calibrate Your Risk Scoring Models

Download 100 free KYC-Enhanced UHNWI profiles with deterministic risk signals. Feed them into your risk scoring pipeline. Test whether your model can distinguish between structural complexity and genuine risk.

Count how many profiles your current model flags as high risk that are actually legitimate UHNWI wealth structures. That number is the size of your false positive problem — and the cost in analyst hours, policyholder friction, and regulatory exposure.
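That count can be scripted in a few lines. A minimal sketch, assuming your model returns one boolean flag per profile and treating any profile whose `kyc_risk_rating` is not `high` as legitimate; that labeling rule is a simplification for illustration, and the function name is hypothetical:

```python
def false_positive_summary(profiles, model_flags):
    """Compare model flags with the dataset's own kyc_risk_rating labels."""
    false_positives = sum(
        1
        for profile, flagged in zip(profiles, model_flags)
        if flagged and profile["kyc_risk_rating"] != "high"
    )
    total_flagged = sum(1 for flagged in model_flags if flagged)
    share = false_positives / total_flagged if total_flagged else 0.0
    return {
        "flagged": total_flagged,
        "false_positives": false_positives,
        "false_positive_share": share,
    }


# Toy inputs invented for illustration: five profiles and the model's flags.
profiles = [
    {"kyc_risk_rating": "low"},
    {"kyc_risk_rating": "medium"},
    {"kyc_risk_rating": "high"},
    {"kyc_risk_rating": "low"},
    {"kyc_risk_rating": "high"},
]
model_flags = [True, True, True, False, False]
summary = false_positive_summary(profiles, model_flags)
print(summary)
```

In this toy run, two of the three flagged profiles are not rated high, which is the false positive pattern described above. The unflagged `high` profile is a miss worth tracking separately.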

No credit card. No sales call. Just your work email.

Related reading: DORA Synthetic Data Requirements for Resilience Testing — how DORA Article 24-25 mandates synthetic data for threat-led penetration testing.

Frequently Asked Questions

Why do insurance risk scoring models struggle to distinguish legitimate wealth complexity from financial crime indicators?

Risk scoring algorithms are typically trained on retail policyholder data where offshore structures and multi-jurisdictional assets are rare. When these models encounter UHNWI clients with legitimate trust arrangements, nominee directors, and cross-border holdings, they generate false positives, flagging legal structures as suspicious. Testing with synthetic profiles that include realistic offshore vehicles, PEP status distributions, and sanctions screening results exposes these calibration failures before they reach production.

How does Sovereign Forger generate statistically realistic risk distributions for insurance testing?

The pipeline uses Pareto distributions calibrated to real wealth concentration patterns across six geographic niches. Risk ratings, PEP flags, and sanctions results are derived deterministically from each profile’s archetype and jurisdiction using SHA-256 hashing — producing consistent, reproducible distributions. For example, Middle East profiles show approximately 29% PEP exposure while Latin American profiles show 84% elevated risk ratings, reflecting real-world patterns without containing any real person’s data.

Can born-synthetic risk scoring test data replace anonymized policyholder records under GDPR Art.25?

Born-synthetic data eliminates the re-identification risk that persists with anonymized records. One widely cited study (Rocher et al., Nature Communications, 2019) estimated that 99.98% of Americans could be correctly re-identified in any dataset using just 15 demographic attributes. Sovereign Forger profiles contain 29 fields generated from scratch, so no real person exists in the data by construction. This satisfies GDPR Art. 25 data protection by design requirements more robustly than any anonymization or pseudonymization technique.

What edge cases should insurance risk scoring models be tested against that standard test data misses?

Standard test data typically lacks profiles with high net worth combined with high-risk jurisdiction exposure, legitimate PEP status with clean sanctions records, or complex multi-layer ownership through BVI, Cayman, and Panama vehicles. Sovereign Forger generates all 31 wealth archetypes across six niches — including tech founders with offshore SPVs, old money dynasties with multi-generational trusts, and sovereign family members with diplomatic immunity — ensuring risk scoring models encounter the full spectrum of legitimate complexity.

Learn more about insurance risk scoring synthetic data and how Born Synthetic data addresses this in our glossary and comparison guides.