AXA: €2.3M from CNIL. Lloyd’s: repeated enforcement actions. Generali, Zurich, Allianz — all under intensifying regulatory scrutiny. Insurance is no longer exempt from banking-grade AML standards. And every one of these enforcement actions exposed the same structural failure: models validated against data that never included the profiles where real-world losses occur.

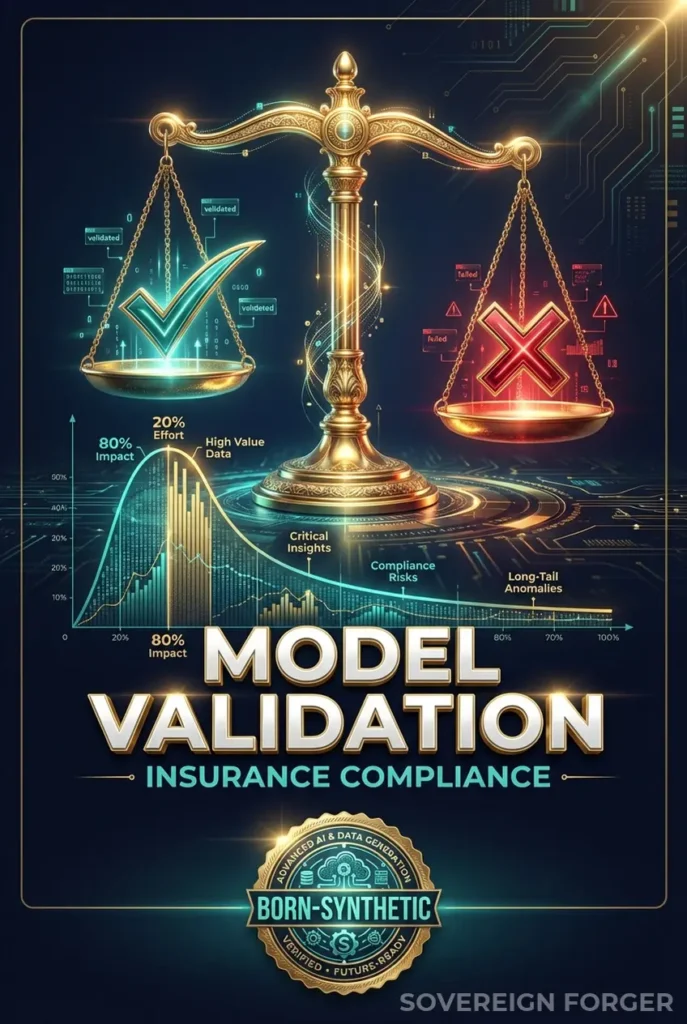

Your Validation Data Is Missing the Tail

I have sat in rooms where actuarial teams presented model validation results with 98% accuracy, green dashboards across every metric, and full sign-off from internal audit. The models had been tested against hundreds of thousands of synthetic profiles. Everything looked perfect.

Six months later, the same models failed catastrophically on a cluster of high-net-worth life insurance policies. Premium financing arrangements layered through offshore vehicles. Beneficiary structures that spanned three jurisdictions. PEP-adjacent policyholders whose risk signals were invisible to a model that had never seen that pattern in its validation data.

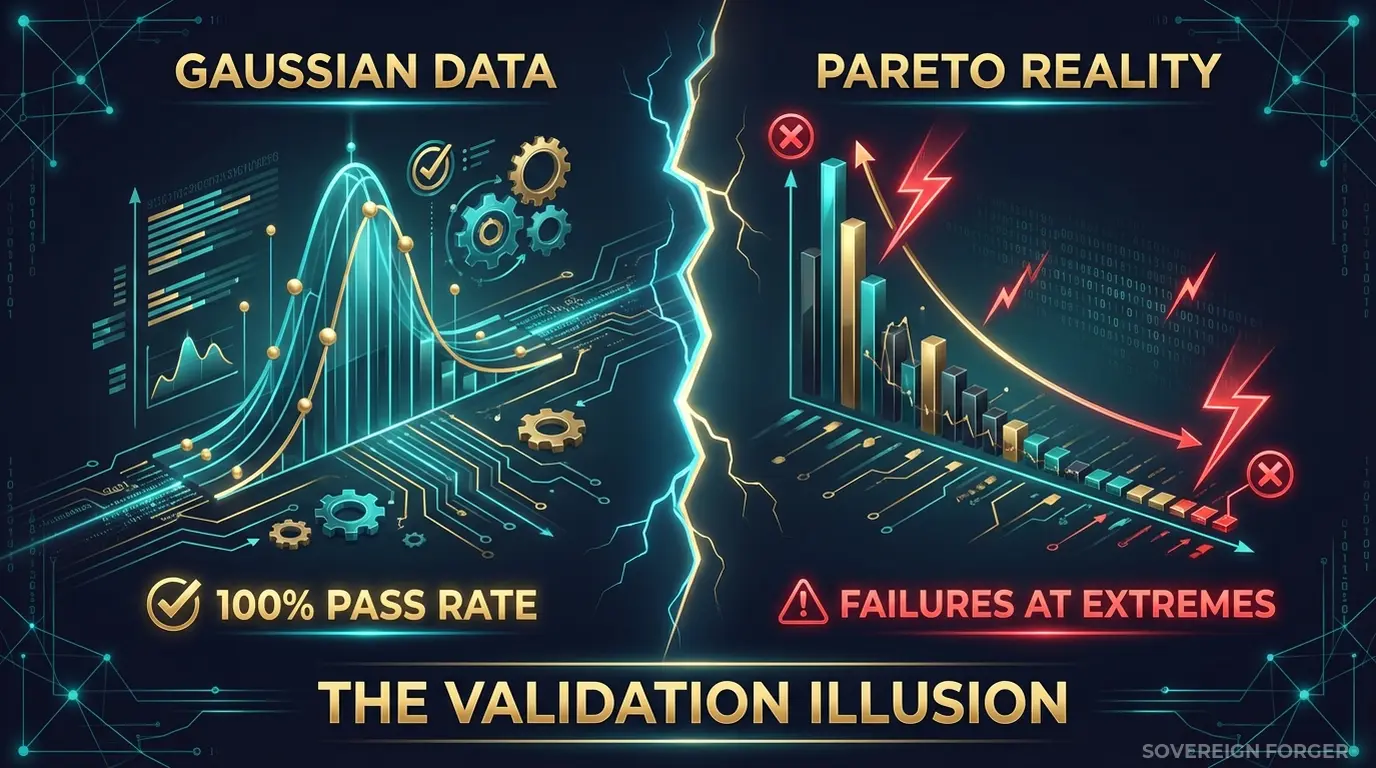

This is the core problem with model validation in insurance: the data you validate against determines what your model can recognize. If your validation dataset contains only normally distributed wealth profiles — middle-market policyholders with single jurisdictions, straightforward beneficiary structures, and no offshore exposure — then your model has been validated against the simple 80% of your book. It has never been tested against the complex 20% where real losses, regulatory failures, and AML violations concentrate.

In insurance, this is not an abstract statistical concern. It is the operational gap that regulators are now actively targeting.

EIOPA’s guidelines on AI governance in insurance explicitly require that model validation datasets reflect the full distribution of real-world inputs — including edge cases and tail scenarios. National regulators across Europe are extending banking-grade AML requirements to the insurance sector, driven by the recognition that life insurance, premium financing, and high-value policies are increasingly used as money laundering vehicles.

The logic is straightforward: if a €5M single-premium life insurance policy can serve the same function as a €5M bank deposit for layering purposes, then the AML controls — and the models behind them — must meet the same standard. But insurance compliance systems were not built for this level of scrutiny. They were built for underwriting risk, not financial crime risk. And the validation data reflects that legacy.

The result: models that perform well on actuarial metrics but fail on AML detection, fraud identification, and regulatory risk scoring — precisely the areas where enforcement is accelerating.

I built Sovereign Forger’s KYC-Enhanced profiles because I watched this pattern repeat across financial services. The insurance sector is simply the latest to discover that model validation is only as good as the data you validate against. And if that data does not contain the Pareto tail — the complex, multi-jurisdictional, high-net-worth profiles where most real-world model failures occur — your validation results are meaningless.

Three Approaches That Do Not Validate What Matters

Insurance companies face a unique version of the test data problem. Unlike banks, where KYC infrastructure has matured over two decades, insurers are retrofitting AML and AI governance requirements onto systems designed for actuarial analysis. The validation data available reflects this gap.

Using policyholder data from production. Some teams extract real policyholder records into model validation environments. This creates an immediate GDPR Article 25 violation: personal data processed in environments with weaker access controls, broader team access, and insufficient logging. For insurance, the risk is compounded, because policyholder data includes health information, beneficiary details, and premium payment histories, and the health data qualifies as special category data under GDPR Article 9. The regulatory exposure is not just a fine; it is a reputational catastrophe. And with most EU AI Act obligations applying from August 2026, using this data for AI model training or validation requires documented governance of data provenance that most insurers cannot provide.

Using anonymized policyholder data. Stripping names and policy numbers from UHNWI insurance profiles does not eliminate re-identification risk. With only 265,000 UHNWIs globally, the combination of premium amount, policy type, jurisdiction, and beneficiary structure can uniquely identify individuals. A €3M single-premium life insurance policy issued in Liechtenstein with a Cayman trust as beneficiary narrows the population to single digits. A regulator — or a plaintiff’s attorney — can argue that your “anonymized” validation data is pseudonymized at best, and GDPR applies in full.

Using generic synthetic generators. Platform-based generators produce structurally flat profiles — single jurisdiction, no offshore vehicles, no entity layering, no wealth composition detail. They generate retail insurance customers with larger policy values, not actual UHNWI wealth architecture. Your model validates against these profiles and learns that high-value policies are simple. Then a real client arrives with premium financing through a Luxembourg holding company, a beneficiary chain spanning four entities, and PEP connections through a board membership — and the model has no frame of reference. It was never validated against this level of structural complexity.
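The re-identification argument against anonymized data can be checked mechanically. The sketch below is a minimal k-anonymity count: it groups records by a set of quasi-identifiers and reports the smallest group. The column names (`premium_band`, `policy_type`, `jurisdiction`, `beneficiary_type`) and the toy records are assumptions for illustration, not fields from any real extract.

```python
from collections import Counter

# Hypothetical quasi-identifier columns; rename to match your own extract.
QUASI_IDENTIFIERS = ("premium_band", "policy_type", "jurisdiction", "beneficiary_type")


def group_sizes(records, keys=QUASI_IDENTIFIERS):
    """Count how many records share each combination of quasi-identifier values."""
    return Counter(tuple(r[k] for k in keys) for r in records)


def k_anonymity(records, keys=QUASI_IDENTIFIERS):
    """Return k, the size of the smallest group. k == 1 means a record is unique."""
    return min(group_sizes(records, keys).values())


def unique_records(records, keys=QUASI_IDENTIFIERS):
    """Return records whose quasi-identifier combination appears exactly once."""
    sizes = group_sizes(records, keys)
    return [r for r in records if sizes[tuple(r[k] for k in keys)] == 1]


# Toy "anonymized" extract: names and policy numbers are already stripped.
records = [
    {"premium_band": "<100k EUR", "policy_type": "term life",
     "jurisdiction": "DE", "beneficiary_type": "spouse"},
    {"premium_band": "<100k EUR", "policy_type": "term life",
     "jurisdiction": "DE", "beneficiary_type": "spouse"},
    {"premium_band": "1-5M EUR", "policy_type": "single-premium life",
     "jurisdiction": "LI", "beneficiary_type": "Cayman trust"},
]

print("k =", k_anonymity(records))
print("unique:", unique_records(records))
```

A result of k = 1 means at least one record is unique on those columns alone, which is the situation the Liechtenstein example describes.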

Real Data vs. Anonymized vs. Born-Synthetic

| Dimension | Real Policyholder Data | Anonymized | Born-Synthetic |
|---|---|---|---|
| PII present | Yes (incl. health data) | Residual | None |
| Re-identification risk | Certain | Probable (UHNWI) | Impossible |
| GDPR Art. 25 compliant | No | Disputed | Yes |
| EU AI Act Art. 10 | Violation | Unclear | Compliant |
| Covers Pareto tail | Partially | Partially | By construction |
| Certifiable for auditors | No | No | Yes (Certificate of Origin) |
| Fine exposure | Up to 4% global revenue | Up to 4% global revenue | Zero |

Born-Synthetic Profiles Built for Insurance Model Validation

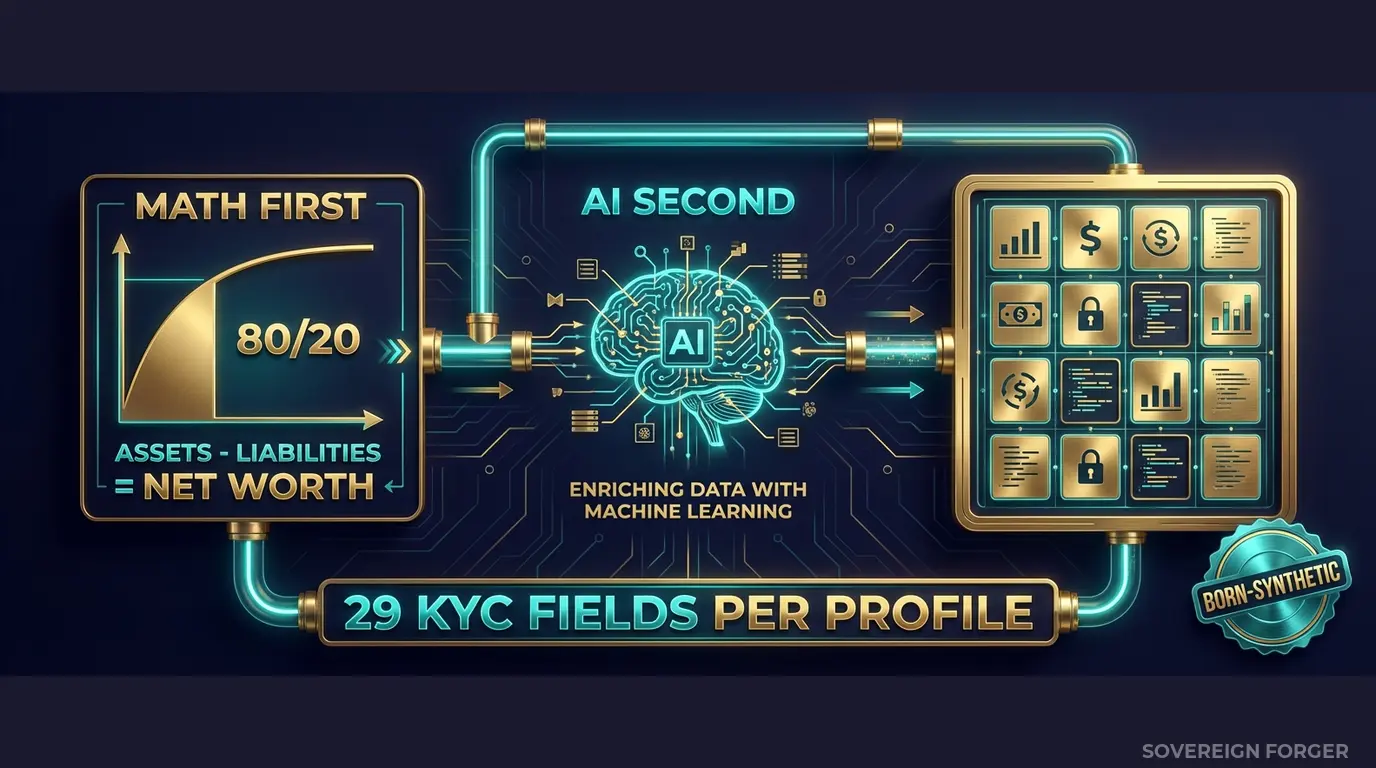

Every profile in the Sovereign Forger KYC dataset is generated from mathematical constraints — not derived from any real person, any real policyholder, or any real claim. The generation pipeline works in two stages, and this separation is what makes it work for model validation.

Math First. Net worth follows a Pareto distribution — the actual shape of real wealth distribution, not a Gaussian approximation. This is critical for insurance model validation: if your validation data uses a normal distribution for wealth, your model has never seen the long tail where policy values, premium amounts, and risk concentrations behave differently from the median. In Sovereign Forger profiles, asset allocations are computed within algebraic constraints: Assets – Liabilities = Net Worth, by construction. Every balance sheet balances on every record. Zero exceptions. Property values, core equity, cash liquidity, and liability structures are internally consistent — because your model validation is only meaningful if the input data is internally consistent.

AI Second. A local AI model adds narrative context — biography, profession, philanthropic focus — after the financial figures are locked. The AI never touches the numbers. It enriches the profile with culturally coherent details that match the geographic niche and wealth tier. This matters for insurance models that incorporate text fields, profession-based risk scoring, or geographic risk weighting.
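To make the "Math First" stage concrete, here is a minimal sketch of a balance sheet that balances by construction. It is not the Sovereign Forger pipeline. The shape parameter `alpha`, the net worth floor, the leverage range, and the rule that assets split into exactly property, core equity, and cash are all assumptions chosen for illustration. Integer dollars are used so the identities hold exactly rather than approximately.

```python
import random


def make_balance_sheet(rng, alpha=1.5, floor_usd=30_000_000):
    """Build one record whose totals satisfy assets - liabilities = net worth.

    alpha and floor_usd are illustrative assumptions, not vendor parameters.
    """
    # paretovariate returns values >= 1, so net worth never falls below the floor.
    net_worth = int(floor_usd * rng.paretovariate(alpha))

    # Liabilities as an assumed 5-40% of net worth.
    liabilities = int(net_worth * rng.uniform(0.05, 0.40))

    # Total assets are derived, never sampled, so the identity cannot break.
    total_assets = net_worth + liabilities

    # Split assets; core equity is the remainder and absorbs all rounding.
    property_value = int(total_assets * rng.uniform(0.10, 0.30))
    cash_liquidity = int(total_assets * rng.uniform(0.05, 0.20))
    core_equity = total_assets - property_value - cash_liquidity

    return {
        "net_worth_usd": net_worth,
        "total_assets": total_assets,
        "total_liabilities": liabilities,
        "property_value": property_value,
        "core_equity": core_equity,
        "cash_liquidity": cash_liquidity,
    }


rng = random.Random(7)  # fixed seed so the run is repeatable
sheets = [make_balance_sheet(rng) for _ in range(1000)]
print(sheets[0])
```

The design choice that matters is the order of operations: sample the free quantities, then derive the dependent ones. Sampling every field independently and reconciling afterwards is where inconsistent records come from.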

Why Pareto Distribution Matters for Insurance Model Validation

Most synthetic data generators use a normal distribution for wealth. This produces a dataset where the majority of profiles cluster around a mean, with thin tails on both sides. For retail insurance products, this might be adequate.

For UHNWI insurance products — life insurance, premium financing, high-value property, art and collectibles, key-person coverage — this is fundamentally wrong. Real wealth follows a power law. The top 1% of your book holds a disproportionate share of total insured value, and their risk profiles are structurally different from the median policyholder.

I set the Pareto shape parameter for each niche based on how real wealth concentrates in that geography. Silicon Valley founders have different concentration patterns than Old Money European dynasties or Middle East sovereign families. When you validate your model against Sovereign Forger data, you are validating against the actual distribution shape — including the tail where your models are most likely to fail.
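The difference in distribution shape is easy to see numerically. The sketch below draws one sample from a Pareto distribution and one from a normal distribution, then measures what share of the total the top 1% of records hold. The shape parameter 1.5 and the normal parameters are arbitrary assumptions, not the per-niche values used in the dataset.

```python
import random


def top_share(values, top_fraction=0.01):
    """Share of the total held by the largest top_fraction of values."""
    ordered = sorted(values, reverse=True)
    k = max(1, int(len(ordered) * top_fraction))
    return sum(ordered[:k]) / sum(ordered)


rng = random.Random(42)
n = 50_000

# Power-law wealth: heavy right tail (alpha = 1.5 is an illustrative choice).
pareto_wealth = [rng.paretovariate(1.5) for _ in range(n)]

# Bell-curve wealth: mean 100, standard deviation 15, clipped at zero.
normal_wealth = [max(0.0, rng.gauss(100.0, 15.0)) for _ in range(n)]

pareto_share = top_share(pareto_wealth)
normal_share = top_share(normal_wealth)

print(f"top 1% share, Pareto: {pareto_share:.1%}")
print(f"top 1% share, normal: {normal_share:.1%}")
```

Under the normal sample the top 1% hold only slightly more than 1% of the total. Under the Pareto sample they hold many times that, which is the concentration a validation set needs to reproduce.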

29 Fields Designed for Model Validation

Every KYC-Enhanced profile includes the fields your insurance models need to process:

Identity & Geography: full_name, residence_city, residence_zone, tax_domicile

Wealth Structure: net_worth_usd, total_assets, total_liabilities, property_value, core_equity, cash_liquidity, assets_composition, liabilities_composition

Professional Context: profession, education, narrative_bio, philanthropic_focus

Offshore Exposure: offshore_jurisdiction, offshore_vehicle

KYC Signals: kyc_risk_rating, pep_status, pep_position, pep_jurisdiction, sanctions_screening_result, sanctions_match_confidence, adverse_media_flag, source_of_wealth_verified, sow_verification_method, high_risk_jurisdiction_flag

For insurance model validation specifically, the combination of `net_worth_usd`, `total_assets`, `assets_composition`, `kyc_risk_rating`, and `pep_status` creates the input vector that stress-tests your models against realistic complexity. A high-net-worth profile with offshore vehicles in a high-risk jurisdiction and PEP-adjacent status is the exact scenario where insurance models fail in production — and where regulators look first.
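One way to use that combination is to carve out a dedicated stress-test subset before running the validation suite. The filter below is a minimal sketch. The field names come from the list above, but the value encodings (a boolean `high_risk_jurisdiction_flag`, the string `"none"` for no PEP exposure) and the net worth floor are assumptions to adapt to the actual file.

```python
def is_tail_profile(profile, net_worth_floor_usd=100_000_000):
    """True for profiles that combine high net worth, offshore exposure,
    a high-risk jurisdiction, and some form of PEP exposure.

    Value encodings here are assumed; check them against the real file.
    """
    has_offshore = bool(profile.get("offshore_vehicle"))
    high_risk_geo = profile.get("high_risk_jurisdiction_flag") is True
    pep_exposed = profile.get("pep_status") not in (None, "", "none")
    rich_enough = profile["net_worth_usd"] >= net_worth_floor_usd
    return rich_enough and has_offshore and high_risk_geo and pep_exposed


# Toy profiles with only the fields the filter reads.
profiles = [
    {"net_worth_usd": 250_000_000, "offshore_vehicle": "trust",
     "high_risk_jurisdiction_flag": True, "pep_status": "associate"},
    {"net_worth_usd": 250_000_000, "offshore_vehicle": "",
     "high_risk_jurisdiction_flag": True, "pep_status": "associate"},
    {"net_worth_usd": 40_000_000, "offshore_vehicle": "trust",
     "high_risk_jurisdiction_flag": False, "pep_status": "none"},
]

tail_subset = [p for p in profiles if is_tail_profile(p)]
print(len(tail_subset), "of", len(profiles), "profiles are in the tail subset")
```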

Every KYC field is deterministically derived from the profile’s archetype, niche, net worth, and jurisdiction. A tech founder in Silicon Valley gets different risk signals than a commodity trader in the Pacific Rim, because the underlying wealth structures — and therefore the insurance risk profiles — are genuinely different.
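"Deterministically derived" means the same inputs always yield the same signal, with no random state involved. The sketch below shows one generic way to get that property, by hashing the inputs into a repeatable number and comparing it with a rate. This is an illustration of the idea only, not the actual derivation logic. The hashing scheme, the status labels, and the default rate are hypothetical; the ~29% Middle East figure is the one quoted in this article.

```python
import hashlib

# ~29% is the Middle East PEP rate quoted in the article.
PEP_RATE_BY_NICHE = {"Middle East": 0.29}
DEFAULT_PEP_RATE = 0.05  # hypothetical placeholder for other niches


def stable_unit_interval(*parts):
    """Map the inputs to a repeatable number in [0, 1) using SHA-256."""
    key = "|".join(str(p) for p in parts).encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # 48 bits fit exactly in a float, so the result is always below 1.
    return int.from_bytes(digest[:6], "big") / 2**48


def derive_pep_status(archetype, niche, net_worth_usd, jurisdiction):
    """Same four inputs in, same status out, on every run and every machine."""
    rate = PEP_RATE_BY_NICHE.get(niche, DEFAULT_PEP_RATE)
    u = stable_unit_interval("pep", archetype, niche, net_worth_usd, jurisdiction)
    return "pep" if u < rate else "none"


first = derive_pep_status("sovereign family member", "Middle East", 900_000_000, "AE")
second = derive_pep_status("sovereign family member", "Middle East", 900_000_000, "AE")
print(first, second)
```

For validation work, the practical benefit of determinism is reproducibility: a failed test case can be regenerated exactly and handed to an auditor.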

Built for Insurance Model Validation at Scale

6 Geographic Niches: Silicon Valley, Old Money Europe, Middle East, LatAm, Pacific Rim, Swiss-Singapore — each with culturally coherent wealth patterns that reflect how high-net-worth insurance exposure actually distributes globally.

31 Wealth Archetypes: Tech founders, private bankers, commodity traders, family office managers, real estate developers, sovereign family members — the actual client archetypes that drive premium concentration and risk outliers in insurance books.

Pareto-Distributed Wealth: Not a bell curve. Not uniformly random. The actual power-law distribution that governs real UHNWI wealth — the distribution your model needs to handle correctly, and the distribution that most validation datasets get wrong.

KYC Signal Distribution: Risk ratings, PEP statuses, sanctions screening results, and source-of-wealth verification distributed with realistic frequencies by niche. The Middle East niche has a ~29% PEP rate. In the LatAm niche, ~84% of profiles are flagged high risk. These rates are not arbitrary: they reflect the regulatory reality of each geography.
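Rates like these are worth verifying on whatever file you load before relying on them. The sketch below computes the PEP rate per niche. It assumes each record carries a `niche` key and that `pep_status` uses the string `"none"` for non-PEP profiles; both are assumptions, and the toy data is constructed to hit 29% exactly.

```python
from collections import defaultdict


def pep_rate_by_niche(profiles):
    """Fraction of profiles per niche whose pep_status is anything but 'none'."""
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for p in profiles:
        totals[p["niche"]] += 1
        if p["pep_status"] != "none":
            flagged[p["niche"]] += 1
    return {niche: flagged[niche] / totals[niche] for niche in totals}


def within_tolerance(observed, expected, tolerance=0.03):
    """True when the observed rate is within +/- tolerance of the expected rate."""
    return abs(observed - expected) <= tolerance


# Toy data: 100 Middle East records, 29 of them PEP-exposed.
profiles = [
    {"niche": "Middle East", "pep_status": "pep" if i < 29 else "none"}
    for i in range(100)
]

rates = pep_rate_by_niche(profiles)
print(rates, within_tolerance(rates["Middle East"], 0.29))
```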

Pricing

| Tier | Records | Price | Best For |
|---|---|---|---|
| Compliance Starter | 1,000 | $999 | Model validation proof of concept |
| Compliance Pro | 10,000 | $4,999 | Full validation suite, stress testing |
| Compliance Enterprise | 100,000 | $24,999 | AI training + production model validation |

No SDK. No API key. No sales call. Download a file, open it in Python or your actuarial platform, and run your validation suite.
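Loading the file takes a few lines of standard-library Python. The sketch below assumes the download is a CSV with a header row, which is an assumption about the file format. The in-memory sample stands in for the real file so the snippet runs as written; the two rows in it are made-up placeholders.

```python
import csv
import io

# Columns to convert from text to numbers; extend as needed.
NUMERIC_FIELDS = ("net_worth_usd", "total_assets", "total_liabilities")


def load_profiles(handle):
    """Read profiles from an open CSV handle, converting numeric columns."""
    profiles = []
    for row in csv.DictReader(handle):
        for field in NUMERIC_FIELDS:
            row[field] = float(row[field])
        profiles.append(row)
    return profiles


# Stand-in for the downloaded file (placeholder rows, not real dataset content).
sample_csv = io.StringIO(
    "full_name,tax_domicile,net_worth_usd,total_assets,total_liabilities\n"
    "Example Person A,CH,120000000,150000000,30000000\n"
    "Example Person B,SG,75000000,85000000,10000000\n"
)

profiles = load_profiles(sample_csv)
print(len(profiles), "profiles loaded; first net worth:", profiles[0]["net_worth_usd"])

# With a real download (hypothetical file name):
# with open("profiles.csv", newline="", encoding="utf-8") as f:
#     profiles = load_profiles(f)
```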

Why This Matters Now for Insurance

Insurance AML enforcement is accelerating. EIOPA and national regulators are systematically extending banking-grade AML requirements to the insurance sector. Life insurance, premium financing, and single-premium policies are recognized as money laundering vehicles — and the compliance infrastructure that worked for underwriting risk is not sufficient for financial crime risk. Models trained and validated on legacy data will not meet the new standard.

The EU AI Act classifies financial AI as high-risk. Under Annex III, AI systems used for creditworthiness assessment, and for risk assessment and pricing in life and health insurance, are classified as high-risk. Article 10 requires documented governance of training and validation data, including provenance, bias assessment, and GDPR compliance. If your validation data was derived from real policyholders, you need to prove compliance on GDPR, the AI Act, and sector-specific regulations simultaneously. Born-Synthetic data eliminates the first two concerns by construction.

AXA’s €2.3M CNIL fine was about data governance, not a security breach. The enforcement trend is clear: regulators are not waiting for data breaches to act. They are auditing data handling practices proactively. Model validation data that contains real or pseudonymized policyholder data is a liability sitting in your infrastructure, waiting for an audit to find it.

The balance sheet test is open source. Every Sovereign Forger record passes algebraic validation: Assets – Liabilities = Net Worth. Run the Balance Sheet Test on our data, then run it on your current validation data. If your validation data cannot pass a basic algebraic consistency check, what is your model actually learning from it?

Every dataset ships with a Certificate of Sovereign Origin — documenting the born-synthetic methodology, zero PII lineage, and regulatory alignment. When your model validation auditor asks “where did you get this data and can you prove it contains no PII?”, you hand them the certificate. The conversation is over in thirty seconds.
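The balance sheet check described above is simple enough to run against any validation dataset. What follows is a minimal sketch of such a check, not the published Balance Sheet Test itself. It uses the field names from this article and an assumed tolerance of one cent to absorb floating-point noise.

```python
def balance_sheet_failures(records, tolerance=0.01):
    """Return (index, gap) for every record where
    total_assets - total_liabilities differs from net_worth_usd
    by more than the tolerance.
    """
    failures = []
    for index, r in enumerate(records):
        gap = r["total_assets"] - r["total_liabilities"] - r["net_worth_usd"]
        if abs(gap) > tolerance:
            failures.append((index, gap))
    return failures


# Toy records: the first balances, the second is off by 5,000,000.
records = [
    {"total_assets": 150_000_000, "total_liabilities": 30_000_000,
     "net_worth_usd": 120_000_000},
    {"total_assets": 90_000_000, "total_liabilities": 10_000_000,
     "net_worth_usd": 75_000_000},
]

failures = balance_sheet_failures(records)
print(f"{len(failures)} of {len(records)} records fail:", failures)
```

An empty list means every record is internally consistent. Anything else identifies the rows to inspect before they reach a model.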

Validate Your Models Against Realistic Data

Download 100 free KYC-Enhanced UHNWI profiles. Run your model validation suite. Check whether your model handles the Pareto tail — the complex, multi-jurisdictional, high-net-worth profiles where most real-world failures occur.

If your model performs the same on these profiles as it does on your current validation data, your model is robust. If it does not, you have found the gap before a regulator does.
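The comparison itself can be reduced to one number: the drop in a chosen metric between your current validation set and the tail profiles. The sketch below uses recall on a binary "should be flagged" label. Which profiles should be flagged is your own labelling decision, and the toy labels and predictions here are invented to show the mechanics.

```python
def recall(y_true, y_pred):
    """Share of true positives (label 1) that the model also predicted as 1."""
    positives = sum(1 for t in y_true if t == 1)
    if positives == 0:
        raise ValueError("recall is undefined without positive labels")
    hits = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    return hits / positives


def recall_gap(baseline, tail):
    """Baseline recall minus tail recall; each argument is (y_true, y_pred)."""
    return recall(*baseline) - recall(*tail)


# Toy results: perfect on the current validation set, half missed on the tail.
baseline = ([1, 1, 1, 1, 0, 0], [1, 1, 1, 1, 0, 0])
tail = ([1, 1, 1, 1, 0], [1, 0, 0, 1, 0])

gap = recall_gap(baseline, tail)
print(f"baseline recall {recall(*baseline):.2f}, "
      f"tail recall {recall(*tail):.2f}, gap {gap:.2f}")
```

A gap near zero supports the robustness claim. A large positive gap is the validation blind spot this article describes.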

No credit card. No sales call. Just your work email.

Related reading: DORA Synthetic Data Requirements for Resilience Testing — how DORA Article 24-25 mandates synthetic data for threat-led penetration testing.

Frequently Asked Questions

Why do insurance model validation results degrade when tested against UHNWI profiles?

Most insurance models are validated using retail-scale data with simple asset structures. UHNWI clients hold multi-jurisdictional trusts, offshore vehicles, and complex liability compositions that create statistical edge cases. Models trained or validated without this complexity pass internal checks but fail when exposed to real high-net-worth portfolios — producing inaccurate risk scores, mispriced policies, and regulatory scrutiny from Solvency II supervisors.

How does born-synthetic data improve insurance model validation without GDPR exposure?

Born-synthetic profiles are generated from mathematical distributions (Pareto wealth curves and algebraic balance constraints), not derived from real policyholder records. Validation teams can therefore test models against statistically realistic UHNWI edge cases without bringing personal data, and the GDPR Art. 25 data-protection-by-design obligations that come with it, into the validation environment. Every record passes a balance check (assets minus liabilities equals net worth) by construction, ensuring the validation inputs are structurally sound.

What fields does Sovereign Forger provide for insurance model validation testing?

Each synthetic profile includes 29 interlocked fields covering identity, wealth composition, offshore structures, KYC risk ratings, PEP status, sanctions screening results, and source of wealth verification. For model validation specifically, the asset composition breakdown (property, equity, cash liquidity), liability structure, and net worth distribution provide the statistical variety needed to stress-test actuarial and underwriting models across six geographic niches.

Does synthetic model validation data satisfy DORA Article 24-25 resilience testing requirements for insurers?

Synthetic data supports it. DORA, applicable since January 2025, requires insurance undertakings to conduct regular ICT resilience testing, including on systems that process financial crime and identity data. Articles 24-25 set out the testing programme and the testing of ICT tools and systems; threat-led penetration testing itself is covered in Articles 26-27. Sovereign Forger profiles provide the complexity and statistical realism needed to meet EIOPA supervisory expectations without creating secondary privacy risks from using production data in test environments.

How many synthetic profiles are typically needed for a statistically meaningful insurance model validation?

For a single geographic niche, 10,000 profiles (Compliance Pro tier at $4,999) provide sufficient statistical coverage to validate model behavior across all 31 wealth archetypes and edge cases. Enterprise validation programs covering multiple jurisdictions typically use 100,000 profiles per niche (Compliance Enterprise at $24,999) to ensure coverage of rare combinations — such as high-risk PEP profiles with complex offshore trust structures in specific tax domiciles.
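A rough way to sanity-check these tier sizes is to ask how often a rare combination should appear in a sample of a given size. The sketch below assumes profiles are independent draws and that the combination occurs with a known frequency. The 1-in-1,000 frequency is a hypothetical figure for illustration, not a published rate for any niche.

```python
import math


def expected_count(frequency, n_profiles):
    """Average number of matching profiles in a sample of n_profiles."""
    return frequency * n_profiles


def prob_at_least_one(frequency, n_profiles):
    """Chance that the sample contains at least one matching profile."""
    return 1.0 - (1.0 - frequency) ** n_profiles


def profiles_needed(frequency, confidence=0.95):
    """Smallest sample size that contains a match with the given confidence."""
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - frequency))


rare = 0.001  # hypothetical: one profile in a thousand has the combination

for n in (1_000, 10_000, 100_000):  # the three tier sizes
    print(n, round(expected_count(rare, n), 1), round(prob_at_least_one(rare, n), 4))

print("profiles needed for 95% chance of one match:", profiles_needed(rare))
```

Seeing one instance is a floor, not a target. Measuring model behavior on a combination usually needs dozens of examples, which is where the larger tiers earn their place.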

Learn more about synthetic data for insurance model validation, and how Born-Synthetic data addresses it, in our glossary and comparison guides.