Key Takeaway: GDPR Article 25 applies to every environment where personal data is processed — including test, QA, and staging. Copying production data into test databases creates full GDPR liability. Born-synthetic data eliminates this risk entirely because no real person exists in the dataset.

Nobody asks the obvious question: what data is running in your test environment right now? I have been in rooms where the answer was “copies of production.” Full PII. In QA.

Every financial institution tests its KYC and AML systems before deployment. If that testing uses copies of real customer profiles, even anonymized, masked, or pseudonymized ones, you have a GDPR test data problem that most compliance teams have not yet confronted. And enforcement is coming.

This is not a theoretical risk. In 2023, the Irish Data Protection Commission fined Meta €1.2 billion for data transfers that lacked adequate protection. The Danish DPA issued a ban on Google Workspace in education because student data was processed without sufficient safeguards. The pattern is clear: regulators are no longer accepting “but it was internal” as a defence. Test environments are next.

What Does GDPR Article 25 Require for Test Environments?

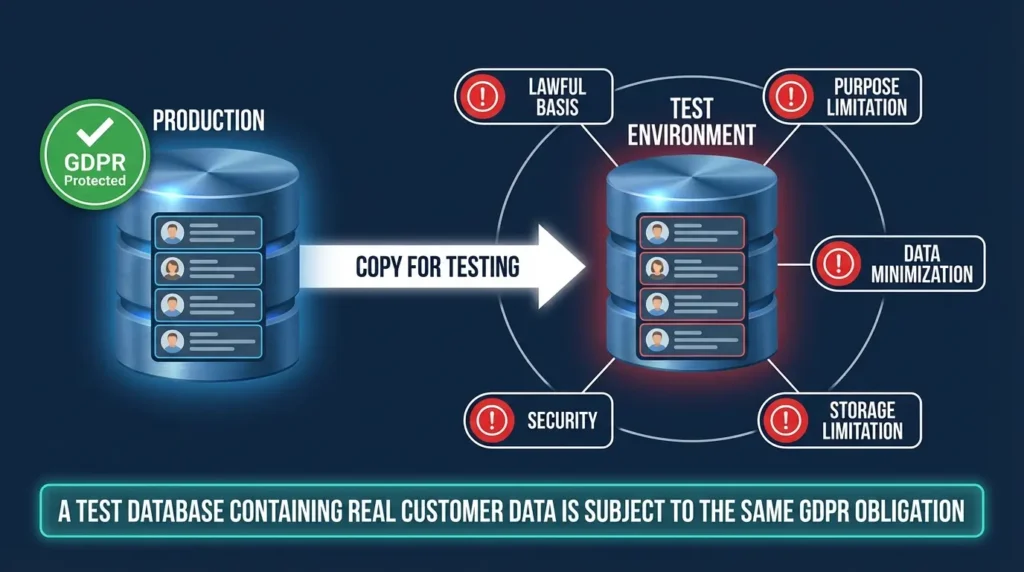

GDPR Article 25 mandates “data protection by design and by default.” This is not limited to production systems. The regulation applies to the processing of personal data in any context — including development, testing, QA, and staging environments.

When a compliance team copies customer records into a test database to validate a new screening rule, that copy is a processing operation under GDPR. The personal data in those records retains its legal status regardless of the environment it sits in. A test database containing real customer data is subject to the same obligations as the production database: lawful basis, purpose limitation, data minimization, storage limitation, and security.

Most organizations treat test environments as internal tools outside the scope of data protection. Article 25 says otherwise.

Let me break down the specific obligations that apply:

Lawful basis. You need a legal justification for processing that data in a test environment. “We needed to test our screening rules” is not automatically a valid basis under Article 6. The original consent or legitimate interest that justified collecting the data likely did not include “use in test environments by the engineering team.”

Purpose limitation. Data collected for KYC onboarding cannot simply be repurposed for QA testing without a compatibility assessment under Article 6(4). Most institutions skip this step entirely.

Data minimization. Article 25 requires that only data necessary for the specific purpose is processed. Testing a new AML rule rarely requires 500,000 full customer records. Yet that is exactly what gets copied.

Storage limitation. Test databases are notorious for outliving their purpose. I have seen QA environments with customer data from three years ago, long after the test cycle ended. No retention policy. No deletion schedule.

Security. Article 32 requires appropriate security measures proportionate to the risk. Test environments typically run with weaker access controls, shared credentials, and less monitoring than production. The data is the same; the protection is not.

Why Does Anonymization Fail for UHNWI Test Data?

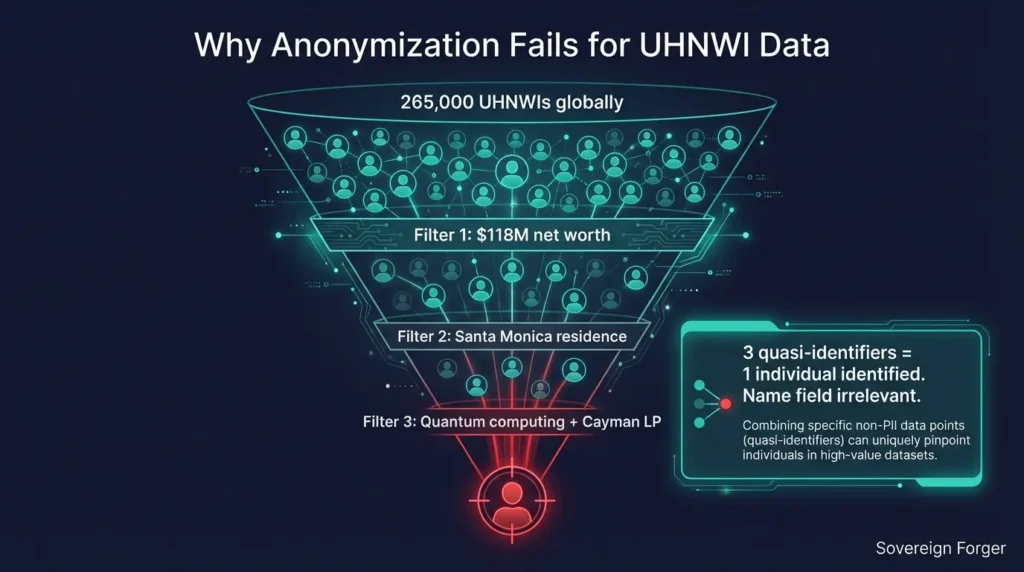

The standard response is anonymization: remove names, scramble account numbers, mask direct identifiers. This works for mass-market retail data where individual records are statistically interchangeable.

For UHNWI profiles, anonymization is structurally insufficient. The population of individuals with net worth above $30 million is approximately 265,000 globally, according to Capgemini’s World Wealth Report. Each profile is highly distinctive — a unique combination of wealth tier, jurisdiction, offshore structure, profession, and geographic location.

Research on re-identification attacks has demonstrated that as few as three quasi-identifiers can uniquely identify individuals in sparse populations. A landmark study by Latanya Sweeney (then at Carnegie Mellon, now at Harvard) estimated that 87% of the US population could be uniquely identified by 5-digit ZIP code, date of birth, and gender alone. For UHNWI profiles, the quasi-identifiers are far richer. A profile showing “$118M net worth, Santa Monica residence, quantum computing profession, Cayman LP” may correspond to zero, one, or very few real individuals globally, whether or not the name field has been removed.

The GDPR test is whether re-identification is “reasonably likely” considering “all means reasonably likely to be used” (Recital 26). For UHNWI data, the answer is increasingly yes. As re-identification techniques improve — and as public databases grow richer — the anonymization gap widens every quarter.
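A rough way to see the sparse-population effect is to count how many records share each combination of quasi-identifiers. The sketch below is a minimal k-anonymity check, not a full re-identification risk assessment. The column names and the four sample rows are invented for illustration; only the Santa Monica combination comes from the example above.

```python
from collections import Counter

# Hypothetical quasi-identifier columns; assume the name field is already removed.
QUASI_IDENTIFIERS = ("wealth_band", "city", "profession", "structure")


def class_sizes(records, keys=QUASI_IDENTIFIERS):
    """Count how many records share each combination of quasi-identifiers."""
    return Counter(tuple(r[k] for k in keys) for r in records)


def k_anonymity(records, keys=QUASI_IDENTIFIERS):
    """Size of the smallest group; k == 1 means at least one record is unique."""
    sizes = class_sizes(records, keys)
    return min(sizes.values()) if sizes else 0


def unique_fraction(records, keys=QUASI_IDENTIFIERS):
    """Share of records that are the only member of their group."""
    sizes = class_sizes(records, keys)
    singles = sum(1 for r in records if sizes[tuple(r[k] for k in keys)] == 1)
    return singles / len(records) if records else 0.0


# Invented "masked" rows: no names, but the quasi-identifiers remain.
masked = [
    {"wealth_band": "100-250M", "city": "Santa Monica",
     "profession": "quantum computing", "structure": "Cayman LP"},
    {"wealth_band": "30-50M", "city": "London",
     "profession": "private equity", "structure": "none"},
    {"wealth_band": "30-50M", "city": "London",
     "profession": "private equity", "structure": "none"},
    {"wealth_band": "50-100M", "city": "Zurich",
     "profession": "shipping", "structure": "Jersey trust"},
]

print(k_anonymity(masked))      # 1
print(unique_fraction(masked))  # 0.5
```

A result of k = 1 does not prove that someone can be re-identified, but it shows that removing the name field left at least one record standing alone, which is the situation Recital 26 asks you to evaluate.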

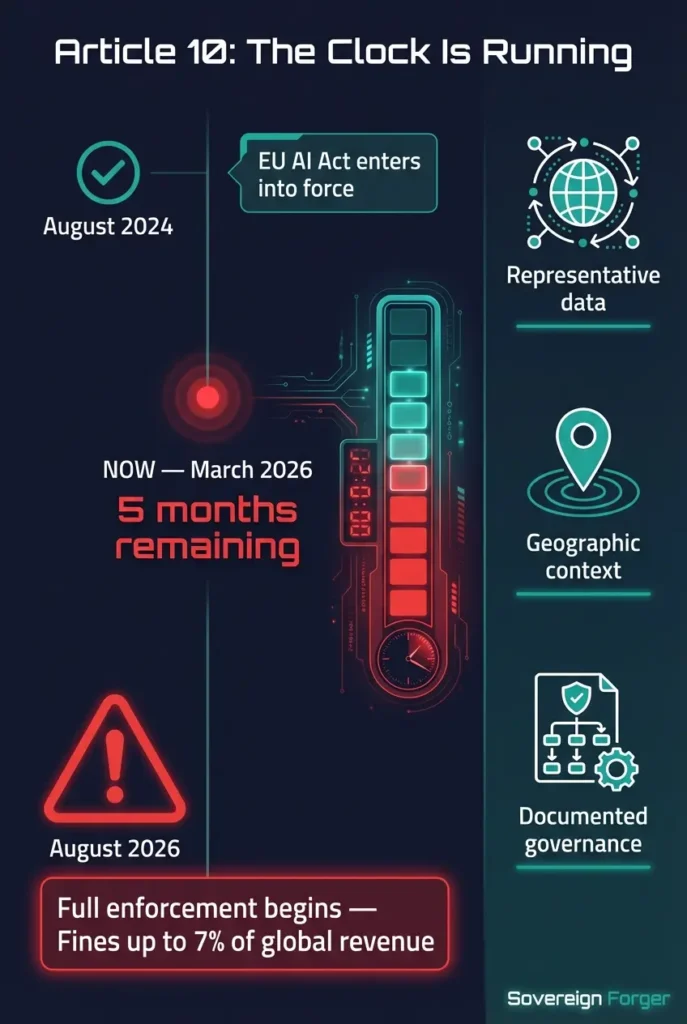

How Does the EU AI Act Make This Worse?

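Article 10 of the EU AI Act adds a second layer. Under Annex III, AI systems used to evaluate the creditworthiness or credit score of natural persons, or for risk assessment and pricing in life and health insurance, are classified as high-risk. Systems used purely to detect financial fraud are carved out of the credit-scoring category, so check the classification of each system individually. For high-risk systems, training and testing datasets must meet governance requirements including quality, relevance, representativeness, and compliance with applicable data protection law.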

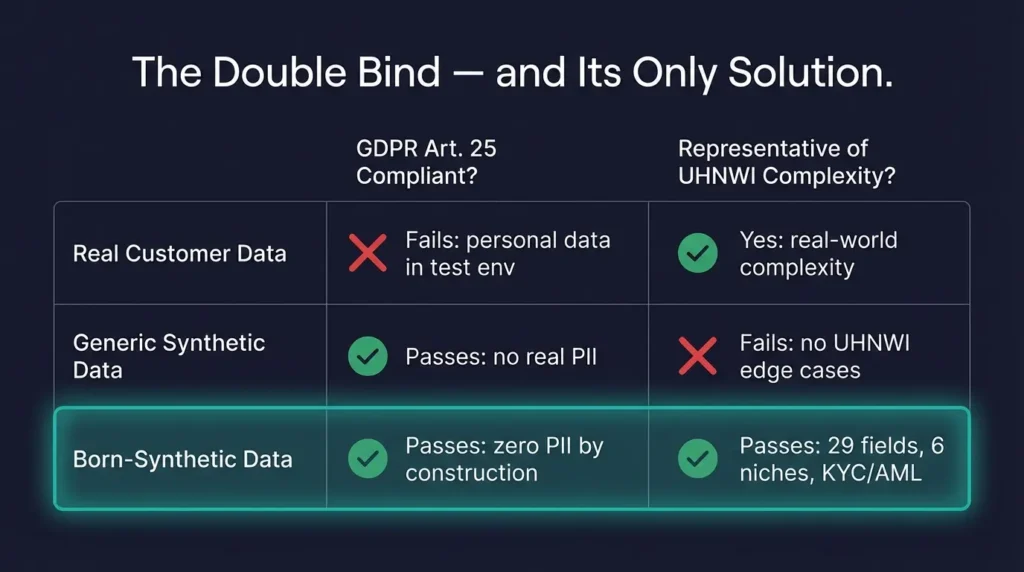

This creates a double bind: your AI training data must be representative of real-world complexity (including UHNWI edge cases), but it must also be GDPR-compliant. Using real data fails the second test. Using generic synthetic data fails the first.

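Most high-risk obligations apply from August 2026. Fines under the AI Act reach 7% of global annual turnover for prohibited practices and 3% for breaches of high-risk obligations such as Article 10. Combined with GDPR fines of up to EUR 20 million or 4% of global annual turnover, whichever is higher, the total regulatory exposure for non-compliant test data is substantial.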
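To put numbers on those ceilings, here is a small arithmetic sketch. Both regimes use a "fixed amount or percentage of turnover, whichever is higher" formula. The EUR 10 billion turnover is a made-up example, and the EUR 35 million fixed amount for the AI Act's top tier is my reading of the Act rather than a figure from this article, so treat it as an assumption. Whether and how the two fines could be combined in a real case is a legal question this does not answer.

```python
def fine_ceiling(turnover_eur, fixed_cap_eur, percent):
    """Upper bound of a 'fixed amount or % of turnover, whichever is higher' fine."""
    # Integer arithmetic avoids floating-point noise on large euro amounts.
    return max(fixed_cap_eur, turnover_eur * percent // 100)


turnover = 10_000_000_000  # hypothetical EUR 10 billion global annual turnover

gdpr_ceiling = fine_ceiling(turnover, 20_000_000, 4)
# Assumption: EUR 35 million fixed amount for the AI Act's 7% tier.
ai_act_top_tier = fine_ceiling(turnover, 35_000_000, 7)

print(gdpr_ceiling)     # 400000000
print(ai_act_top_tier)  # 700000000

# For a small firm the fixed amount dominates the percentage.
print(fine_ceiling(100_000_000, 20_000_000, 4))  # 20000000
```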

What Is the Born-Synthetic Alternative?

Born-synthetic data eliminates both problems simultaneously. No real individual was used as input at any stage. There is no person to re-identify — not because their identity was hidden, but because they never existed.

The generation starts from mathematical distributions — Pareto for wealth, algebraic constraints for balance sheets, geographic models for jurisdictions. A local, offline LLM adds narrative context after the numbers are locked. The result is data that is representative of UHNWI complexity without any lineage to real people.
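The numeric stage can be sketched in a few lines. This is a toy illustration of the idea, not the generator used for the datasets: the Pareto shape parameter, the liability range, and the asset class names are all assumptions I picked for the example, and the narrative step with the local LLM is left out entirely.

```python
import random

MIN_NET_WORTH = 30_000_000  # UHNWI floor mentioned in the text
ALPHA = 1.5                 # hypothetical Pareto shape parameter
ASSET_CLASSES = ("equities", "real_estate", "private_equity", "cash")


def generate_profile(rng):
    """Draw one synthetic balance sheet; no real record is used as input."""
    # Wealth: paretovariate() returns a value >= 1, so net worth >= the floor.
    net_worth = round(MIN_NET_WORTH * rng.paretovariate(ALPHA))
    # Liabilities as a bounded fraction of net worth (assumed range 0-40%).
    liabilities = round(net_worth * rng.uniform(0.0, 0.4))
    # Algebraic constraint: total assets = net worth + liabilities.
    total_assets = net_worth + liabilities
    # Split assets with random weights, each at least 0.1 so no class is empty.
    weights = [rng.uniform(0.1, 1.0) for _ in ASSET_CLASSES]
    scale = total_assets / sum(weights)
    assets = {c: round(w * scale) for c, w in zip(ASSET_CLASSES, weights)}
    # Put the rounding residue into cash so the identity holds exactly.
    assets["cash"] += total_assets - sum(assets.values())
    return {"net_worth": net_worth, "liabilities": liabilities, "assets": assets}


rng = random.Random(42)  # fixed seed makes the run reproducible
profiles = [generate_profile(rng) for _ in range(1000)]

for p in profiles:
    assert sum(p["assets"].values()) - p["liabilities"] == p["net_worth"]
```

The point of the design is the order of operations: the numbers are drawn and reconciled first, so any text added later cannot change them.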

For KYC/AML testing specifically, I built compliance-grade profiles with 29 interlocked fields — PEP status, risk ratings, sanctions screening results, adverse media flags — all deterministically derived. Zero AI on the KYC fields. Fully reproducible.
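"Deterministically derived" can mean several things. One possible reading, sketched below under my own assumptions, is that each compliance field is a pure function of the profile's locked attributes plus a hash of its ID, so the same profile always yields the same flags. The field names, the 5% and 10% rates, the placeholder jurisdiction codes, and the scoring rule are hypothetical and do not describe the actual 29-field schema.

```python
import hashlib

# Placeholder codes only; this is not a real high-risk jurisdiction list.
HIGH_RISK_JURISDICTIONS = {"XX", "YY"}


def stable_unit(profile_id, field):
    """Deterministic number in [0, 1) derived from a hash; no RNG state involved."""
    digest = hashlib.sha256(f"{profile_id}:{field}".encode()).digest()
    return int.from_bytes(digest[:6], "big") / 2**48


def derive_kyc(profile):
    """Derive compliance flags as a pure function of the profile."""
    pid = profile["profile_id"]
    pep = stable_unit(pid, "pep") < 0.05              # assumed 5% PEP rate
    adverse_media = stable_unit(pid, "media") < 0.10  # assumed 10% rate
    risky_jurisdiction = profile["jurisdiction"] in HIGH_RISK_JURISDICTIONS
    # Hypothetical rule: a PEP hit alone is enough for a high rating.
    score = 2 * pep + adverse_media + risky_jurisdiction
    rating = "high" if score >= 2 else "medium" if score == 1 else "low"
    return {
        "pep_status": pep,
        "adverse_media_flag": adverse_media,
        "risk_rating": rating,
    }


profile = {"profile_id": "SYN-000123", "jurisdiction": "XX"}
first = derive_kyc(profile)
second = derive_kyc(profile)
print(first == second)  # True
```

Because nothing depends on hidden state, rerunning the derivation months later gives the same answer, which is what makes a test failure reproducible.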

Every dataset ships with a Certificate of Sovereign Origin documenting the synthesis method. When the auditor asks where your test data came from, you have the answer in writing.

See Born Synthetic vs Anonymized for the deeper technical comparison, and The Compliance Blind Spot for why generic test data fails EDD systems.

What Should Compliance Teams Do Right Now?

Here is a practical implementation checklist for teams that want to close this gap before August 2026:

Step 1: Audit your test environments. Identify every database, staging system, and QA environment that contains real customer data. Most institutions discover 3-5 copies they did not know about. Document what data is in each, who has access, and when it was last refreshed.

Step 2: Assess re-identification risk. For each test dataset, evaluate whether the combination of quasi-identifiers could identify real individuals. Pay special attention to UHNWI data, PEP records, and any profiles with distinctive combinations of wealth, jurisdiction, and profession.

Step 3: Evaluate your legal basis. Confirm that the processing of personal data in each test environment has a valid legal basis under Article 6. If the original purpose did not include testing, you likely need a compatibility assessment or a new basis.

Step 4: Replace high-risk test data. Start with the datasets that carry the highest re-identification risk, typically UHNWI profiles, PEP records, and any data involving sensitive jurisdictions. Born-synthetic replacements in standard formats can usually be dropped in without redesigning the pipeline.

Step 5: Document everything. Article 25 compliance is not just about what you do — it is about what you can prove. Document your test data governance policy, your replacement timeline, and the provenance of any synthetic data you deploy.
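The checklist above can be tracked as a simple inventory. The sketch below is one hypothetical way to record each environment and flag the gaps from Steps 1, 3, and 4. The record fields, the retention limit, and the example environments are assumptions for illustration; a real audit would pull this from your own asset register.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class EnvRecord:
    name: str
    contains_real_data: bool
    legal_basis: Optional[str]  # documented Article 6 basis, or None if missing
    last_refreshed: date
    retention_days: int         # hypothetical policy limit for test copies


def findings(env, today):
    """Return the open issues for one environment."""
    if not env.contains_real_data:
        return []
    issues = []
    if env.legal_basis is None:
        issues.append("no documented legal basis (Step 3)")
    if (today - env.last_refreshed).days > env.retention_days:
        issues.append("data held past retention limit")
    issues.append("candidate for born-synthetic replacement (Step 4)")
    return issues


today = date(2026, 1, 15)
inventory = [
    EnvRecord("qa-screening", True, None, date(2023, 1, 10), 90),
    EnvRecord("staging-edd", False, None, date(2025, 12, 1), 90),
]

for env in inventory:
    print(env.name, findings(env, today))
```

Keeping the output of a run like this alongside your governance policy gives you a dated record for Step 5.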

Frequently Asked Questions

Does GDPR Article 25 apply to internal test environments that are not connected to the internet?

Yes. Article 25 applies to the processing of personal data regardless of where it occurs. An air-gapped test environment containing real customer records is still processing personal data under GDPR. The obligation to implement data protection by design and by default has no exception for internal systems.

Is pseudonymized data sufficient for GDPR compliance in test environments?

Pseudonymized data is still personal data under GDPR (Recital 26). Pseudonymization reduces risk but does not eliminate GDPR obligations. For UHNWI profiles specifically, the distinctive combination of quasi-identifiers makes pseudonymization particularly weak — re-identification remains reasonably likely even without direct identifiers.

What is the difference between anonymized data and born-synthetic data under GDPR?

Anonymized data starts from real individuals and attempts to remove identifying information. If re-identification is reasonably likely, it remains personal data. Born-synthetic data is generated from mathematical distributions with no real person as input. Because no real individual is represented in the data, it falls outside the definition of personal data and GDPR's personal data rules do not apply. The risk profile is fundamentally different.

Can we use production data for testing if we have customer consent?

Theoretically yes, but practically difficult. Consent must be freely given, specific, informed, and unambiguous (Articles 4(11) and 7). Most customer consent forms do not explicitly cover use in test environments, QA pipelines, or AI training. Relying on consent also creates an ongoing management burden: customers can withdraw consent at any time, which would require purging their data from every test copy.

How quickly can born-synthetic data replace real test data in an existing compliance pipeline?

Born-synthetic datasets use standard formats (JSONL, CSV) with documented schemas. Most teams integrate within one sprint. The 29-field KYC/AML schema maps directly to standard compliance testing fields — PEP status, risk rating, sanctions results, adverse media flags. No pipeline redesign required. Download a free 100-record sample and test the fit before committing.
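Testing the fit can start with a schema check on the JSONL file before anything touches the screening engine. The sketch below is a minimal example using only the standard library. The five field names are hypothetical stand-ins based on the fields named above, not the documented schema, so replace them with the names from the schema you receive.

```python
import io
import json

# Hypothetical subset of required fields; substitute the documented schema.
REQUIRED_FIELDS = {
    "profile_id", "pep_status", "risk_rating",
    "sanctions_result", "adverse_media_flag",
}


def validate_jsonl(stream, required=REQUIRED_FIELDS):
    """Return (valid_records, errors) for a JSONL stream, one object per line."""
    records, errors = [], []
    for line_no, line in enumerate(stream, start=1):
        if not line.strip():
            continue  # tolerate blank lines
        try:
            rec = json.loads(line)
        except json.JSONDecodeError as exc:
            errors.append((line_no, f"invalid JSON: {exc.msg}"))
            continue
        if not isinstance(rec, dict):
            errors.append((line_no, "record is not a JSON object"))
            continue
        missing = required.difference(rec)
        if missing:
            errors.append((line_no, f"missing fields: {sorted(missing)}"))
        else:
            records.append(rec)
    return records, errors


sample = io.StringIO(
    '{"profile_id": "P-001", "pep_status": false, "risk_rating": "low", '
    '"sanctions_result": "clear", "adverse_media_flag": false}\n'
    '{"profile_id": "P-002", "pep_status": true}\n'
)
records, errors = validate_jsonl(sample)
print(len(records), errors)
```

With a real file, pass `open(path, encoding="utf-8")` instead of the in-memory sample.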

The Deadline Is August 2026

EU AI Act enforcement starts in five months. The window for upgrading your test data governance is narrowing. 29 fields. Zero PII. Born-Synthetic. This is what GDPR-compliant test data actually looks like.

Download 100 free KYC-Enhanced profiles and run them through your EDD pipeline.