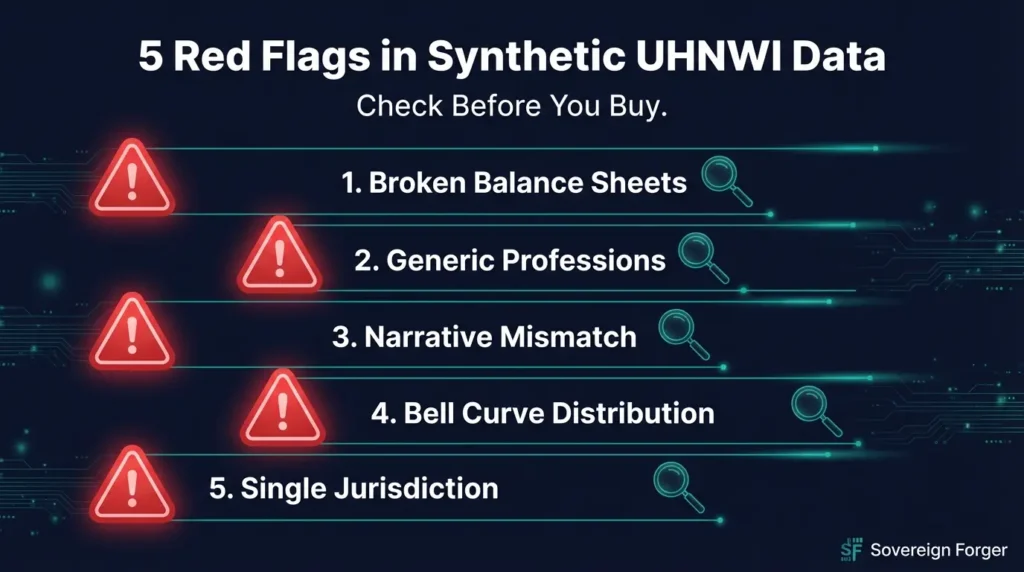

I have audited sample files from every major synthetic data provider in the financial space. Five checks, sixty seconds each. Most fail at least three.

A five-minute audit of any sample file will tell you whether the provider understands UHNWI data — or is simply generating plausible-looking numbers with no structural integrity.

Here are the five checks that matter.

Red Flag 1: The Balance Sheet Does Not Balance

Open the file. Pick any record. Calculate: Total Assets minus Total Liabilities. Does it equal Net Worth?

If it does not — on even a single record — the financial fields were generated independently rather than derived from algebraic constraints. This means the provider’s pipeline samples each number from a separate distribution and hopes they are “close enough.” At the UHNWI level, “close enough” means your compliance tools are being tested against records that violate the fundamental accounting identity.

Check ten records. If any of them fail, the entire dataset is unreliable. This is a binary test — partial compliance is not compliance. You can automate it with our open-source Balance Sheet Test tool on GitHub.

I detail the full methodology — including asset decomposition checks — in The Balance Sheet Test.
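If you want to run the identity check by hand before reaching for any tooling, a minimal sketch could look like the following. It is not the Balance Sheet Test tool mentioned above. The field names `total_assets`, `total_liabilities`, and `net_worth` are assumptions for illustration, so rename them to match the sample file you are auditing.

```python
from decimal import Decimal


def balance_sheet_failures(records):
    """Return the indices of records where assets - liabilities != net worth.

    Field names are hypothetical; adapt them to the provider's schema.
    """
    failures = []
    for i, rec in enumerate(records):
        # Decimal(str(...)) avoids float rounding noise on exact dollar amounts.
        assets = Decimal(str(rec["total_assets"]))
        liabilities = Decimal(str(rec["total_liabilities"]))
        net_worth = Decimal(str(rec["net_worth"]))
        if assets - liabilities != net_worth:
            failures.append(i)
    return failures


# Two made-up records: the second one is off by $100,000.
sample = [
    {"total_assets": 52_000_000, "total_liabilities": 4_000_000, "net_worth": 48_000_000},
    {"total_assets": 31_500_000, "total_liabilities": 1_200_000, "net_worth": 30_400_000},
]
failed = balance_sheet_failures(sample)
print(failed)
```

The comparison is exact on purpose, with no tolerance, because the test is binary: a single index in the returned list is enough to fail the file.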

Red Flag 2: Every Profession Is Generic

Look at the profession field across fifty records. Do you see “business executive,” “entrepreneur,” “investor,” and “consultant” repeated with no further specificity?

Real UHNWI wealth creation follows identifiable pathways. In Silicon Valley, you see venture-backed founders, principals of family offices, managing partners of VC funds, and CTOs who exercised pre-IPO options. In the pharmaceutical sector, you see biotech founders, clinical development executives, and patent portfolio holders.

If the profession field reads like a random pick from a list of ten generic titles, the provider is not modeling wealth creation patterns. They are filling a column. Your AI learns nothing about the correlation between profession, asset structure, and wealth tier — correlations that exist in every real UHNWI dataset.
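One rough way to quantify this check is to measure how much of the profession column is made up of generic titles. The sketch below uses the four generic titles named above as its list; the function names and the idea of passing the column in as a list of strings are assumptions for illustration.

```python
# Generic titles taken from the examples in this section; extend as needed.
GENERIC_TITLES = {"business executive", "entrepreneur", "investor", "consultant"}


def generic_share(professions):
    """Fraction of profession values that are exactly a generic title."""
    normalized = [p.strip().lower() for p in professions]
    if not normalized:
        return 0.0
    generic = sum(1 for p in normalized if p in GENERIC_TITLES)
    return generic / len(normalized)


def distinct_titles(professions):
    """Number of distinct profession values, ignoring case and whitespace."""
    return len({p.strip().lower() for p in professions})


# Made-up column: three of four values are generic.
professions = ["Entrepreneur", "Investor", "Managing partner, VC fund", "consultant"]
share = generic_share(professions)
print(share, distinct_titles(professions))
```

A high generic share combined with a small number of distinct titles across fifty records suggests the column was filled from a short list rather than modeled.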

Red Flag 3: The Narrative Contradicts the Numbers

If the dataset includes narrative fields — a biography, an asset description, or a wealth summary — read one carefully. Then cross-reference every dollar amount in the narrative against the structured fields.

If the narrative says “property holdings valued at approximately $21 million” but the property_value field shows $18,400,000, the narrative and the structured data were generated by different processes that are not synchronized. This is surprisingly common in LLM-generated datasets where the text model writes a plausible story without access to the actual financial figures.

Every dollar amount in the narrative should match the structured fields exactly. Not approximately. Exactly.
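The cross-reference can be partly automated. The sketch below pulls dollar amounts out of a narrative with a regular expression and reports any that do not exactly match a structured value. The pattern only handles formats like `$18,400,000`, `$18.4 million`, and `$2 billion`, which is an assumption about how the narrative is written. The function names are hypothetical.

```python
import re
from decimal import Decimal

# Matches "$18,400,000", "$18.4 million", "$2 billion" (assumed formats).
_AMOUNT = re.compile(r"\$\s?(\d[\d,]*(?:\.\d+)?)\s*(million|billion)?", re.IGNORECASE)
_SCALE = {"": Decimal(1), "million": Decimal(10) ** 6, "billion": Decimal(10) ** 9}


def narrative_amounts(text):
    """Extract every dollar amount mentioned in the text as a Decimal."""
    amounts = []
    for number, unit in _AMOUNT.findall(text):
        value = Decimal(number.replace(",", ""))
        amounts.append(value * _SCALE[unit.lower()])
    return amounts


def unmatched_amounts(text, structured_values):
    """Return narrative amounts that equal none of the structured values."""
    known = {Decimal(str(v)) for v in structured_values}
    return [a for a in narrative_amounts(text) if a not in known]


# The mismatch described above: narrative says $21 million, field says $18,400,000.
narrative = "Property holdings valued at approximately $21 million."
record = {"property_value": 18_400_000}
mismatches = unmatched_amounts(narrative, record.values())
print(mismatches)
```

An empty list is the only passing result. Any amount the pattern cannot parse will be missed, so treat this as a first pass and still read a few narratives by eye.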

Red Flag 4: The Wealth Distribution Is a Bell Curve

Plot the net worth values. If the distribution is symmetric, with roughly as many profiles above the mean as below it, the data was most likely sampled from a Gaussian (or another symmetric distribution) rather than a Pareto distribution.

UHNWI wealth follows a power law. Most profiles should cluster at the lower end of the range, with a long tail extending to much higher values. A bell curve distribution signals that the provider used the simplest possible random number generator without calibrating to empirical wealth data.

This matters for your AI because the frequency of profiles at different wealth tiers directly affects how your model weights and classifies them. Wrong frequency means wrong weights means wrong predictions.

For the full mathematical argument, see Pareto, Not Gaussian: The Math Behind Realistic Wealth Distribution.
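If you cannot plot the values, two summary numbers give a quick read on symmetry: the share of profiles above the mean and the ratio of mean to median. The sketch below compares them on two made-up samples. The $30 million floor comes from the threshold used in this article, while the Pareto shape of 1.5 and the Gaussian parameters are illustrative assumptions, not calibrated figures.

```python
import random
import statistics


def share_above_mean(values):
    """Fraction of values strictly above the mean (about 0.5 if symmetric)."""
    mean = statistics.fmean(values)
    return sum(1 for v in values if v > mean) / len(values)


def mean_to_median(values):
    """Mean divided by median (about 1.0 if symmetric, larger if right-skewed)."""
    return statistics.fmean(values) / statistics.median(values)


rng = random.Random(0)  # fixed seed so the comparison is repeatable
floor = 30_000_000      # lower bound of the wealth range

# Illustrative samples only: shape 1.5 and the Gaussian parameters are assumptions.
pareto_like = [floor * rng.paretovariate(1.5) for _ in range(10_000)]
gaussian_like = [rng.gauss(60_000_000, 10_000_000) for _ in range(10_000)]

for name, values in (("pareto-like", pareto_like), ("gaussian-like", gaussian_like)):
    print(name, round(share_above_mean(values), 3), round(mean_to_median(values), 3))
```

On a provider's sample, a share near one half together with a ratio near one points to a symmetric generator. A heavy right tail pulls the mean well above the median and leaves most profiles below the mean.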

Red Flag 5: Everyone Lives in One Jurisdiction

Count the distinct jurisdictions in the offshore or entity fields. If 95% of profiles list the same jurisdiction — or worse, if there is no offshore field at all — the data does not model the multi-jurisdictional reality of UHNWI wealth.

At the $30 million-plus level, multi-jurisdictional structuring is the norm, not the exception. Onshore operating entities, offshore holding structures, real estate in multiple countries, and banking relationships across three or more jurisdictions are standard. If your test data does not reflect this, your compliance system is being tested against a fantasy.

See The Compliance Blind Spot for what happens when EDD systems go live against real UHNWI complexity.
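Counting jurisdictions takes only a few lines. The sketch below reports how many distinct jurisdictions appear, how dominant the most common one is, and how many records have no value at all. The field name `offshore_jurisdiction` is an assumption, so substitute whatever the sample file actually uses.

```python
from collections import Counter


def jurisdiction_profile(records, field="offshore_jurisdiction"):
    """Summarize jurisdiction diversity; the field name is hypothetical."""
    values = [rec.get(field) for rec in records]
    present = [v for v in values if v]
    missing = len(values) - len(present)
    if not present:
        # No offshore data at all, which is the worst case described above.
        return {"distinct": 0, "top": None, "top_share": None, "missing": missing}
    counts = Counter(present)
    top, top_count = counts.most_common(1)[0]
    return {
        "distinct": len(counts),
        "top": top,
        "top_share": top_count / len(present),
        "missing": missing,
    }


# Made-up sample: 19 of 20 profiles list the same jurisdiction.
sample = [{"offshore_jurisdiction": "Cayman Islands"}] * 19 + [
    {"offshore_jurisdiction": "Jersey"}
]
profile = jurisdiction_profile(sample)
print(profile)
```

A `top_share` at or above 0.95, or a `distinct` count of zero, corresponds to the failure described in this section. This counts one field per profile only; checking for several jurisdictions within a single profile would need the provider's entity-level fields.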

Run These Checks on Our Data

Download 100 free born-synthetic Silicon Valley UHNWI profiles. Run all five checks — starting with the Balance Sheet Test. I publish the sample specifically so you can audit it before committing — because born-synthetic data should prove itself mathematically, not just look plausible. If any check fails, do not buy.

Frequently Asked Questions

What are the main red flags when evaluating a synthetic data provider?

Five critical red flags: (1) Cannot demonstrate balance sheet integrity across their dataset, (2) Requires your real data as input (creates GDPR liability), (3) Uses Gaussian rather than Pareto distributions for wealth data, (4) Cannot show cultural specificity in naming and wealth patterns, (5) Does not provide provenance documentation or certificates of origin for their data.

How can I verify a synthetic data provider’s quality claims?

Request a sample and run three tests: balance sheet test (do assets minus liabilities equal net worth for every record?), distribution test (does wealth follow a Pareto power-law?), and diversity test (are names culturally appropriate, are offshore jurisdictions realistic, are professions archetype-specific?). If any test fails, the provider’s core generation methodology is flawed.

Why does it matter if a synthetic data provider requires real data as input?

If the provider needs your real client data, handing it over is itself processing of personal data under GDPR. This requires a legal basis, a Data Protection Impact Assessment, data processing agreements, and ongoing compliance obligations. If the synthetic data has lineage to real persons, it may still be considered personal data. Born-synthetic data requires no input data, eliminating this entire chain of GDPR obligations.

What should a Certificate of Sovereign Origin include?

A proper provenance certificate should document: the generation methodology (mathematical distributions used), that no real personal data was used as input (born-synthetic attestation), the number of fields and records, the compliance frameworks addressed (GDPR, EU AI Act, CCPA), and a unique certificate ID for audit traceability. Sovereign Forger includes this certificate with every dataset delivery.

How does Sovereign Forger differ from Mostly AI, Tonic, and Gretel?

Three fundamental differences: (1) No input data required — Sovereign Forger generates from mathematical distributions, not from your real data. (2) Math First approach — financial constraints are enforced algebraically before AI adds narrative context. (3) Offline processing — the local LLM never sends data to the cloud. Competitors require real data input, use cloud processing, and generate from learned distributions rather than domain-specific mathematical models.