Key Takeaway: The EU AI Act Article 10 requires governed, representative, and documented training data for all high-risk AI systems, including those used in financial services. The high-risk requirements apply from August 2026, and breaching them carries fines of up to EUR 15 million or 3% of global annual turnover (the 7% tier is reserved for prohibited practices). Born-synthetic data is the only approach that satisfies both Article 10 representativeness and GDPR data protection simultaneously.

August 2026. Five months from now. If your EU AI Act training data governance is not documented, you are exposed to fines of up to EUR 15 million or 3% of global annual turnover, whichever is higher. I am not exaggerating. I am quoting the regulation.

The EU AI Act is not coming. It is here. The regulation entered into force in August 2024. Full enforcement of high-risk AI system requirements — including Article 10 on training data governance — begins August 2026. If your organization uses AI for financial services — credit scoring, risk assessment, AML monitoring, fraud detection, KYC screening — your obligations are about to become enforceable.

And here is what keeps me up at night: most financial institutions I have spoken with have no plan for this. They assume their current training data — a mix of production copies, anonymized subsets, and vendor-provided samples — will pass an Article 10 audit. It will not.

What Does EU AI Act Article 10 Actually Require?

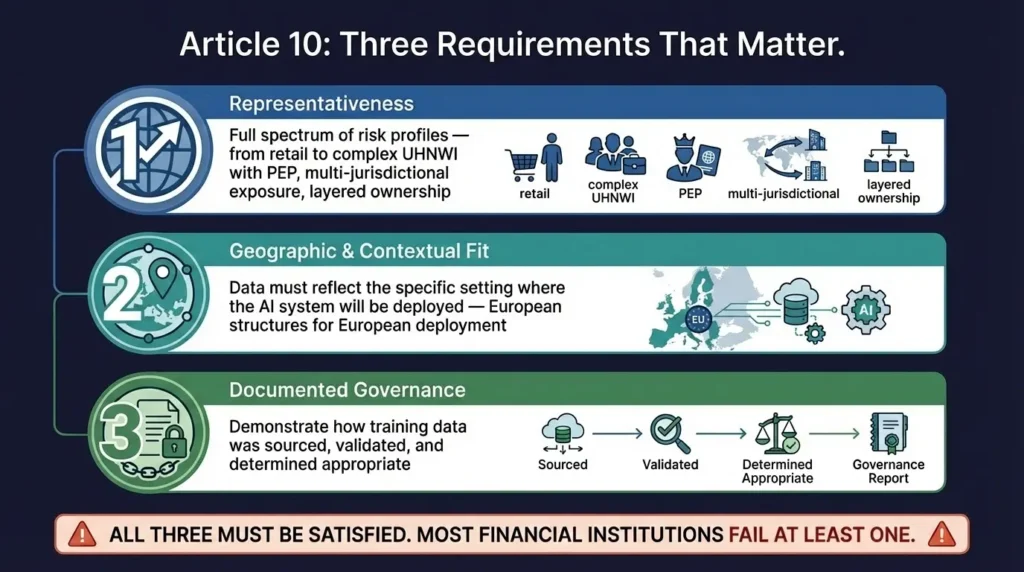

Article 10 establishes data governance obligations for high-risk AI systems. The requirements apply to training, validation, and testing datasets. Three provisions matter most for financial services:

First, representativeness. Datasets must be “relevant, sufficiently representative, and to the extent possible, free of errors and complete.” For AML and KYC AI, this means your training data must include the full spectrum of client risk profiles — from low-risk retail customers to complex UHNWI structures with multi-jurisdictional exposure, PEP connections, and layered beneficial ownership.

This is where most institutions fail. Their training data skews heavily toward retail profiles. The UHNWI edge cases — the exact profiles that trigger Enhanced Due Diligence, the ones that involve trusts layered through three jurisdictions, the PEP-adjacent family members — are underrepresented or missing entirely. According to Deloitte’s 2024 AML Compliance Survey, 68% of financial institutions reported that their test data did not adequately cover high-risk client segments.
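One way to make this skew visible is to measure how much of the dataset each client segment occupies. The sketch below assumes every record carries a hypothetical `segment` label and that you pick your own minimum share per segment; Article 10 does not prescribe a threshold, so `min_share` is purely illustrative.

```python
from collections import Counter

def segment_coverage(records, required_segments, min_share=0.05):
    """Return each required segment's share and the segments below min_share."""
    counts = Counter(r["segment"] for r in records)
    total = sum(counts.values())
    shares = {
        s: (counts.get(s, 0) / total if total else 0.0)
        for s in required_segments
    }
    gaps = [s for s, share in shares.items() if share < min_share]
    return shares, gaps

# Hypothetical toy dataset, heavily skewed toward retail profiles.
records = (
    [{"segment": "retail"}] * 95
    + [{"segment": "uhnwi"}] * 4
    + [{"segment": "pep_adjacent"}] * 1
)

shares, gaps = segment_coverage(records, ["retail", "uhnwi", "pep_adjacent"])
print(shares)
print("underrepresented:", gaps)
```

A report like this does not prove representativeness on its own, but it turns "our data skews retail" into a number an auditor can review.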

Second, geographic and contextual fit. Datasets must “take into account the specific geographical, contextual, behavioural, or functional setting within which the high-risk AI system is intended to be used.” An AML model deployed in Europe must be trained on profiles reflecting European wealth structures, jurisdictions, and regulatory context — not just American retail banking data.

I have seen European banks training AML models on datasets dominated by US-centric profiles. No offshore structures in the Channel Islands. No Swiss private banking patterns. No Middle Eastern hawala networks. The model passes internal validation because the test data matches the training data — both are equally unrepresentative. Then it goes live and misses the patterns that matter.
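The trap described above, where test data mirrors the training data, can be illustrated by comparing both against an independent picture of the deployment market. This is a minimal sketch using total variation distance; the jurisdiction codes and all shares are invented for illustration, and the deployment distribution is something you would have to estimate yourself.

```python
def total_variation(p, q):
    """Total variation distance between two discrete distributions (0 = identical, 1 = disjoint)."""
    keys = set(p) | set(q)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in keys)

# Hypothetical jurisdiction mix of the training set (US-centric).
train = {"US": 0.90, "CH": 0.05, "JE": 0.00, "AE": 0.05}
# Internal test set drawn from the same source, so it shares the same bias.
test = dict(train)
# Assumed jurisdiction mix of the clients the model will actually see.
deployment = {"US": 0.20, "CH": 0.40, "JE": 0.20, "AE": 0.20}

print("train vs test:      ", total_variation(train, test))
print("train vs deployment:", total_variation(train, deployment))
```

The first distance is zero, which is why internal validation looks clean. The second is large, which is the gap Article 10 cares about.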

Third, documented governance. You need to demonstrate how your training data was sourced, validated, and determined to be appropriate. This is the requirement that catches institutions by surprise. It is not enough to have good data — you must prove it is good, with documentation that an auditor can review.

Why Does Article 10 Create a Double Bind for Financial Institutions?

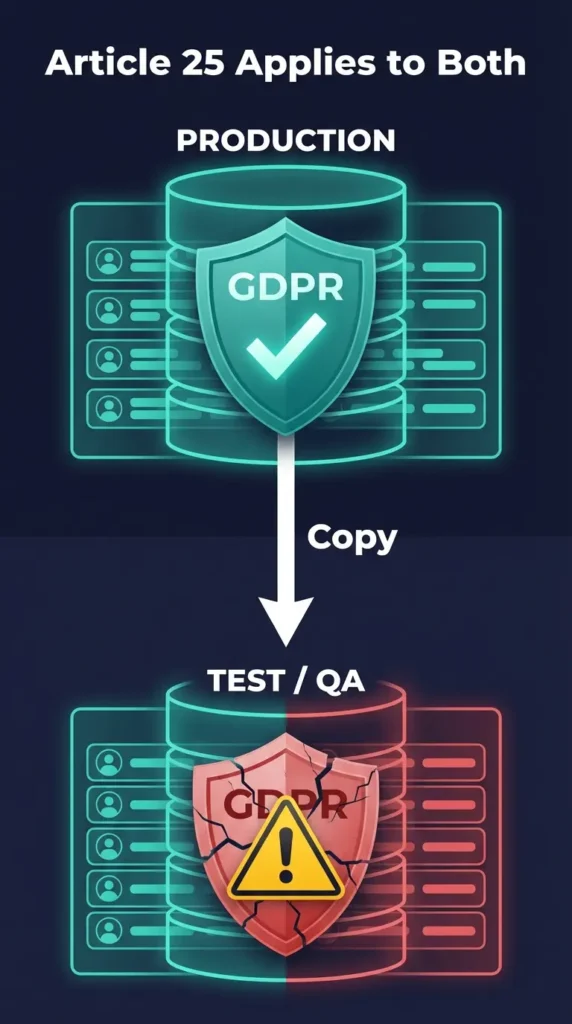

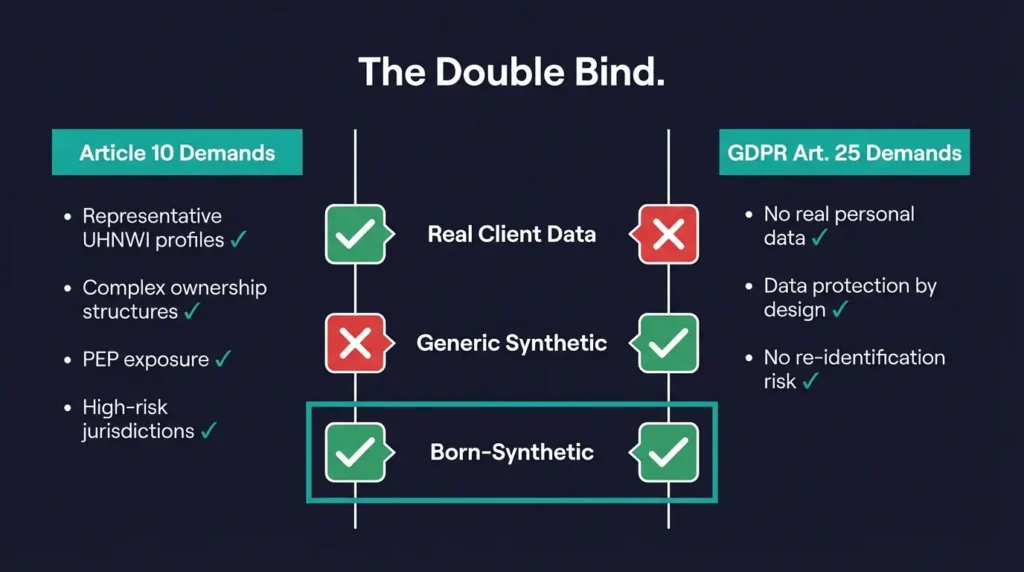

Here is where financial institutions get stuck. Article 10 requires representativeness: your data must include realistic UHNWI profiles, complex ownership structures, PEP exposure, and high-risk jurisdictions. But GDPR pulls the other way. Article 25 (data protection by design and by default), read with the data minimisation principle in Article 5(1)(c), makes real personal data in development and testing environments very hard to justify.

Using real client data for AI training satisfies representativeness but violates data protection. Using generic synthetic data satisfies data protection but fails representativeness. Using anonymized real data creates a grey zone that grows riskier as re-identification techniques improve.

I call this the compliance double bind. Every path that starts with real data leads to a GDPR problem. Every path that starts with generic data leads to an Article 10 problem. The only path that satisfies both starts with data that was never real to begin with.

Born-synthetic data is the only approach that satisfies both requirements simultaneously. The data is generated from mathematical distributions that mirror real-world wealth patterns — Pareto distributions, geographic weighting, culturally accurate archetypes — without any input from real individuals.
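To show what "generated from mathematical distributions" means in the simplest case, here is a sketch that draws net worth values from a Pareto distribution with no real records as input. It is not the Sovereign Forger pipeline: the shape parameter `alpha`, the seed, and the function name are assumptions chosen for illustration, and only the $50M floor comes from the net worth range quoted in this article.

```python
import random

def sample_net_worths(n, minimum_usd=50_000_000, alpha=1.5, seed=42):
    """Draw n synthetic net worth values from a Pareto distribution.

    random.paretovariate(alpha) returns values >= 1, so scaling by
    minimum_usd yields a heavy-tailed distribution starting at the floor.
    """
    rng = random.Random(seed)  # seeded so the run is reproducible and documentable
    return [minimum_usd * rng.paretovariate(alpha) for _ in range(n)]

net_worths = sorted(sample_net_worths(10_000))
median = net_worths[len(net_worths) // 2]
top_1_percent_share = sum(net_worths[-100:]) / sum(net_worths)

print(f"median net worth: ${median:,.0f}")
print(f"share of total wealth held by the top 1%: {top_1_percent_share:.1%}")
```

Because the only inputs are a distribution, its parameters, and a seed, there is nothing to re-identify. A real generator would layer constraints and geographic weighting on top of this.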

What Does “Governed Training Data” Actually Look Like?

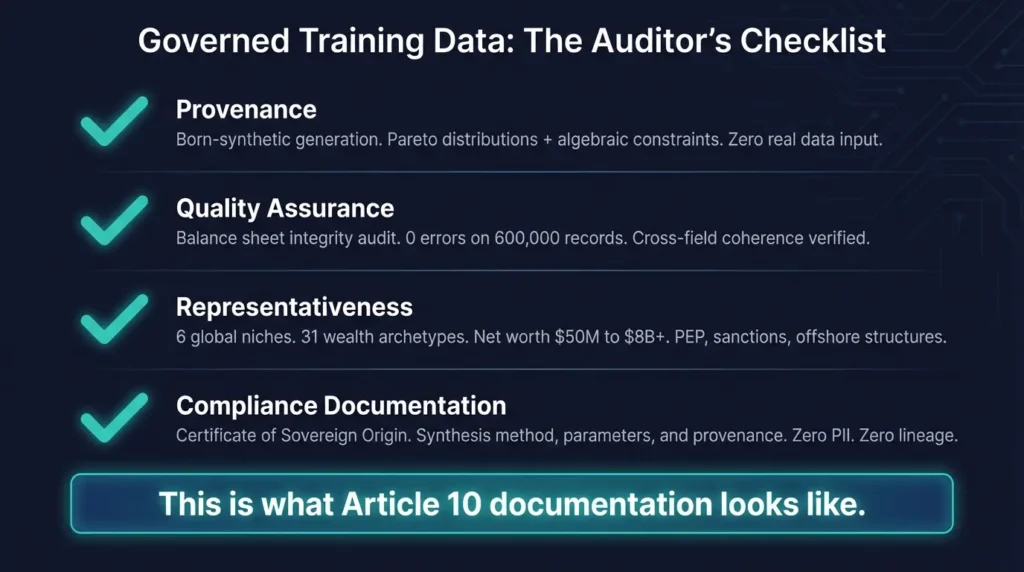

Article 10 compliance requires documentation. Let me be specific about what an auditor will ask for, and what your answers need to look like:

Provenance documentation. Where did the data come from? For born-synthetic data: generation using Pareto distributions and algebraic constraints, with offline LLM enrichment. Zero real data as input. Fully described methodology. Every parameter documented.

I built the Sovereign Forger pipeline to produce exactly this documentation. The Certificate of Sovereign Origin that ships with every dataset specifies the generation method, the mathematical distributions used, the constraint system, and the enrichment process. When your auditor asks “where did this training data come from?” you hand them a certificate, not an explanation.
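For teams building their own provenance records, a minimal sketch might look like the following. This is not the Certificate of Sovereign Origin format; the field names, the method label, and the parameter values are all hypothetical. The idea is simply to bind a documented generation method to one exact dataset file through a content hash.

```python
import hashlib
import json

def build_provenance_record(dataset_bytes, method, parameters):
    """Tie a documented generation method to one exact dataset via its SHA-256 hash."""
    return {
        "dataset_sha256": hashlib.sha256(dataset_bytes).hexdigest(),
        "generation_method": method,
        "parameters": parameters,
        "real_data_inputs": [],  # empty by construction for born-synthetic data
    }

# Hypothetical one-line JSONL dataset standing in for a real export.
dataset_bytes = b'{"profile_id": "P-0001", "net_worth": 75000000}\n'

record = build_provenance_record(
    dataset_bytes,
    method="pareto_sampling_with_algebraic_constraints",
    parameters={"alpha": 1.5, "minimum_usd": 50_000_000, "seed": 42},
)
print(json.dumps(record, indent=2, sort_keys=True))
```

If the dataset changes by a single byte, the hash changes, so the record cannot silently drift away from the data it describes.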

Quality assurance records. How was quality ensured? Every record passes a balance sheet integrity audit (Assets minus Liabilities equals Net Worth, zero exceptions). Cross-field coherence verified. Cultural and geographic consistency maintained across 31 wealth archetypes and 6 global niches. Zero ghost names. Zero placeholder leaks. Zero first-person voice artifacts.

According to the European Banking Authority’s 2025 guidance on AI model validation, institutions must demonstrate that training data quality controls are “systematic, documented, and repeatable.” Running a one-time check is not sufficient — you need an audit trail showing that quality gates are embedded in the generation process.
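A quality gate that is systematic, documented, and repeatable can be sketched as a function that checks every record and appends a timestamped entry to an audit log on each run. The field names (`total_assets`, `total_liabilities`, `net_worth`, `full_name`, `profile_id`) and the placeholder tokens are assumptions, and amounts are whole currency units so the balance sheet identity can be tested exactly.

```python
from datetime import datetime, timezone

PLACEHOLDER_TOKENS = {"", "TBD", "N/A", "LOREM IPSUM"}  # illustrative list

def check_record(rec):
    """Return the list of quality failures for one record."""
    failures = []
    # Balance sheet integrity: Assets minus Liabilities must equal Net Worth.
    if rec["total_assets"] - rec["total_liabilities"] != rec["net_worth"]:
        failures.append("balance_sheet_mismatch")
    if rec["full_name"].strip().upper() in PLACEHOLDER_TOKENS:
        failures.append("placeholder_name")
    return failures

def run_quality_gate(records, audit_log):
    """Check all records and append one audit entry describing this run."""
    failed = {}
    for rec in records:
        failures = check_record(rec)
        if failures:
            failed[rec["profile_id"]] = failures
    entry = {
        "run_at": datetime.now(timezone.utc).isoformat(),
        "records_checked": len(records),
        "records_failed": len(failed),
        "failures": failed,
        "passed": not failed,
    }
    audit_log.append(entry)
    return entry

records = [
    {"profile_id": "P-0001", "full_name": "Example Name A",
     "total_assets": 120_000_000, "total_liabilities": 20_000_000, "net_worth": 100_000_000},
    {"profile_id": "P-0002", "full_name": "TBD",
     "total_assets": 80_000_000, "total_liabilities": 10_000_000, "net_worth": 75_000_000},
]
audit_log = []
entry = run_quality_gate(records, audit_log)
print(entry["passed"], entry["failures"])
```

The audit log is the part an auditor cares about: it shows the gate ran, when, over how many records, and what it found.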

Representativeness evidence. How was representativeness achieved? 600,000 profiles spanning six regions — Silicon Valley, Old Money Europe, Middle East, LatAm, Pacific Rim, Swiss-Singapore. Net worth range $50M to $8B+. Complex structures including offshore vehicles, multi-entity ownership, trust and foundation exposure. PEP status distributions that reflect real-world prevalence by region.

Compliance chain. How was data protection compliance maintained? Certificate of Sovereign Origin documenting synthesis method, parameters, and provenance. Zero PII. Zero lineage. Born-synthetic from the first byte.

This is exactly what every Sovereign Forger dataset provides — and what the Certificate of Sovereign Origin documents for every shipment.

How Should Compliance Teams Prepare for August 2026?

Five months is not a lot of time. Here is the implementation path I recommend, based on conversations with compliance teams at institutions ranging from neobanks to tier-1 global banks:

Step 1: Inventory your AI systems. List every AI/ML model used in financial services — AML monitoring, fraud detection, credit scoring, KYC screening, risk assessment. For each, document what training data was used and where it came from. Most institutions discover 2-3 models they forgot about.

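Step 2: Classify risk levels. Under the EU AI Act, AI systems used to evaluate the creditworthiness of natural persons or establish their credit score are explicitly classified as high-risk (Annex III, point 5(b)). The same point carves out systems used to detect financial fraud, and Annex III does not name AML monitoring or KYC screening, so classify those systems case by case based on their intended purpose. Map each system to its risk category and record the reasoning.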

Step 3: Audit training data governance. For each high-risk system, assess whether the training data meets the three Article 10 requirements: representativeness, geographic/contextual fit, and documented governance. Be honest. If your AML model was trained on retail-only data with no UHNWI representation, that is an Article 10 gap.

Step 4: Replace non-compliant training data. For datasets that fail representativeness or data protection requirements, deploy born-synthetic alternatives. Standard formats (JSONL, CSV) mean integration takes days, not months. The 29-field KYC/AML schema includes PEP status, risk ratings, sanctions screening results, and adverse media flags — the exact fields your AML models need.

Step 5: Build the documentation package. Assemble the provenance, quality, representativeness, and compliance documentation for each high-risk system. This is what the national AI supervisory authority will request during an audit. Having it ready before enforcement begins is the difference between a warning and a fine.

Step 6: Establish ongoing governance. Article 10 is not a one-time checkbox. As models are retrained and data is refreshed, the governance obligations persist. Build the process now — data sourcing, quality gates, documentation, audit trail — so it scales.
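Steps 1 to 3 can be captured in a simple inventory structure. The sketch below is one possible shape, not a prescribed format: the system names, the risk classifications, and the check labels are hypothetical, and the high-risk flag reflects an institution's own assessment rather than a legal determination.

```python
from dataclasses import dataclass, field

# The three Article 10 requirements discussed in this article.
ARTICLE_10_CHECKS = (
    "representativeness",
    "geographic_contextual_fit",
    "documented_governance",
)

@dataclass
class AISystem:
    name: str
    purpose: str
    high_risk: bool            # outcome of your own Step 2 classification
    training_data_source: str  # outcome of your Step 1 inventory
    checks_passed: set = field(default_factory=set)

    def article_10_gaps(self):
        """Return the Article 10 checks still open for a high-risk system."""
        if not self.high_risk:
            return []
        return [c for c in ARTICLE_10_CHECKS if c not in self.checks_passed]

inventory = [
    AISystem("credit_scoring_v3", "creditworthiness assessment", True,
             "production copy", {"documented_governance"}),
    AISystem("aml_monitor_v2", "AML monitoring", True, "vendor sample"),
    AISystem("chat_router", "internal ticket routing", False, "public corpus"),
]

for system in inventory:
    print(system.name, "->", system.article_10_gaps())
```

Keeping the inventory in a structured form means Step 6 becomes a matter of re-running the same assessment whenever a model is retrained.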

What Is the Financial Argument for Acting Now?

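The maximum fine under the EU AI Act for non-compliance with high-risk system requirements is EUR 15 million or 3% of global annual turnover, whichever is higher (Article 99(4)). The headline figure of EUR 35 million or 7% applies to prohibited practices under Article 5. For context, the high-risk tier is in the same range as GDPR's 4% cap.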
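The "whichever is higher" rule is easy to misread, so here is a small worked sketch. The percentage and fixed amount are parameters because the Act sets different ceilings for different breaches; the two tiers shown are those in Article 99(3) and 99(4), and the turnover figures are hypothetical.

```python
def fine_ceiling_eur(annual_turnover_eur, percent, fixed_amount_eur):
    """Maximum fine: the higher of a turnover percentage and a fixed amount."""
    return max(annual_turnover_eur * percent // 100, fixed_amount_eur)

# (percent of worldwide annual turnover, fixed amount in EUR)
TIERS = {
    "prohibited_practices": (7, 35_000_000),   # Article 99(3)
    "high_risk_obligations": (3, 15_000_000),  # Article 99(4)
}

for turnover in (100_000_000, 2_000_000_000):  # hypothetical institutions
    for tier, (percent, fixed) in TIERS.items():
        ceiling = fine_ceiling_eur(turnover, percent, fixed)
        print(f"turnover EUR {turnover:,} | {tier}: up to EUR {ceiling:,}")
```

For a smaller institution the fixed amount is the binding ceiling. For a large one the percentage takes over. These are maximums, and the actual fine depends on the supervisory authority's assessment.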

A Compliance Enterprise dataset — 100,000 born-synthetic profiles with full KYC/AML metadata, Certificate of Sovereign Origin, and documented provenance — costs $24,999.

$24,999 for full compliance documentation versus a fine measured as a percentage of global turnover. I will let you do the math. The deadline is August 2026.

For background on why GDPR Article 25 specifically prohibits real data in test environments, see Why GDPR Article 25 Bans Real Data in Test Environments. For context on why generic test data fails EDD systems, see The Compliance Blind Spot.

Frequently Asked Questions

Which AI systems in financial services are classified as high-risk under the EU AI Act?

AI systems used for creditworthiness assessment and credit scoring of natural persons are explicitly high-risk (Annex III, point 5(b)). Annex III does not name AML monitoring or KYC screening, and point 5(b) excludes systems used to detect financial fraud, so the status of those systems depends on their intended purpose. Any AI system that influences decisions about individuals’ access to financial services warrants a high-risk assessment.

Does Article 10 apply to AI models already in production, or only new deployments?

Article 10 applies to high-risk AI systems placed on the market or put into service from August 2026. Systems already in use before that date come into scope once they undergo a significant change in design (Article 111(2)). Whether a routine training data refresh counts as a significant change is not spelled out, so the safe assumption is that refreshed data must comply. Institutions should treat any model retraining cycle as a potential compliance trigger.

Can we satisfy Article 10 representativeness with anonymized production data?

Technically possible, but increasingly risky. Truly anonymous data falls outside GDPR, but for UHNWI profiles anonymization often fails the re-identification test, and data that can be re-identified is still personal data. You satisfy one regulation while creating exposure under another. Born-synthetic data avoids this trade-off entirely by generating representative profiles with zero lineage to real individuals.

What documentation does an Article 10 audit actually require?

Expect requests for: data provenance records (where did the training data come from), quality assurance logs (how were errors detected and corrected), representativeness assessments (does the data cover the deployment context), and compliance certifications (how was data protection ensured). The Certificate of Sovereign Origin covers all four areas for born-synthetic datasets.

How does Article 10 interact with DORA for financial institutions?

The Digital Operational Resilience Act (DORA), fully applicable since January 2025, requires financial entities to test ICT systems with scenarios that reflect real-world operational risks. Combined with Article 10, this means your AI testing data must be both operationally representative and privacy-compliant. Born-synthetic data satisfies both requirements — realistic complexity without real personal data.

Download 100 free KYC-Enhanced profiles — 29 fields, Certificate of Origin included — and evaluate the data before August.