I have had this conversation dozens of times. “We anonymized the data, so we’re GDPR-compliant.” Every time, I ask the same question: can you prove no individual can be re-identified from what remains? The answer is always silence.

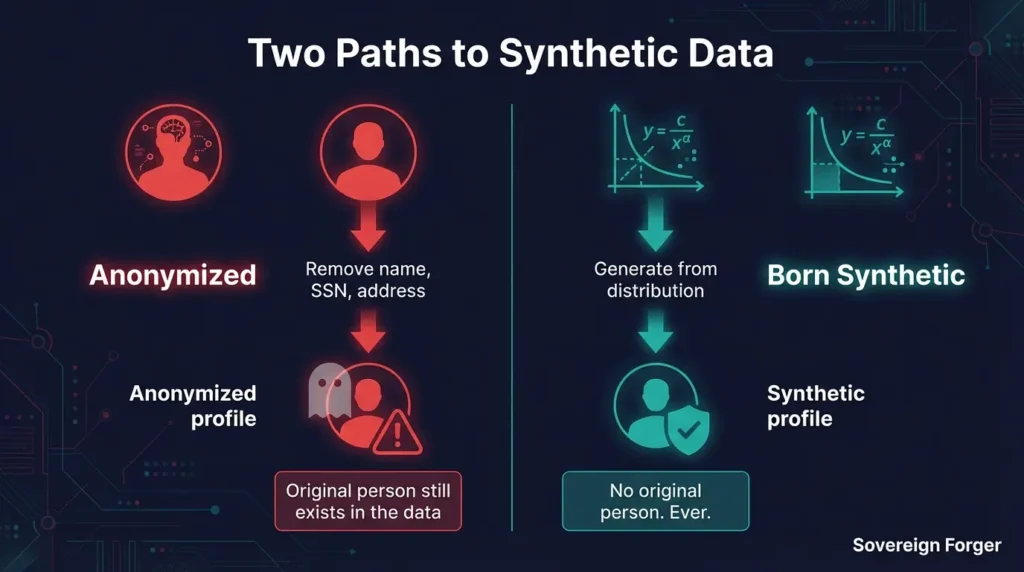

Anonymized data and born-synthetic data sound similar. Under GDPR, they are fundamentally different, and the distinction determines whether your dataset is a compliance asset or a compliance liability.

The Anonymization Illusion

Anonymization removes direct identifiers from a dataset — names, tax IDs, account numbers. The assumption is that without these identifiers, the remaining data cannot be linked back to the original person.

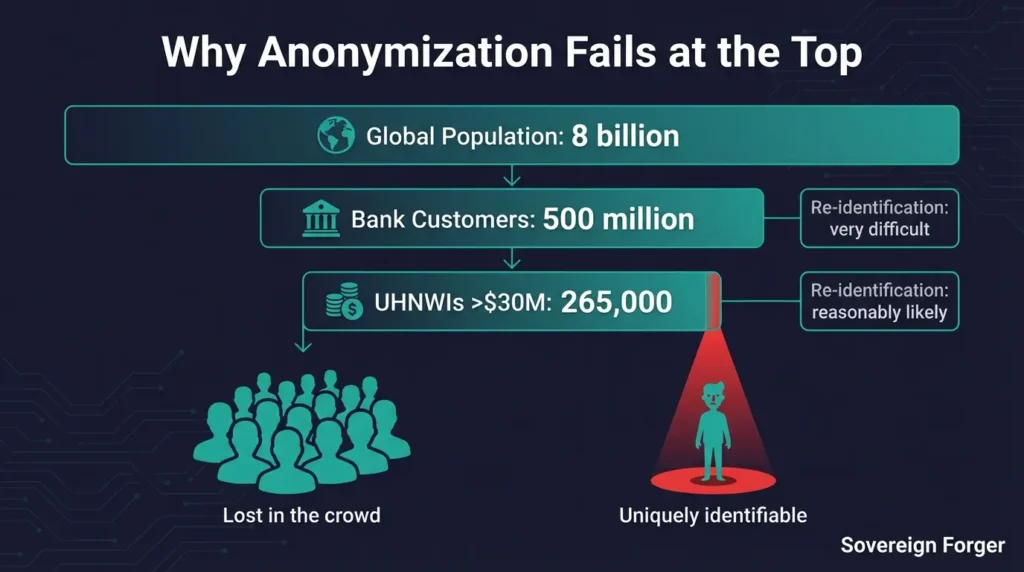

This assumption has been invalidated repeatedly. Research published over the past decade has demonstrated that combinations of quasi-identifiers — age, zip code, profession, transaction patterns — can re-identify individuals in datasets that were considered safely anonymized. The more unusual the individual, the easier the re-identification.
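To make the mechanism concrete, here is a minimal sketch of a uniqueness check on quasi-identifiers. The records, field names, and values are invented for illustration and are not drawn from any real dataset. The sketch counts how many rows share each combination of quasi-identifier values. Any row whose combination appears exactly once can be singled out by anyone who knows those attributes.

```python
from collections import Counter

# Hypothetical "anonymized" records: direct identifiers removed,
# quasi-identifiers retained. All values are invented for illustration.
records = [
    {"age_band": "40-49", "zip3": "904", "profession": "software engineer"},
    {"age_band": "40-49", "zip3": "904", "profession": "software engineer"},
    {"age_band": "40-49", "zip3": "904", "profession": "quantum physicist"},
    {"age_band": "50-59", "zip3": "100", "profession": "attorney"},
    {"age_band": "50-59", "zip3": "100", "profession": "attorney"},
]

QUASI_IDENTIFIERS = ("age_band", "zip3", "profession")


def equivalence_class_sizes(rows, keys):
    """Count how many rows share each combination of quasi-identifier values."""
    return Counter(tuple(row[k] for k in keys) for row in rows)


def unique_records(rows, keys):
    """Return rows whose quasi-identifier combination appears exactly once."""
    sizes = equivalence_class_sizes(rows, keys)
    return [row for row in rows if sizes[tuple(row[k] for k in keys)] == 1]


sizes = equivalence_class_sizes(records, QUASI_IDENTIFIERS)
k = min(sizes.values())  # the smallest group size: the dataset's k-anonymity level
exposed = unique_records(records, QUASI_IDENTIFIERS)
print(f"k = {k}; {len(exposed)} of {len(records)} records are unique")
```

In this toy example the two engineers and the two attorneys hide behind each other, while the quantum physicist stands alone. That is the pattern that makes unusual profiles the easiest to re-identify.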

UHNWI profiles are, by definition, unusual. A person with $118 million in net worth, a residence in Santa Monica, a background in quantum computing, and a Cayman Exempted Limited Partnership is not hiding in a crowd. Even without a name attached, the combination of wealth tier, profession, geography, and offshore structure may uniquely identify a small number of real individuals — or even a single one.

For a WealthTech or RegTech company building products for this market, using anonymized UHNWI data as training or testing input creates a re-identification risk that grows more serious every year as linkage algorithms improve.

For more on why generic approaches fail at this wealth tier, see Why Generic Synthetic Data Fails for Wealth Management AI.

What GDPR Actually Says

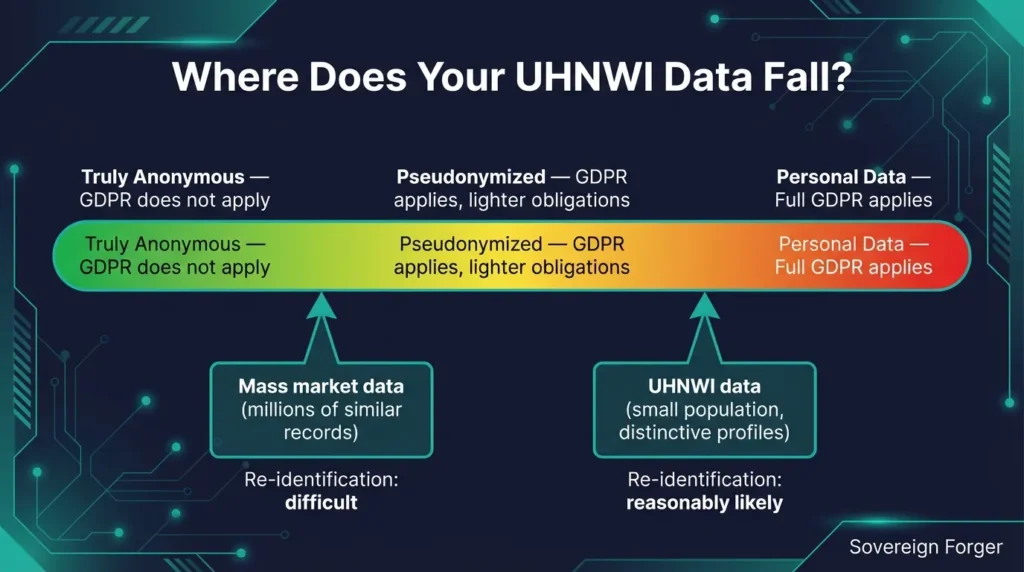

GDPR distinguishes between anonymized data and pseudonymized data, but the boundary between them depends on whether re-identification is “reasonably likely.” Recital 26 states that all means “reasonably likely to be used” for identification should be considered — including means available to third parties.

For mass-market data with millions of similar records, re-identification may be difficult enough to qualify as truly anonymous. For UHNWI data, the calculus is different. The population is small. The profiles are distinctive. The quasi-identifiers are rich. A regulator assessing whether your “anonymized” UHNWI dataset qualifies as anonymous under GDPR would have legitimate grounds to argue that re-identification is reasonably likely.

If your dataset is classified as pseudonymized rather than anonymous, GDPR still applies. You need a lawful basis for processing it. You need to respect data subject rights. And you need to defend your anonymization methodology to regulators if challenged.

The Born-Synthetic Alternative

Born-synthetic data sidesteps this entire risk surface. No real individual is used as input at any stage of the generation process. There is no original person to re-identify — not because their identity was removed, but because they never existed.

This is why I built the Sovereign Forger pipeline the way I did. The generation process starts from mathematical distributions — a Pareto distribution for wealth, constrained splits for asset allocation, geographic models for residence and jurisdiction. An AI model adds narrative richness — biography, profession, philanthropy — but it generates these from patterns, not from any individual’s real data.
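The numeric part of that idea can be sketched in a few lines. This is an illustration of the general technique under stated assumptions, not the Sovereign Forger pipeline itself. The wealth floor, Pareto shape, asset classes, and jurisdiction weights below are all hypothetical placeholders, and the narrative step is omitted. The point is that every value comes from a random number generator and a parameter, with no real record read at any stage.

```python
import random

# All parameters below are illustrative assumptions, not calibrated values.
WEALTH_FLOOR_USD = 30_000_000      # assumed lower bound of the wealth tier
PARETO_SHAPE = 1.5                 # assumed tail index for the wealth distribution
ASSET_CLASSES = ("public_equity", "private_equity", "real_estate", "cash")
JURISDICTION_WEIGHTS = {           # invented weights for a toy geographic model
    "Cayman Islands": 0.4,
    "Delaware": 0.4,
    "Luxembourg": 0.2,
}


def sample_profile(rng):
    """Draw one synthetic profile from distributions only (no real input data)."""
    # paretovariate returns values >= 1, so net worth never falls below the floor.
    net_worth = WEALTH_FLOOR_USD * rng.paretovariate(PARETO_SHAPE)

    # Constrained split: normalized gamma draws form a Dirichlet sample,
    # i.e. non-negative shares that sum to 1, so the allocation adds up
    # to the net worth by construction.
    draws = [rng.gammavariate(2.0, 1.0) for _ in ASSET_CLASSES]
    total = sum(draws)
    allocation = {
        name: net_worth * draw / total
        for name, draw in zip(ASSET_CLASSES, draws)
    }

    jurisdiction = rng.choices(
        list(JURISDICTION_WEIGHTS),
        weights=list(JURISDICTION_WEIGHTS.values()),
    )[0]

    return {
        "net_worth_usd": net_worth,
        "allocation_usd": allocation,
        "jurisdiction": jurisdiction,
    }


rng = random.Random(42)  # fixed seed so the run is reproducible
profiles = [sample_profile(rng) for _ in range(5)]
for profile in profiles:
    print(f"{profile['net_worth_usd']:>16,.0f} USD  {profile['jurisdiction']}")
```

Because the allocation is derived from the net worth rather than sampled independently, the balance sheet is internally consistent without any post-hoc correction.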

The result is a dataset where GDPR does not apply, because there is no personal data — original, anonymized, or pseudonymized — anywhere in the pipeline. The data is synthetic from the first byte.

This is not a theoretical distinction. It is a practical one that determines whether your legal team needs to maintain a Data Protection Impact Assessment for your test data, whether data subject access requests could theoretically apply, and whether a breach of your test environment triggers notification obligations.

Why This Matters More at the UHNWI Tier

The re-identification risk of anonymized data falls as the source population gets larger and more homogeneous. For retail banking customers, the population is large and the profiles are similar, so re-identification is comparatively difficult.

For UHNWIs, the population is small (roughly 265,000 individuals globally above $30 million, according to published wealth reports) and the profiles are highly distinctive. Each combination of wealth tier, jurisdiction, offshore structure, and professional background narrows the candidate pool dramatically.
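A back-of-the-envelope calculation shows how fast that narrowing happens. The starting population is the figure quoted above. The share matching each attribute is a hypothetical number chosen only for illustration, and the attributes are treated as independent, which is a simplification since real attributes are correlated.

```python
POPULATION = 265_000  # global UHNWI count quoted in the text

# Hypothetical share of the population matching each attribute value.
# These fractions are invented for illustration, not measured.
FILTERS = [
    ("wealth band", 0.05),
    ("city of residence", 0.02),
    ("offshore structure type", 0.10),
    ("professional background", 0.01),
]


def narrow(population, filters):
    """Return (attribute, expected candidates remaining) after each filter.

    Assumes the attributes are statistically independent.
    """
    remaining = float(population)
    steps = []
    for name, share in filters:
        remaining *= share
        steps.append((name, remaining))
    return steps


steps = narrow(POPULATION, FILTERS)
for name, remaining in steps:
    print(f"after {name:<26} ~{remaining:,.2f} expected candidates")
```

Under these assumed shares, four attributes are enough to push the expected number of matching people below one. At that point any record that does match is very likely to describe a single real person.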

A company that uses anonymized UHNWI data is making a bet that no future re-identification technique will be able to link its anonymous-looking records back to real individuals. Given the pace of advancement in record linkage and inference attacks, this is a bet with a declining expected value.

Born-synthetic data removes the bet entirely.

Verify the Approach

Download 100 free Silicon Valley UHNWI profiles. No real individual was used as input. No anonymization was applied — because there was nothing to anonymize. I publish the full methodology so you can verify every step before spending a dollar.

Once you download the sample, you can verify the mathematical integrity using The Balance Sheet Test — or run our open-source audit tool on GitHub.

Related: GDPR Article 25 and Test Data: What Your Test Environment Is Missing

Related: Five Re-Identification Attacks That Break Anonymized Financial Data

Frequently Asked Questions

What is the difference between born-synthetic and anonymized data?

Anonymized data starts from real individuals and attempts to remove identifying information through masking, generalization, or perturbation. Born-synthetic data is generated from mathematical distributions and domain knowledge — no real person’s data is ever involved. The critical difference: anonymized data always carries residual re-identification risk because it has lineage to real people. Born-synthetic data has zero lineage by construction.

Is anonymized data considered personal data under GDPR?

Truly anonymized data falls outside GDPR scope (Recital 26). However, the threshold for anonymization is extremely high: the data must be stripped of identifiers irreversibly, so that re-identification is not possible by any means reasonably likely to be used. Rocher et al. estimated that 99.98% of Americans could be correctly re-identified in any dataset using just 15 demographic attributes. Much of the data described as anonymized is in practice only pseudonymized, and pseudonymized data remains personal data under GDPR.

Why is born-synthetic data GDPR-compliant by construction?

Born-synthetic data never processes, stores, or transforms any individual’s personal data. Since GDPR applies to the processing of personal data, and born-synthetic generation involves zero personal data at any stage, GDPR obligations (consent, right to erasure, data minimization) simply do not apply. This follows the principle of data protection by design (GDPR Article 25) rather than compliance by post-processing.

Can anonymized data be re-identified?

Yes. Multiple peer-reviewed studies demonstrate successful re-identification attacks on anonymized datasets. Sweeney estimated that 87% of the US population can be uniquely identified by 5-digit ZIP code, date of birth, and gender alone. Rocher et al. estimated that 99.98% of Americans could be correctly re-identified in any dataset using 15 demographic attributes. For UHNWI profiles with distinctive quasi-identifiers, the risk is even higher.

Does using born-synthetic data eliminate the need for a Data Protection Impact Assessment?

If the born-synthetic data has no lineage to real individuals, a DPIA for the data itself is not required since no personal data is being processed. However, if the synthetic data is used alongside real data, or if the generation process involves real data as input, a DPIA may still be necessary for that processing activity. Sovereign Forger data requires no input data, so no DPIA is needed for the dataset itself.