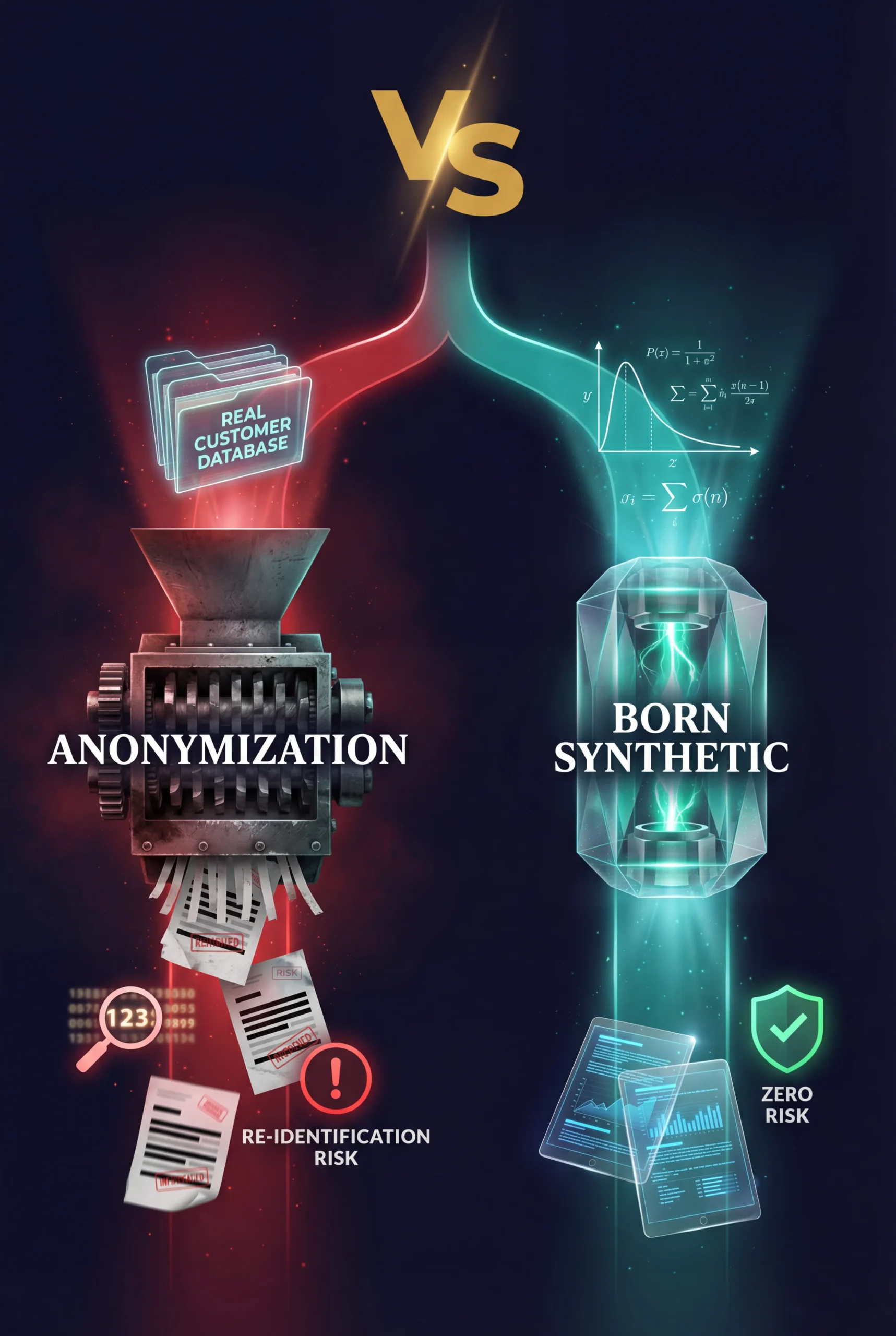

Born Synthetic vs Data Anonymization — Why Starting From Zero Beats Starting From Real

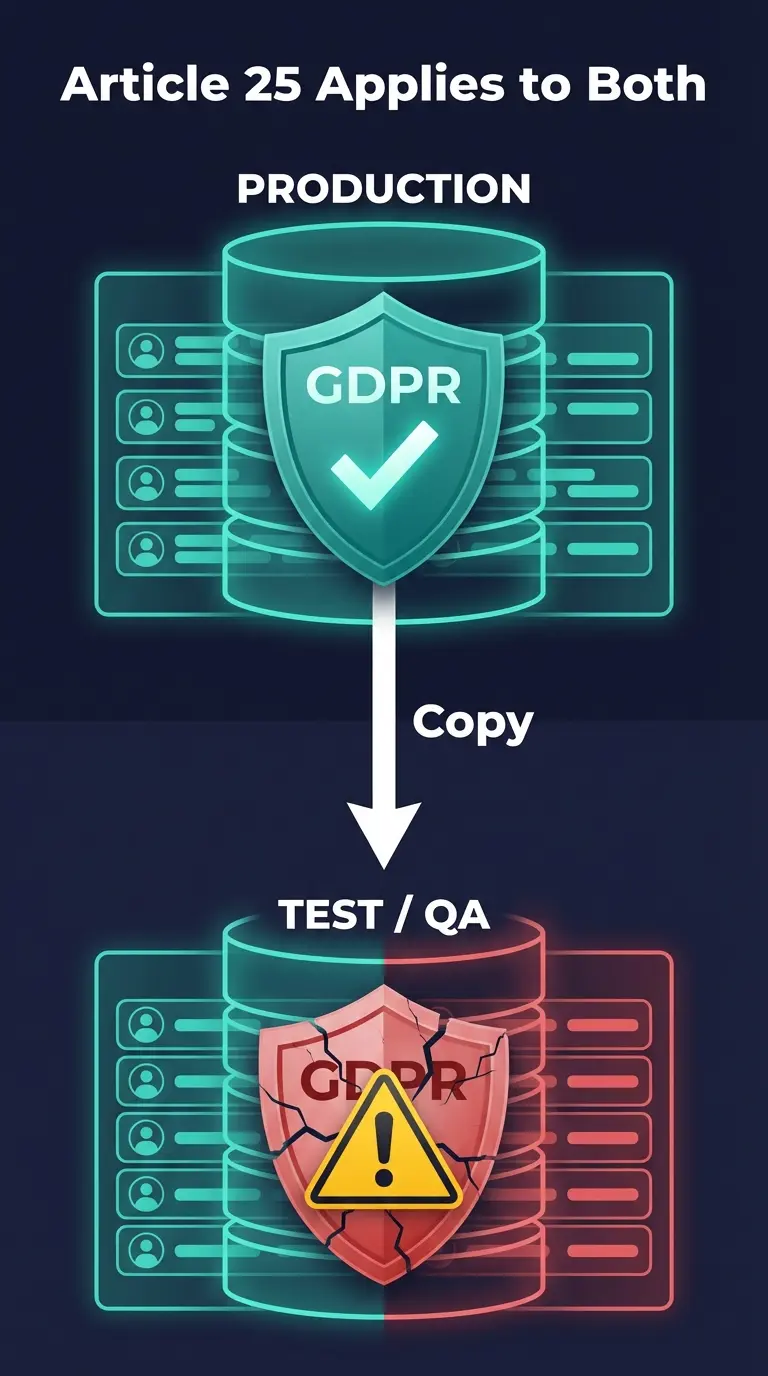

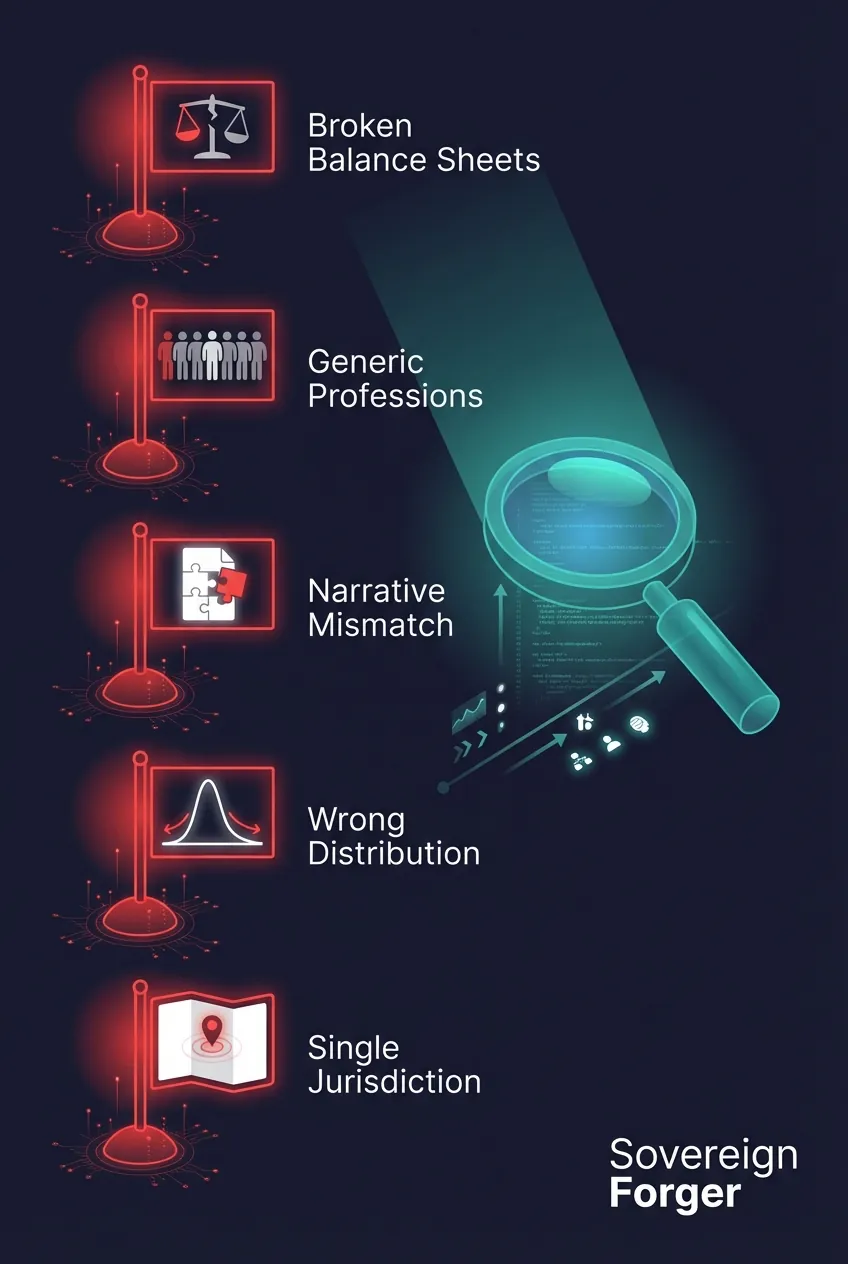

I have had this conversation dozens of times. A compliance officer tells me, “We anonymize our data, so we’re covered.” Every time, I ask the same question: if your anonymization fails, what happens? The answer is always silence, because they know. A single re-identification event doesn’t just trigger a GDPR fine; it destroys the […]

Born Synthetic vs Data Anonymization — Why Starting From Zero Beats Starting From Real Read Post »