I have had this conversation dozens of times. A compliance officer tells me: “We anonymize our data, so we’re covered.” Every time, I ask the same question: if your anonymization fails, what happens? The answer is always silence. Because they know. A single re-identification event doesn’t just create a GDPR fine — it destroys the entire premise their testing infrastructure is built on.

This is not a theoretical debate. It is a structural choice that determines whether your data strategy has a hidden liability or not.

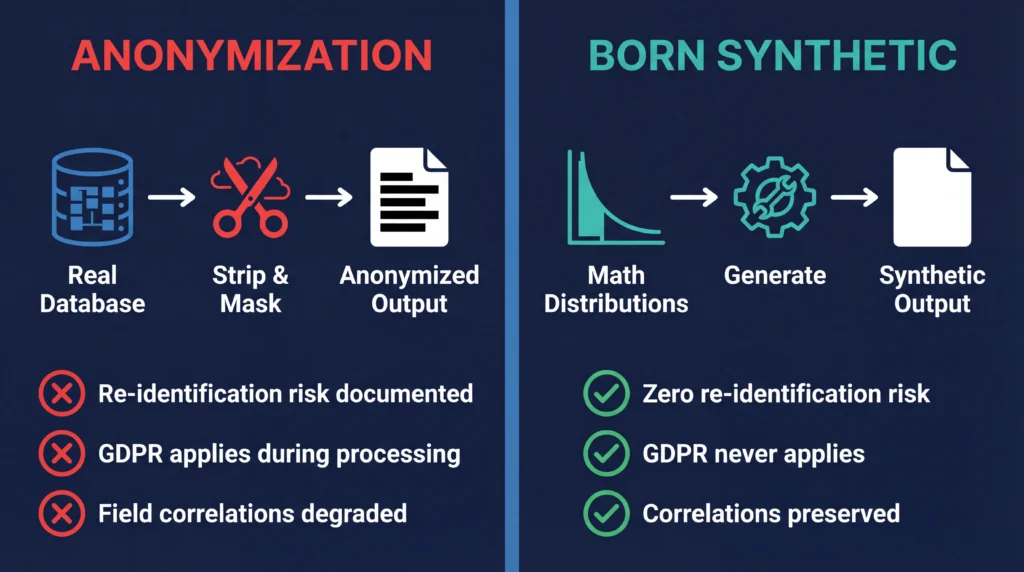

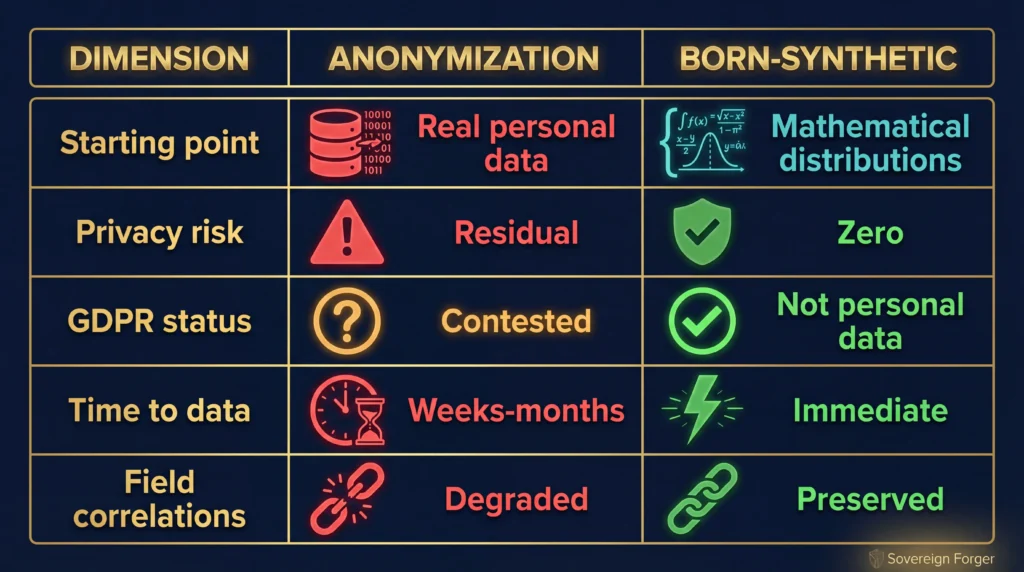

Key Takeaway: Data anonymization starts from real records and strips them down — carrying residual re-identification risk, GDPR processing obligations during transformation, and degraded field correlations. Born-Synthetic data starts from mathematical distributions and builds up — with zero privacy risk, zero GDPR obligations, and preserved statistical properties. The choice is architectural, not incremental.

What Anonymization Actually Does

Data anonymization starts with real personal data and applies transformations to reduce identification risk. The standard techniques are well known: masking replaces identifying values, generalization reduces precision, suppression removes high-risk fields or records, perturbation adds noise, and k-anonymity ensures that each record is indistinguishable from at least k-1 others on its quasi-identifiers (attributes such as age or postcode that can be linked to outside data).
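The techniques above can be sketched in a few lines of Python. This is a minimal illustration on hypothetical toy records: the names, field names, generalization widths, and noise scale are all assumptions. Suppression here drops whole records whose quasi-identifier combination is shared by fewer than k records, which is one common way of enforcing k-anonymity.

```python
import random
from collections import Counter

# Hypothetical toy records standing in for real customer data.
RECORDS = [
    {"name": "Anna Meyer", "age": 47, "postcode": "80331", "balance": 125_400.0},
    {"name": "Jonas Weber", "age": 42, "postcode": "80469", "balance": 98_150.0},
    {"name": "Lena Fischer", "age": 35, "postcode": "10115", "balance": 12_900.0},
    {"name": "Paul Wagner", "age": 31, "postcode": "10178", "balance": 15_300.0},
    {"name": "Marta Becker", "age": 68, "postcode": "20095", "balance": 2_450_000.0},
]
QUASI_IDENTIFIERS = ("age", "postcode")


def mask(_value: str) -> str:
    """Masking: replace a direct identifier with a constant token."""
    return "***"


def generalize_age(age: int, width: int = 10) -> str:
    """Generalization: replace an exact age with a band such as '40-49'."""
    low = (age // width) * width
    return f"{low}-{low + width - 1}"


def generalize_postcode(postcode: str, keep: int = 2) -> str:
    """Generalization: keep only the leading digits of a postcode."""
    return postcode[:keep] + "*" * (len(postcode) - keep)


def perturb(value: float, rng: random.Random, scale: float) -> float:
    """Perturbation: add zero-mean Gaussian noise to a numeric value."""
    return value + rng.gauss(0.0, scale)


def anonymize(record: dict, rng: random.Random) -> dict:
    return {
        "name": mask(record["name"]),
        "age": generalize_age(record["age"]),
        "postcode": generalize_postcode(record["postcode"]),
        "balance": round(perturb(record["balance"], rng, scale=5_000.0), 2),
    }


def class_sizes(records, qis=QUASI_IDENTIFIERS) -> Counter:
    """Count records per equivalence class (identical quasi-identifier values)."""
    return Counter(tuple(r[q] for q in qis) for r in records)


def k_of(records, qis=QUASI_IDENTIFIERS) -> int:
    """A dataset is k-anonymous for k = the size of its smallest equivalence class."""
    return min(class_sizes(records, qis).values())


def suppress_below_k(records, k: int, qis=QUASI_IDENTIFIERS) -> list:
    """Suppression: drop records whose equivalence class has fewer than k members."""
    sizes = class_sizes(records, qis)
    return [r for r in records if sizes[tuple(r[q] for q in qis)] >= k]


rng = random.Random(42)
released = [anonymize(r, rng) for r in RECORDS]
print(k_of(released))  # 1: the 68-year-old is alone in her class
released = suppress_below_k(released, k=2)
print(len(released), k_of(released))  # 4 2
```

The record that suppression removes is the high-wealth outlier, the same kind of edge case a test suite most needs.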

The goal is to make the data “anonymous enough” that it no longer qualifies as personal data under GDPR. The problem is that “anonymous enough” is a moving target — and the target keeps moving in the wrong direction.

The Re-Identification Problem

Anonymized data carries a fundamental vulnerability: the original data still exists beneath the transformations. Given sufficient auxiliary information, anonymized records can be re-linked to real individuals.

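This is not theoretical. Research teams have demonstrated successful re-identification attacks on anonymized financial data using outside information: a 2015 study in Science of three months of credit card records for 1.1 million people found that four spatiotemporal points were enough to uniquely re-identify 90% of individuals. A “Very High” risk client with a specific jurisdiction, industry sector, and wealth range may be just as identifiable after their name and account number are removed, because few other records, if any, share that exact combination.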
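A minimal sketch of why the “Very High” example is dangerous, on a hypothetical de-identified extract (all field names, values, and the attacker's knowledge are assumptions). A record is exposed when its quasi-identifier combination is unique in the release, and the linkage attack itself is nothing more than a filter.

```python
from collections import Counter

# Hypothetical de-identified KYC extract: names and account numbers already removed.
RELEASED = [
    {"risk": "Low", "jurisdiction": "DE", "sector": "Retail", "wealth_band": "100k-1M"},
    {"risk": "Low", "jurisdiction": "DE", "sector": "Retail", "wealth_band": "100k-1M"},
    {"risk": "Medium", "jurisdiction": "FR", "sector": "Manufacturing", "wealth_band": "1M-10M"},
    {"risk": "Medium", "jurisdiction": "FR", "sector": "Manufacturing", "wealth_band": "1M-10M"},
    {"risk": "Very High", "jurisdiction": "MT", "sector": "Shipping", "wealth_band": ">100M"},
]
QIS = ("risk", "jurisdiction", "sector", "wealth_band")

# Hypothetical auxiliary knowledge an attacker might assemble from public sources.
AUXILIARY = {"name": "Known Shipowner", "jurisdiction": "MT", "sector": "Shipping", "wealth_band": ">100M"}


def unique_records(records, qis):
    """Records whose quasi-identifier combination occurs exactly once."""
    counts = Counter(tuple(r[q] for q in qis) for r in records)
    return [r for r in records if counts[tuple(r[q] for q in qis)] == 1]


def link(records, aux, keys):
    """Linkage attack: released records consistent with the attacker's auxiliary facts."""
    return [r for r in records if all(r[k] == aux[k] for k in keys)]


print(len(unique_records(RELEASED, QIS)))  # 1
matches = link(RELEASED, AUXILIARY, ("jurisdiction", "sector", "wealth_band"))
print(len(matches), matches[0]["risk"])  # 1 Very High
```

A single match means the attacker has both re-identified the person and learned an attribute that was never public, here the risk rating.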

European regulators have acknowledged this risk explicitly. The Article 29 Working Party, the EDPB's predecessor, stated in its Opinion 05/2014 on Anonymisation Techniques that anonymizing personal data is itself a further processing of that data. Under GDPR, that means the data remains regulated personal data until anonymization is complete. And if the anonymization can be reversed, it was never truly complete.

The privacy-utility tradeoff

This is the structural problem that no anonymization technique has solved:

- Heavy generalization removes the precision needed for model training

- Suppression of high-risk fields eliminates exactly the edge cases you need to test

- Perturbation degrades correlations between fields

- K-anonymity produces uniform clusters instead of realistic distributions

You get privacy OR utility — not both. The harder you anonymize, the less useful the data becomes. The more useful you keep it, the higher the re-identification risk.

What Born-Synthetic Data Does Differently

Born-Synthetic data does not start from real data at all. There is no “original” to strip, mask, or transform. Every record is generated from mathematical distributions and cultural models.

The architecture separates into distinct stages: statistical distributions define wealth levels, demographic patterns, and financial behavior. Algebraic constraints enforce internal consistency across all financial fields — every profile is a coherent entity, not a random assembly of values. Cultural models add contextual realism — names match nationalities, wealth structures match geographic norms.

The result is data that behaves like real financial data — because the mathematics underlying real wealth distributions is the same mathematics used to generate it. But no real person’s data was ever input, processed, or referenced.
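The three stages can be sketched in Python. This is not the Born-Synthetic generator itself, only a minimal illustration of the idea under stated assumptions: the Pareto scale and shape, the liability and liquidity ranges, and the name lists are all hypothetical. The key property is that `generate_profile` takes only a random number generator, so no input record is ever read.

```python
import math
import random
from dataclasses import dataclass

# Hypothetical cultural model: nationality -> (given names, family names).
NAME_MODEL = {
    "DE": (["Anna", "Jonas", "Lena"], ["Müller", "Schmidt", "Weber"]),
    "ES": (["Lucía", "Pablo", "Marta"], ["García", "López", "Martínez"]),
    "IT": (["Giulia", "Marco", "Sara"], ["Rossi", "Russo", "Bianchi"]),
}
MIN_ASSETS = 50_000.0  # assumed Pareto scale x_m
PARETO_ALPHA = 1.5     # assumed Pareto shape; lower values give a heavier tail


@dataclass
class Profile:
    name: str
    nationality: str
    total_assets: float
    liabilities: float
    liquid_assets: float
    net_worth: float


def generate_profile(rng: random.Random) -> Profile:
    """Build one profile from distributions alone; no input record is read."""
    # Stage 1: statistical distributions.
    nationality = rng.choice(sorted(NAME_MODEL))
    total_assets = MIN_ASSETS * rng.paretovariate(PARETO_ALPHA)
    liability_ratio = rng.uniform(0.0, 0.6)  # assumed range
    liquid_ratio = rng.uniform(0.05, 0.4)    # assumed range
    # Stage 2: algebraic constraints tie the financial fields together.
    liabilities = total_assets * liability_ratio
    liquid_assets = total_assets * liquid_ratio
    net_worth = total_assets - liabilities
    # Stage 3: the cultural model makes the name consistent with the nationality.
    given, family = NAME_MODEL[nationality]
    name = f"{rng.choice(given)} {rng.choice(family)}"
    return Profile(name, nationality, total_assets, liabilities, liquid_assets, net_worth)


def satisfies_constraints(p: Profile) -> bool:
    """Check the internal-consistency rules every profile must obey."""
    return (
        math.isclose(p.net_worth, p.total_assets - p.liabilities)
        and 0.0 <= p.liabilities <= p.total_assets
        and 0.0 <= p.liquid_assets <= p.total_assets
        and p.name.split()[-1] in NAME_MODEL[p.nationality][1]
    )


rng = random.Random(2026)
for profile in (generate_profile(rng) for _ in range(3)):
    print(profile.name, profile.nationality, round(profile.net_worth), satisfies_constraints(profile))
```

Deriving net worth from assets and liabilities, rather than sampling it independently, is what keeps each profile a coherent entity instead of a random assembly of values.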

What the Regulators Say

GDPR and anonymization

The GDPR does not define a specific anonymization standard. Recital 26 states that data is anonymous when it “does not relate to an identified or identifiable natural person,” and that identifiability is judged against “all the means reasonably likely to be used.” But that threshold is interpreted differently by different data protection authorities.

The Article 29 Working Party (now EDPB) has warned that anonymization is difficult to achieve in practice, especially for rich datasets with many attributes. Financial data — with its combination of numerical precision, geographic specificity, and behavioral patterns — is particularly vulnerable.

Born-Synthetic data sidesteps this debate entirely. There is no natural person to identify or re-identify. The data was never personal data at any point in its lifecycle.

EU AI Act Article 10

The EU AI Act requires documented data governance for AI training data, especially for high-risk AI systems in financial services. For anonymized data, compliance means documenting the anonymization process, demonstrating its effectiveness, and maintaining records of the original data processing. For Born-Synthetic data, compliance means documenting the generation methodology and statistical properties — a fundamentally simpler obligation.

Obligations for high-risk systems, including Article 10, apply from 2 August 2026. Fines for breaching them reach €15M or 3% of worldwide annual turnover, whichever is higher.

DORA resilience testing

The Digital Operational Resilience Act requires financial entities to maintain a digital operational resilience testing programme, and those tests need data that behaves like production data without exposing real customers. Anonymized production data meets the realism need but carries the re-identification risk described above. Born-Synthetic data meets both needs by design.

See DORA Requires Synthetic Data for Resilience Testing for the full analysis.

When Anonymization Still Makes Sense

I am not arguing that anonymization is obsolete. It remains the right choice when you need to preserve specific real-world patterns that cannot be modeled mathematically, when your analysis requires exact replication of production data distributions, or when regulatory requirements mandate that test data be derived from production data.

For financial compliance testing, AI model training, vendor evaluation, and analytics — where you need data that behaves like real data, not data that was real data — Born-Synthetic is the more defensible choice.

The Bottom Line

Anonymization asks: “How much can we strip from real data while keeping it useful?”

Born-Synthetic asks: “How much realism can we build from mathematics alone?”

The first question has a structural tension — privacy and utility pull in opposite directions. The second question has no such tension — the data is private by construction and useful by design.

For financial institutions facing GDPR obligations, EU AI Act requirements, and DORA resilience testing mandates, the question is not whether synthetic data is needed. The question is whether your synthetic data still carries lineage to real people.

Born-Synthetic data carries none.

Take the GDPR Risk Assessment to see where your current data practices create exposure — the scoring covers anonymization risk as well.

Download 100 free KYC-Enhanced profiles and see what zero-lineage data looks like.

FAQ

Q: What exactly does “born synthetic” mean?

A: Born-Synthetic data is generated entirely from mathematical distributions and cultural models — no real customer data is used as input at any stage. Unlike anonymized or GAN-based synthetic data, there is no “original” dataset. The data is synthetic from birth, which means zero lineage to real individuals and zero GDPR processing obligations.

Q: Is anonymized data still considered personal data under GDPR?

A: It depends on how effective the anonymization is. Under Recital 26 of the GDPR, if a person can still be identified using “means reasonably likely to be used,” the data remains personal data. For rich financial datasets with many attributes, achieving true anonymization is extremely difficult. Born-Synthetic data avoids this question entirely: it was never personal data.

Q: Can born-synthetic data replace anonymized data for all use cases?

A: Not all. When you need to preserve specific real-world patterns that cannot be modeled mathematically — such as rare disease correlations in clinical data — anonymization may still be necessary. For financial compliance testing, AI training, and analytics, Born-Synthetic data provides equivalent or superior utility with zero privacy risk.

Q: How does born-synthetic data maintain statistical validity without real data as input?

A: The same mathematical distributions that describe real-world phenomena are used to generate the data. The upper tail of the wealth distribution follows a Pareto (power-law) distribution. Demographics follow geographic patterns. Financial behavior follows sector-specific norms. The mathematics is the same; only the starting point differs.
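To make the Pareto point concrete: a Pareto (Type I) model with minimum x_m and shape α has the CDF below, and inverting it turns a uniform random number u into a wealth value (inverse-transform sampling). The values of x_m and α are illustrative assumptions, not published parameters of any generator.

```latex
F(x) = 1 - \left(\frac{x_m}{x}\right)^{\alpha}, \qquad x \ge x_m,\; \alpha > 0

u = F(x) \;\Longrightarrow\; \left(\frac{x_m}{x}\right)^{\alpha} = 1 - u
\;\Longrightarrow\; x = x_m \,(1 - u)^{-1/\alpha}, \qquad u \sim \mathrm{Uniform}(0, 1)

P(X > 10\,x_m) = \left(\frac{x_m}{10\,x_m}\right)^{\alpha} = 10^{-\alpha} \approx 0.032 \quad \text{for } \alpha = 1.5
```

With α = 1.5, about 3% of generated profiles land above ten times the minimum; a lower α fattens the tail.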

Q: What is the Certificate of Sovereign Origin?

A: A provenance document that ships with every Born-Synthetic dataset, certifying the generation methodology, the integrity audit results, and the complete chain of evidence confirming no real data was used. It is designed to satisfy regulatory documentation requirements under GDPR, EU AI Act, and DORA.