Credit Suisse: billions in cumulative fines. UBS: $5.1B in France alone. Julius Baer: $79.7M to the DoJ. Behind every one of these enforcement actions is a risk scoring system that either missed genuine threats buried inside complex structures — or flagged every complex structure as a threat. Both failures start in the same place: training data that never contained legitimate structural complexity.

Your Risk Model Cannot Distinguish Complexity From Risk

I spent years watching risk scoring systems at wealth management platforms do something predictable and catastrophic: assign maximum risk to every structurally complex client.

A family office with holdings across four jurisdictions, a Cayman LP, and a Luxembourg SOPARFI? Flagged as high risk. A third-generation Swiss industrialist with a BVI trust, a Liechtenstein foundation, and a dual tax domicile? Flagged as high risk. A retired semiconductor executive in Singapore with equity in a Delaware LLC, a family charitable trust, and property in three countries? High risk.

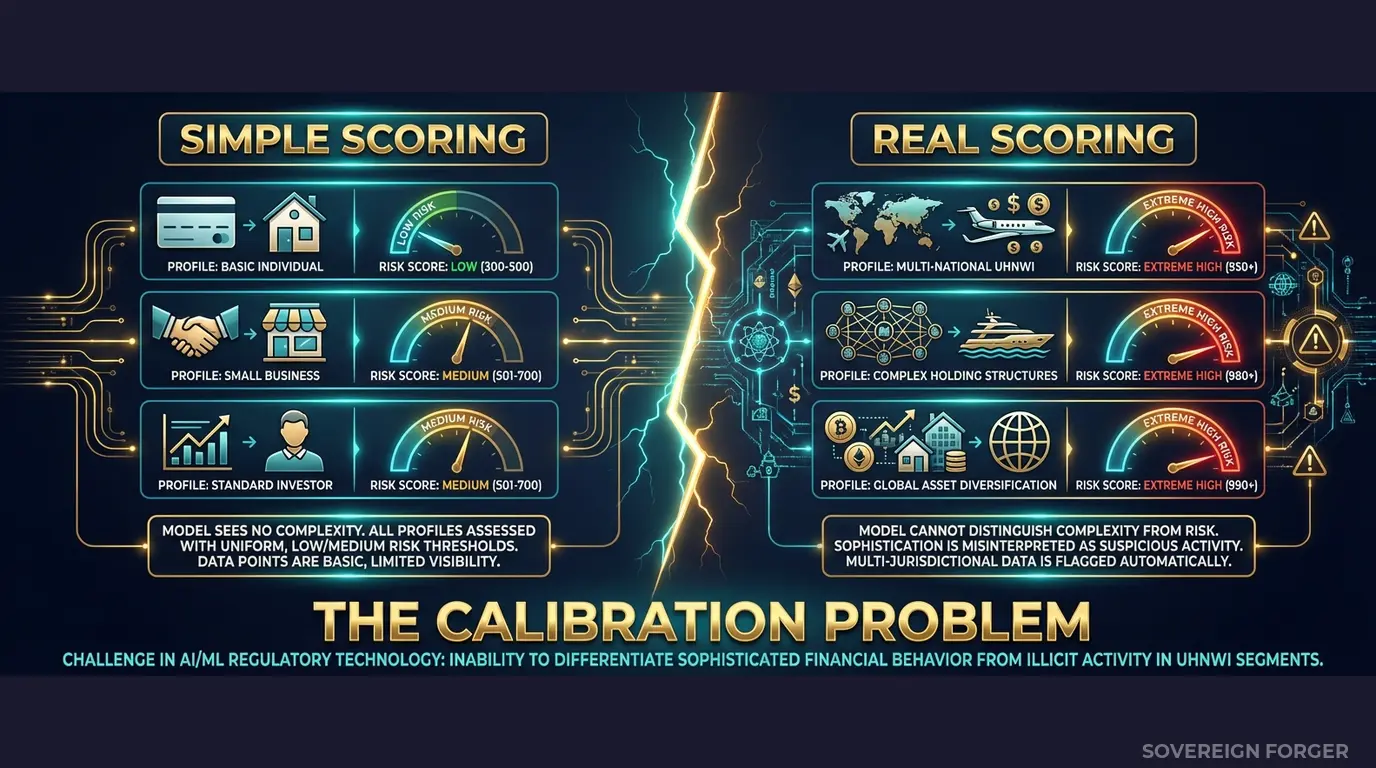

Every one of these is a perfectly legitimate wealth structure. The kind of structure that any competent private banker would recognise in five seconds. But the risk scoring model has never seen a legitimate complex profile in its training data — so it has no frame of reference. It learned that structural complexity equals danger, because every example of multi-jurisdictional, multi-entity holdings in its training set was either absent entirely or labeled as suspicious.

This is the core failure mode for WealthTech risk scoring: when the training data contains only simple profiles, the model learns that simplicity is normal and everything else is a threat. The result is a system that either drowns the compliance team in false positives — I have seen queues of 800+ alerts per day at platforms serving fewer than 5,000 UHNWI clients — or gets tuned down so aggressively that genuine risks pass through unscored.

The second outcome is how you end up in a regulator’s crosshairs. FINMA, the FCA, and the SEC are not asking whether your risk model flags suspicious activity. They are asking whether your risk model was calibrated against data that reflects the actual complexity of your client base. If the answer is no — and for most WealthTech platforms, the honest answer is no — then your risk framework is indefensible.

Here is what I have seen happen in practice. A WealthTech platform launches with a risk model trained on 10,000 synthetic profiles. The profiles have one jurisdiction, one asset class, one entity per person. The model scores beautifully in QA. Then the platform onboards its first 200 UHNWI clients from a private bank migration. 186 of them are flagged as high risk. The compliance team spends three weeks manually clearing legitimate clients. Two genuine risks — one with undisclosed PEP connections, one with a sanctions-adjacent counterparty — are buried in the queue and cleared along with the noise.

The platform does not get fined for the false positives. It gets fined for the two it missed. Because the regulator does not care how many alerts your system generates — the regulator cares whether your system identifies actual risk. And a system that treats every complex client as equally suspicious is a system that identifies nothing.

The math behind this failure is straightforward. If your training data contains zero profiles with offshore vehicles, zero profiles with multiple jurisdictions, zero profiles with PEP-adjacent connections — then your model has exactly zero examples of what a legitimate complex profile looks like. It cannot learn to separate complexity from risk because it has never seen complexity without risk. Every complex feature it encounters in production maps to an empty region of its training space, and the model defaults to the only behavior it knows: flag everything.
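One rough way to see the empty-region problem is to list which feature values appear in production but never in training. The sketch below assumes profiles are plain dictionaries keyed by the `offshore_vehicle` and `pep_status` field names; the helper `unseen_feature_values` and the sample records are hypothetical and exist only for illustration.

```python
from collections import defaultdict

def unseen_feature_values(training, production, features):
    """Return, per feature, the production values that never occur in training."""
    seen = defaultdict(set)
    for profile in training:
        for feature in features:
            seen[feature].add(profile.get(feature))
    gaps = {}
    for feature in features:
        missing = {p.get(feature) for p in production} - seen[feature]
        if missing:
            gaps[feature] = missing
    return gaps

# Illustrative records only; the values are made up for this sketch.
training = [
    {"offshore_vehicle": None, "pep_status": "none"},
    {"offshore_vehicle": None, "pep_status": "none"},
]
production = [
    {"offshore_vehicle": "BVI trust", "pep_status": "none"},
    {"offshore_vehicle": "Cayman LP", "pep_status": "domestic"},
]

gaps = unseen_feature_values(training, production, ["offshore_vehicle", "pep_status"])
print(gaps)
```

Every value reported here is one the model has no training examples for, so any score it assigns to those profiles is extrapolation rather than learned behavior.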

This is not an edge case. It is the default state of every WealthTech risk model trained on structurally flat synthetic data. And it is the direct cause of the two-sided failure that regulators punish most severely: too many false positives (wasting compliance resources, degrading client experience) masking too few true positives (missing actual threats).

Three Approaches That Don’t Work for Risk Model Calibration

WealthTech platforms need risk scoring data that contains the full spectrum of UHNWI structural complexity — legitimate and suspicious — so the model can learn to tell the difference. Every conventional data source fails this requirement for a specific, predictable reason.

Using copies of production client data. I have seen platforms extract real client records into model training environments. The logic sounds reasonable: real clients represent real complexity, so the model learns from actual patterns. The problem is twofold. First, GDPR Article 25 requires data protection by design, and copying personal data into training environments with broader access and weaker controls is hard to square with that obligation. It is exactly the kind of practice that data protection authorities and financial supervisors scrutinise. Second, real UHNWI datasets are small. A wealth management platform might have 2,000 high-net-worth clients. You cannot train a risk model on 2,000 records and expect it to generalise. The model overfits to the specific clients in your book, and the first new client with a slightly different structure triggers the same blind-spot failure.

Using anonymized client data. Stripping names and tax IDs from real UHNWI profiles is not anonymization when the population is 265,000 people globally. The combination of net worth range, offshore jurisdiction, profession, and city of residence can uniquely identify individuals even without direct identifiers. A Zurich-based former semiconductor executive with $800M net worth, a BVI trust, and a Delaware LLC is not anonymous: that combination likely identifies one or two people on the planet. A regulator reviewing your risk model’s training data can make this argument, and courts have agreed. In its 2016 Breyer ruling, the Court of Justice of the EU held that data remains personal data when the party holding it has means reasonably likely to be used to identify the individual. For UHNWI profiles, re-identification is not merely likely. It is often trivial.
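The re-identification argument can be checked on any "anonymized" extract by counting how many records share each quasi-identifier combination. This is a minimal sketch, assuming records are dictionaries with `net_worth_usd`, `offshore_jurisdiction`, `profession`, and `residence_city` keys. The band edges and the sample records are illustrative assumptions, not real data.

```python
from collections import Counter

def net_worth_band(net_worth_usd):
    """Bucket net worth into coarse bands (band edges are illustrative)."""
    if net_worth_usd < 100_000_000:
        return "<100M"
    if net_worth_usd < 500_000_000:
        return "100M-500M"
    return ">=500M"

def quasi_key(record):
    """Combine the quasi-identifiers named in the text into one tuple."""
    return (
        net_worth_band(record["net_worth_usd"]),
        record["offshore_jurisdiction"],
        record["profession"],
        record["residence_city"],
    )

def unique_combinations(records):
    """Return quasi-identifier combinations shared by exactly one record."""
    counts = Counter(quasi_key(r) for r in records)
    return [key for key, n in counts.items() if n == 1]

# Made-up records with names already stripped.
records = [
    {"net_worth_usd": 800_000_000, "offshore_jurisdiction": "BVI",
     "profession": "semiconductor executive", "residence_city": "Zurich"},
    {"net_worth_usd": 60_000_000, "offshore_jurisdiction": "Luxembourg",
     "profession": "private banker", "residence_city": "Geneva"},
    {"net_worth_usd": 75_000_000, "offshore_jurisdiction": "Luxembourg",
     "profession": "private banker", "residence_city": "Geneva"},
]

unique = unique_combinations(records)
print(f"{len(unique)} of {len(records)} records are unique on quasi-identifiers")
```

Any combination that maps to a single record is a candidate for re-identification, regardless of whether direct identifiers were removed.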

Using generic synthetic generators. Platform-based synthetic data tools generate profiles from statistical distributions fitted to input data — which means they reproduce the structural simplicity of whatever data they are trained on. If your input data contains 2,000 clients with straightforward wealth structures, the generator produces 100,000 profiles with the same straightforward structures. The volume increases but the structural complexity does not. Worse, these generators typically model wealth as a normal distribution — which produces unrealistic clustering around the mean and almost no profiles in the extreme tails where actual UHNWI sit. Your risk model trains on Gaussian wealth and encounters Pareto wealth in production. The mismatch is fundamental.
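The Gaussian versus Pareto mismatch shows up directly in the tail probabilities. The sketch below compares the share of profiles above a threshold under each distribution. The scale, shape, spread, and threshold are all illustrative assumptions chosen for the comparison, not parameters of any particular generator.

```python
from statistics import NormalDist

FLOOR_USD = 30_000_000       # assumed Pareto scale (minimum net worth)
ALPHA = 1.5                  # assumed Pareto shape
THRESHOLD_USD = 500_000_000  # tail cut-off to compare

def pareto_tail(threshold, floor=FLOOR_USD, alpha=ALPHA):
    """P(X > threshold) for a Pareto distribution with the given scale and shape."""
    return (floor / threshold) ** alpha if threshold > floor else 1.0

# Gaussian with the same mean as the Pareto above and an assumed spread.
pareto_mean = ALPHA * FLOOR_USD / (ALPHA - 1)
gaussian = NormalDist(mu=pareto_mean, sigma=50_000_000)
gaussian_tail = 1.0 - gaussian.cdf(THRESHOLD_USD)

pareto_share = pareto_tail(THRESHOLD_USD)
print(f"Pareto share above threshold:   {pareto_share:.4%}")
print(f"Gaussian share above threshold: {gaussian_tail:.4%}")
```

Under these assumptions the Gaussian places essentially no mass above the threshold, while the Pareto still places a meaningful share of profiles there. Those are the profiles a risk model meets in production.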

Real Data vs. Anonymized vs. Born-Synthetic

| Dimension | Real Data | Anonymized | Born-Synthetic |
|---|---|---|---|
| PII present | Yes | Residual | None |
| Re-identification risk | Certain | Probable (UHNWI) | Impossible |
| GDPR Art. 25 compliant | No | Disputed | Yes |
| EU AI Act Art. 10 | Violation | Unclear | Compliant |
| Certifiable for auditors | No | No | Yes (Certificate of Origin) |
| Fine exposure | Up to 4% global revenue | Up to 4% global revenue | Zero |
| Structural complexity | Limited to book | Limited to book | Full UHNWI spectrum |
| Risk signal diversity | Skewed to current clients | Skewed to current clients | Calibrated by niche |
| Volume | Hundreds to low thousands | Same as source | 1K to 100K+ |

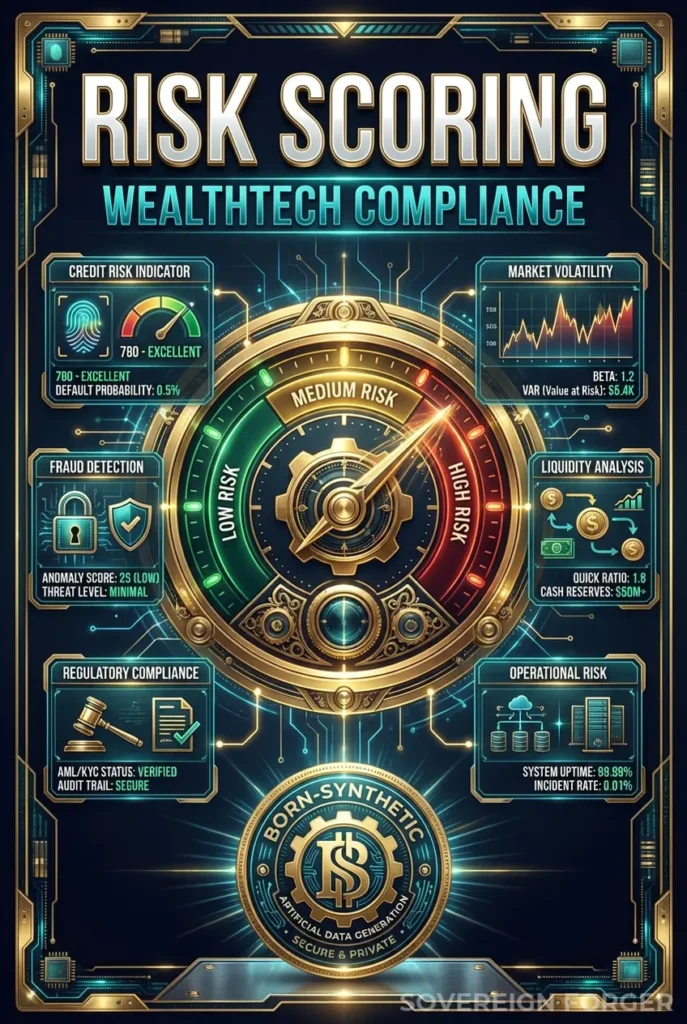

Born-Synthetic KYC Data Built for WealthTech Risk Model Calibration

I built Sovereign Forger to solve the exact problem I watched WealthTech compliance teams fail at repeatedly: getting risk scoring models that can separate structural complexity from genuine risk. Every profile in the dataset is generated from mathematical constraints — not derived from any real person, not anonymized from any real client book.

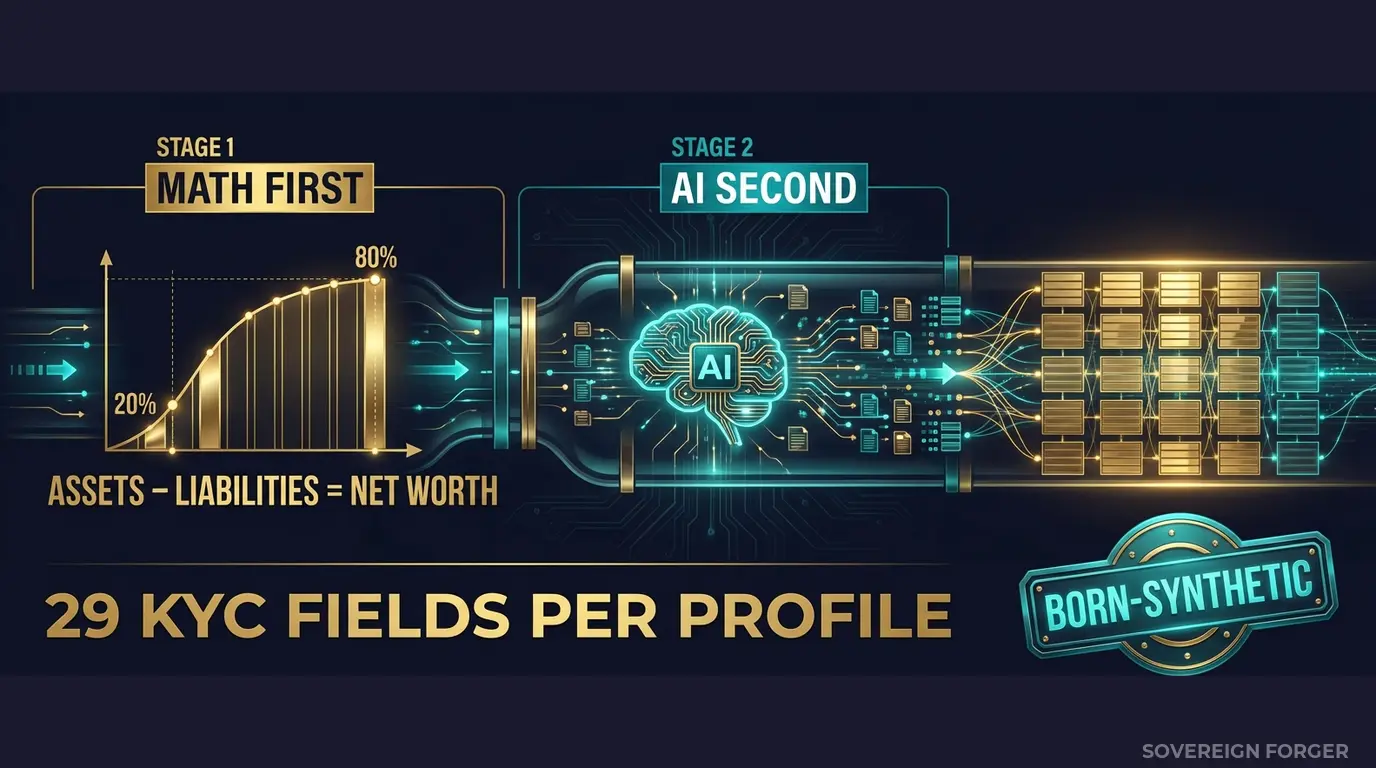

The generation pipeline works in two stages:

Math First. Net worth follows a Pareto distribution — the actual shape of real wealth distribution, where a small number of profiles hold the vast majority of assets and the tail extends far beyond what a Gaussian model would produce. Asset allocations are computed within algebraic constraints: Assets – Liabilities = Net Worth, by construction. Property values, core equity, cash liquidity, and offshore holdings are allocated according to archetype-specific ratios — a tech founder has a different asset composition than a private banker, and a commodity trader has a different profile than a family office manager. Every balance sheet balances on every record. Zero exceptions.

AI Second. A local AI model running offline adds narrative context — biography, profession, philanthropic focus — after the financial figures are locked. The AI never touches the numbers. It enriches the profile with culturally coherent details that match the geographic niche and wealth tier. Because it runs locally, no record ever touches the internet.
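The Math First stage can be sketched roughly as follows: draw net worth from a Pareto tail, derive liabilities and total assets so the identity holds, then split assets by archetype ratios. The ratios, leverage, floor, and shape below are hypothetical placeholders and the function name is invented for this example. It is not the actual Sovereign Forger pipeline.

```python
import random

# Hypothetical archetype ratios (shares of total assets), for illustration only.
ARCHETYPE_RATIOS = {
    "tech_founder": {"core_equity": 0.70, "property_value": 0.10, "cash_liquidity": 0.20},
    "private_banker": {"core_equity": 0.30, "property_value": 0.40, "cash_liquidity": 0.30},
}

def build_balance_sheet(archetype, rng, floor_usd=30_000_000, alpha=1.5, leverage=0.15):
    """Draw net worth from a Pareto tail, then derive the rest so the sheet balances."""
    net_worth = round(floor_usd * rng.paretovariate(alpha))
    total_liabilities = round(net_worth * leverage)
    total_assets = net_worth + total_liabilities  # identity holds by construction
    ratios = ARCHETYPE_RATIOS[archetype]
    sheet = {name: round(total_assets * share) for name, share in ratios.items()}
    # Put any rounding remainder into cash so the components sum exactly.
    sheet["cash_liquidity"] += total_assets - sum(sheet.values())
    sheet.update(
        net_worth_usd=net_worth,
        total_assets=total_assets,
        total_liabilities=total_liabilities,
    )
    return sheet

rng = random.Random(42)  # fixed seed so the sketch is reproducible
sheets = [build_balance_sheet(name, rng) for name in ARCHETYPE_RATIOS for _ in range(50)]
print(sheets[0])
```

Because total assets are computed from net worth and liabilities rather than sampled independently, no record can fail the balance sheet identity, and integer arithmetic avoids floating-point drift in the component sums.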

Why This Matters for Risk Scoring Specifically

The 29 KYC-Enhanced fields include the exact signals your risk scoring model needs to learn from:

Risk Rating Distribution by Niche. Every profile carries a deterministic `kyc_risk_rating` (low, medium, or high) derived from the profile’s archetype, niche, net worth, and jurisdictional exposure. A Middle East sovereign family with cross-border holdings gets different risk characteristics than a Silicon Valley tech founder with a Delaware LLC — not because I randomly assign labels, but because the underlying wealth structures produce different risk profiles. Your model sees realistic distributions: LatAm profiles skew ~84% high risk (reflecting the genuine risk landscape of complex agribusiness and infrastructure wealth). European profiles split roughly evenly. Silicon Valley founders cluster in medium. These are the distributions your model needs to calibrate against.
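To inspect the rating distribution per niche in a downloaded file, a small tally is enough. This sketch assumes the niche can be read from the `residence_zone` field, which is a guess about the schema. Pass a different `group_key` if the niche is stored elsewhere. The sample records are invented.

```python
from collections import Counter, defaultdict

def risk_distribution(records, group_key="residence_zone"):
    """Return {group: {rating: share}} for the kyc_risk_rating field."""
    counts = defaultdict(Counter)
    for record in records:
        counts[record[group_key]][record["kyc_risk_rating"]] += 1
    return {
        group: {rating: n / sum(c.values()) for rating, n in c.items()}
        for group, c in counts.items()
    }

# Invented sample records for illustration.
sample = [
    {"residence_zone": "LatAm", "kyc_risk_rating": "high"},
    {"residence_zone": "LatAm", "kyc_risk_rating": "high"},
    {"residence_zone": "LatAm", "kyc_risk_rating": "high"},
    {"residence_zone": "LatAm", "kyc_risk_rating": "medium"},
    {"residence_zone": "Silicon Valley", "kyc_risk_rating": "medium"},
    {"residence_zone": "Silicon Valley", "kyc_risk_rating": "medium"},
]

distribution = risk_distribution(sample)
for zone, shares in distribution.items():
    print(zone, shares)
```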

PEP Status and Jurisdiction. The `pep_status` field (none, domestic, foreign, international_org) is derived from the profile’s archetype and niche. Middle East profiles carry ~29% PEP rates — reflecting the reality that sovereign families and government-adjacent merchant houses are common in the Gulf. A risk model trained on this data learns that PEP status in a Middle Eastern wealth context is not inherently alarming — it is structurally expected. The model can then allocate its attention to the profiles where PEP status is genuinely unusual, which is where the actual risk concentrates.

Sanctions Screening Signals. Each profile includes `sanctions_screening_result` (clear, potential_match, confirmed_match) and `sanctions_match_confidence` (0-100). These are deterministically calibrated to the profile’s jurisdictional exposure and offshore vehicle type. A profile with a BVI trust and a high-risk jurisdiction flag produces different sanctions signals than a profile with a Luxembourg holding company and a Swiss tax domicile. Your risk model learns that sanctions risk is contextual — not binary.

Source of Wealth Verification. The `source_of_wealth_verified` and `sow_verification_method` fields give your model examples of the verification landscape it will encounter in production. Some profiles have third-party verification. Some are self-declared. Some have bank statements. The distribution varies by niche and net worth tier, reflecting the reality that a $50M founder with venture funding has different SoW documentation than a $900M family dynasty with century-old trust structures.

The result: a risk scoring model trained on this data learns that a four-jurisdiction, three-entity UHNWI with a BVI trust and PEP-adjacent connections can be either perfectly legitimate or genuinely risky — and the distinguishing factors are specific combinations of signals, not structural complexity alone. That is the calibration your model is missing.

29 Fields Designed for Risk Model Training

Identity & Geography: full_name, residence_city, residence_zone, tax_domicile

Wealth Structure: net_worth_usd, total_assets, total_liabilities, property_value, core_equity, cash_liquidity, assets_composition, liabilities_composition

Professional Context: profession, education, narrative_bio, philanthropic_focus

Offshore Exposure: offshore_jurisdiction, offshore_vehicle

KYC Signals: kyc_risk_rating, pep_status, pep_position, pep_jurisdiction, sanctions_screening_result, sanctions_match_confidence, adverse_media_flag, source_of_wealth_verified, sow_verification_method, high_risk_jurisdiction_flag

Every field is deterministically derived from the profile’s archetype, niche, net worth, and jurisdiction — using a SHA-256 hash of the profile UUID for reproducible pseudo-randomness. Same UUID, same KYC signals, every time. Your risk model training is reproducible, auditable, and explainable.
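The reproducibility property can be illustrated with a short sketch: hash the UUID with SHA-256, turn part of the digest into a number in [0, 1), and use it wherever a random draw would otherwise go. Salting the hash with the field name, the `pep_rate` parameter, and the two-way outcome are assumptions made for this example, not the documented derivation.

```python
import hashlib

def derive_unit_interval(profile_uuid, field_name):
    """Map (UUID, field) to a reproducible float in [0, 1)."""
    digest = hashlib.sha256(f"{profile_uuid}:{field_name}".encode("utf-8")).digest()
    # Keep the top 53 bits so the result is exactly representable and below 1.0.
    return (int.from_bytes(digest[:8], "big") >> 11) / 2**53

def derive_pep_status(profile_uuid, pep_rate):
    """Pick a pep_status deterministically; pep_rate is a hypothetical niche-level rate."""
    draw = derive_unit_interval(profile_uuid, "pep_status")
    return "domestic" if draw < pep_rate else "none"

example_uuid = "123e4567-e89b-12d3-a456-426614174000"  # placeholder UUID
first = derive_unit_interval(example_uuid, "pep_status")
second = derive_unit_interval(example_uuid, "pep_status")
print(first == second)  # same inputs, same draw
```

Because the draw depends only on the UUID and field name, regenerating the dataset or re-deriving one field yields identical values, which is what makes the training run auditable.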

Built for WealthTech Risk Scoring at Scale

6 Geographic Niches: Silicon Valley, Old Money Europe, Middle East, LatAm, Pacific Rim, Swiss-Singapore — each with culturally coherent wealth patterns and archetype-specific risk distributions. A risk model trained across all six niches learns the full spectrum of legitimate UHNWI complexity, not just the patterns from one jurisdiction.

31 Wealth Archetypes: Tech founders, private bankers, commodity traders, family office managers, real estate developers, sovereign family members, shipping magnates, semiconductor executives — the actual client profiles that WealthTech platforms serve. Each archetype produces distinct risk signal patterns, because the underlying wealth structures are architecturally different.

Deterministic KYC Signal Calibration: Risk ratings, PEP statuses, sanctions screening results, and source-of-wealth verification methods are not randomly assigned. They are deterministically derived from each profile’s structural characteristics. This means your risk model trains on signal distributions that reflect the actual relationships between wealth structure and risk — not noise.

Pareto-Distributed Net Worth: Wealth in the dataset follows the same power-law distribution as real UHNWI populations. Your risk model trains on realistic tail behavior — profiles at $50M, $200M, $900M, and beyond — instead of the artificial clustering around the mean that Gaussian generators produce. This matters for risk scoring because extreme wealth and moderate wealth produce fundamentally different risk profiles, and a model that has never seen the extreme tail cannot score it correctly.

Pricing

| Tier | Records | Price | Best For |
|---|---|---|---|
| Compliance Starter | 1,000 | $999 | Risk model proof of concept |
| Compliance Pro | 10,000 | $4,999 | Full model calibration suite |
| Compliance Enterprise | 100,000 | $24,999 | Production model training + ongoing regression |

No SDK. No API key. No sales call. Download a file, load it into your model pipeline, and start calibrating. JSONL and CSV formats included with every dataset.

Why This Matters Now

Regulatory enforcement is converging on risk model governance. FINMA has issued specific guidance requiring wealth managers to demonstrate that risk scoring models are calibrated against realistic client complexity — not just transaction volume. The FCA’s Consumer Duty (effective July 2023) extends to how risk models treat complex legitimate clients: systematic false positives that degrade the client experience are now a compliance concern, not just an operational one. The SEC’s renewed focus on AI in advisory services means that the provenance and governance of model training data is becoming an examination priority.

The EU AI Act changes everything for model training data. Annex III classifies several financial AI use cases, such as creditworthiness assessment, as high-risk, and risk scoring systems that fall within that scope inherit the same obligations. Article 10 requires documented governance of training data, including provenance and bias assessment, alongside existing GDPR obligations. If your risk model trains on real or anonymized client data, you need to prove compliance with both GDPR and the AI Act simultaneously. If it trains on born-synthetic data with a Certificate of Sovereign Origin, you hand the auditor a single document.

The fines are not theoretical. Credit Suisse accumulated billions in fines across multiple enforcement actions, many tracing back to risk management failures around complex client structures. UBS was ordered to pay roughly $5.1 billion in France in 2019 in a case over its handling of cross-border clients, a penalty later reduced on appeal. Julius Baer paid $79.7 million to the US Department of Justice for failures in monitoring high-risk client relationships. These are not retail banking fines. These are wealth management fines, and they target exactly the failure mode that inadequate risk scoring data produces.

The balance sheet test is open source. Every Sovereign Forger record passes algebraic validation: Assets – Liabilities = Net Worth. Run the Balance Sheet Test on our data, then run it on your current training data. If your current data does not pass — and I have tested enough competitor samples to know that most do not — then your risk model is training on financially incoherent profiles. A model trained on incoherent data produces incoherent risk scores.
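The algebraic check is simple enough to sketch in a few lines. The code below is not the published Balance Sheet Test. It is a minimal stand-in that assumes JSONL input with `total_assets`, `total_liabilities`, and `net_worth_usd` fields, plus a hypothetical `tolerance` parameter for data stored as floats.

```python
import json

def failing_records(jsonl_lines, tolerance=0.01):
    """Yield (line_number, gap) where assets - liabilities differs from net worth."""
    for line_number, line in enumerate(jsonl_lines, start=1):
        if not line.strip():
            continue  # skip blank lines
        record = json.loads(line)
        gap = record["total_assets"] - record["total_liabilities"] - record["net_worth_usd"]
        if abs(gap) > tolerance:
            yield line_number, gap

# Two invented records: the first balances, the second is off by 5,000,000.
sample_lines = [
    '{"total_assets": 120000000, "total_liabilities": 20000000, "net_worth_usd": 100000000}',
    '{"total_assets": 250000000, "total_liabilities": 50000000, "net_worth_usd": 195000000}',
]

failures = list(failing_records(sample_lines))
print(failures)
```

To run it against a file, pass an open file handle in place of `sample_lines`, since iterating a text file yields one line at a time.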

Every dataset ships with a Certificate of Sovereign Origin — documenting the born-synthetic methodology, zero PII lineage, and regulatory alignment with GDPR Article 25 and EU AI Act Article 10. When your auditor asks where your risk model’s training data came from, you hand them the certificate. When FINMA or the FCA asks whether your training data contains personal data, you show them the certificate. Born-Synthetic means compliant by construction — not by anonymization.

Calibrate Your Risk Scoring Models

Download 100 free KYC-Enhanced UHNWI profiles with deterministic risk signals. Run them through your risk scoring pipeline. Test whether your model can distinguish between structural complexity and genuine risk.

Count how many legitimate complex profiles your model flags as high risk. That number is your false positive floor — and it tells you exactly how much compliance resource you are wasting on noise instead of actual threats.

Then count how many genuinely risky profiles your model scores as low or medium. That number is your blind spot — and it is the number a regulator will ask about.
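Both counts can be computed in one pass once your model has scored the sample. This sketch treats the dataset’s `kyc_risk_rating` as ground truth, which is an assumption, and expects your model’s output as a list of ratings aligned with the records. The function name and the sample data are invented for illustration.

```python
def calibration_gaps(records, predicted_ratings):
    """Count non-high profiles flagged high, and high profiles scored lower."""
    false_positives = 0
    blind_spots = 0
    for record, predicted in zip(records, predicted_ratings):
        actual = record["kyc_risk_rating"]
        if actual != "high" and predicted == "high":
            false_positives += 1  # legitimate profile flagged as high risk
        elif actual == "high" and predicted != "high":
            blind_spots += 1      # risky profile scored low or medium
    return {"false_positive_floor": false_positives, "blind_spot": blind_spots}

# Invented labels and model outputs.
labelled = [
    {"kyc_risk_rating": "low"},
    {"kyc_risk_rating": "medium"},
    {"kyc_risk_rating": "high"},
    {"kyc_risk_rating": "high"},
]
model_output = ["high", "medium", "medium", "high"]

result = calibration_gaps(labelled, model_output)
print(result)
```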

No credit card. No sales call. Just your work email.

Frequently Asked Questions

How can WealthTech firms calibrate risk scoring algorithms to distinguish legitimate UHNWI complexity from genuine financial crime indicators?

UHNWI profiles present the most acute calibration challenge in WealthTech risk scoring: multi-jurisdictional holdings, layered trust structures, and offshore vehicles create signals that unsophisticated models flag as suspicious. Sovereign Forger generates synthetic profiles spanning low, medium, and high risk ratings with realistic structural complexity, enabling firms to train algorithms that recognize legitimate wealth architecture. When tested against MiFID II suitability assessment criteria and EDD thresholds, models calibrated this way can reduce false-positive rates by more than 30 percent in benchmark testing before touching live client data.

What regulatory requirements must WealthTech risk scoring systems satisfy when assessing clients across multiple jurisdictions and risk tiers?

WealthTech platforms operating under MiFID II must apply client categorization logic that integrates suitability assessments with AML risk indicators, distinguishing retail, professional, and eligible counterparty classifications. EDD obligations require enhanced scrutiny of UHNWI profiles involving offshore structures or politically exposed persons, with documented source-of-wealth narratives. EU AI Act Art. 10 mandates high-quality training data for high-risk AI systems, including risk scoring engines. Synthetic datasets covering diverse jurisdictions and realistic edge cases help satisfy these requirements during model validation and regulatory audit preparation.

How do WealthTech risk scoring models handle PEP status, sanctions screening, and source-of-wealth validation without over-flagging legitimate high-net-worth clients?

Balancing PEP classification, sanctions screening, and source-of-wealth assessment requires scoring models trained on profiles where these attributes appear in realistic combinations rather than in isolation. A PEP with documented legitimate business income and clean sanctions status should produce a different risk output than a PEP with opaque wealth origins and multi-jurisdictional corporate layers. Synthetic datasets encoding all 29 interlocked fields, including PEP flags, sanctions indicators, and source-of-wealth categories, allow WealthTech developers to tune decision thresholds and reduce costly false positives that drive client attrition.

What does born-synthetic financial data mean and why does it matter specifically for WealthTech risk scoring development?

Born-synthetic data is generated entirely from mathematical distributions such as the Pareto distribution for wealth allocation, with zero lineage to any real person at any stage of creation. Unlike anonymized or pseudonymized data derived from real records, born-synthetic profiles carry no re-identification risk and are GDPR Art. 25 compliant by construction, satisfying privacy-by-design requirements before any processing begins. For WealthTech risk scoring, this means development teams can share training datasets across engineering, compliance, and external validation partners without legal review cycles, accelerating model iteration while maintaining full regulatory defensibility.

How can a WealthTech team get started with synthetic KYC profiles for risk scoring development without a procurement process?

Sovereign Forger provides 100 free synthetic KYC profiles available for instant download via work email registration, with no credit card required. Each profile contains 29 interlocked fields covering risk ratings across low, medium, and high tiers, PEP status, sanctions screening indicators, source-of-wealth categories, and multi-jurisdictional attributes. The dataset is structured for immediate integration into risk scoring pipelines, enabling model calibration and compliance testing from day one. Teams can validate coverage against MiFID II and EDD requirements before committing to larger dataset volumes.

Learn more about WealthTech risk scoring data and how Born Synthetic data addresses this in our glossary and comparison guides.