Credit Suisse collapsed under risks its models failed to capture. A French court fined UBS €4.5B (about $5.1B) in 2019, a penalty later reduced on appeal. Julius Baer settled with the DoJ for $79.7M. In each case, internal models (risk scoring, suitability, AML detection) had been validated against data that did not represent the clients who actually caused the losses. This model validation data is built for exactly that scenario.

Your Validation Data Does Not Look Like Your Clients

I have reviewed model validation reports from WealthTech platforms — the kind that get submitted to FINMA, the FCA, or internal model risk committees. The validation dataset is always clean. Coverage metrics are green. Error rates are within tolerance. The model is approved.

Then I look at the validation data itself. Flat net worth distributions — most profiles clustered between $1M and $10M, tapering off neatly. Single-jurisdiction holdings. No offshore vehicles. No PEP connections. No trust layering. The dataset represents a version of wealth that is structurally simple, mathematically convenient, and completely disconnected from the UHNWI clients that WealthTech platforms actually serve.

This is the model validation trap. Your model scores 97% accuracy on a dataset where every profile has one jurisdiction, one asset class, and one clean identity. Then a real client arrives — a family office principal with $400M across three trusts, a Cayman LP, dual PEP status through a sibling who held public office in the UAE, and a tax domicile in Singapore that changed from Switzerland eighteen months ago. Your risk model has never seen this combination. Not because it is rare — but because your validation data was structurally incapable of producing it.

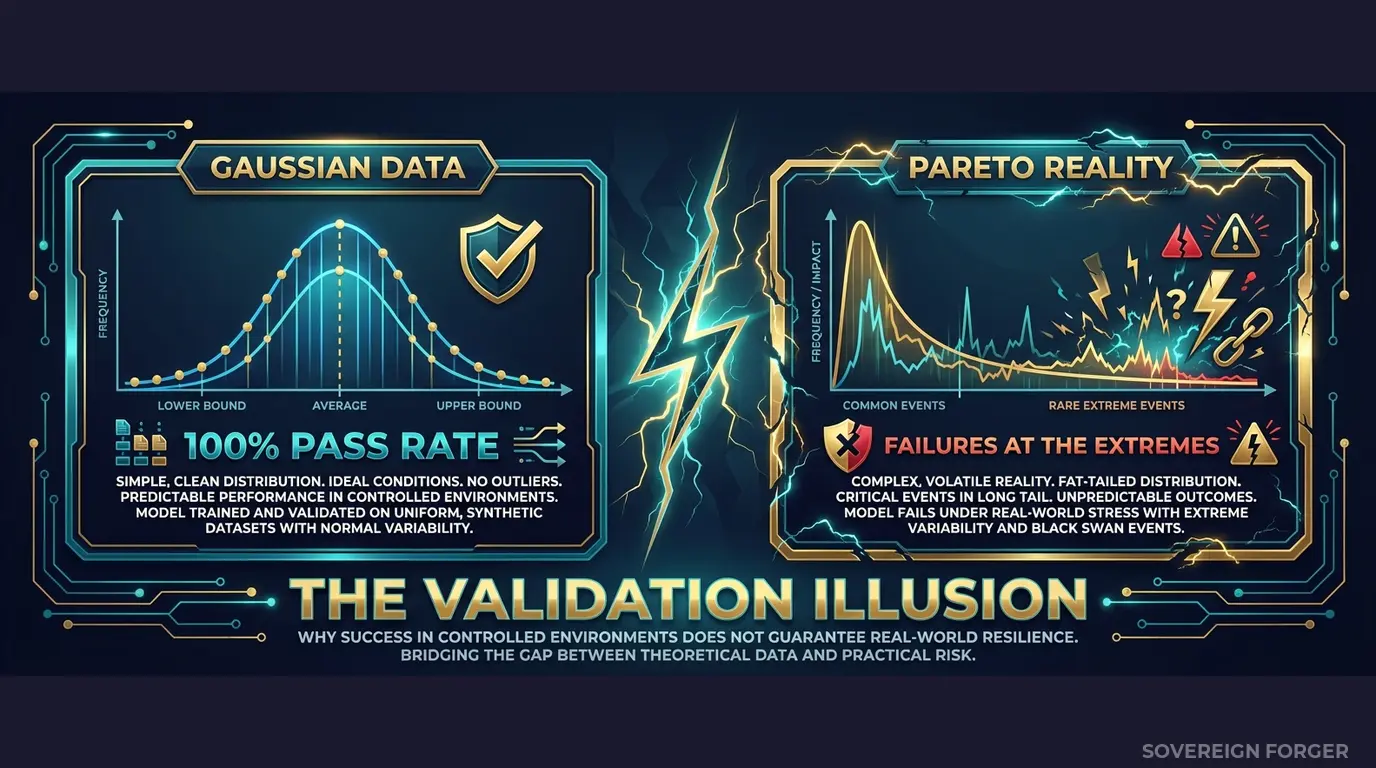

Real wealth follows a Pareto distribution. A small number of clients hold a disproportionate share of assets, and those clients have disproportionately complex structures. The tail of the distribution — the top 1% of your client base — is where the highest-value relationships live, where the most complex compliance obligations arise, and where model failures have the most severe consequences. If your validation data does not include this tail, your model has never been tested where it matters most.

I have seen this pattern at Broadridge, at Avaloq, at platforms built on FNZ infrastructure. The model works on the mass-affluent segment. It breaks on the segment that generates the most revenue and the most regulatory exposure. The validation process certified a model that was never tested against its own operating reality.

The regulatory math: SR 11-7 (Fed), SS1/23 (PRA), and FINMA Circular 2017/1 all require model validation against data that is “representative of the portfolio.” If your UHNWI clients have multi-jurisdictional structures and your validation data has none, a regulator can — and will — argue that your validation is not representative. The model approval is built on sand.

Three Approaches That Do Not Validate What Matters

Using production client data for validation. Some teams extract real client profiles into model validation environments. For WealthTech platforms serving UHNWIs, this is an immediate GDPR Article 25 violation — you are placing personal financial data of identifiable individuals into environments with broader access, weaker controls, and often insufficient audit trails. With only 265,000 UHNWIs globally, the combination of net worth, jurisdiction, and offshore structure makes every record effectively identifiable even after name removal. And under the EU AI Act — fully enforceable from August 2026 — Article 10 requires documented governance of any data used for AI model training or validation. Using real client data without full provenance documentation creates dual regulatory exposure: GDPR and AI Act simultaneously.

Using anonymized client data. Stripping names and account numbers from UHNWI profiles does not eliminate re-identification risk; in a population this small, the remaining attributes do the identifying. A $380M net worth with property holdings in Monaco, a Liechtenstein foundation, and a profession tagged as “semiconductor manufacturing executive” narrows to a handful of individuals worldwide. Published research (Rocher et al., Nature Communications, 2019) estimated that 15 demographic attributes are enough to correctly re-identify 99.98% of Americans in any dataset, and the UHNWI population is far sparser than that. Your “anonymized” validation dataset is pseudonymized at best, and a regulator reviewing your model validation documentation will reach the same conclusion.
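A quick way to see the re-identification problem is to count how many records are unique on a handful of quasi-identifiers. The sketch below is illustrative only: the field names (`net_worth_band`, `property_location`, `offshore_vehicle`, `profession`) and the four sample records are hypothetical and not taken from any real dataset.

```python
from collections import Counter

def unique_fraction(records, quasi_identifiers):
    """Share of records whose quasi-identifier combination appears exactly once."""
    keys = [tuple(rec[q] for q in quasi_identifiers) for rec in records]
    counts = Counter(keys)
    return sum(1 for key in keys if counts[key] == 1) / len(keys)

# Hypothetical "anonymized" records: names removed, attributes kept.
records = [
    {"net_worth_band": "300-400M", "property_location": "Monaco",
     "offshore_vehicle": "Liechtenstein foundation", "profession": "semiconductors"},
    {"net_worth_band": "300-400M", "property_location": "Monaco",
     "offshore_vehicle": "Liechtenstein foundation", "profession": "shipping"},
    {"net_worth_band": "30-50M", "property_location": "London",
     "offshore_vehicle": "none", "profession": "finance"},
    {"net_worth_band": "30-50M", "property_location": "London",
     "offshore_vehicle": "none", "profession": "finance"},
]

# Two attributes leave every record hidden in a group of two.
coarse = unique_fraction(records, ["net_worth_band", "property_location"])
# Four attributes single out half of the records.
fine = unique_fraction(
    records,
    ["net_worth_band", "property_location", "offshore_vehicle", "profession"],
)
print(f"unique on 2 attributes: {coarse:.0%}, on 4 attributes: {fine:.0%}")
```

Any record that is unique on attributes an outsider could plausibly know should be treated as identifiable, whatever was stripped from it.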

Using generic synthetic generators. Platform-based synthetic data tools generate profiles by learning statistical distributions from input data or, when no input data is provided, by sampling from Gaussian distributions. Both approaches fail for WealthTech model validation. Learning from input data means inheriting the biases and gaps of your existing portfolio. Sampling from Gaussian distributions means generating wealth that clusters around a mean, the opposite of how real wealth is distributed. The resulting validation data has almost no profiles in the extreme tail, offshore structures are assigned at random without correlation to jurisdiction, and PEP status has no relationship to geography or profession. A model validated against this data has been tested on nothing that resembles the structure of actual UHNWI wealth.

Real Data vs. Anonymized vs. Born-Synthetic for Model Validation

| Dimension | Real Data | Anonymized | Born-Synthetic |
|---|---|---|---|
| PII present | Yes | Residual | None |
| Re-identification risk | Certain | Probable (UHNWI) | Impossible |
| GDPR Art. 25 compliant | No | Disputed | Yes |
| EU AI Act Art. 10 | Violation | Unclear | Compliant |
| Pareto-distributed wealth | Yes | Yes (inherited) | Yes (by construction) |
| Realistic field correlations | Yes | Partially degraded | Yes (archetype-driven) |
| Certifiable for auditors | No | No | Yes (Certificate of Origin) |
| Reproducible validation runs | No (data changes) | No (source changes) | Yes (deterministic) |
| Fine exposure | Up to 4% global revenue | Up to 4% global revenue | Zero |

Born-Synthetic Data Built for WealthTech Model Validation

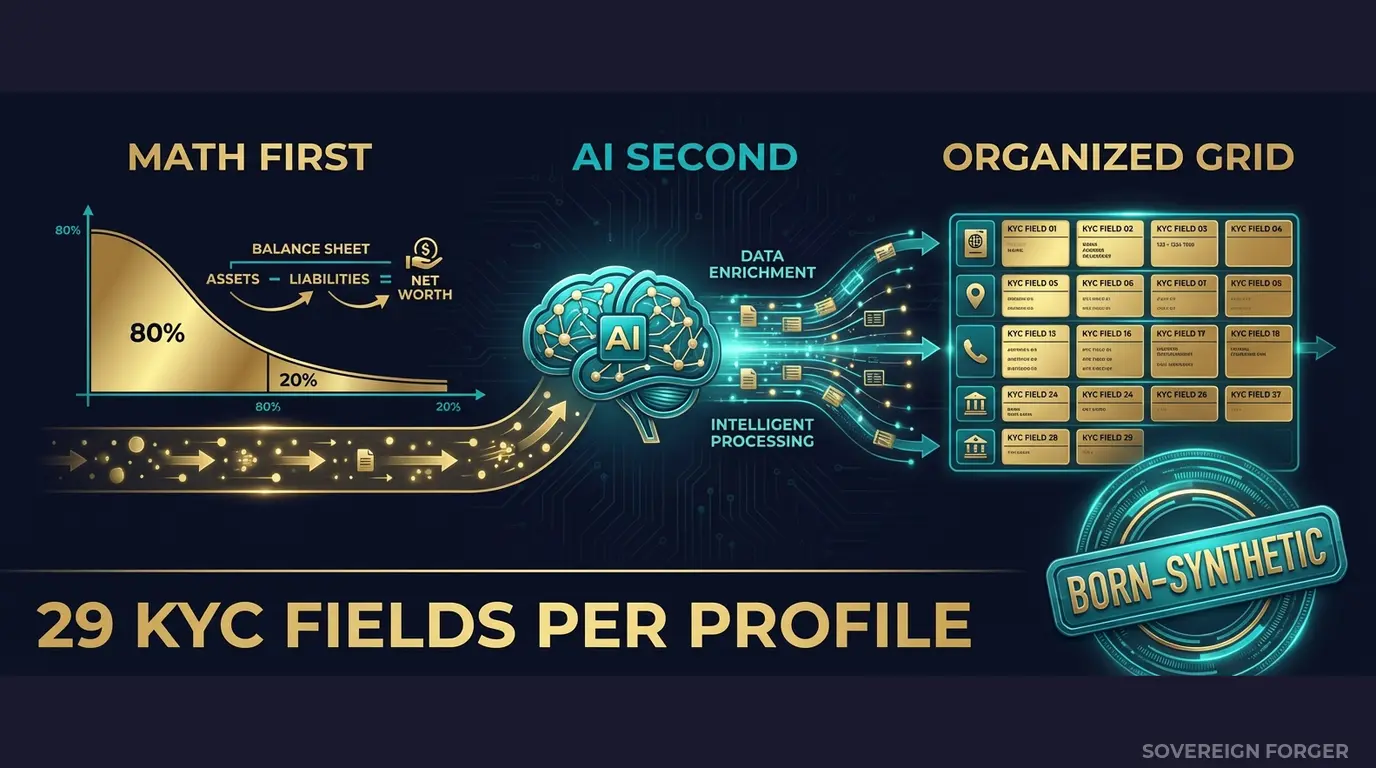

Every profile in the Sovereign Forger dataset is generated from mathematical constraints — not derived from, learned from, or correlated with any real person. The generation pipeline works in two stages that directly address the model validation problem:

Math First — Pareto, Not Gaussian. Net worth follows a Pareto distribution with shape parameters calibrated per geographic niche. This is not a cosmetic choice — it is the structural foundation that makes the data useful for model validation. Real UHNWI wealth is heavy-tailed: a small number of clients hold extreme concentrations of assets with correspondingly complex structures. A bell curve cannot produce this shape. Every synthetic data tool that samples from Gaussian distributions will generate a validation dataset where extreme wealth is underrepresented by orders of magnitude — exactly the segment where your model faces the highest risk.
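The difference between the two shapes is easy to measure. The sketch below, using only the Python standard library, compares how much of total wealth the top 1% of profiles hold under each distribution. The wealth floor, the shape parameter `alpha = 1.5`, and the Gaussian mean and spread are assumptions chosen for illustration; they are not the calibrated parameters of any dataset.

```python
import random

def tail_share(samples, top_fraction=0.01):
    """Fraction of total wealth held by the top `top_fraction` of samples."""
    ordered = sorted(samples, reverse=True)
    k = max(1, int(len(ordered) * top_fraction))
    return sum(ordered[:k]) / sum(ordered)

rng = random.Random(42)      # fixed seed so the comparison is repeatable
n = 100_000
floor = 30_000_000           # assumed minimum net worth in USD
alpha = 1.5                  # assumed Pareto shape (smaller = heavier tail)

# Pareto: paretovariate returns values >= 1, scaled here by the wealth floor.
pareto = [floor * rng.paretovariate(alpha) for _ in range(n)]
# Gaussian: centred at 3x the floor, clipped so no profile falls below it.
gauss = [max(floor, rng.gauss(3 * floor, floor)) for _ in range(n)]

pareto_top = tail_share(pareto)
gauss_top = tail_share(gauss)
print(f"top 1% share, Pareto:   {pareto_top:.1%}")
print(f"top 1% share, Gaussian: {gauss_top:.1%}")
```

Under these assumptions the Pareto sample concentrates many times more wealth in its top 1% than the Gaussian one, which is the segment a bell-curve generator leaves almost empty.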

Asset allocations are computed within algebraic constraints: Assets – Liabilities = Net Worth, by construction. Property values, core equity, cash liquidity, offshore holdings — all computed to sum correctly on every single record. When your model validation requires balance sheet integrity, every record passes. I have tested competitor datasets where 40% of records fail this basic check. A model trained or validated on internally inconsistent data learns that balance sheets do not need to balance. That lesson carries into production.
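The balance sheet check described above can be sketched in a few lines. This is a minimal illustration and not the vendor's own test tool: it assumes each record is a dict carrying the `total_assets`, `total_liabilities`, and `net_worth_usd` fields as numbers, and the tolerance and sample values are hypothetical.

```python
def balance_sheet_failures(records, tolerance=0.01):
    """Return indexes of records where assets - liabilities != net worth."""
    failures = []
    for index, rec in enumerate(records):
        gap = rec["total_assets"] - rec["total_liabilities"] - rec["net_worth_usd"]
        if abs(gap) > tolerance:  # tolerance absorbs rounding in the source file
            failures.append(index)
    return failures

# Hypothetical records: the second one is off by 5,000,000.
sample = [
    {"total_assets": 120_000_000, "total_liabilities": 20_000_000,
     "net_worth_usd": 100_000_000},
    {"total_assets": 80_000_000, "total_liabilities": 10_000_000,
     "net_worth_usd": 75_000_000},
]

failed = balance_sheet_failures(sample)
failure_rate = len(failed) / len(sample)
print(f"failing records: {failed}, failure rate: {failure_rate:.0%}")
```

Running the same function over any candidate validation dataset gives a failure rate that can be compared directly across vendors.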

AI Second — Structural Coherence, Not Random Assignment. After the financial figures are locked, a local AI model — running offline, never touching the network — adds narrative context: biography, profession, education, philanthropic focus. The AI never modifies the numbers. It enriches each profile with culturally and economically coherent details that match the geographic niche, wealth tier, and archetype.

This means a Pacific Rim semiconductor dynasty founder has a biography that reflects semiconductor wealth, not a randomly selected profession pasted onto a random net worth. When your model uses profession or biography as features, the correlations in the validation data reflect the correlations that exist in the real UHNWI population.

29 Fields Designed for Model Validation Pipelines

Every KYC-Enhanced profile includes the fields your model validation framework actually needs to ingest:

Identity & Geography: full_name, residence_city, residence_zone, tax_domicile

Wealth Structure: net_worth_usd, total_assets, total_liabilities, property_value, core_equity, cash_liquidity, assets_composition, liabilities_composition

Professional Context: profession, education, narrative_bio, philanthropic_focus

Offshore Exposure: offshore_jurisdiction, offshore_vehicle

KYC Signals: kyc_risk_rating, pep_status, pep_position, pep_jurisdiction, sanctions_screening_result, sanctions_match_confidence, adverse_media_flag, source_of_wealth_verified, sow_verification_method, high_risk_jurisdiction_flag

Why 29 fields matter for model validation: most validation datasets provide 5-8 fields — enough for a basic regression but not enough to test how your model handles multi-dimensional edge cases. A risk scoring model that uses net worth, jurisdiction, and PEP status as inputs needs validation data where these fields are correlated in realistic ways. In Sovereign Forger data, a Middle East sovereign family profile gets PEP flags at rates that reflect actual PEP density in Gulf states — not at the same rate as a Silicon Valley tech founder. Your model validation captures whether the model handles this differential correctly.

Every KYC field is deterministically derived from the profile’s archetype, niche, net worth, and jurisdiction using a SHA-256 hash of the profile UUID. This means validation runs are reproducible: the same UUID generates the same KYC signals every time. When your model risk team re-runs the validation suite six months later, the results are directly comparable. No drift. No “the validation data changed between runs.”
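One way hash-based derivation of this kind can work is sketched below. It is an assumption-laden illustration, not the actual Sovereign Forger algorithm: the helper names `unit_interval` and `derive_pep_status`, the `uuid:field` salting scheme, and the use of a per-niche `pep_rate` threshold are all hypothetical.

```python
import hashlib
import uuid

def unit_interval(profile_uuid: str, field: str) -> float:
    """Map (UUID, field name) to a repeatable number in [0, 1) via SHA-256."""
    digest = hashlib.sha256(f"{profile_uuid}:{field}".encode("utf-8")).digest()
    # The first 6 bytes give a 48-bit integer, which a float represents exactly.
    return int.from_bytes(digest[:6], "big") / 2**48

def derive_pep_status(profile_uuid: str, pep_rate: float) -> bool:
    """Flag a profile as PEP when its hash-derived draw falls below the rate."""
    return unit_interval(profile_uuid, "pep_status") < pep_rate

profile_id = str(uuid.UUID("12345678-1234-5678-1234-567812345678"))

# Same UUID and same rate always give the same answer, on any machine.
first = derive_pep_status(profile_id, pep_rate=0.29)
second = derive_pep_status(profile_id, pep_rate=0.29)
print(first == second)
```

Because the draw depends only on the UUID and the field name, re-running the suite months later reproduces the same signals without storing a random seed.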

Built for WealthTech Model Validation at Scale

6 Geographic Niches: Silicon Valley, Old Money Europe, Middle East, LatAm, Pacific Rim, Swiss-Singapore. Each niche has its own Pareto shape parameter, archetype distribution, cultural naming conventions, and wealth structure patterns. When your model needs to validate performance across client geographies, each niche provides a structurally distinct population — not the same profiles with different city names.

31 Wealth Archetypes: Tech founders, private banking clients, sovereign family members, commodity barons, shipping dynasty heirs, family office principals, offshore wealth managers. These are the actual client profiles that WealthTech platforms encounter — and the profiles where model performance diverges from average-case metrics.

KYC Signal Distribution by Niche: Risk ratings, PEP statuses, sanctions screening results, and source-of-wealth verification methods are distributed with realistic frequencies per niche. LatAm profiles skew high-risk (~84% rated high-risk), while Swiss-Singapore profiles skew low-risk (~48% rated low-risk). Middle East profiles have PEP rates (~29%) that reflect the political structure of Gulf economies. Your model validation captures niche-level performance, not just aggregate accuracy.

Deterministic Reproducibility: Every field is computed from mathematical constraints and a SHA-256 hash. No randomness, no sampling variance. Run the validation today and six months from now — identical results. Your model risk committee can track performance changes to model updates, not to data drift.
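The point about niche-level performance versus aggregate accuracy can be made concrete with a small sketch. The rows below are hypothetical `(niche, expected_rating, predicted_rating)` triples; how a niche label is attached to each profile in a real file is an assumption here.

```python
from collections import defaultdict

def accuracy_by_niche(rows):
    """Compute model accuracy separately for each niche label."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for niche, expected, predicted in rows:
        totals[niche] += 1
        hits[niche] += int(expected == predicted)
    return {niche: hits[niche] / totals[niche] for niche in totals}

# Hypothetical results: the model is perfect on one niche, weak on another.
rows = [("Silicon Valley", "low", "low")] * 8 + [
    ("LatAm", "high", "high"),
    ("LatAm", "high", "low"),
]

overall = sum(expected == predicted for _, expected, predicted in rows) / len(rows)
by_niche = accuracy_by_niche(rows)
print(f"overall: {overall:.0%}")
for niche, accuracy in by_niche.items():
    print(f"{niche}: {accuracy:.0%}")
```

An aggregate figure of 90% here hides a niche where the model is right only half the time, which is the kind of gap a per-niche breakdown is meant to expose.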

Pricing

| Tier | Records | Price | Best For |
|---|---|---|---|
| Compliance Starter | 1,000 | $999 | Initial model validation, proof of concept |
| Compliance Pro | 10,000 | $4,999 | Full validation suite, stress testing |
| Compliance Enterprise | 100,000 | $24,999 | Production validation + AI training data |

No SDK. No API key. No sales call. Download a JSONL or CSV file, load it into your validation framework, and run your suite. Works with Python, R, Spark, or any tool that reads structured data.
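Loading a JSONL file needs nothing beyond the Python standard library. The sketch below reads from an in-memory stream so it runs as-is; the filename in the comment and the two sample records are hypothetical, and only the field names `net_worth_usd` and `tax_domicile` come from the field list above.

```python
import io
import json

def read_jsonl(stream):
    """Yield one dict per non-empty line of a JSONL stream."""
    for line in stream:
        line = line.strip()
        if line:
            yield json.loads(line)

# With a downloaded file this would be, for example:
#   with open("profiles.jsonl", encoding="utf-8") as fh:   # hypothetical filename
#       profiles = list(read_jsonl(fh))
demo = io.StringIO(
    '{"net_worth_usd": 412000000, "tax_domicile": "Singapore"}\n'
    "\n"
    '{"net_worth_usd": 38000000, "tax_domicile": "Switzerland"}\n'
)

profiles = list(read_jsonl(demo))
largest = max(profiles, key=lambda p: p["net_worth_usd"])
print(len(profiles), largest["tax_domicile"])
```

The resulting list of dicts can be passed straight to a validation harness or converted to a data frame by whatever tooling the team already uses.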

Why This Matters Now

Model risk regulation is tightening. The PRA’s SS1/23 on model risk management — effective since May 2024 — requires firms to demonstrate that validation data is “representative of the conditions under which the model is expected to operate.” The Fed’s SR 11-7 has required the same since 2011, and enforcement is intensifying. FINMA Circular 2017/1 mandates ongoing model validation with data that reflects the actual risk profile of the institution’s client base. If your WealthTech platform serves UHNWIs and your validation data does not contain UHNWI wealth structures, the validation does not meet the regulatory standard.

The EU AI Act changes the equation. From August 2026, the Act's obligations for high-risk AI systems apply, and Annex III lists financial use cases such as creditworthiness assessment among them. Article 10 requires that training and validation data be “relevant, sufficiently representative, and to the best extent possible, free of errors and complete.” Using real client data creates GDPR exposure. Using structurally flat synthetic data fails the “sufficiently representative” test. Born-Synthetic data with Pareto-distributed wealth, archetype-driven correlations, and documented provenance is designed to satisfy both requirements at once.

The fines are not hypothetical. Credit Suisse accumulated billions in fines and enforcement actions across multiple jurisdictions before its collapse, driven by risk management failures that its models failed to flag. A French court fined UBS €4.5B (about $5.1B) in 2019, later reduced on appeal, for helping clients evade taxes through structures that its compliance models should have caught. Julius Baer settled with the DoJ for $79.7M over failures in its AML and compliance controls for clients with exactly the kind of complex, multi-jurisdictional wealth structures that flat validation data cannot represent.

The balance sheet test is open source. Every Sovereign Forger record passes algebraic validation: Assets – Liabilities = Net Worth. Run the Balance Sheet Test on our data, then run it on your current validation dataset. I have seen competitor data where 43% of records fail this check. A model validated on data where balance sheets do not balance has learned the wrong constraints.

Every dataset ships with a Certificate of Sovereign Origin — documenting the born-synthetic methodology, Pareto distribution parameters, zero PII lineage, and regulatory alignment with GDPR Art.25 and EU AI Act Art.10. When your model risk committee or external auditor asks “where did the validation data come from and how was it generated?”, you hand them the certificate. The provenance question is answered before it is asked.

Validate Your Models Against Realistic Data

Download 100 free KYC-Enhanced UHNWI profiles. Run your model validation suite. Check whether your model handles the Pareto tail — the complex profiles where most real-world failures occur.

Compare the distribution of net worth, the frequency of offshore structures, the PEP rates, and the risk rating spread against what you currently validate with. The gap between those two distributions is the gap in your model validation coverage.

That gap is where the next fine comes from.

No credit card. No sales call. Just your work email.
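The comparison described above (net worth distribution, offshore frequency, PEP rates) can be sketched as a side-by-side summary of two datasets. This is a rough illustration under stated assumptions: `pep_status` is treated as a boolean and `offshore_vehicle` as empty or `None` when absent, which may not match the encoding in a real file, and both sample datasets are hypothetical.

```python
from statistics import median

def coverage_summary(records):
    """Summarise the tail and complexity signals of a validation dataset."""
    worth = sorted(rec["net_worth_usd"] for rec in records)
    mid = median(worth)
    return {
        "median_net_worth": mid,
        "max_to_median": worth[-1] / mid,  # crude indicator of tail weight
        "offshore_share": sum(1 for r in records if r.get("offshore_vehicle")) / len(records),
        "pep_share": sum(1 for r in records if r.get("pep_status")) / len(records),
    }

# Hypothetical current validation data: flat, simple, no offshore or PEP signals.
current = [
    {"net_worth_usd": w, "offshore_vehicle": None, "pep_status": False}
    for w in (2_000_000, 4_000_000, 6_000_000, 8_000_000)
]
# Hypothetical candidate data with a heavier tail and structural complexity.
candidate = [
    {"net_worth_usd": 40_000_000, "offshore_vehicle": "Cayman LP", "pep_status": False},
    {"net_worth_usd": 60_000_000, "offshore_vehicle": None, "pep_status": False},
    {"net_worth_usd": 90_000_000, "offshore_vehicle": "Trust", "pep_status": True},
    {"net_worth_usd": 400_000_000, "offshore_vehicle": "Foundation", "pep_status": False},
]

current_summary = coverage_summary(current)
candidate_summary = coverage_summary(candidate)
for key in current_summary:
    print(f"{key}: current={current_summary[key]:.2f} candidate={candidate_summary[key]:.2f}")
```

Large differences in any of these rows point to client structures the current validation data never exercises.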

Frequently Asked Questions

Why do wealth management AI models fail suitability assessments under MiFID II when trained on standard financial datasets?

Standard financial datasets are skewed toward mass-affluent and retail profiles, leaving ultra-high-net-worth individuals statistically underrepresented. MiFID II suitability assessments require models to accurately classify client risk tolerance and investment objectives across the full wealth spectrum. When training data does not reflect Pareto wealth distributions, models systematically misclassify UHNWI clients, producing non-compliant suitability outputs. Regulators have issued fines exceeding €5 million for suitability failures tied to biased model inputs. Synthetic data engineered to match real wealth distributions closes this validation gap directly.

How does synthetic UHNWI profile data address the Enhanced Due Diligence gaps that cause audit failures in WealthTech model validation?

UHNWI profiles are the hardest to synthesize realistically because they involve offshore holding structures, multi-jurisdictional residency, complex source-of-wealth narratives, and layered beneficial ownership. EDD requirements demand that models detect these patterns accurately, yet real UHNWI data is too scarce and sensitive to include in training sets. Synthetic profiles engineered to replicate these structural characteristics allow validation teams to stress-test EDD models against statistically credible edge cases, reducing the likelihood of false negatives that regulators flag during model risk reviews.

What validation coverage gaps emerge when WealthTech firms test PEP screening and sanctions models without statistically representative synthetic data?

PEP and sanctions screening models trained or validated on convenience samples typically see false negative rates of 15 to 30 percent on edge-case profiles involving dual nationality, name transliteration variants, or indirect beneficial ownership. EU AI Act Article 10 requires that high-risk AI systems use training and validation data that is sufficiently representative and free of errors. Synthetic datasets that preserve realistic correlations between PEP status, jurisdiction, source of wealth, and risk rating allow firms to achieve the coverage depth needed to satisfy both internal model risk governance and external supervisory review.

What does born-synthetic financial data mean, and why does that distinction matter specifically for WealthTech model validation?

Born-synthetic data is generated entirely from mathematical distributions such as the Pareto distribution for wealth concentration, with no source records derived from or linked to real individuals. There is zero data lineage to any natural person, meaning the dataset cannot be re-identified, reverse-engineered, or traced back to a client file. For WealthTech model validation this means teams can share datasets across environments and vendors without triggering GDPR Article 25 data protection by design obligations. Unlike anonymized or pseudonymized data, born-synthetic profiles carry no residual privacy risk, removing a major compliance bottleneck in model review workflows.

How can a WealthTech validation team get started testing with Sovereign Forger synthetic data before committing to a full deployment?

Sovereign Forger provides 100 free synthetic KYC profiles available for instant download via a work email address, with no credit card required. Each profile contains 29 interlocked fields covering risk ratings, PEP status, sanctions screening results, source-of-wealth verification, jurisdiction, and offshore exposure. The fields are statistically consistent with one another, meaning a high-risk rating correlates with adverse PEP or sanctions indicators at realistic base rates. This sample set is sufficient for a preliminary validation run to assess distributional fit before procurement discussions begin.

Learn more about WealthTech model validation data, and how Born-Synthetic data closes the validation gap, in our glossary and comparison guides.