Block: $120M. Western Union: $586M. MoneyGram: $125M. PayPal: multiple enforcement actions. These fines share a root cause: risk scoring models that were never calibrated against structurally complex clients, so they either flagged everything or caught nothing. This risk scoring data is built for exactly that scenario.

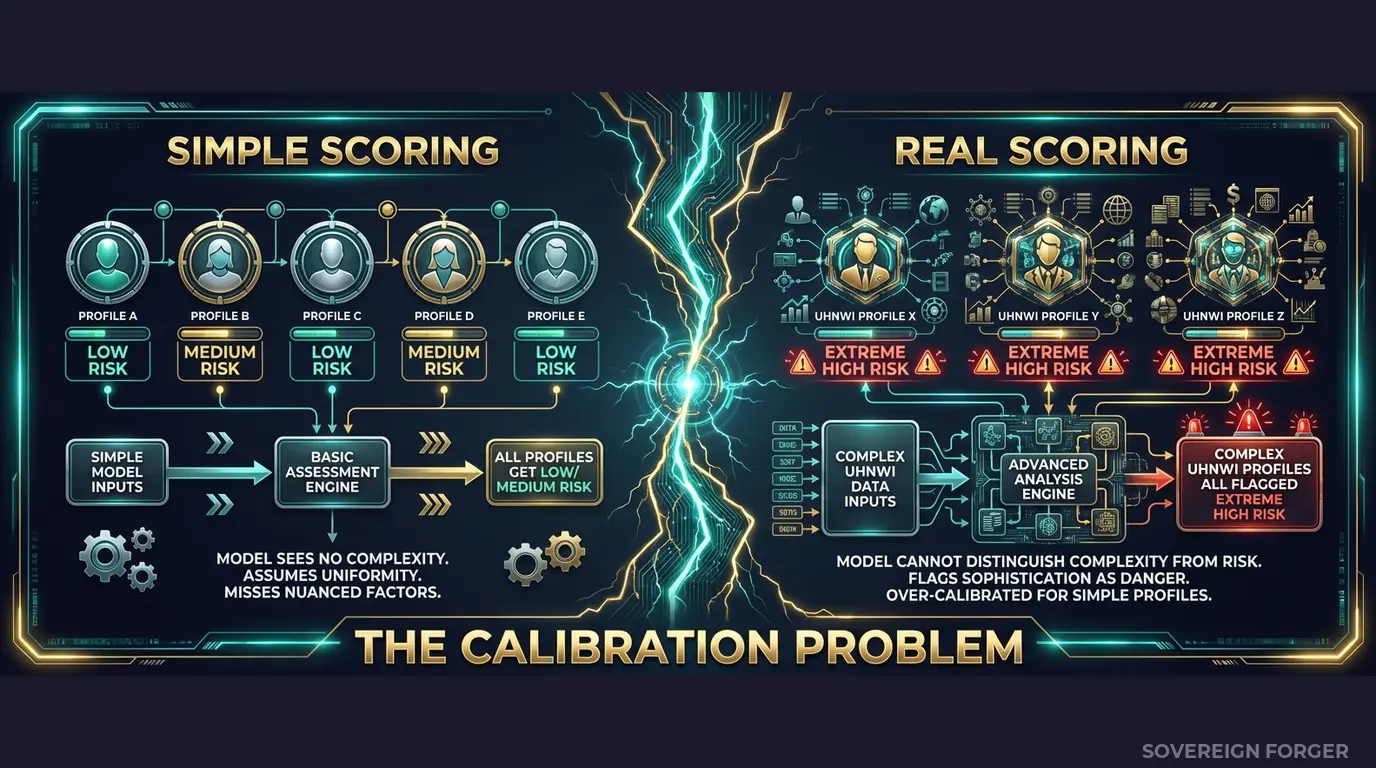

Your Risk Model Cannot Distinguish Complexity From Criminality

I spent years watching payment processors deploy risk scoring models that had been trained on one kind of client — the simple kind. Single jurisdiction, single currency, domestic transfers, salaried income. The model learned what “normal” looked like, and it was flat.

Then a legitimate business client routes a $2.4M wire from a Cayman holding company through a Singapore correspondent bank to a property settlement in Dubai. The risk model scores it at 98 out of 100. Maximum alert. Immediate escalation. The compliance team spends four hours investigating a transaction that is completely legal — a real estate developer moving capital through the exact structures their tax advisor set up for them.

This happens hundreds of times a day across every major payment processor. The model cannot tell the difference between a legitimate multi-jurisdictional wealth structure and a laundering scheme, because it has never seen a legitimate multi-jurisdictional wealth structure in its training data. Every complex pattern looks suspicious when the training set contains zero complex patterns that are clean.

The cost is not just the false positive itself. It is the cascade. When your risk model generates 400 false positives per day, your compliance analysts develop alert fatigue. They start rubber-stamping escalations. They miss the genuinely suspicious transaction buried in the noise — the one where the sanctions-adjacent entity is routing funds through three jurisdictions specifically to avoid detection. That transaction clears because no one had the bandwidth to examine it carefully. And six months later, FinCEN or the FCA comes asking questions.

This is the calibration problem. A risk scoring model trained without legitimate complexity in its data set has exactly two modes: flag everything complex, or miss everything subtle. Neither mode survives a regulatory examination.

The payment processing industry moves $2 trillion in cross-border volume daily. Every one of those transactions runs through a risk scoring model. If that model was calibrated on structurally flat data, the processor is operating with a systematic blind spot — and regulators have started measuring exactly how large that blind spot is.

Western Union paid $586M because their compliance controls failed on exactly this axis: the risk models did not adequately score the transactions that mattered. MoneyGram paid $125M for the same structural failure. Block paid $120M when regulators found that Cash App’s risk controls were insufficient for the transaction patterns flowing through the platform. In every case, the risk scoring infrastructure existed. It just had never been tested against realistic complexity.

Three Approaches That Break Risk Scoring

Training on production transaction data. Some teams extract real client transaction histories and feed them into risk model training. This creates two simultaneous problems. First, a GDPR Article 25 (data protection by design) violation: personal financial data sits in ML training environments with broader access, weaker controls, and often no data protection impact assessment. Second, a labeling problem: the real data only contains patterns you have already caught. Your model learns to detect yesterday’s laundering schemes. It learns nothing about the schemes it has never seen, because those schemes were, by definition, never flagged in the training data.

Using anonymized transaction records. Stripping names and account numbers from real payment data does not eliminate re-identification risk when the data includes transaction amounts, timestamps, currency pairs, and jurisdictions. For ultra-high-net-worth individuals (UHNWIs), of whom there are only 265,000 globally, the combination of transaction size, corridor, and frequency can uniquely fingerprint a person without any direct identifier. A regulator can argue that your “anonymized” training data is functionally pseudonymized, in which case GDPR applies in full. Meanwhile, your risk model has inherited every bias present in the original data: geographic bias, currency bias, entity-type bias.

Using generic synthetic generators. Platform-based generators produce synthetic transactions between synthetic entities — but the entities themselves are structurally flat. Single jurisdiction, no offshore vehicles, no PEP connections, no multi-entity ownership. The risk model trains on these profiles and learns that a transfer from a BVI-registered LP is inherently suspicious, because no legitimate BVI-registered LP existed in the training data. Your model has learned to equate structural complexity with risk, which is precisely the calibration failure that generates hundreds of false positives daily.
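The re-identification concern raised for anonymized records can be measured before any data reaches a training environment. The sketch below counts how many records are unique on a set of quasi-identifiers, a simple k-anonymity style check. The field names (`amount_band`, `corridor`, `monthly_frequency`) and the sample rows are hypothetical and chosen only for illustration.

```python
from collections import Counter


def unique_on_quasi_identifiers(records, quasi_identifiers):
    """Return the records whose quasi-identifier combination appears exactly once."""
    keys = [tuple(r[q] for q in quasi_identifiers) for r in records]
    counts = Counter(keys)
    return [r for r, key in zip(records, keys) if counts[key] == 1]


# Hypothetical "anonymized" rows: no names or account numbers remain.
records = [
    {"amount_band": "<10k", "corridor": "US-US", "monthly_frequency": 4},
    {"amount_band": "<10k", "corridor": "US-US", "monthly_frequency": 4},
    {"amount_band": "<10k", "corridor": "US-US", "monthly_frequency": 4},
    {"amount_band": ">1M", "corridor": "KY-SG-AE", "monthly_frequency": 1},
]

exposed = unique_on_quasi_identifiers(
    records, ["amount_band", "corridor", "monthly_frequency"]
)
print(f"{len(exposed)} of {len(records)} records are unique on their quasi-identifiers")
```

Any record that is unique on these columns is a candidate for re-identification even though no direct identifier is present, which is the pattern described for large, rare cross-border transfers.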

Real Data vs. Anonymized vs. Born-Synthetic

| Dimension | Real Data | Anonymized | Born-Synthetic |
|---|---|---|---|
| PII present | Yes | Residual | None |
| Re-identification risk | Certain | Probable (UHNWI) | Impossible |
| GDPR Art. 25 compliant | No | Disputed | Yes |
| EU AI Act Art. 10 | Violation | Unclear | Compliant |
| Certifiable for auditors | No | No | Yes (Certificate of Origin) |
| Captures legitimate complexity | Yes (biased) | Yes (biased) | Yes (unbiased) |
| False positive calibration | Inherits existing bias | Inherits existing bias | Clean baseline |
| Fine exposure | Up to 4% global revenue | Up to 4% global revenue | Zero |

Born-Synthetic KYC Data Built for Risk Model Calibration

The core problem with payment processor risk scoring is not the model architecture. It is the training data. A model that has only seen simple profiles will score every complex profile as high risk. A model trained on profiles that include legitimate offshore structures, multi-jurisdictional tax arrangements, and PEP-adjacent connections — properly labeled as low, medium, or high risk — learns to distinguish between structural complexity and genuine risk signals.

This is what I built Sovereign Forger to solve.

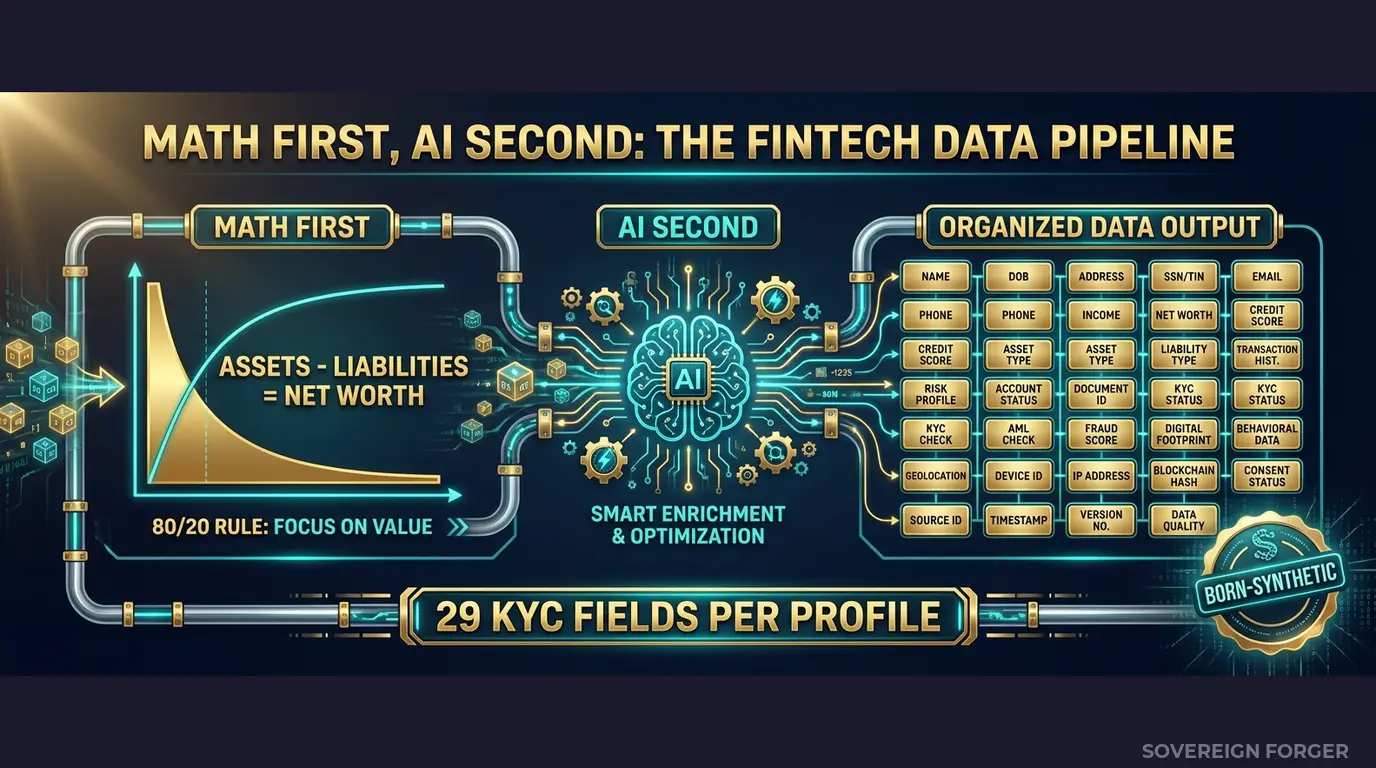

Every profile in the KYC-Enhanced dataset is generated from mathematical constraints — not derived from any real person. The generation pipeline works in two stages:

Math First. Net worth follows a Pareto distribution — the way real wealth is actually distributed, with the extreme right tail that drives the most complex compliance scenarios. Asset allocations are computed within algebraic constraints: Assets – Liabilities = Net Worth, by construction. Every balance sheet balances on every record. Zero exceptions. This means your risk model trains on financially coherent profiles, not random number combinations that would never exist in production.

AI Second. A local AI model running offline adds narrative context — biography, profession, philanthropic focus — after the financial figures are locked. The AI never touches the numbers. It enriches the profile with culturally coherent details that match the geographic niche and wealth tier. A Silicon Valley tech founder has a different biography than a Middle Eastern sovereign family member, because their wealth structures are fundamentally different — and your risk model needs to understand that difference.
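To make the "math first" idea concrete, here is a minimal sketch of how a balance sheet can be generated so that the accounting identity holds by construction. It is not Sovereign Forger's actual pipeline. The Pareto shape parameter, the $30M floor, and the leverage range are all assumptions picked for illustration; only the field names `net_worth_usd`, `total_assets`, and `total_liabilities` come from the dataset description.

```python
import random


def generate_balance_sheet(rng, floor_usd=30_000_000, alpha=1.5):
    """Draw a net worth from a Pareto tail, then derive assets and liabilities."""
    # paretovariate(alpha) returns a value >= 1, so net worth never drops below the floor.
    net_worth = round(floor_usd * rng.paretovariate(alpha))
    # Assumed leverage: liabilities as a share of total assets, between 5% and 40%.
    leverage = rng.uniform(0.05, 0.40)
    total_assets = round(net_worth / (1 - leverage))
    # Liabilities are derived, not sampled, so Assets - Liabilities = Net Worth exactly.
    total_liabilities = total_assets - net_worth
    return {
        "net_worth_usd": net_worth,
        "total_assets": total_assets,
        "total_liabilities": total_liabilities,
    }


rng = random.Random(42)  # fixed seed so the run is repeatable
profiles = [generate_balance_sheet(rng) for _ in range(1000)]
print(profiles[0])
```

The design point is that only two quantities are sampled (net worth and leverage). Everything else is computed from them, so no record can end up with a balance sheet that fails to balance.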

29 Fields That Map Directly to Risk Scoring Inputs

Every KYC-Enhanced profile includes the fields your risk scoring model actually consumes:

Identity & Geography: full_name, residence_city, residence_zone, tax_domicile

Wealth Structure: net_worth_usd, total_assets, total_liabilities, property_value, core_equity, cash_liquidity, assets_composition, liabilities_composition

Professional Context: profession, education, narrative_bio, philanthropic_focus

Offshore Exposure: offshore_jurisdiction, offshore_vehicle

KYC Signals: kyc_risk_rating, pep_status, pep_position, pep_jurisdiction, sanctions_screening_result, sanctions_match_confidence, adverse_media_flag, source_of_wealth_verified, sow_verification_method, high_risk_jurisdiction_flag

Why These Fields Matter for Risk Scoring Specifically

The risk scoring calibration problem is a labeling problem. Your model needs to learn that a profile with `offshore_jurisdiction: BVI`, `offshore_vehicle: limited_partnership`, and `kyc_risk_rating: low` is a legitimate wealth structure — not a red flag. It needs to learn that `pep_status: domestic` combined with `sanctions_screening_result: clear` and `source_of_wealth_verified: true` represents a politically exposed person who has been thoroughly vetted, not an automatic escalation.

Every KYC field in the Sovereign Forger dataset is deterministically derived from the profile’s archetype, niche, net worth, and jurisdiction — not randomly assigned. A tech founder in Silicon Valley gets different risk signals than a commodity trader in LatAm, because the underlying wealth structures create different compliance profiles. A Middle East sovereign family member with a Geneva tax domicile triggers different KYC patterns than a Pacific Rim shipping dynasty based in Singapore.

This deterministic derivation means the risk labels are structurally coherent. When a profile is labeled `kyc_risk_rating: high`, it is high for defensible reasons — PEP status, high-risk jurisdiction exposure, sanctions proximity. When it is labeled `low`, the underlying profile supports that assessment. Your risk model trains on internally consistent data, not randomly scattered labels.
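One way to check that kind of label coherence on any labeled KYC dataset is to confirm that every high rating is backed by at least one risk driver. The sketch below is an assumption-laden illustration: the value encodings (`"none"` for a non-PEP, `"clear"` for a clean sanctions screen, Python booleans for the flags) are guesses at how the fields might be represented, and the sample rows are made up.

```python
def risk_drivers(profile):
    """List the fields in a profile that would justify an elevated risk rating."""
    drivers = []
    if profile.get("pep_status", "none") != "none":
        drivers.append("pep_status")
    if profile.get("high_risk_jurisdiction_flag"):
        drivers.append("high_risk_jurisdiction_flag")
    if profile.get("sanctions_screening_result", "clear") != "clear":
        drivers.append("sanctions_screening_result")
    if profile.get("adverse_media_flag"):
        drivers.append("adverse_media_flag")
    return drivers


def unexplained_high_ratings(profiles):
    """Return profiles rated high that carry no supporting risk driver."""
    return [
        p for p in profiles
        if p["kyc_risk_rating"] == "high" and not risk_drivers(p)
    ]


# Made-up sample rows for illustration only.
sample = [
    {"kyc_risk_rating": "high", "pep_status": "foreign",
     "high_risk_jurisdiction_flag": True, "sanctions_screening_result": "clear"},
    {"kyc_risk_rating": "high", "pep_status": "none",
     "high_risk_jurisdiction_flag": False, "sanctions_screening_result": "clear"},
    {"kyc_risk_rating": "low", "pep_status": "domestic",
     "high_risk_jurisdiction_flag": False, "sanctions_screening_result": "clear"},
]

incoherent = unexplained_high_ratings(sample)
print(f"{len(incoherent)} high-rated profile(s) have no supporting driver")
```

Note that the check runs in one direction only. A PEP with a clean screen can legitimately be rated low, as described above, so the presence of a driver does not force a high rating.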

Built for Payment Processor Risk Model Calibration

6 Geographic Niches: Silicon Valley, Old Money Europe, Middle East, LatAm, Pacific Rim, Swiss-Singapore — the corridors where cross-border payment volume concentrates and where risk scoring complexity is highest.

31 Wealth Archetypes: Tech founders routing venture capital, commodity traders settling cross-border invoices, real estate developers moving capital across jurisdictions, family office managers executing portfolio rebalancing, private bankers facilitating client transactions — the actual sender and receiver profiles that your risk model encounters in production.

Deterministic Risk Signals: Risk ratings, PEP statuses, sanctions screening results, source-of-wealth verification, and high-risk jurisdiction flags distributed with realistic frequencies by niche. LatAm profiles carry higher risk ratings than Swiss profiles — not because of bias, but because the underlying regulatory landscape produces more high-risk indicators. Your model learns the actual distribution, not a uniform random assignment.

Cross-Border Complexity Built In: Every profile includes `tax_domicile`, `offshore_jurisdiction`, and `offshore_vehicle` — the three fields that determine whether a cross-border payment triggers enhanced scrutiny. Profiles span 6 geographic niches with realistic multi-jurisdictional structures: a LatAm agribusiness baron with a Panama trust and a Miami tax domicile, a Pacific Rim shipping executive with a Singapore holding company and a BVI offshore vehicle. These are the patterns your risk model must learn to score correctly.

Pricing

| Tier | Records | Price | Best For |
|---|---|---|---|
| Compliance Starter | 1,000 | $999 | Risk model proof of concept |
| Compliance Pro | 10,000 | $4,999 | Full model calibration suite |
| Compliance Enterprise | 100,000 | $24,999 | Production risk model training |

No SDK. No API key. No sales call. Download a file, load it into your ML pipeline, and measure whether your risk model can distinguish structural complexity from genuine risk.

Why This Matters Now

Enforcement is accelerating, and risk models are in scope. Most of the EU AI Act’s obligations apply from August 2026. Annex III classifies certain financial AI, such as credit scoring, as high-risk, and risk scoring models used for AML compliance may fall in scope depending on how they are deployed. Article 10 requires documented governance of training data for high-risk systems, including provenance, bias assessment, and GDPR compliance. If your risk model trains on real or anonymized client data, you need to prove compliance with both GDPR and the AI Act simultaneously. Born-Synthetic data eliminates this dual-compliance burden entirely.

The fines target risk model failures specifically. Block paid $120M when regulators found Cash App’s Bank Secrecy Act compliance program inadequate — including the risk-based transaction monitoring that should have caught suspicious activity. Western Union’s $586M settlement cited failures in their risk-based compliance program. These are not fines for missing a single transaction. They are fines for deploying risk infrastructure that was structurally unable to catch the patterns regulators expected it to catch. The training data is the foundation of that infrastructure.

False positive costs are measurable. Industry estimates put the cost of investigating a single AML false positive at $20-$50 in analyst time. A payment processor generating 400 unnecessary alerts per day spends $2.9M-$7.3M annually on investigations that find nothing — while genuine risks slip through the noise. Calibrating your risk model on structurally complex legitimate profiles reduces false positives by giving the model a baseline for what legitimate complexity looks like.
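The annual range follows directly from the per-alert estimate. The short calculation below reproduces it, assuming alerts arrive 365 days a year; a processor that only works alerts on business days would see a proportionally lower figure.

```python
def annual_false_positive_cost(alerts_per_day, cost_per_alert_usd, days_per_year=365):
    """Yearly analyst cost of investigating alerts that turn out to be false positives."""
    return alerts_per_day * cost_per_alert_usd * days_per_year


low_estimate = annual_false_positive_cost(400, 20)
high_estimate = annual_false_positive_cost(400, 50)
print(f"${low_estimate:,} to ${high_estimate:,} per year")
```

Swapping in your own alert volume and analyst cost gives a rough budget figure to weigh against the cost of recalibrating the model.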

The balance sheet test is open source. Every Sovereign Forger record passes algebraic validation: Assets – Liabilities = Net Worth. Run the Balance Sheet Test on our data, then run it on your current training data. If your current data fails basic financial coherence checks, your risk model is training on profiles that could not exist in reality.
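For readers who want to try this on their own training data, a check of this kind can be very small. The sketch below is not the published Balance Sheet Test, whose implementation may differ. It assumes each record is a dictionary with the dataset's `total_assets`, `total_liabilities`, and `net_worth_usd` fields, and the sample rows are invented.

```python
def balance_sheet_failures(records, tolerance=0):
    """Return (index, gap) for every record where Assets - Liabilities != Net Worth."""
    failures = []
    for index, record in enumerate(records):
        gap = (
            record["total_assets"]
            - record["total_liabilities"]
            - record["net_worth_usd"]
        )
        # Use a small non-zero tolerance if amounts are stored as floats.
        if abs(gap) > tolerance:
            failures.append((index, gap))
    return failures


# Invented rows: the second one is off by 1,000,000.
records = [
    {"total_assets": 120_000_000, "total_liabilities": 20_000_000, "net_worth_usd": 100_000_000},
    {"total_assets": 80_000_000, "total_liabilities": 10_000_000, "net_worth_usd": 69_000_000},
]

failures = balance_sheet_failures(records)
print(f"{len(failures)} of {len(records)} records fail the identity: {failures}")
```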

Every dataset ships with a Certificate of Sovereign Origin — documenting the born-synthetic methodology, zero PII lineage, and regulatory alignment. When your model validation team or an external auditor asks “what data did you train the risk model on?”, you hand them the certificate. Provenance documented. Compliance demonstrated. Conversation over.

Calibrate Your Risk Scoring Models

Download 100 free KYC-Enhanced UHNWI profiles with deterministic risk signals. Run them through your risk scoring pipeline. Test whether your model can distinguish between structural complexity and genuine risk.

Count how many legitimate profiles score above your escalation threshold — profiles with offshore vehicles, multi-jurisdictional tax structures, and PEP-adjacent connections that are correctly labeled as low or medium risk. If your model flags them all as high risk, you have measured the size of your false positive problem.

That measurement is the starting point for calibration.
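The count described above can be sketched in a few lines. Here `naive_score` is a hypothetical stand-in for your own model's scoring function, the threshold of 80 is an arbitrary example, and the profile values are invented; only the field names come from the dataset description.

```python
def measure_false_positives(profiles, score_fn, threshold):
    """Count legitimate (low/medium rated) profiles that score at or above the threshold."""
    legitimate = [p for p in profiles if p["kyc_risk_rating"] in ("low", "medium")]
    flagged = [p for p in legitimate if score_fn(p) >= threshold]
    return len(flagged), len(legitimate)


def naive_score(profile):
    """Hypothetical miscalibrated scorer: any offshore vehicle is near-maximum risk."""
    return 95 if profile.get("offshore_vehicle") else 20


# Invented profiles for illustration only.
profiles = [
    {"kyc_risk_rating": "low", "offshore_jurisdiction": "BVI", "offshore_vehicle": "limited_partnership"},
    {"kyc_risk_rating": "medium", "offshore_jurisdiction": "Panama", "offshore_vehicle": "trust"},
    {"kyc_risk_rating": "low", "offshore_jurisdiction": None, "offshore_vehicle": None},
    {"kyc_risk_rating": "high", "offshore_jurisdiction": "BVI", "offshore_vehicle": "limited_partnership"},
]

flagged, total = measure_false_positives(profiles, naive_score, threshold=80)
print(f"{flagged} of {total} legitimate profiles would be escalated")
```

Replacing `naive_score` with a call into your production model turns this into a repeatable regression check that can be rerun after each recalibration.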

No credit card. No sales call. Just your work email.

Related reading: PCI DSS Test Data — Why 4.0 Bans Real Cards in Test Environments, on how PCI DSS 4.0 Requirement 6.5.5 restricts the use of live card data in pre-production environments.

Frequently Asked Questions

How do payment processors use synthetic risk profiles to calibrate fraud detection models without exposing real cardholder data?

Payment processors train and validate risk scoring algorithms using synthetic profiles that replicate behavioral patterns across low, medium, and high risk tiers. PCI DSS 4.0, whose future-dated requirements became mandatory in March 2025, restricts the use of live card numbers (PANs) in pre-production environments under Requirement 6.5.5, making synthetic datasets the compliant default. Sovereign Forger profiles cover 29 interlocked fields including KYC risk ratings, jurisdiction flags, and PEP status, enabling engineers to stress-test scoring thresholds across realistic edge cases without regulatory exposure.

How can synthetic KYC profiles help payment processors satisfy cross-border AML requirements during model validation?

Cross-border AML frameworks require processors to demonstrate that risk models perform consistently across diverse jurisdictions, including high-risk corridors flagged by FATF. Synthetic KYC profiles from Sovereign Forger include jurisdiction-specific attributes, sanctions screening outcomes, and source-of-wealth indicators calibrated to match real-world distribution ratios. Compliance teams can validate that scoring models correctly weight these variables across 30-plus jurisdictions without creating audit trails tied to real individuals, satisfying PSR oversight requirements and internal model governance standards.

What edge cases in payment processor risk scoring are hardest to cover with real data, and how does synthetic data address them?

Genuine high-risk profiles involving PEPs with layered corporate structures, dual-nationality sanctions exposure, or source-of-wealth anomalies are statistically rare in real datasets, creating class imbalance that degrades model precision. Synthetic data generators can oversample these edge cases at controlled ratios, for example producing 15 percent PEP profiles versus a typical 0.3 percent real-world prevalence, without distorting training distributions. This allows risk scoring teams to tune decision boundaries for rare but consequential scenarios that would otherwise require years of operational data to accumulate.
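As a rough illustration of controlled oversampling, the sketch below resamples an existing pool to a target PEP share. It is a generic resampling technique, not a description of how any particular generator works. The `"none"` encoding for non-PEP profiles and the synthetic pool are assumptions.

```python
import random


def resample_to_pep_share(profiles, target_share, n, seed=0):
    """Build a sample of size n in which roughly target_share of profiles are PEPs."""
    rng = random.Random(seed)
    pep = [p for p in profiles if p["pep_status"] != "none"]
    non_pep = [p for p in profiles if p["pep_status"] == "none"]
    if not pep or not non_pep:
        raise ValueError("need at least one PEP and one non-PEP profile")
    n_pep = round(n * target_share)
    # Sampling with replacement: rare PEP profiles will be repeated.
    sample = rng.choices(pep, k=n_pep) + rng.choices(non_pep, k=n - n_pep)
    rng.shuffle(sample)
    return sample


# Assumed pool: 3 PEPs in 1,000 profiles, i.e. 0.3% prevalence.
pool = [{"id": i, "pep_status": "domestic" if i < 3 else "none"} for i in range(1000)]

training_set = resample_to_pep_share(pool, target_share=0.15, n=1000)
pep_count = sum(p["pep_status"] != "none" for p in training_set)
print(f"PEP share after resampling: {pep_count / len(training_set):.1%}")
```

Resampling a real pool repeats the same few rare records many times, which invites overfitting. A generator that produces distinct profiles at the target ratio avoids that repetition, which is the advantage the answer above points to.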

What does born-synthetic mean for payment processor risk scoring data, and why does it matter legally?

Born-synthetic data is generated entirely from mathematical distributions such as Pareto curves for wealth dispersion, with zero lineage to real individuals at any stage of production. No real person is anonymized, pseudonymized, or otherwise transformed to produce the output. For payment processors operating under GDPR Art. 25, this means data protection by design is satisfied by construction rather than by post-processing controls. There is no re-identification risk, no data subject rights burden, and no conflict with EU AI Act Art. 10 requirements for high-quality training data governance in automated risk scoring systems.

How can a payment processor team get started testing risk scoring models with synthetic KYC profiles?

Sovereign Forger provides 100 free KYC profiles with instant download via a work email address, no credit card required. Each profile contains 29 interlocked fields covering risk ratings across low, medium, and high tiers, PEP status, sanctions screening results, and source-of-wealth classification. The dataset is structured for immediate ingestion into model validation pipelines and covers the jurisdictional and demographic diversity needed to replicate realistic scoring scenarios. Teams can evaluate data fidelity against their existing model inputs before committing to a full production dataset.