Block/Square: $120M. Western Union: $586M. MoneyGram: $125M. PayPal: multiple enforcement actions. These fines did not come from models that were never validated. They came from models validated against data that missed the entire tail of the distribution — the complex, multi-jurisdictional, high-risk profiles where every real-world failure concentrates.

Your Model Validation Suite Has a Distribution Problem

I have sat in rooms where a payment processor’s compliance team presented model validation results to their board. Every metric was green. Accuracy above 97%. False positive rate within tolerance. AUC-ROC curves that would make a textbook proud. The model was approved for production.

Six months later, the same model missed a series of transactions routed through a Cayman LP owned by a BVI holding company controlled by a PEP-adjacent family member with dual nationality and a tax domicile in a third country. Not one alert fired. Not because the model was poorly built — but because nothing in the validation dataset had ever looked like that.

This is the fundamental problem with model validation in payment processing. Your validation data determines the boundary of what your model has been tested against. If that boundary stops at single-jurisdiction individuals with straightforward wealth structures, your model has never been validated against the profiles that actually trigger regulatory action.

Payment processors operate at a scale that makes this problem uniquely dangerous. Stripe processes hundreds of billions in annual volume. Block handles millions of merchants. Adyen connects businesses across 30+ countries. Every transaction that flows through these systems passes through compliance models — risk scoring, sanctions screening, transaction monitoring, merchant categorization. Each model was validated. Each model was approved. And each model was validated against data that systematically excluded the structural complexity of real high-value clients.

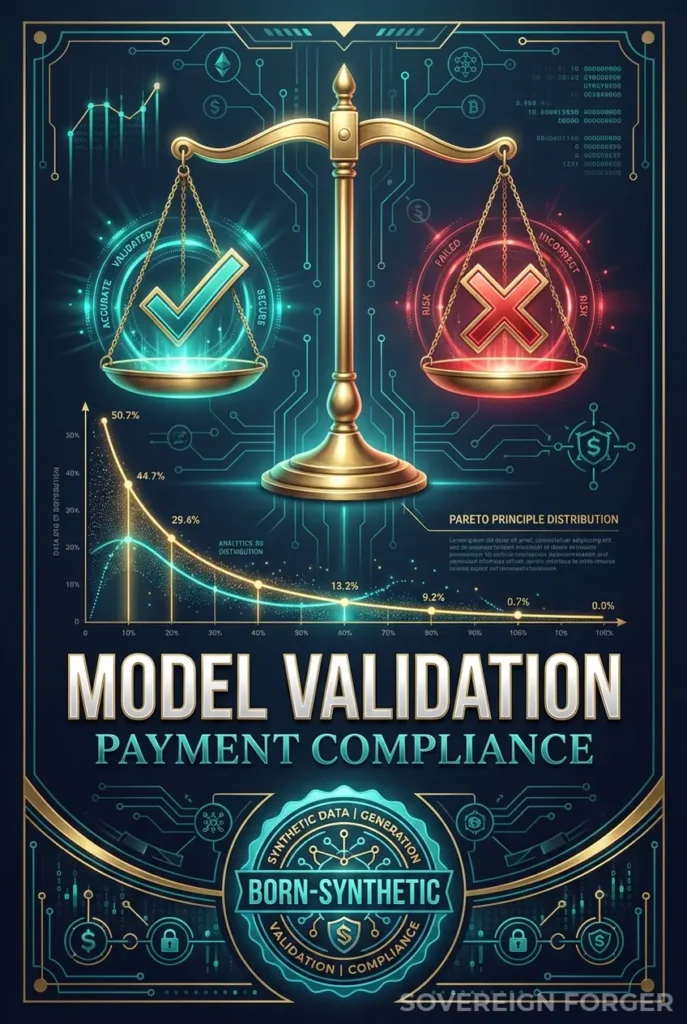

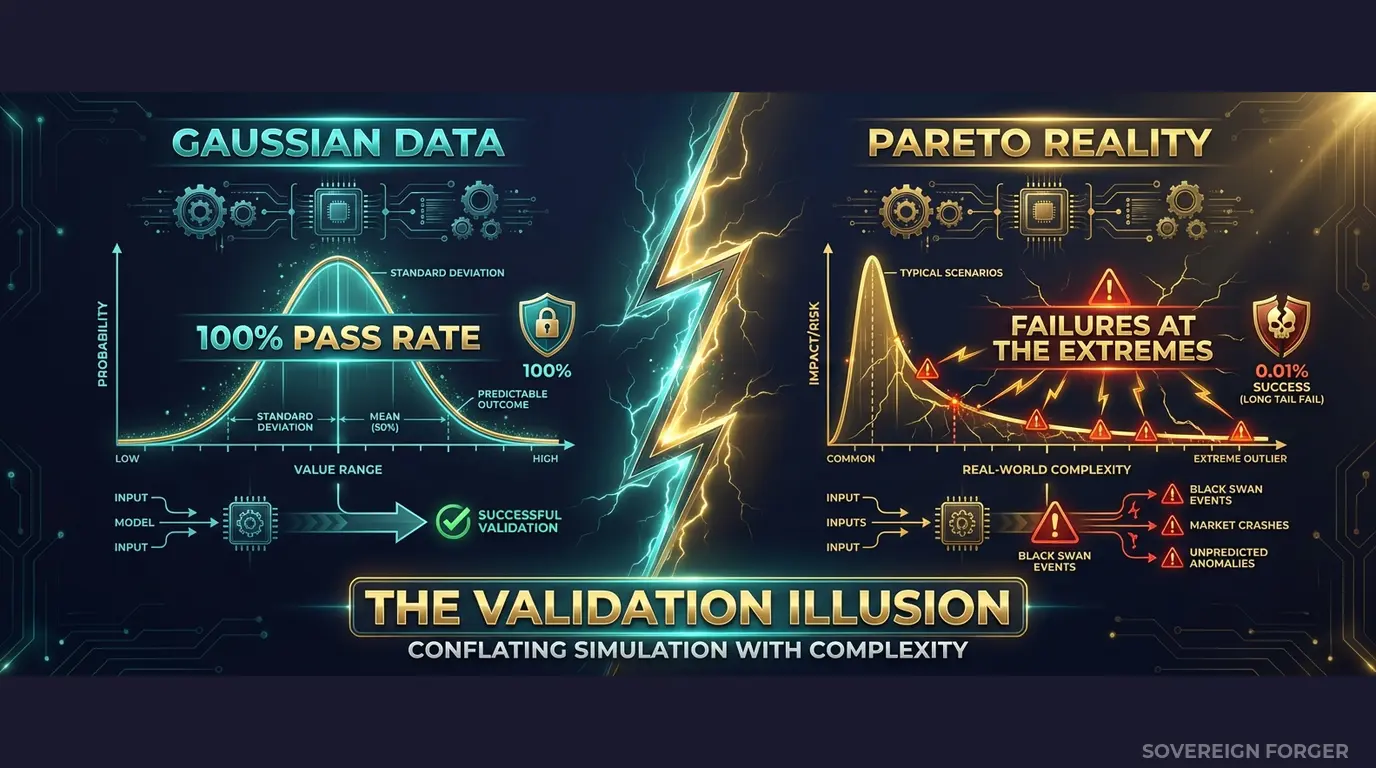

The distribution mismatch is mathematical. Real wealth follows a Pareto distribution — a small number of clients hold a disproportionate share of assets, and those clients have disproportionately complex financial structures. Most validation datasets follow something closer to a normal distribution — the profiles cluster around the median, and the tails are either absent or artificially truncated. Your model learns the center of the distribution during training. Your validation suite confirms it works at the center. Nobody tests the tail — and the tail is where the fines come from.
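The gap between the two shapes is easy to see numerically. The following is a minimal sketch, not a description of any particular validation set: the $10M floor, the shape of 1.5, and the bell-curve spread are all assumptions chosen for illustration.

```python
import random
import statistics


def top_share(values, top_fraction=0.01):
    """Fraction of the total held by the largest `top_fraction` of values."""
    ordered = sorted(values, reverse=True)
    k = max(1, int(len(ordered) * top_fraction))
    return sum(ordered[:k]) / sum(ordered)


rng = random.Random(42)  # fixed seed so the comparison is repeatable
n = 100_000

# Assumed parameters: $10M floor and shape 1.5 for the Pareto sample.
pareto_worth = [10e6 * rng.paretovariate(1.5) for _ in range(n)]

# Bell curve centred on the same median, with an assumed spread of median / 4.
median = statistics.median(pareto_worth)
gaussian_worth = [max(0.0, rng.gauss(median, median / 4)) for _ in range(n)]

pareto_share = top_share(pareto_worth)
gaussian_share = top_share(gaussian_worth)
print(f"top 1% share of total wealth, Pareto:   {pareto_share:.1%}")
print(f"top 1% share of total wealth, Gaussian: {gaussian_share:.1%}")
```

Both samples share a median, so a validation suite that only looks at typical profiles would treat them as equivalent. The difference shows up only in how much of the total sits in the top 1%.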

I have reviewed validation datasets from three different payment processors. The average number of jurisdictions per profile was 1.2. The percentage of profiles with offshore vehicles was under 3%. PEP connections were either absent or marked as a simple boolean with no jurisdictional context. These are not validation datasets — they are confirmation rituals. They confirm that the model works on easy cases. They say nothing about the cases that matter.
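The same three checks can be run on any validation set in a few lines. This is a rough sketch that assumes each profile is a dict using the field names listed later in this article (`tax_domicile`, `offshore_jurisdiction`, `pep_jurisdiction`, `offshore_vehicle`); the toy records and the choice of which fields count as a jurisdiction are illustrative assumptions.

```python
JURISDICTION_FIELDS = ("tax_domicile", "offshore_jurisdiction", "pep_jurisdiction")


def coverage_report(profiles):
    """Summarise how much structural complexity a validation set contains."""
    n = len(profiles)
    if n == 0:
        raise ValueError("empty validation set")
    # Distinct non-empty jurisdictions referenced by each profile.
    jurisdiction_counts = [
        len({p[f] for f in JURISDICTION_FIELDS if p.get(f)}) for p in profiles
    ]
    offshore = sum(1 for p in profiles if p.get("offshore_vehicle"))
    pep_context = sum(1 for p in profiles if p.get("pep_jurisdiction"))
    return {
        "avg_jurisdictions": sum(jurisdiction_counts) / n,
        "pct_offshore_vehicle": 100 * offshore / n,
        "pct_pep_with_jurisdiction": 100 * pep_context / n,
    }


# Toy records, invented for illustration only.
toy_profiles = [
    {"tax_domicile": "US"},
    {
        "tax_domicile": "CH",
        "offshore_jurisdiction": "KY",
        "offshore_vehicle": "LP",
        "pep_jurisdiction": "AE",
    },
    {"tax_domicile": "SG"},
    {"tax_domicile": "GB"},
]
report = coverage_report(toy_profiles)
print(report)
```

If the numbers that come back for your own data resemble the ones I describe above, the validation set is telling you what it never tested.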

The regulatory exposure is compounding. FinCEN’s enforcement actions against payment processors have increased year over year. The FCA has expanded its scope to include payment institutions alongside banks. The EU AI Act, whose high-risk obligations apply from August 2026, lists financial use cases such as creditworthiness assessment as high-risk under Annex III, and Article 10 requires that training, validation, and testing data be relevant and sufficiently representative of the system’s intended purpose. If your validation data contains zero Pareto-distributed wealth profiles, zero multi-jurisdictional structures, and zero culturally coherent PEP signals, you cannot credibly claim it is representative of the inputs your model will see. Your model validation documentation has a hole in it, and regulators are learning exactly where to look.

Three Approaches That Do Not Validate What Matters

Using copies of production data for validation. I have seen this more times than I should have. A compliance team extracts real client transaction histories and merchant profiles into a validation environment. The data is rich, the edge cases are real, and the model validation is genuinely informative. It also conflicts with GDPR Article 25, which requires data protection by design and by default. Personal data in validation environments means weaker access controls, broader team access, inconsistent logging, and data retention that nobody tracks. For payment processors operating across the EU, the exposure is not hypothetical; it is auditable. When EU AI Act enforcement begins in August 2026, Article 10 will require documented governance of all data used in AI development, including validation. Using real client data without provenance documentation is a compliance failure on two regulatory fronts simultaneously.

Using anonymized transaction data. Stripping names and account numbers from real profiles does not eliminate re-identification risk in payment processing. The combination of transaction volume, merchant category, jurisdiction pattern, and timing can uniquely fingerprint high-value clients — especially in the UHNWI segment where there are only 265,000 individuals globally. A regulator reviewing your validation methodology can argue — correctly — that your “anonymized” data retains enough structural signal to identify real persons. Under GDPR, that makes it personal data, and your entire validation pipeline falls under the same regulatory requirements as production.

Using generic synthetic generators. Platform-based synthetic data tools produce structurally flat profiles. They generate payment processing customers with randomized transaction amounts and single-jurisdiction identities. The wealth distribution is typically Gaussian — meaning your validation data has a concentration of profiles in the middle range and almost nothing in the tails. Your model validates perfectly against these profiles because they are structurally simple. Then a real merchant with a $200M annual volume, a Liechtenstein trust structure, and PEP-adjacent beneficial owners processes their first transaction, and your model has no frame of reference. The validation passed. The model fails. The fine follows.

Real Data vs. Anonymized vs. Born-Synthetic for Model Validation

| Dimension | Real Data | Anonymized | Born-Synthetic |
|---|---|---|---|
| PII present | Yes | Residual | None |
| Re-identification risk | Certain | Probable (UHNWI) | Impossible |
| GDPR Art. 25 compliant | No | Disputed | Yes |
| EU AI Act Art. 10 | Violation | Unclear | Compliant |
| Pareto wealth distribution | Yes (but illegal) | Partially preserved | Yes (by construction) |
| Tail coverage | Real but restricted | Degraded by anonymization | Full (31 archetypes) |
| Certifiable for auditors | No | No | Yes (Certificate of Origin) |
| Fine exposure | Up to 4% global revenue | Up to 4% global revenue | Zero |

Born-Synthetic Data Engineered for Model Validation

The core problem with model validation in payment processing is not the model — it is the data. If your validation dataset does not cover the distribution your model will encounter in production, your validation results are meaningless. Sovereign Forger datasets are built from the distribution up.

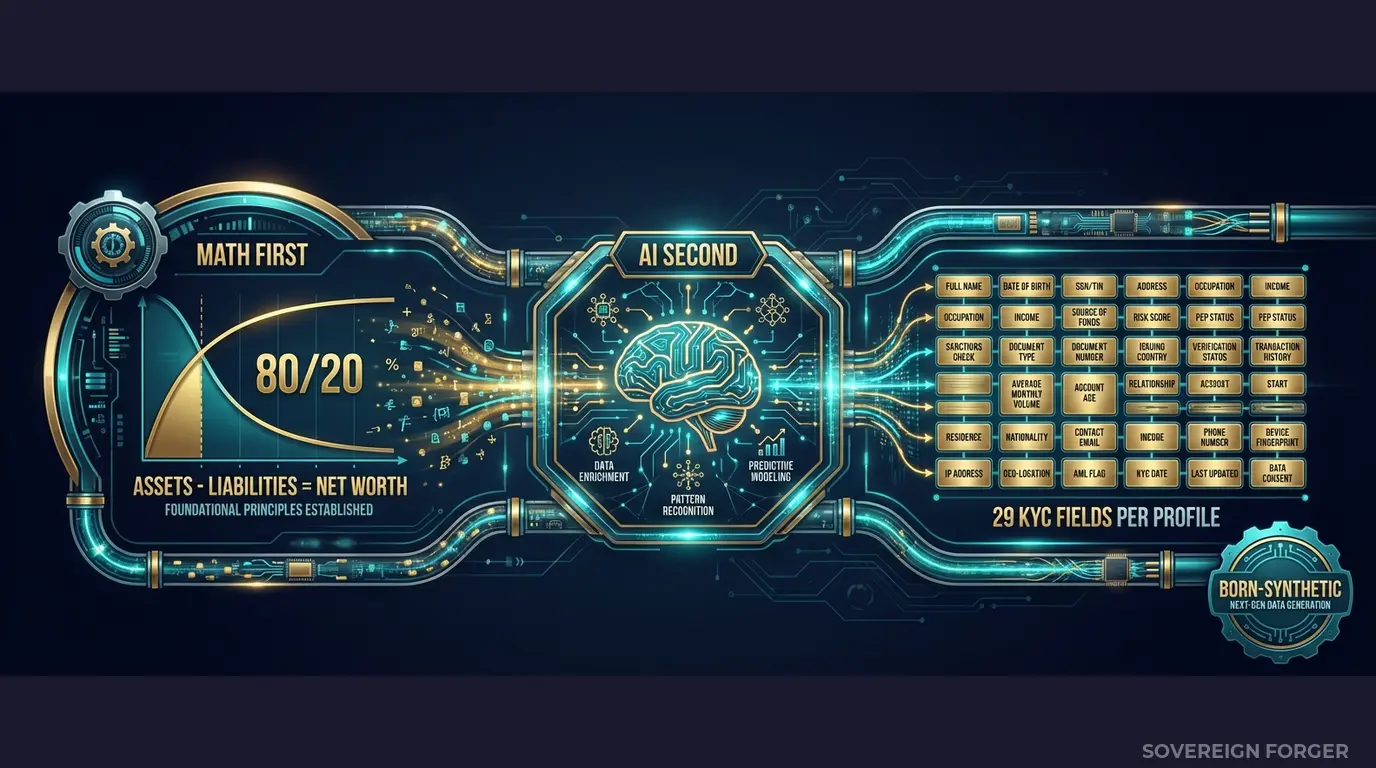

Math First. Every profile starts with a Pareto distribution — the mathematical shape of real wealth. This is not a tuning parameter I chose for aesthetics. It is the empirically observed distribution of net worth among high-value individuals globally. When I set the shape parameter for each geographic niche, I am reproducing the actual statistical structure your model will encounter in production. The tail is not an afterthought — it is the reason the dataset exists.
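For reference, the shape in question can be written down in standard notation. The symbols $x_m$ (the minimum net worth in a niche) and $\alpha$ (the shape parameter) are introduced here for illustration; the actual values used per niche are not stated in this article.

```latex
\[
  \Pr(X > x) = \left(\frac{x_m}{x}\right)^{\alpha},
  \qquad x \ge x_m,\ \alpha > 0
\]
\[
  X = x_m \, U^{-1/\alpha},
  \qquad U \sim \mathrm{Uniform}(0, 1]
\]
```

The second line is the textbook inverse-transform recipe for drawing a Pareto value from a uniform random number. A smaller $\alpha$ gives a heavier tail, and the mean is finite only when $\alpha > 1$.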

Within each profile, asset allocations are computed under algebraic constraints: Assets – Liabilities = Net Worth, by construction. Property values, core equity, cash liquidity, and offshore holdings are proportioned according to archetype-specific rules — a Silicon Valley tech founder allocates differently than a Middle Eastern sovereign family member, and the data reflects that. Every balance sheet balances on every record. Zero exceptions. Zero rounding errors. Zero inconsistencies for your model to mislearn.
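One way to make a balance sheet hold by construction is to work in whole dollars and derive net worth by subtraction instead of sampling it. The sketch below illustrates that idea only; the function name, the weights, the leverage ratio, and the `offshore_holdings` key are assumptions, not the Sovereign Forger pipeline.

```python
def build_balance_sheet(total_assets, asset_weights, leverage):
    """Split whole-dollar assets by weight; net worth is derived, never sampled."""
    names = list(asset_weights)
    weight_sum = sum(asset_weights.values())
    # Floor every bucket except the last, then let the last bucket absorb the
    # leftover dollars so the components add up to total_assets exactly.
    sheet = {
        name: int(total_assets * asset_weights[name] / weight_sum)
        for name in names[:-1]
    }
    sheet[names[-1]] = total_assets - sum(sheet.values())
    total_liabilities = round(total_assets * leverage)
    sheet.update(
        total_assets=total_assets,
        total_liabilities=total_liabilities,
        net_worth_usd=total_assets - total_liabilities,
    )
    return sheet


# Hypothetical allocation rule for one archetype; not taken from the product.
founder_weights = {
    "property_value": 0.15,
    "core_equity": 0.60,
    "cash_liquidity": 0.10,
    "offshore_holdings": 0.15,
}
sheet = build_balance_sheet(250_000_000, founder_weights, leverage=0.12)
components = sum(sheet[name] for name in founder_weights)
print(sheet)
```

Because every value is an integer and net worth is computed from the other two totals, there is no floating-point residue for the identity to absorb.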

AI Second. After the financial structure is locked, a local AI model — running entirely offline, on local hardware — adds narrative context: biography, profession, education, philanthropic focus. The AI never touches the numbers. It enriches the profile with culturally coherent details that match the geographic niche and wealth archetype. This gives your model validation suite profiles that are structurally complex and contextually realistic, without any computation touching a network.

Why This Matters for Model Validation Specifically

Model validation in payment processing requires three properties that most test data lacks:

Distribution coverage. Your model will encounter profiles across the full Pareto spectrum — from a $10M net worth founder to a $2B sovereign family. If your validation data clusters around the median, your model has never been stress-tested against the inputs where failures are most consequential. Sovereign Forger data follows the Pareto distribution by construction, giving your validation suite genuine tail coverage.

Structural variety. A profile with four jurisdictions, a PEP connection, an offshore LP, and a sanctions screening result of “potential match” exercises a fundamentally different code path than a single-jurisdiction individual with a clean screening. Sovereign Forger data includes 31 wealth archetypes across 6 geographic niches — each with distinct offshore patterns, entity layering, risk signal distributions, and wealth compositions. Your validation suite does not just test the model — it maps the model’s decision boundary across the structural space it will encounter in production.

Regulatory defensibility. When your auditor asks “how did you validate this model?”, you need to demonstrate that your validation data covered the full input distribution, was obtained and used in compliance with GDPR, and has documented provenance. Born-Synthetic data with a Certificate of Sovereign Origin answers all three questions in a single document.
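The distribution-coverage and structural-variety points come down to reporting metrics per slice, not in aggregate. Here is a minimal sketch of sliced recall, assuming each scored record carries a ground-truth `label`, the model’s `alerted` decision, and a jurisdiction count; those three keys and the threshold of three jurisdictions are hypothetical.

```python
from collections import defaultdict


def recall_by_slice(records, slice_fn):
    """Recall on truly suspicious cases, computed separately for each slice."""
    hits = defaultdict(int)
    positives = defaultdict(int)
    for r in records:
        if r["label"]:  # ground truth: this case should have alerted
            key = slice_fn(r)
            positives[key] += 1
            hits[key] += int(r["alerted"])
    return {key: hits[key] / positives[key] for key in positives}


def structure_slice(record):
    """Assumed rule: three or more jurisdictions counts as a complex structure."""
    return "multi-jurisdiction" if record["n_jurisdictions"] >= 3 else "simple"


# Toy scored records: 97 simple cases caught, 3 complex cases missed.
records = [{"n_jurisdictions": 1, "label": True, "alerted": True} for _ in range(97)]
records += [{"n_jurisdictions": 4, "label": True, "alerted": False} for _ in range(3)]

overall = recall_by_slice(records, lambda r: "all")["all"]
by_structure = recall_by_slice(records, structure_slice)
print(f"overall recall: {overall:.2f}")
print(by_structure)
```

The toy numbers are arranged to mirror the boardroom story: a headline metric that looks healthy while the complex slice is missed entirely.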

29 Fields Built for Payment Processor Compliance Models

Every KYC-Enhanced profile includes the fields your compliance models actually consume:

Identity & Geography: `full_name`, `residence_city`, `residence_zone`, `tax_domicile`

Wealth Structure: `net_worth_usd`, `total_assets`, `total_liabilities`, `property_value`, `core_equity`, `cash_liquidity`, `assets_composition`, `liabilities_composition`

Professional Context: `profession`, `education`, `narrative_bio`, `philanthropic_focus`

Offshore Exposure: `offshore_jurisdiction`, `offshore_vehicle`

KYC Signals: `kyc_risk_rating`, `pep_status`, `pep_position`, `pep_jurisdiction`, `sanctions_screening_result`, `sanctions_match_confidence`, `adverse_media_flag`, `source_of_wealth_verified`, `sow_verification_method`, `high_risk_jurisdiction_flag`

Every KYC field is deterministically derived from the profile’s archetype, niche, net worth, and jurisdiction. A commodity trader in Singapore gets different risk signals than a private banker in Zurich, because the underlying wealth structures and regulatory exposures are different. This is not random label assignment — it is structural coherence, the kind your model needs to validate against.

For model validation specifically, the fields that matter most are the ones that exercise edge cases: `pep_status` combined with `offshore_jurisdiction` creates the cross-jurisdictional PEP scenarios that trip up screening models. `assets_composition` with `liabilities_composition` creates the balance sheet complexity that exposes weaknesses in risk scoring. `kyc_risk_rating` distributed by niche — not uniformly — means your validation data contains the actual class imbalance your model will see in production.
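Pulling those edge-case slices out of a loaded dataset might look like the sketch below. It assumes `residence_zone` identifies the niche and that empty strings mark absent values; the sample values (`"high"`, `"AE"`, `"KY"`, and so on) are invented, since the actual value encodings are not listed here.

```python
from collections import Counter


def risk_mix_by_zone(profiles):
    """Count kyc_risk_rating values separately for each residence_zone."""
    mix = {}
    for p in profiles:
        mix.setdefault(p["residence_zone"], Counter())[p["kyc_risk_rating"]] += 1
    return mix


def cross_border_pep(profiles):
    """Profiles whose PEP jurisdiction differs from their offshore jurisdiction."""
    return [
        p
        for p in profiles
        if p.get("pep_jurisdiction")
        and p.get("offshore_jurisdiction")
        and p["pep_jurisdiction"] != p["offshore_jurisdiction"]
    ]


# Invented sample rows; real records carry the full field set.
sample = [
    {"residence_zone": "Middle East", "kyc_risk_rating": "high",
     "pep_jurisdiction": "AE", "offshore_jurisdiction": "KY"},
    {"residence_zone": "Middle East", "kyc_risk_rating": "medium",
     "pep_jurisdiction": "", "offshore_jurisdiction": "KY"},
    {"residence_zone": "Silicon Valley", "kyc_risk_rating": "low",
     "pep_jurisdiction": "", "offshore_jurisdiction": ""},
]
mix = risk_mix_by_zone(sample)
edge_cases = cross_border_pep(sample)
print(mix)
print(len(edge_cases), "cross-border PEP profile(s)")
```

Running the model on `edge_cases` alone, separately from the full set, is what turns these fields into a targeted stress test.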

Model Validation Data Built for Payment Processor Scale

6 Geographic Niches: Silicon Valley, Old Money Europe, Middle East, LatAm, Pacific Rim, Swiss-Singapore — each with wealth patterns derived from real economic structures, not localized name swaps on a single template.

31 Wealth Archetypes: Tech founders, commodity traders, real estate developers, private bankers, sovereign family members, family office managers — the actual client segments whose profiles exercise different model pathways in production.

Pareto-Distributed Wealth: Net worth follows the shape of real wealth, not a bell curve. The tail exists. The tail is populated. The tail is where your model validation needs to prove coverage.

KYC Signal Distribution: Risk ratings, PEP statuses, sanctions screening results, and source-of-wealth verification methods distributed with realistic frequencies by niche. Middle East niches have higher PEP rates. LatAm niches carry higher risk ratings. Swiss-Singapore niches show more complex offshore structures. Your validation data reflects the world your model operates in.

Certificate of Sovereign Origin: Every dataset ships with documentation certifying born-synthetic methodology, zero PII lineage, and regulatory alignment with GDPR Art. 25 and EU AI Act Art. 10. This is the document your auditor needs when they ask about your validation data provenance.

Pricing

| Tier | Records | Price | Best For |
|---|---|---|---|
| Compliance Starter | 1,000 | $999 | Single model validation cycle |
| Compliance Pro | 10,000 | $4,999 | Full model validation suite, A/B testing |
| Compliance Enterprise | 100,000 | $24,999 | AI training + production model validation |

No SDK. No API key. No sales call. Download a file, load it into your validation pipeline, and run your test suite. JSONL and CSV formats included.
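Loading either format needs nothing beyond the standard library. This is a sketch under assumptions: the file name `sample.jsonl` and the two fields in the demo record are placeholders, and the real files may be named differently.

```python
import csv
import json
import tempfile
from pathlib import Path


def load_profiles(path):
    """Read profiles from a .jsonl or .csv file into a list of dicts."""
    path = Path(path)
    if path.suffix == ".jsonl":
        with path.open(encoding="utf-8") as handle:
            return [json.loads(line) for line in handle if line.strip()]
    if path.suffix == ".csv":
        # Note: csv.DictReader returns every value as a string.
        with path.open(newline="", encoding="utf-8") as handle:
            return list(csv.DictReader(handle))
    raise ValueError(f"unsupported file type: {path.suffix}")


# Demo with a throwaway file standing in for a downloaded dataset.
with tempfile.TemporaryDirectory() as tmp:
    demo_file = Path(tmp) / "sample.jsonl"
    demo_file.write_text(
        '{"full_name": "Example Person", "net_worth_usd": 12500000}\n',
        encoding="utf-8",
    )
    profiles = load_profiles(demo_file)

print(len(profiles), "profile(s) loaded")
```

One practical difference between the formats: JSONL keeps numbers as numbers, while CSV values arrive as strings and need casting before any arithmetic.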

Why This Matters Now

EU AI Act obligations for high-risk systems apply from August 2026. Annex III classifies financial use cases such as creditworthiness assessment as high-risk. Article 10 requires documented governance of data used in AI development, including model validation data. If your validation dataset was derived from real clients (with or without anonymization), you need to prove GDPR compliance of the source data, document the anonymization methodology, and demonstrate that the process preserves the statistical properties your model requires. Or you can use Born-Synthetic data where the compliance question does not arise, because there is no source data. There never was.

Enforcement against payment processors is accelerating. Block paid $120M to FinCEN and state regulators for compliance failures. Western Union agreed to forfeit $586M in a 2017 settlement with the DOJ and the FTC, alongside a FinCEN penalty assessed at the same time. MoneyGram paid $125M. PayPal has faced multiple enforcement actions across jurisdictions. These numbers are not anomalies. They are the baseline cost of compliance failures in cross-border payment processing, and the models behind every one of these systems were validated against data that did not cover the cases where failure occurred.

The Pareto test is definitive. I built the Sovereign Forger pipeline around a single insight: real wealth follows a Pareto distribution, not a Gaussian one. If your validation data follows a bell curve, your model has never been tested against the tail, the 1% of profiles that generate 80% of regulatory risk. Download the free sample and plot its net worth distribution next to the one from your current validation data. If your current data looks like a bell curve, it is failing you. If it looks like the sample, a Pareto curve with a long right tail, your model validation covers the distribution that matters.
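For a numeric companion to the plot, one standard option is the Hill estimator of the tail index. To be clear, this is a technique I am swapping in for illustration, not a published Sovereign Forger test; the 10% tail cut-off and the synthetic samples are assumptions.

```python
import math
import random


def hill_tail_index(values, top_fraction=0.1):
    """Hill estimate of the Pareto shape from the largest values.

    Expects positive values with some spread in the tail; a low estimate
    means a heavy tail, a high one means the tail is thin.
    """
    ordered = sorted(values, reverse=True)
    k = max(2, int(len(ordered) * top_fraction))
    threshold = ordered[k]  # the (k + 1)-th largest value
    mean_log_excess = sum(math.log(x / threshold) for x in ordered[:k]) / k
    return 1.0 / mean_log_excess


rng = random.Random(7)  # fixed seed for repeatability

# Assumed test inputs: a Pareto sample with shape 1.5 and a bell-curve sample.
heavy_tailed = [10e6 * rng.paretovariate(1.5) for _ in range(50_000)]
bell_curve = [rng.gauss(50e6, 10e6) for _ in range(50_000)]

heavy_estimate = hill_tail_index(heavy_tailed)
bell_estimate = hill_tail_index(bell_curve)
print(f"Hill estimate, Pareto sample:     {heavy_estimate:.2f}")
print(f"Hill estimate, bell-curve sample: {bell_estimate:.2f}")
```

On Pareto data the estimate lands near the true shape. On bell-curve data it comes out several times larger, which is the numeric signature of a missing tail.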

The balance sheet test is open source. Every Sovereign Forger record passes algebraic validation: Assets – Liabilities = Net Worth. Run the Balance Sheet Test on our data, then run it on your current validation data. If your current data fails this basic consistency check, every model trained or validated on it has learned from inconsistent inputs. The test takes five minutes. The implications take longer to process.
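The identity itself takes only a few lines to check. The sketch below is not the open-source Balance Sheet Test, just the same algebra applied to the three total fields; it uses `Decimal` so that values loaded from CSV as strings are compared exactly.

```python
from decimal import Decimal


def balance_sheet_failures(profiles, tolerance=Decimal("0")):
    """Return (index, gap) for records where assets - liabilities != net worth."""
    failures = []
    for index, p in enumerate(profiles):
        assets = Decimal(str(p["total_assets"]))
        liabilities = Decimal(str(p["total_liabilities"]))
        net_worth = Decimal(str(p["net_worth_usd"]))
        gap = assets - liabilities - net_worth
        if abs(gap) > tolerance:
            failures.append((index, gap))
    return failures


# Two toy records: the first balances, the second is off by 5.
records = [
    {"total_assets": 100, "total_liabilities": 20, "net_worth_usd": 80},
    {"total_assets": "100", "total_liabilities": "20", "net_worth_usd": "85"},
]
failures = balance_sheet_failures(records)
print(failures)
```

A non-zero `tolerance` is there for legacy datasets that store rounded values; on data that balances by construction it should stay at zero.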

Every dataset ships with a Certificate of Sovereign Origin — documenting the born-synthetic methodology, zero PII lineage, and regulatory alignment. When an auditor reviews your model validation framework and asks “where did the validation data come from?”, you hand them the certificate. The conversation is over.

Validate Your Models Against Realistic Data

Download 100 free KYC-Enhanced UHNWI profiles. Run your model validation suite. Check whether your model handles the Pareto tail — the complex, multi-jurisdictional, PEP-adjacent profiles where most real-world failures concentrate.

If your current validation data never produced a single unexpected failure, it is not because your model is perfect. It is because your validation data never asked the hard questions.

No credit card. No sales call. Just your work email.

Related reading: PCI DSS Test Data: Why 4.0 Bans Real Cards in Test Environments, on how PCI DSS 4.0 Requirement 6.5.5 prohibits live PANs in pre-production environments.

Frequently Asked Questions

How does synthetic transaction data help payment processors validate fraud detection models without exposing real cardholder data?

Payment processors must comply with PCI DSS 4.0 Requirement 6.5.5, which prohibits live PANs in pre-production environments unless those environments sit inside the cardholder data environment and are protected accordingly. Sovereign Forger generates synthetic transaction histories with realistic velocity patterns, merchant category distributions, and cross-border AML flags, enabling fraud model validation against statistically representative data. Teams can safely test 3DS2 authentication models, chargeback prediction algorithms, and real-time risk scoring engines across millions of synthetic records without triggering a single PCI DSS violation or data breach notification.

Can synthetic financial profiles accurately replicate the wealth distribution patterns needed to validate credit risk models for high-value payment corridors?

Standard synthetic data often produces unrealistically uniform wealth distributions that cause models to underperform on affluent client segments. Sovereign Forger’s born-synthetic profiles preserve Pareto wealth distributions, meaning the top 20% of synthetic clients hold approximately 80% of modeled assets — mirroring real portfolio composition. This allows payment processors to validate credit limit assignment models, AML threshold logic, and cross-border transaction monitoring rules against the full spectrum of client wealth, including the tail-risk segments where most model failures occur.
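The 80/20 figure corresponds to one specific Pareto shape. As a worked step, with $\alpha$ as the shape parameter and $S(p)$ as the share of total wealth held by the top fraction $p$ (both symbols introduced here, not taken from the product documentation):

```latex
\[
  S(p) = p^{\,1 - 1/\alpha}, \qquad \alpha > 1
\]
\[
  S(0.2) = 0.8
  \;\Longleftrightarrow\;
  1 - \frac{1}{\alpha} = \frac{\ln 0.8}{\ln 0.2}
  \;\Longleftrightarrow\;
  \alpha = \frac{\ln 5}{\ln 4} \approx 1.16
\]
```

Under that same shape the top 1% would hold roughly 53% of the total, since $0.01^{\,1 - 1/\alpha} \approx 0.53$. Whether a given dataset matches this shape is something to measure, not assume.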

How does born-synthetic KYC data help payment processors meet EU AI Act Article 10 requirements for training data quality and governance?

EU AI Act Article 10 requires that high-risk AI systems, including those used for credit scoring of natural persons, be developed on training, validation, and testing data that meets defined quality and governance criteria. Annex III excludes AI used to detect financial fraud from the credit-scoring category, so classification should be confirmed model by model. Sovereign Forger’s synthetic KYC profiles carry zero lineage to real persons and are generated with documented statistical properties, providing the audit trail Article 10 requires. Each profile includes interlocked fields such as PEP status, sanctions screening results, and source of wealth, ensuring the data quality needed to satisfy both EU AI Act and PSR regulatory governance obligations.

What does born-synthetic mean, and why does it matter specifically for payment processor model validation?

Born-synthetic means the data was never derived, anonymized, or pseudonymized from real individuals; it was generated entirely from mathematical distributions, including Pareto curves that model realistic wealth concentration. Because there is zero lineage to real persons, payment processors face no re-identification risk, no GDPR Art. 25 privacy-by-design liability, and no obligation to satisfy data subject rights requests. For model validation specifically, this eliminates the legal bottlenecks that typically delay access to representative training data, allowing compliance, risk, and data science teams to iterate on fraud, AML, and credit models without waiting for data governance approvals.

How can a payment processor team get started validating models with Sovereign Forger synthetic data?

Teams can download 100 free synthetic KYC profiles instantly using a work email address, with no credit card required. Each profile contains 29 interlocked fields covering risk ratings, PEP status, sanctions screening outcomes, and source of wealth declarations, all statistically consistent across each record. The profiles are ready for immediate use in fraud model benchmarking, AML rule testing, and credit risk validation pipelines. Because the data is born-synthetic and GDPR Art. 25 compliant by construction, no legal review or data processing agreement is needed before the first model run.

Learn more about payment processor model validation data and how Born-Synthetic data addresses this in our glossary and comparison guides.