Block: $120M. Western Union: $586M. MoneyGram: $125M. PayPal: multiple enforcement actions across jurisdictions. Every one of these cases involved monitoring and compliance controls that failed to separate suspicious cross-border activity from legitimate flows, and the common thread is calibration data that never contained realistic multi-jurisdictional patterns. This transaction monitoring data is built for exactly that scenario.

Your Transaction Monitoring System Is Calibrated Against the Wrong Reality

I have spent years watching payment processors build transaction monitoring systems that work perfectly in test environments and then drown in false positives the moment they go live. The pattern is always the same.

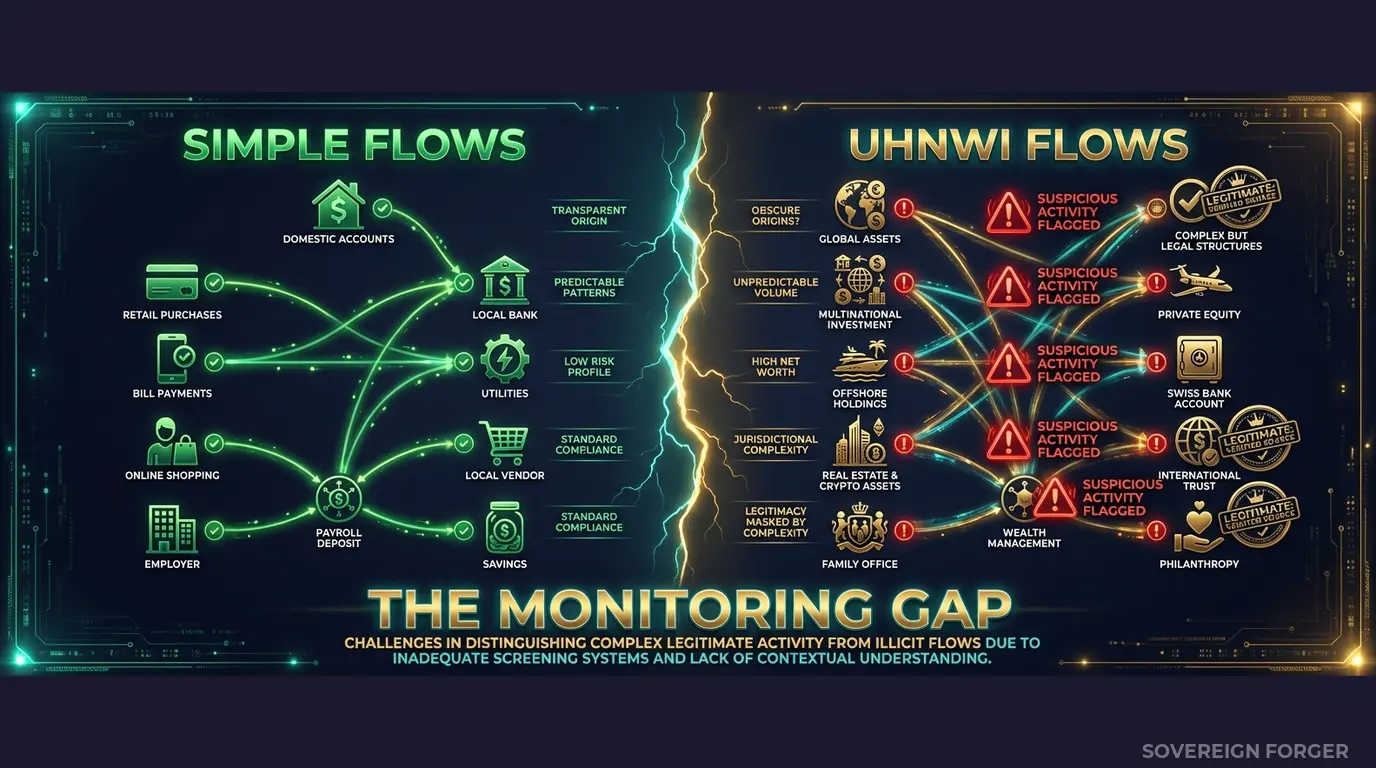

The compliance team calibrates their monitoring thresholds using test profiles — synthetic or sampled — that represent single-jurisdiction customers moving money in one currency through one corridor. Domestic sender, domestic receiver, clean payment chain. The thresholds are set. The rules are tuned. Alert volumes look manageable. Everyone signs off.

Then the system hits production. A tech founder in Palo Alto sends $2.4M through a Delaware LLC to a property SPV registered in the Cayman Islands. The funds route through Stripe, clear in USD, convert to GBP, and settle against a trust account at a Swiss private bank. The monitoring system has never seen this shape of transaction. It flags it. It flags the next one too. And the one after that. Within a week, the compliance team is buried under thousands of alerts — most of them legitimate wealth movements that the system was never trained to recognize.

This is the fundamental problem with transaction monitoring at payment processors. You are not a bank with a fixed client base. You are infrastructure. Stripe processes payments for millions of businesses. Adyen handles cross-border commerce across 200+ markets. Block moves money between consumers, merchants, and financial institutions simultaneously. PayPal sits at the intersection of consumer payments, merchant services, and cross-border remittance.

Every one of these flows generates transactions that cross jurisdictions. A significant percentage involve entities — LLCs, trusts, holding companies — not just individuals. And the high-value clients, the ones who move the most volume through your platform, are exactly the ones whose wealth structures are most complex. They have offshore vehicles. They have multiple tax domiciles. They have PEP-adjacent connections through board seats or family members. They are, by definition, the profiles that transaction monitoring systems must handle correctly — and they are the profiles that never appear in standard test data.

The calibration gap is measurable. If your test data contains zero offshore jurisdictions, zero multi-entity ownership chains, and zero PEP exposure, then every threshold your monitoring system uses was set without accounting for the transaction patterns these profiles generate. Your false positive rate is not a tuning problem — it is a data problem. The system is doing exactly what you trained it to do. You trained it on the wrong reality.

The regulatory consequence is predictable. When Western Union paid $586M in penalties, the core finding was that their compliance program failed to detect and report suspicious transactions — transactions that involved cross-border corridors and high-risk jurisdictions. When Block paid $120M, regulators cited inadequate transaction monitoring controls. When MoneyGram settled for $125M, the issue was the same: the monitoring system did not catch what it should have caught, because it was never calibrated to recognize the patterns that matter.

I built Sovereign Forger’s KYC profiles specifically to fill this gap. Not with more random data, but with structurally complex profiles that reflect how high-net-worth individuals actually move money across borders — the exact patterns your transaction monitoring system needs to learn.

Three Approaches That Don’t Work for Transaction Monitoring Calibration

Payment processors face a unique version of the test data problem. You are not just onboarding clients — you are monitoring ongoing transaction flows across millions of payment events per day. The calibration data needs to represent not just who the client is, but how their wealth structure generates transaction patterns. Most approaches fail at this intersection.

Using copies of production data. Some teams extract real transaction logs and client profiles into test environments. For a payment processor handling cross-border flows, this is a GDPR Article 25 violation waiting to happen: the data includes real sender and receiver identities, real jurisdictions, and real payment amounts. The moment this data enters a test environment with broader access, you have personal data in an environment with weaker controls. Under the EU AI Act, whose obligations for the high-risk systems listed in Annex III apply from August 2026, any monitoring model that qualifies as high-risk and trains on this data falls under Article 10, which requires documented governance of training data, including its origin. You need to prove you had the right to use it, and for cross-border data that means satisfying data protection law in every jurisdiction the data touches.

Using anonymized transaction records. Stripping names and account numbers from real payment flows does not eliminate re-identification risk. Payment processors handle transactions with highly distinctive patterns — a $3.2M wire from a Delaware entity to a BVI vehicle through a Singapore clearing account is not anonymous just because you removed the name. The combination of amount, corridor, timing, and entity type can re-identify the sender in a population of 265,000 UHNWIs. A regulator can argue — and increasingly does argue — that this data remains pseudonymized under GDPR, not anonymized. Full compliance obligations still apply.
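The re-identification argument can be checked mechanically on your own anonymized extract. A minimal sketch, assuming each record has already been reduced to coarse quasi-identifiers (the column names `amount_band`, `corridor`, `week`, and `entity_type` are hypothetical stand-ins for amount, corridor, timing, and entity type): count how many records share each combination, and treat any combination that occurs exactly once (k = 1) as a re-identification target.

```python
from collections import Counter
from typing import Iterable, Mapping, Sequence

# Hypothetical quasi-identifier columns: amount, corridor, timing, entity type.
QUASI_IDENTIFIERS = ("amount_band", "corridor", "week", "entity_type")


def equivalence_classes(records: Iterable[Mapping[str, str]],
                        keys: Sequence[str] = QUASI_IDENTIFIERS) -> Counter:
    """Count how many records share each combination of quasi-identifier values."""
    return Counter(tuple(r[k] for k in keys) for r in records)


def k_anonymity(records: Iterable[Mapping[str, str]],
                keys: Sequence[str] = QUASI_IDENTIFIERS) -> int:
    """Size of the smallest equivalence class; k = 1 means some record is unique."""
    return min(equivalence_classes(records, keys).values())


def unique_records(records: Iterable[Mapping[str, str]],
                   keys: Sequence[str] = QUASI_IDENTIFIERS) -> int:
    """Number of records whose quasi-identifier combination appears exactly once."""
    return sum(1 for n in equivalence_classes(records, keys).values() if n == 1)


anonymized = [
    # Delaware entity -> Singapore clearing -> BVI vehicle: a one-off shape.
    {"amount_band": "3M-5M", "corridor": "US-DE>SG>VG", "week": "2024-W14", "entity_type": "LLC"},
    {"amount_band": "10K-50K", "corridor": "US>US", "week": "2024-W14", "entity_type": "individual"},
    {"amount_band": "10K-50K", "corridor": "US>US", "week": "2024-W14", "entity_type": "individual"},
]
print(k_anonymity(anonymized), unique_records(anonymized))  # 1 1
```

Removing names does not change these counts, because names are not among the keys: the high-value wire stays in a class of one.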

Using generic synthetic generators. Platform-based generators produce flat profiles with random transaction attributes. They generate “a person” who “sends money” to “another person” — no offshore vehicles, no multi-jurisdictional wealth structures, no PEP exposure, no entity layering. Your monitoring system trains on these profiles and learns that wealth is simple and transactions are domestic. Then a real client routes a legitimate asset reallocation through three jurisdictions and two entity types, and the system flags it because it has never seen this shape before. Your false positive rate explodes — not because the rules are wrong, but because the training data was structurally insufficient.

Real Data vs. Anonymized vs. Born-Synthetic

| Dimension | Real Data | Anonymized | Born-Synthetic |
|---|---|---|---|
| PII present | Yes | Residual | None |
| Re-identification risk | Certain | Probable (UHNWI) | Impossible |
| GDPR Art. 25 compliant | No | Disputed | Yes |
| EU AI Act Art. 10 | Violation | Unclear | Compliant |
| Cross-border patterns | Authentic but restricted | Degraded by stripping | Structurally coherent |
| Certifiable for auditors | No | No | Yes (Certificate of Origin) |
| Fine exposure | Up to 4% global revenue | Up to 4% global revenue | Zero |

Born-Synthetic KYC Data Built for Transaction Monitoring Calibration

The reason your transaction monitoring system generates too many false positives — or worse, misses truly suspicious patterns — is that it was calibrated against profiles that do not reflect how wealth actually moves across borders. Sovereign Forger solves this at the data layer.

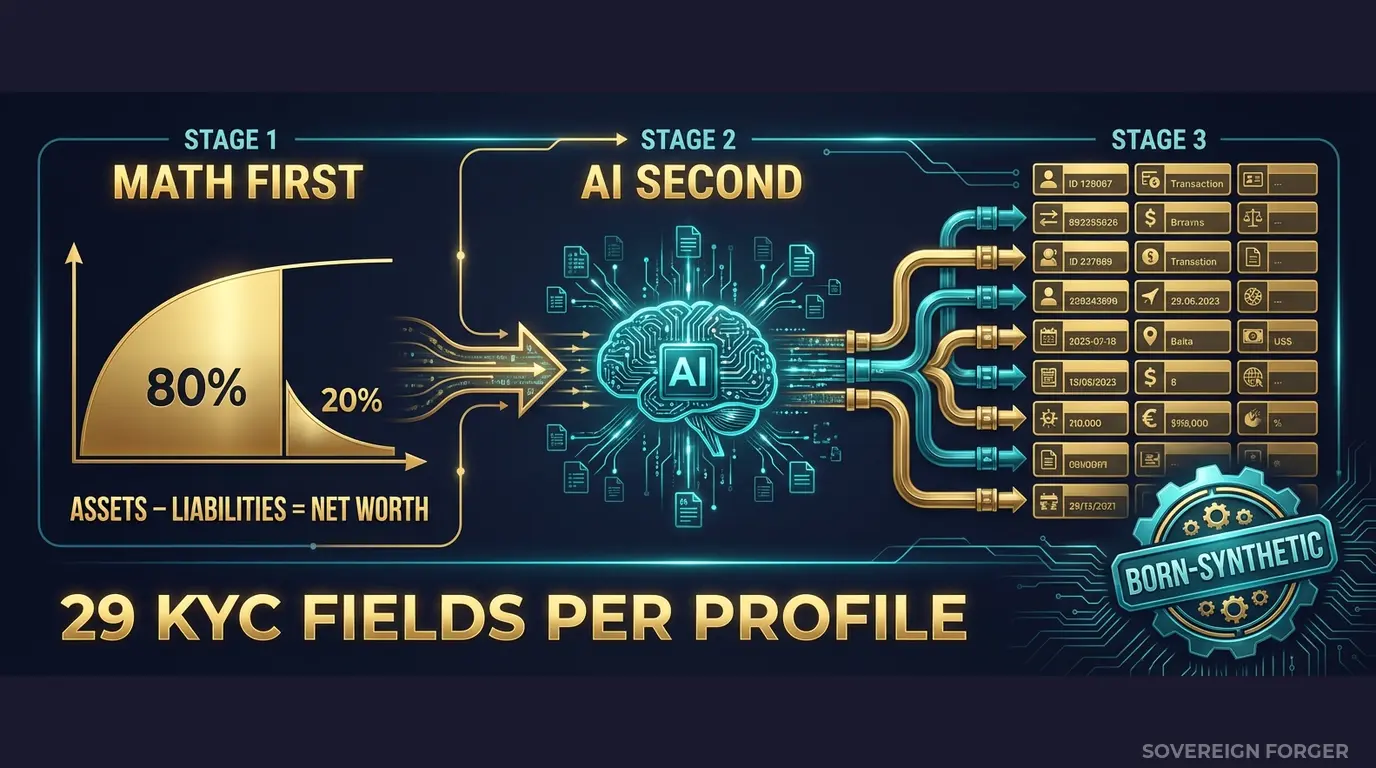

Every profile in the Sovereign Forger KYC dataset is generated from mathematical constraints — not derived from any real person. The generation pipeline works in two stages:

Math First. Net worth follows a Pareto distribution — the way real wealth is distributed, not a bell curve. Asset allocations are computed within algebraic constraints: Assets – Liabilities = Net Worth, by construction. Every balance sheet balances on every record. Zero exceptions. This matters for transaction monitoring because the asset composition directly determines what kind of transactions a profile generates. A client with 40% in core equity and 15% in offshore vehicles produces fundamentally different payment flows than a client with 80% in cash liquidity and zero offshore exposure.
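A minimal sketch of what "math first" can look like, assuming a Pareto shape of 1.5 and a $30M floor (illustrative values, not Sovereign Forger's actual parameters): draw net worth from the Pareto tail, choose a leverage ratio, and derive liabilities and assets from those two so the identity holds exactly. Working in whole dollars avoids floating-point drift in the check, and the hypothetical `split_assets` helper lets the last asset class absorb rounding so the composition always sums to total assets.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class BalanceSheet:
    total_assets: int
    total_liabilities: int
    net_worth_usd: int


def sample_balance_sheet(rng: random.Random,
                         alpha: float = 1.5,              # illustrative Pareto shape
                         floor_usd: int = 30_000_000,     # illustrative UHNWI floor
                         max_leverage: float = 0.3) -> BalanceSheet:
    """Draw net worth first, then derive liabilities and assets around it."""
    net_worth = round(floor_usd * rng.paretovariate(alpha))  # heavy-tailed, >= floor
    # Liabilities as a share of total assets, converted to a multiple of net worth.
    share = rng.uniform(0.0, max_leverage)
    liabilities = round(net_worth * share / (1.0 - share))
    assets = net_worth + liabilities  # Assets - Liabilities = Net Worth, exactly
    return BalanceSheet(assets, liabilities, net_worth)


def split_assets(total_assets: int, weights: dict[str, float]) -> dict[str, int]:
    """Allocate assets across classes; the last class absorbs rounding so parts sum exactly."""
    names = list(weights)
    total_w = sum(weights.values())
    parts = {n: int(total_assets * weights[n] / total_w) for n in names[:-1]}
    parts[names[-1]] = total_assets - sum(parts.values())
    return parts


rng = random.Random(42)
sheet = sample_balance_sheet(rng)
alloc = split_assets(sheet.total_assets,
                     {"property": 0.25, "cash_liquidity": 0.20, "offshore": 0.15, "core_equity": 0.40})
assert sheet.total_assets - sheet.total_liabilities == sheet.net_worth_usd
assert sum(alloc.values()) == sheet.total_assets
```

The 40% core equity and 15% offshore weights mirror the example above; in a fuller generator these weights could themselves vary by archetype.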

AI Second. A local AI model adds narrative context — biography, profession, philanthropic focus — after the financial figures are locked. The AI never touches the numbers. It enriches the profile with culturally coherent details that match the geographic niche and wealth tier. This narrative layer is what separates a useful test profile from a row of numbers — your compliance analysts need to understand why a transaction pattern makes sense for a given client, not just whether it triggers a rule.

How This Solves Transaction Monitoring Specifically

Transaction monitoring systems fail when they encounter patterns that fall outside their calibration range. For payment processors, the critical patterns are:

Cross-border wealth flows. Every Sovereign Forger profile includes `tax_domicile`, `offshore_jurisdiction`, and `offshore_vehicle` — the three fields that determine whether a client’s transactions cross borders legitimately. A tech founder domiciled in the US with a Cayman LP holding generates different cross-border patterns than a commodity trader domiciled in Singapore with a BVI trust. Your monitoring system needs to see both shapes during calibration, not just the first one.

Jurisdiction risk correlation. The `high_risk_jurisdiction_flag` field is deterministically derived from the profile’s offshore exposure and tax domicile. This means your monitoring rules can be calibrated against profiles that realistically combine high-risk jurisdictions with wealth structures that make those jurisdictions appropriate — not profiles where “high risk” is randomly assigned with no structural context.
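To illustrate what "deterministically derived" can mean, here is one plausible shape for such a rule. It is a sketch, not Sovereign Forger's actual derivation, and the jurisdiction codes are placeholders from the ISO 3166 user-assigned range rather than real risk ratings: the flag is a pure function of `tax_domicile` and `offshore_jurisdiction`, so the same wealth structure always yields the same signal.

```python
from typing import Optional

# Placeholder codes from the ISO 3166 user-assigned range (XA-XZ). Replace with the
# current FATF lists or your internal country-risk matrix; these are NOT real ratings.
HIGH_RISK_JURISDICTIONS = frozenset({"XA", "XB", "XC"})


def derive_high_risk_flag(tax_domicile: str,
                          offshore_jurisdiction: Optional[str],
                          high_risk: frozenset = HIGH_RISK_JURISDICTIONS) -> bool:
    """Flag a profile if its tax domicile or offshore exposure is on the high-risk list.

    Deterministic: identical inputs always produce the same flag, with no random draw.
    """
    exposures = {tax_domicile}
    if offshore_jurisdiction:
        exposures.add(offshore_jurisdiction)
    return not exposures.isdisjoint(high_risk)


print(derive_high_risk_flag("US", "KY"))  # False under the placeholder list
print(derive_high_risk_flag("SG", "XB"))  # True: offshore exposure is on the list
```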

PEP and sanctions signal interaction. Real UHNWI transaction monitoring requires understanding how PEP status, sanctions screening results, and source-of-wealth verification interact. A profile flagged as PEP with verified source of wealth generates legitimate high-value transactions that should not trigger alerts. A profile with a potential sanctions match and unverified source of wealth should trigger escalation. Your system needs to be trained on both scenarios — and the KYC signals need to be internally consistent, not randomly sprinkled.
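The two scenarios in this paragraph can be written down as a small rule table, which also gives you something to assert against when checking that calibration profiles land where you expect. A minimal sketch: the parameter names echo the `pep_status`, `sanctions_screening_result`, and `source_of_wealth_verified` fields listed below, but the value vocabularies (a boolean PEP flag, three sanctions strings) and the intermediate REVIEW outcomes are assumptions, not the dataset's documented schema.

```python
from enum import Enum


class Disposition(Enum):
    NO_ALERT = "no_alert"
    REVIEW = "review"
    ESCALATE = "escalate"


def kyc_disposition(is_pep: bool, sanctions_screening_result: str,
                    source_of_wealth_verified: bool) -> Disposition:
    """Combine PEP, sanctions, and source-of-wealth signals into one disposition.

    Assumed sanctions vocabulary: "clear", "potential_match", "confirmed_match".
    """
    if sanctions_screening_result == "confirmed_match":
        return Disposition.ESCALATE
    if sanctions_screening_result == "potential_match":
        # A possible match backed by unverified wealth is the escalation case.
        return Disposition.REVIEW if source_of_wealth_verified else Disposition.ESCALATE
    if is_pep and not source_of_wealth_verified:
        return Disposition.REVIEW
    # Remaining cases: clean screen, and either not a PEP or a PEP with verified wealth.
    return Disposition.NO_ALERT


print(kyc_disposition(True, "clear", True))               # Disposition.NO_ALERT
print(kyc_disposition(False, "potential_match", False))   # Disposition.ESCALATE
```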

Asset composition as transaction predictor. The `assets_composition` and `liabilities_composition` fields break down wealth into property, equity, cash, offshore holdings, and liabilities. For transaction monitoring, this is the structural DNA that determines what transactions a client should be generating. A client with heavy property holdings makes large periodic payments (taxes, maintenance, transfers). A client with heavy equity holdings generates trading flows. A client with significant offshore vehicles generates cross-border movements. Your monitoring thresholds need to be set against these different shapes — not against a flat average.
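One way to turn composition into calibration segments is sketched below. It assumes `assets_composition` maps asset-class names to amounts or shares (the real field format is not specified here), and the mapping from asset class to flow shape is hypothetical, following only the three examples in the paragraph; cash and other classes are left unmapped.

```python
# Hypothetical mapping from asset class to the flow shape it tends to generate,
# following the three examples in the text. Cash and other classes are left unmapped.
FLOW_DRIVERS = {
    "property": "periodic_property_payments",  # taxes, maintenance, transfers
    "core_equity": "trading_flows",
    "offshore": "cross_border_movements",
}


def expected_flows(assets_composition: dict[str, float],
                   min_share: float = 0.15) -> tuple[str, ...]:
    """Return the flow shapes a profile should generate, largest asset class first.

    Only mapped classes holding at least min_share of total assets are included.
    """
    total = sum(assets_composition.values())
    ranked = sorted(assets_composition.items(), key=lambda kv: kv[1], reverse=True)
    return tuple(FLOW_DRIVERS[name] for name, value in ranked
                 if name in FLOW_DRIVERS and value / total >= min_share)


profile = {"property": 0.50, "core_equity": 0.30, "cash": 0.10, "offshore": 0.10}
print(expected_flows(profile))  # ('periodic_property_payments', 'trading_flows')
```

Grouping profiles by the returned tuple gives distinct segments, so thresholds can be set per segment instead of against one flat average.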

29 Fields Designed for Transaction Monitoring Calibration

Every KYC-Enhanced profile includes the fields your monitoring pipeline needs to calibrate correctly:

Identity & Geography: full_name, residence_city, residence_zone, tax_domicile

Wealth Structure: net_worth_usd, total_assets, total_liabilities, property_value, core_equity, cash_liquidity, assets_composition, liabilities_composition

Professional Context: profession, education, narrative_bio, philanthropic_focus

Offshore Exposure: offshore_jurisdiction, offshore_vehicle

KYC Signals: kyc_risk_rating, pep_status, pep_position, pep_jurisdiction, sanctions_screening_result, sanctions_match_confidence, adverse_media_flag, source_of_wealth_verified, sow_verification_method, high_risk_jurisdiction_flag

Every KYC field is deterministically derived from the profile’s archetype, niche, net worth, and jurisdiction — not randomly assigned. A sovereign family in the Middle East gets different risk signals than a private banker in Switzerland, because the underlying wealth structures and jurisdictional exposures are fundamentally different. Your monitoring system learns these structural correlations during calibration, so it can distinguish legitimate patterns from suspicious ones in production.
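Before recalibrating anything, it is worth confirming that every record you ingest carries the fields your rules will read. A minimal sketch, assuming records arrive as Python dicts (for example, parsed rows from the downloaded file, whose exact format is not specified here) and using only the field names listed above:

```python
from typing import Iterable, Mapping

# Field names as listed above, grouped the same way.
FIELD_GROUPS = {
    "identity_geography": ("full_name", "residence_city", "residence_zone", "tax_domicile"),
    "wealth_structure": ("net_worth_usd", "total_assets", "total_liabilities", "property_value",
                         "core_equity", "cash_liquidity", "assets_composition",
                         "liabilities_composition"),
    "professional_context": ("profession", "education", "narrative_bio", "philanthropic_focus"),
    "offshore_exposure": ("offshore_jurisdiction", "offshore_vehicle"),
    "kyc_signals": ("kyc_risk_rating", "pep_status", "pep_position", "pep_jurisdiction",
                    "sanctions_screening_result", "sanctions_match_confidence",
                    "adverse_media_flag", "source_of_wealth_verified",
                    "sow_verification_method", "high_risk_jurisdiction_flag"),
}
EXPECTED_FIELDS = frozenset(f for group in FIELD_GROUPS.values() for f in group)


def schema_report(records: Iterable[Mapping[str, object]]) -> dict[int, set[str]]:
    """Map record index -> missing field names, for records that lack any listed field."""
    report = {}
    for i, record in enumerate(records):
        missing = set(EXPECTED_FIELDS.difference(record))  # iterating a dict yields keys
        if missing:
            report[i] = missing
    return report
```

An empty report means every record exposes every listed field; extra fields are ignored.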

Built for Payment Processor Transaction Monitoring at Scale

6 Geographic Niches: Silicon Valley, Old Money Europe, Middle East, LatAm, Pacific Rim, Swiss-Singapore — each with culturally coherent wealth patterns, offshore structures, and cross-border corridors that your monitoring system needs to recognize as legitimate.

31 Wealth Archetypes: Tech founders, commodity traders, shipping dynasty heirs, sovereign family members, private bankers, real estate developers — the actual client profiles that generate the cross-border transaction patterns your system must distinguish from suspicious activity.

KYC Signal Distribution: Risk ratings, PEP statuses, sanctions screening results, and source-of-wealth verification methods distributed with realistic frequencies by niche. Middle East profiles carry higher PEP rates (~29%) than Silicon Valley (~3%), because that reflects the actual population. LatAm profiles carry elevated risk ratings far more often (~84% of the niche) due to jurisdictional exposure. These are not arbitrary percentages; they are derived from the structural characteristics of each niche.
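These rates are easy to verify on any sample you download. A minimal sketch, assuming each record carries a niche label in a field called `niche` (hypothetical; it is not among the fields listed above) and that `pep_status` uses `"none"` for non-PEPs (also an assumption about the value vocabulary):

```python
from collections import defaultdict
from typing import Callable, Iterable, Mapping


def rate_by_group(records: Iterable[Mapping[str, object]],
                  group_field: str,
                  signal_field: str,
                  is_positive: Callable[[object], bool]) -> dict[str, float]:
    """Share of records in each group whose signal field counts as positive."""
    counts = defaultdict(lambda: [0, 0])  # group -> [positives, total]
    for record in records:
        tally = counts[record[group_field]]
        tally[1] += 1
        if is_positive(record[signal_field]):
            tally[0] += 1
    return {group: pos / total for group, (pos, total) in counts.items()}


sample = [
    {"niche": "Middle East", "pep_status": "pep"},
    {"niche": "Middle East", "pep_status": "none"},
    {"niche": "Silicon Valley", "pep_status": "none"},
]
print(rate_by_group(sample, "niche", "pep_status", lambda v: v != "none"))
# {'Middle East': 0.5, 'Silicon Valley': 0.0}
```

The same function works for risk ratings or sanctions results by swapping `signal_field` and the predicate.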

Internally Consistent Profiles. Every field in every record is algebraically and logically connected. A profile with a BVI offshore vehicle, PEP-adjacent status, and $200M net worth generates a coherent set of KYC signals that reflect the actual regulatory complexity of that profile. Your monitoring system learns from structural truth, not random noise.

Pricing

| Tier | Records | Price | Best For |
|---|---|---|---|
| Compliance Starter | 1,000 | $999 | Threshold calibration, proof of concept |
| Compliance Pro | 10,000 | $4,999 | Full regression suite, model retraining |
| Compliance Enterprise | 100,000 | $24,999 | AI training + production monitoring calibration |

No SDK. No API key. No sales call. Download a file, open it in Python or your monitoring platform, and use it to recalibrate your transaction monitoring thresholds against structurally realistic profiles.

Why This Matters Now

The enforcement pressure on payment processors is intensifying. FinCEN, the FCA, and EU regulators have made cross-border payment compliance a priority. Western Union’s $586M penalty was specifically about failures in transaction monitoring and suspicious activity reporting across corridors. Block’s $120M fine cited inadequate compliance controls for the payment volumes they process. MoneyGram’s $125M settlement was driven by the same gap: monitoring systems that failed to flag what regulators consider obviously suspicious patterns. The trend is clear — payment processors are no longer getting the benefit of the doubt.

The EU AI Act changes the calculus. Its obligations for the high-risk systems listed in Annex III apply from August 2026, and Annex III covers financial uses such as creditworthiness assessment and credit scoring, although AI used to detect financial fraud is expressly carved out of that entry. If a machine learning model in your monitoring stack does fall into a high-risk category, Article 10 requires documented governance of its training data: provenance, bias assessment, and GDPR compliance for any personal data. If that model was trained on real client data or poorly anonymized records, you need to demonstrate compliance under GDPR and the AI Act simultaneously. Born-synthetic data removes this problem at the source: zero PII by construction, and documented provenance via the Certificate of Sovereign Origin.

False positive rates are a business problem, not just a compliance problem. Payment processors operate on volume and speed. Every false positive in your transaction monitoring system requires manual review. At scale — millions of transactions per day — even a 0.5% false positive rate can overwhelm your compliance team. The root cause is almost always the same: the monitoring model was calibrated on structurally simple data, so it treats every complex cross-border pattern as anomalous. Better calibration data does not just reduce regulatory risk — it reduces operational cost.
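To make the scale concrete, here is the arithmetic under assumed numbers. The 2 million transactions a day and 10 minutes of analyst time per alert are illustrative figures, not benchmarks from any processor, and the 0.5% rate is read as the share of all transactions that raise a false alert.

```python
def daily_review_load(transactions_per_day: int,
                      false_positive_rate: float,
                      minutes_per_alert: float,
                      analyst_hours_per_day: float = 8.0) -> tuple[float, float, float]:
    """Return (false alerts, analyst hours, analysts needed) for one day of volume.

    false_positive_rate is read as the share of all transactions that raise a false alert.
    """
    alerts = transactions_per_day * false_positive_rate
    hours = alerts * minutes_per_alert / 60.0
    return alerts, hours, hours / analyst_hours_per_day


alerts, hours, analysts = daily_review_load(2_000_000, 0.005, minutes_per_alert=10)
print(f"{alerts:,.0f} alerts, {hours:,.0f} analyst-hours, {analysts:,.0f} analysts")
# 10,000 alerts, 1,667 analyst-hours, 208 analysts
```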

The balance sheet test is open source. Every Sovereign Forger record passes algebraic validation: Assets – Liabilities = Net Worth. Run the Balance Sheet Test on our data, then run it on your current test data. If your test profiles do not pass this basic mathematical check, your monitoring system is calibrated against financially impossible scenarios.
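A minimal version of the check you can run on your own test profiles today. This is a sketch, not the published Balance Sheet Test itself; it assumes the three columns are named as in the field list above, that a CSV export is available (the `load_rows` helper and any file name you pass it are hypothetical), and that a $1 tolerance absorbs rounding.

```python
import csv
from typing import Iterable, Iterator, Mapping


def load_rows(path: str) -> Iterator[dict[str, str]]:
    """Stream rows from a CSV export (assumed format; adapt for JSON or Parquet)."""
    with open(path, newline="", encoding="utf-8") as handle:
        yield from csv.DictReader(handle)


def balance_sheet_failures(rows: Iterable[Mapping[str, object]],
                           tolerance_usd: float = 1.0) -> list[int]:
    """Indices of rows where total_assets - total_liabilities != net_worth_usd."""
    failures = []
    for i, row in enumerate(rows):
        assets = float(row["total_assets"])
        liabilities = float(row["total_liabilities"])
        net_worth = float(row["net_worth_usd"])
        if abs(assets - liabilities - net_worth) > tolerance_usd:
            failures.append(i)
    return failures


test_profiles = [
    {"total_assets": "250000000", "total_liabilities": "40000000", "net_worth_usd": "210000000"},
    {"total_assets": "90000000", "total_liabilities": "5000000", "net_worth_usd": "120000000"},
]
print(balance_sheet_failures(test_profiles))  # [1]
```

Against a real export the call would be `balance_sheet_failures(load_rows(path))`; any index it returns points at a financially impossible profile.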

Every dataset ships with a Certificate of Sovereign Origin — documenting the born-synthetic methodology, zero PII lineage, and regulatory alignment. When your auditor asks “where did you get this calibration data?”, you hand them the certificate. When the EU AI Act auditor asks about your training data governance, you show the same document.

Calibrate Your Transaction Monitoring

Download 100 free KYC-Enhanced UHNWI profiles with realistic multi-jurisdictional exposure, offshore structures, and wealth composition. Use them to baseline your monitoring thresholds. Feed them into your transaction monitoring system and count how many trigger alerts that your current calibration data never produced — and how many legitimate patterns your system currently flags as suspicious.

That gap between what your system catches and what it misses is the size of your transaction monitoring blind spot.

No credit card. No sales call. Just your work email.

Related reading: PCI DSS Test Data — Why 4.0 Bans Real Cards in Test Environments. It covers how PCI DSS 4.0 Requirement 6.5.5 keeps live PANs out of pre-production environments.

Frequently Asked Questions

How does synthetic transaction data help payment processors meet PCI DSS 4.0 requirements for test environments?

PCI DSS 4.0 Requirement 6.5.5 prohibits live PANs (real card numbers) in pre-production environments unless those environments are included in the cardholder data environment and protected accordingly. With Sovereign Forger synthetic profiles, payment processors can run full transaction monitoring pipelines, including fraud scoring and alert threshold tuning, without ever ingesting live PANs or cardholder data. This eliminates a common audit failure point and lets engineering teams share test datasets freely across vendors and QA contractors without expanding PCI scope.
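One practical way to back this up in an audit is to scan any test dataset for card-number-shaped strings before it is shared. A minimal sketch, not a PCI-mandated procedure: it finds runs of 13 to 19 digits (optionally separated by single spaces or hyphens) and keeps those that pass the Luhn checksum used by payment card numbers.

```python
import re

# 13-19 digits, each optionally followed by one space or hyphen, bounded by non-word chars.
PAN_CANDIDATE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum: double every second digit from the right, sum, test mod 10."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def find_pan_candidates(text: str) -> list[str]:
    """Return digit strings in text that look like card numbers and pass Luhn."""
    hits = []
    for match in PAN_CANDIDATE.finditer(text):
        digits = re.sub(r"[ -]", "", match.group())
        if 13 <= len(digits) <= 19 and luhn_valid(digits):
            hits.append(digits)
    return hits


print(find_pan_candidates("ref 4111-1111-1111-1111, order 1234"))  # ['4111111111111111']
```

A hit is a string worth investigating, not proof of a live PAN: 4111 1111 1111 1111 is a widely used test number that happens to pass Luhn.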

Can synthetic payment profiles realistically simulate the cross-border transaction patterns that trigger AML alert queues?

Sovereign Forger profiles are built to reproduce the behavioural signatures that AML systems flag: structuring sequences below reporting thresholds, rapid fund movement across three or more jurisdictions, and velocity spikes consistent with layering. Each synthetic customer carries a consistent transaction history, risk rating, and source-of-wealth narrative so that rule-based and machine-learning monitors produce alert volumes comparable to production. Teams can calibrate false-positive rates against PSR and cross-border AML requirements before a single live transaction is processed.
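Whether a synthetic history actually exercises a structuring rule is something you can test directly. A minimal sketch of one such rule, assuming the US currency transaction report threshold of $10,000 in cash and illustrative parameters (a 90% band, a 7-day window, three deposits) that you would tune yourself: flag any cluster of near-threshold cash deposits that falls inside the window. Production rules typically also aggregate across accounts and branches, which this sketch does not.

```python
from datetime import date, timedelta


def structuring_alert(cash_deposits: list[tuple[date, float]],
                      threshold: float = 10_000.0,  # US CTR threshold for cash
                      band: float = 0.9,            # "just below" = 90-100% of threshold
                      window_days: int = 7,
                      min_count: int = 3) -> bool:
    """True if min_count near-threshold deposits fall within any window_days span."""
    near = sorted(d for d, amount in cash_deposits if band * threshold <= amount < threshold)
    left = 0
    for right in range(len(near)):
        # Shrink the window until it spans at most window_days calendar days.
        while near[right] - near[left] > timedelta(days=window_days - 1):
            left += 1
        if right - left + 1 >= min_count:
            return True
    return False


history = [(date(2024, 3, 1), 9_500.0), (date(2024, 3, 3), 9_800.0),
           (date(2024, 3, 5), 9_200.0), (date(2024, 3, 20), 12_000.0)]
print(structuring_alert(history))  # True
```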

How do payment processors use synthetic PEP and sanctions profiles to stress-test screening coverage without exposing real subject data?

Screening engines require adversarial test cases: PEPs with transliterated names, sanctioned entities using variant spellings, and dual-use corporate structures. Sovereign Forger generates synthetic profiles with interlocked PEP status, sanctions flags, and ownership chains that mirror genuine complexity without referencing any real individual. Compliance teams can measure match rates, fuzzy-matching recall, and escalation latency across hundreds of edge cases, satisfying internal model validation requirements and demonstrating to regulators that screening logic has been empirically tested rather than assumed to work.
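Measuring fuzzy-matching recall does not require a commercial engine to get started. A minimal sketch using Python's `difflib.SequenceMatcher` as a stand-in matcher (production screening engines often use purpose-built name-matching algorithms instead), with made-up watchlist entries and an illustrative 0.85 threshold:

```python
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """Case-insensitive character similarity in [0, 1] (difflib ratio)."""
    return SequenceMatcher(None, a.casefold(), b.casefold()).ratio()


def screening_recall(cases: list[tuple[str, str]], watchlist: list[str],
                     threshold: float = 0.85) -> float:
    """Share of (query, expected_entry) cases where the best watchlist hit is the
    expected entry and scores at or above the threshold."""
    hits = 0
    for query, expected in cases:
        best = max(watchlist, key=lambda entry: similarity(query, entry))
        if best == expected and similarity(query, best) >= threshold:
            hits += 1
    return hits / len(cases)


watchlist = ["Aleksandr Voronov", "Olga Petrova"]      # made-up names
cases = [("olga petrova", "Olga Petrova"),              # case variant
         ("Alexander Voronoff", "Aleksandr Voronov")]   # transliteration variant
print(screening_recall(cases, watchlist))
```

Lowering the threshold raises recall at the cost of more false matches, which is exactly the trade-off the edge cases are meant to expose.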

What does born-synthetic mean for payment processor transaction monitoring data, and why does it matter?

Born-synthetic means every profile's financial figures are generated from mathematical distributions, including Pareto-distributed wealth and transaction frequency, with zero lineage to any real person or payment record; the narrative fields are added afterwards by a local AI model that never touches the numbers. No anonymisation, tokenisation, or masking step is involved because there is no source data to protect. For payment processor transaction monitoring, this distinction is material: the dataset carries no residual re-identification risk, meets GDPR Art. 25 data-protection-by-design requirements without additional controls, and can be shared across jurisdictions and third-party vendors without legal review of every transfer.

How quickly can a payment processor compliance team get started testing with Sovereign Forger synthetic profiles?

Teams can download 100 free synthetic KYC profiles immediately after registering with a work email, no credit card required. Each profile contains 29 interlocked fields covering risk ratings, PEP status, sanctions screening flags, and source-of-wealth narratives, all consistent with one another across the full record. The dataset is ready for direct ingestion into transaction monitoring systems, allowing alert threshold testing and model validation to begin the same day without waiting for data governance approvals or anonymisation pipelines.