Your clients are getting fined — Starling Bank £29M, HSBC £63.9M, N26 €9.2M — and every one of those fines traces back to screening systems that were never tested against the kind of profiles that actually trigger sanctions alerts in production. If your product was in the stack, your product gets the blame.

Your Sanctions Screening Engine Has Never Been Properly Stress-Tested

I have spent years watching RegTech companies demo their sanctions screening products. The demos are always impressive. Real-time matching against OFAC, EU consolidated lists, UN sanctions. Fuzzy name matching with configurable thresholds. Beautiful dashboards with alert prioritization and case management workflows.

Then I look at the test data behind the demo. Five hundred profiles. Clean Western names. Single nationality. One jurisdiction each. No PEP connections. No offshore vehicles. No naming complexity — no transliterated Arabic names, no Chinese names rendered in three different romanization systems, no hyphenated European aristocratic surnames that span four generations.

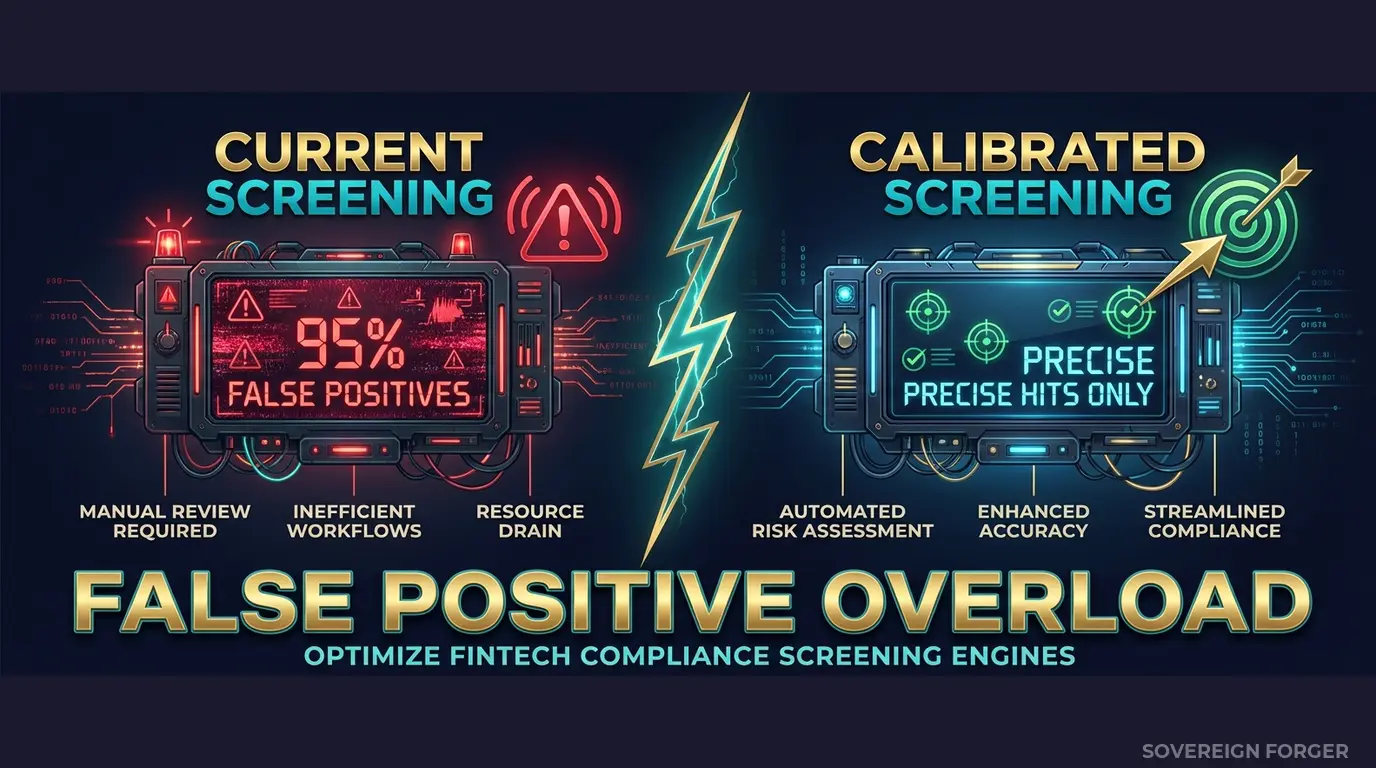

The screening engine flags zero false positives on this data. The product manager calls it “high precision.” The sales deck says “industry-leading accuracy.” And it is accurate — against data that looks nothing like the client portfolios where sanctions screening actually matters.

Here is what happens in production. A RegTech client (a neobank, a payment processor, a wealth manager) onboards a real ultra-high-net-worth individual (UHNWI). That individual has a Cayman Islands LP, a tax domicile in Singapore, a family member who held a minor government position in the UAE, and a name that transliterates three different ways depending on which passport you are reading. The sanctions screening engine produces fourteen alerts on this one customer. Twelve are false positives. The compliance team spends four hours resolving them. The next week, it happens again. And again.

Within six months, the compliance team is drowning in false positives. They start batch-dismissing alerts. They raise the fuzzy matching threshold to reduce noise. They build workarounds that bypass the screening logic for certain jurisdiction combinations. And then a real sanctions match slips through the cracks — because the team trained itself to ignore alerts, and the engine was never calibrated against realistic complexity.

This is not your client’s failure. This is your product’s failure. Your screening engine was validated against structurally simple profiles that never tested the edge cases where sanctions screening actually breaks down: multi-jurisdictional exposure, PEP-adjacent connections, name transliteration variance, and high-risk jurisdiction combinations that produce cascading alerts.

The liability equation for RegTech is different from everyone else’s. When a bank gets fined for sanctions failures, the RegTech vendor does not pay the fine, but it loses the contract. And the next prospect calls the bank that just got fined and asks what went wrong. The answer is always the same: “The screening tool did not catch it.” Your product’s reputation is only as strong as the worst outcome your client has experienced. And those worst outcomes are caused by the gap between your test data and production reality.

I have watched this pattern repeat across ComplyAdvantage deployments, Napier AI implementations, Unit21 integrations. The technology is sophisticated. The test data is not. And the gap between the two is where fines happen.

Three Approaches That Leave Your Product Exposed

RegTech vendors face a unique version of the test data problem. You cannot use your clients’ data — they will not share it, and even if they did, handling real PII creates liability you do not want. So you build your own test data. And every approach has the same structural flaw.

Using production-adjacent data from clients. Some RegTech companies negotiate access to anonymized client data for product validation. This creates two problems simultaneously. First, the anonymization is rarely sufficient for UHNWI profiles — with only 265,000 UHNWIs globally, the combination of net worth tier, offshore jurisdiction, and profession can re-identify individuals even without names attached. Second, you now have GDPR obligations as a data processor for test data that sits in your QA environment with weaker access controls than production. If a regulator audits your client and traces the data lineage into your test systems, both of you have a problem.

Using generic synthetic generators. Platform-based synthetic data tools produce profiles that are structurally flat. Single jurisdiction. No offshore vehicles. No PEP connections. Names drawn from a limited Western-centric name pool. When you test your sanctions screening engine against these profiles, you get clean results — because the profiles are too simple to trigger the edge cases where screening logic breaks down. Your product passes QA. Your client deploys it. The first complex UHNWI triggers a cascade of false positives that the system was never designed to handle, because the test data never contained that level of structural complexity.

Building test data in-house. I have seen RegTech engineering teams spend months building custom test data generators. They hard-code a few hundred profiles with specific sanctions signals. The profiles are internally consistent but they lack the combinatorial depth that causes real screening failures — the intersection of a high-risk jurisdiction with a PEP-adjacent connection with a name that fuzzy-matches against three different sanctions lists simultaneously. You cannot hand-craft these combinations at scale. And the profiles become stale within months as sanctions lists evolve and your product adds new screening logic.

Client Data vs. Generic Synthetic vs. Born-Synthetic

| Dimension | Client Data | Generic Synthetic | Born-Synthetic |
|---|---|---|---|
| PII present | Yes (anonymized) | None | None |
| Re-identification risk | Probable (UHNWI) | None | None |
| GDPR Art. 25 compliant | Disputed | Yes | Yes |
| Multi-jurisdictional exposure | Yes | Rare | Yes (6 niches) |
| PEP/sanctions signal realism | Reflects single client | Uniform random | Niche-calibrated |
| Name complexity (transliteration) | Limited | Western-only | 31 archetypes, cultural naming |
| Certifiable for auditors | No | Varies | Yes (Certificate of Origin) |
| Scales to 100K+ | Rarely | Yes | Yes |

Born-Synthetic Sanctions Screening Data Built for RegTech Product Validation

I built Sovereign Forger’s KYC-Enhanced dataset specifically for the problem I kept seeing in RegTech implementations: screening engines that performed flawlessly in QA and failed in production because the test data never contained the structural complexity that triggers real screening failures.

Every profile is generated from mathematical constraints — not derived from any real person, not anonymized from any client database. The generation pipeline works in two stages:

Math First. Net worth follows a Pareto distribution, the actual shape of wealth distribution, not a bell curve. Asset allocations are computed within algebraic constraints: Assets – Liabilities = Net Worth, by construction. Offshore vehicle assignments follow from archetype and geography. A semiconductor dynasty in the Pacific Rim gets a different ownership structure than a private banker in Swiss-Singapore, because the underlying wealth architectures are fundamentally different, and your screening engine needs to handle both.

AI Second. A local AI model adds narrative context — biography, profession, philanthropic focus — after the financial figures are locked. The AI runs entirely offline. No record ever touches an external server. The AI never modifies the numbers or the KYC signals. It enriches the profile with culturally coherent details that produce realistic name patterns, jurisdictional connections, and biographical complexity.
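The “Math First” stage can be illustrated with a minimal sketch. This is not the Sovereign Forger pipeline; the USD 30M floor, the tail index, and the leverage band are assumptions picked for illustration, and `sample_balance_sheet` is a hypothetical helper. It only shows how a Pareto draw plus a derived remainder makes the balance sheet identity hold by construction.

```python
import random

UHNWI_FLOOR_USD = 30_000_000  # assumed floor, not taken from the dataset docs
PARETO_ALPHA = 1.5            # assumed tail index, illustrative only


def sample_balance_sheet(rng: random.Random) -> dict:
    """Draw a Pareto net worth, then derive assets and liabilities from it."""
    # paretovariate() returns values >= 1, so net worth never falls below the floor.
    net_worth = round(UHNWI_FLOOR_USD * rng.paretovariate(PARETO_ALPHA))
    # Assumed leverage band: liabilities as a share of total assets.
    leverage = rng.uniform(0.05, 0.40)
    total_assets = round(net_worth / (1.0 - leverage))
    # Liabilities are the remainder, so the identity is exact in integer dollars.
    total_liabilities = total_assets - net_worth
    return {
        "net_worth_usd": net_worth,
        "total_assets": total_assets,
        "total_liabilities": total_liabilities,
    }


if __name__ == "__main__":
    rng = random.Random(2024)
    for _ in range(3):
        print(sample_balance_sheet(rng))
```

Because liabilities are computed as a remainder rather than sampled independently, no rounding step can break Assets – Liabilities = Net Worth.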

Why This Matters for Sanctions Screening Specifically

Sanctions screening engines break down at specific failure points. Every one of them is represented in the Sovereign Forger dataset:

Name complexity and transliteration variance. The dataset includes 31 wealth archetypes across 6 geographic niches, each with culturally authentic naming patterns. Middle Eastern names with patronymic structures. Chinese names in multiple romanization variants. European aristocratic surnames with generational prefixes. Pacific Rim names that combine Chinese, Malay, and Indian naming conventions within a single niche. Your fuzzy matching algorithm needs to handle all of these — and it needs test data that contains all of these to be properly calibrated.
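To make the transliteration problem concrete, here is a minimal sketch using only Python's standard library. The names are invented for illustration, `difflib.SequenceMatcher` stands in for whatever matcher your engine actually uses, and the two thresholds are arbitrary assumptions. The point is to see which spellings of the same name survive as the threshold moves.

```python
import unicodedata
from difflib import SequenceMatcher


def normalize(name: str) -> str:
    """Strip accents, lowercase, and turn punctuation into single spaces."""
    decomposed = unicodedata.normalize("NFKD", name)
    no_accents = "".join(c for c in decomposed if not unicodedata.combining(c))
    cleaned = "".join(c if c.isalnum() else " " for c in no_accents.lower())
    return " ".join(cleaned.split())


def similarity(a: str, b: str) -> float:
    """Similarity in [0, 1] between two names after normalization."""
    return SequenceMatcher(None, normalize(a), normalize(b)).ratio()


def alerts(candidates: list[str], listed_name: str, threshold: float) -> list[str]:
    """Return the candidates whose similarity to the listed name meets the threshold."""
    return [c for c in candidates if similarity(c, listed_name) >= threshold]


# Invented names: one hypothetical list entry and spellings a passport might carry.
LISTED = "Khaled Abdelrahman Al-Nuwairi"
VARIANTS = [
    "Khalid Abd al-Rahman al-Nuwayri",
    "Khaled Abdul Rahman Alnuwairi",
    "Halid Abdurrahman Nuveyri",
    "Carlos Nuñez",
]

if __name__ == "__main__":
    for threshold in (0.70, 0.90):  # arbitrary thresholds for comparison
        print(threshold, alerts(VARIANTS, LISTED, threshold))
    for variant in VARIANTS:
        print(f"{similarity(variant, LISTED):.2f}  {variant}")
```

Running this against test data that contains only one spelling per person tells you nothing about where the threshold should sit; running it against several romanizations of the same name shows which ones a stricter threshold silently drops.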

Multi-jurisdictional exposure. Every UHNWI profile includes a residence city, a tax domicile, and an offshore jurisdiction: three separate geographic data points that may span three different sanctions regimes. A profile with residence in London, tax domicile in Singapore, and a BVI vehicle creates a screening scenario that can touch UK HM Treasury, MAS, and, wherever there is a US nexus, OFAC sanctions lists simultaneously. Your engine needs to evaluate all three jurisdictions, and your test data needs to contain these combinations.

PEP-adjacent connections. The dataset includes deterministic PEP signals — pep_status (none, domestic, foreign, international_org), pep_position, and pep_jurisdiction — distributed with realistic frequencies by niche. Middle East profiles have a higher PEP incidence than Silicon Valley profiles, because that reflects the real distribution. Your screening engine needs to handle PEP-sanctions intersection logic — a profile that is both PEP and associated with a high-risk jurisdiction should trigger a different alert level than either signal alone.
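The intersection logic described above can be sketched in a few lines. The `pep_status` values come from the field list; treating `high_risk_jurisdiction_flag` as a boolean and the three alert-level labels are assumptions made for this example, not the dataset's or any engine's actual scheme.

```python
def alert_level(profile: dict) -> str:
    """Combine PEP status and jurisdiction risk into one alert level.

    Assumes pep_status is one of: none, domestic, foreign, international_org,
    and that high_risk_jurisdiction_flag can be read as a boolean.
    """
    is_pep = profile.get("pep_status", "none") != "none"
    high_risk = bool(profile.get("high_risk_jurisdiction_flag", False))
    if is_pep and high_risk:
        return "escalate"          # both signals together outrank either alone
    if is_pep or high_risk:
        return "enhanced_review"   # a single signal
    return "standard"


if __name__ == "__main__":
    examples = [
        {"pep_status": "none", "high_risk_jurisdiction_flag": False},
        {"pep_status": "foreign", "high_risk_jurisdiction_flag": False},
        {"pep_status": "domestic", "high_risk_jurisdiction_flag": True},
    ]
    for example in examples:
        print(example, alert_level(example))
```

A test set with no profiles in the third category never exercises the `escalate` branch, which is exactly the branch that matters.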

Sanctions signal calibration. Every profile includes sanctions_screening_result (clear, potential_match, confirmed_match) and sanctions_match_confidence (0-100 for potential matches). These signals are deterministically derived from the profile’s archetype, niche, and jurisdictional exposure — not randomly assigned. This means your screening engine can be tested against a realistic distribution of true positives, false positives, and true negatives.
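With a ground-truth label on every profile, scoring an engine becomes a counting exercise. The sketch below assumes that only `confirmed_match` counts as a true hit and that your engine can be wrapped in a function returning `True` when it raised an alert; `score_engine` and the sample profiles are hypothetical.

```python
from collections import Counter
from typing import Callable, Iterable


def score_engine(profiles: Iterable[dict],
                 engine_alerted: Callable[[dict], bool]) -> Counter:
    """Count true/false positives and negatives against the dataset labels."""
    counts = Counter(tp=0, fp=0, tn=0, fn=0)
    for profile in profiles:
        # Assumption: only confirmed_match is treated as a genuine hit.
        is_true_hit = profile["sanctions_screening_result"] == "confirmed_match"
        if engine_alerted(profile):
            counts["tp" if is_true_hit else "fp"] += 1
        else:
            counts["fn" if is_true_hit else "tn"] += 1
    return counts


# Placeholder profiles and a stand-in for the engine's alert output.
SAMPLE = [
    {"full_name": "Profile A", "sanctions_screening_result": "clear"},
    {"full_name": "Profile B", "sanctions_screening_result": "potential_match"},
    {"full_name": "Profile C", "sanctions_screening_result": "confirmed_match"},
    {"full_name": "Profile D", "sanctions_screening_result": "confirmed_match"},
]
ALERTED_NAMES = {"Profile B", "Profile C"}

if __name__ == "__main__":
    result = score_engine(SAMPLE, lambda p: p["full_name"] in ALERTED_NAMES)
    print(dict(result))
```

Whether `potential_match` should count as a positive is a policy decision; changing the one labeled line changes the scoring rule without touching the rest.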

High-risk jurisdiction flags. Profiles with offshore vehicles in FATF-identified jurisdictions carry a high_risk_jurisdiction_flag with the specific jurisdictions listed. Your engine needs to test its jurisdiction-based escalation logic against profiles that actually contain high-risk jurisdictions — not profiles where every offshore vehicle is in Delaware.

29 Fields Designed for Screening Pipeline Integration

Every KYC-Enhanced profile includes the fields your sanctions screening engine needs to process:

Identity & Geography: full_name, residence_city, residence_zone, tax_domicile

Wealth Structure: net_worth_usd, total_assets, total_liabilities, property_value, core_equity, cash_liquidity, assets_composition, liabilities_composition

Professional Context: profession, education, narrative_bio, philanthropic_focus

Offshore Exposure: offshore_jurisdiction, offshore_vehicle

Screening Signals: kyc_risk_rating, pep_status, pep_position, pep_jurisdiction, sanctions_screening_result, sanctions_match_confidence, adverse_media_flag, source_of_wealth_verified, sow_verification_method, high_risk_jurisdiction_flag

Every field is deterministically derived from the profile’s archetype, niche, net worth, and jurisdiction. The same UUID always produces the same KYC signals — making your test results reproducible across screening engine versions.

Built for RegTech Product Validation at Scale

6 Geographic Niches: Silicon Valley, Old Money Europe, Middle East, LatAm, Pacific Rim, Swiss-Singapore. Each niche produces distinct sanctions screening challenges — different name patterns, different jurisdictional overlaps, different PEP distributions. Your engine needs to handle all six.

31 Wealth Archetypes: Tech founders, sovereign family members, commodity traders, private bankers, shipping dynasty heirs, real estate developers — the actual client profiles that produce the most complex sanctions screening scenarios in production.

Realistic Signal Distribution: KYC risk ratings, PEP statuses, sanctions results, and source-of-wealth verification methods are distributed with niche-calibrated frequencies. Middle East profiles carry higher PEP incidence. LatAm profiles show higher risk ratings. Swiss-Singapore profiles present more complex multi-jurisdictional exposure. These distributions reflect real-world patterns — they are not uniformly random.

Reproducible Results: Every profile is generated deterministically from a UUID seed. The same input produces the same output on every run. When your screening engine produces an unexpected result on profile X, you can re-run the test and get the identical profile to debug against.
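For readers who want to see what UUID-seeded determinism looks like in practice, here is a minimal sketch. It is not the actual generator: the weights and the `kyc_risk_rating` labels are assumptions, and `signals_for` is a hypothetical function. The technique shown is simply seeding a private random generator from the UUID's integer value.

```python
import random
import uuid

PEP_STATUSES = ["none", "domestic", "foreign", "international_org"]  # from the field list
PEP_WEIGHTS = [85, 6, 6, 3]               # assumed frequencies, illustrative only
RISK_RATINGS = ["low", "medium", "high"]  # assumed labels for kyc_risk_rating


def signals_for(profile_uuid: str) -> dict:
    """Derive signals from a UUID so the same ID always yields the same record."""
    # A private generator seeded from the UUID avoids any shared global state.
    rng = random.Random(uuid.UUID(profile_uuid).int)
    return {
        "uuid": profile_uuid,
        "pep_status": rng.choices(PEP_STATUSES, weights=PEP_WEIGHTS, k=1)[0],
        "kyc_risk_rating": rng.choice(RISK_RATINGS),
    }


if __name__ == "__main__":
    profile_id = "12345678-1234-5678-1234-567812345678"
    first = signals_for(profile_id)
    second = signals_for(profile_id)
    print(first)
    print("identical on re-run:", first == second)
```

The practical consequence for regression testing is that a failing profile can be referenced by ID in a bug report and regenerated later, rather than stored and shipped around.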

Pricing

| Tier | Records | Price | Best For |
|---|---|---|---|
| Compliance Starter | 1,000 | $999 | QA validation, proof of concept |
| Compliance Pro | 10,000 | $4,999 | Full regression suite, threshold calibration |
| Compliance Enterprise | 100,000 | $24,999 | ML model training + production validation |

No SDK. No API key. No sales call. Download a JSONL or CSV file and feed it directly into your screening pipeline. Most RegTech engineering teams have it integrated within a day.
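Loading a JSONL file needs nothing beyond the standard library. The sketch below reads one JSON object per line and checks that a few fields from the list above are present; the choice of required fields, the inline sample records, and the `read_profiles` helper are assumptions for illustration.

```python
import io
import json
from typing import Iterable, Iterator

# A small subset of the documented fields, chosen for this example.
REQUIRED = ("full_name", "tax_domicile", "sanctions_screening_result")


def read_profiles(lines: Iterable[str]) -> Iterator[dict]:
    """Yield one profile per non-empty JSONL line, failing fast on gaps."""
    for line_number, line in enumerate(lines, start=1):
        line = line.strip()
        if not line:
            continue  # tolerate blank lines between records
        record = json.loads(line)
        missing = [field for field in REQUIRED if field not in record]
        if missing:
            raise ValueError(f"line {line_number}: missing fields {missing}")
        yield record


# Inline placeholder data so the example runs without a downloaded file.
SAMPLE_JSONL = io.StringIO(
    '{"full_name": "Profile A", "tax_domicile": "Singapore", '
    '"sanctions_screening_result": "clear"}\n'
    "\n"
    '{"full_name": "Profile B", "tax_domicile": "Switzerland", '
    '"sanctions_screening_result": "potential_match"}\n'
)
profiles = list(read_profiles(SAMPLE_JSONL))

if __name__ == "__main__":
    print(len(profiles), "profiles loaded")
```

With a real download, the same function accepts an open file handle, for example `open("profiles.jsonl", encoding="utf-8")`, where the filename is a placeholder.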

Why This Matters Now

Your clients are under regulatory pressure, and they are looking at your product. The EU AI Act becomes generally applicable on 2 August 2026. Financial AI, including AML and sanctions screening where it falls within Annex III, is treated as high-risk. Article 10 requires documented governance of training, validation, and testing data, including provenance and bias assessment. If your screening models were trained or validated on real client data, your clients need to prove GDPR compliance and AI Act compliance simultaneously. If they cannot, they face fines of up to 3% of global annual turnover for breaching high-risk obligations under the AI Act (the 7% ceiling applies to prohibited practices). And they will ask why your product did not flag this risk.

The fines keep accelerating. HSBC: £63.9M for transaction monitoring failures. Starling Bank: £29M for inadequate financial crime controls. N26: €9.2M. Block: $120M. Every one of these fines hits a RegTech vendor’s pipeline — the bank that was fined is now shopping for a new solution, and the first question they ask is “how do you test your product?” If your answer is “internal test data” without provenance documentation, you have already lost the deal.

Born-Synthetic eliminates the provenance question. Every Sovereign Forger dataset ships with a Certificate of Sovereign Origin — documenting the born-synthetic methodology, zero PII lineage, and regulatory alignment with GDPR Art. 25 and EU AI Act Art. 10. When your client’s auditor asks where the validation data came from, you hand them the certificate. No lineage to real persons. No anonymization chain to defend. No re-identification risk to assess. Compliant by construction — not by anonymization.

The balance sheet test is open source. Every Sovereign Forger record passes algebraic validation: Assets – Liabilities = Net Worth. Run the Balance Sheet Test on our data, then run it on your current test data. If your test profiles do not pass basic mathematical consistency checks, your screening engine is being validated against data that could not exist in reality. That is not validation — that is theater.
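A check in the same spirit takes a few lines. This is a minimal sketch, not the published Balance Sheet Test itself: it assumes each record exposes `total_assets`, `total_liabilities`, and `net_worth_usd` as numbers, and `balance_sheet_failures` is a hypothetical helper with an optional tolerance for datasets that store rounded figures.

```python
from typing import Iterable


def balance_sheet_failures(records: Iterable[dict],
                           tolerance_usd: float = 0.0) -> list[tuple[int, float]]:
    """Return (index, gap) for every record where A - L differs from net worth."""
    failures = []
    for index, record in enumerate(records):
        gap = (record["total_assets"]
               - record["total_liabilities"]
               - record["net_worth_usd"])
        if abs(gap) > tolerance_usd:
            failures.append((index, gap))
    return failures


if __name__ == "__main__":
    # Placeholder records: the second one is deliberately inconsistent.
    test_profiles = [
        {"total_assets": 120_000_000, "total_liabilities": 20_000_000,
         "net_worth_usd": 100_000_000},
        {"total_assets": 80_000_000, "total_liabilities": 10_000_000,
         "net_worth_usd": 75_000_000},
    ]
    print(balance_sheet_failures(test_profiles))
```

An empty list means every record is algebraically consistent; anything else points to the exact rows and the size of the discrepancy.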

RegTech is a trust business. Your clients buy your product because they trust it to protect them from regulatory exposure. That trust is built on validation rigor. If your product validation relies on structurally simple test data that never contained the edge cases where screening breaks down, your trust is built on a foundation that has not been tested. Born-Synthetic data does not make your product better. It proves your product works — against the profiles that actually matter.

Stress-Test Your Sanctions Screening

Download 100 free KYC-Enhanced UHNWI profiles with sanctions screening signals, PEP indicators, and multi-jurisdictional exposure. Run them through your screening engine. Count the false positives. Count the missed alerts. Count the jurisdiction combinations your engine has never encountered.

That gap between your current test results and these results is the gap your clients will discover in production. Better to find it now.

No credit card. No sales call. Just your work email.

Frequently Asked Questions

How does synthetic sanctions screening data help RegTech providers demonstrate their engine’s accuracy to prospective clients?

RegTech vendors need controlled, repeatable test environments to show hit rates, false positive ratios, and fuzzy-matching precision without exposing real customer data. Sovereign Forger’s born-synthetic profiles include deliberate near-matches against OFAC SDN, EU Consolidated, and UN Security Council lists, alongside clear-pass profiles and edge cases like name transliterations and partial DOB matches. Vendors can run the same fixed dataset across multiple prospect demos, producing consistent metrics that support EU AI Act Art. 10 requirements for representative test data in high-risk AI systems.

Why do neobanks use synthetic sanctions data to stress-test screening systems before going live?

Neobanks face disproportionate regulatory exposure: Starling Bank was fined £29M and N26 €9.2M for sanctions and AML control failures. Stress-testing with real customer data risks GDPR violations, while generic dummy data fails to surface realistic engine weaknesses. Synthetic profiles built from actual sanctions list distributions replicate the name-matching complexity, dual-nationality scenarios, and politically exposed person flags that cause live systems to miss hits or flood compliance teams with false positives, allowing remediation before production deployment.

What specific edge cases should sanctions screening test data include to expose engine gaps across multiple jurisdictions?

Effective test data must cover at least five failure categories: phonetic name variants for Russian, Iranian, and North Korean designated persons; entities with multiple transliterations of the same sanctioned name; individuals sharing names with sanctioned parties but with clean profiles; date-of-birth ranges used when exact dates are unknown on OFAC or EU lists; and beneficial ownership chains linking non-sanctioned individuals to sanctioned legal entities. Sovereign Forger profiles span OFAC SDN, EU Consolidated List, UK HM Treasury, and UN Security Council jurisdictions within a single interlocked dataset.

What does born-synthetic mean for sanctions screening test data, and why does it matter under GDPR?

Born-synthetic data is generated entirely from mathematical distributions, such as Pareto and log-normal models calibrated to real-world financial populations, with zero lineage to any living or deceased natural person. No real individual is anonymized, pseudonymized, or re-identified, which means the dataset satisfies GDPR Art. 25 data protection by design and by default at the point of creation, not as a post-processing step. For RegTech sanctions screening, this eliminates the legal risk of accidental exposure of genuine sanctioned-person data or real customer records during vendor testing and client demonstrations.

How can a RegTech team get started with synthetic sanctions screening data, and what does the free tier include?

Sovereign Forger provides instant access to 100 free KYC profiles upon registration with a work email address, with no credit card required and no sales process. Each profile includes 29 interlocked fields covering full name, residence and tax domicile, offshore jurisdiction and vehicle, KYC risk rating, PEP status, sanctions screening result, and source-of-wealth verification, all internally consistent across the record set. The free dataset is structured for direct ingestion into screening engines, enabling a functional proof-of-concept evaluation within hours of download.

Learn more about RegTech sanctions screening data and how Born-Synthetic data addresses this in our glossary and comparison guides.