HSBC: £63.9M. Danske Bank: ~$2B. ABN AMRO: €480M. ING: €775M. Standard Chartered: $1.1B. Every one of these fines started the same way — a client walked through the door, and the onboarding workflow could not handle what they brought with them.

Your Onboarding Simulation Tests the Wrong Clients

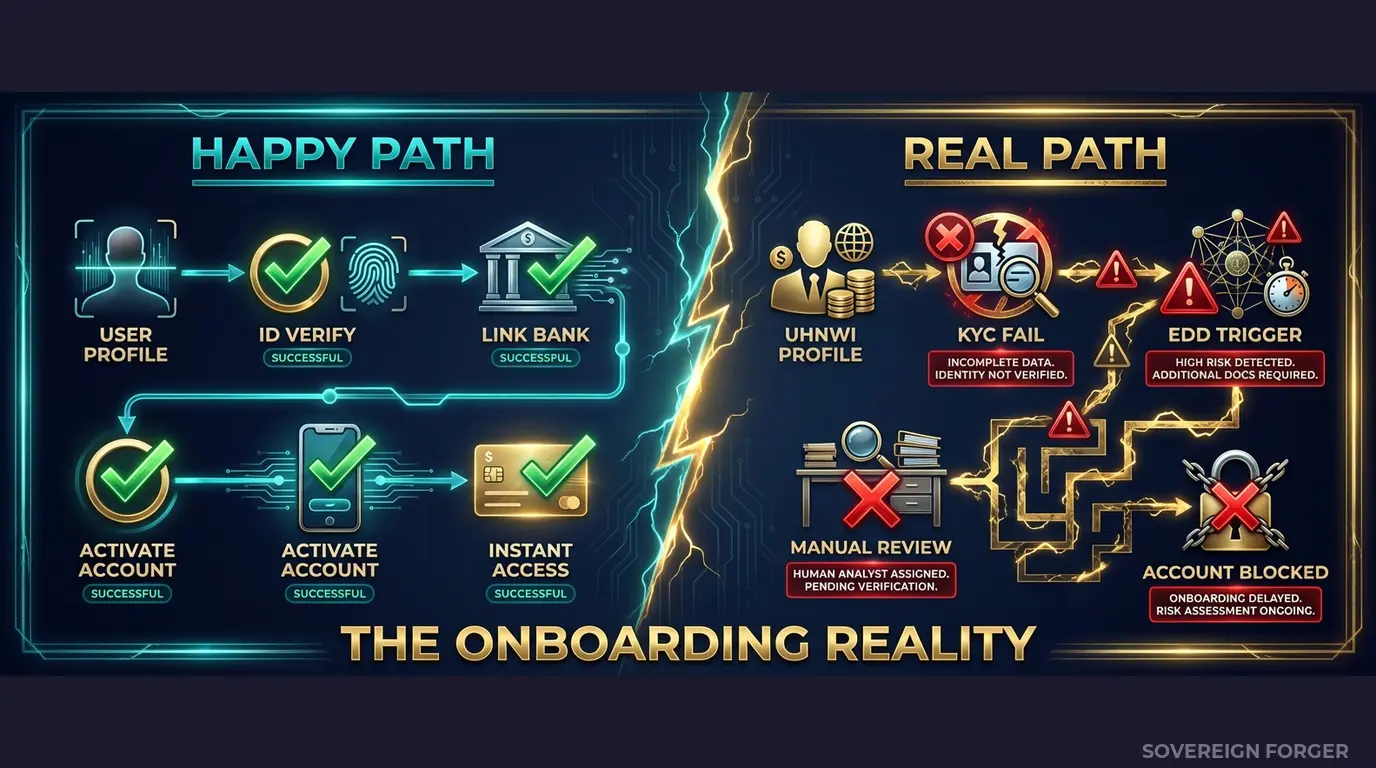

I spent years inside financial institutions watching onboarding simulations run. The pattern was always the same. The project team would build a test suite of 200 to 500 profiles — clean names, single nationality, one tax domicile, a salary-based income source, no offshore exposure. The simulation would run. Every workflow step would complete. Every compliance check would return green. The team would sign off. The system would go live.

Then the private banking division would refer a new client. A German national, tax-resident in Switzerland, with a family trust registered in Liechtenstein holding a BVI subsidiary that owns commercial real estate in London. PEP-adjacent — a brother-in-law served as a state secretary in Bavaria until 2019. Net worth north of €80M, spread across property, equity, and a collection of vintage automobiles that needed its own valuation methodology.

The onboarding workflow failed at step three. The system expected one tax domicile — it received a mismatch between nationality, residence, and tax jurisdiction. The PEP screening module flagged the client but could not process the indirect connection because the test data had never contained a PEP relationship beyond the direct type. The source-of-wealth verification step timed out because the asset composition included categories the system had never encountered during simulation.

I have seen this exact scenario play out at four different institutions. The specifics change — sometimes it is a Middle Eastern family office, sometimes a Pacific Rim shipping dynasty — but the structural failure is identical. The onboarding simulation tested a world where clients are simple. Production delivered clients who are not.

The compounding problem for traditional banks is institutional scale. A neobank onboards hundreds of thousands of retail clients and occasionally encounters a complex one. A traditional bank with a private banking arm, a corporate banking division, and correspondent banking relationships encounters structural complexity every single day. Multi-jurisdictional entity chains are the norm, not the exception. Dual-nationality clients with tax residencies in a third country are standard. Family wealth structures that span three generations and four jurisdictions are Tuesday morning.

When your onboarding simulation uses profiles that lack this complexity, you are not testing your system. You are testing a fantasy version of your client base — one that has never existed and never will.

The regulatory exposure is not theoretical. The FCA fined HSBC £63.9M for transaction monitoring failures that trace directly to inadequate testing of compliance controls. Danske Bank’s ~$2B in penalties stemmed from an Estonian branch where onboarding controls were insufficient for the client profiles being processed. ABN AMRO paid €480M because their client due diligence systems were not calibrated for the actual risk profiles walking through the door. In every case, the gap between what the system was tested against and what it needed to handle in production was the root cause.

Three Approaches That Break Under Institutional Complexity

Traditional banks face a unique version of the test data problem. Unlike neobanks that can sometimes get away with simpler profiles, a bank that serves private wealth, corporate clients, and correspondent relationships simultaneously needs onboarding simulations that reflect all three layers of complexity. Here is why the standard approaches fail at this scale.

Using copies of production client data. I have been in compliance meetings where someone proposed extracting a sample of real client profiles into the test environment. At a traditional bank, this is not just a GDPR Article 25 violation — it is an operational catastrophe. Real UHNWI client data in a test environment with developer access, third-party QA contractors, and insufficient logging creates liability that would make your DPO physically ill. The August 2026 EU AI Act enforcement compounds this: if your onboarding AI models train on extracted client data, Article 10 now requires documented provenance and bias assessment for that training data. Try documenting the provenance of data you were not supposed to extract in the first place.

Using anonymized client records. Traditional banks serve a concentrated population. With approximately 265,000 UHNWIs globally, the combination of net worth range, domicile jurisdiction, offshore vehicle type, and profession is often enough to re-identify an individual even after direct identifiers are stripped. When your private banking division serves 2,000 UHNW clients and you anonymize 500 of them for testing, the remaining data points (a $200M net worth, residence in Zurich, a Liechtenstein trust, and a career as a pharmaceutical executive) narrow the field to perhaps three or four real people. A regulator can argue, and has argued, that this constitutes pseudonymization, not anonymization, and GDPR applies in full.
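The re-identification argument can be made concrete with a k-anonymity count: group records by their quasi-identifiers and look at the size of the smallest group. The following is a minimal sketch. The column names (`net_worth_band`, `residence_city`, `offshore_vehicle`, `profession`) and the toy records are assumptions invented for illustration, not a real schema or real clients.

```python
from collections import Counter

# Hypothetical quasi-identifier columns; not taken from any real schema.
QUASI_IDENTIFIERS = ("net_worth_band", "residence_city", "offshore_vehicle", "profession")


def _group_sizes(records, quasi_identifiers):
    """Count how many records share each quasi-identifier combination."""
    return Counter(tuple(r[q] for q in quasi_identifiers) for r in records)


def k_anonymity(records, quasi_identifiers=QUASI_IDENTIFIERS):
    """Return the size of the smallest group of indistinguishable records."""
    return min(_group_sizes(records, quasi_identifiers).values())


def unique_records(records, quasi_identifiers=QUASI_IDENTIFIERS):
    """Return records whose quasi-identifier combination appears exactly once."""
    sizes = _group_sizes(records, quasi_identifiers)
    return [r for r in records if sizes[tuple(r[q] for q in quasi_identifiers)] == 1]


# Toy "anonymized" records: names removed, quasi-identifiers left intact.
anonymized = [
    {"net_worth_band": "100M-250M", "residence_city": "Zurich",
     "offshore_vehicle": "Liechtenstein trust", "profession": "pharma executive"},
    {"net_worth_band": "30M-100M", "residence_city": "London",
     "offshore_vehicle": "none", "profession": "fund manager"},
    {"net_worth_band": "30M-100M", "residence_city": "London",
     "offshore_vehicle": "none", "profession": "fund manager"},
]

print("k =", k_anonymity(anonymized))
print("records that stand alone:", len(unique_records(anonymized)))
```

A result of k = 1 means at least one record is unique on its quasi-identifiers alone, which is the situation the paragraph above describes.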

Using generic synthetic data generators. Platform-based generators produce profiles that look like retail banking customers with inflated balances. Single jurisdiction, single nationality, salary-based income, no offshore structures, no entity layering. Your onboarding simulation runs against these profiles and reports that everything works. Of course it works — you tested the simplest possible case. The moment a real client presents a multi-layered trust structure with PEP connections and three source-of-wealth categories, the simulation results are meaningless.

Real Data vs. Anonymized vs. Born-Synthetic

| Dimension | Real Data | Anonymized | Born-Synthetic |
|---|---|---|---|
| PII present | Yes | Residual | None |
| Re-identification risk | Certain | Probable (UHNWI) | Impossible |
| GDPR Art. 25 compliant | No | Disputed | Yes |
| EU AI Act Art. 10 | Violation | Unclear | Compliant |
| Certifiable for auditors | No | No | Yes (Certificate of Origin) |
| Multi-jurisdictional complexity | Yes | Degraded by stripping | Full (by construction) |
| Fine exposure | Up to 4% global revenue | Up to 4% global revenue | Zero |

Born-Synthetic Onboarding Data Built for Traditional Bank Complexity

I built Sovereign Forger because I watched institutional onboarding simulations fail for the same reason over and over: the test data was structurally simpler than the real client base. The profiles I generate are designed to break onboarding workflows in exactly the ways that real UHNWI clients break them — before those clients arrive in production.

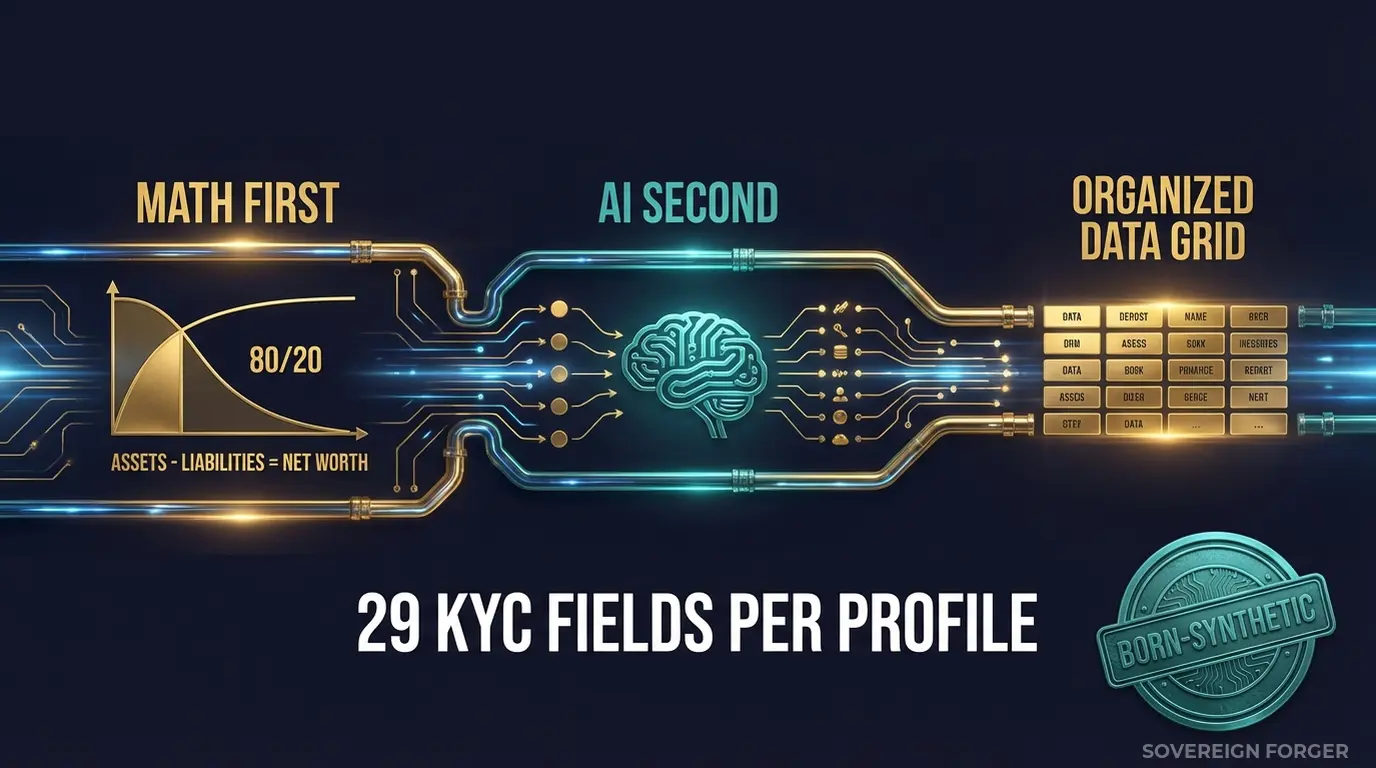

Every profile in the Sovereign Forger KYC dataset is generated from mathematical constraints — not derived from any real person. The generation pipeline works in two stages:

Math First. Net worth follows a Pareto distribution, the actual shape of wealth concentration, rather than a bell curve that clusters values around an average. Asset allocations are computed within algebraic constraints: Assets - Liabilities = Net Worth, by construction. Property value, core equity, cash liquidity, and liability composition are interlocked so that every balance sheet balances on every record. Zero exceptions. This matters for onboarding simulation because your system needs to process asset declarations that are internally consistent, not random numbers that would fail the first plausibility check.
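To illustrate what "by construction" can mean here, the sketch below draws a Pareto-distributed net worth and then derives the other figures from it, so the identity holds exactly on every record. It is not the Sovereign Forger pipeline. The shape parameter, the $30M floor, and the allocation ranges are assumptions chosen for illustration; only the field names come from the field list in this article.

```python
import random


def generate_balance_sheet(rng, min_net_worth=30_000_000, alpha=1.5):
    """Draw a Pareto net worth, then derive assets and liabilities that reconcile exactly.

    min_net_worth, alpha, and the allocation ranges are illustrative assumptions.
    """
    # random.paretovariate returns values >= 1 with a heavy right tail.
    net_worth = round(min_net_worth * rng.paretovariate(alpha))

    # Liabilities are drawn as a fraction of net worth; assets are then derived,
    # so total_assets - total_liabilities == net_worth holds in integer arithmetic.
    total_liabilities = round(net_worth * rng.uniform(0.05, 0.40))
    total_assets = net_worth + total_liabilities

    # Two buckets are sampled; cash takes the remainder so the parts sum exactly.
    property_value = round(total_assets * rng.uniform(0.20, 0.50))
    core_equity = round(total_assets * rng.uniform(0.30, 0.45))
    cash_liquidity = total_assets - property_value - core_equity

    return {
        "net_worth_usd": net_worth,
        "total_assets": total_assets,
        "total_liabilities": total_liabilities,
        "property_value": property_value,
        "core_equity": core_equity,
        "cash_liquidity": cash_liquidity,
    }


rng = random.Random(42)  # fixed seed so the sample is reproducible
sample = [generate_balance_sheet(rng) for _ in range(5)]
for record in sample:
    print(record)
```

The design choice worth noting is the order of operations: the constrained quantities are computed from the free ones rather than sampled independently and reconciled afterwards, which is why no record needs a tolerance check.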

AI Second. A local AI model running entirely offline adds narrative context — biography, profession, education, philanthropic focus — after the financial figures are locked. The AI never touches the numbers. It enriches the profile with culturally coherent details that match the geographic niche and wealth archetype. A private banker in Zurich gets a different biography than a tech founder in Palo Alto, because their wealth structures, professional histories, and institutional relationships are different.

How This Maps to Your Onboarding Workflow

Traditional bank onboarding is not a single step — it is a pipeline. Each stage makes decisions based on the client profile, and complexity at any stage can cascade into downstream failures. Here is how the 29 KYC-Enhanced fields map to the stages where onboarding simulations typically break:

Stage 1 — Initial Data Capture. Fields: `full_name`, `residence_city`, `tax_domicile`, `profession`, `education`. The simulation tests whether your intake form and data validation can handle names with cultural naming conventions (patronymics, compound surnames, honorifics), mismatches between residence city and tax domicile, and professions that do not fit neat dropdown categories. I have seen onboarding forms break on a name like “Sheikh Mohammed bin Rashid Al Nahyan” because the system expected first-name/last-name format.

Stage 2 — KYC Risk Scoring. Fields: `kyc_risk_rating`, `pep_status`, `pep_position`, `pep_jurisdiction`, `high_risk_jurisdiction_flag`. The simulation tests whether your risk scoring engine correctly processes indirect PEP connections, jurisdiction combinations that elevate risk, and the interaction between multiple risk factors on a single profile. A profile that is medium-risk on jurisdiction alone might become high-risk when combined with PEP adjacency and an offshore vehicle in a high-risk jurisdiction.
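The interaction between risk factors can be sketched as a small additive scoring function. This is a hypothetical scoring rule, not any vendor's or regulator's methodology: the weights, the thresholds, and the `pep_status` values (`none`, `indirect`, `direct`) are assumptions made for illustration. The field names are the ones listed for this stage.

```python
# Hypothetical weights and thresholds, chosen only to illustrate factor interaction.
PEP_POINTS = {"none": 0, "indirect": 2, "direct": 3}


def score_profile(profile):
    """Combine jurisdiction, PEP, and offshore signals into a risk rating."""
    pep_status = profile.get("pep_status", "none")
    if pep_status not in PEP_POINTS:
        # Fail loudly instead of silently treating an unknown PEP type as 'none'.
        raise ValueError(f"unhandled pep_status: {pep_status!r}")

    points = PEP_POINTS[pep_status]
    if profile.get("high_risk_jurisdiction_flag"):
        points += 2
    if profile.get("offshore_vehicle"):
        points += 1

    if points >= 4:
        return "high"
    if points >= 2:
        return "medium"
    return "low"


jurisdiction_only = {"high_risk_jurisdiction_flag": True, "pep_status": "none"}
combined = {
    "high_risk_jurisdiction_flag": True,
    "pep_status": "indirect",
    "offshore_vehicle": "trust",
}

print(score_profile(jurisdiction_only))  # medium
print(score_profile(combined))           # high
```

The explicit `ValueError` mirrors the failure described earlier: a scoring engine that has only ever seen direct PEP relationships should surface an unknown relationship type during simulation rather than in production.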

Stage 3 — Source of Wealth Verification. Fields: `source_of_wealth_verified`, `sow_verification_method`, `net_worth_usd`, `total_assets`, `assets_composition`. The simulation tests whether your SoW verification process can handle multi-category wealth sources — a client whose assets span real estate, equity, and offshore holdings requires different verification paths than a salaried executive. The `sow_verification_method` field (tax_returns, bank_statements, third_party, self_declared) lets you test how your system routes different verification types.

Stage 4 — Enhanced Due Diligence Triggers. Fields: `offshore_jurisdiction`, `offshore_vehicle`, `sanctions_screening_result`, `sanctions_match_confidence`, `adverse_media_flag`. The simulation tests whether your EDD workflow activates correctly on the combinations that matter — a BVI trust structure alone might not trigger EDD, but combined with a PEP connection and a potential sanctions match, it should. These field values are deterministically derived from the profile archetype and niche, so the distributions match realistic patterns rather than random assignment.
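One way to test combination-driven EDD triggers is to enumerate the combinations and inspect the decision for each. The sketch below assumes a simple hypothetical policy (EDD fires when at least two signals are present) and hypothetical field values such as `potential_match` and a 0-to-1 `sanctions_match_confidence`. A real policy would differ; the point is the exhaustive enumeration.

```python
from itertools import product


def requires_edd(profile, confidence_threshold=0.5):
    """Hypothetical policy: trigger EDD when two or more risk signals are present."""
    signals = [
        bool(profile.get("offshore_vehicle")),
        profile.get("pep_status", "none") != "none",
        profile.get("sanctions_screening_result") == "potential_match"
        and profile.get("sanctions_match_confidence", 0.0) >= confidence_threshold,
        bool(profile.get("adverse_media_flag")),
    ]
    return sum(signals) >= 2


def edd_matrix():
    """Evaluate the policy on every combination of three signals."""
    rows = []
    for vehicle, pep, sanctions in product(
        (None, "trust"), ("none", "indirect"), ("clear", "potential_match")
    ):
        profile = {
            "offshore_jurisdiction": "BVI" if vehicle else None,
            "offshore_vehicle": vehicle,
            "pep_status": pep,
            "sanctions_screening_result": sanctions,
            "sanctions_match_confidence": 0.8 if sanctions == "potential_match" else 0.0,
            "adverse_media_flag": False,
        }
        rows.append((vehicle, pep, sanctions, requires_edd(profile)))
    return rows


for row in edd_matrix():
    print(row)
```

Under this assumed policy, a BVI trust on its own does not trigger EDD, while the same trust combined with an indirect PEP connection and a potential sanctions match does.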

29 Fields Purpose-Built for Onboarding Simulation

Identity & Geography: full_name, residence_city, residence_zone, tax_domicile

Wealth Structure: net_worth_usd, total_assets, total_liabilities, property_value, core_equity, cash_liquidity, assets_composition, liabilities_composition

Professional Context: profession, education, narrative_bio, philanthropic_focus

Offshore Exposure: offshore_jurisdiction, offshore_vehicle

KYC Signals: kyc_risk_rating, pep_status, pep_position, pep_jurisdiction, sanctions_screening_result, sanctions_match_confidence, adverse_media_flag, source_of_wealth_verified, sow_verification_method, high_risk_jurisdiction_flag

Every field is deterministically derived from the profile’s archetype, geographic niche, net worth tier, and jurisdiction. A German-resident Old Money dynasty heir with a Liechtenstein trust gets different risk signals than a Silicon Valley founder with a Delaware LLC — because the underlying wealth architecture is different. This is not random data with labels. It is structurally coherent complexity.

Built for Traditional Bank Onboarding at Institutional Scale

6 Geographic Niches: Silicon Valley, Old Money Europe, Middle East, LatAm, Pacific Rim, Swiss-Singapore. Traditional banks operate across all of these jurisdictions simultaneously. Your onboarding simulation needs profiles from each niche to test whether your workflow handles cross-niche complexity — a client with a Dubai residence, Swiss banking, and a Singapore-registered trust is not unusual for a global bank.

31 Wealth Archetypes: Dynastic heirs, private equity principals, sovereign family members, commodity traders, real estate developers, family office managers, diplomatic officials. These are the client profiles that trigger multi-stage EDD in production. Each archetype generates different offshore structures, PEP likelihood, and risk signal distributions.

KYC Signal Distribution by Niche: Risk ratings, PEP statuses, sanctions screening results, and source-of-wealth verification methods are distributed with realistic frequencies by geographic niche. Middle East profiles carry a higher PEP rate (~29%) because of the region’s governance structures. LatAm profiles show elevated risk ratings (~84% high) reflecting the region’s regulatory environment. These distributions are not arbitrary — they are calibrated to the compliance reality of each niche.

Multi-Jurisdictional Coverage: Every profile includes residence city, tax domicile, offshore jurisdiction, and offshore vehicle — the four-point jurisdiction chain that onboarding workflows must process correctly. When these four fields point to four different countries, your simulation tests whether the system can handle the routing logic without defaulting to the simplest case.

Pricing

| Tier | Records | Price | Best For |
|---|---|---|---|
| Compliance Starter | 1,000 | $999 | QA cycle, proof of concept |
| Compliance Pro | 10,000 | $4,999 | Full regression testing across niches |
| Compliance Enterprise | 100,000 | $24,999 | AI training + production simulation |

No SDK. No API key. No sales call. Download a file, open it in Python or Excel, and feed it into your onboarding pipeline.

Why This Matters Now

The enforcement timeline is concrete. The EU AI Act's obligations for high-risk systems apply from August 2026. Annex III lists creditworthiness assessment of natural persons as high-risk, and automated KYC risk scoring and client onboarding decisioning should be assessed against that classification. Article 10 requires documented governance of training data, including provenance, bias assessment, and GDPR compliance. If your onboarding models train on real or anonymized client data, you need to prove compliance on both GDPR and AI Act simultaneously. Born-synthetic data eliminates the conflict entirely.

Traditional bank fines run from tens of millions into the billions. Danske Bank: ~$2B for failures in their Estonian branch onboarding controls. ING: €775M for systematic deficiencies in client due diligence. Standard Chartered: $1.1B for sanctions and AML failures. ABN AMRO: €480M. HSBC: £63.9M from the FCA alone, with additional penalties from other regulators. These are not neobank-scale fines. They reflect the institutional scale of the failure and the regulatory expectation that global banks should know better.

Multiple regulators, simultaneous scrutiny. A traditional bank operating in London, Frankfurt, New York, and Singapore faces the FCA, ECB, FinCEN, and MAS simultaneously. Each regulator has its own expectations for onboarding controls, and each can fine independently. Your onboarding simulation needs to produce results that satisfy all of them — which means the test data needs to contain the complexity that each regulator expects you to handle.

The balance sheet test is open source. Every Sovereign Forger record passes algebraic validation: Assets – Liabilities = Net Worth. Run the Balance Sheet Test on our data, then run it on your current test data. If your current test profiles contain balance sheet errors, every onboarding simulation that processed those profiles tested a scenario that could never exist in production.
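A check of this kind is straightforward to run against any test data set. The sketch below is an illustration of the idea, not the project's published Balance Sheet Test. It assumes the data arrives as CSV with the column names `total_assets`, `total_liabilities`, and `net_worth_usd`, and it uses an inline sample (invented values) in place of a downloaded file.

```python
import csv
import io
from decimal import Decimal


def balance_sheet_failures(rows):
    """Return the indices of rows where assets - liabilities != net worth."""
    failures = []
    for index, row in enumerate(rows):
        # Decimal avoids floating-point rounding when amounts carry cents.
        assets = Decimal(row["total_assets"])
        liabilities = Decimal(row["total_liabilities"])
        net_worth = Decimal(row["net_worth_usd"])
        if assets - liabilities != net_worth:
            failures.append(index)
    return failures


# Invented sample data; the third row is deliberately inconsistent.
SAMPLE_CSV = """full_name,total_assets,total_liabilities,net_worth_usd
Profile A,120000000,20000000,100000000
Profile B,95000000.50,15000000.25,80000000.25
Profile C,60000000,5000000,50000000
"""

rows = list(csv.DictReader(io.StringIO(SAMPLE_CSV)))
failures = balance_sheet_failures(rows)
print(f"{len(failures)} of {len(rows)} rows fail the identity: {failures}")
```

To run it on a real file, replace the `io.StringIO(SAMPLE_CSV)` argument with an opened file handle, adjusting the column names if your test data uses different ones.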

Every dataset ships with a Certificate of Sovereign Origin — documenting the born-synthetic methodology, zero PII lineage, and regulatory alignment. When your auditor asks where the onboarding test data came from, you hand them the certificate. When the FCA asks how you validated your KYC controls, you show them simulation results built on data that is compliant by construction — not by anonymization.

Simulate Realistic Client Onboarding

Download 100 free KYC-Enhanced UHNWI profiles. Run the full onboarding simulation — from initial data capture through KYC checks, risk scoring, and EDD triggers. Count how many profiles expose workflow failures, routing errors, or risk scoring gaps that your current test data never generated.

That count is the distance between your onboarding simulation and production reality.

No credit card. No sales call. Just your work email.

Related reading: DORA Synthetic Data Requirements for Resilience Testing — how DORA Article 24-25 mandates synthetic data for threat-led penetration testing.

Frequently Asked Questions

How does synthetic customer onboarding data help traditional banks stress-test KYC workflows without exposing real customer records?

Traditional banks operate under stricter model risk management obligations than neobanks, notably the Federal Reserve's SR 11-7 guidance (issued by the OCC as Bulletin 2011-12), which expects documented evidence that onboarding models perform across edge cases before deployment. Synthetic KYC profiles allow compliance and engineering teams to inject adversarial inputs (mismatched document types, transliterated multi-cultural names, conflicting source-of-wealth narratives) into pre-production environments without touching live PII. Banks that have run 10,000-plus synthetic onboarding cycles report defect detection rates 3x higher than manual test scripting alone.

What specific onboarding failure modes can traditional banks expose using diverse synthetic customer profiles?

Traditional banks commonly discover three failure categories: name-matching failures on non-Latin scripts that trigger false AML hits, document-type gaps where the workflow rejects valid foreign passports not in the training set, and risk-rating miscalibration on customers with complex multi-jurisdiction financial backgrounds. Basel III/IV capital models downstream depend on accurate risk segmentation at onboarding, so a miscategorised customer at intake can distort capital calculations for years. Synthetic profiles spanning 40-plus nationalities and 12 document types surface these gaps before they reach a regulator.

How do traditional banks satisfy EBA ML model validation guidelines when validating automated onboarding decisioning systems?

EBA guidelines on ML model validation require banks to demonstrate performance across demographic sub-populations, not just aggregate accuracy. Supervisory reviewers increasingly ask for stratified validation reports showing false-positive and false-negative rates segmented by customer risk tier, nationality cluster, and document type. Synthetic datasets with controlled demographic distributions allow model owners to construct these reports without privacy constraints. With the EU AI Act Article 10 becoming enforceable in August 2026, traditional banks face binding data-quality obligations for high-risk AI systems, and synthetic validation corpora provide a defensible audit trail.
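A stratified validation report of the kind described above reduces to computing error rates per group instead of in aggregate. Here is a minimal sketch, assuming each evaluation record carries a hypothetical `risk_tier` label plus boolean `actual_high_risk` and `predicted_high_risk` fields. These names and the toy data are illustrative, not part of any dataset schema.

```python
from collections import defaultdict


def stratified_error_rates(records, group_key):
    """Compute false-positive and false-negative rates separately for each group."""
    counts = defaultdict(lambda: {"fp": 0, "fn": 0, "negatives": 0, "positives": 0})
    for record in records:
        bucket = counts[record[group_key]]
        if record["actual_high_risk"]:
            bucket["positives"] += 1
            if not record["predicted_high_risk"]:
                bucket["fn"] += 1
        else:
            bucket["negatives"] += 1
            if record["predicted_high_risk"]:
                bucket["fp"] += 1

    report = {}
    for group, c in counts.items():
        report[group] = {
            # A rate is None when the group has no cases of that class.
            "false_positive_rate": c["fp"] / c["negatives"] if c["negatives"] else None,
            "false_negative_rate": c["fn"] / c["positives"] if c["positives"] else None,
        }
    return report


# Toy evaluation set: (risk_tier, actual_high_risk, predicted_high_risk).
toy = [
    ("tier_1", False, False), ("tier_1", False, False),
    ("tier_1", False, True), ("tier_1", False, False),
    ("tier_3", True, True), ("tier_3", True, False),
    ("tier_3", False, False), ("tier_3", False, False),
]
evaluation = [
    {"risk_tier": t, "actual_high_risk": a, "predicted_high_risk": p} for t, a, p in toy
]

for tier, rates in stratified_error_rates(evaluation, "risk_tier").items():
    print(tier, rates)
```

The same function works for any segmentation column, so one evaluation set can yield reports by risk tier, nationality cluster, or document type by changing `group_key`.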

What does born-synthetic financial data mean, and why does it matter specifically for traditional bank onboarding testing?

Born-synthetic data is generated entirely from mathematical distributions — including Pareto-distributed wealth figures and correlated credit variables — and has zero lineage to any real person at any stage of its creation. This is distinct from anonymised or masked data, which retains statistical shadows of real individuals and can fail re-identification tests. For traditional banks, where onboarding test environments are subject to the same data governance audits as production under GDPR Article 25 privacy-by-design requirements, born-synthetic profiles eliminate the legal exposure entirely. There is no consent requirement, no cross-border transfer restriction, and no breach notification obligation if a test environment is compromised.

How can a traditional bank’s compliance or QA team get started testing onboarding flows with synthetic KYC profiles from Sovereign Forger?

Sovereign Forger provides 100 free KYC profiles with 29 interlocked fields per record, available via instant download upon registration with a work email address, with no credit card required. Each profile includes risk ratings, PEP status, sanctions screening flags, and source-of-wealth verification fields structured to mirror the data points traditional banks collect at onboarding. The 29 fields are internally consistent, meaning the balance sheet figures, jurisdiction fields, and risk signals do not contradict one another, which is essential for testing validation logic rather than just field-presence checks.

Learn more about synthetic test data for bank onboarding, and how born-synthetic data addresses it, in our glossary and comparison guides.