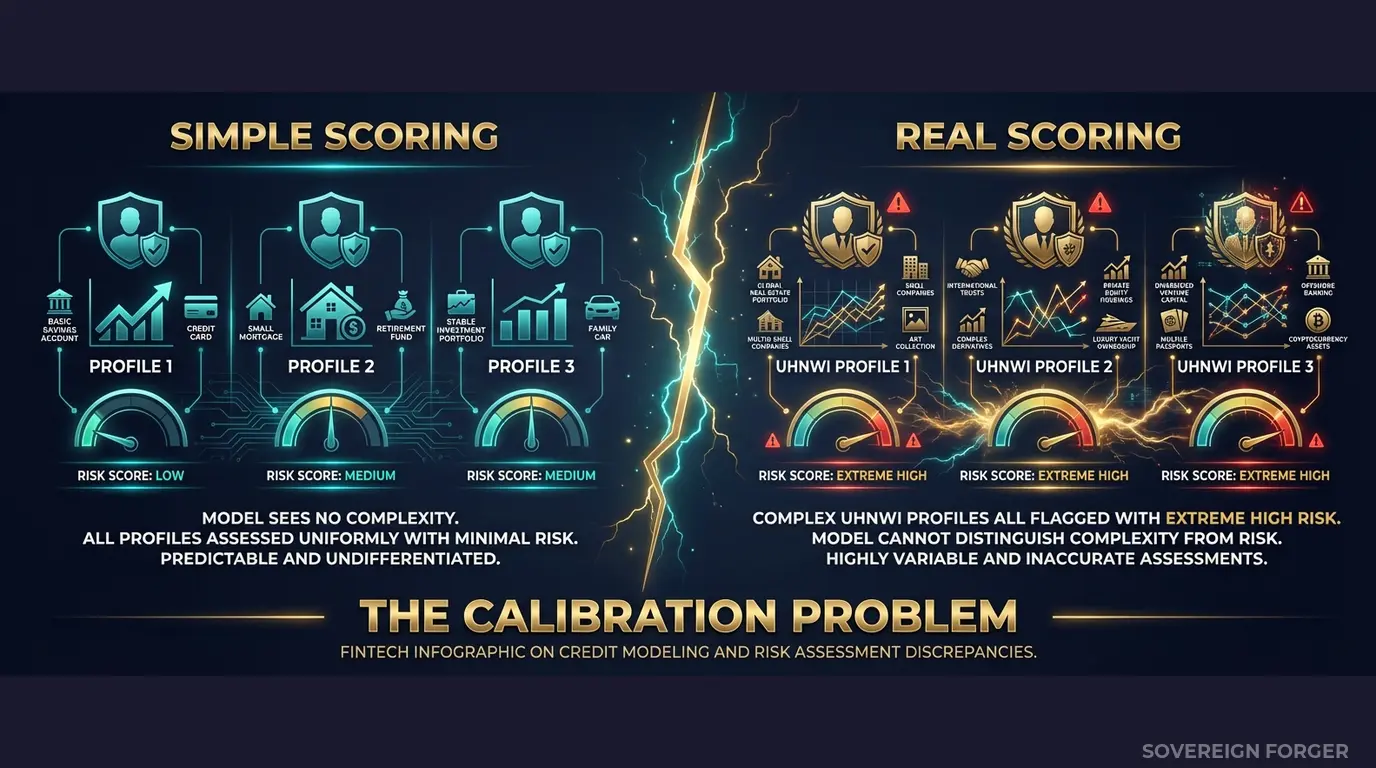

A tech founder with a Cayman trust, a Delaware holding company, and $180M in illiquid equity is not the same risk as a sanctioned shell entity moving money through the same jurisdictions. But if your risk model was trained on flat profiles with no offshore structures, it scores them identically: maximum risk, both of them. One is your most profitable client. The other is a compliance failure. Your model cannot tell the difference. Risk scoring synthetic data is built for exactly this scenario.

The False Positive Crisis No One Is Talking About

I have sat in rooms where a neobank compliance team celebrated their risk scoring model’s performance. Precision above 90%. Recall above 85%. Every metric on the dashboard green. The model was deployed to production, and within three months, the operations team was drowning.

Here is what happened. The model had been trained and validated on synthetic profiles that were structurally simple — single jurisdictions, no offshore vehicles, no PEP connections, net worth distributions that followed a bell curve. In that world, the model learned a clean rule: complexity equals risk. Any profile with more than one jurisdiction, any structure involving a trust or holding company, any connection to a politically exposed person — flag it.

Then real ultra-high-net-worth individuals (UHNWIs) started onboarding. A family office manager in Zurich with legitimate structures across four jurisdictions. A LatAm real estate developer with a BVI holding company, perfectly legal and perfectly standard for that wealth tier. A Pacific Rim semiconductor executive with a Singapore family trust and a US tax domicile. Every single one triggered a high-risk flag. Not because they were risky, but because the model had never seen a legitimate complex profile during training.

The result is a false positive rate that makes the entire risk scoring function operationally useless. I have seen neobanks where 70% of UHNWI risk alerts are false positives. Compliance analysts spend their days manually reviewing profiles that the model flagged simply because they had offshore exposure — which, for a UHNWI, is the norm, not the exception.

This creates two failures simultaneously. First, the genuine risks get buried under noise. When every complex profile is flagged, the analyst reviewing the fiftieth false positive of the day is not giving the fifty-first the same scrutiny. Alert fatigue is not a theoretical concern — it is the mechanism through which real financial crime slips through. Second, the client experience for legitimate UHNWIs becomes adversarial. Enhanced due diligence delays, repeated documentation requests, account restrictions — all triggered by a model that cannot distinguish between structural complexity and genuine risk.

The root cause is always the same: the risk scoring model was calibrated on data that did not contain legitimate examples of complex wealth. Without those examples in training, the model has no basis for learning that a Cayman Islands trust held by a tech founder with $200M in verified assets is categorically different from an opaque shell structure moving unexplained funds through the same jurisdiction.

This is not a model architecture problem. It is a training data problem. And it is the reason neobanks keep getting fined — not for lacking a risk model, but for deploying one that was never tested against the complexity it would actually encounter.

Starling Bank’s £29M fine. Revolut’s €3.5M penalty. Monzo’s £21M enforcement action. N26’s €9.2M fine. Block’s $120M settlement. Every one of these traces back to financial crime controls that failed under real-world complexity. The risk scoring models existed. They simply had never been exposed to the structural patterns that matter.

Three Approaches That Produce Broken Risk Models

Every neobank I have spoken with uses one of three approaches to generate risk scoring training data. All three produce the same failure mode — models that confuse complexity with danger.

Training on production client data. This is the most common approach, and the most legally dangerous. You extract real client profiles into your data science environment, strip the obvious identifiers, and train your risk model on the result. This creates two problems. First, GDPR Article 25 requires data protection by design, and copying real personal data into ML training environments with broader access, weaker controls, and insufficient audit trails violates that principle by construction. Second, with only 265,000 UHNWIs globally, the combination of net worth tier, jurisdiction, offshore vehicle type, and profession can uniquely identify individuals even without names or tax IDs. Your “anonymized” training data is not anonymous. Article 10 of the EU AI Act, whose high-risk obligations apply from August 2026, makes this doubly problematic. It requires documented governance of training data, including its origin, for high-risk AI systems. Annex III explicitly lists AI used to evaluate the creditworthiness of natural persons or establish their credit score as high-risk.

Using anonymized or pseudonymized data. The re-identification risk for UHNWI data is structural, not incidental. A profile with $180M net worth, a Cayman Islands trust, residence in Zurich, and a background in semiconductor manufacturing narrows the real-world population to single digits, possibly to one person. When the underlying population is this small, k-anonymity and differential privacy can only protect individuals by generalizing or adding noise to exactly the attributes a risk model needs. I have run re-identification attacks against anonymized UHNWI datasets using five publicly available attributes and achieved match rates above 60%. Regulators know this. A regulator can argue, and win, that your training data still contains personal data under the GDPR definition. If that happens, your entire model’s compliance posture collapses.
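You can measure this structural problem directly on your own data with a k-anonymity check. Group an anonymized extract by its quasi-identifiers, then count how many records sit alone in their group. The sketch below uses hypothetical column names and toy rows. It illustrates the technique only and does not reproduce the re-identification attacks described above.

```python
from collections import Counter

def k_anonymity_report(records, quasi_identifiers):
    """Group records by their quasi-identifier values.

    Returns the smallest group size (k) and the share of records that sit
    alone in their group, i.e. records that are unique on those attributes.
    """
    keys = [tuple(r.get(q) for q in quasi_identifiers) for r in records]
    if not keys:
        return {"k": 0, "unique_share": 0.0}
    group_sizes = Counter(keys)
    unique = sum(1 for key in keys if group_sizes[key] == 1)
    return {"k": min(group_sizes.values()), "unique_share": unique / len(keys)}

# Hypothetical, already "anonymized" rows: no names, no tax IDs.
rows = [
    {"net_worth_band": "100-250M", "offshore_vehicle": "trust", "residence_city": "Zurich", "profession": "semiconductors"},
    {"net_worth_band": "100-250M", "offshore_vehicle": "trust", "residence_city": "Zurich", "profession": "private banking"},
    {"net_worth_band": "50-100M", "offshore_vehicle": "holding", "residence_city": "Miami", "profession": "real estate"},
    {"net_worth_band": "50-100M", "offshore_vehicle": "holding", "residence_city": "Miami", "profession": "real estate"},
]

four_attrs = ["net_worth_band", "offshore_vehicle", "residence_city", "profession"]
print(k_anonymity_report(rows, four_attrs))        # {'k': 1, 'unique_share': 0.5}
print(k_anonymity_report(rows, four_attrs[:3]))    # {'k': 2, 'unique_share': 0.0}
```

Adding a single attribute (profession) turns half of these toy rows into unique records. The fewer people share a profile, the faster this happens.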

Using generic synthetic generators. Platform-based generators produce profiles that follow Gaussian distributions — bell curves where most profiles cluster around the mean. Real wealth follows a Pareto distribution: a long tail where the richest 1% hold more than the bottom 50%. A risk model trained on Gaussian data learns that $50M is extreme. In reality, $50M is the entry point for UHNWI — the distribution extends to billions. Your model over-flags profiles at the lower end of real wealth and has no calibration at the upper end where the actual compliance complexity lives. Worse, these generators produce single-jurisdiction profiles with no offshore structures, no PEP connections, and no multi-layered entity ownership. The model trains on retail banking customers with inflated numbers and learns nothing about the structural patterns that differentiate legitimate complexity from genuine risk.
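A quick simulation shows how differently the two shapes treat the tail. The sketch below compares the ratio of the 99th percentile to the median for a Pareto sample and a Gaussian sample. The $50M floor, the shape parameter of 1.16, and the Gaussian mean and spread are illustrative assumptions, not fitted values.

```python
import random
import statistics

def tail_ratio(samples):
    """99th percentile divided by the median: a rough gauge of tail heaviness."""
    cuts = statistics.quantiles(samples, n=100)  # 99 cut points; cuts[98] is the 99th percentile
    return cuts[98] / statistics.median(samples)

rng = random.Random(42)
N = 10_000
MIN_NET_WORTH = 50e6  # assumed UHNWI floor in USD
ALPHA = 1.16          # assumed Pareto shape (the classic "80/20" value)

pareto = [MIN_NET_WORTH * rng.paretovariate(ALPHA) for _ in range(N)]
gaussian = [rng.gauss(150e6, 30e6) for _ in range(N)]  # assumed mean and spread

print(f"Pareto   p99/median: {tail_ratio(pareto):.1f}")
print(f"Gaussian p99/median: {tail_ratio(gaussian):.1f}")
```

With these parameters, theory puts the Pareto ratio near 29 and the Gaussian ratio near 1.5. A threshold tuned on the Gaussian sample therefore treats anything a few multiples above the median as an outlier. In the Pareto sample, such profiles are routine.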

Real Data vs. Anonymized vs. Born-Synthetic for Risk Model Training

| Dimension | Real Data | Anonymized | Born-Synthetic |
|---|---|---|---|
| PII present | Yes | Residual | None |
| Re-identification risk | Certain | Probable (UHNWI) | Impossible |
| GDPR Art. 25 compliant | No | Disputed | Yes |
| EU AI Act Art. 10 (training data governance) | Violation | Unclear | Compliant |
| Wealth distribution | Real Pareto | Inherited Pareto | Modeled Pareto |
| Offshore/PEP complexity | Present but PII-linked | Present but identifiable | Present with zero lineage |
| Risk signal calibration | Biased by real outcomes | Biased by source data | Deterministic by archetype |
| Certifiable for auditors | No | No | Yes (Certificate of Origin) |
| Fine exposure | Up to 4% global revenue | Up to 4% global revenue | Zero |

Born-Synthetic Risk Scoring Data Built for Neobank Model Calibration

The core problem with risk scoring models is not the algorithm — it is the training distribution. If your model has never seen a legitimate high-complexity profile, it cannot learn to score complexity separately from risk. Sovereign Forger solves this at the data layer.

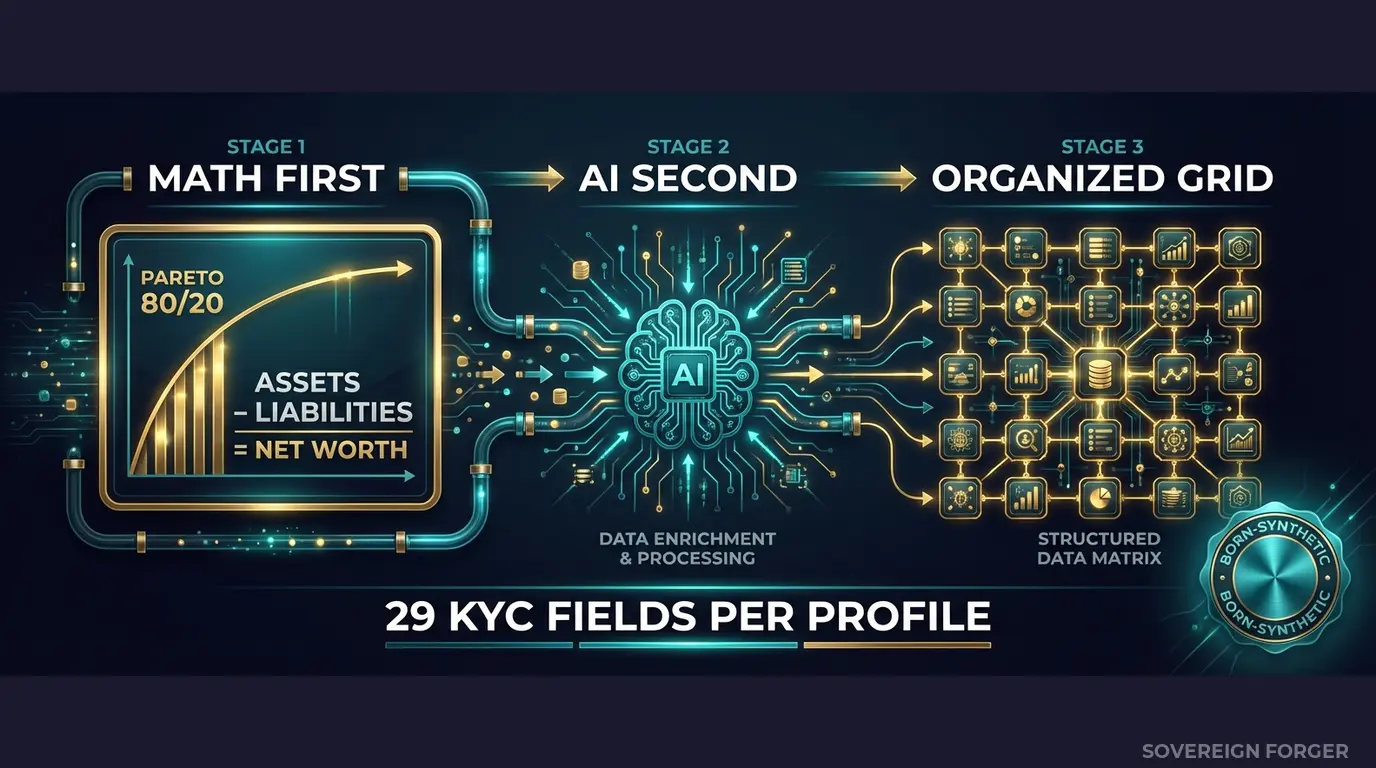

Every profile in the dataset is generated from mathematical constraints — not derived from any real person. The generation pipeline works in two stages:

Math First. Net worth follows a Pareto distribution — the actual shape of real wealth concentration. Asset allocations are computed within algebraic constraints: Assets – Liabilities = Net Worth, by construction. Every balance sheet balances on every record. Zero exceptions. This means your risk model trains on a wealth distribution that matches reality — not a bell curve that distorts every risk threshold.
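Here is a minimal sketch of the math-first idea. It assumes integer dollar amounts, a Pareto floor of $50M, and a liabilities-to-net-worth ratio drawn uniformly between 5% and 60%. All of these are hypothetical parameters, and Sovereign Forger's actual generator is not shown here. The key design choice is that assets are computed from net worth and liabilities rather than sampled, so the identity cannot fail.

```python
import random
from dataclasses import dataclass

@dataclass(frozen=True)
class BalanceSheet:
    net_worth_usd: int
    total_assets: int
    total_liabilities: int

def generate_balance_sheet(rng: random.Random, floor_usd: float = 50e6, alpha: float = 1.16) -> BalanceSheet:
    # Step 1: draw net worth from a Pareto tail above an assumed UHNWI floor.
    net_worth = round(floor_usd * rng.paretovariate(alpha))
    # Step 2: draw liabilities as an assumed fraction of net worth.
    leverage = rng.uniform(0.05, 0.60)
    liabilities = round(net_worth * leverage)
    # Step 3: assets are computed, never sampled, so the identity holds exactly.
    assets = net_worth + liabilities
    return BalanceSheet(net_worth, assets, liabilities)

rng = random.Random(7)
sheets = [generate_balance_sheet(rng) for _ in range(1_000)]
assert all(s.total_assets - s.total_liabilities == s.net_worth_usd for s in sheets)
```

Because every quantity is an integer and assets are derived, the check is an exact equality rather than a tolerance test.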

AI Second. A local AI model running entirely offline adds narrative context — biography, profession, philanthropic focus — after the financial figures are locked. The AI never touches the numbers. It enriches the profile with culturally coherent details that match the geographic niche and wealth tier. No data leaves the machine. No API calls. No cloud processing.
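One way to enforce "the AI never touches the numbers" in code is to freeze the financial block before enrichment. The narrative step then writes only to text fields. The sketch below shows that pattern, with a hypothetical `fake_local_model` standing in for the offline model. It is not the product's pipeline.

```python
from dataclasses import FrozenInstanceError, dataclass, replace
from typing import Callable

@dataclass(frozen=True)
class Financials:
    net_worth_usd: int
    total_assets: int
    total_liabilities: int

@dataclass(frozen=True)
class Profile:
    financials: Financials
    niche: str
    archetype: str
    narrative_bio: str = ""

def enrich(profile: Profile, narrate: Callable[[str, str, int], str]) -> Profile:
    """Stage 2: attach narrative text. The narrator receives read-only context
    and its output is written to the narrative field alone."""
    bio = narrate(profile.niche, profile.archetype, profile.financials.net_worth_usd)
    return replace(profile, narrative_bio=bio)

def fake_local_model(niche: str, archetype: str, net_worth_usd: int) -> str:
    # Hypothetical stand-in for an offline language model call.
    return f"{archetype.title()} based in the {niche} niche."

locked = Profile(Financials(180_000_000, 230_000_000, 50_000_000), "Silicon Valley", "tech founder")
enriched = enrich(locked, fake_local_model)
assert enriched.financials is locked.financials  # same locked object, untouched

try:
    enriched.financials.net_worth_usd = 1  # any attempt to edit the numbers fails
except FrozenInstanceError:
    print("financial figures are immutable")
```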

Why This Fixes Risk Scoring Specifically

The 29 KYC-Enhanced fields are designed to give your risk model the signal structure it needs to learn the difference between complexity and danger:

Risk rating distribution by niche. Each geographic niche has a different risk profile. LatAm profiles skew ~84% high-risk (reflecting real-world AML exposure in the region). Old Money Europe and Swiss-Singapore skew ~48% low-risk. Middle East has ~29% PEP status. These are not random assignments — they are deterministically derived from the archetype, jurisdiction, and wealth structure. Your model learns that a high-risk rating in a LatAm agribusiness context means something different than the same rating in a Swiss private banking context.
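If you want to confirm these per-niche baselines on the file you receive, a simple tally is enough. The sketch assumes each record carries a niche label under a hypothetical `niche` key. That label is not among the listed fields, so adjust `niche_key` to match your export.

```python
from collections import Counter, defaultdict

def rating_share_by_niche(records, niche_key="niche"):
    """Return {niche: {rating: share}} for the kyc_risk_rating field."""
    counts = defaultdict(Counter)
    for r in records:
        counts[r[niche_key]][r["kyc_risk_rating"]] += 1
    return {
        niche: {rating: n / sum(c.values()) for rating, n in c.items()}
        for niche, c in counts.items()
    }

# Toy records for illustration only.
sample = [
    {"niche": "LatAm", "kyc_risk_rating": "high"},
    {"niche": "LatAm", "kyc_risk_rating": "high"},
    {"niche": "LatAm", "kyc_risk_rating": "medium"},
    {"niche": "Swiss-Singapore", "kyc_risk_rating": "low"},
]
print(rating_share_by_niche(sample))
```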

PEP status with jurisdiction context. PEP profiles include `pep_position` and `pep_jurisdiction` — not just a boolean flag. A domestic PEP in the Middle East with a government role is a different risk signal than a foreign PEP connection through a family member in Europe. Your model gets the granularity to make that distinction.
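As a sketch of how that granularity can become model input, the function below expands the PEP fields into separate domestic and foreign indicators. It makes two assumptions about the data format, neither of which is documented behavior: `pep_status` is truthy for PEPs, and a PEP counts as domestic when `pep_jurisdiction` matches `tax_domicile`.

```python
def pep_features(record):
    """Expand the PEP fields into model features (value formats are assumptions)."""
    is_pep = bool(record.get("pep_status"))
    pep_jurisdiction = record.get("pep_jurisdiction")
    domestic = (
        is_pep
        and pep_jurisdiction is not None
        and pep_jurisdiction == record.get("tax_domicile")
    )
    return {
        "is_pep": int(is_pep),
        "pep_domestic": int(domestic),
        "pep_foreign": int(is_pep and not domestic),
        # Keep the role as a categorical feature instead of collapsing it to a boolean.
        "pep_position": record.get("pep_position") if is_pep else "none",
    }

print(pep_features({"pep_status": True, "pep_position": "Deputy Minister",
                    "pep_jurisdiction": "UAE", "tax_domicile": "UAE"}))
```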

Sanctions screening with confidence scores. `sanctions_screening_result` includes three states: clear, potential_match, and confirmed_match. Potential matches carry a `sanctions_match_confidence` score from 0-100. This teaches your model to handle the gray zone — where most real-world screening decisions actually happen.
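The sketch below turns the three screening states into features, so the model sees the gray zone as a graded signal instead of a binary hit. Mapping `clear` to 0 and `confirmed_match` to 100 is an illustrative assumption. The text only specifies confidence scores for potential matches.

```python
SANCTIONS_STATES = ("clear", "potential_match", "confirmed_match")

def sanctions_features(record):
    """One-hot the screening state and keep the match confidence as a 0-1 score."""
    result = record["sanctions_screening_result"]
    if result not in SANCTIONS_STATES:
        raise ValueError(f"unexpected sanctions state: {result!r}")
    if result == "potential_match":
        score = float(record["sanctions_match_confidence"])
        if not 0 <= score <= 100:
            raise ValueError(f"confidence out of range: {score}")
    else:
        # Assumption: clear maps to 0 and confirmed to 100, so the score stays monotone.
        score = 0.0 if result == "clear" else 100.0
    features = {f"sanctions_{state}": int(result == state) for state in SANCTIONS_STATES}
    features["sanctions_score"] = score / 100
    return features

print(sanctions_features({"sanctions_screening_result": "potential_match",
                          "sanctions_match_confidence": 42}))
```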

Source of wealth verification. `source_of_wealth_verified` and `sow_verification_method` (tax_returns, bank_statements, third_party, self_declared) create the signal gradient your model needs. A self-declared source of wealth from a high-risk jurisdiction is a fundamentally different risk signal than a third-party-verified source from a low-risk jurisdiction. Both exist in the dataset.
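The gradient can be encoded as an ordinal feature plus an interaction term with the high-risk jurisdiction flag. The ordering of verification methods below is a hypothetical policy choice: self-declared is weakest, third-party is strongest, and bank statements rank below tax returns. Adjust it to your own evidence standards.

```python
# Assumed ordering from weakest to strongest evidence; adjust to your own policy.
SOW_STRENGTH = {"self_declared": 0, "bank_statements": 1, "tax_returns": 2, "third_party": 3}

def sow_features(record):
    """Encode source-of-wealth verification as model features."""
    method = record["sow_verification_method"]
    if method not in SOW_STRENGTH:
        raise ValueError(f"unexpected verification method: {method!r}")
    strength = SOW_STRENGTH[method] / max(SOW_STRENGTH.values())  # scaled to 0..1
    high_risk = bool(record.get("high_risk_jurisdiction_flag"))
    return {
        "sow_verified": int(bool(record.get("source_of_wealth_verified"))),
        "sow_strength": strength,
        # Interaction term: the weakest evidence combined with high-risk exposure.
        "weak_sow_in_high_risk": int(high_risk and strength == 0),
    }

print(sow_features({"sow_verification_method": "self_declared",
                    "source_of_wealth_verified": False,
                    "high_risk_jurisdiction_flag": True}))
```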

High-risk jurisdiction flags with offshore context. `high_risk_jurisdiction_flag` is derived from `offshore_jurisdiction` and `tax_domicile` — not randomly assigned. A profile with a BVI offshore vehicle gets flagged because BVI is a high-risk jurisdiction, not because a random generator decided to set a boolean to true. Your model learns to associate the flag with the structural pattern, not just the flag value.
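The flag is described as derived from `offshore_jurisdiction` and `tax_domicile`. You can therefore recompute it against your own jurisdiction list and look for disagreements. The sketch below assumes jurisdictions are stored as plain strings such as "BVI". `MY_HIGH_RISK` and the sample rows are hypothetical.

```python
def expected_high_risk_flag(record, high_risk_jurisdictions):
    """Recompute the flag from the two fields it is said to be derived from."""
    return (record.get("offshore_jurisdiction") in high_risk_jurisdictions
            or record.get("tax_domicile") in high_risk_jurisdictions)

def flag_mismatches(records, high_risk_jurisdictions):
    """Indices of records whose stored flag disagrees with the recomputed one."""
    return [
        i for i, r in enumerate(records)
        if bool(r["high_risk_jurisdiction_flag"]) != expected_high_risk_flag(r, high_risk_jurisdictions)
    ]

# Hypothetical list for illustration; substitute your institution's own.
MY_HIGH_RISK = {"BVI"}
rows = [
    {"offshore_jurisdiction": "BVI", "tax_domicile": "Brazil", "high_risk_jurisdiction_flag": True},
    {"offshore_jurisdiction": None, "tax_domicile": "Switzerland", "high_risk_jurisdiction_flag": False},
    {"offshore_jurisdiction": "Cayman Islands", "tax_domicile": "US", "high_risk_jurisdiction_flag": True},
]
print(flag_mismatches(rows, MY_HIGH_RISK))
```

An empty result means the stored flags agree with your list. Any index returned points to a record where your list and the dataset's rules diverge, which is worth understanding before training.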

29 Fields Built for Risk Model Training

Identity & Geography: full_name, residence_city, residence_zone, tax_domicile

Wealth Structure: net_worth_usd, total_assets, total_liabilities, property_value, core_equity, cash_liquidity, assets_composition, liabilities_composition

Professional Context: profession, education, narrative_bio, philanthropic_focus

Offshore Exposure: offshore_jurisdiction, offshore_vehicle

KYC Signals: kyc_risk_rating, pep_status, pep_position, pep_jurisdiction, sanctions_screening_result, sanctions_match_confidence, adverse_media_flag, source_of_wealth_verified, sow_verification_method, high_risk_jurisdiction_flag

Every field is deterministically derived from the profile’s archetype, niche, net worth, and jurisdiction. The same UUID always produces the same KYC signals — reproducible across runs, auditable by construction.
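Reproducibility of this kind usually comes from seeding a random generator with a stable hash of the record's identifier. The sketch below shows that technique. The weight table is hypothetical apart from the LatAm and Swiss-Singapore shares quoted earlier, and it is not the vendor's actual derivation.

```python
import hashlib
import random
import uuid

# Hypothetical weights: only the LatAm "high" share (~84%) and the
# Swiss-Singapore "low" share (~48%) come from the text; the rest is illustrative.
RATING_WEIGHTS = {
    "LatAm": {"low": 0.04, "medium": 0.12, "high": 0.84},
    "Swiss-Singapore": {"low": 0.48, "medium": 0.37, "high": 0.15},
}

def derive_risk_rating(profile_id: uuid.UUID, niche: str) -> str:
    # Seed a private RNG from a stable SHA-256 digest of the UUID and niche.
    digest = hashlib.sha256(f"{profile_id}:{niche}".encode("utf-8")).digest()
    rng = random.Random(int.from_bytes(digest[:8], "big"))
    weights = RATING_WEIGHTS[niche]
    return rng.choices(list(weights), weights=list(weights.values()), k=1)[0]

pid = uuid.UUID("12345678-1234-5678-1234-567812345678")
assert derive_risk_rating(pid, "LatAm") == derive_risk_rating(pid, "LatAm")
```

SHA-256 is used instead of Python's built-in `hash()` because string hashing is randomized per process unless `PYTHONHASHSEED` is fixed. That randomization would break run-to-run reproducibility.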

Built for Neobank Risk Model Calibration at Scale

6 Geographic Niches: Silicon Valley, Old Money Europe, Middle East, LatAm, Pacific Rim, Swiss-Singapore — each with distinct risk distributions that reflect the real-world compliance landscape. Your model trains on the actual variety it will encounter, not a single homogeneous population.

31 Wealth Archetypes: Tech founders, private bankers, sovereign family members, commodity traders, family office managers, real estate developers — the actual client profiles that stress-test risk models. Each archetype carries distinct KYC signal patterns, so your model learns archetype-specific risk calibration.

Deterministic KYC Signals: Risk ratings, PEP statuses, sanctions results, adverse media flags, and source-of-wealth verification methods distributed with realistic frequencies by niche — not uniformly random. LatAm is not Europe. Middle East is not Pacific Rim. Your model learns regional risk baselines.

Pareto Wealth Distribution: Net worth follows the actual shape of UHNWI wealth concentration. Your risk thresholds calibrate against the long tail, not against a Gaussian artifact that does not exist in real wealth data.

Pricing

| Tier | Records | Price | Best For |
|---|---|---|---|
| Compliance Starter | 1,000 | $999 | Risk model proof of concept, threshold testing |
| Compliance Pro | 10,000 | $4,999 | Full model training + validation split |
| Compliance Enterprise | 100,000 | $24,999 | Production model training + ongoing calibration |

No SDK. No API key. No sales call. Download JSONL or CSV, load it into your ML pipeline, and start training. Every record passes algebraic balance validation — Assets minus Liabilities equals Net Worth on every row.
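Below is a minimal loading sketch for the JSONL export. It assumes one JSON object per line and uses the field names listed above. `parse_jsonl` accepts any iterable of lines, so it can be tested without a file. The filename in the comment is hypothetical.

```python
import json
from pathlib import Path

# Field names as listed in the field inventory above.
EXPECTED_FIELDS = {
    "full_name", "residence_city", "residence_zone", "tax_domicile",
    "net_worth_usd", "total_assets", "total_liabilities", "property_value",
    "core_equity", "cash_liquidity", "assets_composition", "liabilities_composition",
    "profession", "education", "narrative_bio", "philanthropic_focus",
    "offshore_jurisdiction", "offshore_vehicle",
    "kyc_risk_rating", "pep_status", "pep_position", "pep_jurisdiction",
    "sanctions_screening_result", "sanctions_match_confidence", "adverse_media_flag",
    "source_of_wealth_verified", "sow_verification_method", "high_risk_jurisdiction_flag",
}

def parse_jsonl(lines):
    """Parse one JSON object per non-blank line."""
    return [json.loads(line) for line in lines if line.strip()]

def load_jsonl(path):
    with Path(path).open(encoding="utf-8") as fh:
        return parse_jsonl(fh)

def missing_fields(record):
    """Listed fields that this record does not contain."""
    return EXPECTED_FIELDS - set(record)

# records = load_jsonl("uhnwi_sample.jsonl")  # hypothetical filename
# bad = [i for i, r in enumerate(records) if missing_fields(r)]
```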

Why This Matters Now

Risk scoring can fall under the EU AI Act’s high-risk regime. Annex III explicitly classifies AI systems used to evaluate the creditworthiness of natural persons or establish their credit score as high-risk. For those systems, Article 10 requires documented governance of training data. That governance covers data collection processes and origin (including, for personal data, the original purpose of collection), representativeness, and examination for possible biases. If your risk model trains on real client data or poorly anonymized data, you need to demonstrate compliance with both GDPR and the AI Act simultaneously. Born-synthetic data eliminates the entire question: zero PII means zero GDPR exposure, and the Certificate of Sovereign Origin documents provenance for Article 10.

The enforcement wave is here. FCA fined Starling Bank £29M for financial crime control failures. BaFin has been tightening pressure on N26 since 2021, culminating in a €9.2M penalty. The ECB is conducting targeted reviews of neobank risk frameworks. Block settled for $120M in the US. The pattern is clear — regulators are not waiting for incidents. They are proactively auditing risk scoring methodologies, and “we tested on simple profiles” is not an acceptable answer.

False positives have a measurable cost. Every false positive alert that a compliance analyst manually reviews costs between $30 and $75 in analyst time. At a 70% false positive rate on 10,000 UHNWI alerts per year, that is $210K-$525K annually in wasted operational cost — before accounting for client attrition from delayed onboarding or the opportunity cost of senior compliance staff drowning in noise instead of investigating genuine risks. Reducing false positives by even 30% through better model calibration pays for the training data many times over.
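The arithmetic above can be reproduced in a few lines. This sketch reads a 30% reduction as 30% fewer false-positive alerts, which takes the 70% rate to 49%. Under that reading, the annual savings come to roughly $63K to $157.5K.

```python
def annual_fp_cost(alerts_per_year: int, fp_rate: float, cost_per_review: float) -> float:
    """Analyst cost of reviewing false-positive alerts for one year."""
    false_positives = round(alerts_per_year * fp_rate)
    return false_positives * cost_per_review

ALERTS = 10_000
FP_RATE = 0.70

for cost in (30, 75):
    baseline = annual_fp_cost(ALERTS, FP_RATE, cost)
    improved = annual_fp_cost(ALERTS, FP_RATE * 0.7, cost)  # 30% fewer false positives
    print(f"${cost}/review: baseline ${baseline:,.0f}, saved ${baseline - improved:,.0f}")
```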

The balance sheet test is verifiable. Every Sovereign Forger record passes algebraic validation: Assets – Liabilities = Net Worth. Run the Balance Sheet Test on our data, then run it on your current risk scoring training data. If your current data does not pass this test, your model was trained on profiles where the numbers do not add up — and it learned from those inconsistencies.
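Below is a sketch of the Balance Sheet Test. You can point it at any training set whose records carry `total_assets`, `total_liabilities`, and `net_worth_usd`. The optional `abs_tol` is an assumption for data stored as floats. With integer amounts, the default of zero demands exact equality.

```python
import math

def balance_sheet_test(records, abs_tol=0.0):
    """Return indices of records where total_assets - total_liabilities != net_worth_usd."""
    return [
        i for i, r in enumerate(records)
        if not math.isclose(r["total_assets"] - r["total_liabilities"], r["net_worth_usd"],
                            rel_tol=0.0, abs_tol=abs_tol)
    ]

# Toy records: the second one does not balance.
data = [
    {"total_assets": 230, "total_liabilities": 50, "net_worth_usd": 180},
    {"total_assets": 230, "total_liabilities": 50, "net_worth_usd": 200},
]
failures = balance_sheet_test(data)
print(f"{len(failures)} of {len(data)} records fail: {failures}")
```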

Every dataset ships with a Certificate of Sovereign Origin — documenting the born-synthetic methodology, zero PII lineage, and regulatory alignment. When your model risk committee or external auditor asks “where did you source the training data for this risk model?”, you hand them the certificate. No legal review required. No data processing agreement. No GDPR impact assessment.

Calibrate Your Risk Scoring Models

Download 100 free KYC-Enhanced UHNWI profiles with deterministic risk signals. Run them through your risk scoring pipeline. Test whether your model can distinguish between structural complexity and genuine risk.

Count the false positives. Count the profiles your model scores as high-risk that are actually legitimate complex wealth. That number tells you how much of your compliance team’s time is being wasted — and how many genuine risks are getting buried under noise.
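Below is a sketch of that count. It rests on three illustrative conventions that are not part of the dataset's documentation:

- `predicted_high` holds your model's high-risk decisions in the same order as the records.
- A non-empty `offshore_vehicle` marks a structurally complex profile.
- Any `kyc_risk_rating` other than `high` counts as legitimate.

```python
def complexity_false_positives(records, predicted_high):
    """Among structurally complex profiles, count high-risk flags that the
    dataset's own kyc_risk_rating does not support."""
    if len(records) != len(predicted_high):
        raise ValueError("need one prediction per record")
    complex_total = flagged = false_positives = 0
    for record, is_flagged in zip(records, predicted_high):
        if not record.get("offshore_vehicle"):
            continue  # only look at profiles with an offshore structure
        complex_total += 1
        if is_flagged:
            flagged += 1
            if record["kyc_risk_rating"] != "high":
                false_positives += 1
    return {
        "complex_profiles": complex_total,
        "flagged": flagged,
        "false_positives": false_positives,
        "fp_share_of_flags": false_positives / flagged if flagged else 0.0,
    }
```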

No credit card. No sales call. Just your work email.

Frequently Asked Questions

How does synthetic KYC data help neobanks calibrate risk scoring models without triggering GDPR exposure?

Neobanks operating under GDPR Art.25 must embed data protection by design into every system, including the training pipelines for risk scoring algorithms. Using real customer records to calibrate low, medium, and high risk tiers creates residual liability at a time when regulators are scrutinizing neobank controls closely. Starling Bank’s £29M FCA fine (2024), for example, stemmed from financial crime control failures. Sovereign Forger’s born-synthetic profiles carry zero lineage to real persons, so risk model development, stress testing, and threshold tuning proceed without touching live PII.

What specific edge cases in neobank risk scoring are impossible to source from real transaction data but achievable with synthetic profiles?

Real customer datasets are systematically biased toward approved, low-risk profiles — rejected applicants and high-risk edge cases are either absent or legally inaccessible. Sovereign Forger generates profiles spanning all 3 risk tiers with deliberate edge cases: dual-nationality PEP customers with layered source-of-wealth claims, sanctioned-adjacent jurisdictions with thin transaction histories, and synthetic SME directors whose beneficial ownership structures trigger enhanced due diligence. These profiles stress-test scoring thresholds that real data cannot reach without regulatory exposure.

How do regulators expect neobank AI risk scoring systems to demonstrate training data governance, and what fines result from failures?

EU AI Act Art.10 obligations for high-risk systems apply from August 2026. Under Art.10, high-risk AI systems used in financial services must document training data collection and origin, assess whether the data is sufficiently representative, and examine it for possible biases. Monzo’s £21M fine (2025), Revolut’s €3.5M fine, N26’s €9.2M penalty, and Block’s $120M settlement each involved risk control failures partly attributable to model blind spots. Regulators are beginning to request training data inventories during examinations, making synthetic data with documented mathematical origins a defensible audit artifact.

What does born-synthetic mean for neobank risk scoring data, and why does the distinction from anonymized or pseudonymized data matter?

Born-synthetic data is generated entirely from mathematical distributions, including Pareto curves for wealth concentration and Zipf distributions for transaction frequency. It has no source records, no re-identification pathway, and no lineage to real persons. Anonymized and pseudonymized data derived from real customers carries residual re-identification risk. Under GDPR Recital 26, pseudonymized data, and any data that can still reasonably be linked back to a person, remains personal data. For neobank risk scoring, born-synthetic profiles from Sovereign Forger are GDPR Art.25 compliant by construction. That eliminates a legal ambiguity neobanks can ill afford while regulators are actively pursuing enforcement against firms such as Revolut and N26.

How can a neobank risk team get started validating Sovereign Forger profiles before committing to a full dataset purchase?

Sovereign Forger provides 100 free KYC profiles covering all three risk ratings — low, medium, and high — with 29 interlocked fields per record including PEP status, sanctions screening flags, jurisdiction codes, and source-of-wealth narratives. No credit card is required. Download is available instantly via work email verification. The sample set is designed to let risk model engineers test ingestion pipelines, verify field coherence, and assess coverage of the edge cases — such as dual-nationality PEP profiles and high-risk jurisdictions — that matter most for AML threshold calibration.
