Starling Bank: £29M. Revolut: €3.5M. Monzo: £21M. N26: €9.2M. Block: $120M. Behind each of these fines sits a set of financial crime controls, and the risk models underpinning them, that passed internal validation and then failed against a real-world distribution they were never tested on. This model validation data is built for exactly that scenario.

Your Validation Dataset Is Missing the Tail

I have reviewed model validation reports from neobanks where the risk model scored 94% accuracy on the test set and still missed every high-risk client that walked through the door in the first quarter after deployment. The reason was not the model. The reason was the validation data.

Here is the pattern I have seen repeat across every neobank I have worked with or studied. The data science team builds a credit risk model, or a KYC risk-scoring model, or a transaction monitoring model. They validate it against a holdout set drawn from the same distribution as the training data. The metrics look excellent — AUC above 0.9, precision and recall balanced, confusion matrix clean. The model ships.

Then the edge cases arrive. A tech founder with $200M in core equity, a Cayman trust, and a PEP-adjacent family member. A commodity trader with a $40M net worth, dual nationality, property across three jurisdictions, and a sanctions-screening result that comes back as “potential match” with 62% confidence. A private banker in Singapore with a Delaware LLC layered under a BVI holding company and a philanthropic foundation that routes donations through four countries.

These are not exotic outliers. These are the clients that generate 80% of compliance risk. They sit in the Pareto tail of the wealth distribution — the long right tail where net worth ranges from $30M to $2B, where offshore structures are the norm rather than the exception, and where the combination of jurisdiction, entity type, PEP status, and wealth tier creates a combinatorial space that your validation dataset never explored.

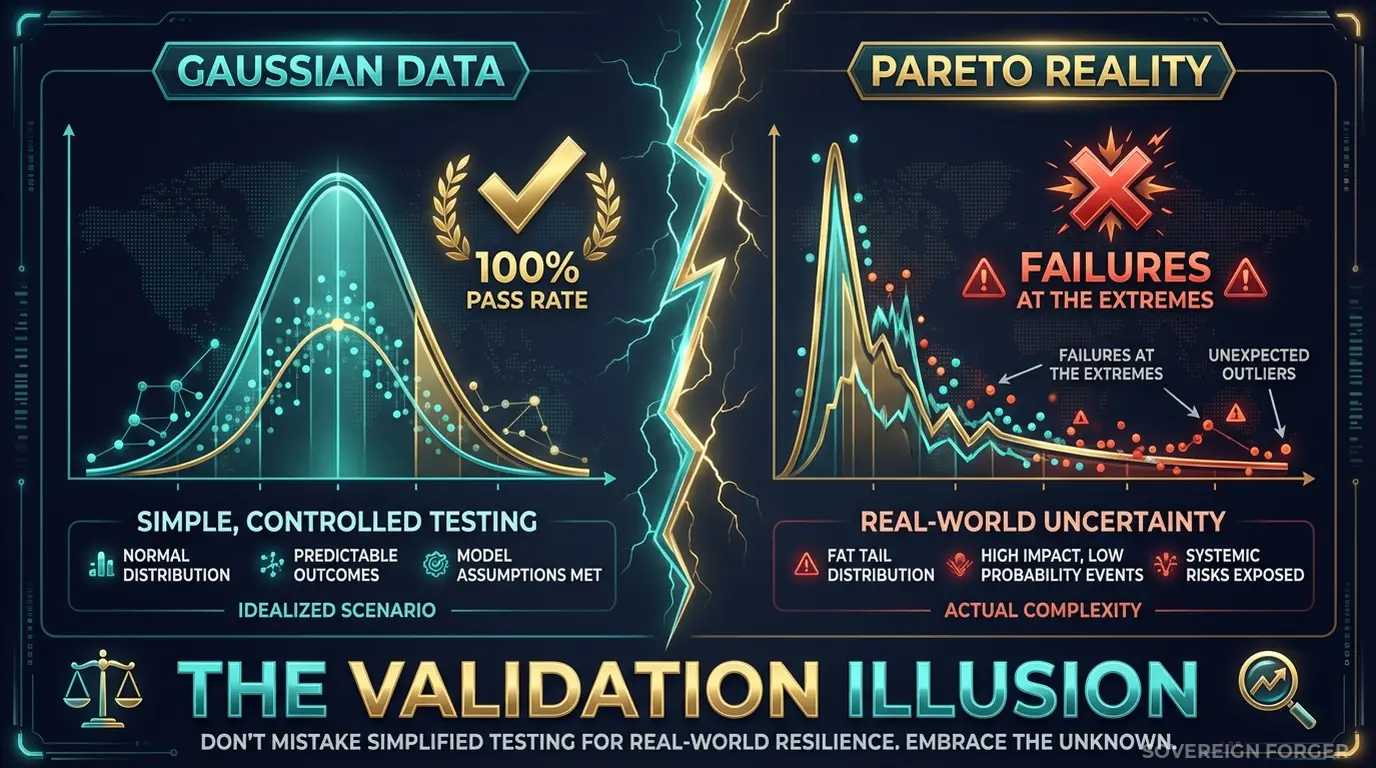

The core problem is distributional. Most neobank validation datasets are drawn from — or modeled after — the median client. Net worth under $1M. Single jurisdiction. No offshore vehicles. No PEP connections. Straightforward source of wealth. This covers 95% of your client volume but less than 20% of your compliance risk. Your model learns the center of the distribution and has zero signal about the tail.

This is why models pass validation and fail in production. The validation set does not contain the profiles that actually trigger model failures. You are measuring accuracy on the easy cases and declaring the model ready. The hard cases — the ones that generate fines — were never in the test set to begin with.
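
A toy example makes this failure mode concrete. The sketch below is a minimal illustration. It assumes each validation record carries a segment label such as "median" or "tail", which is a hypothetical tagging scheme and not a field in any dataset described here. It reports recall overall and per segment, so a strong headline number cannot hide a segment the model never catches.

```python
from collections import defaultdict

def recall_by_segment(records):
    """Recall (caught / actual high-risk) overall and per segment.

    Each record is a (segment, is_high_risk, flagged_by_model) tuple.
    """
    caught = defaultdict(int)
    actual = defaultdict(int)
    for segment, is_high_risk, flagged in records:
        if not is_high_risk:
            continue  # recall only looks at truly high-risk clients
        for key in ("ALL", segment):
            actual[key] += 1
            caught[key] += int(flagged)
    return {key: caught[key] / actual[key] for key in actual}

# Hypothetical toy data: 95 high-risk median clients the model flags,
# 5 high-risk tail clients it misses.
toy = [("median", True, True)] * 95 + [("tail", True, False)] * 5
print(recall_by_segment(toy))  # {'ALL': 0.95, 'median': 1.0, 'tail': 0.0}
```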

I built Sovereign Forger specifically because I watched this pattern destroy model confidence at three separate institutions. The model was never wrong. The validation data was incomplete.

Three Approaches That Leave the Tail Uncovered

Validating against a holdout from training data. This is the most common approach, and it has a structural flaw that no amount of cross-validation can fix. If your training data does not contain complex UHNWI profiles — multi-jurisdictional, PEP-adjacent, with layered offshore structures — then your holdout set does not contain them either. You are validating the model against data from the same incomplete distribution. The metrics are internally consistent and externally meaningless. Your model has never seen the Pareto tail, and your validation has never tested it there.
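
One cheap way to detect this flaw is to count validation records per net-worth tier and flag the tiers that are empty. The following is a minimal sketch. The tier edges and names are hypothetical and should follow your own risk policy.

```python
import bisect
from collections import Counter

# Hypothetical net-worth tier edges in USD (right-open intervals).
TIER_EDGES = [1e6, 30e6, 100e6, 500e6, 2e9]
TIER_NAMES = ["<1M", "1M-30M", "30M-100M", "100M-500M", "500M-2B", ">=2B"]

def tier_of(net_worth_usd):
    """Map a net worth to its tier name."""
    return TIER_NAMES[bisect.bisect_right(TIER_EDGES, net_worth_usd)]

def empty_tiers(net_worths):
    """Return the tiers that contain no validation records at all."""
    counts = Counter(tier_of(x) for x in net_worths)
    return [name for name in TIER_NAMES if counts[name] == 0]

# A holdout drawn from a median-client base leaves the whole tail empty.
print(empty_tiers([250_000, 800_000, 1_200_000, 4_000_000]))
```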

Using copies of production data for validation. Some teams pull real client data into validation environments. This creates two problems at once. First, GDPR Article 25 requires data protection by design and by default. Placing personal data in a validation environment with broader team access, weaker controls, and often insufficient logging is very difficult to reconcile with that requirement. Second, if your AI model is trained or validated on this data, EU AI Act Article 10, which applies to high-risk systems from August 2026, requires documented governance of training and validation data, including where that data came from. You need to demonstrate that the data is lawfully obtained, bias-assessed, and compliant. Real client data copied into a validation pipeline is hard to defend on all three counts.

Using generic synthetic generators. Platform-based synthetic data tools generate profiles that follow the distribution of whatever seed data you feed them. If you seed them with your existing client base, you get synthetic copies of the same distribution, complete with the same gaps in the tail. If you generate from scratch, most tools produce structurally flat profiles: single jurisdiction, no offshore exposure, uniform risk ratings. They produce a bell curve of wealth, even though real wealth follows a power law. Your model validates beautifully against these Gaussian profiles and has never encountered a Pareto-distributed portfolio.

Holdout Data vs. Production Copies vs. Born-Synthetic

| Dimension | Holdout from Training | Production Data | Born-Synthetic |
|---|---|---|---|
| Covers Pareto tail | Only if training data does | Partially | Yes, by construction |
| PII present | Depends on source | Yes | None |
| Re-identification risk | Depends on source | Certain | Impossible |
| GDPR Art. 25 compliant | Depends on source | No | Yes |
| EU AI Act Art. 10 | Depends on source | Violation | Compliant |
| Distribution shape | Mirrors training gaps | Mirrors client base | Pareto (realistic) |
| Certifiable for auditors | No | No | Yes (Certificate of Origin) |

Born-Synthetic Validation Data That Covers the Full Distribution

The reason most validation datasets miss the tail is that they are derived from data that already misses it. Sovereign Forger generates profiles from mathematical first principles — not from any existing dataset. The distribution is built in, not learned from incomplete observations.

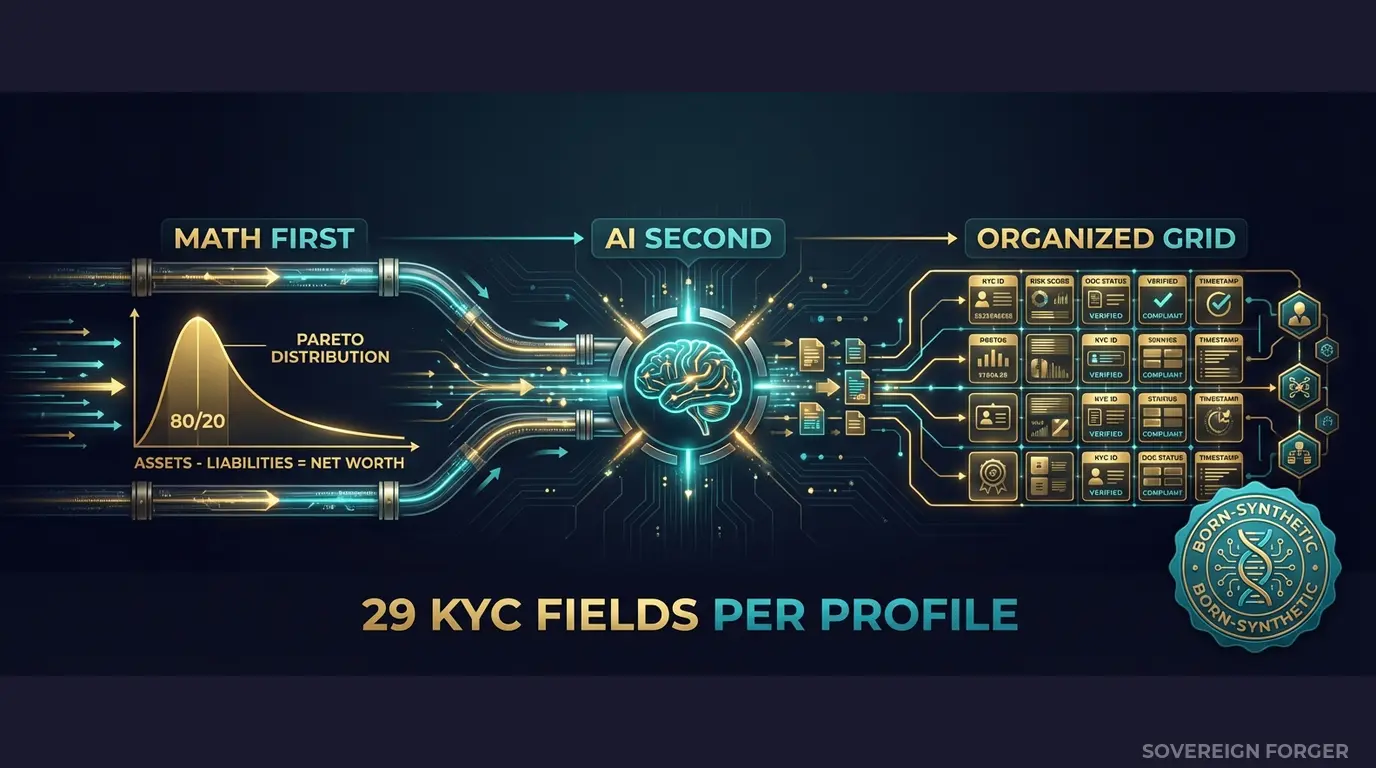

Math First. Every profile’s net worth follows a Pareto distribution. That is the shape real wealth actually follows, not a Gaussian approximation. This is not a cosmetic choice. It means the dataset contains the full range, from $30M profiles with straightforward structures to $2B profiles with multi-jurisdictional complexity. The tail is populated because the generative model produces it by construction.
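
A bounded Pareto can be sampled with the inverse-CDF method. The sketch below is an illustration under stated assumptions, not Sovereign Forger's generator. The article does not publish the shape parameter, so it uses alpha = 1.16 (the textbook "80/20" value) as a placeholder. The $30M floor and $2B cap come from the text.

```python
import random

def sample_truncated_pareto(n, alpha, x_min, x_max, seed=None):
    """Draw n values from a Pareto(alpha, x_min) truncated at x_max.

    Inverse-CDF sampling: with u ~ U[0, 1),
    x = x_min / (1 - u * (1 - (x_min / x_max) ** alpha)) ** (1 / alpha),
    which maps u = 0 to x_min and u -> 1 toward x_max.
    """
    rng = random.Random(seed)
    mass = 1.0 - (x_min / x_max) ** alpha  # probability mass below x_max
    return [x_min / (1.0 - rng.random() * mass) ** (1.0 / alpha) for _ in range(n)]

# alpha = 1.16 is a placeholder assumption, not a published parameter.
worths = sample_truncated_pareto(10_000, alpha=1.16, x_min=30e6, x_max=2e9, seed=7)
print(f"median ~ ${sorted(worths)[5_000] / 1e6:.0f}M, max ~ ${max(worths) / 1e6:.0f}M")
```

With these placeholder settings the median lands in the mid-$50M range, while a few percent of records exceed $500M. The tail is present because of how the sampler is built, not because of luck.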

Asset allocations are computed within algebraic constraints: Assets - Liabilities = Net Worth, on every single record. Property values, core equity, cash liquidity, and offshore holdings are all computed to sum correctly. When your model ingests these profiles, the balance sheets are internally consistent. There are no components that fail to add up, no impossible combinations, and no negative cash positions that would never occur in reality.
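
The constraint can be enforced when each record is built rather than checked afterwards. Below is a minimal sketch that assumes whole-dollar integer amounts and hypothetical liability and allocation ratios. The names `BalanceSheet` and `build_balance_sheet` are illustrative. Offshore holdings are left out because the field list does not say which field carries them.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class BalanceSheet:
    # All amounts in whole US dollars (ints) so the identity is exact.
    net_worth_usd: int
    total_assets: int
    total_liabilities: int
    property_value: int
    core_equity: int
    cash_liquidity: int

def build_balance_sheet(net_worth_usd, liability_ratio, property_share, equity_share):
    """Build a balance sheet where assets - liabilities == net worth exactly.

    liability_ratio: liabilities as a fraction of total assets, 0 <= r < 1.
    property_share, equity_share: fractions of total assets; cash gets the rest.
    """
    if not 0 <= liability_ratio < 1:
        raise ValueError("liability_ratio must be in [0, 1)")
    total_assets = round(net_worth_usd / (1 - liability_ratio))
    total_liabilities = total_assets - net_worth_usd  # identity holds by construction
    property_value = round(total_assets * property_share)
    core_equity = round(total_assets * equity_share)
    # Cash absorbs the rounding remainder, so the components sum exactly.
    cash_liquidity = total_assets - property_value - core_equity
    if cash_liquidity < 0:
        raise ValueError("property_share + equity_share must not exceed 1")
    return BalanceSheet(net_worth_usd, total_assets, total_liabilities,
                        property_value, core_equity, cash_liquidity)

sheet = build_balance_sheet(150_000_000, liability_ratio=0.2,
                            property_share=0.4, equity_share=0.45)
print(sheet)
```

Letting one component absorb the rounding remainder is what keeps the sum exact. Computing all three shares independently would leave off-by-a-dollar records.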

AI Second. After the financial architecture is locked, a local AI model — running offline, on hardware I control — adds narrative context. Biography, profession, education, philanthropic focus. The AI never touches the numbers. It reads the financial profile and generates culturally coherent context that matches the geographic niche and wealth tier. A Silicon Valley tech founder gets a different biography than a Swiss private banker, because the underlying wealth structures are different.

Why This Matters for Model Validation Specifically

Model validation is not the same as model training. Training requires volume. Validation requires coverage — specifically, coverage of the input space where the model is most likely to fail.

For neobank risk models, the failure zone is the Pareto tail: high-net-worth, multi-jurisdictional, structurally complex profiles. These profiles are rare in production data (by definition — they are in the tail) and completely absent from most synthetic generators (which produce the center of the distribution).

Sovereign Forger datasets are designed to populate this failure zone. Every geographic niche contains 31 wealth archetypes — tech founders, commodity traders, family office managers, private bankers, real estate developers, sovereign family members — each with distinct wealth structures, offshore patterns, and KYC signal distributions. When you validate your model against these profiles, you are testing it against the combinatorial space that actually generates compliance failures.
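
You can measure coverage of that combinatorial space directly. Enumerate every combination of a few categorical fields, then report which combinations the validation set never contains. Below is a minimal sketch. The level sets for pep_status and kyc_risk_rating are hypothetical, since the article does not list their allowed values.

```python
from itertools import product

def coverage(records, levels):
    """Fraction of all level combinations present in records, plus the gaps.

    records: list of dicts; levels: {field_name: [allowed values, ...]}.
    """
    fields = list(levels)
    seen = {tuple(r[f] for f in fields) for r in records}
    universe = list(product(*(levels[f] for f in fields)))
    missing = [combo for combo in universe if combo not in seen]
    return 1 - len(missing) / len(universe), missing

# Hypothetical level sets; replace with the values your data actually uses.
levels = {"pep_status": ["none", "adjacent", "direct"],
          "kyc_risk_rating": ["low", "medium", "high"]}
validation = [{"pep_status": "none", "kyc_risk_rating": "low"},
              {"pep_status": "none", "kyc_risk_rating": "medium"},
              {"pep_status": "adjacent", "kyc_risk_rating": "high"}]
share, gaps = coverage(validation, levels)
print(f"{share:.0%} of combinations covered; first gaps: {gaps[:2]}")
```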

Specific validation scenarios this data enables:

– Risk rating calibration: Does your model assign appropriate risk scores to profiles with offshore vehicles in high-risk jurisdictions? The dataset contains realistic distributions of kyc_risk_rating across niches — not uniform random assignment.

– PEP detection sensitivity: Can your model correctly flag PEP-adjacent profiles when the PEP connection is through a family member’s position in a foreign jurisdiction? The dataset includes pep_status, pep_position, and pep_jurisdiction as separate fields, enabling granular testing.

– Sanctions screening threshold: At what confidence level does your model escalate a “potential match”? The dataset provides sanctions_match_confidence as a continuous 0-100 score, so you can test your model’s behavior at every threshold.

– Source-of-wealth verification: Does your model handle the difference between tax-return-verified and self-declared source of wealth? The sow_verification_method field lets you validate this path separately.

– Multi-jurisdictional complexity: What happens when residence, tax domicile, and offshore jurisdiction are all in different countries? The dataset generates these combinations naturally from the archetype definitions — not as random permutations.
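
The sanctions-threshold scenario above reduces to a sweep. Below is a minimal sketch. It assumes each record pairs its sanctions_match_confidence score with your team's own judgement of whether the hit is a true match. That label is hypothetical, and the dataset is not described as providing it. For each threshold, the sketch reports the escalation rate and how many true matches were missed.

```python
def escalation_sweep(records, thresholds):
    """Escalation rate and missed true matches at each confidence threshold.

    records: (sanctions_match_confidence on a 0-100 scale, is_true_match) pairs.
    A record is escalated when its confidence is at or above the threshold.
    """
    results = {}
    for t in thresholds:
        escalated = sum(1 for conf, _ in records if conf >= t)
        missed = sum(1 for conf, is_match in records if is_match and conf < t)
        results[t] = (escalated / len(records), missed)
    return results

# Hypothetical screening hits, including a 62-confidence "potential match".
hits = [(15, False), (40, False), (62, True), (80, True), (95, True)]
for t, (rate, missed) in escalation_sweep(hits, [50, 70, 90]).items():
    print(f"threshold {t}: escalate {rate:.0%}, missed true matches {missed}")
```

In this toy set, raising the threshold from 50 to 70 cuts escalations from 60% to 40%, but the 62-confidence true match slips through.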

29 Fields Mapped to Your Validation Pipeline

Every KYC-Enhanced profile includes the fields your risk models actually consume:

Identity & Geography: full_name, residence_city, residence_zone, tax_domicile

Wealth Structure: net_worth_usd, total_assets, total_liabilities, property_value, core_equity, cash_liquidity, assets_composition, liabilities_composition

Professional Context: profession, education, narrative_bio, philanthropic_focus

Offshore Exposure: offshore_jurisdiction, offshore_vehicle

KYC Signals: kyc_risk_rating, pep_status, pep_position, pep_jurisdiction, sanctions_screening_result, sanctions_match_confidence, adverse_media_flag, source_of_wealth_verified, sow_verification_method, high_risk_jurisdiction_flag
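
Before running any model, check that every record actually carries these fields. Below is a minimal sketch that checks the 28 field names listed above and the 0-100 range of sanctions_match_confidence stated earlier. It does not check value types or allowed categories, because the article does not specify them.

```python
REQUIRED_FIELDS = {
    # Identity & Geography
    "full_name", "residence_city", "residence_zone", "tax_domicile",
    # Wealth Structure
    "net_worth_usd", "total_assets", "total_liabilities", "property_value",
    "core_equity", "cash_liquidity", "assets_composition", "liabilities_composition",
    # Professional Context
    "profession", "education", "narrative_bio", "philanthropic_focus",
    # Offshore Exposure
    "offshore_jurisdiction", "offshore_vehicle",
    # KYC Signals
    "kyc_risk_rating", "pep_status", "pep_position", "pep_jurisdiction",
    "sanctions_screening_result", "sanctions_match_confidence", "adverse_media_flag",
    "source_of_wealth_verified", "sow_verification_method", "high_risk_jurisdiction_flag",
}

def schema_problems(record):
    """Return a list of problems; an empty list means the record passed."""
    problems = [f"missing:{name}" for name in sorted(REQUIRED_FIELDS - set(record))]
    conf = record.get("sanctions_match_confidence")
    if conf is not None:
        try:
            ok = 0 <= float(conf) <= 100  # accepts numbers or numeric strings
        except (TypeError, ValueError):
            ok = False
        if not ok:
            problems.append(f"bad_value:sanctions_match_confidence={conf!r}")
    return problems
```

Running it over every record and keeping only the non-empty results gives a quick pre-flight report.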

Every field is deterministically derived from the profile’s archetype, niche, net worth, and jurisdiction. A tech founder in Silicon Valley gets different KYC signals than a commodity trader in the Middle East — because the underlying wealth structures and regulatory exposures are structurally different. This is not random noise. It is signal that your model can learn from and be tested against.

Validation Data Built for the Distribution Your Model Actually Faces

6 Geographic Niches: Silicon Valley, Old Money Europe, Middle East, LatAm, Pacific Rim, Swiss-Singapore. Each niche has its own wealth architecture — not the same profiles with different names. Offshore patterns, PEP frequencies, risk rating distributions, and source-of-wealth methods vary by niche because the underlying regulatory environments and wealth structures vary.

31 Wealth Archetypes per Niche: Tech founders, venture capitalists, private bankers, commodity traders, family office managers, real estate developers, sovereign family members, shipping dynasty heirs — the actual client archetypes that populate the tail of the wealth distribution. Each archetype has distinct asset composition patterns, offshore preferences, and KYC signal profiles.

Pareto-Distributed Net Worth: Not a bell curve. Not a uniform distribution. A power law — the actual shape of real wealth. This means your validation set contains the full range from $30M to $2B+, with the correct frequency at each tier. Your model gets tested against the distribution it will face in production, not a convenient approximation.
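
The expected frequency at each tier follows from the Pareto survival function. Below is a worked sketch. It assumes a Pareto truncated to the $30M to $2B range quoted above, with a placeholder shape of alpha = 1.16, because the article does not publish the actual parameter.

```python
def tier_share(lo, hi, alpha, x_min, x_max):
    """Share of records with net worth in [lo, hi) under a truncated Pareto.

    Uses the untruncated survival function S(x) = (x_min / x) ** alpha and
    renormalizes by the mass between x_min and x_max.
    """
    def survival(x):
        return (x_min / x) ** alpha
    return (survival(lo) - survival(hi)) / (survival(x_min) - survival(x_max))

ALPHA, X_MIN, X_MAX = 1.16, 30e6, 2e9  # alpha is a placeholder assumption
tiers = [(30e6, 100e6), (100e6, 500e6), (500e6, 2e9)]
shares = [tier_share(lo, hi, ALPHA, X_MIN, X_MAX) for lo, hi in tiers]
print([round(s, 3) for s in shares])
```

With this placeholder alpha, the split is roughly:

- about 76% of records between $30M and $100M
- about 21% between $100M and $500M
- about 3% above $500M

A different alpha shifts these shares, which is why the parameter belongs in the dataset's documentation.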

Deterministic KYC Signals: Risk ratings, PEP statuses, sanctions results, and verification methods assigned through SHA-256 hashing of the profile UUID — deterministic and reproducible. Same UUID, same KYC signals, every time. This means your validation results are reproducible across runs — a requirement for any serious model governance framework.
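
Hash-based assignment of this kind can be sketched in a few lines. This is an illustration, not Sovereign Forger's code. The following choices are all assumptions made for the example:

- salting the UUID separately for each signal
- taking the top 53 bits of the digest as a uniform draw
- representing pep_status as a boolean
- the niche-level rates below

Conditioning each draw on the niche keeps the result deterministic without making it uniform.

```python
import hashlib
import uuid

# Hypothetical niche-level parameters, for illustration only.
PEP_RATE = {"Middle East": 0.12, "Silicon Valley": 0.02}
RISK_WEIGHTS = {"Middle East": (0.3, 0.4, 0.3), "Silicon Valley": (0.6, 0.3, 0.1)}
RISK_LEVELS = ("low", "medium", "high")

def unit_draw(profile_uuid, salt):
    """Reproducible float in [0, 1) from SHA-256 of the UUID plus a salt."""
    digest = hashlib.sha256(f"{profile_uuid}:{salt}".encode("utf-8")).digest()
    return (int.from_bytes(digest[:8], "big") >> 11) / 2**53  # top 53 bits

def weighted_pick(u, levels, weights):
    """Map a uniform draw u to a level using cumulative weights."""
    cumulative = 0.0
    for level, weight in zip(levels, weights):
        cumulative += weight
        if u < cumulative:
            return level
    return levels[-1]  # guards against weights summing to slightly under 1

def kyc_signals(profile_uuid, niche):
    """Same UUID and niche always yield the same signals."""
    is_pep = unit_draw(profile_uuid, "pep") < PEP_RATE[niche]
    rating = "high" if is_pep else weighted_pick(
        unit_draw(profile_uuid, "risk"), RISK_LEVELS, RISK_WEIGHTS[niche])
    confidence = round(unit_draw(profile_uuid, "sanctions") * 100, 1)
    return {"pep_status": is_pep, "kyc_risk_rating": rating,
            "sanctions_match_confidence": confidence}

profile = uuid.UUID("12345678-1234-5678-1234-567812345678")
print(kyc_signals(profile, "Middle East"))
```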

Pricing

| Tier | Records | Price | Best For |
|---|---|---|---|
| Compliance Starter | 1,000 | $999 | Initial model validation, proof of concept |
| Compliance Pro | 10,000 | $4,999 | Full validation suite, stress testing |
| Compliance Enterprise | 100,000 | $24,999 | Production validation + model retraining |

No SDK. No API key. No sales call. Download a file in JSONL or CSV format, load it into your validation pipeline, and run your model against profiles that actually cover the tail.
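
Loading either format needs only the Python standard library. Below is a minimal sketch. The file names are up to you. CSV delivers every cell as a string, so the sketch converts some columns to numbers. The choice of which columns count as numeric is an assumption based on the field list above.

```python
import csv
import json
from pathlib import Path

# Columns parsed as numbers when reading CSV; an assumption based on the field list.
NUMERIC_FIELDS = ("net_worth_usd", "total_assets", "total_liabilities",
                  "property_value", "core_equity", "cash_liquidity",
                  "sanctions_match_confidence")

def load_profiles(path):
    """Load a JSONL or CSV export into a list of dicts."""
    path = Path(path)
    if path.suffix.lower() == ".jsonl":
        with path.open(encoding="utf-8") as f:
            # One JSON object per non-blank line; JSON keeps its own types.
            return [json.loads(line) for line in f if line.strip()]
    with path.open(newline="", encoding="utf-8") as f:
        rows = list(csv.DictReader(f))
    for row in rows:
        for name in NUMERIC_FIELDS:
            if row.get(name):  # skip absent or empty cells
                row[name] = float(row[name])
    return rows
```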

Why This Matters Now

The EU AI Act raises the bar for model validation. From August 2026, AI systems used to evaluate the creditworthiness of natural persons or establish their credit score are classified as high-risk under Annex III. Article 10 requires that their training, validation, and testing datasets be “relevant, sufficiently representative, and to the best extent possible, free of errors and complete.” Suppose your validation set structurally excludes the Pareto tail, home to the complex profiles where your model is most likely to fail. A regulator can then argue that your validation is incomplete by definition. Born-synthetic data with documented provenance and full distributional coverage is designed to close exactly that gap.

Model risk management is under the spotlight. The FCA’s 2024-2025 enforcement cycle has explicitly targeted model governance at digital banks. BaFin’s special audit of N26 cited inadequate risk model validation as a contributing factor to the €9.2M fine. Regulators are no longer satisfied with “the model passed our internal tests.” They want to see what data the tests used, where it came from, and whether it covered the scenarios that matter.

The fines are compounding. Starling Bank: £29M. Revolut: €3.5M. Monzo: £21M. N26: €9.2M. Block: $120M. These are not random enforcement actions — they are a systematic regulatory campaign against digital banks with compliance gaps. The pattern is clear: inadequate testing of financial crime controls, including the risk models that underpin them.

The balance sheet test is open source. Every Sovereign Forger record passes algebraic validation: Assets - Liabilities = Net Worth. Run the Balance Sheet Test on our data, then run it on your current validation set. If your validation data contains balance sheet errors, your model was validated against broken records. If that set was split from your training data, the model learned from them too. That is a finding your auditor will not ignore.
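
The identity is simple enough to check on any dataset, including your current validation set. Below is a minimal sketch of such a check, written as an illustration and not taken from the published Balance Sheet Test code. It assumes numeric total_assets, total_liabilities, and net_worth_usd fields named as in the field list above. It also allows a small USD tolerance for float rounding in exported files.

```python
def balance_sheet_failures(records, tolerance=0.01):
    """Indices of records where total_assets - total_liabilities != net_worth_usd.

    tolerance is in USD and absorbs float rounding in exported files.
    """
    failures = []
    for i, r in enumerate(records):
        gap = r["total_assets"] - r["total_liabilities"] - r["net_worth_usd"]
        if abs(gap) > tolerance:
            failures.append(i)
    return failures

records = [
    {"total_assets": 100.0, "total_liabilities": 20.0, "net_worth_usd": 80.0},
    {"total_assets": 100.0, "total_liabilities": 20.0, "net_worth_usd": 90.0},  # broken
]
print(balance_sheet_failures(records))  # [1]
```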

Every dataset ships with a Certificate of Sovereign Origin — documenting the born-synthetic methodology, Pareto distribution parameters, zero PII lineage, and regulatory alignment with GDPR Art. 25 and EU AI Act Art. 10. When your model governance team asks “where did the validation data come from?”, the certificate answers every question before it is asked.

Validate Your Models Against Realistic Data

Download 100 free KYC-Enhanced UHNWI profiles. Run your model validation suite. Check whether your model handles the Pareto tail — the complex, multi-jurisdictional profiles with offshore structures, PEP connections, and high-risk jurisdiction flags that generate 80% of compliance failures.

If your model’s accuracy drops on these profiles compared to your current validation set, you have found the blind spot. If it holds steady, you have evidence that your model generalizes. Either way, you have a result you can document for your auditor.
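
A bootstrap can show whether a drop is real or just noise. Below is a minimal sketch. It assumes you have (prediction, label) pairs from both your current validation set and the synthetic profiles. The percentile bootstrap is one common choice, not something the article prescribes. If the whole interval sits above zero, the drop is unlikely to be sampling noise, and the interval itself becomes documentable evidence.

```python
import random

def accuracy(pairs):
    """Share of (prediction, label) pairs where the prediction is correct."""
    return sum(pred == label for pred, label in pairs) / len(pairs)

def accuracy_gap_ci(current, tail, n_boot=2000, seed=0):
    """Accuracy on `current` minus accuracy on `tail`, with a 95% bootstrap interval.

    Each bootstrap round resamples both sets with replacement.
    """
    rng = random.Random(seed)
    gaps = sorted(
        accuracy(rng.choices(current, k=len(current)))
        - accuracy(rng.choices(tail, k=len(tail)))
        for _ in range(n_boot)
    )
    interval = (gaps[int(0.025 * n_boot)], gaps[int(0.975 * n_boot) - 1])
    return accuracy(current) - accuracy(tail), interval

# Hypothetical results: 94% correct on the current set, 60% on tail profiles.
current = [(1, 1)] * 94 + [(0, 1)] * 6
tail = [(1, 1)] * 60 + [(0, 1)] * 40
gap, (lo, hi) = accuracy_gap_ci(current, tail)
print(f"gap {gap:.2f}, 95% interval [{lo:.2f}, {hi:.2f}]")
```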

That is what validation data is supposed to do.

No credit card. No sales call. Just your work email.

Frequently Asked Questions

Why do neobank AML and fraud models validated on real customer data fail to generalize across product cohorts?

Real customer data used in model validation carries silent distributional bias. It reflects only the customers who were onboarded, not the full population the model will encounter. The FCA fined Monzo £21M and Starling £29M, in both cases for financial crime controls that failed to keep pace with rapid customer growth. That is the kind of gap that appears when controls are tuned to early cohorts. Synthetic data generated from Pareto wealth distributions reproduces the statistical shape of the high-net-worth tail that historical cohorts lack. Teams can then test for cohort drift before deployment instead of discovering it in production.
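
The population stability index (PSI) is one common way to quantify the cohort drift described here. It compares how two samples spread across the same bins, for example net-worth tiers in an early cohort versus a recent one. Below is a minimal sketch. The bin counts are hypothetical. A PSI of 0.25 is often quoted as signalling a major shift, but that is an industry rule of thumb, not a regulatory threshold.

```python
import math

def psi(expected_counts, actual_counts, eps=1e-6):
    """Population stability index between two binned samples.

    PSI = sum over bins of (a - e) * ln(a / e), where e and a are bin shares;
    eps floors empty bins so the logarithm stays finite.
    """
    e_total, a_total = sum(expected_counts), sum(actual_counts)
    score = 0.0
    for e_count, a_count in zip(expected_counts, actual_counts):
        e = max(e_count / e_total, eps)
        a = max(a_count / a_total, eps)
        score += (a - e) * math.log(a / e)
    return score

early_cohort = [900, 80, 15, 5]    # hypothetical counts per net-worth tier
recent_cohort = [700, 200, 70, 30]
print(round(psi(early_cohort, recent_cohort), 3))
```

On these made-up counts the index comes out near 0.29, above that rule-of-thumb level.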

How does using synthetic KYC data during model validation help neobanks reduce exposure to AML and sanctions-screening fines?

Regulators penalize inadequate model testing, not just model failure. Revolut (€3.5M) and N26 (€9.2M) were both fined over anti-money-laundering control failures. Screening logic that is never stress-tested against edge-case profiles, such as PEPs, sanctioned nationals, or adverse source-of-wealth patterns, is where such failures tend to hide. Validating against synthetic KYC datasets with realistic distributions of these high-risk flags lets neobanks find precision and recall failures before go-live. It also produces auditable evidence of pre-deployment due diligence of the kind FCA, BaFin, and EBA supervisors expect.

What specific validation risks arise when neobank credit and affordability models are tested only on historical transaction data?

Historical transaction data reflects one economic regime and one acquisition channel. Credit models validated on that data can underestimate default rates in stress scenarios and misclassify affordability for gig-economy and variable-income customers. Block’s $120M in penalties over Cash App compliance failures shows what it can cost when controls are never tested against a fast-growing customer base. Synthetic financial data can preserve realistic correlations between income volatility, spending category ratios, and balance trajectories. That lets validation teams construct scenario-specific test sets, including recession stress and income shock cases, without waiting for those events to appear in real portfolios.

What does born-synthetic financial data mean and why does it matter specifically for neobank model validation?

Born-synthetic means the data was generated entirely from mathematical distributions and has zero lineage to any real person. Those distributions include Pareto wealth distributions that reproduce the skewed asset concentration seen in real neobank portfolios. No real record was anonymized, pseudonymized, or transformed to produce it. For model validation, this eliminates re-identification risk entirely. It also supports GDPR Art. 25 data protection by design without the case-by-case anonymization review that real data requires. As EU AI Act Art. 10 becomes enforceable in August 2026, born-synthetic training and validation data offers a compliance posture that is easier to defend than one built on anonymized real data.

How can a neobank validation team get started testing model performance against synthetic KYC data?

Sovereign Forger provides 100 free synthetic KYC profiles with 29 interlocked fields, available for instant download via work email with no credit card required. Each profile includes risk ratings, PEP status, sanctions screening flags, and source-of-wealth classifications generated with statistically consistent correlations across all fields. Validation teams can immediately stress-test KYC scoring models, AML rules engines, and onboarding decisioning logic against a realistic distribution of edge-case profiles. The result is documented test evidence aligned with FCA model risk guidance and EU AI Act Art. 10 data governance requirements.
