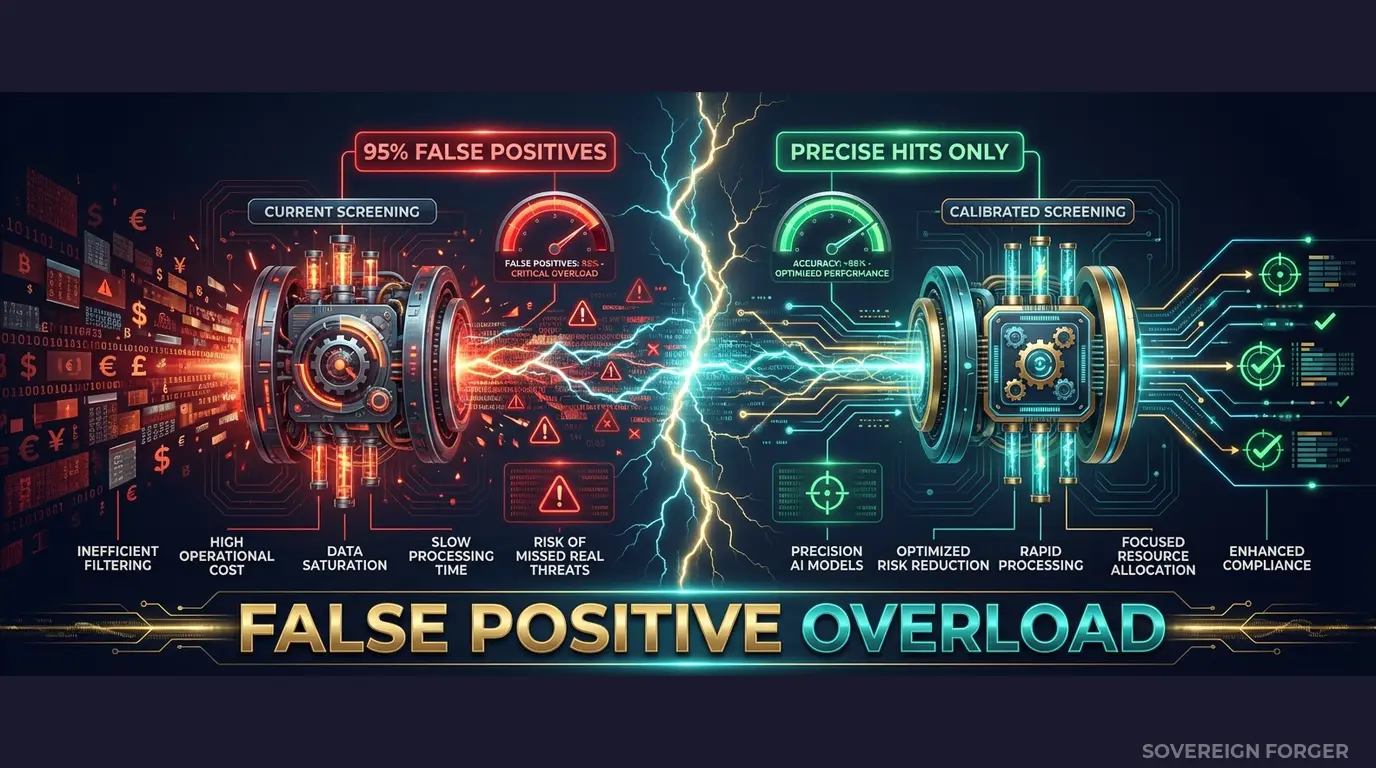

Your sanctions screening engine flags 95% false positives or, worse, misses the 5% that matter. Starling Bank: £29M. Revolut: €3.5M. N26: €9.2M. None of these fines was for a single missed sanctions hit. They were for financial crime controls, screening among them, that did not hold up against the jurisdictional complexity of a real client base. The born-synthetic screening data described here is built for exactly that scenario.

Your Sanctions Screening Has Never Been Properly Stress-Tested

I have sat in a room where a neobank’s compliance team demonstrated their sanctions screening engine to an FCA examiner. The system was fast. The UI was clean. The match algorithm was sophisticated — fuzzy matching, transliteration support, alias detection. The demo used 200 test profiles. Every sanctioned name was caught. Every clean name passed through. The team was proud.

Six weeks later, a real client triggered a potential match. The name was transliterated from Arabic — three plausible Latin spellings, none matching the sanctions list exactly. The client held a Cayman Islands vehicle through a Jersey trust. The PEP connection was not the client herself, but her brother-in-law, a former deputy minister in a Gulf state. The screening engine returned “clear” on the name match. The jurisdictional risk flags did not fire because the test data had never included a profile where the offshore jurisdiction differed from the tax domicile, which differed from the residence country, which differed from the PEP jurisdiction.

This is the pattern I see repeated across every neobank I have studied. The sanctions screening engine works flawlessly against simple profiles — Western European names, single jurisdictions, direct sanctions list matches. It has never been tested against the profiles that actually create screening complexity in production: multi-jurisdictional structures, culturally diverse naming conventions, indirect PEP connections, and wealth architectures that span four or five regulatory regimes simultaneously.

The false positive problem is the mirror image of the same failure. When your screening engine has only been trained on structurally simple profiles, it has no calibration for what a legitimate complex profile looks like. A Swiss-Singaporean family office manager with interests in Dubai and a Liechtenstein foundation triggers every rule — not because they are sanctioned, but because the engine has never seen a clean profile with that level of structural complexity. Your analysts spend hours clearing false positives that a properly calibrated system would have scored and dismissed in seconds.

The numbers tell the story. Industry-wide, sanctions screening false positive rates range from 90% to 98%. Compliance analysts spend 75% of their time clearing alerts that should never have been generated. The cost is not just operational — it is strategic. When your team is drowning in false positives, they develop alert fatigue. The real match, when it arrives, sits in the same queue as the 200 false positives cleared that morning. This is how sanctions violations happen at well-resourced neobanks with sophisticated screening technology.

Regulators understand this dynamic. The FCA's 2024 final notice against Starling Bank did not fault the bank for having no screening. It found that Starling's automated sanctions screening had been checking customers against only a fraction of the full Consolidated List, a gap that realistic testing should have exposed. BaFin's 2024 action against N26 followed a similar pattern. The controls existed. The test data behind them did not reflect the client base they would encounter.

Three Approaches That Leave Your Screening Engine Untested

Every neobank compliance team I have spoken to uses one of three approaches to generate sanctions screening test data. None of them works.

Using copies of production client data. Some teams extract real client records, with or without direct identifiers, into sandbox environments to test screening calibration. The immediate problem is regulatory: GDPR Article 25 requires data protection by design, which means personal data does not belong in test environments with looser access controls and weaker audit logging. But the deeper problem is coverage. Your production client base, however diverse, contains only the profiles you have already onboarded. It does not contain the profiles your system will encounter next quarter: the new geographic markets, the new wealth structures, the edge cases that have not arrived yet. You are testing your screening engine against yesterday’s client base.

Using anonymized or pseudonymized data. Stripping names and tax IDs from real UHNWI profiles creates a false sense of compliance. With only 265,000 UHNWIs globally, the combination of net worth, residence city, offshore jurisdiction, and profession can re-identify individuals even without direct identifiers. A profile with $2.3 billion net worth, residence in Monaco, a BVI holding company, and a background in commodity trading narrows the field to single digits. Your “anonymized” screening test data is pseudonymized at best — and GDPR applies to pseudonymized data in full. More practically, anonymization destroys the naming complexity that is the entire point of sanctions screening testing. If you strip the names, you cannot test name-matching algorithms. If you keep the names, you have real PII in your test environment.

Using generic synthetic generators. Platform-based synthetic data tools produce names from uniform random distributions — no cultural weighting, no transliteration variants, no naming conventions that reflect how UHNWI names actually appear across jurisdictions. The profiles are single-jurisdiction, single-nationality, with no offshore exposure. Your screening engine trains on “John Smith, New York, no PEP connections” and calls it tested. Then it encounters “Mohammed bin Khalid Al-Rashid, tax domicile UAE, residence London, Cayman LP, brother is a former government minister” — and every assumption in the model breaks.
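The re-identification argument against anonymized data can be checked directly with a k-anonymity count: group records by the attributes that survive anonymization and find the smallest group. The Python sketch below is illustrative only; the field names and the banded net worth values are assumptions, not a real schema.

```python
from collections import Counter

# Hypothetical quasi-identifiers: the attributes that survive anonymization.
QUASI_IDENTIFIERS = ("net_worth_band", "residence_city", "offshore_jurisdiction", "profession")

def equivalence_class_sizes(records, keys=QUASI_IDENTIFIERS):
    """Count how many records share each combination of quasi-identifier values."""
    return Counter(tuple(r[k] for k in keys) for r in records)

def min_k(records, keys=QUASI_IDENTIFIERS):
    """Smallest equivalence class size: the dataset is k-anonymous for this k."""
    sizes = equivalence_class_sizes(records, keys)
    return min(sizes.values()) if sizes else 0

# Illustrative records with names and tax IDs already stripped.
records = [
    {"net_worth_band": "1B-5B", "residence_city": "Monaco", "offshore_jurisdiction": "BVI", "profession": "commodity trading"},
    {"net_worth_band": "1B-5B", "residence_city": "London", "offshore_jurisdiction": "Cayman", "profession": "private banking"},
    {"net_worth_band": "1B-5B", "residence_city": "London", "offshore_jurisdiction": "Cayman", "profession": "private banking"},
]
print(min_k(records))  # 1
```

A result of 1 means at least one record is unique on those four attributes alone, which is the re-identification risk a population as small as the UHNWI segment creates.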

Real Data vs. Anonymized vs. Born-Synthetic for Sanctions Screening

| Dimension | Real Data | Anonymized | Born-Synthetic |
|---|---|---|---|
| PII present | Yes | Residual | None |
| Name complexity preserved | Yes | Stripped or exposed | Full (culturally generated) |
| Re-identification risk | Certain | Probable (UHNWI) | Impossible |
| Multi-jurisdictional coverage | Limited to current clients | Limited to current clients | 6 niches, 31 archetypes |
| PEP/sanctions signal diversity | Reflects existing base only | Degraded by anonymization | Deterministically modeled |
| GDPR Art. 25 compliant | No | Disputed | Yes |
| EU AI Act Art. 10 | Violation | Unclear | Compliant |
| Certifiable for auditors | No | No | Yes (Certificate of Origin) |

Born-Synthetic Screening Data Built for Neobank Sanctions Testing

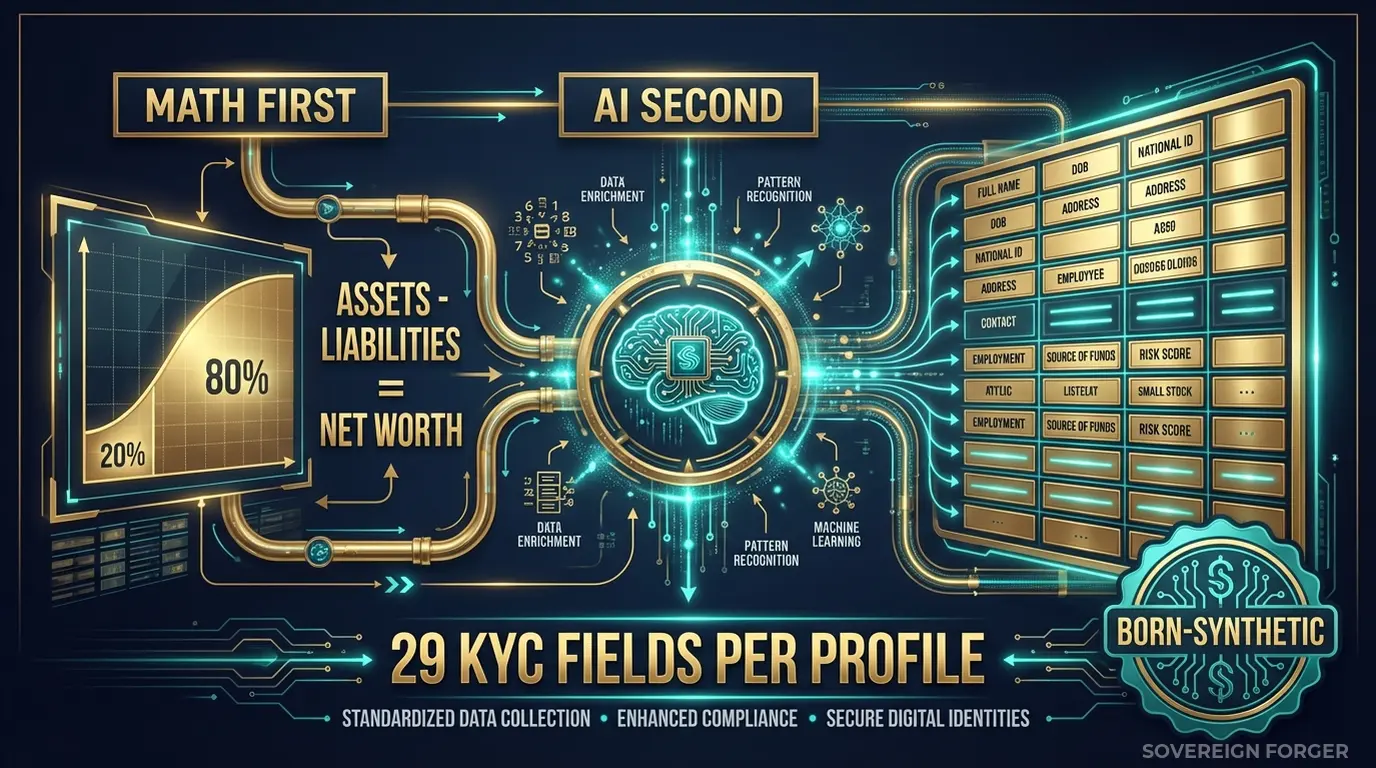

I built Sovereign Forger to solve the exact problem I watched compliance teams fail at repeatedly: testing sophisticated screening engines against structurally simple data. Every profile in the KYC-Enhanced dataset is generated from mathematical constraints and enriched with culturally coherent identity data — not derived from any real person, not anonymized from any existing record.

Math First. Net worth follows a Pareto distribution — the statistical shape of real wealth concentration. Asset allocations are computed within algebraic constraints: Assets – Liabilities = Net Worth, by construction. Offshore jurisdictions, entity structures, and geographic distributions are modeled from the wealth architectures that actually exist in each niche. This is not random data with large numbers. It is structurally coherent financial architecture.
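A minimal sketch of the "math first" idea in Python. The $30M floor, the Pareto shape parameter of 1.5, the leverage cap, and the field names are illustrative assumptions, not the generator's actual settings. Liabilities are drawn first and assets are derived from them, so the identity holds by construction rather than by after-the-fact checking.

```python
import random

# Illustrative assumptions, not the generator's real settings.
UHNWI_FLOOR = 30_000_000   # USD
PARETO_ALPHA = 1.5         # tail index; a smaller alpha means a heavier tail
MAX_LEVERAGE = 0.4         # liabilities as a share of net worth

def sample_net_worth(rng: random.Random) -> int:
    """Draw a net worth from a Pareto distribution starting at UHNWI_FLOOR.

    rng.paretovariate(alpha) returns values >= 1, so scaling by the floor
    gives a heavy-tailed distribution whose minimum is UHNWI_FLOOR.
    """
    return int(UHNWI_FLOOR * rng.paretovariate(PARETO_ALPHA))

def build_balance_sheet(rng: random.Random, net_worth: int) -> dict:
    """Draw liabilities first, then derive assets so A - L = NW holds exactly."""
    liabilities = int(net_worth * rng.uniform(0.0, MAX_LEVERAGE))
    assets = net_worth + liabilities  # whole dollars as ints: no rounding drift
    # Hypothetical field names.
    return {"total_assets": assets, "total_liabilities": liabilities, "net_worth": net_worth}

rng = random.Random(2024)  # fixed seed for a reproducible sample
sheet = build_balance_sheet(rng, sample_net_worth(rng))
print(sheet["total_assets"] - sheet["total_liabilities"] == sheet["net_worth"])  # True
```

Working in whole-dollar integers is a deliberate choice: with floats, rounding assets and liabilities separately can leave a one-cent gap that a strict balance check would flag.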

AI Second. A local AI model — running offline, on my own hardware, never touching the network — adds narrative biography, professional context, and philanthropic focus after the financial figures and KYC signals are locked. The AI enriches the profile. It never generates the numbers or the compliance signals.

The Six Fields That Transform Sanctions Screening Testing

Every KYC-Enhanced profile includes 29 fields. Six of those fields are specifically designed to stress-test sanctions screening engines:

`sanctions_screening_result` — Three possible values: `clear`, `potential_match`, `confirmed_match`. Distribution varies by niche and archetype. A Silicon Valley tech founder has different screening outcomes than a Middle Eastern sovereign family member — because the underlying risk profile is different, not because of random assignment.

`sanctions_match_confidence` — A score from 0 to 100, populated only for `potential_match` results. This is the field your screening engine needs to calibrate against. A confidence score of 35 on a multi-jurisdictional profile with transliterated naming should be handled differently than a confidence score of 35 on a single-jurisdiction profile with a common Western name. Without test data that contains both scenarios, your engine cannot learn the difference.

`pep_status` — Four states: `none`, `domestic`, `foreign`, `international_org`. Combined with `pep_jurisdiction`, this creates the indirect PEP connections that cause the most screening failures in production. The profile holder need not be a PEP; their jurisdiction and wealth structure can place them one connection away from a PEP, which is exactly the scenario your Enhanced Due Diligence process needs to detect.

`pep_jurisdiction` — The country of the PEP connection. When this differs from `residence_city`, `tax_domicile`, and `offshore_jurisdiction`, you have a four-jurisdiction profile that most screening engines have never encountered in testing.

`high_risk_jurisdiction_flag` — Boolean flag with an associated list of high-risk jurisdictions derived from the profile’s offshore and tax structure. Profiles with BVI, Cayman, Panama, or Liechtenstein exposure are flagged — but the flag is derived from the actual financial architecture, not randomly assigned.

`offshore_jurisdiction` — The jurisdiction of the profile’s offshore vehicle. Combined with `tax_domicile` and `residence_city`, this creates the cross-border complexity matrix that defines real UHNWI screening risk.
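The six fields can be sketched as a typed record carrying the consistency rules the descriptions imply: a confidence score only on `potential_match`, and a count of distinct jurisdictions across residence, tax, offshore, and PEP fields. The Python dataclass below is a hypothetical illustration, not the dataset's published schema; the city-to-country lookup in particular is an assumption needed to compare `residence_city` with country-level fields.

```python
from dataclasses import dataclass
from typing import List, Optional

SCREENING_RESULTS = {"clear", "potential_match", "confirmed_match"}
PEP_STATUSES = {"none", "domestic", "foreign", "international_org"}

# Hypothetical lookup so residence_city can be compared with country-level fields.
CITY_TO_COUNTRY = {"London": "UK", "Dubai": "UAE", "Monaco": "Monaco", "Singapore": "Singapore"}

@dataclass
class ScreeningSignals:
    sanctions_screening_result: str
    sanctions_match_confidence: Optional[int]
    pep_status: str
    pep_jurisdiction: Optional[str]
    high_risk_jurisdiction_flag: bool
    offshore_jurisdiction: Optional[str]
    tax_domicile: str
    residence_city: str

    def validate(self) -> List[str]:
        """Return consistency errors; an empty list means the record is coherent."""
        errors = []
        if self.sanctions_screening_result not in SCREENING_RESULTS:
            errors.append("unknown sanctions_screening_result")
        if self.pep_status not in PEP_STATUSES:
            errors.append("unknown pep_status")
        conf = self.sanctions_match_confidence
        if self.sanctions_screening_result == "potential_match":
            if conf is None or not 0 <= conf <= 100:
                errors.append("potential_match needs a confidence between 0 and 100")
        elif conf is not None:
            errors.append("confidence is only populated for potential_match")
        return errors

    def distinct_jurisdictions(self) -> int:
        """Count distinct jurisdictions across residence, tax, offshore and PEP fields."""
        residence = CITY_TO_COUNTRY.get(self.residence_city, self.residence_city)
        found = {residence, self.tax_domicile, self.offshore_jurisdiction, self.pep_jurisdiction}
        found.discard(None)
        return len(found)

# Illustrative profile: residence London, tax UAE, Cayman vehicle, PEP link elsewhere.
profile = ScreeningSignals("potential_match", 35, "foreign", "Qatar", True, "Cayman", "UAE", "London")
print(profile.validate())                # []
print(profile.distinct_jurisdictions())  # 4
```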

Why Deterministic Derivation Matters for Screening Calibration

Every KYC signal in the dataset is deterministically derived from the profile’s archetype, niche, net worth, and jurisdiction, using SHA-256 hashing of the profile UUID. Hashing the UUID makes the data reproducible: the same profile generates the same signals on every run. Conditioning on archetype, niche, net worth, and jurisdiction matters more, because it is what makes the signals internally consistent.

A commodity trader in the Middle East niche with $500M net worth and a BVI offshore vehicle gets a different risk profile than a tech founder in Silicon Valley with $500M and a Delaware LLC. The screening result, PEP status, and high-risk jurisdiction flag all derive from the underlying wealth architecture — not from a random number generator that assigns “high risk” to 10% of profiles regardless of context.

This internal consistency is what allows your screening engine to calibrate. When every test profile’s risk signals are coherent with its financial structure, the engine learns to distinguish between a genuinely suspicious pattern and a legitimate complex profile. That calibration is the difference between a 95% false positive rate and a materially lower one.
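A minimal sketch of how SHA-256 derivation can be both reproducible and context-dependent, assuming a hypothetical table of PEP base rates per niche and archetype (the numbers are placeholders, not the dataset's actual rates, and a fuller version would also condition on net worth and jurisdiction). The hash turns the UUID into a stable number in [0, 1); the profile's context decides the threshold it is compared against.

```python
import hashlib
import uuid

# Hypothetical base rates per (niche, archetype); placeholders only.
PEP_BASE_RATE = {
    ("middle_east", "sovereign_family_member"): 0.60,
    ("middle_east", "commodity_trader"): 0.25,
    ("silicon_valley", "tech_founder"): 0.02,
}
DEFAULT_RATE = 0.05

def unit_draw(profile_id: str, signal: str) -> float:
    """Map (UUID, signal name) to a reproducible float in [0, 1) via SHA-256."""
    digest = hashlib.sha256(f"{profile_id}:{signal}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") / 2**64

def derive_pep_connected(profile_id: str, niche: str, archetype: str) -> bool:
    """Same UUID and context give the same answer every run; context sets the rate."""
    rate = PEP_BASE_RATE.get((niche, archetype), DEFAULT_RATE)
    return unit_draw(profile_id, "pep") < rate

profile_id = str(uuid.uuid4())
first = derive_pep_connected(profile_id, "middle_east", "commodity_trader")
again = derive_pep_connected(profile_id, "middle_east", "commodity_trader")
print(first == again)  # True
```

Salting the hash with the signal name gives each signal its own independent draw from the same UUID, so a profile's PEP outcome does not mechanically determine, say, its screening result.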

Built for Neobank Sanctions Screening at Scale

6 Geographic Niches: Silicon Valley, Old Money Europe, Middle East, LatAm, Pacific Rim, Swiss-Singapore. Each niche produces profiles with the naming conventions, jurisdictional patterns, and wealth structures that your screening engine will encounter from clients in that region. Middle Eastern profiles include transliterated names with multiple plausible spellings. Pacific Rim profiles include CJK-origin names with Romanized variants. European profiles include multi-national naming conventions across Germanic, Francophone, and Mediterranean traditions.

31 Wealth Archetypes: Tech founders, private bankers, commodity traders, sovereign family members, shipping dynasty heirs, family office managers, real estate developers. Each archetype generates different screening risk profiles — because the underlying wealth structures, jurisdictions, and professional contexts differ. Your screening engine needs to have seen all 31 before it encounters them in production.

Realistic Signal Distribution: Sanctions screening results, PEP statuses, and high-risk jurisdiction flags are distributed with frequencies that vary by niche. The Middle East niche produces approximately 29% PEP-connected profiles. LatAm produces approximately 84% high-risk ratings. Silicon Valley skews toward lower risk with higher net worth. These distributions are not arbitrary — they reflect the structural characteristics of wealth in each geography.
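One way to check these distributions on a downloaded sample is to compute each rate per niche and compare it with the figures above. A minimal Python sketch, assuming records are dicts with a `niche` field and treating any `pep_status` other than `none` as PEP-connected (an assumption; the term is not defined precisely here):

```python
from collections import defaultdict
from typing import Callable, Dict, Iterable

def rate_by_niche(records: Iterable[dict], predicate: Callable[[dict], bool]) -> Dict[str, float]:
    """Share of records in each niche for which predicate(record) is True."""
    totals: Dict[str, int] = defaultdict(int)
    hits: Dict[str, int] = defaultdict(int)
    for record in records:
        totals[record["niche"]] += 1
        if predicate(record):
            hits[record["niche"]] += 1
    return {niche: hits[niche] / totals[niche] for niche in totals}

def is_pep_connected(record: dict) -> bool:
    # Assumption: any PEP status other than "none" counts as PEP-connected.
    return record["pep_status"] != "none"

sample = [
    {"niche": "middle_east", "pep_status": "foreign"},
    {"niche": "middle_east", "pep_status": "none"},
    {"niche": "latam", "pep_status": "none"},
]
print(rate_by_niche(sample, is_pep_connected))  # {'middle_east': 0.5, 'latam': 0.0}
```

The same function covers the high-risk share by swapping the predicate, for example `lambda r: r.get("risk_rating") == "high"`, where `risk_rating` is a hypothetical field name.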

Name Complexity: Full names are generated from culturally weighted naming libraries — not anglicized placeholders. Arabic patronymics, Chinese compound surnames, Swiss-German multi-part names, Singaporean naming conventions with family name positioning that differs from Western order. This is the name diversity your fuzzy matching algorithm needs to train against.
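Why culturally varied spellings matter can be shown with the standard library's `difflib.SequenceMatcher`: an exact comparison misses every transliteration variant, while a normalized similarity score catches them. This Python sketch is a deliberately simple stand-in, not a production matcher. Real engines typically add phonetic keys, token reordering, and script-aware transliteration, the normalization here only keeps ASCII letters, and the 0.85 threshold is an arbitrary assumption.

```python
import re
from difflib import SequenceMatcher

LISTED = "Mohammed bin Khalid Al-Rashid"  # illustrative list entry, not a real designation
VARIANTS = ["Muhammad bin Khaled Al Rashid", "Mohamed Bin Khalid Alrashid"]
CLEAN = "Maria Fernanda Costa"
THRESHOLD = 0.85  # arbitrary cut-off for this illustration

def normalize(name: str) -> str:
    """Lowercase and keep only ASCII letters, dropping spaces, hyphens and case."""
    return re.sub(r"[^a-z]", "", name.lower())

def fuzzy_score(candidate: str, listed: str) -> float:
    """Similarity ratio in [0, 1] between the normalized names."""
    return SequenceMatcher(None, normalize(candidate), normalize(listed)).ratio()

for name in VARIANTS + [CLEAN]:
    score = fuzzy_score(name, LISTED)
    print(f"{name!r}: exact={name == LISTED}, score={score:.2f}, alert={score >= THRESHOLD}")
```

Both transliterated variants fail the exact comparison but clear the threshold, while the unrelated name stays well below it. A test set without such variants never exercises this difference.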

Pricing

| Tier | Records | Price | Best For |
|---|---|---|---|
| Compliance Starter | 1,000 | $999 | Screening engine calibration, POC |
| Compliance Pro | 10,000 | $4,999 | Full screening regression testing |
| Compliance Enterprise | 100,000 | $24,999 | AI model training + threshold tuning |

No SDK. No API key. No sales call. Download a file, load it into your screening engine, and measure the difference in false positive rates.

Why This Matters Now

Enforcement is accelerating, and screening is the focus. The FCA’s enforcement against Starling Bank (£29M, 2024) specifically cited failures in financial crime controls, including sanctions screening. BaFin’s €9.2M fine against N26 (2024) targeted systematically late suspicious activity reporting. These regulators are not asking “do you have a screening system?” They are asking “have you tested it against realistic complexity?” If the answer relies on test data with zero multi-jurisdictional profiles, zero PEP connections, and zero sanctions signal variation, the answer is no.

The EU AI Act changes the compliance surface. With most high-risk obligations applying from August 2026, the EU AI Act classifies certain financial AI systems, such as creditworthiness assessment and credit scoring, as high-risk under Annex III. Where a screening model falls in scope, Article 10 requires documented governance of training data, including provenance, representativeness, and bias assessment. If your sanctions screening AI was trained or calibrated on data derived from real persons, you need GDPR compliance documentation. If it was trained on structurally simple synthetic data, a bias assessment will show that the model has never encountered the profiles that generate the most screening complexity. Born-synthetic data with realistic structural diversity addresses both problems at once.

The false positive cost is quantifiable. Industry estimates place the cost of manually reviewing a single sanctions screening alert at $15-$75, depending on complexity. At a 95% false positive rate on 10,000 daily alerts, that is 9,500 false positives and $142,500-$712,500 per day in analyst time spent clearing alerts that should never have been generated. Holding alert volume constant, reducing the false positive rate from 95% to 60% through better screening calibration removes 3,500 reviews a day, or $52,500-$262,500 in daily savings. The $24,999 cost of a Compliance Enterprise dataset pays for itself in hours, not months.
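The arithmetic is easy to re-run with your own volumes. A minimal Python sketch, assuming the $15-$75 per-review range quoted above and an unchanged daily alert volume, so that only the false positive share falls; integer percentages keep the results exact.

```python
def false_positives(alerts_per_day: int, fp_rate_pct: int) -> int:
    """Number of alerts per day that turn out to be false positives."""
    return alerts_per_day * fp_rate_pct // 100

def daily_cost(alerts_per_day: int, fp_rate_pct: int, cost_per_review: int) -> int:
    """Analyst cost of clearing one day's false positives."""
    return false_positives(alerts_per_day, fp_rate_pct) * cost_per_review

def daily_savings(alerts_per_day: int, fp_before_pct: int, fp_after_pct: int, cost_per_review: int) -> int:
    # Assumes alert volume is unchanged; only the false-positive share falls.
    return (daily_cost(alerts_per_day, fp_before_pct, cost_per_review)
            - daily_cost(alerts_per_day, fp_after_pct, cost_per_review))

for cost in (15, 75):
    print(cost, daily_cost(10_000, 95, cost), daily_savings(10_000, 95, 60, cost))
# 15 142500 52500
# 75 712500 262500
```

If calibration also shrinks total alert volume, the savings are larger; the constant-volume case is the conservative reading.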

The balance sheet test is open source. Every Sovereign Forger record passes algebraic validation: Assets – Liabilities = Net Worth. Run the Balance Sheet Test on our data, then run it on your current test data. If the numbers do not balance, the profiles are not structurally coherent — and neither are the screening signals derived from them.
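A minimal version of the balance sheet check, assuming records are stored as JSON Lines with hypothetical `id`, `total_assets`, `total_liabilities`, and `net_worth` fields; adjust the names to your file's actual columns. The small tolerance allows for currency values stored as floats.

```python
import json
from typing import Iterable, List

def balance_sheet_failures(lines: Iterable[str], tolerance: float = 0.01) -> List[str]:
    """Return the ids of records where assets - liabilities differs from net worth."""
    failures = []
    for line in lines:
        if not line.strip():
            continue  # skip blank lines
        record = json.loads(line)
        gap = record["total_assets"] - record["total_liabilities"] - record["net_worth"]
        if abs(gap) > tolerance:
            failures.append(record["id"])
    return failures

# Usage (hypothetical file name):
# with open("profiles.jsonl", encoding="utf-8") as f:
#     print(balance_sheet_failures(f))
```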

Every dataset ships with a Certificate of Sovereign Origin — documenting the born-synthetic methodology, zero PII lineage, and regulatory alignment. When your auditor or the FCA asks “how did you calibrate your screening engine?”, you hand them the certificate. Born-Synthetic data. Compliant by construction — not by anonymization.

Stress-Test Your Sanctions Screening

Download 100 free KYC-Enhanced UHNWI profiles with sanctions screening signals, PEP indicators, and multi-jurisdictional exposure. Run them through your screening engine. Count how many generate alerts that your current test data never produced. Count how many legitimate complex profiles are flagged as false positives.

Those two numbers tell you exactly how calibrated your screening system actually is.
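A minimal way to turn those counts into numbers, assuming you export the IDs your engine alerted on and treat each profile's `sanctions_screening_result` as the expected outcome. That mapping is an assumption: `clear` should not alert, `confirmed_match` must alert, and `potential_match` is left out of both counts. The `id` field name is hypothetical.

```python
from typing import Dict, Iterable, Set

def benchmark(records: Iterable[dict], alerted_ids: Set[str]) -> Dict[str, float]:
    """Compare engine alerts with each profile's expected screening outcome."""
    records = list(records)
    alerts = [r for r in records if r["id"] in alerted_ids]
    # Alerts on profiles whose expected outcome is "clear" are false positives.
    false_positives = [r for r in alerts if r["sanctions_screening_result"] == "clear"]
    # Confirmed matches the engine did not alert on are misses.
    missed = [r for r in records
              if r["sanctions_screening_result"] == "confirmed_match" and r["id"] not in alerted_ids]
    return {
        "alerts": len(alerts),
        "false_positives": len(false_positives),
        "missed_confirmed_matches": len(missed),
        "false_positive_share": len(false_positives) / len(alerts) if alerts else 0.0,
    }
```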

No credit card. No sales call. Just your work email.

Frequently Asked Questions

How does synthetic sanctions screening data help neobanks reduce false positive rates without exposing real customer records?

Neobanks typically see false positive rates of 90–98% in sanctions screening, creating massive operational drag. Sovereign Forger generates profiles engineered to include near-match name variants, partial OFAC/UN/EU list overlaps, and edge-case nationalities across 40+ sanctioned jurisdictions. Because no real person underlies the data, compliance teams can tune threshold parameters and test fuzzy-matching algorithms at scale without triggering GDPR obligations or risking data leakage. This directly addresses the kind of blind spots that led to Starling Bank’s £29M fine in 2024.

Which sanctioned jurisdictions and watchlist scenarios are covered in Sovereign Forger’s neobank sanctions screening datasets?

Profiles span entities linked to the OFAC SDN, EU Consolidated List, UN Security Council, UK, and SECO watchlists, covering jurisdictions including Iran, Russia, North Korea, Belarus, and Myanmar. Each profile includes risk-tiered flags, dual-nationality edge cases, and PEP adjacency markers that reflect real screening complexity. This breadth allows neobank compliance teams to validate coverage gaps before regulators do, a critical step given Revolut’s €3.5M penalty for transaction monitoring failures and N26’s €9.2M penalty for late suspicious activity reporting.

Can synthetic KYC profiles support neobank AML model validation under EU AI Act requirements?

Under the EU AI Act, certain financial AI systems, such as creditworthiness assessment and credit scoring, are classified as high-risk through Annex III, and Article 10’s training data governance requirements for high-risk systems apply from August 2026. Where an AML or sanctions screening model falls in scope, Sovereign Forger’s datasets provide documented, bias-auditable synthetic records suitable for model validation and stress-testing without relying on live customer data. This supports explainability obligations and helps close the kind of financial crime control gaps behind Monzo’s £21M FCA fine and Block’s $120M in regulatory settlements.

What does born-synthetic mean for sanctions screening data, and why does it matter for neobanks specifically?

Born-synthetic means each profile is generated from mathematical distributions, including Pareto-based wealth modeling, with zero lineage to any real individual: no anonymization, no pseudonymization, no re-identification risk. For neobanks operating under GDPR Article 25, data protection by design is a legal obligation, not a best practice. Because born-synthetic records never originate from personal data, they meet the Art. 25 objective by construction, eliminating the legal exposure that comes with using masked or tokenized real customer records in sanctions screening test environments.

How can a neobank compliance team get started with Sovereign Forger’s sanctions screening profiles?

Sovereign Forger offers 100 free KYC profiles as an instant download after registering with a work email address; no credit card is required. Each profile includes 29 interlocked fields covering risk ratings, PEP status, sanctions screening flags, source of wealth indicators, and dual-nationality markers. The dataset is structured for direct ingestion into screening platforms, enabling teams to run a first false positive benchmark within hours and establish a measurable baseline before scaling to full test suite volumes.
