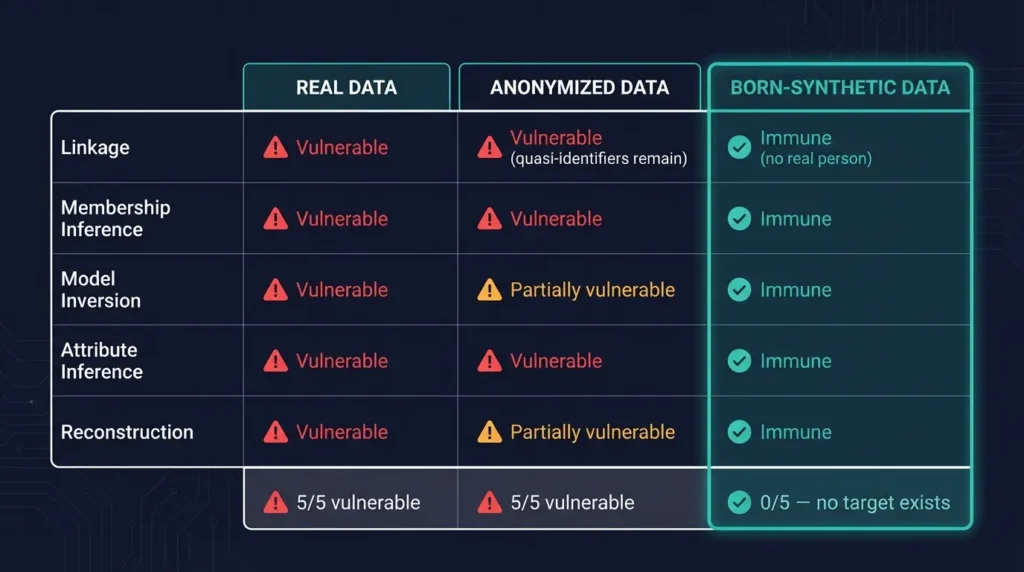

Key Takeaway: Anonymized financial data is vulnerable to five categories of re-identification attack — linkage, membership inference, model inversion, attribute inference, and reconstruction. For UHNWI profiles with their distinctive quasi-identifiers, all five succeed. Born-synthetic data is immune to all five because no real person exists to re-identify.

Your anonymized dataset is protected by one assumption: that removing names makes people unidentifiable. I can show you five re-identification attacks that break that assumption — and for UHNWI profiles, all five work.

I have audited test data from banks, neobanks, and RegTech vendors. Every single anonymized UHNWI dataset I have examined would fall to at least three of these five attacks. The teams using them believed they were compliant. They were wrong.

How Large Is the Re-Identification Risk for Financial Data?

Before diving into the attacks, let me frame the scale of the problem.

According to a 2019 study published in Nature Communications (Rocher, Hendrickx, and de Montjoye), a statistical model estimated that 99.98% of Americans could be correctly re-identified in any dataset using just 15 demographic attributes. For general population datasets, that is alarming. For UHNWI datasets, it is catastrophic, because you do not even need 15 attributes.

The global UHNWI population (net worth above $30 million) is approximately 265,000 individuals, according to Capgemini’s World Wealth Report. This is a microscopically small population by data science standards. When your dataset draws from a pool of 265,000 rather than 8 billion, every data point becomes a potential identifier.

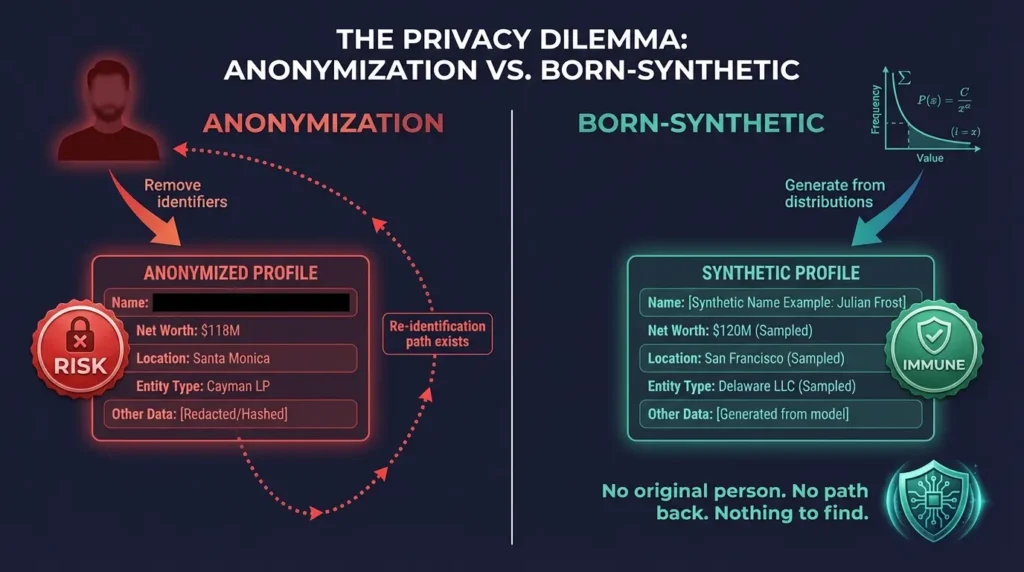

Consider: a dataset containing “$118M net worth, Santa Monica residence, quantum computing profession, Cayman LP offshore vehicle” narrows candidates from 265,000 to single digits — possibly one person — regardless of whether the name field is present. The quasi-identifiers are the identity.
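The narrowing effect can be put in rough numbers. The sketch below is an illustration, not a measurement: it computes how many bits of information are needed to single out one member of a population, and how many candidates remain after filtering on several attributes. The selectivity values are hypothetical placeholders rather than statistics about real UHNWIs, and treating the attributes as independent is a simplifying assumption.

```python
import math

def bits_to_identify(population: int) -> float:
    """Bits of information needed to single out one member of a population."""
    return math.log2(population)

def expected_candidates(population: int, selectivities: list[float]) -> float:
    """Expected candidates left after filtering on several attributes.

    Each selectivity is the fraction of the population sharing one attribute
    value. Attributes are assumed independent, which is a simplification.
    """
    remaining = float(population)
    for fraction in selectivities:
        remaining *= fraction
    return remaining

UHNWI_POPULATION = 265_000        # figure quoted in the article
WORLD_POPULATION = 8_000_000_000  # figure quoted in the article

print(f"UHNWI pool: {bits_to_identify(UHNWI_POPULATION):.1f} bits")
print(f"World:      {bits_to_identify(WORLD_POPULATION):.1f} bits")

# HYPOTHETICAL selectivities, for illustration only:
# wealth band, city, profession, offshore vehicle type.
hypothetical = [0.10, 0.01, 0.02, 0.20]
remaining = expected_candidates(UHNWI_POPULATION, hypothetical)
print(f"Candidates left: {remaining:.2f}")
```

Roughly 18 bits are enough to single out one person in a pool of 265,000, against about 33 bits for the world population. Every quasi-identifier in a record supplies some of those bits.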

Under GDPR Recital 26, whether a person is identifiable is judged by taking account of “all the means reasonably likely to be used” to identify them. For UHNWI data, the answer to whether re-identification is reasonably likely is increasingly, and unambiguously, yes. Here are the five attacks that show why.

1. What Are Linkage Attacks and Why Do They Work on Financial Data?

The oldest and most straightforward technique. An attacker combines your anonymized dataset with a public or semi-public dataset — voter records, property registries, corporate filings, LinkedIn profiles — and matches individuals on shared quasi-identifiers.

The foundational research here comes from Latanya Sweeney (now at Harvard), who demonstrated in 2000 that 87% of the US population could be uniquely identified using just 5-digit ZIP code, date of birth, and gender. That was with general population data and three attributes.

For UHNWI profiles, the quasi-identifiers are exceptionally rich. City of residence, profession, education institution, wealth tier, and offshore jurisdiction create a fingerprint that often uniquely identifies an individual without any direct identifier. Corporate filing databases and property records are publicly searchable in most jurisdictions.

Why this is getting worse. The proliferation of public databases makes linkage attacks cheaper every year. Company registries in the UK (Companies House), the Netherlands (KVK), and Luxembourg are fully searchable online. Real estate records in the US are public in most counties. The International Consortium of Investigative Journalists has published multiple leaked datasets — Panama Papers, Paradise Papers, Pandora Papers — containing offshore ownership structures. An anonymized UHNWI profile with the right combination of jurisdiction, wealth tier, and offshore vehicle can be linked to leaked records with minimal effort.
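A linkage attack is, mechanically, a join on quasi-identifiers. The following minimal sketch shows the idea using only the Python standard library. The field names, the `link_records` helper, and every record are hypothetical and entirely fictional; a real attack would also need fuzzy matching across inconsistent formats.

```python
from collections import defaultdict

# Quasi-identifiers shared by both datasets (field names are hypothetical).
QUASI_IDENTIFIERS = ("city", "wealth_tier", "offshore_jurisdiction")

def link_records(anonymized, public, keys=QUASI_IDENTIFIERS):
    """Map each anonymized record id to the public names that share
    all of its quasi-identifier values."""
    index = defaultdict(list)
    for person in public:
        index[tuple(person[k] for k in keys)].append(person["name"])
    return {
        record["record_id"]: index.get(tuple(record[k] for k in keys), [])
        for record in anonymized
    }

# Entirely fictional data for illustration.
public_registry = [
    {"name": "Person A", "city": "Zurich", "wealth_tier": "100M-250M",
     "offshore_jurisdiction": "Cayman"},
    {"name": "Person B", "city": "Zurich", "wealth_tier": "30M-100M",
     "offshore_jurisdiction": "Jersey"},
    {"name": "Person C", "city": "Singapore", "wealth_tier": "30M-100M",
     "offshore_jurisdiction": "BVI"},
    {"name": "Person D", "city": "Singapore", "wealth_tier": "30M-100M",
     "offshore_jurisdiction": "BVI"},
]
anonymized_rows = [
    {"record_id": "r1", "city": "Zurich", "wealth_tier": "100M-250M",
     "offshore_jurisdiction": "Cayman"},
    {"record_id": "r2", "city": "Singapore", "wealth_tier": "30M-100M",
     "offshore_jurisdiction": "BVI"},
    {"record_id": "r3", "city": "London", "wealth_tier": "250M+",
     "offshore_jurisdiction": "Jersey"},
]

matches = link_records(anonymized_rows, public_registry)
for record_id, names in matches.items():
    print(record_id, "->", names or "no match")
```

A record that maps to exactly one name is re-identified outright. A record that maps to two or three names is still a short list for anyone willing to check them by hand.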

2. How Do Membership Inference Attacks Threaten AI Training Data?

An attacker with access to a model trained on your data can determine whether a specific individual was included in the training set. The attack works by querying the model with known information about the target and measuring the model’s confidence — higher confidence suggests the individual was in the training data.

For AI models trained on UHNWI profiles, this is particularly effective because the training population is small (roughly 265,000 individuals globally above $30M) and its records are distinctive. Models tend to memorize rare, outlying records, and memorization is exactly the signal a membership inference attack measures.

The practical impact. If a competitor, regulator, or malicious actor can confirm that a specific UHNWI was in your AML training data, they have confirmed you hold data on that individual. For PEP records, this confirmation alone can have regulatory and reputational consequences. Research by Shokri et al. (2017) reported membership inference precision above 90% against some commercially hosted classifiers, with the most overfitted models leaking the most. Small, distinctive training populations, which is exactly the profile of UHNWI datasets, encourage that kind of overfitting.
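The query-and-measure-confidence step can be sketched as follows. This is a toy confidence-threshold attack, not the shadow-model method of Shokri et al. `MemorizingModel` is a hypothetical stand-in for an overfitted model, and the records (net worth in $M, number of legal entities) are invented.

```python
import math

class MemorizingModel:
    """Toy stand-in for an overfitted model: confidence decays with the
    distance from the query to the nearest training record."""

    def __init__(self, training_records):
        self._training = list(training_records)

    def confidence(self, record):
        nearest = min(math.dist(record, seen) for seen in self._training)
        return 1.0 / (1.0 + nearest)

def infer_membership(model, record, threshold=0.9):
    """Guess 'member' when the model is unusually confident on the record."""
    return model.confidence(record) >= threshold

# Invented records: (net worth in $M, number of legal entities).
model = MemorizingModel([(118.0, 3.0), (45.0, 1.0), (310.0, 7.0)])

target_in_training = (118.0, 3.0)
target_not_in_training = (120.0, 4.0)

print(infer_membership(model, target_in_training))      # exact match
print(infer_membership(model, target_not_in_training))  # near miss
```

The attacker never sees the training set. The confidence gap between a memorized record and a merely similar one is enough to separate the two.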

3. What Are Model Inversion Attacks?

Given access to a trained model, an attacker can reconstruct approximate inputs by systematically probing the model’s outputs. If your KYC risk scoring model was trained on anonymized UHNWI profiles, an attacker can work backwards from the model’s risk scores to reconstruct profile features — potentially recovering wealth tier, jurisdiction, and structural details of individuals in the training set.

Fredrikson et al. (2015) demonstrated model inversion attacks that could reconstruct recognizable facial images from a facial recognition model. The same principle applies to tabular data: given enough queries to a risk scoring model, an attacker can search for the input features that produce specific risk scores. For UHNWI data, where the feature space is constrained and the population is small, fewer inputs are consistent with any given score, so reconstruction is likely to be more accurate than for general population data.
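When the feature space is small and discrete, inversion can be as simple as trying every combination. The sketch below assumes a hypothetical black-box `risk_score` function with made-up weights, and a brute-force `invert` helper. It is a simplification of the published attacks, which use confidence values and optimization rather than exhaustive search.

```python
from itertools import product

WEALTH_TIERS = ("30M-100M", "100M-250M", "250M+")
JURISDICTIONS = ("Cayman", "Jersey", "Luxembourg")
PEP_LINK = (False, True)

def risk_score(wealth_tier, jurisdiction, pep_link):
    """HYPOTHETICAL black-box model; the attacker sees only its output."""
    score = {"30M-100M": 0.10, "100M-250M": 0.25, "250M+": 0.40}[wealth_tier]
    score += {"Cayman": 0.30, "Jersey": 0.15, "Luxembourg": 0.05}[jurisdiction]
    score += 0.20 if pep_link else 0.0
    return round(score, 2)

def invert(target_score, query=risk_score, tolerance=0.005):
    """Enumerate the feature space and keep inputs that reproduce the score."""
    return [
        features
        for features in product(WEALTH_TIERS, JURISDICTIONS, PEP_LINK)
        if abs(query(*features) - target_score) <= tolerance
    ]

# An observed score of 0.90 is consistent with a single input combination.
print(invert(0.90))
# An observed score of 0.75 still narrows 18 combinations down to 2.
print(invert(0.75))
```

With 18 possible inputs, one observed score leaves at most a couple of candidates. Combined with any other known attribute, that is usually enough to settle which one applies.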

4. How Do Attribute Inference Attacks Exploit Remaining Data?

Even when some fields are removed, the remaining fields can be used to infer the missing ones. If your anonymized dataset includes wealth tier, city, and offshore jurisdiction but removes the name, an attacker can use public wealth databases and corporate registries to infer who the individual likely is.

For UHNWI profiles, attribute inference is devastatingly effective. The combination of wealth bracket, primary city, and offshore vehicle narrows candidates to single digits in most niches. I have tested this myself against publicly available data: given three quasi-identifiers from an anonymized UHNWI profile, I could identify the likely individual in under 10 minutes using only public corporate registries and real estate databases.

The correlation problem. Financial data is inherently correlated. Net worth predicts property value. Profession predicts education. Offshore jurisdiction predicts tax domicile. Removing one field does not eliminate the information — it simply shifts the inference path to the correlated fields. This is why field-level masking is fundamentally insufficient for UHNWI data.
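The correlation problem can be shown in a few lines. In this minimal sketch, a masked field is predicted from the fields left in place, using conditional frequencies in a reference table. The `infer_missing` helper, the field names, and the reference rows are all hypothetical.

```python
from collections import Counter

def infer_missing(reference_rows, known, target_field):
    """Predict a masked field as the most common value among reference rows
    that agree with the record on every known field.

    Returns (predicted value, share of matching rows with that value).
    """
    matches = [
        row[target_field]
        for row in reference_rows
        if all(row[field] == value for field, value in known.items())
    ]
    if not matches:
        return None, 0.0
    value, count = Counter(matches).most_common(1)[0]
    return value, count / len(matches)

# Invented reference data an attacker might assemble from public sources.
reference = [
    {"offshore": "Jersey", "profession": "shipping", "tax_domicile": "UK"},
    {"offshore": "Jersey", "profession": "shipping", "tax_domicile": "UK"},
    {"offshore": "Jersey", "profession": "shipping", "tax_domicile": "UK"},
    {"offshore": "Jersey", "profession": "shipping", "tax_domicile": "Monaco"},
    {"offshore": "Cayman", "profession": "hedge fund", "tax_domicile": "US"},
    {"offshore": "Cayman", "profession": "hedge fund", "tax_domicile": "US"},
]

# The anonymized record had tax_domicile removed, but kept these two fields.
masked_record = {"offshore": "Jersey", "profession": "shipping"}
guess, share = infer_missing(reference, masked_record, "tax_domicile")
print(guess, share)
```

Masking one column only helps if no remaining column predicts it. In correlated financial data that condition rarely holds.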

5. What Are Reconstruction Attacks?

The category that requires the least access: no records and no model, only published aggregates. Given aggregate statistics or summary outputs from a dataset, an attacker can reconstruct individual records. Research by Dinur and Nissim (2003) proved that answering too many statistical queries about a database too accurately eventually reveals individual records. The US Census Bureau acknowledged this risk when implementing differential privacy for the 2020 Census, noting that reconstruction attacks could identify individuals from published summary tables.

For small populations like UHNWIs, the reconstruction threshold is lower. Fewer queries are needed to reconstruct individual records because the population provides less statistical cover. If your institution publishes aggregate reports about its UHNWI client base — average net worth by jurisdiction, distribution of offshore structures, PEP prevalence by region — each statistic narrows the reconstruction space.
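The simplest reconstruction is a differencing attack: two published averages whose underlying groups differ by one person reveal that person's value exactly. The sketch below shows this arithmetic with invented figures. It illustrates the principle only and is not the linear-programming attack analysed by Dinur and Nissim. The `recover_hidden_value` helper is hypothetical.

```python
def recover_hidden_value(mean_all, count_all, mean_subset, count_subset):
    """Recover the single value that is in the full group but not the subset.

    Works when two published averages cover groups that differ by exactly
    one individual: total(all) - total(subset) = the missing person's value.
    """
    if count_all - count_subset != 1:
        raise ValueError("differencing needs groups that differ by one person")
    return mean_all * count_all - mean_subset * count_subset

# Invented published statistics (net worth in $M):
#   "Jersey-structured clients":          5 clients, average 84.0
#   "Jersey-structured clients, non-PEP": 4 clients, average 62.5
pep_net_worth = recover_hidden_value(84.0, 5, 62.5, 4)
print(pep_net_worth)
```

Neither statistic looks sensitive on its own. Together they disclose the net worth of the one PEP in the group.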

Why Is Born-Synthetic Data Immune to All Five Attacks?

All five attacks share a common prerequisite: a real individual must exist in or behind the data. Linkage attacks require a real person to link to. Membership inference requires a real person to detect. Model inversion requires real features to reconstruct. Attribute inference requires real attributes to infer. Reconstruction requires real records to reconstruct.

Born-synthetic data contains no real individuals at any stage of its creation. The profiles are generated from mathematical distributions — Pareto-distributed wealth, algebraically constrained balance sheets, culturally weighted archetypes. No real person was used as input, seed, or reference.

There is nothing to re-identify, because there was never anyone there.

This is the structural difference between anonymization (hiding an identity that exists) and born-synthetic generation (creating profiles where no identity ever existed). The defence is not “we made it harder to find the person.” The defence is “there is no person.”

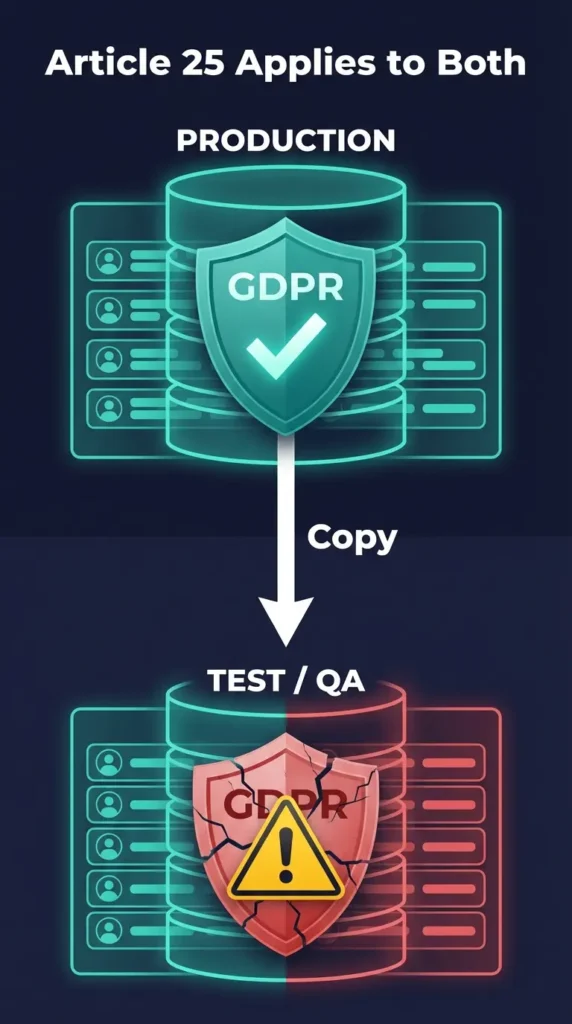

For a detailed comparison of these approaches under GDPR, see Born Synthetic vs Anonymized. For how GDPR Article 25 (data protection by design and by default) applies to real data in test environments, see Why GDPR Article 25 Bans Real Data in Test Environments.

What Should Your Team Do to Eliminate Re-Identification Risk?

Here is the practical path from vulnerable anonymized data to immune born-synthetic data:

Step 1: Test your current data. Take your anonymized test dataset and attempt a linkage attack against public registries. If you can narrow candidates to fewer than 50 for any profile using three quasi-identifiers, treat the data as failing the “reasonably likely” identifiability standard of GDPR Recital 26. (The threshold of 50 is a working heuristic, not a figure from the regulation.) I have never seen an anonymized UHNWI dataset pass this test.

Step 2: Quantify the exposure. Count how many profiles in your test data contain combinations of quasi-identifiers that could be linked to real individuals. For most UHNWI datasets, the answer is 70-90% of records. In other words, 70-90% of your test data is arguably still personal data under GDPR.

Step 3: Map the attack surface. Identify which of the five attack categories your data is exposed to. If you are training AI models, add membership inference and model inversion to the linkage risk. If you publish aggregate statistics, add reconstruction.

Step 4: Replace with born-synthetic. Deploy born-synthetic data with documented provenance. The 29-field KYC/AML schema covers the same compliance testing fields as your anonymized data — PEP status, risk ratings, sanctions results, adverse media flags, offshore structures — but without any person behind the numbers. Standard JSONL/CSV formats. No pipeline redesign.

Step 5: Document the transition. Record what data was replaced, when, and why. Retain the Certificate of Sovereign Origin for audit purposes. When the DPA asks about your test data governance, this documentation is your answer.
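For Step 2, a first-pass exposure count can be automated. The sketch below measures the share of records whose quasi-identifier combination appears fewer than k times within the dataset itself. That is only a proxy: the real test is linkage against external registries, and a combination shared by several rows in your file may still be unique in the world. The `exposure_rate` helper, the field names, and the rows are hypothetical.

```python
from collections import Counter

def exposure_rate(records, quasi_identifiers, k=5):
    """Fraction of records whose quasi-identifier combination is shared by
    fewer than k records in the dataset."""
    if not records:
        return 0.0
    keys = [tuple(r[q] for q in quasi_identifiers) for r in records]
    class_sizes = Counter(keys)
    exposed = sum(1 for key in keys if class_sizes[key] < k)
    return exposed / len(records)

# Invented rows for illustration.
rows = [
    {"city": "Zurich", "offshore_jurisdiction": "Cayman"},
    {"city": "Zurich", "offshore_jurisdiction": "Cayman"},
    {"city": "Singapore", "offshore_jurisdiction": "BVI"},
    {"city": "London", "offshore_jurisdiction": "Jersey"},
]

rate = exposure_rate(rows, ("city", "offshore_jurisdiction"), k=2)
print(f"{rate:.0%} of records are exposed at k=2")
```

Run it with the quasi-identifier set an attacker would realistically have, then again with one more field added. The jump between the two numbers shows how much each extra column costs.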

The Practical Implication

If you are using anonymized UHNWI data to train AI models or test KYC/AML systems, you are exposed to all five attack categories — and the exposure grows every quarter as techniques improve and public databases expand.

Born-synthetic data eliminates the exposure entirely. Not by being harder to attack, but by removing the target. No real person to find.

Download 100 free KYC-Enhanced profiles — 29 fields, born-synthetic, zero PII — and compare the risk profile against your current test data.

Frequently Asked Questions

Can differential privacy protect anonymized UHNWI data from re-identification?

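Differential privacy adds calibrated noise to query responses or model training, limiting how much any single record can influence the output. The amount of noise is set by the privacy budget and by how much one record can change a result, not by the size of the dataset, so it weighs most heavily on small groups and extreme values. UHNWI data consists of both. The noise required to meaningfully protect individuals in a pool of 265,000 would destroy the data's utility for compliance testing, and an AML model trained on heavily noised UHNWI profiles loses the edge-case sensitivity that makes it effective. Born-synthetic data avoids the trade-off, because there is no individual to protect.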
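The utility cost is easiest to see on a counting query. The sketch below implements the standard Laplace mechanism using only the Python standard library. The epsilon value and the cell sizes are illustrative assumptions, and the helper names are hypothetical.

```python
import random

def laplace_noise(scale, rng=random):
    """Laplace(0, scale) sample, drawn as the difference of two
    exponential variables with mean `scale`."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def private_count(true_count, epsilon, rng=random):
    """Counting query under epsilon-differential privacy.

    One person changes a count by at most 1, so the noise scale is 1/epsilon.
    """
    return true_count + laplace_noise(1.0 / epsilon, rng)

def relative_noise(true_count, epsilon):
    """Expected absolute noise as a fraction of the true count."""
    return (1.0 / epsilon) / true_count

EPSILON = 0.1  # illustrative privacy budget

# The same noise that is negligible for a large cell swamps a small one.
for cell_size in (100_000, 1_000, 12):
    print(cell_size, f"{relative_noise(cell_size, EPSILON):.2%}")
```

A cell of 12 clients in one jurisdiction and wealth tier is a typical slice of a UHNWI book, and at this budget the expected noise is most of the count.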

Are re-identification attacks a theoretical concern or have they been used in practice?

They have been demonstrated repeatedly. Researchers re-identified patients in anonymized hospital discharge data, identified Netflix users from anonymized viewing histories, and reconstructed individual records from US Census summary statistics. Financial data re-identification specifically has been demonstrated in academic settings using public corporate registries and property records.

Does k-anonymity provide sufficient protection for financial test data?

K-anonymity ensures each record shares quasi-identifiers with at least k-1 other records. For UHNWI data, achieving meaningful k values (typically k=5 or higher) requires either suppressing most distinguishing attributes (destroying utility) or generalizing them to the point of uselessness. A net worth field generalized to “$50M-$500M” provides no value for EDD testing.
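The generalization trade-off can be demonstrated on a handful of invented records. In this sketch, net worth is coarsened into bands of increasing width until every band contains more than one record. The helper names and the `net_worth_musd` field are hypothetical.

```python
from collections import Counter

def smallest_class(records, quasi_identifiers):
    """Size of the smallest group sharing one quasi-identifier combination.
    The dataset is k-anonymous for any k up to this value."""
    sizes = Counter(tuple(r[q] for q in quasi_identifiers) for r in records)
    return min(sizes.values())

def generalize_net_worth(records, band_width_musd):
    """Replace exact net worth (in $M) with a band of the given width."""
    generalized = []
    for r in records:
        low = (r["net_worth_musd"] // band_width_musd) * band_width_musd
        band = f"{low}-{low + band_width_musd}"
        generalized.append({**r, "net_worth_musd": band})
    return generalized

# Invented records, all in the same city.
clients = [
    {"city": "Zurich", "net_worth_musd": worth}
    for worth in (42, 58, 118, 131, 305, 460)
]
QI = ("city", "net_worth_musd")

for width in (50, 100, 500):
    k = smallest_class(generalize_net_worth(clients, width), QI)
    print(f"band width ${width}M -> k = {k}")
```

Only the $500M band gets every record into a shared group, and by then the field no longer distinguishes a $42M client from a $460M one.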

How does born-synthetic data maintain statistical utility without using real data?

Born-synthetic generation uses mathematical distributions calibrated to real-world patterns — Pareto distributions for wealth, geographic weighting for jurisdictions, archetypal templates for profession and structure. The statistical properties (distribution shapes, correlations between fields, prevalence rates) match real-world patterns, but no individual record corresponds to a real person. The math is real; the people are not.
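As a rough illustration of the idea, and not the generator behind any particular product, the sketch below draws profiles purely from distributions: Pareto-distributed net worth above a $30M floor, a balance sheet constrained so that assets minus liabilities equals net worth, and jurisdictions drawn from a weighted list. Every parameter, weight, and field name is a hypothetical assumption.

```python
import random

# HYPOTHETICAL weights; a real generator would calibrate these.
JURISDICTION_WEIGHTS = {"Cayman": 0.4, "Jersey": 0.3, "Luxembourg": 0.3}

def synthetic_profile(rng, min_net_worth_musd=30.0, alpha=1.5):
    """Generate one profile from distributions only; no real record is read."""
    # Pareto tail: paretovariate returns values >= 1, scaled to the floor.
    net_worth = min_net_worth_musd * rng.paretovariate(alpha)
    # Algebraic constraint: assets - liabilities == net worth.
    liabilities = net_worth * rng.uniform(0.0, 0.5)
    assets = net_worth + liabilities
    jurisdiction = rng.choices(
        list(JURISDICTION_WEIGHTS),
        weights=list(JURISDICTION_WEIGHTS.values()),
    )[0]
    return {
        "net_worth_musd": net_worth,
        "assets_musd": assets,
        "liabilities_musd": liabilities,
        "offshore_jurisdiction": jurisdiction,
    }

rng = random.Random(42)  # seeded so the run is reproducible
profiles = [synthetic_profile(rng) for _ in range(1000)]

print(min(p["net_worth_musd"] for p in profiles) >= 30.0)
print(sorted({p["offshore_jurisdiction"] for p in profiles}))
```

The only inputs are the seed and the distribution parameters, so the provenance of every record can be documented end to end.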

What happens if re-identification techniques improve after we deploy anonymized data?

This is the fundamental weakness of anonymization: it is a snapshot defence against a moving target. Techniques that are not “reasonably likely” today may become routine tomorrow. Born-synthetic data is immune to this escalation because the defence is structural, not technical. No matter how sophisticated re-identification techniques become, they cannot find a person who never existed.