There is a test I run on every synthetic financial dataset I encounter. It takes sixty seconds. Open the file. Pick any record. Check whether Assets minus Liabilities equals Net Worth.

If it does not — on even a single record — you have a data quality problem that will propagate through every model you train on it.

I call this the balance sheet test. It is the minimum standard for synthetic HNWI data, and most datasets fail it.

Why This Test Matters

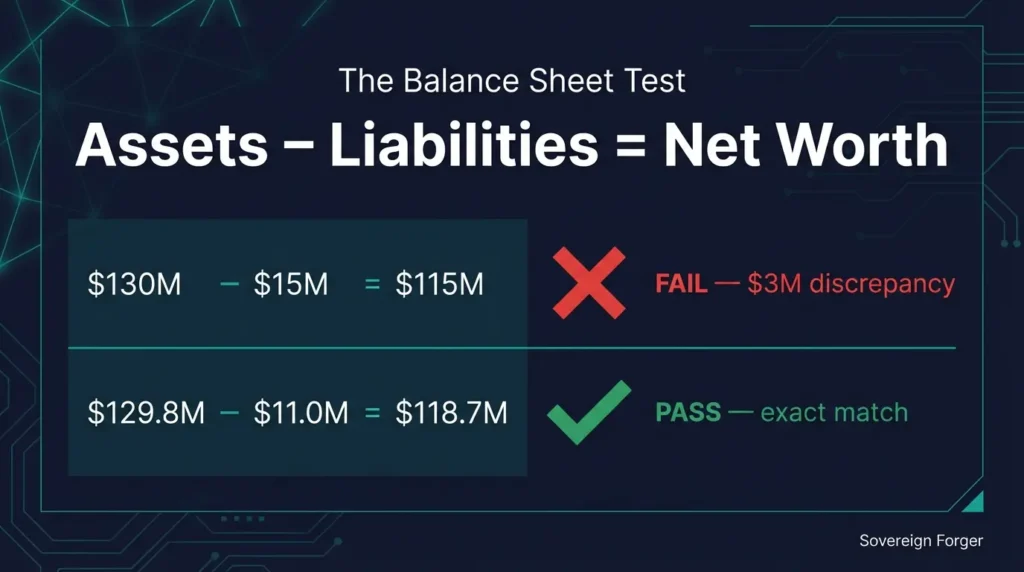

When a language model generates a synthetic financial profile, it produces numbers that look plausible. A net worth of $118 million. Total assets of $130 million. Liabilities of $15 million. The numbers feel right at a glance.

But $130 million minus $15 million is $115 million — not $118 million. The balance sheet does not balance. The profile is internally inconsistent.

This matters for three reasons.

First, if your AI training data contains inconsistent balance sheets, your model learns that inconsistency is normal. It internalizes patterns where assets and liabilities do not add up — and it reproduces those patterns in its outputs.

Second, if you are using the data for compliance testing, an inconsistent balance sheet means your KYC or EDD system is being tested against records that would never exist in the real world. Your test results become meaningless.

Third, if a downstream user or auditor examines the data and finds that the math does not work, every other quality claim you make about the dataset becomes suspect. Trust is lost at the first failed check.

If you are not sure whether your current data has these problems, start with Why Generic Synthetic Data Fails for Wealth Management AI — the structural issues run deeper than the balance sheet.

How to Run the Test

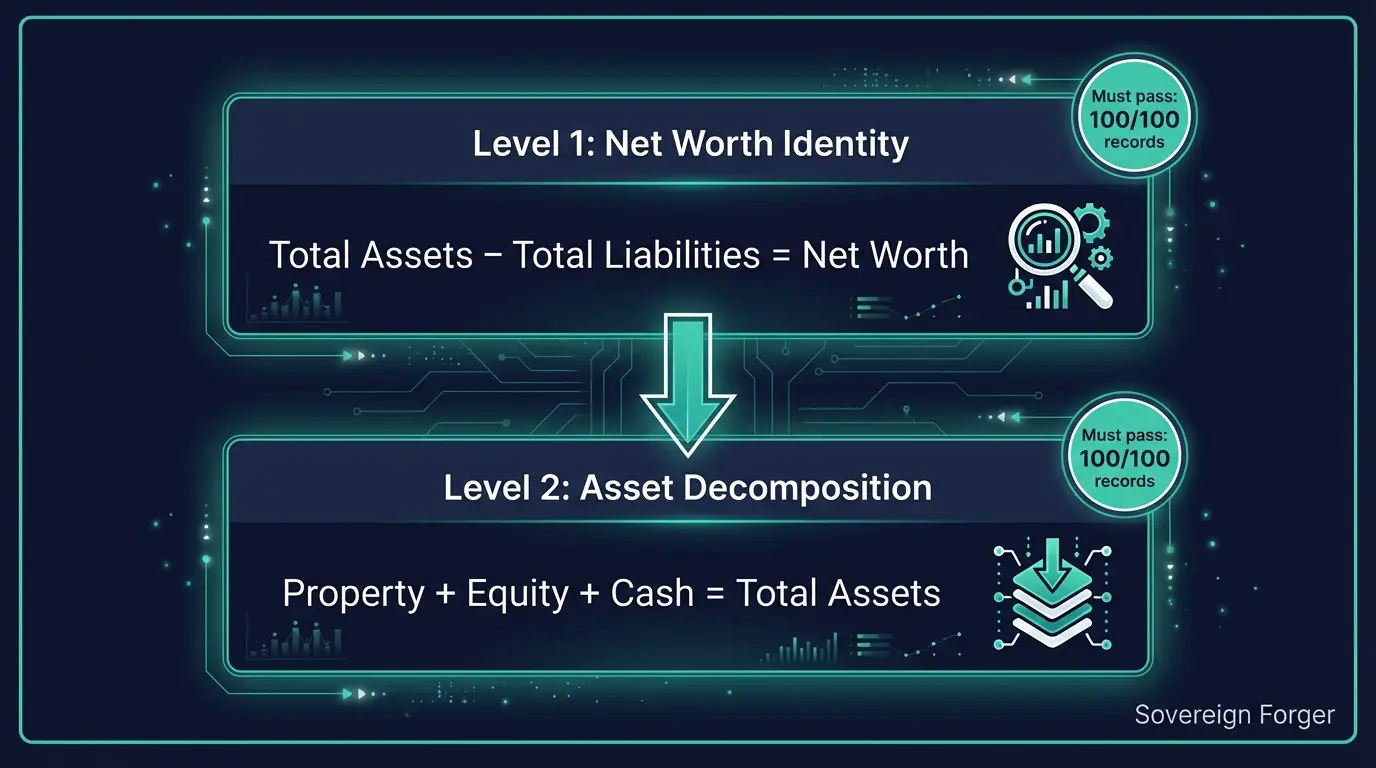

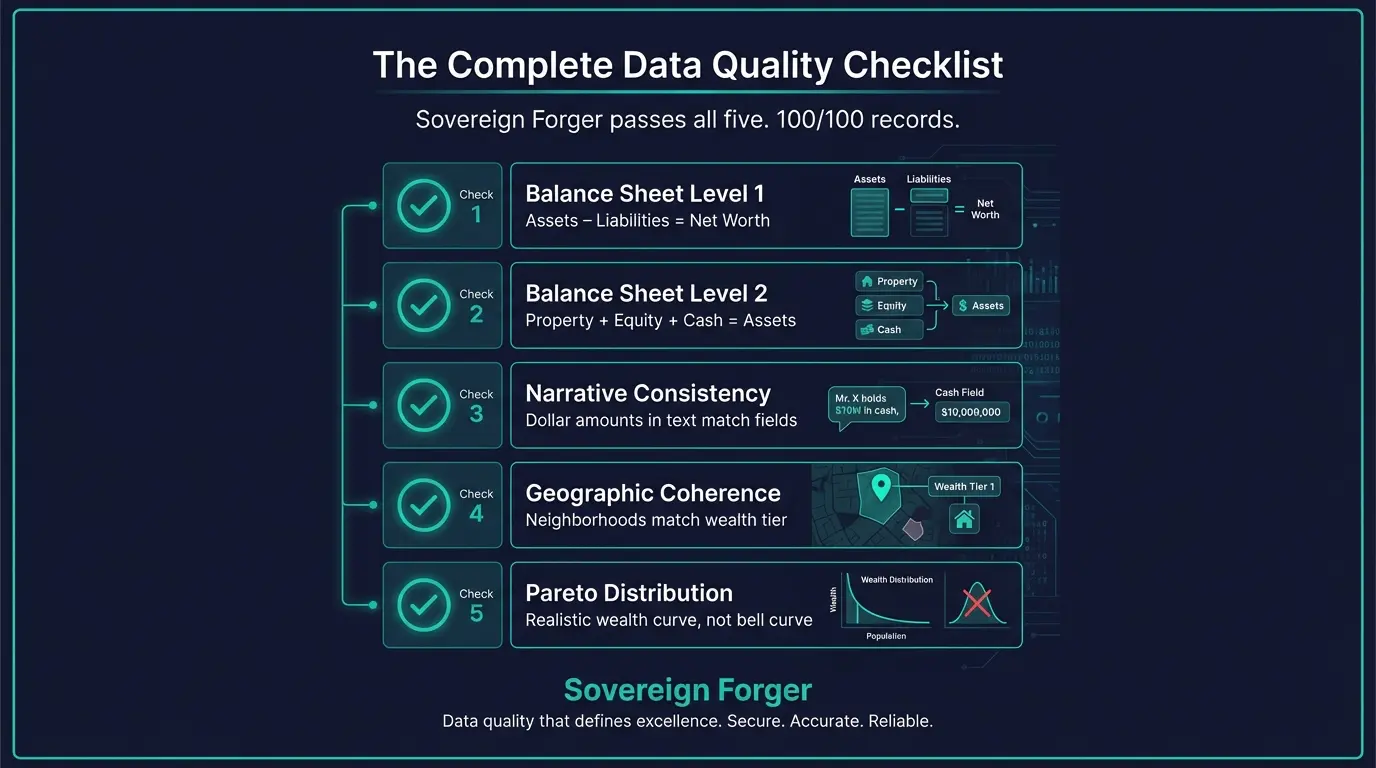

The balance sheet test has two levels. Both should pass on 100% of records.

Level 1: Net Worth Identity

For each record, verify:

Total Assets − Total Liabilities = Net Worth

This is the fundamental accounting identity. If a single record fails this check, the dataset’s financial fields were generated independently rather than constrained by algebraic relationships.

Level 2: Asset Decomposition

If the dataset includes sub-components of assets (such as property value, equity holdings, and cash liquidity), verify:

Property Value + Core Equity + Cash Liquidity = Total Assets

This checks whether the asset breakdown actually sums to the stated total. Many synthetic datasets include sub-fields that look detailed but do not add up: the appearance of granularity without the substance.

Both levels can be verified manually in a spreadsheet. For larger datasets, I published an open-source tool on GitHub that automates both checks across every record in the file.
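The published tool is not reproduced here. As a rough illustration of what such a check involves, the following minimal sketch runs both levels over a CSV file. It assumes the column names shown in the sample record in this article (net_worth_usd, total_assets, total_liabilities, property_value, core_equity, cash_liquidity); the helper names and the one-cent tolerance are hypothetical choices, not part of any published script.

```python
import csv
from decimal import Decimal

# Column names taken from the sample record in this article.
ASSET_PARTS = ("property_value", "core_equity", "cash_liquidity")


def to_decimal(value):
    """Parse '$129,871,826' or '129871826' into an exact Decimal."""
    return Decimal(str(value).replace("$", "").replace(",", "").strip())


def check_record(record, tolerance=Decimal("0.01")):
    """Return (level1_ok, level2_ok) for one profile.

    The tolerance is an assumed allowance for cent-level rounding.
    """
    assets = to_decimal(record["total_assets"])
    liabilities = to_decimal(record["total_liabilities"])
    net_worth = to_decimal(record["net_worth_usd"])
    parts = sum(to_decimal(record[name]) for name in ASSET_PARTS)

    level1 = abs(assets - liabilities - net_worth) <= tolerance  # net worth identity
    level2 = abs(parts - assets) <= tolerance                    # asset decomposition
    return level1, level2


def failing_rows(path):
    """Yield (row_number, level1_ok, level2_ok) for every row that fails a level."""
    with open(path, newline="", encoding="utf-8") as handle:
        for number, row in enumerate(csv.DictReader(handle), start=1):
            level1, level2 = check_record(row)
            if not (level1 and level2):
                yield number, level1, level2
```

Using Decimal rather than float keeps the comparison exact for dollar amounts, so any reported failure reflects the data and not the arithmetic. A dataset passes only if `list(failing_rows("profiles.csv"))` is empty, where the file name is a placeholder.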

What Passing Looks Like

Here is a record that passes both levels:

net_worth_usd: $118,789,521

total_assets: $129,871,826

total_liabilities: $11,082,305

property_value: $21,010,082

core_equity: $91,164,801

cash_liquidity: $17,696,943

Level 1: $129,871,826 − $11,082,305 = $118,789,521 ✓

Level 2: $21,010,082 + $91,164,801 + $17,696,943 = $129,871,826 ✓

Both checks pass. The financial fields are provably consistent.

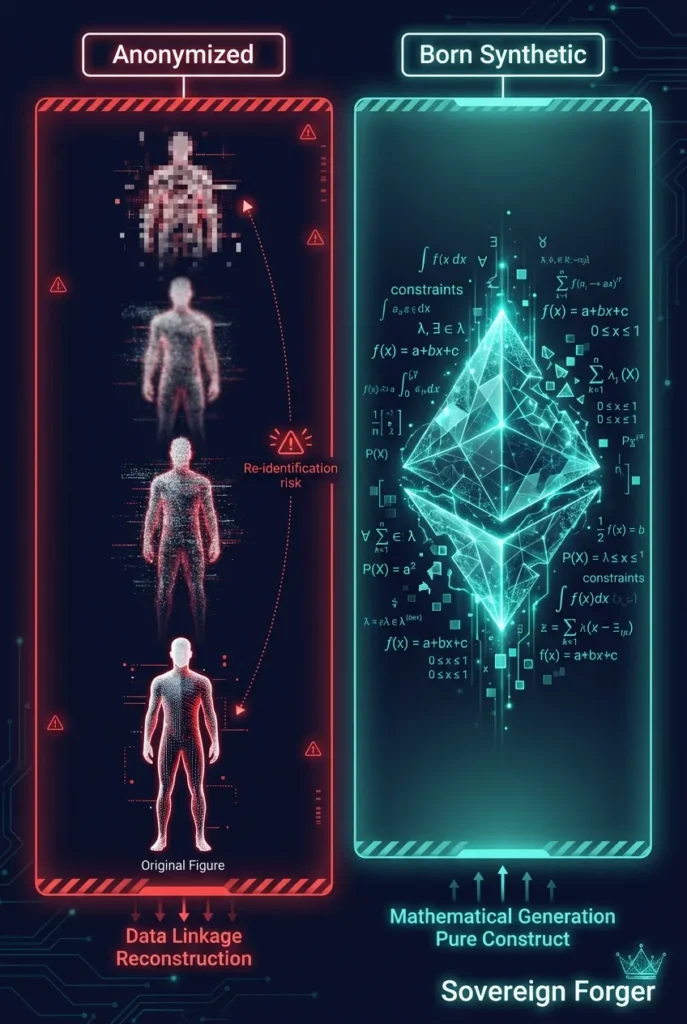

Why Most Synthetic Data Fails This Test

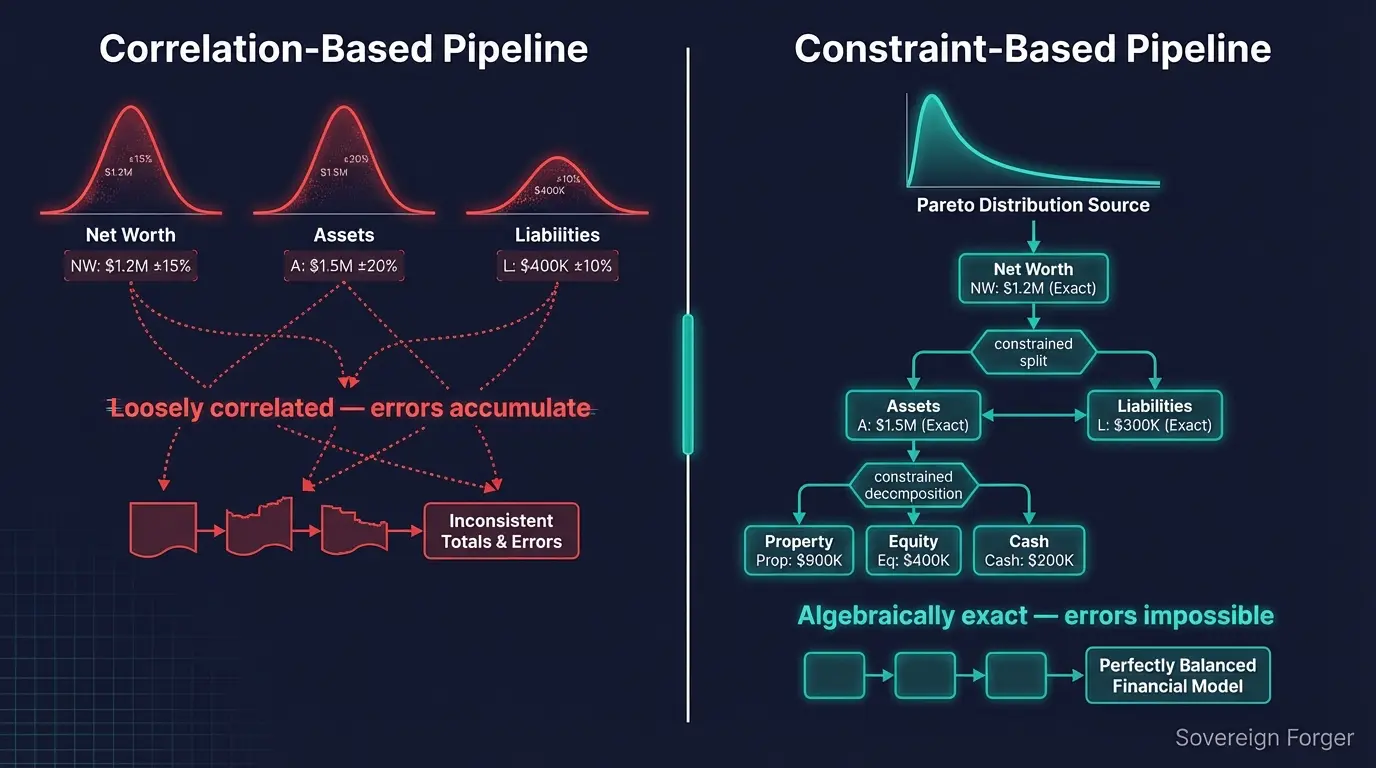

The root cause is architectural. Most synthetic data pipelines — whether they use GANs, VAEs, or large language models — generate fields probabilistically. Each field is sampled from a distribution, with some correlation modeling to keep things loosely coherent. But “loosely coherent” is not the same as “algebraically exact.”

A pipeline that generates net worth from one distribution and total assets from another will occasionally produce records where the numbers do not reconcile. At scale, “occasionally” becomes “frequently.” On a 10,000-record dataset, even a 2% error rate means 200 records with broken balance sheets.

The alternative is to build the pipeline around constraints rather than correlations. Start with a wealth distribution (Pareto, for UHNWI populations). Compute net worth first. Then derive total assets and total liabilities from constrained splits. Then decompose assets into property, equity, and liquidity using further constrained allocations. Every number is computed — never independently sampled.

This is the approach I use in the Sovereign Forger pipeline. The mathematical layer comes first. Only after every financial figure is locked does an AI model add the narrative layer — biography, profession, philanthropy. The AI enriches the profile. It never touches the numbers.
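To make the constraint-first ordering concrete, here is a minimal sketch of the idea. It is not the Sovereign Forger implementation: the function name, the $30 million floor, the Pareto shape of 1.5, and every allocation range are assumptions chosen only for illustration. What it shows is the ordering: net worth is drawn once, and every other figure is computed from it in whole dollars.

```python
import random


def generate_profile(rng, min_net_worth=30_000_000, alpha=1.5):
    """Build one balance sheet where every figure is derived, not sampled independently.

    All parameters and ranges here are hypothetical.
    """
    # Step 1: net worth comes first, from a Pareto draw scaled by an assumed floor.
    net_worth = int(min_net_worth * rng.paretovariate(alpha))

    # Step 2: liabilities as a constrained share of net worth (assumed 5-20%).
    liabilities = int(net_worth * rng.uniform(0.05, 0.20))

    # Step 3: total assets are computed, so Level 1 holds by construction.
    assets = net_worth + liabilities

    # Step 4: decompose assets; the last component is the remainder,
    # so the parts sum to the total exactly (Level 2 by construction).
    property_value = int(assets * rng.uniform(0.10, 0.30))
    cash_liquidity = int(assets * rng.uniform(0.05, 0.20))
    core_equity = assets - property_value - cash_liquidity

    return {
        "net_worth_usd": net_worth,
        "total_assets": assets,
        "total_liabilities": liabilities,
        "property_value": property_value,
        "core_equity": core_equity,
        "cash_liquidity": cash_liquidity,
    }


profile = generate_profile(random.Random(2024))
```

Working in integer dollars and assigning the final component as a remainder avoids rounding drift: the identities hold exactly rather than approximately, whatever values the random draws produce.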

Beyond the Balance Sheet: Three More Checks

The balance sheet test is necessary but not sufficient. Here are three additional quality checks for synthetic HNWI data:

Narrative-to-field consistency. If a profile includes a written asset description (e.g., “Capital resources aggregate to $129,871,826”), the dollar amounts in the narrative text should match the structured fields exactly. Mismatches between narrative and numerical fields indicate that the text was generated without awareness of the numbers.
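One way to automate this narrative check is to pull every dollar figure out of the text and confirm each one appears among the structured fields. The sketch below is a simplified illustration: the function names are hypothetical, it assumes the structured fields hold whole-dollar values, and it only recognizes fully written-out amounts such as $129,871,826, not phrasing like "$130 million".

```python
import re

# Matches "$129,871,826" or "$129871826"; worded amounts are out of scope here.
DOLLAR_PATTERN = re.compile(r"\$\d{1,3}(?:,\d{3})+|\$\d+")


def narrative_amounts(text):
    """Return the set of whole-dollar amounts mentioned in the narrative."""
    return {
        int(match.replace("$", "").replace(",", ""))
        for match in DOLLAR_PATTERN.findall(text)
    }


def unmatched_amounts(narrative, record, fields):
    """Return narrative amounts that do not equal any of the named structured fields."""
    known = {int(record[name]) for name in fields}
    return narrative_amounts(narrative) - known


record = {"total_assets": 129871826, "total_liabilities": 11082305}
text = "Capital resources aggregate to $129,871,826."
mismatches = unmatched_amounts(text, record, ["total_assets", "total_liabilities"])
```

An empty result means every figure quoted in the text is backed by a structured field; anything left over is a number the narrative invented.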

Geographic coherence. A residence on Ocean Avenue, Santa Monica should correspond to a wealth tier and professional profile consistent with that neighborhood. A $2 million net worth in a billionaire enclave, or a pharmaceutical executive listed in a Silicon Valley tech cluster, signals poor geographic modeling.

Distribution realism. UHNWI wealth follows a Pareto distribution: most profiles cluster near the lower bound of the wealth range, while a small number extend far into a long upper tail. If the dataset shows a normal (bell curve) distribution of wealth, it was not modeled on real-world wealth patterns.
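A quick way to probe the shape of the upper tail is the Hill estimator, a standard estimate of the tail index. For data that is Pareto in the tail, the estimate lands near the Pareto shape parameter; for thin-tailed data such as a normal distribution, it comes out much larger. The sketch below is a heuristic, not a formal test: the function name and the choice of the top 10% of records as the tail are assumptions, and it expects at least two positive values.

```python
import math
import random


def hill_tail_index(values, top_fraction=0.1):
    """Estimate the tail index from the largest values (Hill estimator).

    top_fraction is an assumed cut-off for what counts as the tail.
    """
    ordered = sorted(values, reverse=True)
    k = max(1, int(len(ordered) * top_fraction))
    threshold = ordered[k]  # the (k+1)-th largest value
    log_excess = sum(math.log(x / threshold) for x in ordered[:k])
    return k / log_excess


# Synthetic comparison data, generated here only to illustrate the contrast.
rng = random.Random(42)
pareto_wealth = [30e6 * rng.paretovariate(1.5) for _ in range(5000)]
normal_wealth = [rng.gauss(100e6, 10e6) for _ in range(5000)]

pareto_index = hill_tail_index(pareto_wealth)  # expected to be close to 1.5
normal_index = hill_tail_index(normal_wealth)  # expected to be far larger
```

The estimate is sensitive to the cut-off, so it is worth trying a few values of `top_fraction` before drawing conclusions about a dataset.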

I detail all five checks, with scoring criteria, in Five Red Flags in Your Synthetic Data Provider’s Sample File. For the mathematical foundation behind distribution realism, see Pareto, Not Gaussian.

Try It Yourself

I publish a free sample of 100 synthetic Silicon Valley UHNWI profiles. Every record passes both levels of the balance sheet test — 100 out of 100.

Download the sample, open it in Python or Excel, and run the checks yourself. If the math doesn’t hold up, walk away. If it does, consider what 1,000 or 100,000 records with the same integrity could do for your product.

Frequently Asked Questions

What is the balance sheet test for synthetic financial data?

The balance sheet test verifies that every synthetic profile satisfies the fundamental accounting equation: Total Assets minus Total Liabilities equals Net Worth. If a synthetic data provider cannot pass this test with zero errors across their entire dataset, their data contains mathematically impossible profiles that will corrupt any AI model trained on them and any compliance system tested against them.

Why do most synthetic data providers fail the balance sheet test?

Most providers generate financial fields independently using statistical sampling or AI generation. When net worth, assets, and liabilities are produced separately, they rarely satisfy the accounting constraint. In testing, error rates of 30-50% are common among general-purpose synthetic data tools applied to financial profiles.

How does Sovereign Forger achieve zero balance sheet errors?

Sovereign Forger enforces the constraint algebraically during generation. Net worth is derived from Pareto distributions, then assets are composed from archetype-specific allocations, and liabilities are calculated so that Assets minus Liabilities equals Net Worth by construction. This is verified by an automated audit on every record before delivery.

Can I run the balance sheet test on my existing synthetic data?

Yes. The test is simple: for every record, calculate total_assets - total_liabilities and compare the result to net_worth_usd. Any difference beyond floating-point rounding indicates a broken record. Sovereign Forger publishes a validation script on GitHub that automates this check.

Does the balance sheet test matter for AI training data?

Absolutely. AI models learn patterns from training data. If 30% of training profiles have impossible balance sheets, the model learns that impossible financial structures are normal. This leads to poor predictions, missed fraud patterns, and compliance systems that accept unrealistic profiles as valid.